Strategic Approaches to Reduce False Rejection Rates in Biomedical Quality Control



Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for optimizing quality control procedures to minimize false rejection rates while maintaining high error detection capability. Covering foundational principles through advanced applications, we explore key metrics like Probability for False Rejection (Pfr) and Probability for Error Detection (Ped), systematic methodologies including Quality Control Circles and Six Sigma, practical troubleshooting strategies using root cause analysis, and validation through cost-benefit assessment. The content synthesizes current best practices with emerging innovations, offering actionable strategies to enhance reliability, reduce costs, and improve operational efficiency in biomedical research and pharmaceutical development.

Understanding False Rejection: Fundamentals and Impact on Laboratory Efficiency

Defining False Rejection Rates (Pfr) and Error Detection (Ped) in QC Systems

Frequently Asked Questions (FAQs)

What is the difference between the Probability for False Rejection (Pfr) and the Probability for Error Detection (Ped)?

Probability for False Rejection (Pfr): This is the probability that a quality control (QC) procedure will incorrectly reject an analytical run when the method is actually performing stably and no significant error is present. It represents the rate of "false alarms" [1]. In an ideal system, the Pfr would be 0.00, meaning no false rejections occur [1]. A high Pfr can lead to wasted resources, unnecessary troubleshooting, and reduced productivity [2] [1].

Probability for Error Detection (Ped): This is the probability that a QC procedure will correctly identify and reject an analytical run that has an actual error. It measures the system's ability to detect real problems, such as shifts or increases in random error [1]. Ideally, the Ped should be 1.00, or 100% [1]. A high Ped is crucial for ensuring the reliability of test results and patient safety [3].

In summary, Pfr measures the system's tendency to "cry wolf," while Ped measures its ability to spot a real "fire" [1].

How do Pfr and Ped affect the security and usability of my QC system?

There is a fundamental trade-off between Pfr and Ped. Making your QC system more sensitive to detect real errors (increasing Ped) often also increases the rate of false rejections (Pfr). Conversely, making the system more lenient to reduce false alarms (lowering Pfr) can decrease its ability to catch real errors (lowering Ped) [2] [1].

This relationship is often managed by adjusting the system's threshold value [2]. In statistical QC, the threshold corresponds to the control limits (for example, ±2s versus ±3s). A more lenient threshold may decrease Pfr but increase the False Accept Rate (FAR), the proportion of runs containing real errors that are accepted (1 - Ped), compromising security. A stricter threshold may decrease FAR but increase Pfr, hurting usability [4]. The goal is to find a balance that provides sufficient error detection for your quality requirements while keeping false rejections at an acceptable level to maintain workflow efficiency [2] [3].
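To make the trade-off concrete, the sketch below computes Pfr and Ped for a single 1:ks rule at several control limits k. It assumes independent, normally distributed control values, two controls per run, and a systematic shift of 2 SD as the error to be detected; the function names are illustrative and do not come from any QC package. Under these assumptions, widening the limit from 2s to 3s lowers Pfr from about 9% to about 0.5%, but Ped also drops, from about 75% to about 29%.

```python
from math import erf, sqrt


def phi(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def p_outside(k: float, shift: float = 0.0) -> float:
    """Probability that one control value falls outside +/-k SD when the
    method mean has shifted by `shift` SD (shift = 0 means a stable method)."""
    return phi(-k - shift) + (1.0 - phi(k - shift))


def single_rule_performance(k: float, n: int, shift: float) -> tuple:
    """(Pfr, Ped) of a 1:ks rule applied to n independent control values."""
    pfr = 1.0 - (1.0 - p_outside(k)) ** n          # any stable control outside limits
    ped = 1.0 - (1.0 - p_outside(k, shift)) ** n   # any shifted control outside limits
    return pfr, ped


# Tighter vs looser control limits, N = 2, systematic shift of 2 SD
for k in (2.0, 2.5, 3.0):
    pfr, ped = single_rule_performance(k, n=2, shift=2.0)
    print(f"1:{k:g}s rule: Pfr = {pfr:.3f}, Ped = {ped:.3f}")
```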

Why does using a 1:2s control rule lead to a high false rejection rate?

The 1:2s rule (a single control measurement outside ±2 standard deviations) is often used as a warning rule. However, when used as a rejection rule, it has a high probability of false rejection, which gets worse as more control measurements are used per run [1] [5].

The table below shows how the false rejection rate increases with the number of control measurements (N) when using a 1:2s rule:

| Number of Control Measurements (N) | Approximate False Rejection Rate (Pfr) for 1:2s Rule |
|---|---|
| 1 | ~5% |
| 2 | ~9% |
| 3 | ~14% |
| 4 | ~18% |

Data adapted from Westgard QC lessons [1] [5].

This means that with two control measurements per run—a common practice—you can expect nearly 1 in 10 runs to be falsely rejected, leading to significant waste and inefficiency [1] [5]. It is therefore considered poor practice to use the 1:2s rule as the sole criterion for run rejection [5].
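The tabulated figures are consistent with Pfr = 1 - (1 - p)^N, where p is the chance that a single stable control value falls outside ±2s. The sketch below compares the exact Gaussian per-control rate (about 4.6%) with a flat 5%. The exact values come out at roughly 4.6%, 8.9%, 13.0%, and 17.0% for N = 1 to 4, and the rounded table values lie between the two calculations. Normality and independence of control values are assumptions here; real control data may deviate from both.

```python
from math import erf, sqrt


def pfr_single_rule(limit_sd: float, n: int) -> float:
    """False rejection probability of a 1:(limit)s rule for a stable method,
    assuming n independent, normally distributed control values."""
    p_inside = erf(limit_sd / sqrt(2.0))  # P(|z| <= limit) for one control
    return 1.0 - p_inside ** n


for n in range(1, 5):
    exact = pfr_single_rule(2.0, n)
    approx = 1.0 - 0.95 ** n  # treats each control as a flat 5% chance
    print(f"N={n}: Gaussian {exact:.1%}, 5%-per-control {approx:.1%}")
```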

What are some best practices for troubleshooting a failed QC run?

When a QC failure occurs, follow a systematic approach [6]:

- Put the problem in perspective: First, estimate the magnitude of the error. Use established QC multi-rules to determine if the error is a true failure or a single warning. Do not release patient results until the problem is resolved [6].

- Review Levey-Jennings charts: Identify the pattern of the error to understand its type [6]:

  - Systematic Error (Shift/Trend): Indicated by consecutive control values on the same side of the mean (e.g., violating 2:2s or 4:1s rules) or by a steady drift in one direction (e.g., violating a 7T trend rule). This suggests a change in the method's accuracy, potentially from calibration issues, new reagent lots, or instrument maintenance [1] [6].

  - Random Error: Indicated by a single control outside ±3s or a large spread between control values (e.g., violating the R4s rule). This suggests an increase in imprecision, potentially from bubbles in samples, improperly mixed reagents, or power fluctuations [1] [6].

- Relate the error to possible causes: Based on the error type, investigate the most common root causes [6].

- Implement corrective actions and document: Perform corrective actions (e.g., calibration, maintenance, preparing fresh reagents) one at a time and monitor the effect. Document the entire process for future reference [6].
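The rule patterns used in this troubleshooting sequence can be checked mechanically. The sketch below is a minimal, hypothetical implementation of a 1:2s-triggered 1:3s/2:2s/R4s/4:1s/10x multirule on control z-scores that labels each violation as random or systematic error. It assumes a single chronological series of z-scores (results from different control levels already converted with their own mean and SD), treats R4s as one control above +2s and another below -2s in the same run, and checks 2:2s, 4:1s, and 10x only on the most recent values; laboratory QC software may apply these rules differently.

```python
from typing import List, Tuple


def same_side(values: List[float], limit: float) -> bool:
    """True if every value exceeds +limit, or every value is below -limit."""
    return all(v > limit for v in values) or all(v < -limit for v in values)


def westgard_multirule(history: List[float], n_current: int) -> Tuple[str, List[str]]:
    """Evaluate the current run with a 1:2s-triggered multirule.

    history:   chronological control z-scores, z = (result - mean) / SD
    n_current: number of trailing values in `history` that form the current run
    """
    run = history[-n_current:]
    if all(abs(z) <= 2 for z in run):
        return "accept", []  # no 1:2s warning: accept without checking other rules

    violations = []
    if any(abs(z) > 3 for z in run):
        violations.append("1:3s: random error (or large systematic error)")
    if max(run) > 2 and min(run) < -2:
        violations.append("R4s: random error")
    if len(history) >= 2 and same_side(history[-2:], 2):
        violations.append("2:2s: systematic error")
    if len(history) >= 4 and same_side(history[-4:], 1):
        violations.append("4:1s: systematic error")
    if len(history) >= 10 and same_side(history[-10:], 0):
        violations.append("10x: systematic error")
    # A 1:2s warning with no rejection rule violated is only a warning
    return ("reject" if violations else "warning"), violations


status, rules = westgard_multirule([0.3, -0.5, 1.2, 2.4, 2.3], n_current=2)
print(status, rules)
```

In this example the two latest controls both exceed +2s, so the run is rejected for a 2:2s violation, which points toward a systematic error and the calibration or reagent causes listed above.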

Troubleshooting Guides

Guide 1: Addressing High False Rejection Rates (Pfr)

A high Pfr disrupts workflow and wastes resources. Below are common causes and solutions.

| Problem Cause | Description | Recommended Solution |
|---|---|---|
| Use of over-sensitive QC rules (e.g., 1:2s) | Using control rules with high inherent false rejection rates, especially with multiple control measurements [1] [5]. | Implement multirule QC procedures (e.g., 1:3s/2:2s/R4s/4:1s). These combinations provide better error detection (Ped) while maintaining a low Pfr (typically ≤5% for N=4) [1] [5]. |
| Improperly configured thresholds | The decision threshold for accepting/rejecting a run is set too strictly, making the system overly sensitive to minor, insignificant fluctuations [2] [4]. | Re-calibrate the system's threshold based on the Equal Error Rate (EER) or to meet the specific security and usability needs of your laboratory [2] [4]. |
| Inadequate number of control measurements | Using too few or too many control measurements without adjusting the control rules can disrupt the balance between Pfr and Ped [1] [3]. | Follow a risk-based approach. Use QC design tools like the Sigma-metric Run Size Nomogram to determine the optimal number of control measurements and rules based on your assay's performance (sigma metric) and required run size [3]. |
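The claim that multirule procedures keep Pfr low can be checked by simulation. The Monte Carlo sketch below draws stable runs of four N(0, 1) control values and counts how often each procedure rejects them. The within-run interpretation of 2:2s (consecutive values in run order), R4s, and 4:1s is an assumption, and the estimates depend on it. Under these assumptions the 1:2s rule rejects roughly 17% of good runs with N=4, while the multirule stays at a few percent.

```python
import random


def simulate_pfr(rule, n: int, runs: int = 50_000, seed: int = 1) -> float:
    """Estimate a rule's false rejection rate by simulating stable runs of
    n independent N(0, 1) control z-scores."""
    rng = random.Random(seed)
    rejected = sum(rule([rng.gauss(0.0, 1.0) for _ in range(n)]) for _ in range(runs))
    return rejected / runs


def rule_1_2s(run):
    """Reject if any control exceeds +/-2 SD."""
    return any(abs(z) > 2 for z in run)


def multirule_1_3s_2_2s_r4s_4_1s(run):
    """Within-run 1:3s/2:2s/R4s/4:1s, with controls taken in run order."""
    if any(abs(z) > 3 for z in run):                                     # 1:3s
        return True
    pairs = zip(run, run[1:])
    if any((a > 2 and b > 2) or (a < -2 and b < -2) for a, b in pairs):  # 2:2s
        return True
    if max(run) > 2 and min(run) < -2:                                   # R4s
        return True
    last4 = run[-4:]                                                     # 4:1s
    return len(last4) == 4 and (all(z > 1 for z in last4) or all(z < -1 for z in last4))


for name, rule in [("1:2s", rule_1_2s), ("multirule", multirule_1_3s_2_2s_r4s_4_1s)]:
    print(f"{name}: estimated Pfr with N=4 = {simulate_pfr(rule, n=4):.3f}")
```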

Guide 2: Addressing Low Error Detection (Ped)

Low Ped means real errors go undetected, risking the release of unreliable results.

| Problem Cause | Description | Recommended Solution |
|---|---|---|
| Insufficiently sensitive QC rules | The control rules in use are not powerful enough to detect medically important errors [1]. | Adopt more sensitive rules or combinations. For systematic error, use rules like 2:2s, 4:1s, or 8x. For random error, the R4s and 1:3s rules are effective [1]. |
| Poor analytical method performance | The measurement procedure itself has high imprecision (CV) or bias, making it difficult to distinguish error from normal variation [7]. | Improve the method's performance. Calculate the sigma metric for your assay: σ = (TEa - \|Bias\|) / CV [3] [7]. Methods with a sigma ≥6 are world-class, while those with σ<4 may need improvement or more intensive QC monitoring [3] [7]. |
| Inadequate run size strategy | Testing too many patient samples between QC events increases the chance that an error will go undetected for a long time [3]. | Implement a multistage bracketed QC strategy. Use a more demanding "startup" QC design after maintenance or calibration, and a "monitor" design with a defined run size during continuous operation to maintain quality [3]. Use the Max E(Nuf) model to set a run size that keeps the expected number of unreliable results below one [3]. |

Experimental Protocols & Methodologies

Protocol 1: Calculating Sigma Metrics for QC Design

Purpose: To objectively evaluate the performance of an analytical method and design a statistically appropriate QC strategy.

Materials:

- Internal Quality Control (IQC) data (20+ data points recommended) [7]

- Proficiency Testing (PT) or External Quality Assessment (EQAS) data for bias estimation [7]

- Allowable Total Error (TEa) source (e.g., from CLIA, Ricos biological variation database) [3] [7]

Methodology:

- Calculate Imprecision: From your IQC data, calculate the mean (x̄) and standard deviation (SD) for each level of control. The coefficient of variation (%CV) is calculated as: %CV = (SD / x̄) * 100 [3] [7].

- Calculate Bias: Using PT/EQAS data, calculate the percentage bias relative to the target value: %Bias = [(Lab Result - Target Value) / Target Value] * 100 [3] [7].

- Determine Sigma Metric: Use the TEa, bias, and CV (all expressed in %) in the following formula: σ = (%TEa - |%Bias|) / %CV [3] [7].

Interpretation and QC Design:

- σ ≥ 6: Excellent performance. Simple QC rules with fewer control measurements may be sufficient [3].

- 5 ≤ σ < 6: Good performance. Standard multirule QC with N=2 is typically appropriate [3].

- 4 ≤ σ < 5: Marginal performance. Requires more robust QC procedures, potentially with increased control measurements [3].

- σ < 4: Unacceptable performance for most clinical purposes. Method improvement is required before implementation [3].
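The calculation and interpretation steps can be combined in a short script. The sketch below is illustrative only: the IQC values, PT result, and TEa are hypothetical numbers chosen so the arithmetic is easy to follow (a real evaluation should use 20+ IQC points, as recommended above), and the band boundaries are taken from the interpretation list. A CV of 2%, a bias of 1%, and a TEa of 10% give σ = (10 - 1) / 2 = 4.5, which falls in the marginal band.

```python
from statistics import mean, stdev


def sigma_metric(iqc_values, lab_result, target_value, tea_pct):
    """Return (sigma, CV%, Bias%) for one control level.

    CV%   = SD / mean * 100                  (from IQC data)
    Bias% = (lab - target) / target * 100    (from PT/EQAS data)
    Sigma = (TEa% - |Bias%|) / CV%
    """
    cv_pct = stdev(iqc_values) / mean(iqc_values) * 100
    bias_pct = (lab_result - target_value) / target_value * 100
    return (tea_pct - abs(bias_pct)) / cv_pct, cv_pct, bias_pct


def performance_band(sigma):
    """Map a sigma value onto the interpretation bands listed above."""
    if sigma >= 6:
        return "excellent"
    if sigma >= 5:
        return "good"
    if sigma >= 4:
        return "marginal"
    return "unacceptable"


# Hypothetical numbers for illustration only
sigma, cv, bias = sigma_metric([98.0, 100.0, 102.0], lab_result=101.0,
                               target_value=100.0, tea_pct=10.0)
print(f"CV = {cv:.1f}%, Bias = {bias:.1f}%, Sigma = {sigma:.2f} ({performance_band(sigma)})")
```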

Protocol 2: Implementing a Multistage QC Procedure

Purpose: To optimize QC efficiency by applying different stringency levels at different phases of operation, ensuring quality while managing false rejections [3].

Materials:

- Defined sigma metric for the assay [3]

- Defined workload and desired run size (maximum number of patient samples between QC events) [3]

- Sigma-metric Statistical QC Run Size Nomogram [3]

Methodology:

- Categorize Run Size: Based on daily workload, assign the test to a run size category (e.g., 12, 25, 50, 75, or 100 samples) [3].

- Design "Startup" QC: This is applied after major maintenance, calibration, or reagent lot changes. Using the nomogram, select a QC rule and number of control measurements (N) that provide a high Ped (>90%) for the assay's sigma value and run size [3].

- Design "Monitor" QC: This is applied during routine operation after the "startup" QC has been passed. Select a rule and N from the nomogram that maintains a low Pfr (<5%) while still providing adequate error detection for the defined run size [3].
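A rough, single-rule stand-in for reading the nomogram is to compute Pfr and Ped for candidate designs at the critical systematic error, ΔSEcrit = σ - 1.65 (in SD units), and flag which designs meet the startup (Ped ≥ 0.90) and monitor (Pfr ≤ 0.05) criteria. The sketch below does this for a few 1:ks rules under a Gaussian, independent-controls assumption. It does not model multirules, run size, or Max E(Nuf), so it is not a substitute for the nomogram in [3]; the candidate list and function names are hypothetical.

```python
from math import erf, sqrt


def phi(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def rule_performance(k, n, shift):
    """(Pfr, Ped) of a 1:ks rule with n controls; `shift` is a systematic error in SD units."""
    p_stable = 2.0 * (1.0 - phi(k))
    p_shifted = phi(-k - shift) + (1.0 - phi(k - shift))
    return 1.0 - (1.0 - p_stable) ** n, 1.0 - (1.0 - p_shifted) ** n


def screen_designs(sigma, candidates):
    """Flag which candidate (k, n) designs meet the startup and monitor criteria."""
    critical_shift = sigma - 1.65  # critical systematic error, in SD units
    rows = []
    for k, n in candidates:
        pfr, ped = rule_performance(k, n, critical_shift)
        rows.append({"rule": f"1:{k:g}s", "N": n, "Pfr": round(pfr, 4), "Ped": round(ped, 4),
                     "startup_ok": ped >= 0.90,   # high error detection
                     "monitor_ok": pfr <= 0.05})  # low false rejection
    return rows


for row in screen_designs(5.0, [(2.0, 2), (2.5, 2), (3.0, 2), (3.0, 4), (3.5, 4)]):
    print(row)
```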

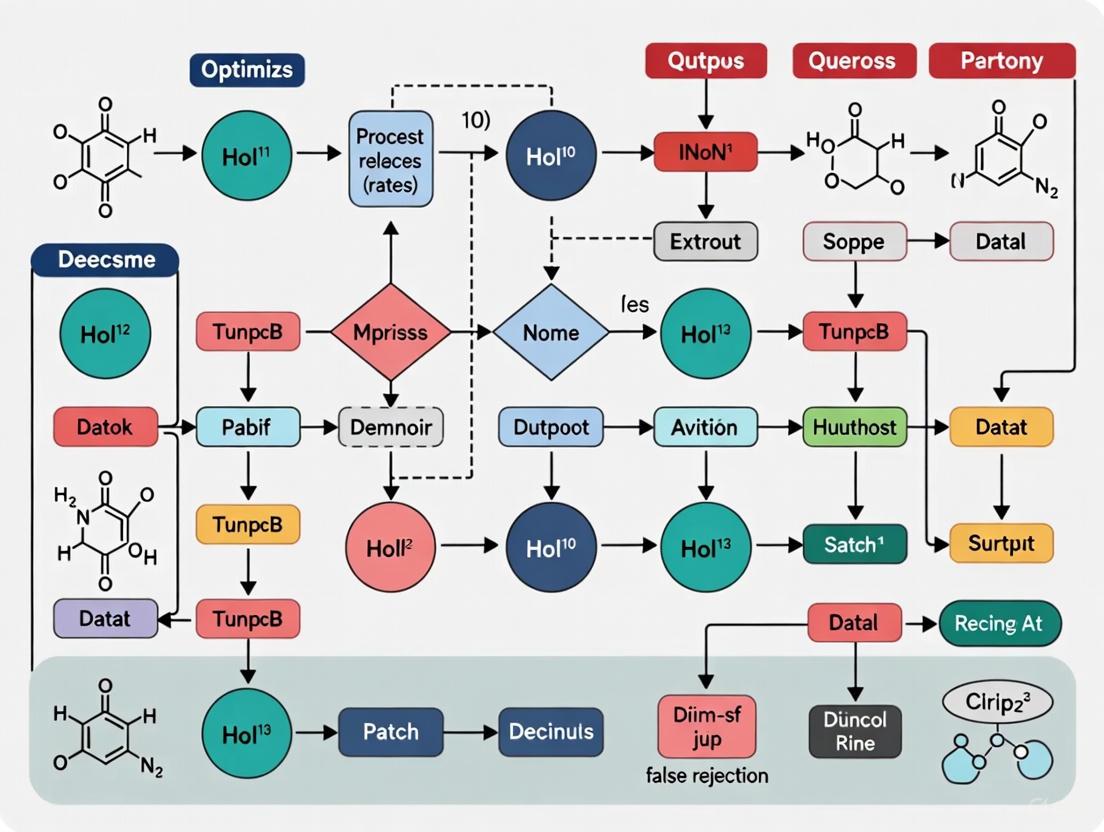

Workflow and Relationship Diagrams

QC Error Decision Pathway

Relationship Between Pfr, Ped, and QC Threshold

The Scientist's Toolkit: Essential QC Reagents and Materials

| Item Name | Function in QC and Experimentation |
|---|---|
| IQC Materials | Stable, assayed materials used to monitor the precision and accuracy of the analytical method on a daily basis. Examples include Multichem S Plus, PreciControl [3]. |
| Calibrators | Solutions with known analyte concentrations used to establish a calibration curve for the instrument, ensuring that measurements are accurate and traceable to a standard [3]. |
| Proficiency Testing (PT) Samples | Samples provided by an external program to assess a laboratory's analytical performance compared to peers and reference methods, used for bias estimation [7]. |
| Sigma-metric Run Size Nomogram | A graphical tool used to select appropriate QC rules and the number of control measurements based on the assay's sigma metric and the desired patient sample run size [3]. |
| Levey-Jennings Charts | A visual tool for plotting QC results over time, allowing for the easy identification of trends, shifts, and increased random error [6]. |
| Multirule QC Procedures | A set of statistical rules (e.g., 1:3s, 2:2s, R4s) used in combination to improve error detection while minimizing the probability of false rejection [1] [5] [8]. |

The Clinical and Operational Impact of False Rejections on Laboratory Workflows

In clinical and research laboratories, a "false rejection" occurs when a valid specimen or test result is incorrectly classified as unacceptable or erroneous. This disrupts workflows, increases costs, and delays critical outcomes. This section provides troubleshooting guides and FAQs to help researchers and scientists identify, address, and prevent the root causes of false rejections in their experimental and quality control procedures.

Understanding the scale and common causes of specimen rejection is the first step in optimizing workflows. The tables below summarize findings from a global meta-analysis [9] and a single-institution study [10].

Table 1: Global Pooled Prevalence and Primary Causes of Blood Specimen Rejection [9]

| Metric | Value |
|---|---|
| Pooled Prevalence of Rejection | 1.99% (95% CI: 1.73, 2.25) |
| Highest Prevalence by Region | Asia: 2.82% (95% CI: 2.21, 3.43) |
| Lowest Prevalence by Region | America: 0.55% (95% CI: 0.27, 0.82) |
| Leading Cause of Rejection | Clotted Specimen: 32.23% (95% CI: 21.02, 43.43) |
| Second Leading Cause | Hemolysis: 22.87% (95% CI: 16.72, 29.02) |
| Third Leading Cause | Insufficient Volume: 22.81% (95% CI: 16.75, 28.87) |

Table 2: Detailed Causes from a Single-Institution Study [10]

| Rejection Cause | Frequency (n) | Percentage of Rejections |
|---|---|---|
| Contamination (e.g., by IV fluid) | 764 | 35.1% |
| Inappropriate Collection Container/Tube | 330 | 15.2% |
| Quantity Not Sufficient (QNS) | 329 | 15.1% |
| Labeling Errors | 321 | 14.7% |
| Hemolyzed Specimen | 205 | 9.4% |
| Clotted Specimen | 203 | 9.3% |

Experimental Protocols for Monitoring and Reducing Rejections

Protocol 1: Systematic Review and Meta-Analysis of Rejection Rates

This methodology is used to establish global baselines and identify major error sources. [9]

- Literature Search: Conduct a comprehensive online search of databases (e.g., MEDLINE, PubMed, EMBASE, Google Scholar) using terms like "specimen rejection," "blood specimen rejection," and "pre-analytical error."

- Study Selection: Apply strict eligibility criteria using guidelines like PRISMA. Include prospective/retrospective cohort and cross-sectional studies reporting blood specimen rejection rates. Exclude reviews, case reports, and non-blood specimens.

- Data Extraction: Independently extract data from selected studies onto a standardized spreadsheet. Key data points include: author, publication year, sample size, rejection rate, and causes of rejection.

- Quality Assessment: Appraise study quality using a critical appraisal checklist (e.g., Joanna Briggs Institute JBI tool). Include studies meeting a predefined quality score threshold.

- Statistical Synthesis: Perform meta-analysis using statistical software (e.g., STATA). Estimate pooled prevalence of rejection with a random-effects model, calculate 95% confidence intervals, and assess heterogeneity using I² statistics.
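The statistical synthesis step can be illustrated with a DerSimonian-Laird random-effects model, a common choice for pooling prevalence estimates. The sketch below pools raw proportions for simplicity; published meta-analyses often transform proportions first (e.g., logit or Freeman-Tukey), and the study counts shown are hypothetical. It returns the pooled rejection rate, a 95% confidence interval, and the I² heterogeneity statistic.

```python
import math


def pooled_prevalence_dl(events, totals):
    """DerSimonian-Laird random-effects pooling of raw proportions.

    Returns (pooled proportion, (lower, upper) 95% CI, I^2 in %).
    Assumes at least two studies, each with 0 < events < total.
    """
    p = [e / n for e, n in zip(events, totals)]
    var = [pi * (1 - pi) / n for pi, n in zip(p, totals)]      # within-study variance
    w = [1 / v for v in var]                                    # fixed-effect weights
    p_fixed = sum(wi * pi for wi, pi in zip(w, p)) / sum(w)
    q = sum(wi * (pi - p_fixed) ** 2 for wi, pi in zip(w, p))   # Cochran's Q
    df = len(p) - 1
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c)                               # between-study variance
    w_re = [1 / (v + tau2) for v in var]                        # random-effects weights
    pooled = sum(wi * pi for wi, pi in zip(w_re, p)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return pooled, (pooled - 1.96 * se, pooled + 1.96 * se), i2


# Hypothetical rejected/total counts from three studies
pooled, ci, i2 = pooled_prevalence_dl([10, 50, 30], [1000, 1000, 1000])
print(f"Pooled rejection rate {pooled:.2%} (95% CI {ci[0]:.2%} to {ci[1]:.2%}), I^2 = {i2:.0f}%")
```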

Protocol 2: Quality Improvement Initiative to Reduce Rejections

A practical framework for implementing and measuring interventions within a department or institution. [11]

- Baseline Measurement: Audit the laboratory information system to determine the current specimen rejection rate over a defined period (e.g., 1 year).

- Goal Setting: Define a specific, measurable goal. For example, "Reduce the blood sample rejection rate in the emergency department and coronary ICU by 50% from its baseline of 1.43% within 20 months."

- Form a Multidisciplinary Team: Include phlebotomists, laboratory technologists, nurses, and physicians.

- Implement Interventions:

- Education & Training: Provide targeted phlebotomy education and competency validation through direct observation.

- Process Improvement: Ensure the use of appropriate consumables (e.g., collection tubes, needles).

- Stakeholder Engagement: Educate physicians on proper test ordering to reduce errors.

- Monitor with Rapid Cycle Improvements: Use weekly data reviews to assess the impact of changes and adapt strategies quickly.
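Weekly reviews are easier when the goal is expressed as a number. The sketch below converts the example goal (a 50% reduction from a 1.43% baseline, i.e., a target of about 0.715%) into a simple weekly status check; the weekly counts, function name, and status labels are hypothetical.

```python
BASELINE_RATE = 1.43                      # % rejected at baseline (example goal)
TARGET_RATE = BASELINE_RATE * (1 - 0.50)  # 50% reduction goal, about 0.715%


def weekly_report(weekly_counts):
    """weekly_counts: list of (rejected, collected) pairs, one per week."""
    report = []
    for week, (rejected, collected) in enumerate(weekly_counts, start=1):
        rate = 100.0 * rejected / collected
        if rate <= TARGET_RATE:
            status = "goal met"
        elif rate < BASELINE_RATE:
            status = "improving"
        else:
            status = "no improvement"
        report.append((week, round(rate, 2), status))
    return report


# Hypothetical weekly counts for illustration only
for row in weekly_report([(31, 2100), (22, 2050), (14, 2200)]):
    print(row)
```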

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What is the typical clinical impact of a specimen rejection? Specimen rejections lead to prolonged turnaround times for test results, which can delay diagnosis and treatment. One study found recollections increased turnaround time by an average of 108 minutes [10]. This also necessitates re-drawing blood, which is uncomfortable for patients and carries risks like hematoma or iatrogenic anemia [9].

Q2: Our lab's rejection rate is high due to clotted samples. What are the primary causes? Clotted specimens, the leading cause of rejection globally, often result from improper mixing of blood with anticoagulant in the collection tube [9]. This can be due to insufficient inversion of the tube after collection or a slow draw that allows clotting to begin before mixing.

Q3: How can technology help reduce false rejections and improve workflow efficiency? Laboratory Information Management Systems (LIMS) and other centralized data platforms can dramatically optimize workflows [12]. For example, Merck leveraged advanced analytics on AWS to reduce false rejection rates by over 80% and accelerate investigation times from weeks to seconds [13]. Automated systems for task management and standardized procedures also reduce manual entry errors [12].

Q4: In analytical chemistry, what is the relationship between false positives and false negatives? There is often a trade-off. For instance, concentrating a sample might decrease the chance of a false negative but increase the risk of a false positive, and vice-versa for dilution [14]. The most effective way to reduce both is to use a high-quality, optimized method and to employ multiple analytical techniques to confirm results [14].

Q5: How does experimental design affect false acceptance and rejection in precision verification? The design of a precision verification experiment directly impacts its error rates. Using more samples increases the False Rejection Rate (FRR). Increasing the number of days, runs, or replicates in the experiment design reduces the FRR and also lowers the False Acceptance Rate (FAR) for between-day imprecision and repeatability [15].

Troubleshooting Common Pre-Analytical Errors

| Problem | Potential Causes | Corrective & Preventive Actions |
|---|---|---|
| Clotted Specimen | Improper tube mixing; slow draw time; incorrect needle gauge | Train on proper inversion technique (e.g., 5-10 times for EDTA tubes); ensure swift, smooth venipuncture [9] |
| Hemolyzed Specimen | Difficult draw; using a narrow-bore (high-gauge) needle; vigorous shaking of tubes | Use correct needle size; train on gentle handling and mixing; use specialized tubes or devices for difficult draws [11] |
| Insufficient Volume | Tube pulled early; vein collapsed during draw; misunderstanding of test requirements | Train staff to fill tubes to the correct volume; verify test volume requirements in SOPs [9] [10] |
| Labeling Errors | Rush to process patients; unclear labeling policies | Implement a policy of labeling at the patient's bedside; use barcode systems integrated with the Hospital Information System (HIS) [16] [10] |
| Sample Contamination | Drawing from an IV line; improper site cleansing | Always draw below an IV infusion site; follow proper venipuncture site cleansing protocols [10] |

Workflow Visualization: From Problem to Optimization

The following diagram illustrates a structured approach to diagnosing and addressing high specimen rejection rates, moving from problem identification to sustainable solution implementation.

Table 3: Key Research Reagent Solutions for Quality Control [14] [17] [18]

| Item | Function |
|---|---|
| Adherence Markers (e.g., Riboflavin) | Inert biomarkers added to investigational drugs to objectively verify medication adherence in clinical trial participants via urine testing. [17] |
| Electronic Pill Monitoring Systems | Smart pill bottles or caps that record the date and time of opening, providing real-time, objective data on medication adherence. [17] |
| Laboratory Information Management System (LIMS) | A centralized software platform for tracking samples, test results, and associated data, standardizing procedures and reducing manual errors. [12] [18] |
| Sample Preparation Kits | Optimized kits for specific sample types (e.g., DNA/RNA extraction, protein purification) to minimize variability and contamination, reducing pre-analytical errors. [18] |
| Quality Control Materials | Commercial quality control samples with known analyte concentrations used to verify the precision and accuracy of analytical methods before testing patient or research samples. [15] |
| Automated Structure Verification (ASV) Software | Software that uses NMR and LC-MS data to automatically identify compounds, reducing human error in structure verification. [14] |

FAQs on QC Metrics and False Rejection Rates

This section addresses common questions from researchers about optimizing quality control procedures.

1. What is the difference between a 'false reject' and a 'false pass' in automated systems?

A false reject (or false positive) occurs when a conforming, good-quality item is incorrectly identified as defective and rejected by the QC system [19]. Conversely, a false pass (false negative) happens when a genuinely defective item is incorrectly passed by the system. The goal of optimization is to minimize both, with a specific research focus on reducing false rejection rates to decrease unnecessary waste and costs [20] [19].
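Both rates come from the same inspection outcomes but use different denominators: the false reject rate divides by all conforming items, while the false pass rate divides by all defective items. The sketch below computes them for a hypothetical batch; the counts and function name are illustrative only.

```python
def inspection_error_rates(good_rejected, good_accepted, bad_rejected, bad_accepted):
    """Return (false reject rate %, false pass rate %) from inspection outcomes.

    False reject rate = good items rejected / all good items
    False pass rate   = defective items accepted / all defective items
    """
    false_reject = 100.0 * good_rejected / (good_rejected + good_accepted)
    false_pass = 100.0 * bad_accepted / (bad_rejected + bad_accepted)
    return false_reject, false_pass


# Hypothetical batch: 9,950 conforming units (30 rejected), 50 defective units (2 passed)
fr, fp = inspection_error_rates(good_rejected=30, good_accepted=9920,
                                bad_rejected=48, bad_accepted=2)
print(f"False reject rate {fr:.2f}%, false pass rate {fp:.1f}%")
```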

2. Which metrics most directly indicate the effectiveness of our QC procedures?

The most direct metrics form a linked chain from process efficiency to real-world outcomes [21]:

- Process Health: Test Reliability (or Flake Rate) measures how often tests fail randomly rather than because of actual defects. A high rate means results can't be trusted to make pass/fail decisions [21].

- Analytical Power: Defect Removal Efficiency (DRE) shows the percentage of defects caught before a product is released. A low DRE indicates defects are escaping to later, more costly stages [21].

- Real-World Outcome: Escaped Defects and Defect Leakage measure the failures that your process did not catch, providing the ultimate measure of QC effectiveness [21] [22].

3. How can a risk-based approach to QC reduce false rejects?

A risk-based approach focuses efforts where they matter most. It involves:

- Categorizing Defects: Prioritize based on severity and impact on patient safety or product function [23].

- Tailoring QC Rules: Use more stringent QC rules for high-risk parameters and simpler, more efficient rules for stable, well-performing processes. This prevents over-testing and unnecessary rejection of good items [23].

- Leveraging Sigma Metrics: Using the sigma scale (see Table 1: Foundational QC Process Metrics) to objectively classify the performance of each analytical method allows for precisely tailored QC strategies [23].

4. Our automated visual inspection system has a high false reject rate. What are the primary troubleshooting steps?

High false rejects in Automated Visual Inspection (AVI) systems are often caused by the interaction between the system's sensitivity and the product itself [20] [19].

- Verify Lighting and Optics: Ensure consistent, optimized lighting to minimize reflections and shadows on shiny or complex parts that can be misinterpreted as defects [19].

- Calibrate and Validate Thresholds: Review the acceptance thresholds in your inspection recipe. Overly strict settings will reject good units [20].

- Implement a Re-inspection Process: A dedicated, secondary inspection station for rejects can automatically verify them, rescuing good products and providing data to fine-tune the primary AVI system [20].

- Explore AI/ML Algorithms: Traditional rule-based vision systems can struggle with subtle variations. AI-powered vision systems can learn to distinguish between acceptable variation and true defects more accurately [19].
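The effect of a re-inspection station on both error types can be estimated with simple probability arithmetic. The sketch below assumes that every primary reject is re-inspected and that the two stations err independently; all rates and the function name are hypothetical. It shows the trade-off: rescuing 90% of falsely rejected good units cuts a 2% false reject rate to 0.2%, but defective units that the re-inspection wrongly accepts are added to the escapes (here raising the false pass rate from 0.5% to about 1.5%).

```python
def after_reinspection(primary_reject_good, primary_pass_bad, rescue_good, pass_bad):
    """Net error rates (as fractions) when every primary reject is re-inspected.

    primary_reject_good: fraction of good units the primary AVI rejects
    primary_pass_bad:    fraction of defective units the primary AVI passes
    rescue_good:         fraction of good rejects the re-inspection accepts
    pass_bad:            fraction of defective rejects the re-inspection wrongly accepts
    """
    net_false_reject = primary_reject_good * (1 - rescue_good)
    # Escapes: passed by the primary station, or rejected then wrongly accepted
    net_false_pass = primary_pass_bad + (1 - primary_pass_bad) * pass_bad
    return net_false_reject, net_false_pass


nfr, nfp = after_reinspection(0.02, 0.005, rescue_good=0.90, pass_bad=0.01)
print(f"Net false reject rate {nfr:.2%}, net false pass rate {nfp:.3%}")
```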

Key QC Metrics and Terminology Framework

The tables below define core metrics and terminology essential for establishing a common language and measuring QC performance.

Table 1: Foundational QC Process Metrics

| Metric | Definition | Formula / Calculation | Interpretation & Target |
|---|---|---|---|
| Defect Removal Efficiency (DRE) [21] | Percentage of defects found before release. | (Defects found pre-release / Total defects found) x 100 | Higher is better. Measures in-process control effectiveness. Target > 90-95% [21]. |
| False Rejection Rate [19] | Percentage of conforming items incorrectly flagged as defective. | (Number of false rejects / Total number of good items) x 100 | Lower is better. Directly impacts waste and cost. Target < 0.2% in mature systems [19]. |
| Test Reliability / Flake Rate [21] | Percentage of test runs that fail inconsistently rather than because of actual defects. | (Number of flaky test runs / Total test runs) x 100 | Lower is better. A high rate undermines trust in QC gates. Target < 1% [21]. |
| Sigma Metric [23] | Measure of process capability and performance. | (Allowable Total Error - \|Bias\|) / Coefficient of Variation (CV) | Higher is better. A sigma ≥ 6 is world-class; σ < 4 requires more stringent (and potentially slower) QC [23]. |
| Max E(Nuf) [23] | Maximum expected number of unreliable final results: an estimate of the erroneous results released before an error is detected. | Calculated via model considering sigma, QC frequency, and rule design [23] | A value < 1.0 indicates the QC strategy is effective at minimizing risk to the patient or end-user [23]. |

Table 2: Key Outcome and Business Impact Metrics

| Metric | Definition | Formula / Calculation | Interpretation & Target |
|---|---|---|---|
| Defect Density [24] [22] | Concentration of defects in a specific product module or component. | (Number of defects / Size of module) | Lower is better. Pinpoints unstable areas for improvement. Used to gate releases [22]. |
| Defect Leakage [22] | Percentage of defects missed by QC and found post-release. | (Defects found in production / Total defects) x 100 | Lower is better. Directly reflects customer impact. Track trends and set severity-based tolerances [22]. |
| Mean Time to Detect (MTTD) [24] | Average time from defect introduction to its detection. | Total time to detect defects / Number of defects | Lower is better. Indicates efficiency of monitoring and feedback loops. |
| Mean Time to Resolve (MTTR) [24] | Average time from defect detection to its resolution and verification. | Total time to resolve defects / Number of defects | Lower is better. Reflects team responsiveness and rework cost. |
| Cost of Quality [21] | Total cost of quality activities plus cost of failures. | Cost of Prevention + Cost of Appraisal + Cost of Internal/External Failures | A business-level metric. Optimization aims to reduce total cost by balancing prevention and failure costs [21]. |
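Several of the metrics in the two tables above can be computed from a single defect log. The sketch below uses a hypothetical record structure and made-up values; it assumes every defect in the log was eventually detected, so the same total serves as the denominator for DRE and defect leakage (which therefore sum to 100%).

```python
from dataclasses import dataclass


@dataclass
class Defect:
    introduced_h: float      # when the defect entered the process (hours)
    detected_h: float        # when QC or a customer detected it
    resolved_h: float        # when the fix was verified
    found_pre_release: bool  # True if caught before release


def quality_metrics(defects, prevention, appraisal, internal_failure, external_failure):
    """Compute DRE, defect leakage, MTTD, MTTR, and cost of quality."""
    total = len(defects)
    pre = sum(d.found_pre_release for d in defects)
    return {
        "DRE_pct": 100.0 * pre / total,
        "defect_leakage_pct": 100.0 * (total - pre) / total,
        "MTTD_h": sum(d.detected_h - d.introduced_h for d in defects) / total,
        "MTTR_h": sum(d.resolved_h - d.detected_h for d in defects) / total,
        "cost_of_quality": prevention + appraisal + internal_failure + external_failure,
    }


# Hypothetical defect log and cost figures for illustration only
defect_log = [Defect(0.0, 2.0, 5.0, True), Defect(1.0, 4.0, 6.0, True),
              Defect(2.0, 3.0, 10.0, True), Defect(0.0, 10.0, 20.0, False)]
print(quality_metrics(defect_log, prevention=1000, appraisal=2000,
                      internal_failure=500, external_failure=1500))
```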

Experimental Protocols for QC Optimization

The following section provides a detailed methodology for implementing and validating an optimized, risk-based QC strategy, as demonstrated in a clinical laboratory setting [23].

Protocol: Implementing a Multi-Stage, Risk-Based QC Strategy

1. Objective: To design and implement a statistical quality control plan that integrates multi-stage designs and risk management criteria to minimize the risk of reporting erroneous results while maintaining operational efficiency [23].

2. Experimental Workflow: The process for designing the QC strategy follows a logical, sequential path, as visualized below.

3. Materials and Equipment

- Quality Control Materials: Commercial IQC materials (e.g., Multichem S Plus, PreciControl) [23].

- Analyzers: The automated analytical systems used for routine testing (e.g., clinical chemistry analyzers like Alinity c, Cobas Pro) [23].

- Data Analysis Software: Software capable of statistical analysis and sigma metric calculation (e.g., R, Python, specialized QC software).

- Reference Materials: For establishing target values for calculating bias.

4. Step-by-Step Methodology

Step 1: Evaluate Analytical Performance

- For each parameter on each analyzer, calculate the imprecision (as Coefficient of Variation, CV%) and bias (as percent deviation from target) over a significant period (e.g., 6 months) [23].

- Obtain the Allowable Total Error (TEa) from appropriate sources (e.g., biological variation, regulatory guidelines) [23].

- Calculate the Sigma Metric for each parameter and analyzer using the formula: Sigma = (TEa - |Bias|) / CV [23].

Step 2: Categorize Parameters by Workload

- Group parameters based on their daily testing volume. This determines the "sample run size" (number of patient samples between two QC events).

- Example categories from the study [23]:

- Category A (High): Run size of 100 samples.

- Category C (Medium): Run size of 50 samples.

- Category E (Low): Run size of 12 samples.

Step 3: Design a Multi-Stage QC Plan

- Use a tool like the Sigma Metric Run Size Nomogram [23] to select appropriate statistical QC rules for two distinct phases:

  - 'Startup' Stage: Applied at the beginning of an analytical run. Use a QC rule with a high Probability of Error Detection (Ped ≥ 0.90) to ensure the system is in control before reporting patient results. Example: Use a 1:3.5s/2of3:2s/R4s multirule for a parameter with Sigma = 5 and a run size of 50 [23].

  - 'Monitor' Stage: Applied during continuous operation. Use a QC rule with a low Probability of False Rejection (Pfr ≤ 0.05) to maintain efficiency while monitoring quality. Example: Use a 1:3s rule for the same parameter to monitor quality during the run [23].

Step 4: Implement and Validate the Plan

- Implement the selected QC rules in the laboratory information system or manual QC review process.

- Harmonize plans across multiple analyzers for the same parameter to simplify operations [23].

- Continuously monitor the Max E(Nuf) value to ensure it remains below 1, confirming the strategy effectively minimizes risk [23].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials for Automated Inspection and QC Research

| Item | Function in Research Context |
|---|---|
| AI-Powered Vision System [19] | Used to develop and train machine learning models for distinguishing true defects from acceptable variations, directly tackling the problem of high false rejection rates. |
| Internal Quality Control (IQC) Materials [23] | Stable, characterized samples used to run the experiments that determine a method's imprecision and bias, which are the foundational data for calculating Sigma metrics. |
| 3D Laser Scanner / Metrology System [19] | Provides high-accuracy, volumetric measurement data as a "gold standard" to validate the measurements and defect calls made by faster, inline automated visual inspection systems. |
| Digital Twin Software [19] | A digital replica of the physical process. Researchers use it to model different QC scenarios, predict how changes will affect false rejection rates, and optimize strategies before live implementation. |
| Sigma Metric Run Size Nomogram [23] | A practical tool (often a chart or software) that translates a parameter's Sigma value and sample run size into a recommended statistical QC rule, guiding the experimental design of optimized QC plans. |

FAQs: Troubleshooting Quality Control in the Laboratory

What is the practical impact of sensitivity and specificity in my QC procedures?

In quality control, sensitivity is the probability that your QC procedure will correctly flag a run that contains an out-of-control error (the Probability of Error Detection, Ped). Specificity is the probability that the procedure will correctly accept a run from an in-control process; it equals 1 - Pfr, so high specificity means a low chance of false rejection [25].

A common issue laboratories face is the false rejection of good runs, which wastes significant time and resources [26]. For example, using a simple 1₂s rule with N=2 can lead to falsely rejecting about 9% of good runs [26]. Conversely, a procedure with low sensitivity will fail to detect medically important errors, potentially leading to incorrect patient results and increased costs from further, unnecessary confirmatory testing [25].
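Under an idealized model (independent, normally distributed control results and no analytical error), the false rejection probability of a single 1ₖs rule can be calculated directly rather than read from a chart. The Python sketch below uses hypothetical helper names and only the standard library; it illustrates that model and is not a validated QC tool:

```python
from math import erf, sqrt


def normal_cdf(z: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + erf(z / sqrt(2.0)))


def pfr_single_rule(k: float, n: int) -> float:
    """Probability that a 1_ks rule rejects a stable run with n control results.

    Assumes independent, normally distributed results and no error, so each
    result falls outside +/- k SD with probability 2 * (1 - Phi(k)).
    """
    p_outside = 2.0 * (1.0 - normal_cdf(k))
    # The run is rejected if at least one of the n results falls outside the limits.
    return 1.0 - (1.0 - p_outside) ** n


for k, n in [(2.0, 1), (2.0, 2), (2.0, 4), (3.0, 2)]:
    print(f"1_{k:g}s with N={n}: Pfr = {pfr_single_rule(k, n):.3f}")
```

With N=2 the 1₂s rule evaluates to about 0.089, consistent with the ~9% figure above, and with N=4 it rises to about 0.17, because every additional control result is another chance for a false alarm.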

My lab is experiencing high false rejection rates. How can I reduce them without compromising error detection?

High false rejection rates are often caused by QC procedures that are more sensitive than a stable assay requires, such as a 1₂s rule used as a rejection rule [26]. To address this, consider implementing multirule QC procedures.

Multirule QC uses a combination of control rules (e.g., 1₃s, 2₂s, R₄s) to judge an analytical run [26]. These rules are structured to maintain high error detection (sensitivity) while keeping false rejections low (high specificity). For instance, using a 1₂s rule as a "warning" to trigger the application of other, more specific rejection rules can significantly reduce false alarms without a substantial loss of error detection [26].

How can I objectively determine the best QC rules and frequency for my specific tests?

The optimal QC procedure depends on the sigma metric of your analytical process [25]. The sigma metric is a measure of process performance, calculated as (TEa% - |Bias%|) / CV% [25].

The table below summarizes how sigma performance can guide your QC strategy:

| Sigma Metric | Process Quality | Recommended QC Strategy |

|---|---|---|

| > 6 | World-Class | Minimal QC; simple rules with fewer controls may be sufficient [25]. |

| 4 - 6 | Good | Flexible QC procedures; use of multirules is often appropriate [25]. |

| < 4 | Low | Stricter control guidelines and more frequent QC are needed [25]. |

You can use software tools to validate and select candidate QC rules based on your test's sigma value, aiming for a high Probability of Error Detection (Ped ≥ 90%) and a low Probability of False Rejection (Pfr ≤ 5%) [25].

A run was rejected by a QC rule. What is a systematic troubleshooting approach?

When a run is rejected, follow this logical workflow to identify the cause:

This systematic approach helps to correct problems that may occur with instruments, reagents, or quality control material, thereby avoiding the reporting of erroneous patient results and contributing to patient safety [27].

Experimental Protocols for QC Optimization

Protocol: Calculating Sigma Metrics for QC Validation

Purpose: To objectively evaluate the performance of laboratory tests and select evidence-based QC procedures [25].

Materials:

- Internal Quality Control (IQC) data collected over a significant period (e.g., 30 days minimum).

- External Quality Assessment (EQA) or peer group data for bias calculation.

- Total Allowable Error (TEa) specifications from sources such as CLIA or biological variation databases.

Method:

- Calculate Imprecision (CV%): Using your IQC data, calculate the laboratory mean and standard deviation for each test and control level. CV% = (Standard Deviation / Laboratory Mean) × 100 [25].

- Calculate Inaccuracy (Bias%): Using EQA or peer group data, determine the difference between your lab's result and the target value. Bias% = [(Observed Value - Target Value) / Target Value] × 100 [25].

- Determine Sigma Metric: Use the formula: Sigma (σ) = (TEa% - |Bias%|) / CV% [25].

- Average Sigma Values: If multiple control levels are used, average the sigma values across levels to obtain a single sigma metric for the test [25].
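The four method steps can be sketched in Python as follows. The control values, EQA results, and TEa of 10% are hypothetical toy numbers (a real study would use far more IQC data than five points per level), and the absolute value of Bias% is used so that a negative bias does not inflate sigma:

```python
from statistics import mean, stdev


def cv_percent(values: list[float]) -> float:
    """Imprecision: CV% = (SD / mean) x 100, using the sample SD."""
    return stdev(values) / mean(values) * 100.0


def bias_percent(observed: float, target: float) -> float:
    """Inaccuracy: Bias% = (observed - target) / target x 100."""
    return (observed - target) / target * 100.0


def sigma_metric(tea_pct: float, bias_pct: float, cv_pct: float) -> float:
    """Sigma = (TEa% - |Bias%|) / CV%."""
    return (tea_pct - abs(bias_pct)) / cv_pct


# Hypothetical toy data for two control levels of one analyte.
levels = {
    "level_1": {"iqc": [98.0, 100.0, 102.0, 100.0, 100.0], "eqa_observed": 102.0, "eqa_target": 100.0},
    "level_2": {"iqc": [190.0, 200.0, 210.0, 200.0, 200.0], "eqa_observed": 196.0, "eqa_target": 200.0},
}
TEA_PCT = 10.0  # hypothetical total allowable error for this analyte

sigmas = {}
for name, d in levels.items():
    cv = cv_percent(d["iqc"])
    bias = bias_percent(d["eqa_observed"], d["eqa_target"])
    sigmas[name] = sigma_metric(TEA_PCT, bias, cv)
    print(f"{name}: CV% = {cv:.2f}, Bias% = {bias:.2f}, sigma = {sigmas[name]:.2f}")

analyte_sigma = mean(sigmas.values())  # average across control levels
print(f"Analyte sigma (average of levels): {analyte_sigma:.2f}")
```

In this toy example the averaged sigma (about 3.96) hides a large difference between the two levels (about 5.66 versus 2.26), so it is worth inspecting the per-level values before choosing QC rules.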

Protocol: Implementing and Validating a New Multirule QC Procedure

Purpose: To transition from a single-rule QC procedure to a multirule procedure to reduce false rejections and improve error detection [25] [26].

Materials:

- Established test mean and standard deviation for control materials.

- QC validation software (e.g., Biorad Unity) or manual Levey-Jennings charts with limits at ±1s, ±2s, and ±3s.

Method:

- Define the Rule Set: Select a combination of rules appropriate for your N (total number of control measurements per run). A common starting point is the Westgard multirule 1₃s/2₂s/R₄s [26].

- Establish a Warning System: In manual applications, use the 1₂s rule as a warning. When a control point exceeds a 2s limit, it triggers a review using the other rejection rules [26].

- Apply Rejection Rules:

  - 1₃s: Reject the run if a single control measurement exceeds the ±3s limit.

  - 2₂s: Reject the run if two consecutive controls exceed the same 2s limit (both above +2s or both below -2s).

  - R₄s: Reject the run if one control in a group exceeds +2s and another exceeds -2s within the same run [26].

- Monitor Performance: Track the false rejection rate and error detection rate before and after implementation to quantify improvements [25].
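The rule logic above can be expressed as a small function operating on control results already converted to SD units. This is a hypothetical helper for illustration; it checks each rule within a single run only and omits across-run applications of 2₂s, which a production implementation would need:

```python
def evaluate_run(z_scores: list[float]) -> str:
    """Judge one run with a 1_2s warning and 1_3s/2_2s/R_4s rejection rules.

    z_scores are control results in SD units, (value - mean) / SD, in
    measurement order. Within-run checks only (simplifying assumption).
    """
    # 1_2s warning: if no result exceeds 2 SD, accept without further checks.
    if all(abs(z) <= 2.0 for z in z_scores):
        return "accept"

    violations = []
    # 1_3s: any single result beyond +/- 3 SD.
    if any(abs(z) > 3.0 for z in z_scores):
        violations.append("1_3s")
    # 2_2s: two consecutive results beyond the same 2 SD limit.
    for a, b in zip(z_scores, z_scores[1:]):
        if (a > 2.0 and b > 2.0) or (a < -2.0 and b < -2.0):
            violations.append("2_2s")
            break
    # R_4s: one result above +2 SD and another below -2 SD in the same run.
    if max(z_scores) > 2.0 and min(z_scores) < -2.0:
        violations.append("R_4s")

    if violations:
        return "reject (" + ", ".join(violations) + ")"
    return "accept (1_2s warning only)"


for run in ([0.8, -1.1], [2.4, 0.3], [2.5, 2.3], [2.4, -2.2], [3.2, 0.1]):
    print(run, "->", evaluate_run(run))
```

Running the loop shows how the 1₂s warning screens out most runs before any rejection rule is evaluated; only runs with at least one result beyond 2s are examined further.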

Financial and Operational Savings from QC Optimization

A one-year study on 23 biochemistry parameters demonstrated significant cost savings after implementing sigma-based QC rules [25]. The results are summarized below:

| Cost Category | Savings After Optimization (INR) | Percentage Reduction |

|---|---|---|

| Internal Failure Costs (e.g., reagent waste, repeat labor) | 501,808.08 | 50% |

| External Failure Costs (e.g., further patient testing) | 187,102.80 | 47% |

| Total Combined Savings | 750,105.27 | -- |

Performance of Common QC Rules

Understanding the properties of different control rules helps in designing a balanced QC strategy [26].

| QC Rule | False Rejection (Specificity Impact) | Typical Use |

|---|---|---|

| 1₂s | High (~9% with N=2) | Warning rule to trigger further checks [26]. |

| 1₃s | Very Low (~1% with N=2) | Good for high-sigma processes; low error detection for smaller shifts [26]. |

| Multirule (e.g., 1₃s/2₂s/R₄s) | Low | Balances high error detection with low false rejection [26]. |

Visualizing the Sensitivity-Specificity Relationship in QC

The relationship between a test's performance (sigma metric) and the optimal QC strategy can be visualized as a continuum. The following diagram illustrates how the focus of your QC procedure should shift as the sigma metric changes.

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in QC Optimization |

|---|---|

| Third-Party Assayed Controls | Used to independently verify analyzer performance and calculate bias without manufacturer influence [25]. |

| Lyphochek Clinical Chemistry Control | An example of a stable control material used for daily Internal Quality Control (IQC) to determine assay imprecision (CV%) [25]. |

| Biorad Unity 2.0 Software | A software tool that aids in QC validation, sigma metric calculation, and the selection of candidate QC rules based on performance data [25]. |

| External Quality Assessment (EQA) Scheme | Provides target values for peer comparison, which are essential for calculating the inaccuracy (Bias%) of your method [25]. |

| CLIA / Biological Variation Database | Authoritative sources for obtaining the Total Allowable Error (TEa) required for sigma metric calculations [25]. |

Systematic Methodologies for Implementing Effective QC Optimization

In laboratory medicine and pharmaceutical development, false rejection of analytical runs poses a significant challenge to operational efficiency and resource utilization. A false rejection occurs when quality control (QC) procedures incorrectly flag an analytical run as unacceptable despite the method performing within its stable imprecision limits [1]. This not only wastes reagents and personnel time but can also delay critical test results and drug development timelines. The probability for false rejection (Pfr) describes the likelihood of these false alarms, while the probability for error detection (Ped) indicates how well the QC system identifies genuine problems [1]. This article explores how the integrated application of Quality Control Circles (QCC) and the Plan-Do-Check-Act (PDCA) cycle creates a structured framework for optimizing QC procedures, effectively reducing false rejection rates while maintaining high error detection capability.

Understanding Quality Control Circles and the PDCA Framework

Fundamentals of Quality Control Circles (QCC)

Quality Control Circles (QCC) are collaborative, team-based initiatives where small groups of employees (typically 3-12 members) from the same or cross-functional departments voluntarily meet regularly to identify, analyze, and solve work-related problems [28]. Originating in 1960s Japan through Kaoru Ishikawa and the Japanese Union of Scientists and Engineers (JUSE), QCCs emphasize employee engagement, systematic problem-solving, and continuous improvement [28]. In research and laboratory settings, QCCs provide a structured mechanism for tackling persistent quality issues, including suboptimal QC procedures that lead to high false rejection rates.

The PDCA Cycle as an Engine for Systematic Improvement

The Plan-Do-Check-Act (PDCA) cycle is a four-stage iterative methodology for continuous improvement that serves as the operational engine for QCC activities [29]. Also known as the Deming Cycle, it provides a structured framework for testing and implementing changes:

- Plan: Identify an opportunity, define the problem, and plan the change

- Do: Implement the change on a small scale

- Check: Measure the results and analyze whether the change worked

- Act: If successful, implement the change on a wider scale and standardize the process [29]

The power of PDCA lies in its iterative nature – each cycle builds on previous learning, creating continuous refinement of processes and systems [29].

Integrated QCC-PDCA Methodology for Error Reduction

Phase 1: QCC Formation and Planning (P of PDCA)

QCC Team Composition: Establish a cross-functional team comprising 5-8 members representing relevant specialties – laboratory technicians, quality assurance officers, data analysts, and research scientists [30] [28]. Including diverse perspectives ensures comprehensive understanding of the QC rejection issues.

Problem Definition and Baseline Measurement:

- Clearly define "false rejection rate" as a key performance indicator

- Collect historical QC data to establish baseline false rejection percentages

- Document current QC procedures, including control rules, number of control measurements (N), and acceptance criteria [1]

Analytical Tools Application:

- Flowchart Analysis: Map the entire QC process from sample preparation to result reporting [30]

- Pareto Analysis: Identify the most significant factors contributing to false rejections using the 80/20 principle [30]

- Cause-and-Effect Analysis: Utilize fishbone diagrams to systematically identify root causes of unnecessary rejections [30]

Phase 2: Implementation and Data Collection (D of PDCA)

Targeted Interventions: Based on root cause analysis, implement specific improvements to QC procedures:

- QC Rule Optimization: Replace high false rejection rules (e.g., 1₂s with N≥2 showing 9-18% Pfr) with more appropriate multirule procedures (e.g., 1₃s/2₂s/R₄s with ≤5% Pfr) [1]

- Control Measurement Strategy: Adjust the number of control measurements (N) based on quality goals and error detection requirements

- Procedure Standardization: Develop and implement standardized operating procedures (SOPs) for QC evaluation and troubleshooting [30]

Staff Training and Engagement:

- Conduct specialized training on proper QC techniques and interpretation

- Establish specimen collection liaisons for timely communication [30]

- Implement quality control teams to oversee specimen quality before testing [30]

Phase 3: Performance Monitoring and Analysis (C of PDCA)

Data Collection and Statistical Analysis:

- Monitor false rejection rates (Pfr) daily using statistical process control charts

- Track error detection capabilities (Ped) to ensure maintained sensitivity to genuine problems

- Utilize statistical software (e.g., SPSS) for trend analysis and significance testing [30]

Performance Metrics Evaluation: Compare key performance indicators against baseline measurements and established targets:

Table 1: Key Performance Indicators for QC Optimization

| Metric | Definition | Target | Measurement Method |

|---|---|---|---|

| Probability for False Rejection (Pfr) | Probability of rejecting an analytical run when no error exists | ≤5% [1] | Statistical analysis of stable process data |

| Probability for Error Detection (Ped) | Probability of detecting genuine analytical errors | ≥90% [1] | Challenge testing with introduced errors |

| Specimen Rejection Rate | Percentage of specimens rejected due to pre-analytical errors | Laboratory-specific benchmark | Laboratory information system tracking |

| Process Sigma Level | Overall process capability | Industry benchmark | Statistical calculation of process capability |

Phase 4: Standardization and Continuous Improvement (A of PDCA)

Standardization of Successful Interventions:

- Document and implement refined QC procedures as standard operating protocols

- Establish ongoing monitoring systems with defined review cycles

- Integrate successful changes into the quality management system [28]

Continuous Improvement Cycle:

- Address unresolved issues in subsequent PDCA cycles

- Share best practices across departments and facilities

- Regularly review and update QC procedures based on performance data and emerging technologies

Troubleshooting Guide: Common QC Challenges and Solutions

Table 2: Troubleshooting Common QC Implementation Challenges

| Problem | Potential Causes | Corrective Actions | Preventive Measures |

|---|---|---|---|

| High False Rejection Rates | Overly sensitive control rules (e.g., 1₂s with N>1) [1] | Implement multirule procedures with lower Pfr | Conduct QC validation studies before implementation |

| Inconsistent Error Detection | Insufficient control measurements (N) [1] | Increase N based on quality requirements | Perform power function analysis to determine optimal N |

| Poor Staff Compliance with QC Procedures | Inadequate training, unclear instructions [30] | Provide hands-on training, appoint QC liaisons | Establish clear SOPs with visual aids |

| Variable Pre-analytical Quality | Lack of standardized collection procedures [30] | Implement standardized collection protocols | Establish specimen collection liaisons |

Experimental Protocols for QC Procedure Validation

Protocol 1: Establishing Baseline False Rejection Rates

Purpose: To determine the current probability for false rejection (Pfr) of existing QC procedures.

Materials and Equipment:

- Historical QC data (minimum 30 days)

- Statistical analysis software (e.g., SPSS, R)

- QC validation worksheets

Procedure:

- Collect at least 30 consecutive days of stable QC data

- Apply current control rules to this data set

- Count the number of rejection signals generated; because the data set is stable, treat every signal as a false rejection

- Calculate Pfr as: (Number of false rejections / Total number of runs) × 100

- Compare calculated Pfr with theoretical expectations [1]

Interpretation: Pfr > 5% indicates need for procedure optimization [1]
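Where historical data are held electronically, the counting steps of this protocol can be scripted. In the sketch below the "historical" runs are simulated stable data (hypothetical mean 100, SD 2, N=2), and `rule_1_2s` is a hypothetical stand-in for whatever rule is currently in use:

```python
import random


def to_z(values, mean, sd):
    """Convert raw control values to SD units using the established mean and SD."""
    return [(v - mean) / sd for v in values]


def rule_1_2s(z_scores):
    """Example current rule: reject if any result exceeds 2 SD."""
    return any(abs(z) > 2.0 for z in z_scores)


def baseline_pfr_percent(runs, mean, sd, rule):
    """Pfr% = (rejections / total runs) x 100 for a stable data set.

    Because the data are assumed stable, every rejection counts as false.
    """
    rejections = sum(rule(to_z(run, mean, sd)) for run in runs)
    return rejections / len(runs) * 100.0


# Simulated stand-in for stable historical QC data (hypothetical values).
random.seed(1)
MEAN, SD = 100.0, 2.0
runs = [[random.gauss(MEAN, SD) for _ in range(2)] for _ in range(5000)]

pfr = baseline_pfr_percent(runs, MEAN, SD, rule_1_2s)
print(f"Observed Pfr: {pfr:.1f}%")
```

For 1₂s with N=2 the result should land near the theoretical 8.9% expected under Gaussian assumptions. On real data, an observed Pfr well above the theoretical value can indicate that the SD used to set control limits underestimates the current imprecision.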

Protocol 2: Evaluating Error Detection Capability

Purpose: To verify the probability for error detection (Ped) of proposed QC procedures.

Materials and Equipment:

- Computer simulation software or stable process data

- Quality requirements (allowable total error)

- Statistical power function curves

Procedure:

- Define medically important systematic and random errors

- Introduce simulated errors of increasing magnitude into stable baseline data

- Apply proposed QC rules to challenged data

- Calculate Ped as: (Number of errors detected / Total errors introduced) × 100

- Verify Ped ≥ 90% for critical errors [1]

Interpretation: Ped < 90% for critical errors requires procedure modification.
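If dedicated simulation software is unavailable, the challenge procedure can be approximated with a small Monte Carlo simulation. The sketch below rests on stated assumptions: results are generated in SD units of the stable process, systematic error is modeled as a constant shift, random error as an inflated SD, and `rule_1_3s` is a hypothetical stand-in for the proposed rule:

```python
import random


def rule_1_3s(z_scores):
    """Example proposed rule: reject if any result exceeds 3 SD."""
    return any(abs(z) > 3.0 for z in z_scores)


def simulate_ped_percent(rule, n_controls, shift_sd=0.0, sd_factor=1.0,
                         n_runs=10000, seed=42):
    """Estimate Ped% by introducing an error into simulated stable runs.

    shift_sd: systematic error, in multiples of the stable SD.
    sd_factor: random error, as a multiple of the stable SD (1.0 = none added).
    """
    rng = random.Random(seed)
    detected = 0
    for _ in range(n_runs):
        run = [shift_sd + sd_factor * rng.gauss(0.0, 1.0) for _ in range(n_controls)]
        detected += rule(run)
    return detected / n_runs * 100.0


for shift in (0.0, 1.0, 2.0, 3.0, 4.0):
    ped = simulate_ped_percent(rule_1_3s, n_controls=2, shift_sd=shift)
    print(f"Shift {shift:.0f} SD: Ped = {ped:.1f}%")
```

The shift at which Ped first reaches 90% can then be compared with the critical error defined in the first step; for 1₃s with N=2, Ped at a 3 SD shift is only about 75% in this model.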

Essential Research Reagent Solutions for QC Studies

Table 3: Essential Materials for QC Optimization Research

| Item | Specifications | Application in QC Research |

|---|---|---|

| Stable Control Materials | Long-term stability, commutable with patient samples | Baseline establishment, false rejection studies |

| Computer Simulation Software | Capable of incorporating biological variation, error simulation | Error detection evaluation, power function analysis |

| Statistical Analysis Package | SPSS, R, or equivalent with quality control modules | Data analysis, trend identification, significance testing |

| Quality Control Documentation System | Electronic with audit trail capability | SOP management, change control, data integrity |

| Process Mapping Tools | Visual workflow software | Process analysis, bottleneck identification |

Case Study: Successful Implementation in Laboratory Medicine

A recent study demonstrated the effective application of QCC-PDCA methodology to reduce specimen rejection rates in a hospital clinical laboratory [30]. The initiative followed structured PDCA phases:

Planning Phase: The QCC team analyzed rejection causes using Pareto analysis, identifying that lack of sample collection information (48.7%) and blood clotting (29.1%) accounted for nearly 80% of rejections [30].

Implementation Phase: Targeted interventions included appointing specimen collection liaisons, establishing quality control teams, and providing specialized training on blood collection procedures [30].

Results: The monthly specimen rejection rate decreased significantly from 1.13% to 0.27% (p<0.001), demonstrating the methodology's effectiveness in improving quality while reducing unnecessary rejections [30].

The integrated QCC-PDCA methodology provides a robust framework for systematically reducing false rejection rates while maintaining high error detection capability in laboratory and pharmaceutical settings. Through structured team-based problem solving, data-driven decision making, and continuous improvement cycles, organizations can optimize their quality control procedures to enhance efficiency, reduce costs, and maintain high-quality standards. The case examples and protocols provided offer practical guidance for implementation across various research and development environments.

Leveraging Six Sigma Metrics for QC Procedure Validation and Performance Assessment

Technical Support Center

Troubleshooting Guides

Guide 1: Addressing High False Rejection Rates (Pfr)

Problem: Quality control procedures are yielding unacceptably high false rejection rates, leading to increased reagent costs and labor for unnecessary reruns.

Symptoms:

- Frequent out-of-control flags even when no analytical error is present

- Increased costs from repeated control measurements and patient sample reruns

- Decreased laboratory efficiency and prolonged turnaround times

Investigation and Resolution:

| Step | Action | Expected Outcome |

|---|---|---|

| 1. Calculate Sigma Performance | Determine sigma metrics for problematic analytes using the formula σ = (TEa% - Bias%) / CV%, with Bias% taken as an absolute value [25] [23] [31]. | Identify which parameters have sigma values < 4, indicating inherently unstable processes requiring different QC rules. |

| 2. Evaluate Current QC Rules | Assess whether uniform QC rules (e.g., 1₂s) are applied to all tests regardless of performance [23]. | Recognition that high-performing tests (σ ≥ 6) are being subjected to overly sensitive control rules. |

| 3. Implement Risk-Based QC | Apply multistage QC strategy: Use "startup" design with high Ped (>90%) initially, then "monitor" design with low Pfr (≤5%) for continuous operation [23]. | Reduced false rejections during routine monitoring while maintaining error detection capability. |

| 4. Validate New QC Strategy | Use power function graphs to verify new QC rules meet predefined Ped and Pfr thresholds [23]. | Confirmation that optimized strategy maintains quality while reducing false rejections. |

Verification: Monitor false rejection rates weekly after implementation. Target reduction of Pfr to ≤5% [23].
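Power function graphs plot Ped against the size of a systematic shift. For single-rule procedures the curve has a closed form under Gaussian assumptions, and the critical shift is commonly taken as ΔSEcrit = σ - 1.65 (the Westgard convention). The sketch below, with hypothetical function names, evaluates three candidate 1ₖs rules for a hypothetical sigma-5 method; multirule procedures generally need simulation instead:

```python
from math import erf, sqrt


def normal_cdf(z: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + erf(z / sqrt(2.0)))


def ped_single_rule(k: float, n: int, shift_sd: float) -> float:
    """Probability that a 1_ks rule with n controls flags a run shifted by
    shift_sd SDs (independent Gaussian results). At shift 0 this equals Pfr."""
    p_inside = normal_cdf(k - shift_sd) - normal_cdf(-k - shift_sd)
    return 1.0 - p_inside ** n


def critical_shift(sigma: float) -> float:
    """Critical systematic error in SD units: delta_SE_crit = sigma - 1.65."""
    return sigma - 1.65


sigma = 5.0  # hypothetical method
se_crit = critical_shift(sigma)
for k in (2.5, 3.0, 3.5):
    curve = [round(ped_single_rule(k, 2, s), 2) for s in (0, 1, 2, 3, 4)]
    print(f"1_{k:g}s N=2: power curve {curve}, "
          f"Ped at SEcrit = {ped_single_rule(k, 2, se_crit):.2f}")
```

In this model, 1₂.₅s with N=2 reaches a Ped of about 0.96 at ΔSEcrit with a Pfr of about 0.025, whereas 1₃.₅s with N=2 reaches only about 0.69, which illustrates the trade-off the validation step is meant to expose.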

Guide 2: Managing Analytical Tests with Low Sigma Performance

Problem: Certain tests consistently show poor performance (sigma < 4) despite acceptable imprecision and bias.

Symptoms:

- Frequent legitimate QC failures

- Difficulty in maintaining stable performance

- Increased risk of reporting unreliable patient results

Investigation and Resolution:

| Step | Action | Expected Outcome |

|---|---|---|

| 1. Perform Root Cause Analysis | Use Quality Goal Index (QGI) to determine whether poor performance is driven primarily by imprecision (CV%) or inaccuracy (Bias%) [31]. | Clear identification of the main source of performance problem. |

| 2. Address Identified Issues | If QGI < 0.8: Focus on improving imprecision through maintenance, calibration, or reagent optimization [31]. If QGI > 1.2: Address inaccuracy through calibration verification or method comparison [31]. | Systematic improvement of the underlying performance issue. |

| 3. Implement Stricter QC | For low sigma tests (σ < 4), apply more stringent multirule QC procedures (e.g., 1₃s/2₂s/R₄s with N=4) [25]. | Better error detection capability for medically significant errors. |

| 4. Monitor Max E(Nuf) | Ensure maximum expected number of unreliable final patient results between QC events remains below 1 [23]. | Reduced risk of reporting erroneous patient results. |

Verification: Recalculate sigma metrics after improvements; monitor error detection rates for medically significant errors.
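The Quality Goal Index used in step 1 is commonly calculated as QGI = |Bias%| / (1.5 × CV%), with values between 0.8 and 1.2 usually read as a mix of imprecision and inaccuracy. A minimal sketch of that calculation, using hypothetical Bias% and CV% values:

```python
def quality_goal_index(bias_pct: float, cv_pct: float) -> float:
    """QGI = |Bias%| / (1.5 x CV%)."""
    return abs(bias_pct) / (1.5 * cv_pct)


def main_problem(qgi: float) -> str:
    """Interpret QGI using the 0.8 and 1.2 cut-offs."""
    if qgi < 0.8:
        return "imprecision"
    if qgi > 1.2:
        return "inaccuracy"
    return "imprecision and inaccuracy"


# Hypothetical (Bias%, CV%) pairs for three analytes.
for bias, cv in [(1.0, 2.0), (2.0, 1.414), (4.0, 1.0)]:
    q = quality_goal_index(bias, cv)
    print(f"Bias% = {bias}, CV% = {cv}: QGI = {q:.2f} -> {main_problem(q)}")
```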

Frequently Asked Questions (FAQs)

Q1: What are the key differences between Six Sigma and traditional Westgard rules for quality control?

A1: While traditional Westgard rules apply a uniform set of control rules across all tests, Six Sigma enables tailored QC strategies based on the specific performance of each test [31]. Six Sigma quantifies performance on a universal scale (typically 0-6σ), allowing laboratories to match QC rules to the actual robustness of each method. High sigma methods (σ ≥ 6) can use simpler QC rules, while low sigma methods (σ < 4) require more sophisticated multirule procedures [25] [31].

Q2: How can we effectively reduce costs associated with quality control without compromising quality?

A2: Implementing sigma metric-based QC optimization can significantly reduce costs while maintaining quality. One study demonstrated absolute savings of INR 750,105.27 annually by optimizing QC procedures [25]. This was achieved by reducing internal failure costs (reagents, controls, reruns) by 50% and external failure costs (incorrect diagnoses, additional confirmatory tests) by 47% through proper QC rule selection based on each test's sigma performance [25].

Q3: What is the relationship between risk management and Six Sigma in quality control planning?

A3: Six Sigma provides the quantitative framework for assessing analytical performance, while risk management principles guide the implementation of appropriate control measures based on that performance. The CLSI C24-Ed4 guidelines and ISO 15189:2022 standard require considering both analytical performance and the risk of reporting erroneous results when designing QC plans [23]. Concepts like Max E(Nuf) - the maximum expected number of unreliable final patient results - help laboratories balance quality assurance with operational efficiency [23].

Q4: How should we handle multiple comparison problems when statistically evaluating numerous tests simultaneously?

A4: When conducting multiple hypothesis tests (e.g., evaluating many analytes), false discovery rate (FDR) methods are generally preferred over familywise error rate (FWER) methods for exploratory analysis. The Benjamini-Hochberg (BH) FDR procedure provides a less conservative approach while still controlling the expected proportion of false positives among significant findings [32]. For confirmatory analyses requiring strict control of false positives, Bonferroni correction or Tukey's HSD remain appropriate [32].
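The difference between the two approaches is easiest to see side by side. The sketch below implements the Benjamini-Hochberg step-up procedure and a Bonferroni correction in plain Python; the ten p-values are hypothetical:

```python
def benjamini_hochberg(p_values: list[float], q: float = 0.05) -> list[bool]:
    """Return a reject flag per hypothesis using the Benjamini-Hochberg step-up procedure."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices by ascending p-value
    # Find the largest rank k with p_(k) <= (k / m) * q.
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            k_max = rank
    reject = [False] * m
    for idx in order[:k_max]:  # reject every hypothesis up to rank k_max
        reject[idx] = True
    return reject


def bonferroni(p_values: list[float], alpha: float = 0.05) -> list[bool]:
    """Reject when p <= alpha / m (controls the familywise error rate)."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


# Hypothetical p-values from comparing ten analytes.
p = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]
print("BH rejects:        ", sum(benjamini_hochberg(p)))
print("Bonferroni rejects:", sum(bonferroni(p)))
```

Here Bonferroni (threshold 0.05/10 = 0.005) rejects only the smallest p-value, while Benjamini-Hochberg also rejects the second (0.008 ≤ 2/10 × 0.05 = 0.01).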

Experimental Protocols and Data Presentation

Protocol 1: Sigma Metric Calculation for QC Validation

Purpose: To quantitatively assess analytical performance of laboratory tests using Six Sigma methodology [25] [31].

Materials:

- Internal Quality Control (IQC) data (minimum 20 measurements) [33]

- External Quality Assessment (EQA) data for bias calculation [31]

- Total Allowable Error (TEa) sources (CLIA, biological variation, or state-of-the-art specifications) [23]

Procedure:

- Calculate Imprecision: Compute coefficient of variation (CV%) from IQC data: CV% = (Standard Deviation / Mean) × 100 [25] [31]

- Determine Bias: Calculate percentage bias from EQA or method comparison studies: Bias% = [(Laboratory Mean - Target Value) / Target Value] × 100 [25] [31]

- Select TEa: Choose appropriate total allowable error based on intended use of test [23]

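- Compute Sigma Metric: Apply formula: σ = (TEa% - |Bias%|) / CV% [25] [31]

- Categorize Performance: Classify each test by its sigma value: ≥ 6 world-class, 5-6 good, 4-5 marginal, < 4 unacceptable [31]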

Protocol 2: Implementation of Risk-Based Multistage QC Strategy

Purpose: To establish a QC strategy that minimizes false rejections while maintaining error detection capability [23].

Materials:

- Sigma metric data for all tests

- Daily workload statistics

- QC validation software or power function graphs

Procedure:

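- Categorize Tests by Sigma Performance: Group tests into sigma bands (≥ 6, 5-6, 4-5, < 4) [31]

- Determine Sample Run Size: Use daily workload statistics to estimate the number of patient samples analyzed between QC events [23]

- Design Startup QC Rules: Select rules with high error detection (Ped ≥ 0.90) for the start of each run [23]

- Design Monitor QC Rules: Select rules with low false rejection (Pfr ≤ 0.05) for continuous operation [23]

- Validate Using Power Function Graphs: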

- Verify that selected rules meet Ped and Pfr thresholds [23]

- Adjust as necessary based on actual performance

Table 1: Sigma-Based QC Rule Selection Guidelines

| Sigma Value | Performance Category | Recommended QC Rules | Expected Pfr | Expected Ped |

|---|---|---|---|---|

| ≥ 6 | World-class [31] | 1₃.₅s N=2 [23] | < 0.01 | > 0.90 |

| 5-6 | Good [31] | 1₃s/2₂s N=4 [23] | 0.02-0.05 | 0.80-0.90 |

| 4-5 | Marginal [31] | 1₃s/2₂s/R₄s N=4 [25] [23] | 0.03-0.06 | 0.70-0.85 |

| < 4 | Unacceptable [31] | Multirule with increased frequency [25] | > 0.05 | < 0.70 |

Table 2: Cost-Benefit Analysis of Sigma-Based QC Optimization

| Cost Category | Before Optimization | After Optimization | Reduction (%) |

|---|---|---|---|

| Internal Failure Costs (INR) [25] | 1,003,616.16 | 501,808.08 | 50% |

| External Failure Costs (INR) [25] | 374,205.60 | 187,102.80 | 47% |

| Total Annual Savings (INR) [25] | - | 750,105.27 | - |

| False Rejection Rate [23] | > 5% | ≤ 5% | > 50% |

Visualizations

Six Sigma QC Implementation Workflow

Multistage QC Strategy Diagram

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Six Sigma QC Implementation

| Item | Function | Application Notes |

|---|---|---|

| Third-Party QC Materials [25] [31] | Independent assessment of analytical performance without manufacturer bias | Use same lot for extended period to minimize variation; Biorad Unity recommended [25] |

| Multichem S Plus & U Controls [23] | Multianalyte quality control for clinical chemistry analyzers | Provides multiple concentration levels for precision and accuracy evaluation |

| PreciControl CARD, PCT, TN [23] | Specialized controls for specific testing platforms | Platform-specific controls ensure optimal performance verification |

| EQA/PT Samples [31] | External verification of accuracy and bias estimation | Use samples with concentrations comparable to IQC materials [31] |

| Calibrators [31] | Establishment of accurate measurement scales | Use manufacturer-matched calibrators for optimal performance |

| Six Sigma Calculation Software [25] | Automated sigma metric computation and QC rule selection | Biorad Unity 2.0 software enables efficient QC validation [25] |

In the context of optimizing quality control procedures to reduce false rejection rates in research, Root Cause Analysis (RCA) provides a systematic framework for identifying the fundamental sources of errors. False rejections, where acceptable samples or results are incorrectly flagged as erroneous, can significantly impede research progress, consume valuable resources, and compromise data integrity. For researchers, scientists, and drug development professionals, implementing structured RCA tools is paramount for enhancing the reliability and efficiency of experimental workflows. This guide focuses on two powerful RCA tools—the Fishbone Diagram and Pareto Analysis—providing detailed methodologies for their application in a research setting to pinpoint and eliminate the root causes of false rejections and other experimental errors.

Understanding the Core Tools

The Fishbone Diagram

Also known as an Ishikawa or cause-and-effect diagram, a Fishbone Diagram is a visual tool that helps teams systematically identify and categorize all potential causes of a problem (the effect) [34]. Its structure resembles a fish skeleton, with the problem statement at the "head" and potential causes branching off from the central "spine" into major categories [34]. This tool is exceptionally valuable for structuring brainstorming sessions and ensuring no potential source of error is overlooked, making it ideal for investigating complex issues like false rejection rates where multiple factors may be involved.

Pareto Analysis

A Pareto Chart is a bar graph that ranks factors related to a problem in decreasing order of frequency or impact [35]. It is based on the Pareto Principle, often called the 80/20 rule, which suggests that roughly 80% of problems are due to 20% of the causes [36]. By visually highlighting the most significant factors, it allows research teams to prioritize their efforts on the few causes that will have the greatest impact on reducing false rejections, thereby optimizing resource allocation and improvement time.
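The ranking and cumulative-percentage logic behind a Pareto chart can be sketched as follows. The error categories and counts are hypothetical, and the 80% cut-off is the conventional Pareto threshold rather than a fixed rule:

```python
def pareto_table(counts: dict[str, int]) -> list[tuple[str, int, float, float]]:
    """Rank causes by frequency and add percentage and cumulative percentage."""
    total = sum(counts.values())
    rows, cumulative = [], 0
    for cause, n in sorted(counts.items(), key=lambda kv: kv[1], reverse=True):
        cumulative += n
        rows.append((cause, n, 100.0 * n / total, 100.0 * cumulative / total))
    return rows


def vital_few(rows, threshold: float = 80.0) -> list[str]:
    """Smallest set of top-ranked causes whose cumulative share reaches the threshold."""
    selected = []
    for cause, _, _, cumulative_pct in rows:
        selected.append(cause)
        if cumulative_pct >= threshold:
            break
    return selected


# Hypothetical tally of false-rejection causes over one month.
tally = {"Calibration Drift": 30, "Peak Integration Error": 52,
         "Contaminated Blank": 11, "Other": 7}
rows = pareto_table(tally)
for cause, n, pct, cum in rows:
    print(f"{cause:<24}{n:>4}{pct:>7.1f}%{cum:>7.1f}%")
print("Focus first on:", vital_few(rows))
```

With these counts, the top two causes account for 82% of false rejections, so they would be the first targets for corrective action.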

Detailed Methodologies and Experimental Protocols

Protocol for Creating and Using a Fishbone Diagram

The following procedure, adapted from quality management best practices, can be used to investigate the root causes of false rejection rates in your research processes [34].

Step 1: Define the Problem Statement

Convene a team with firsthand knowledge of the process. Collaboratively agree on a clear and specific problem statement. For example: "15% false rejection rate in HPLC analysis of compound X during Q3 quality checks." Write this problem statement in a box on the right side of a whiteboard or digital canvas; this is the "fish's head."

Step 2: Identify Major Cause Categories

Draw a horizontal arrow (the "spine") pointing to the problem statement. Then, decide on the main categories of causes. While Ishikawa's original "6 Ms" (Materials, Machinery, Methods, Measurement, Manpower, Mother Nature) are a common starting point, adapt them to your research context [34]. For a laboratory setting, relevant categories might be:

- Methods: Standard Operating Procedures (SOPs), protocols, techniques.

- Materials: Reagents, solvents, samples, consumables.

- Equipment: Instruments (e.g., HPLC, mass spectrometer), calibration, maintenance.

- Measurement: Data analysis algorithms, acceptance criteria, threshold settings.

- Personnel: Training, technique, experience.

- Environment: Laboratory temperature, humidity, lighting, contamination.

Draw these categories as branches emanating from the main spine.

Step 3: Brainstorm Potential Causes. For each category, brainstorm all possible causes that could contribute to the false rejection rate. Ask "Why does this happen?" for each category. Be succinct in your descriptions.

- Example for "Methods": "Sample preparation time exceeds SOP specification."

- Example for "Measurement": "Calibration curve generated with degraded standard."

Write each cause as a smaller branch off the relevant main category branch.

Step 4: Drill Down to Root Causes. For each cause identified, ask "Why?" again to delve deeper. This helps move from symptoms to root causes.

- Example:

  - Cause: "Sample preparation time exceeds SOP specification."

  - Why? "Centrifuge is frequently overloaded with samples."

  - Why? "Only one centrifuge is available for high-throughput experiments."

This final point is a more fundamental, addressable root cause. Add these sub-causes as smaller branches, creating layers of detail.
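Once the branches and why-chains from Steps 2 to 4 are written down, they form a small tree, and reading off the deepest answer on each branch gives the current candidate root causes. The sketch below assumes a hypothetical nested-dictionary layout (category, then surface cause, then its chain of "Why?" answers, shallowest first) and a hypothetical helper named `deepest_causes`; neither is taken from the source.

```python
from typing import Dict, List, Tuple

# Hypothetical layout: category -> {surface cause: ["Why?" answers, shallowest first]}.
Fishbone = Dict[str, Dict[str, List[str]]]


def deepest_causes(diagram: Fishbone) -> List[Tuple[str, str, str]]:
    """Return (category, surface cause, deepest answer) for every cause branch.

    The last answer in each why-chain is the current candidate root cause; a cause
    with no why-chain yet is returned unchanged, flagging it for further drilling.
    """
    results = []
    for category, causes in diagram.items():
        for cause, why_chain in causes.items():
            deepest = why_chain[-1] if why_chain else cause
            results.append((category, cause, deepest))
    return results


# The worked example from Steps 3 and 4, encoded in this layout.
diagram: Fishbone = {
    "Methods": {
        "Sample preparation time exceeds SOP specification.": [
            "Centrifuge is frequently overloaded with samples.",
            "Only one centrifuge is available for high-throughput experiments.",
        ],
    },
    "Measurement": {
        "Calibration curve generated with degraded standard.": [],
    },
}

for category, cause, deepest in deepest_causes(diagram):
    print(f"[{category}] {cause} -> {deepest}")
```

A branch whose deepest answer is still the surface cause (here, the "Measurement" entry) is a reminder that Step 4 has not yet been applied to it.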

Step 5: Analyze and Prioritize. Once all ideas are exhausted, analyze the diagram. Identify causes that appear repeatedly or those that the team agrees are most likely to be significant. These become the candidates for further investigation and data collection. The final output provides a comprehensive map of all suspected causes.
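Spotting causes that "appear repeatedly" across branches can be made mechanical once the brainstorm is recorded as lists per category. The sketch below is a minimal illustration: the helper `repeated_causes`, the light text normalisation it applies, and the example causes are all hypothetical, and real brainstorm wording usually needs a human to merge near-duplicates first.

```python
from collections import Counter
from typing import Dict, List


def repeated_causes(diagram: Dict[str, List[str]], min_count: int = 2) -> List[str]:
    """Return causes listed under at least `min_count` categories, most frequent first."""
    counts: Counter = Counter()
    for causes in diagram.values():
        # Normalise case and surrounding whitespace, and count each cause once per category.
        counts.update({cause.strip().lower() for cause in causes})
    return [cause for cause, n in counts.most_common() if n >= min_count]


# Hypothetical brainstorm output; the cause wording is illustrative only.
brainstorm = {
    "Materials": ["Degraded reference standard", "Expired solvent lot"],
    "Measurement": ["degraded reference standard ", "Acceptance limits set too tight"],
    "Equipment": ["Overloaded centrifuge"],
}
print(repeated_causes(brainstorm))  # ['degraded reference standard']
```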

Protocol for Creating and Using a Pareto Chart

This protocol guides you through creating a Pareto Chart to prioritize the causes identified from a tool like the Fishbone Diagram [35].

Step 1: Define the Data Categories and Measurement. Decide on the categories you will measure (e.g., types of errors leading to false rejections: "Peak Integration Error," "Calibration Drift," "Contaminated Blank," etc.). Choose an appropriate measurement, such as frequency (count of occurrences) or cost (lost time or materials). Define the data collection period (e.g., one week, one month, or 100 experimental runs).

Step 2: Collect and Tally Data. Create a check sheet to collect data over the predetermined period. Tally the number of occurrences or the cost associated with each error category.
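If the check sheet is kept electronically, the tally is a one-liner. The sketch below assumes a hypothetical log with one entry per false rejection, plus a hypothetical cost log of minutes lost, to show both measurement options from Step 1 side by side.

```python
from collections import Counter

# Hypothetical check-sheet entries: one record per false rejection in the collection period.
events = [
    "Peak Integration Error", "Calibration Drift", "Peak Integration Error",
    "Contaminated Blank", "Peak Integration Error", "Calibration Drift",
]
frequency = Counter(events)  # tally by count of occurrences

# Hypothetical cost records: (category, minutes of analyst time lost for that occurrence).
cost_log = [
    ("Peak Integration Error", 10), ("Calibration Drift", 90),
    ("Peak Integration Error", 15), ("Contaminated Blank", 40),
]
cost: Counter = Counter()
for category, minutes in cost_log:
    cost[category] += minutes  # tally by cost instead of count

print(frequency.most_common())
print(cost.most_common())
```

With these illustrative numbers, "Peak Integration Error" leads by frequency while "Calibration Drift" leads by cost, so the measurement chosen in Step 1 can change which category heads the chart.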

Step 3: Construct the Bar Chart. List the categories in descending order of frequency/cost on the horizontal (x) axis. The vertical (y) axis on the left represents the count or cost. Construct a bar for each category, with the height corresponding to its measured value.

Step 4: Add the Cumulative Line (Optional but Recommended). Calculate the percentage each category contributes to the total. Then, calculate the cumulative percentage from left to right.

- Example: If "Peak Integration Error" represents 50 out of 100 total errors, it contributes 50%, so the cumulative point above the first bar sits at 50%.

- If "Calibration Drift" is 25, its percentage is 25%, and its cumulative percentage is 50% + 25% = 75%. Add a dot above the second bar at the 75% mark.

Continue this for all categories, then connect the dots with a line graph. Add a second vertical axis on the right representing the cumulative percentage (0% to 100%).
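Written as a formula, with categories sorted so that the counts satisfy $n_1 \ge n_2 \ge \dots \ge n_K$ (symbols introduced here for illustration), each category's share $P_k$ and the cumulative line $C_k$ are:

```latex
N = \sum_{i=1}^{K} n_i, \qquad
P_k = 100\% \times \frac{n_k}{N}, \qquad
C_k = \sum_{i=1}^{k} P_i = 100\% \times \frac{\sum_{i=1}^{k} n_i}{N}

% Worked example from the text (N = 100):
C_1 = 100\% \times \frac{50}{100} = 50\%, \qquad
C_2 = 100\% \times \frac{50 + 25}{100} = 75\%
```

The last point, $C_K$, always equals 100%, which is why the right-hand axis runs from 0% to 100%.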

Step 5: Analyze the Chart. The Pareto Chart will visually identify the "vital few" categories that account for the majority of the problem. Focus your improvement efforts on these top categories for the greatest return on investment.
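Steps 3 to 5 reduce to sorting, a running sum, and a cut-off. The sketch below uses the counts from the Step 4 example (50 and 25 out of 100) plus two hypothetical categories to make up the total; the helper names and the 80% cut-off in `vital_few` are assumptions, and the cut-off can be tuned since the 80/20 rule is only a guideline.

```python
from typing import Dict, List, Tuple

Row = Tuple[str, float, float, float]  # (category, value, percent, cumulative percent)


def pareto_table(counts: Dict[str, float]) -> List[Row]:
    """Sort categories by descending value and attach percent and cumulative percent."""
    total = sum(counts.values())
    if total <= 0:
        raise ValueError("counts must sum to a positive number")
    rows: List[Row] = []
    cumulative = 0.0
    for category, value in sorted(counts.items(), key=lambda kv: kv[1], reverse=True):
        percent = 100.0 * value / total
        cumulative += percent
        rows.append((category, value, percent, cumulative))
    return rows


def vital_few(rows: List[Row], threshold: float = 80.0) -> List[str]:
    """Leading categories needed for the cumulative percentage to first reach `threshold`."""
    selected = []
    for category, _value, _percent, cumulative in rows:
        selected.append(category)
        if cumulative >= threshold:
            break
    return selected


# 50 and 25 come from the Step 4 example; the remaining two counts are hypothetical.
error_counts = {
    "Calibration Drift": 25,
    "Other": 10,
    "Peak Integration Error": 50,
    "Contaminated Blank": 15,
}
rows = pareto_table(error_counts)
for category, value, percent, cumulative in rows:
    print(f"{category:<25}{value:>5}{percent:>8.1f}%{cumulative:>8.1f}%")
print(vital_few(rows))  # ['Peak Integration Error', 'Calibration Drift', 'Contaminated Blank']
```

The printed rows are exactly the bar heights and cumulative-line points from Steps 3 and 4, so the same table can feed any plotting tool.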

Troubleshooting Guides and FAQs

FAQ 1: When should I use a Fishbone Diagram versus a Pareto Chart?

These tools are often used together, not as alternatives [37]. Use a Fishbone Diagram during the initial brainstorming phase when you need to explore all possible causes of a complex problem with multiple potential sources [34]. Use a Pareto Chart after you have identified potential causes and collected data, to help you prioritize which of those causes to address first [35]. A common workflow is to use the Fishbone Diagram to generate a list of hypotheses, then collect data on the frequency of those issues, and finally use a Pareto Chart to visualize which hypotheses are the most significant.

FAQ 2: What are the most common pitfalls when using the Fishbone Diagram and how can I avoid them?

- Pitfall 1: Vague Problem Statement. A statement like "bad results" is too broad.

- Solution: Be specific and quantitative. For example: "False rejection rate exceeds 5% threshold due to unexplained variance in absorbance readings."

- Pitfall 2: Blaming People. The "Personnel" category can easily devolve into blaming individuals.

- Solution: Focus on process-oriented root causes behind human error, such as "inadequate training on new software," "ambiguous SOP," or "high workload leading to fatigue," rather than "technician is careless."

- Pitfall 3: Stopping at Symptoms. The team may list symptoms (e.g., "instrument gives noisy baseline") rather than root causes.

- Solution: Persistently use the "5 Whys" technique [38]. Ask "why" repeatedly until you reach a fundamental process or system failure. For example: Noisy baseline -> Why? Unstable power supply -> Why? Lack of a dedicated voltage regulator for sensitive equipment.

FAQ 3: The 80/20 rule didn't hold in my Pareto Analysis. Did I do something wrong?

Not necessarily. The 80/20 ratio is a guideline, not a rigid law [36]. The core purpose of the Pareto Chart is to separate the "significant few" from the "trivial many." Your analysis is still valid if it clearly shows that a small number of categories are responsible for a disproportionately large share of the problem, even if the ratio is 70/30 or 90/10. The chart's value is in its ability to direct your attention objectively.
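To see how far your own data departs from 80/20, you can compute the share of the problem contributed by the top 20% of categories. The helper `top_share` and the ten category counts below are hypothetical illustrations.

```python
from typing import Dict


def top_share(counts: Dict[str, int], top_fraction: float = 0.2) -> float:
    """Percent of the total contributed by the top `top_fraction` of categories."""
    values = sorted(counts.values(), reverse=True)
    # Nearest whole number of categories, but always at least one.
    k = max(1, round(top_fraction * len(values)))
    return 100.0 * sum(values[:k]) / sum(values)


# Hypothetical tally over ten error categories (100 false rejections in total).
counts = dict(zip("ABCDEFGHIJ", [40, 25, 10, 8, 5, 4, 3, 2, 2, 1]))
print(f"Top 20% of categories -> {top_share(counts):.0f}% of false rejections")
```

Here the top 2 of 10 categories account for 65% of false rejections: not 80/20, but still a clear "vital few" worth targeting first.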

FAQ 4: How can I ensure my RCA leads to sustainable solutions and not just a one-time fix?

Identifying the root cause is only half the battle. To create a sustainable solution:

- Verify the Root Cause: Before implementing a change, confirm the suspected root cause with data. For example, if you suspect a specific reagent lot is the problem, test with a new lot and compare results.

- Implement Corrective Actions: Design actions that eliminate the root cause, such as updating an SOP, modifying a calibration schedule, or implementing new training.

- Implement Preventive Actions: Modify management systems to prevent recurrence, such as adding a reagent quality check upon arrival or installing a voltage regulator.

- Monitor and Track: After implementation, continue to monitor the false rejection rate to ensure it remains low. Use control charts to track process performance over time.
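Because a false rejection rate is a proportion (rejected runs out of total runs), one reasonable chart for the monitoring step is a p-chart with 3-sigma limits $\bar{p} \pm 3\sqrt{\bar{p}(1-\bar{p})/n}$. This choice is an assumption rather than something the source prescribes, and the weekly counts and helper names below are hypothetical.

```python
import math
from typing import List, Tuple


def p_chart(rejections: List[int], runs: List[int]) -> Tuple[float, List[Tuple[float, float]]]:
    """Centre line and per-period 3-sigma limits for a p-chart of false rejection proportions."""
    if len(rejections) != len(runs) or not runs:
        raise ValueError("need one run count per period")
    p_bar = sum(rejections) / sum(runs)  # pooled false rejection proportion
    limits = []
    for n in runs:
        sigma = math.sqrt(p_bar * (1 - p_bar) / n)  # binomial standard error for n runs
        # Clamp to [0, 1] because a proportion cannot leave that range.
        limits.append((max(0.0, p_bar - 3 * sigma), min(1.0, p_bar + 3 * sigma)))
    return p_bar, limits


def flag_periods(rejections: List[int], runs: List[int]) -> List[int]:
    """Indices of periods whose observed proportion falls outside its control limits."""
    _, limits = p_chart(rejections, runs)
    return [
        i
        for i, (r, n, (lower, upper)) in enumerate(zip(rejections, runs, limits))
        if not lower <= r / n <= upper
    ]


# Hypothetical weekly data: false rejections and total analytical runs per week.
weekly_rejections = [4, 3, 5, 4, 15]
weekly_runs = [100, 100, 100, 100, 100]
centre, _ = p_chart(weekly_rejections, weekly_runs)
print(f"centre line = {centre:.3f}")
print(flag_periods(weekly_rejections, weekly_runs))  # [4]: the last week exceeds its upper limit
```

In practice, limits are usually established from a baseline period believed to be stable and then applied to new data; pooling every week here simply keeps the sketch short.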

Data Presentation and Comparison Tables

Table 1: Comparison of Fishbone Diagram and Pareto Analysis

| Feature | Fishbone (Ishikawa) Diagram | Pareto Chart |
|---|---|---|
| Primary Purpose | Identify and categorize all potential causes of a problem [34]. | Prioritize the most significant causes based on frequency or impact [35]. |
| Nature of Tool | Qualitative, visual brainstorming tool. | Quantitative, data-driven ranking tool. |
| Best Used When | Dealing with complex problems with unknown causes; during team brainstorming sessions [34]. | You have data on error frequencies and need to decide where to focus improvement efforts [35]. |
| Typical Output | A structured diagram showing a wide range of possible causes grouped into categories. | A bar chart showing a ranked list of causes, often revealing the "vital few." |
| Key Advantage | Promotes systematic thinking and prevents overlooking potential causes [34]. | Objectively directs resources to the areas that will have the greatest impact [37]. |

Table 2: Common RCA Tools and Their Applications

| Tool | Key Advantage | Best For |
|---|---|---|
| Fishbone Diagram | Visually organizes complex relationships between potential causes [34]. | Complex problems with multiple, interconnected potential causes. |
| Pareto Chart | Prioritizes key drivers based on empirical data [35] [37]. | Identifying which issues will deliver the highest ROI when solved. |
| 5 Whys | Simple, fast analysis to drill down to a root cause [38]. | Relatively straightforward problems with a likely linear cause-and-effect chain. |
| FMEA | Proactively identifies and prevents potential failure modes [38]. | High-risk processes where prevention is critical, such as new assay development. |
| Scatter Plot | Determines if a relationship exists between two variables [39]. | Investigating potential correlations, e.g., between room temperature and rejection rate. |
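A scatter plot is often paired with a correlation coefficient to put a number on the relationship it shows. The sketch below computes Pearson's r for the table's room-temperature example; the helper `pearson_r` and the daily readings are hypothetical. A strong r flags a relationship worth investigating but does not by itself establish cause.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two samples of equal length >= 2")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("a constant series has no defined correlation")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical daily readings: room temperature (deg C) and false rejection rate (%).
room_temp_c = [19.5, 20.0, 21.0, 22.5, 24.0]
false_rejection_pct = [2.1, 2.4, 3.0, 3.8, 5.2]
print(f"r = {pearson_r(room_temp_c, false_rejection_pct):.2f}")
```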

Visualization of Methodologies

Fishbone Diagram Creation Workflow

Pareto Analysis Procedure

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and reagents commonly used in quality control research, particularly in fields like drug development, where precise and reliable results are critical. Understanding their function is essential for troubleshooting root causes related to materials.

Table 3: Key Research Reagent Solutions for Quality Control

| Item | Function in Research & Quality Control |
|---|---|
| Certified Reference Materials (CRMs) | Provides a highly characterized standard with known properties and purity. Used to calibrate instruments, validate methods, and ensure the accuracy and traceability of measurements, directly impacting false acceptance/rejection rates. |
| High-Purity Solvents & Reagents | The foundation of sample preparation and analysis. Impurities can cause interference, baseline noise, or unexpected reactions, leading to inaccurate readings and false rejections. Using HPLC or MS-grade solvents minimizes this risk. |
| Stable Isotope-Labeled Internal Standards | Used in mass spectrometry to correct for sample loss during preparation and matrix effects. By adding a known quantity of a labeled analog of the analyte, researchers can achieve more precise and accurate quantification, reducing variability-based rejections. |
| Quality Control (QC) Samples | Prepared samples with known concentrations of the analyte(s) of interest. They are run alongside experimental samples to monitor the performance of the assay. Trends in QC data can signal instrument drift, reagent degradation, or other issues causing false results. |
| Enzymes & Biological Reagents | In biochemical assays, the specificity and activity of enzymes (e.g., proteases, kinases) are critical. Using reagents from qualified suppliers with documented performance data ensures consistent assay behavior and reduces run-to-run variability. |

Total Quality Control (TQC) is a comprehensive management approach that focuses on improving quality at every organizational level and process stage, emphasizing defect prevention over detection and continuous improvement [40]. In research and drug development, a critical objective of TQC is to reduce false rejection rates—instances where acceptable data or products are incorrectly flagged as failures. High false rejection rates lead to wasted resources, unnecessary troubleshooting, and delayed project timelines [41]. Optimizing TQC requires a balanced integration of statistical components (quantitative process control) and non-statistical components (human factors and systematic processes) to ensure reliability and efficiency [40] [41].

Troubleshooting Guides

Troubleshooting High False Rejection Rates in QC Results

Problem: The quality control (QC) system frequently signals out-of-control runs, and many of these signals may be false rejections rather than genuine analytical errors.

Investigation & Resolution Protocol:

Step 1: Verify the Signal: Determine if the out-of-control signal is valid or a false rejection. Consult the table below to understand the false rejection characteristics of different statistical rules [41].
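For single-rule procedures, the false rejection behaviour can be estimated directly. The sketch below assumes control results from a stable method are independent and Gaussian, so one result falls outside ±k SD with probability erfc(k/√2), and a 1_ks rule applied to N control measurements falsely rejects with probability 1 - (1 - p)^N. Multirule procedures need combined probabilities or simulation and are not covered; the function name `pfr_single_rule` is hypothetical.

```python
import math


def pfr_single_rule(k: float, n_controls: int) -> float:
    """Probability that a 1_ks rule falsely rejects a run from a stable method.

    Assumes each of the n_controls results is independent and Gaussian, so a single
    result exceeds the +/- k SD limits with probability erfc(k / sqrt(2)).
    """
    p_single = math.erfc(k / math.sqrt(2))   # two-sided tail probability beyond k SD
    return 1 - (1 - p_single) ** n_controls  # at least one of N results outside limits


for k in (2.0, 2.5, 3.0):
    for n in (1, 2, 4):
        print(f"1_{k:g}s, N={n}: Pfr = {pfr_single_rule(k, n):.3f}")
```

Under these assumptions, a 1_2s rule with N = 2 falsely rejects roughly 9% of stable runs, whereas a 1_3s rule with N = 2 falsely rejects about 0.5%. This is why widening control limits is a common lever for lowering Pfr, at some cost to Ped.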

Step 2: Systematic Root Cause Analysis: Do not automatically repeat the control test or open a new control vial without investigation, as these are common bad habits that mask underlying problems [41]. Instead, use a structured approach. The diagram below outlines the logical workflow for troubleshooting.

Figure 1: Troubleshooting high false rejection rates workflow.

Step 3: Investigate Common Causes:

- Process Calibration: Investigate if frequent recalibration is masking an instrument malfunction or sub-optimal reagent quality [41].

- Control Material: Confirm proper reconstitution, storage conditions, and expiration dates if a control issue is suspected [41].

- Operator Technique: Review adherence to standardized protocols and manufacturer's maintenance schedules [41].

Step 4: Implement and Monitor: Apply the corrective action and closely monitor key performance indicators to confirm the false rejection rate has been reduced [40].

Troubleshooting Resistance to TQC Implementation

Problem: Employee and management resistance hinders the adoption of integrated TQC strategies, undermining their effectiveness.

Investigation & Resolution Protocol:

- Step 1: Diagnose the Root Cause: Common causes include lack of understanding, fear of change, or previous negative experiences with quality initiatives [40] [42].

- Step 2: Implement Corrective Actions:

- Strong Leadership Commitment: Secure visible, unwavering commitment from senior management to drive the quality culture [40] [42].