Six Sigma vs. Traditional QC: A Strategic Guide for Enhanced Quality in Drug Development

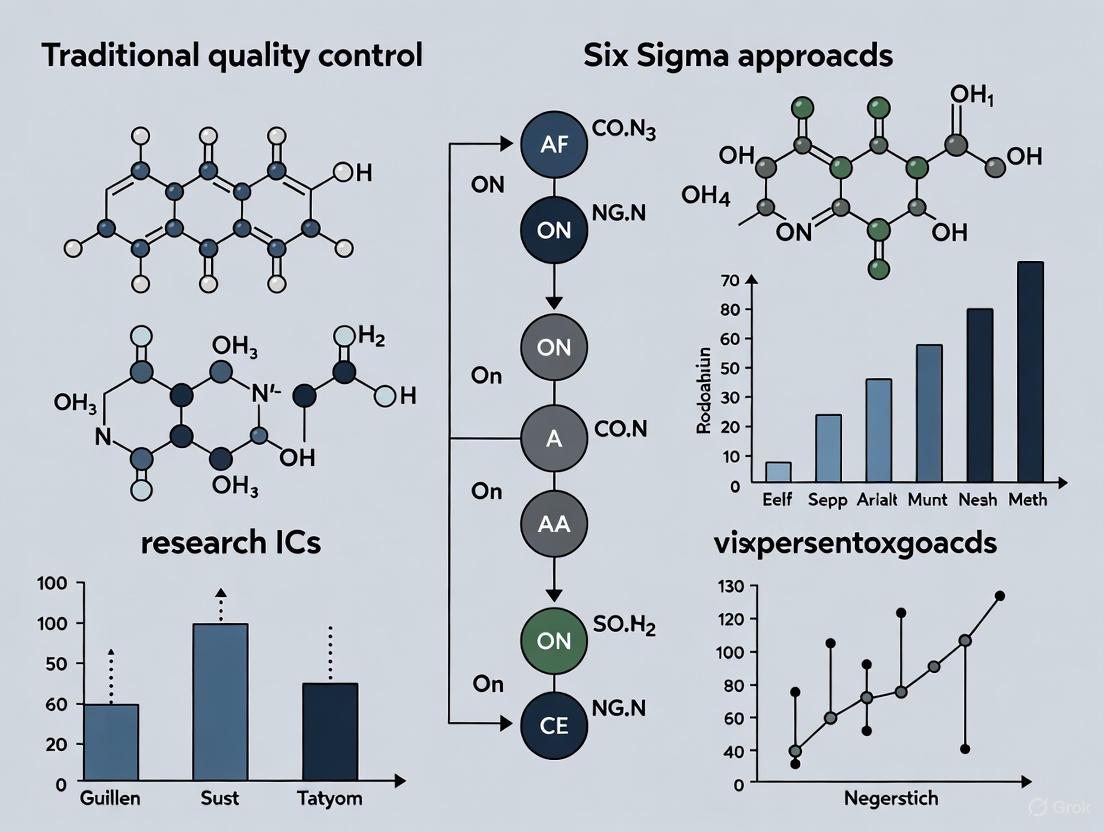

Abstract

This article provides a comprehensive comparison for researchers and drug development professionals between traditional quality control (QC) methods and the data-driven Six Sigma methodology. It explores the foundational principles of both approaches, detailing the structured DMAIC framework of Six Sigma and its application in regulated environments. The content covers practical implementation strategies, troubleshooting common pitfalls, and a direct validation of performance outcomes. By synthesizing these elements, the article offers a clear, evidence-based guide for selecting and integrating quality methodologies to improve efficiency, reduce defects, and ensure compliance in biomedical and clinical research.

Quality Control Foundations: From Traditional Inspection to Six Sigma's Data-Driven Revolution

Traditional Quality Control (QC) represents a foundational approach to managing product quality, primarily characterized by its focus on reactive detection of defects in finished products or during the production process. Unlike modern, proactive quality methodologies, traditional QC operates on the principle of identifying and rectifying problems after they have occurred [1] [2]. Its core activities are centered on inspection, sampling, and corrective actions aimed at ensuring that outputs meet predefined specifications. For decades, this model has served as the quality management backbone for manufacturing industries, ensuring that products adhere to consistent standards and specifications before they reach the customer.

The philosophy of traditional QC is fundamentally different from preventive approaches. It considers quality as an outcome to be verified rather than a characteristic to be built into the process itself. This "inspect-and-repair" paradigm aims to safeguard the customer from receiving defective products by employing a series of checks and filters at various stages of production [3]. While its methods are often superseded by more advanced, data-driven systems in contemporary settings, understanding traditional QC remains crucial as it forms the historical basis for modern quality management and is still actively used in various sectors today.

Core Principles of Traditional Quality Control

The traditional quality control framework is built upon three interdependent pillars: inspection, sampling, and a reactive problem-solving cycle. These components work in concert to screen for defects and maintain quality standards.

The Role of Inspection

Inspection is the most common and foundational method in traditional QC [1]. It involves the physical examination, testing, and measurement of products or services to detect any deviations from established standards [1] [2] [4]. This process can be conducted at different stages:

- Pre-Production Inspection: Also known as Incoming Quality Control (IQC), this occurs before manufacturing begins and focuses on verifying the quality of raw materials and components from suppliers [4].

- In-Process Inspection: These are ongoing assessments during the active manufacturing stage to identify defects or non-conformities as they occur, allowing for immediate corrective action [4].

- Final Inspection: This comprehensive evaluation is performed on finished products before they are released for shipment, covering appearance, functionality, safety, and packaging integrity [1] [4].

The inspection process is typically carried out by quality control inspectors who use tools ranging from simple visual checks and micrometers to more sophisticated measurement instruments [1] [2]. The outcomes are documented, and any identified defects are flagged for rework or disposal [2].

Sampling Techniques in Traditional QC

Given the impracticality and cost of inspecting 100% of production, especially in high-volume environments, traditional QC heavily relies on statistical sampling [1] [4]. Instead of examining every single unit, a representative sample is selected from a production lot to make inferences about the overall quality. Key sampling methods include:

- Simple Random Sampling: Each item in the lot has an equal chance of being selected, ensuring an unbiased representation [4].

- Stratified Sampling: The lot is divided into homogeneous subgroups (strata), and samples are drawn from each stratum for more targeted inspection [4].

- Acceptance Sampling: This technique uses predetermined acceptance criteria and an Acceptable Quality Level (AQL), the worst average defect rate still considered acceptable, to decide whether to accept or reject an entire lot based on the sample results [1] [4].

These sampling plans provide a balance between inspection effort and risk management, offering a cost-effective approach to quality verification [4].
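
The two selection schemes described above can be sketched with Python's standard `random` module. This is a minimal illustration under stated assumptions: the lot, its unit IDs, and the `shift` attribute used to define strata are hypothetical, and in a real plan the sample size comes from the governing sampling standard rather than being chosen ad hoc.

```python
import random
from collections import defaultdict

def simple_random_sample(lot, n, seed=None):
    """Draw n units without replacement; every unit has the same chance of selection."""
    rng = random.Random(seed)
    return rng.sample(lot, n)

def stratified_sample(lot, stratum_of, n_per_stratum, seed=None):
    """Group units into strata via stratum_of(unit), then sample n_per_stratum from each."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for unit in lot:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for key in sorted(strata):
        members = strata[key]
        # Take the whole stratum if it is smaller than the requested count.
        sample.extend(rng.sample(members, min(n_per_stratum, len(members))))
    return sample

# Hypothetical lot: 300 units tagged with the production shift that made them.
lot = [{"id": i, "shift": ("A", "B", "C")[i % 3]} for i in range(300)]
srs = simple_random_sample(lot, 20, seed=1)
strat = stratified_sample(lot, lambda u: u["shift"], 5, seed=1)
print(len(srs), len(strat))
```

Fixing the seed makes the draw reproducible, which helps when a sample selection has to be documented and re-created later.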

Reactive Problem-Solving Cycle

The problem-solving ethos in traditional QC is inherently reactive [2] [5]. The typical cycle begins only after a defect or non-conformance is identified through inspection. The sequence of activities is as follows:

- Defect Identification: Flaws are found during inspections and documented [1].

- Containment: The defective products are separated to prevent them from progressing down the line or reaching the customer.

- Corrective Action: Immediate actions are taken to address the specific issue, which may involve reworking the defective items, adjusting machinery, or re-training staff [1].

- Disposition: The non-conforming products are ultimately repaired, used as-is, downgraded, or scrapped.

This "band-aid" approach focuses on treating the symptoms (the defects) rather than investigating and eliminating the root causes of the problem [5]. The following workflow diagram illustrates this reactive cycle.

Experimental Data and Methodologies in Traditional QC

To objectively compare traditional QC with modern approaches like Six Sigma, it is essential to examine the quantitative data and experimental protocols that define its performance. The following table summarizes key performance indicators and methodologies associated with traditional QC practices.

Table 1: Key Experimental Data and Methodologies in Traditional Quality Control

| Aspect | Experimental/Methodological Approach | Typical Data Outputs & Performance Indicators |
|---|---|---|
| Defect Detection | Physical inspection and testing of products against specifications [1] [4]. | Defect rate; Proportion of defective units identified post-production. |
| Process Monitoring | Use of basic Statistical Process Control (SPC) charts to monitor process behavior over time [1] [2]. | Control charts showing process shifts or trends; Number of points outside control limits. |
| Lot Acceptance | Acceptance sampling based on Acceptable Quality Level (AQL) [1] [4]. | Lot Acceptance/Rejection rate; AQL (e.g., 2.5 defects per 100 units). |
| Cost Analysis | Tracking costs associated with inspection, rework, scrap, and warranties [4]. | Cost of Quality (COQ); Scrap and rework costs as a percentage of production cost. |

Experimental Protocol: Acceptance Sampling

A standard experimental protocol in traditional QC is the execution of an Acceptance Sampling plan to determine the fate of a production lot. The detailed methodology is as follows:

- Define AQL and Sampling Plan: Prior to inspection, the Acceptable Quality Level (AQL) is defined based on product criticality, customer requirements, and risk tolerance. A corresponding sampling plan (sample size and acceptance/rejection numbers) is selected from standard tables (e.g., ANSI/ASQ Z1.4, ISO 2859-1) [4].

- Random Sample Selection: A random sample of n items is drawn from the lot of size N [4].

- Inspection and Testing: Each unit in the sample is thoroughly inspected and tested against all defined quality and performance specifications [1] [4].

- Defect Tally and Decision: The number of defective units (d) in the sample is counted.

  - If d ≤ the acceptance number (c), the lot is accepted.

  - If d > c, the lot is rejected [4].

- Lot Disposition: Rejected lots are typically subjected to 100% inspection, where all defective items are sorted out and removed [4].

This protocol emphasizes the focus on output rather than process improvement, a hallmark of the traditional QC approach.
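
The accept/reject rule in the protocol above, and the probability that a lot of a given quality passes it, can be sketched as follows. This assumes a binomial model (sample small relative to the lot), and the plan values n = 50 and c = 2 are hypothetical rather than taken from ANSI/ASQ Z1.4 or ISO 2859-1.

```python
from math import comb

def lot_decision(d, c):
    """Single-sampling rule: accept if the defective count d <= acceptance number c."""
    return "accept" if d <= c else "reject"

def prob_accept(p, n, c):
    """Probability of accepting a lot whose true fraction defective is p.

    Binomial model: assumes the lot size N is large relative to the
    sample size n (otherwise the hypergeometric distribution applies).
    """
    return sum(comb(n, d) * p**d * (1 - p) ** (n - d) for d in range(c + 1))

n, c = 50, 2  # hypothetical plan, not read from a standard's tables
print(lot_decision(1, c), lot_decision(3, c))
for p in (0.01, 0.05, 0.10):
    print(f"p={p:.2f}  Pa={prob_accept(p, n, c):.3f}")
```

Evaluating `prob_accept` over a range of p values traces the plan's operating characteristic (OC) curve, which shows how sharply the plan separates good lots from bad ones.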

Essential Tools for Traditional QC Research and Application

Researchers and professionals implementing or studying traditional QC use a specific set of tools to conduct inspections and analyses. The following table details these essential components.

Table 2: Key Tools for Traditional QC

| Tool | Primary Function in QC Process |
|---|---|
| Control Charts [1] [2] | A graphical tool for monitoring process behavior over time to distinguish between common and special cause variation. |
| Pareto Analysis [1] | A statistical technique for identifying the most significant factors causing defects by applying the 80/20 rule. |
| Cause-and-Effect (Fishbone) Diagram [1] | A visualization tool for brainstorming and categorizing all potential causes of a quality problem to identify the root cause. |
| Check Sheets | A simple, structured form for collecting and analyzing real-time data in a systematic and easy-to-use format. |
| Measurement Instruments (e.g., Calipers, Micrometers, Vision Systems) [4] | Precision tools for conducting physical, dimensional, and visual inspections of products against specifications. |
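
The Pareto analysis listed in the table reduces to counting, sorting, and accumulating. A minimal sketch, assuming a hypothetical inspection log with one entry per defect and the conventional 80% cutoff:

```python
from collections import Counter

def pareto(defects, cutoff=0.8):
    """Rank defect categories by frequency and pick out the "vital few".

    A category counts as vital if the cumulative share of the categories
    ranked above it is still below the cutoff (0.8 for the 80/20 heuristic).
    Returns (rows, vital_few); each row is (category, count, cumulative %).
    """
    counts = Counter(defects).most_common()  # sorted by count, descending
    total = sum(n for _, n in counts)
    rows, vital_few, cumulative = [], [], 0
    for category, n in counts:
        if cumulative / total < cutoff:
            vital_few.append(category)
        cumulative += n
        rows.append((category, n, round(100 * cumulative / total, 1)))
    return rows, vital_few

# Hypothetical inspection log: one entry per defect found.
log = (["scratch"] * 42 + ["dent"] * 25 + ["misprint"] * 15
       + ["burr"] * 10 + ["stain"] * 8)
rows, vital = pareto(log)
for row in rows:
    print(*row)
print("vital few:", vital)
```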

Comparative Analysis: Traditional QC vs. Six Sigma

When framed within a broader thesis comparing quality methodologies, traditional QC stands in stark contrast to data-driven, preventive approaches like Six Sigma. The fundamental differences are systematic and philosophical, as illustrated in the following comparative diagram and table.

Table 3: Systematic Comparison of Traditional QC and Six Sigma

| Parameter | Traditional Quality Control | Six Sigma |
|---|---|---|
| Core Focus | Output (The "Y" or the defect) [5]. | Process inputs and root causes (The "X's" that cause the defect) [5]. |
| Primary Goal | Identify and remove defective products through inspection [1] [2]. | Reduce variation and prevent defects from occurring [6] [7] [5]. |
| Problem-Solving Approach | Reactive ("Band-aid" fixes on found defects) [5]. | Proactive and preventive (Structured DMAIC to eliminate root causes) [6] [7] [8]. |
| Decision Basis | Combination of data and 'gut feel' [5]. | Driven rigorously by data and statistical analysis [6] [7] [5]. |
| Methodology Structure | Lacks a formal, structured improvement deployment [5]. | Highly structured via DMAIC (Define, Measure, Analyze, Improve, Control) [7] [8]. |
| Performance Metric | Defect rate found in inspected samples. | Defects Per Million Opportunities (DPMO) and Sigma Level [8]. |
| Training | Less intensive, often focused on inspection techniques [6]. | Rigorous, tiered training (e.g., Green Belt, Black Belt) in statistical tools [6] [8]. |
| Economic Impact | Reduces cost of poor quality (rework, scrap) but retains inspection costs. | Aims for breakthrough performance gains and strengthening of the bottom line [5]. |

Empirical evidence supports the value of a systematic, data-driven approach. A two-year longitudinal study across 20 manufacturing firms found that those adopting the integrated Lean Six Sigma methodology reached a mean post-implementation defect rate of 3.18% [9]. Because the study's pre-implementation baseline is not reported alongside this figure, it shows the performance level attained rather than the size of the improvement.

Traditional Quality Control, defined by its principles of inspection, sampling, and reactive problem-solving, has played a critical role in industrial quality management. Its strength lies in its ability to serve as a final gatekeeper, preventing defective products from reaching the customer. However, its inherent limitations—including its reactive nature, focus on outputs rather than causes, and failure to drive continuous process improvement—render it insufficient as a standalone strategy in highly competitive or precision-critical fields like pharmaceutical development [5].

For researchers and scientists, this analysis clarifies that while traditional QC provides the foundational language of quality, modern operational excellence is achieved through proactive, data-driven methodologies like Six Sigma. The comparative data and structured frameworks presented herein offer a basis for making informed decisions on quality strategy, underscoring that sustainable quality and efficiency are achieved not by inspecting quality into a product, but by building it into the process through deep, statistical understanding and control.

Traditional Quality Control (QC) and Six Sigma represent two fundamentally different philosophies in quality management. Traditional QC is a reactive, detection-based system, primarily focusing on identifying and sorting defective products from the good at the end of the production line through inspections and audits [10]. In contrast, Six Sigma is a proactive, prevention-based methodology that uses statistical analysis and a structured problem-solving framework to reduce process variation and eliminate defects at their root cause [11] [12]. This shift from merely finding problems to building robust processes that prevent them from occurring is the core of the Six Sigma philosophy.

This guide objectively compares the performance of these two approaches, providing data and experimental protocols to illustrate their effectiveness in industrial and research settings, including highly regulated sectors like drug development.

Core Philosophical Differences

The distinction between these methodologies is foundational, influencing their goals, tools, and overall impact on an organization.

Traditional QC operates on the principle of detection. Its focus is on the final output, treating quality as a function of the production line's end. It is often described as "treating symptoms" [10]. This approach relies heavily on inspections, checklists, and control charts to separate conforming products from non-conforming ones after they have been produced, often leading to costly rework or scrap [13].

Six Sigma, however, is built on the principle of prevention. It views all work as processes that can be defined, measured, analyzed, improved, and controlled (DMAIC) [11]. Its goal is to achieve near-perfect quality, defined as 3.4 defects per million opportunities (DPMO), by systematically reducing process variation [12] [14]. Six Sigma posits that by controlling inputs, one can control outputs, thereby building quality into the process itself from the very beginning [11] [15].

Table: Philosophical Comparison of Traditional QC and Six Sigma

| Aspect | Traditional Quality Control | Six Sigma |
|---|---|---|
| Primary Focus | Output (Final Product) | Process |
| Core Approach | Reactive (Detection) | Proactive (Prevention) |
| Goal | Identify and remove defects | Eliminate causes of defects |
| View of Quality | A function of inspection | A function of process design |
| Primary Toolset | Inspection, sorting, check sheets | Statistical analysis, DMAIC, DOE |
| Cost Implication | Higher cost of poor quality (rework, scrap) | Lower cost of poor quality through robust design |

Quantitative Performance Comparison

The performance disparity between these methodologies becomes clear when examining defect rates, financial impact, and scope of influence.

Companies implementing Six Sigma have reported significant financial gains; for instance, Motorola attributed over $17 billion in savings to Six Sigma, while General Electric announced over $1 billion in cost savings [14]. These savings stem from a drastic reduction in the costs of poor quality, which can consume 15-20% of a company's sales revenue in a traditional QC environment [15].

The most cited metric is the defect rate. Under the conventional assumption of a 1.5σ long-term shift in the process mean, a process operating at a typical Three Sigma level has a defect rate of approximately 66,807 DPMO, whereas a Six Sigma process aims for just 3.4 DPMO, roughly a 20,000-fold difference [11] [14]. This level of performance is not the target of traditional QC methods.

Table: Defect Rate and Sigma Level Comparison

| Sigma Level | Defects Per Million Opportunities (DPMO) | Yield (%) |
|---|---|---|
| 2σ | 308,537 | 69.1% |
| 3σ | 66,807 | 93.3% |
| 4σ | 6,210 | 99.4% |
| 5σ | 233 | 99.977% |
| 6σ | 3.4 | 99.99966% |
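
The DPMO and sigma-level columns in the table above are linked through the standard normal distribution plus the conventional 1.5σ long-term shift. The sketch below, using Python's `statistics.NormalDist`, reproduces the table's values under that assumption; the function names are illustrative.

```python
from statistics import NormalDist

SHIFT = 1.5  # conventional long-term shift assumed by the sigma table

def dpmo(defects, units, opportunities_per_unit):
    """Defects per million opportunities."""
    return defects * 1_000_000 / (units * opportunities_per_unit)

def dpmo_at(sigma, shift=SHIFT):
    """Expected DPMO at a given sigma level (tail beyond the nearer spec limit)."""
    return (1 - NormalDist().cdf(sigma - shift)) * 1_000_000

def sigma_level(dpmo_value, shift=SHIFT):
    """Sigma level implied by an observed DPMO (inverse of dpmo_at)."""
    return NormalDist().inv_cdf(1 - dpmo_value / 1_000_000) + shift

for s in (2, 3, 4, 5, 6):
    print(f"{s} sigma: {dpmo_at(s):,.1f} DPMO")
print(round(sigma_level(dpmo(80, 1000, 1)), 1))
```

For example, `sigma_level(dpmo(80, 1000, 1))` (80 defective units out of 1,000, one opportunity each) comes out at about 2.9σ, consistent with the baseline quoted in the DMAIC case study below.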

Methodological Comparison: DMAIC in Action

The five-phase DMAIC framework is the engine of Six Sigma's data-driven approach. The following workflow diagram illustrates this structured, iterative process for finding and eliminating the root causes of problems.

Experimental Protocol: A DMAIC Case Study

Objective: Reduce the rate of cosmetic paint blemishes on a product line from 8% (80,000 DPMO, counting each unit as one opportunity) to under 1% (10,000 DPMO) within three months [15].

- Define Phase: The project charter is created, specifying the goal. The "Voice of the Customer" is captured, confirming that surface blemishes are a major driver of customer dissatisfaction and returns.

- Measure Phase: A data collection plan is executed. Technicians collect data on blemish frequency, type, and location using a standardized check sheet. The current process is mapped, and a baseline capability is established, confirming the ~80,000 DPMO (approx. 2.9σ) performance.

- Analyze Phase: The team uses a Fishbone (Ishikawa) Diagram to brainstorm potential causes across the categories Man, Method, Machine, Material, Measurement, and Environment. Pareto analysis of the collected data reveals that over 70% of blemishes are "orange peel" texture and are associated with a specific painting booth. Hypothesis testing (e.g., a two-sample t-test) confirms that ambient humidity in that booth is statistically significantly higher than in the other booths (p < 0.05).

- Improve Phase: The team brainstorms solutions, including installing a dehumidifier and adjusting paint viscosity. An experiment is designed using a Taguchi Method orthogonal array (e.g., an L4 array) to test humidity and viscosity settings with minimal runs [16]. The optimal settings are identified by calculating the Signal-to-Noise (S/N) ratio to find the most robust combination. A pilot run with these settings is executed.

- Control Phase: The new humidity and viscosity parameters are documented in updated Standard Operating Procedures (SOPs). A Statistical Process Control (SPC) chart is implemented to monitor the painting process in real-time, providing an early warning if the process begins to drift from the new optimal settings [15]. The project result is a reduction in blemishes to 0.7% (7,000 DPMO), successfully meeting the project goal.
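
The S/N calculation in the Improve phase can be sketched as below. This assumes the smaller-the-better form of the Taguchi S/N ratio (the blemish rate should be minimized), uses two columns of the standard L4 array for the two factors, and runs on made-up response data; it is not the case study's actual experiment.

```python
import math
from statistics import fmean

# Columns 1 and 2 of the standard L4(2^3) orthogonal array (levels coded 1 and 2).
L4_RUNS = [(1, 1), (1, 2), (2, 1), (2, 2)]
FACTORS = ("humidity", "viscosity")

def sn_smaller_the_better(values):
    """Taguchi smaller-the-better S/N ratio in dB: -10 * log10(mean of y^2)."""
    return -10 * math.log10(fmean(y * y for y in values))

def best_levels(runs, responses):
    """S/N per run, plus the level of each factor with the higher mean S/N."""
    sn = [sn_smaller_the_better(r) for r in responses]
    best = {}
    for col, name in enumerate(FACTORS):
        # Main effect: average S/N over the runs where this factor is at each level.
        mean_sn = {lvl: fmean(s for run, s in zip(runs, sn) if run[col] == lvl)
                   for lvl in (1, 2)}
        best[name] = max(mean_sn, key=mean_sn.get)  # higher S/N = more robust
    return sn, best

# Hypothetical blemish rates (%) from two repeat panels per run.
responses = [[8.1, 7.6], [6.9, 7.4], [1.2, 1.5], [0.8, 0.6]]
sn, best = best_levels(L4_RUNS, responses)
print([round(s, 2) for s in sn], best)
```

In this made-up data, level 2 of both factors gives the higher mean S/N and would be the combination taken forward to the pilot run.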

The Scientist's Toolkit: Key Tools for Process Improvement

The successful application of Six Sigma, particularly in technical fields, relies on a suite of analytical tools.

Table: Essential Six Sigma Research Tools

| Tool | Function & Purpose |
|---|---|
| Control Charts | Monitors process stability over time by distinguishing between common-cause and special-cause variation [12] [14]. |
| Pareto Analysis | Prioritizes efforts by identifying the "vital few" causes that contribute to the majority of problems (80/20 rule) [12]. |
| Fishbone Diagram | A structured brainstorming tool used to visually map out and explore all potential root causes of a problem [12] [14]. |
| Failure Mode and Effects Analysis (FMEA) | A proactive risk-assessment tool for identifying where and how a process might fail, and prioritizing which failures to address first [12]. |
| Design of Experiments (DOE) | A systematic, statistical method for determining the relationship between factors affecting a process and the output of that process [16]. |
| Taguchi Method | A specific, robust approach to DOE that uses orthogonal arrays to optimize processes for minimal performance variation [16]. |
| Process Capability (Cp/Cpk) | Statistical measures that compare the output of an in-control process to its specification limits to determine if the process is capable [15]. |
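
The Cp/Cpk entry can be made concrete with a short calculation. A sketch under one stated simplification: sigma is estimated from the overall sample standard deviation, whereas many references reserve Cp/Cpk for a within-subgroup estimate and call this version Pp/Ppk. The assay data and the 95-105% specification are hypothetical.

```python
from statistics import fmean, stdev

def capability(data, lsl, usl):
    """Return (Cp, Cpk) for data from a process already shown to be stable.

    Cp  = (USL - LSL) / (6 * sigma): potential capability, spread only.
    Cpk = min(USL - mean, mean - LSL) / (3 * sigma): also penalizes an off-centre mean.
    Simplification: sigma is the overall sample standard deviation.
    """
    mu, sigma = fmean(data), stdev(data)
    cp = (usl - lsl) / (6 * sigma)
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)
    return cp, cpk

# Hypothetical assay results (% of label claim) against a 95-105 % specification.
assay = [99.1, 100.4, 98.7, 101.2, 99.8, 100.9, 99.5, 100.1, 98.9, 100.6]
cp, cpk = capability(assay, lsl=95.0, usl=105.0)
print(f"Cp = {cp:.2f}, Cpk = {cpk:.2f}")
```

Cpk can never exceed Cp; the gap between them shows how much capability is lost because the process mean sits off the centre of the specification.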

Modern Context: Integration with Quality 4.0

The core principles of Six Sigma are being amplified by modern technologies, a trend often referred to as Quality 4.0. In 2025, quality control laboratories and manufacturing environments are leveraging Artificial Intelligence (AI) and Machine Learning (ML) to move from descriptive to predictive and prescriptive analytics [17] [18]. These technologies can automatically identify risks, suggest corrective actions, and analyze vast datasets to uncover hidden patterns that human analysts might miss.

Furthermore, the Internet of Things (IoT) enables real-time data collection from connected sensors on equipment and production lines. This provides a comprehensive view of production and quality processes, allowing for immediate response to deviations and predictive maintenance, which aligns perfectly with Six Sigma's goal of controlling inputs to control outputs [17] [18]. This integration represents the natural evolution of the data-driven Six Sigma philosophy, making it more powerful and accessible than ever.

The evidence demonstrates a clear performance differential between traditional QC and Six Sigma. Traditional QC, while useful for basic compliance and detection, is a reactive system that often leads to higher costs of poor quality. Six Sigma provides a proactive, data-driven framework focused on process improvement and variation control, yielding dramatically lower defect rates and significant financial returns. For researchers, scientists, and drug development professionals operating in a data-intensive and compliance-driven environment, the rigorous, statistical, and project-based nature of Six Sigma offers a superior methodology for achieving operational excellence, ensuring product quality, and maintaining a competitive advantage.

Six Sigma represents a systematic, data-driven methodology for process improvement that has fundamentally shifted the paradigm of quality control (QC) since its development at Motorola in the 1980s [19] [20]. Unlike traditional QC methods, which often rely on reactive inspection and detection-based quality assurance, Six Sigma employs a proactive, structured framework to reduce process variation and defects, thereby delivering products and services that meet customer-defined quality standards [20] [21]. The core of this methodology is built upon three foundational pillars: an unwavering customer focus, decision-making based on verifiable data and statistical analysis, and the systematic reduction of process variation [19]. This guide provides an objective comparison between traditional process improvement methods and the Six Sigma approach, supported by experimental data and detailed protocols, to inform researchers and professionals in scientific and drug development fields.

Comparative Analysis: Six Sigma vs. Traditional QC Methods

The distinction between Six Sigma and traditional quality control methods is evident in their strategic focus, operational mechanisms, and resultant outcomes. The table below summarizes the key differentiating factors.

Table 1: Strategic and Operational Comparison of Methodologies

| Aspect | Traditional QC Methods | Six Sigma Approach |
|---|---|---|
| Primary Focus | Detection and correction of defects [20] | Prevention of defects and reduction of process variation [19] [20] |
| Decision-Making Basis | Intuition, experience; limited data analysis [22] | Rigorous statistical analysis of verifiable data [19] [22] |
| Problem-Solving Framework | Less structured; often relies on PDCA or simple Kaizen events [22] | Highly structured DMAIC/DMADV roadmap [19] [21] |
| Quality Goal | Conformance to internal specifications | Meeting customer-defined Critical-to-Quality (CTQ) characteristics [19] |
| Organizational Role | Often limited to QC/QA departments | Cross-functional team involvement with defined roles (Champion, Master Black Belt, Black Belt, Green Belt) [19] [20] |
| Financial Impact | Cost of Poor Quality (COPQ) as a primary driver [23] | Focus on COPQ plus waste elimination and cycle time reduction [23] |

Quantitative Outcomes from Multi-Firm Study

A two-year longitudinal quasi-experimental study across 20 manufacturing firms provides empirical evidence on integrating Six Sigma with lean principles (Lean Six Sigma). The post-implementation values it reports for key operational metrics are summarized below; pre-implementation baselines are not specified [9].

Table 2: Experimental Outcomes of Lean Six Sigma Implementation

| Performance Metric | Baseline Performance (Pre-Implementation) | Post-Implementation Performance |
|---|---|---|
| Mean Defect Rate | Not Specified | 3.18 % |
| Production Throughput | Not Specified | 134.08 units/hour |
| Leadership Commitment (5-pt scale) | Not Specified | 3.47 |
| Structured Training per Employee | Not Specified | 26.3 hours |

The study employed multilevel modeling (MLM) to capture both firm- and employee-level effects, identifying leadership commitment and structured workforce training as critical moderators for sustaining continuous improvement initiatives [9].

Core Principles and Experimental Protocols

Principle 1: Customer Focus

The primary goal of Six Sigma is to deliver business value as defined by the customer [19]. This principle moves beyond internal specifications to focus on understanding and meeting Customer Requirements and Critical-to-Quality (CTQ) characteristics.

- Experimental Protocol: Capturing the Voice of the Customer (VOC)

  - Define Customer Groups: Identify internal and external customer segments affected by the process or product.

  - Gather Customer Data: Use surveys, interviews, focus groups, and feedback systems to collect qualitative and quantitative data on customer needs, expectations, and pain points.

  - Analyze and Translate VOC: Categorize and prioritize customer statements. Translate abstract needs into measurable, actionable CTQ metrics (e.g., purity >99.8%, delivery within 72 hours).

  - Validate Requirements: Confirm with customers that the translated CTQs accurately reflect their requirements.
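
One way to keep translated requirements measurable is to record each CTQ as a metric, a comparison, and a target, and test observed values against it. A minimal sketch: the `CTQ` class is a hypothetical structure, the two targets are the examples from the protocol above, and the customer wording and measurements are invented for illustration.

```python
import operator
from dataclasses import dataclass

_OPS = {">": operator.gt, ">=": operator.ge, "<": operator.lt, "<=": operator.le}

@dataclass(frozen=True)
class CTQ:
    """A customer need translated into a measurable, testable requirement."""
    need: str      # customer statement (Voice of the Customer)
    metric: str    # name of the measured characteristic
    op: str        # one of ">", ">=", "<", "<="
    target: float
    unit: str

    def met_by(self, value: float) -> bool:
        return _OPS[self.op](value, self.target)

# The two CTQ targets come from the text; the customer wording is hypothetical.
ctqs = [
    CTQ("The active ingredient must be very pure", "purity", ">", 99.8, "%"),
    CTQ("Shipments must arrive quickly", "delivery_time", "<=", 72, "h"),
]
measured = {"purity": 99.85, "delivery_time": 80}  # hypothetical measurements
for ctq in ctqs:
    verdict = "met" if ctq.met_by(measured[ctq.metric]) else "not met"
    print(f"{ctq.metric} {ctq.op} {ctq.target} {ctq.unit}: {verdict}")
```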

Principle 2: Data-Driven Decision Making

Six Sigma relies on verifiable data and statistics rather than assumptions or intuition for decision-making [19] [21]. This ensures that process improvements are based on objective evidence of root causes and their effects.

- Experimental Protocol: DMAIC Measure and Analyze Phases

  - Define Data Collection Plan: Identify what data to collect, the operational definitions, and the sampling plan to ensure data accuracy and integrity [24].

  - Measure Process Performance: Collect baseline data on the current process using the defined metrics.

  - Analyze for Root Cause: Use statistical tools to investigate and validate the relationship between input variables (X's) and the key output (Y). Common tools include:

    - Hypothesis Testing (e.g., t-test, ANOVA): To determine if differences between process groups are statistically significant.

    - Regression Analysis: To model and quantify the relationship between variables [25].

    - Design of Experiments (DOE): To systematically investigate the effects of multiple input factors and their interactions on the output [21].
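
For the hypothesis-testing step, a two-sample comparison that does not assume equal variances (Welch's t-test) can be computed with the standard library alone. This sketch returns the t statistic and the Welch-Satterthwaite degrees of freedom; the booth humidity readings are hypothetical. A p-value still has to be read from the t distribution, for example with `scipy.stats.ttest_ind(a, b, equal_var=False)` if SciPy is available.

```python
from math import sqrt
from statistics import fmean, variance

def welch_t(a, b):
    """Welch's two-sample t statistic and Welch-Satterthwaite degrees of freedom.

    Unlike Student's pooled t-test, this does not assume equal group variances.
    """
    se2_a = variance(a) / len(a)  # squared standard error of mean(a)
    se2_b = variance(b) / len(b)
    t = (fmean(a) - fmean(b)) / sqrt(se2_a + se2_b)
    df = (se2_a + se2_b) ** 2 / (se2_a**2 / (len(a) - 1) + se2_b**2 / (len(b) - 1))
    return t, df

# Hypothetical relative-humidity readings (%) from two painting booths.
booth_a = [62, 65, 63, 66, 64, 67]
booth_b = [55, 57, 54, 58, 56, 55]
t, df = welch_t(booth_a, booth_b)
print(f"t = {t:.2f}, df = {df:.1f}")
```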

Principle 3: Reduce Process Variation

Six Sigma aims to eliminate special cause variation and reduce common cause variation to achieve stable, predictable, and capable processes [19]. A process operating at the Six Sigma level produces only 3.4 defects per million opportunities [23] [21].

- Experimental Protocol: Statistical Process Control (SPC)

  - Identify Key Characteristics: Select the critical process or product output characteristic (Y) to be controlled.

  - Establish Control Charts: Select and implement the appropriate control chart (e.g., an X-bar and R chart for continuous data, a p-chart for attribute data) to monitor process behavior over time [19] [25].

  - Calculate Control Limits: Compute upper and lower control limits (UCL, LCL) from the natural variation of the process, typically ±3 standard deviations of the plotted statistic around the center line.

  - Monitor and Interpret: Plot data in time order. Investigate for signs of special cause variation (e.g., points outside control limits, runs, trends) to trigger corrective actions [19].

  - Calculate Process Capability: Once the process is stable, assess its ability to meet specifications by calculating indices like Cp, Cpk, and DPMO [23].
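
The control-limit and monitoring steps can be sketched for an X-bar and R chart. This assumes subgroups of five and uses the tabulated constants for n = 5 (A2 = 0.577, D3 = 0, D4 = 2.114); the fill-weight data are hypothetical, and only the points-outside-limits rule is checked, not the run and trend rules.

```python
from statistics import fmean

# Control-chart constants for subgroups of size n = 5 (standard SPC tables).
N, A2, D3, D4 = 5, 0.577, 0.0, 2.114

def xbar_r_limits(subgroups):
    """Return subgroup means, ranges and (LCL, CL, UCL) for the X-bar and R charts."""
    if any(len(g) != N for g in subgroups):
        raise ValueError(f"every subgroup must have {N} measurements")
    means = [fmean(g) for g in subgroups]
    ranges = [max(g) - min(g) for g in subgroups]
    grand_mean, r_bar = fmean(means), fmean(ranges)
    xbar_chart = (grand_mean - A2 * r_bar, grand_mean, grand_mean + A2 * r_bar)
    r_chart = (D3 * r_bar, r_bar, D4 * r_bar)
    return means, ranges, xbar_chart, r_chart

def beyond_limits(points, limits):
    """Indices of points outside (LCL, UCL): the simplest special-cause signal."""
    lcl, _, ucl = limits
    return [i for i, x in enumerate(points) if x < lcl or x > ucl]

# Hypothetical fill weights (g): six subgroups of five consecutive units.
subgroups = [
    [10.0, 10.2, 9.9, 10.1, 10.0],
    [10.1, 9.8, 10.0, 10.2, 9.9],
    [9.9, 10.0, 10.1, 10.0, 10.2],
    [10.0, 10.1, 9.9, 10.0, 10.1],
    [10.6, 10.8, 10.7, 10.9, 10.6],
    [10.0, 9.9, 10.1, 10.0, 10.0],
]
means, ranges, xbar_chart, r_chart = xbar_r_limits(subgroups)
print("X-bar signals at subgroups:", beyond_limits(means, xbar_chart))
print("R signals at subgroups:", beyond_limits(ranges, r_chart))
```

With this made-up data, the fifth subgroup (index 4) plots above the X-bar upper control limit while all ranges stay within their limits. In practice, trial limits are usually recomputed once the assignable cause behind such a point has been found and removed.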

Diagram 1: SPC Implementation Workflow

The Researcher's Toolkit: Essential Six Sigma Instruments

Successful application of Six Sigma requires a suite of qualitative and quantitative tools. The table below details key tools for the analytical phases of a Six Sigma project.

Table 3: Essential Six Sigma Tools and Their Functions in Research

| Tool Name | Primary Function in Analysis | Applicable Data Type |
|---|---|---|
| Process Mapping [19] [21] | Visually describes the sequence of steps in a process, identifying bottlenecks, redundancies, and handoff points. | Qualitative / Attribute |
| Cause and Effect Diagram (Fishbone/Ishikawa) [25] [21] | Brainstorms and categorizes all potential root causes of a problem into major categories (e.g., 6 M's: Machine, Method, Material, Manpower, Measurement, Mother Nature). | Qualitative / Attribute |
| Pareto Chart [25] | Displays the frequency of defects or problems in descending order, helping to identify the "vital few" causes that account for the majority of issues (80/20 rule). | Discrete / Attribute |
| Control Chart [19] [25] | Monitors process stability over time and distinguishes between common cause and special cause variation. | Continuous / Discrete (Over Time) |
| Histogram [25] | Shows the frequency distribution of continuous data, revealing the shape, central tendency, and spread of the data. | Continuous |
| Scatter Plot [25] | Investigates and displays the potential relationship or correlation between two continuous variables. | Continuous (Paired) |

Diagram 2: Tool Selection Logic Based on Data Type
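
As a rough stand-in for the selection logic, the Applicable Data Type column of the Six Sigma tools table above can be encoded as a lookup. This is a hypothetical sketch that simplifies the table's labels (the "Attribute" qualifiers are dropped) and does not reproduce Diagram 2 itself.

```python
# Applicable data types per tool, transcribed (simplified) from the tools table.
TOOLS = {
    "Process Mapping": {"qualitative"},
    "Cause and Effect Diagram": {"qualitative"},
    "Pareto Chart": {"discrete"},
    "Control Chart": {"continuous over time", "discrete over time"},
    "Histogram": {"continuous"},
    "Scatter Plot": {"continuous paired"},
}

def suggest_tools(kind, over_time=False, paired=False):
    """Tools whose applicable data type matches the data described.

    kind: "qualitative", "discrete" or "continuous".
    over_time: measurements are recorded in time order.
    paired: two continuous variables are measured on the same units.
    """
    tags = {kind}
    if over_time:
        tags.add(f"{kind} over time")
    if paired:
        tags.add(f"{kind} paired")
    return sorted(tool for tool, applies in TOOLS.items() if applies & tags)

print(suggest_tools("discrete"))
print(suggest_tools("continuous", over_time=True))
print(suggest_tools("continuous", paired=True))
```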

The comparative analysis demonstrates that Six Sigma provides a structured, data-intensive framework that complements and enhances traditional QC methods. While traditional methods offer simplicity and a foundation in continuous improvement, Six Sigma's rigorous, customer-centric, and statistical approach is particularly suited for complex problems requiring deep root cause analysis and sustained defect control [22]. The emergence of Lean Six Sigma, which integrates the waste-elimination focus of Lean with the variation-reduction power of Six Sigma, represents a synergistic evolution in quality management [23] [20]. For research and drug development professionals, adopting these principles and tools can lead to more robust, reliable, and efficient processes, ultimately accelerating development timelines and enhancing product quality. The empirical data confirms that successful implementation is heavily dependent on committed leadership and comprehensive training, underscoring that Six Sigma is as much a cultural transformation as it is a technical one [9] [24].

The landscape of quality management has evolved from rigid, inspection-based Quality Control (QC) to dynamic, proactive methodologies aimed at building quality into every process. Traditional QC methods often focus on detecting defects post-production, a reactive approach that can be costly and inefficient. In contrast, modern methodologies like Six Sigma, Total Quality Management (TQM), Lean, and Kaizen represent a proactive philosophy of preventing defects at the source through continuous, systematic improvement [6]. This evolution is particularly critical in drug development, where quality directly impacts patient safety, regulatory approval, and the efficiency of bringing new therapies to market.

This guide objectively compares these methodologies, with a specific focus on how Six Sigma complements, rather than replaces, older systems like TQM, Lean, and Kaizen. Within the article's broader comparison of traditional QC and Six Sigma, it gives researchers and drug development professionals a structured framework for selecting and integrating these tools to optimize research processes, reduce variability in experimental data, and accelerate the path from discovery to market.

Core Methodologies and Comparative Analysis

Understanding the distinct principles and tools of each methodology is a prerequisite for their effective application and integration.

Principles and Tools of Each Methodology

Total Quality Management (TQM): TQM is a comprehensive, organization-wide philosophy centered on continuous improvement in all aspects of operations through employee involvement and leadership dedication [6]. It prioritizes establishing a quality-empowered mindset and culture. Key tools include Plan-Do-Check-Act (PDCA) cycles for iterative improvement, quality circles for employee engagement, and qualitative metrics like customer satisfaction scores [6].

Lean Manufacturing: Originating from the Toyota Production System, Lean is a systematic method for eliminating waste ("muda") and creating flow in the production process [26] [27]. It aims to maximize value for the customer while using as few resources as possible. Its focus is on the "Seven Deadly Wastes": overproduction, waiting, transport, over-processing, inventory, motion, and defects [26]. Common tools include Value Stream Mapping (to visualize and analyze the flow of materials and information), 5S (for workplace organization), and Kanban (a pull-system for inventory control) [27].

Kaizen: Meaning "continuous improvement" in Japanese, Kaizen is a philosophy that goes beyond tools and data, focusing on making small, incremental changes on a daily basis [28] [29]. It relies heavily on teamwork, employee empowerment, and the belief that every individual's suggestions for improvement are valuable. The Five S framework (Sort, Set in order, Shine, Standardize, Sustain) is a core component for enhancing work culture, alongside quality circles that foster employee participation in problem-solving [28] [29].

Six Sigma: Developed by Motorola, Six Sigma is a data-driven, disciplined approach focused on reducing variation and defects in processes through statistical analysis [6] [30]. Its goal is to achieve near-perfect quality, defined as 3.4 defects per million opportunities. It follows a structured, project-based methodology known as DMAIC (Define, Measure, Analyze, Improve, Control) and utilizes a rigorous belt-based certification system (e.g., Green Belts, Black Belts) to deploy experts on improvement projects [6] [30] [27].

Comparative Analysis: Objectives, Approaches, and Applications

The table below provides a structured comparison of the four methodologies, highlighting their primary focus, core approach, and typical application contexts.

Table 1: Comparative Analysis of Quality Management Methodologies

| Feature | Total Quality Management (TQM) | Lean | Kaizen | Six Sigma |
|---|---|---|---|---|
| Primary Focus | Organization-wide quality culture and continuous improvement [6] | Eliminating waste and improving process flow [26] [27] | Continuous, incremental improvement through employee involvement [28] [29] | Reducing variation and defects using statistical methods [6] [30] |
| Core Approach | Philosophical, cultural, and qualitative; employee empowerment [6] | Process-focused; value stream analysis [27] | People-focused; small, daily changes and suggestions [28] | Data-driven, project-based (DMAIC); expert-driven (Belts) [6] [30] |
| Typical Tools | PDCA, quality circles, cause-and-effect diagrams [6] | Value stream mapping, 5S, Kanban [27] | Five S, quality circles, suggestion systems [28] [29] | DMAIC, control charts, hypothesis testing, process capability analysis [6] [30] |
| Application Context | Broad, company-wide culture change [6] | Manufacturing and service processes with visible waste [26] | Daily work environment and culture improvements [29] | Complex problems with unknown root causes requiring data analysis [6] |

The Complementary Relationship: Six Sigma and Other Methods

The relationship between these methodologies is not one of replacement but of synergy. Six Sigma's rigorous, data-driven approach powerfully complements the broader, cultural focus of TQM, the flow-oriented perspective of Lean, and the people-centric philosophy of Kaizen.

Six Sigma and TQM: While TQM establishes the foundational culture of quality and employee involvement, Six Sigma provides the structured, statistical toolkit to solve complex problems that TQM's qualitative approach might not effectively address [6]. A TQM culture can foster the employee engagement necessary for Six Sigma projects to succeed.

Six Sigma and Lean: The combination of Lean and Six Sigma, known as Lean Six Sigma, is particularly potent. Lean's focus on speed and waste elimination (e.g., reducing cycle times) is perfectly complemented by Six Sigma's focus on precision and quality (e.g., reducing errors and variation) [26] [27]. For instance, Lean can streamline a process, and then Six Sigma can be applied to stabilize and control the new, leaner process.

Six Sigma and Kaizen: Kaizen creates an environment of continuous, small improvements and empowers employees to identify problems. When a problem is too complex for a quick Kaizen "blitz," it becomes an ideal candidate for a more in-depth Six Sigma DMAIC project [28] [29]. Kaizen maintains the momentum of improvement, while Six Sigma tackles the deep-rooted, high-impact issues.

The following diagram illustrates the synergistic relationship between these methodologies and how they can be integrated into a cohesive quality management system.

Diagram: The Synergistic Integration of Quality Management Methodologies

Experimental Protocols and Application in Drug Development

The theoretical strengths of Six Sigma and its complementary nature are best demonstrated through practical application. The following case study from pharmaceutical R&D provides a template for implementation.

Case Study: Improving In Vivo Pharmacokinetic (PK) Data Turnaround

AstraZeneca's Discovery Drug Metabolism and Pharmacokinetics (DMPK) department faced challenges with variable and lengthy turnaround times for reporting in vivo PK data, which was critical for Lead Optimisation (LO) projects [31]. The existing process suffered from unpredictable delays, unclear deadlines, and demand that often exceeded capacity. A Lean Six Sigma project was initiated to address these issues.

1. Experimental Protocol: The DMAIC Framework in Action

The project followed the structured DMAIC methodology [31]:

- Define: The project charter was developed, defining the goal to design a process for delivering rat PK results within 10 working days, 80% of the time. The "customer" was defined as the LO project teams, and their requirements (Voice of the Customer) were gathered [31].

- Measure: Over three months, data was collected on the current process performance. "Deviation reports" were used to document every problem and delay, quantifying the time lost. Capacity for dosing, analysis, and human resources was calculated to understand system limitations [31].

- Analyze: The collected data was analyzed, revealing that the number of studies frequently exceeded the maximum capacity of the PK process. Root cause analysis identified that the lack of a centralized scheduling system and variable demand from LO projects were key drivers of delay [31].

- Improve: A set of solutions was designed and implemented, including a new centralized and transparent scheduling system to manage demand and capacity, and standardization of PK study methods to reduce variability [31].

- Control: Controls were established to sustain the improvements, such as ongoing monitoring of turnaround times and capacity utilization to prevent a return to the previous state [31].
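The Define-phase goal above (rat PK results within 10 working days, 80% of the time) is an attainment target that can be checked directly from a list of turnaround times. The following is a minimal Python sketch; the function names and turnaround values are hypothetical and are not data from the AstraZeneca project [31].

```python
# Minimal sketch: check a "within N working days, X% of the time" target.
# Function names and turnaround data are hypothetical, for illustration only.

def share_within_target(turnaround_days, target_days=10):
    """Return the fraction of studies reported within target_days."""
    if not turnaround_days:
        raise ValueError("need at least one turnaround time")
    on_time = sum(1 for d in turnaround_days if d <= target_days)
    return on_time / len(turnaround_days)


def meets_goal(turnaround_days, target_days=10, required_share=0.80):
    """True if at least required_share of studies meet target_days."""
    return share_within_target(turnaround_days, target_days) >= required_share


# Hypothetical turnaround times (working days) for ten studies each
baseline = [8, 12, 15, 9, 11, 14, 10, 18, 7, 13]
improved = [8, 9, 10, 7, 11, 9, 10, 8, 12, 9]

print(share_within_target(baseline), meets_goal(baseline))
print(share_within_target(improved), meets_goal(improved))
```

Tracking the share of studies that meet the target, rather than only the average turnaround, matches how the goal was phrased: a process can have an acceptable mean while still missing the 80% commitment.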

2. Data Presentation: Quantitative Outcomes

The implementation of the Lean Six Sigma DMAIC protocol yielded significant, measurable improvements in process efficiency and reliability [31].

Table 2: Quantitative Outcomes of Lean Six Sigma Application in Drug Discovery PK Studies

| Performance Metric | Pre-Implementation State | Post-Implementation Outcome |
|---|---|---|
| Average Turnaround Time | Highly variable and exceeding 10 days | Reduced and stabilized, meeting the 10-day target |
| Process Capacity Management | Demand consistently exceeded maximum capacity | Demand leveled to match optimal capacity (≤16 studies/month) |
| Reporting Consistency | Unpredictable and delayed reporting | 80% of studies reported within 10 working days |

The Scientist's Toolkit: Essential Reagents for Process Improvement

For scientists and researchers embarking on a quality improvement project, the following tools and concepts are essential "research reagents".

Table 3: Essential Toolkit for Quality Management Projects in R&D

| Tool / Concept | Function in the Quality Improvement "Experiment" |
|---|---|
| Project Charter | A one-page document that defines the problem, goals, scope, team, and success criteria; it aligns the team and sets the project's direction [31]. |
| Voice of the Customer (VoC) | A structured process for capturing and analyzing customer needs and requirements (e.g., the LO project teams), ensuring the project delivers what is truly valued [31]. |
| SIPOC Diagram | A high-level process map identifying Suppliers, Inputs, Process steps, Outputs, and Customers; it helps define the process boundaries and key stakeholders at the outset [31]. |
| Deviation Report | A simple form used to document problems, blockers, and delays as they occur during the Measure phase; it provides raw data for root cause analysis [31]. |
| Process Capacity Analysis | A calculation of the maximum throughput of a process (considering people, equipment, time) to identify bottlenecks and ensure demand does not exceed sustainable capacity [31]. |
| Control Chart | A statistical tool used in the Control phase to monitor process performance over time, distinguishing between common-cause and special-cause variation to ensure stability [6] [30]. |
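The Process Capacity Analysis entry can be illustrated with a simple bottleneck calculation: when every study must pass through several resources, the process can handle no more studies per month than its most constrained resource allows. The sketch below assumes capacity is expressed in available hours and hours needed per study; the resource names and numbers are hypothetical.

```python
# Minimal sketch of a process capacity check: a multi-step process can run
# no faster than its most constrained resource (the bottleneck).
# Resource names and figures are hypothetical, for illustration only.

def monthly_capacity(resources):
    """Return (bottleneck_name, studies_per_month) for a dict mapping
    resource -> (available_hours_per_month, hours_needed_per_study)."""
    per_resource = {
        name: available // needed  # whole studies each resource can support
        for name, (available, needed) in resources.items()
    }
    bottleneck = min(per_resource, key=per_resource.get)
    return bottleneck, per_resource[bottleneck]


resources = {
    "dosing": (160, 8),        # 20 studies/month
    "bioanalysis": (160, 10),  # 16 studies/month
    "reporting": (120, 6),     # 20 studies/month
}

bottleneck, capacity = monthly_capacity(resources)
demand = 22  # hypothetical number of studies requested this month
print(bottleneck, capacity, "over capacity" if demand > capacity else "ok")
```

Comparing demand against the bottleneck figure, rather than against the average resource, shows immediately whether incoming work must be leveled or rescheduled.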

The following workflow diagram synthesizes the DMAIC protocol with key tools, providing a visual guide for implementing a similar project.

Diagram: DMAIC Workflow with Associated Toolkit

The evolution of quality management demonstrates that modern approaches like Six Sigma are not standalone solutions but powerful complements to established systems. While TQM builds the cultural bedrock, Lean accelerates processes, and Kaizen fosters daily engagement, Six Sigma provides the analytical rigor to solve complex, high-impact problems such as reducing variation in experimental data or optimizing critical R&D workflows [6] [28] [26].

For the drug development industry, the integration of these methodologies offers a proven path to address pressing challenges. The successful application of Lean Six Sigma at AstraZeneca to improve the PK study process is a testament to its potential to enhance efficiency, reduce costs, and ultimately accelerate the delivery of new drugs to patients [31]. The future of quality management in life sciences will likely see a deeper integration of these methodologies with digital transformation, leveraging artificial intelligence and the Internet of Things to enable real-time process monitoring and predictive analytics [30]. By understanding the unique strengths and synergistic relationships between TQM, Lean, Kaizen, and Six Sigma, researchers and drug development professionals can build a robust, holistic quality management system that is greater than the sum of its parts.

In the pursuit of operational excellence, particularly in highly regulated fields like drug development, the choice of quality control methodology significantly impacts outcomes. Traditional quality control (QC) methods and Six Sigma approaches share the common goal of reducing defects and improving processes, but they diverge fundamentally in philosophy, methodology, and application. Traditional QC, often embodied by practices like Total Quality Management (TQM), typically employs a reactive, inspection-focused approach that prioritizes the detection of defects in finished products or outputs (Y variables) [6] [5]. In contrast, Six Sigma is a proactive, data-driven methodology that focuses on controlling and improving the inputs (X variables) of a process to prevent defects from occurring in the first place [22] [5].

The core distinction lies in their tactical deployment. Traditional methods often rely on a combination of data and 'gut feel' for decision-making and may employ a 'band-aid' approach to problem-solving, addressing symptoms rather than root causes [5]. Six Sigma, however, is characterized by its structured use of statistical tools, rigorous training in applied statistics, and a relentless focus on root cause analysis to achieve breakthrough performance gains [5]. This is encapsulated in the Define, Measure, Analyze, Improve, Control (DMAIC) framework, which provides a disciplined roadmap for process improvement [32] [30].

Central to the Six Sigma methodology is the Sigma Scale, a universal benchmark for process capability. This scale is quantified by the metric Defects Per Million Opportunities (DPMO), which provides a standardized measure of how often defects occur relative to the number of opportunities for a defect [33] [34]. Unlike traditional metrics, DPMO allows for comparison across different processes, products, and even industries, offering researchers and quality professionals a common language for quality.

The Sigma Scale and DPMO Explained

Core Definitions and Calculation

The Sigma Scale is a direct reflection of process quality, with each Sigma level corresponding to a specific DPMO value. To understand this relationship, three key variables must be defined [33] [35]:

- Defect (D): Any instance where a product, service, or process output fails to meet a customer requirement or predetermined specification [33]. It is crucial to distinguish a defect (a single error) from a defective unit (a unit that has one or more defects, rendering the entire unit non-conforming) [35].

- Unit (U): The item, service, or output being produced or processed.

- Opportunity (O): Any specific point within a process where a defect could occur. It represents a Critical-to-Quality (CTQ) characteristic that is important to the customer [33] [35].
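To make the defect/defective distinction concrete, the following sketch counts both from a per-unit inspection record and also reports defects per unit (DPU). The record format (one defect count per inspected unit) and the data are assumptions for illustration.

```python
# Minimal sketch: distinguish defects from defective units.
# Each entry is the number of defects found on one inspected unit.
# The inspection record below is hypothetical.

def summarize(defects_per_unit):
    units = len(defects_per_unit)
    if units == 0:
        raise ValueError("need at least one inspected unit")
    defects = sum(defects_per_unit)                          # D: total defects
    defectives = sum(1 for d in defects_per_unit if d > 0)   # units with >= 1 defect
    return {
        "units": units,
        "defects": defects,
        "defective_units": defectives,
        "defects_per_unit": defects / units,
    }


# Ten inspected units; one unit carries three separate defects
record = [0, 0, 1, 0, 3, 0, 0, 1, 0, 0]
print(summarize(record))
```

Here five defects are spread over only three defective units, which is why DPMO (built on defects) and a percent-defective figure (built on units) can tell different stories about the same batch.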

The formula for calculating DPMO is [33] [34]:

DPMO = [D / (U × O)] × 1,000,000

This calculation provides a normalized defect rate, enabling meaningful comparisons. For example, a simple pen might have four defect opportunities: ink quality, ballpoint function, cap fit, and barrel integrity [33]. If 10,000 pens are produced with 120 total defects found across these opportunities, the DPMO would be calculated as follows [33]:

- D = 120 defects

- U = 10,000 units

- O = 4 opportunities per unit

- DPMO = [120 / (10,000 × 4)] × 1,000,000 = 3,000
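The same calculation can be written as a small Python function. The function and argument names are illustrative; the call reproduces the pen example above.

```python
# Minimal sketch of the DPMO formula: [D / (U x O)] x 1,000,000.

def dpmo(defects, units, opportunities_per_unit):
    """Defects per million opportunities."""
    if units <= 0 or opportunities_per_unit <= 0:
        raise ValueError("units and opportunities per unit must be positive")
    # Multiplying before dividing keeps whole-number inputs exact where possible
    return defects * 1_000_000 / (units * opportunities_per_unit)


# Pen example: 120 defects, 10,000 pens, 4 opportunities per pen
print(dpmo(defects=120, units=10_000, opportunities_per_unit=4))  # 3000.0
```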

The Sigma Level Correlation

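The following table summarizes the correspondence between Sigma Level, DPMO, process yield, and defect rate [33]. The values follow the conventional assumption of a 1.5σ long-term shift in the process mean, which is why a 6σ process corresponds to 3.4 DPMO: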

Table 1: Sigma Level to DPMO Conversion

| Sigma Level | DPMO | Yield | Defect Rate |
|---|---|---|---|
| 1σ | 691,462 | 30.9% | 69.1% |
| 2σ | 308,538 | 69.1% | 30.9% |
| 3σ | 66,807 | 93.3% | 6.7% |
| 4σ | 6,210 | 99.38% | 0.62% |
| 5σ | 233 | 99.977% | 0.023% |
| 6σ | 3.4 | 99.99966% | 0.00034% |

As the table illustrates, each increase in the Sigma Level represents an exponential improvement in quality, signifying a dramatic reduction in process variation and defects. The widely cited benchmark of 3.4 DPMO for a Six Sigma process represents a state of near-perfect quality, where a process will produce only 3.4 defects for every million opportunities [33] [34] [30].
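The Sigma-to-DPMO conversion can be reproduced from the standard normal distribution. The sketch below assumes the conventional 1.5σ long-term shift and a one-sided defect tail, and it uses only Python's standard-library `statistics.NormalDist`; the function names are illustrative.

```python
# Minimal sketch: convert between Sigma level and DPMO, assuming the
# conventional 1.5-sigma long-term shift and a one-sided defect tail.
from statistics import NormalDist

STD_NORMAL = NormalDist()  # mean 0, standard deviation 1
SHIFT = 1.5                # assumed long-term drift of the process mean


def dpmo_from_sigma(sigma_level, shift=SHIFT):
    """Long-term DPMO implied by a (short-term) Sigma level."""
    return (1 - STD_NORMAL.cdf(sigma_level - shift)) * 1_000_000


def sigma_from_dpmo(dpmo_value, shift=SHIFT):
    """Sigma level implied by an observed DPMO (0 < DPMO < 1,000,000)."""
    if not 0 < dpmo_value < 1_000_000:
        raise ValueError("DPMO must be strictly between 0 and 1,000,000")
    return STD_NORMAL.inv_cdf(1 - dpmo_value / 1_000_000) + shift


for level in range(1, 7):
    print(f"{level} sigma -> {dpmo_from_sigma(level):,.1f} DPMO")

# Pen example from the text (3,000 DPMO)
print(round(sigma_from_dpmo(3000), 2))  # about 4.25
```

Dropping the `shift` argument to zero gives the unshifted, short-term tail probabilities instead, which is a useful reminder that the 3.4 DPMO benchmark is a convention rather than a pure property of the normal distribution.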

Diagram 1: The Sigma Scale and DPMO Relationship. As the Sigma level increases, the DPMO decreases exponentially, indicating a higher-performing process [33].

Experimental Protocols and Data

Methodology for DPMO Calculation and Analysis

To objectively compare process performance using the Sigma Scale, a standardized experimental protocol for calculating and analyzing DPMO is essential. The following workflow, based on the Six Sigma DMAIC framework, provides a rigorous methodology suitable for research environments [32] [30]:

Define the Project Scope:

- Objective: Clearly state the process to be improved and the specific customer requirements (CTQs).

- Protocol: Create a project charter documenting the scope, goals, and team members. Use a SIPOC (Suppliers, Inputs, Process, Outputs, Customers) diagram to map the high-level process [30].

Measure Current Performance:

- Objective: Establish a baseline DPMO for the process.

- Protocol:

  - Determine Sample Size: Select a sample of units (U) large enough to estimate the defect rate with adequate precision [34].

  - Identify and Define Opportunities: Clearly define each potential defect opportunity (O) per unit; ambiguity here leads to inaccurate calculations [33].

  - Data Collection: Inspect the sample and record the total number of defects (D) found. Automated data capture tools are recommended to improve reliability [30].

  - Calculate Baseline DPMO: Apply the DPMO formula, DPMO = [D / (U × O)] × 1,000,000 [33].

Analyze the Root Causes:

- Objective: Identify the root causes of the defects.

- Protocol: Use statistical and analytical tools to investigate the data. Common techniques include:

  - Root Cause Analysis: Five Whys, Cause-and-Effect (Fishbone) diagrams [30].

  - Statistical Analysis: Hypothesis testing, regression analysis, and Pareto charts to identify the most frequent defect types [32] [6].

  - Measurement System Analysis (MSA): Ensure that the measurement system itself (e.g., inspection equipment, personnel) is accurate and not a significant source of variation [30].
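As an example of the Pareto technique listed above, the sketch below ranks defect categories by frequency and returns the "vital few" that together reach a chosen cumulative share. The 80% cutoff is the usual Pareto convention rather than a fixed rule, and the categories and counts are hypothetical.

```python
# Minimal Pareto analysis sketch: rank defect categories by count, report the
# cumulative percentage, and list the "vital few" needed to reach the cutoff.
# Category names and counts are hypothetical.

def pareto(counts, cutoff=0.80):
    total = sum(counts.values())
    ranked = sorted(counts.items(), key=lambda kv: kv[1], reverse=True)
    rows, vital_few, cumulative = [], [], 0
    for category, n in ranked:
        share_before = cumulative / total
        cumulative += n
        rows.append((category, n, round(100 * cumulative / total, 1)))
        # Keep adding categories until the cutoff has been reached
        if share_before < cutoff:
            vital_few.append(category)
    return rows, vital_few


defect_counts = {
    "label misprint": 45,
    "fill volume out of range": 30,
    "cap torque": 12,
    "particulates": 8,
    "other": 5,
}

rows, vital = pareto(defect_counts)
for category, n, cum_pct in rows:
    print(f"{category:<26}{n:>4}{cum_pct:>8}%")
print("vital few:", vital)
```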

Improve the Process:

- Objective: Implement solutions to eliminate the root causes of defects.

- Protocol:

  - Solution Brainstorming & Selection: Generate and select potential solutions based on the analysis.

  - Pilot Testing: Implement the solution on a small scale (e.g., a pilot batch in drug development) [30].

  - Calculate Improved DPMO: Measure the DPMO after the pilot implementation to quantify the improvement.

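Control the Improved Process:

- Objective: Sustain the improved DPMO and prevent regression to the baseline.

- Protocol: Monitor the process with control charts, document the new procedure in a control plan and standard operating procedures, and recalculate DPMO periodically to confirm that the gain holds.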

Comparative Experimental Data

The following table summarizes reported outcomes from several industries, illustrating the practical impact of a Six Sigma, DPMO-based approach relative to traditional QC methods.

Table 2: Comparative Experimental Data: Traditional QC vs. Six Sigma

| Industry / Case | Traditional QC / Baseline Performance | Six Sigma-Driven Improvement | Key Metrics & Outcome |
|---|---|---|---|
| General Manufacturing [30] | Not reported | Implementation of Lean Six Sigma | Achieved up to 20% cost savings within the first year. |
| Cross-Industry Average [30] | Not reported | Application of Six Sigma methods | Reported average of 22% cost reduction and 28% productivity increase. |
| Automotive Supplier [34] | High variability in manual assembly process. | Systematic problem-solving focused on DPMO reduction. | Improved defect rates from ~20,000 DPMO to under 10 DPMO. |
| General Electric (Jet Engine Assembly) [34] | Baseline defect rate of ~20,000 DPMO. | Systematic problem-solving focused on DPMO reduction. | Improved defect rates from ~20,000 DPMO to under 10 DPMO. |
| Boeing [32] | Could not identify root cause of air fan issues. | Used Six Sigma to trace problem to a fundamental manufacturing issue. | Resolved foreign object damage and related electrical issues. |

The Researcher's Toolkit: Essential Reagents and Solutions for Quality Experimentation

For scientists and professionals embarking on quality improvement projects, the "reagents" are the metrics and analytical tools. The following table details the essential components of a modern quality research toolkit.

Table 3: Key Research "Reagent Solutions" for Quality Experimentation

| Tool / Metric | Type | Primary Function in Analysis |
|---|---|---|
| DPMO (Defects Per Million Opportunities) | Metric | Standardizes defect measurement across different processes, enabling comparison and Sigma Level calculation [33] [34]. |
| Statistical Software (Minitab, R, Python) | Analytical Tool | Performs complex statistical analyses such as hypothesis testing, design of experiments (DOE), and creation of control charts with high accuracy [34]. |
| Control Charts | Analytical Tool | Monitors process performance over time to distinguish between common-cause and special-cause variation, ensuring stability [34]. |
| Process Capability Indices (Cp, Cpk) | Metric | Gauges a process's ability to meet customer specifications by comparing process variability to specification limits [32] [34]. |
| First Time Yield (FTY) | Metric | Measures the percentage of units that pass through a process correctly the first time without rework, indicating initial process efficiency [33] [32]. |
| Rolled Throughput Yield (RTY) | Metric | Quantifies the overall probability of a unit passing through multiple process steps defect-free, exposing the "hidden factory" of rework [32] [35]. |
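Using the FTY and RTY definitions in the table, RTY is the product of the first-time yields of the individual steps, so modest losses at each step compound. A minimal sketch follows; the step names and counts are hypothetical.

```python
# Minimal sketch: First Time Yield (FTY) per step and Rolled Throughput Yield.
# FTY = units passing the step correctly the first time / units entering it.
# RTY = product of the step FTYs. Step names and counts are hypothetical.
from math import prod


def first_time_yield(entered, passed_first_time):
    if entered <= 0:
        raise ValueError("entered must be positive")
    return passed_first_time / entered


steps = {
    "granulation": (1000, 980),
    "compression": (1000, 950),
    "coating": (1000, 990),
}

ftys = {name: first_time_yield(*counts) for name, counts in steps.items()}
rty = prod(ftys.values())

for name, fty in ftys.items():
    print(f"{name:<12} FTY = {fty:.1%}")
print(f"RTY = {rty:.2%}")
```

Although every step individually exceeds 95% FTY, only about 92% of units would pass all three steps without rework.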

Diagram 2: DMAIC Workflow. The structured, iterative protocol for Six Sigma projects [32] [30].

The comparative analysis indicates that the Six Sigma methodology, with its quantitative Sigma Scale and DPMO metric, provides a more rigorous and, in the reported cases, more effective framework for quality control than traditional, inspection-based approaches. While traditional QC methods focus on detecting output defects, Six Sigma's data-driven, preventive model focuses on controlling input variables to minimize process variation, the underlying source of defects [5].

For researchers, scientists, and drug development professionals, the implications are significant. The Sigma Scale offers a universal language for quality that transcends individual processes, enabling clear benchmarking and goal setting. The experimental protocol of DMAIC provides a disciplined, evidence-based framework for process improvement that is directly applicable to laboratory workflows, manufacturing processes, and clinical trial management. By adopting the DPMO metric and the structured toolkit of Six Sigma, research-intensive organizations can move beyond merely finding defects to building quality and reliability directly into their core processes, thereby enhancing patient safety, accelerating development, and reducing costly errors.

The Six Sigma Toolkit: Applying DMAIC and Statistical Rigor in the Lab

The pursuit of quality in drug development has evolved significantly from traditional inspection-based methods to sophisticated, proactive methodologies. Traditional quality control (QC) methods often relied heavily on end-product testing and retrospective analysis, focusing on detecting defects after they occurred. In contrast, Six Sigma represents a data-driven, structured methodology aimed at eliminating defects and reducing variation within processes by following a defined sequence of phases known as DMAIC [36] [5]. This approach shifts the focus from detecting problems to preventing them entirely, embodying the principle of "prevention over inspection" [5].

The pharmaceutical industry faces immense pressure to deliver products of exceptionally high quality and consistency, where errors can have serious consequences. While traditional QC methods have served the industry for decades, Six Sigma's DMAIC framework offers a systematic roadmap for achieving near-perfect quality levels, as high as 99.99966% yield, or 3.4 defects per million opportunities [37]. This comprehensive guide explores the DMAIC roadmap in detail, comparing its effectiveness against traditional QC approaches within the context of modern drug development.

Fundamental Concepts: Traditional QC vs. Six Sigma

Philosophical Foundations and Approaches

The core distinction between traditional quality management and Six Sigma lies in their fundamental philosophy and operational approach. Traditional quality management often employs an inspection-based method that focuses on detecting problems in the output (Y) and applying corrective measures, sometimes described as a "band-aid approach" [5]. Decisions in this model may be based on a combination of data and 'gut feel,' without a formal structure for tool application [5].

In contrast, Six Sigma represents a preventive, data-driven methodology that controls process inputs (X's) to influence outputs [5]. It employs a structured, root cause approach to problem-solving with formal training in applied statistics [5]. This methodology demands strong top management commitment and creates cultural change within organizations, leading to breakthrough performance gains validated through key business results [5].

Table 1: Core Philosophical Differences Between Traditional QC and Six Sigma

| Characteristic | Traditional Quality Control | Six Sigma Approach |
|---|---|---|
| Primary Focus | Output inspection (Focus on Y) | Process input control (Focus on X's) |
| Decision Basis | Data combined with 'gut feel' | Data-driven decisions |
| Problem Approach | Reactive, "band-aid" solutions | Proactive, root cause elimination |
| Tool Application | No formal structure | Structured use of statistical tools |
| Training | Lack of structured training | Structured training in applied statistics |
| Economic Model | Cost of quality | Business results validation |

Historical Development and Evolution

The origins of these methodologies further highlight their philosophical differences. Traditional quality control methods trace their roots to early manufacturing quality inspection techniques, with quality often viewed as a separate function rather than an integrated business strategy [5].

Six Sigma emerged as a distinct methodology in the 1980s at Motorola, combining existing quality principles with rigorous statistical analysis [38] [36]. The approach was further refined by incorporating Lean principles from the Toyota Production System, which focuses on eliminating waste (muda), unevenness (mura), and overburden (muri) [38] [36]. This integration created Lean Six Sigma, a hybrid methodology that addresses both process variation and waste elimination [36].

The DMAIC methodology itself represents an evolution from earlier quality improvement cycles. Compared to the Plan-Do-Study-Act (PDSA) cycle, DMAIC provides "a more robust preparation of measurement and analysis" before implementing changes, with change not proposed until the fourth of five phases [38]. This structured approach helps ensure that solutions address root causes rather than symptoms.

The DMAIC Roadmap: Phase-by-Phase Analysis

DMAIC represents a rigorous, five-phase methodology for improving existing processes that fail to meet performance standards or customer expectations [39]. Each phase builds upon the previous one, creating a logical sequence for problem-solving and improvement.

Define Phase

The Define phase establishes the project foundation by clearly articulating the problem, scope, and objectives [38] [39]. In pharmaceutical contexts, this involves defining critical quality attributes that impact patient safety and drug efficacy. Key activities include:

- Drafting a Project Charter with problem statements, goals, and timeline [39] [40]

- Identifying customers (internal and external) and their requirements using Voice of the Customer tools [39]

- Mapping high-level processes to understand workflow and stakeholder interactions [40]

- Conducting stakeholder analysis to understand organizational impact [39]

This phase ensures alignment between the improvement project and broader organizational objectives while establishing clear metrics for success.

Measure Phase

The Measure phase focuses on quantifying the current process performance to establish a reliable baseline [39] [41]. This data-driven approach differentiates Six Sigma from traditional qualitative methods. Key activities include:

- Identifying and documenting the current process steps with corresponding inputs and outputs [39]

- Developing measurement systems and validating their accuracy [39]

- Collecting data to establish baseline performance metrics [39] [40]

- Using tools like process capability analysis and Pareto charts to analyze frequency of problems [39]

In pharmaceutical applications, this might involve measuring batch failure rates, analytical testing variability, or manufacturing process parameters.
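Process capability analysis is often summarized with Cp and Cpk. The sketch below computes both from a sample, assuming roughly normal data and two-sided specification limits. For simplicity it uses the overall sample standard deviation; a formal capability study would normally estimate short-term (within-subgroup) variation, and indices based on overall variation are more precisely called Pp and Ppk. The assay values and limits are hypothetical.

```python
# Minimal sketch: capability indices from a sample, assuming approximately
# normal data and two-sided specification limits (LSL, USL).
# Uses the overall sample standard deviation (strictly a Pp/Ppk-style estimate).
from statistics import mean, stdev


def capability(samples, lsl, usl):
    mu = mean(samples)
    sigma = stdev(samples)  # sample standard deviation (n - 1 denominator)
    cp = (usl - lsl) / (6 * sigma)                 # potential capability (spread only)
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)    # also penalizes off-centre mean
    return cp, cpk


# Hypothetical assay results (% of label claim), specification 95-105%
assay = [99.2, 100.1, 98.7, 100.6, 99.8, 100.3, 99.5, 100.9, 99.0, 100.4]
cp, cpk = capability(assay, lsl=95.0, usl=105.0)
print(f"Cp = {cp:.2f}, Cpk = {cpk:.2f}")
```

Cpk is always at most Cp; the gap between the two shows how much capability is lost because the process mean is not centred between the limits.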

Analyze Phase

The Analyze phase identifies the root causes of variation and poor performance through rigorous data examination [39] [41]. This represents a crucial differentiator from traditional methods, which may address symptoms rather than underlying causes. Key activities include:

- Using root cause analysis tools like Fishbone diagrams and 5-Whys [38] [36]

- Conducting Failure Mode and Effects Analysis to identify potential failures [39]

- Applying statistical analysis, including multi-vari charts, to detect types of variation [39]

- Validating causes of errors, deviation, delays, or defects [38]

This phase bridges data collection and improvement by pinpointing the critical few factors that significantly impact process performance.
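Failure Mode and Effects Analysis commonly ranks failure modes by a Risk Priority Number (RPN), the product of severity, occurrence, and detection scores. The sketch below assumes 1-10 scales where a higher detection score means a failure is harder to detect; the failure modes and scores are hypothetical, and many organizations supplement RPN with other prioritization rules.

```python
# Minimal FMEA ranking sketch: RPN = Severity x Occurrence x Detection,
# each scored 1-10 (higher Detection = harder to detect).
# Failure modes and scores are hypothetical.

failure_modes = [
    # (failure mode, severity, occurrence, detection)
    ("Incorrect buffer pH", 7, 4, 3),
    ("Sample mislabeling", 9, 3, 5),
    ("Pipette out of calibration", 6, 5, 6),
]


def rpn(severity, occurrence, detection):
    for score in (severity, occurrence, detection):
        if not 1 <= score <= 10:
            raise ValueError("FMEA scores are expected on a 1-10 scale")
    return severity * occurrence * detection


ranked = sorted(
    ((name, rpn(s, o, d)) for name, s, o, d in failure_modes),
    key=lambda item: item[1],
    reverse=True,
)
for name, score in ranked:
    print(f"{score:4d}  {name}")
```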

Improve Phase

The Improve phase develops, tests, and implements solutions to address the validated root causes [39] [41]. This phase emphasizes innovative, evidence-based interventions. Key activities include:

- Brainstorming and evaluating potential solutions [39]

- Using Design of Experiments to solve complex problems with multiple variables [39] [36]

- Conducting Kaizen events for rapid change through employee involvement [39]

- Piloting process changes and implementing solutions [40]

- Collecting data to confirm measurable improvement [40]

In pharmaceutical applications, solutions might include process parameter optimization, equipment modifications, or procedural changes validated through pilot batches.
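Design of Experiments can be illustrated with the smallest full factorial design: two factors at two coded levels (-1 and +1). The sketch estimates each main effect and the two-factor interaction as the difference between the average responses at the +1 and -1 settings of the corresponding column; the factors and responses are hypothetical.

```python
# Minimal 2^2 full factorial sketch: main effects and interaction from
# coded (-1/+1) settings. Factors and responses are hypothetical.

runs = [
    # (temperature, mixing speed, response e.g. % yield)
    (-1, -1, 78.0),
    (+1, -1, 85.0),
    (-1, +1, 80.0),
    (+1, +1, 91.0),
]


def effect(column):
    """Average response where column(x) is +1 minus where it is -1."""
    high = [y for *x, y in runs if column(x) > 0]
    low = [y for *x, y in runs if column(x) < 0]
    return sum(high) / len(high) - sum(low) / len(low)


temp_effect = effect(lambda x: x[0])
speed_effect = effect(lambda x: x[1])
interaction = effect(lambda x: x[0] * x[1])  # product column for the interaction

print(f"temperature: {temp_effect:+.1f}")
print(f"mixing speed: {speed_effect:+.1f}")
print(f"interaction: {interaction:+.1f}")
```

A real study would add replicates or center points so that the effects can be judged against experimental noise before they are acted on.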

Control Phase

The Control phase ensures that improvements are sustained long-term through monitoring and standardization [39] [41]. This focus on sustainability represents a key advantage over traditional approaches. Key activities include:

- Developing control plans documenting requirements to maintain improvements [39]

- Implementing statistical process control to monitor process behavior [39]

- Establishing standard operating procedures and training [39] [41]

- Creating response plans for performance deviations [40]

- Using Five S methodology for workplace organization and visual control [39]

This institutionalization of improvements prevents regression to previous performance levels and creates a foundation for continuous improvement.
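Statistical process control in the Control phase often starts with an individuals and moving range (XmR) chart when measurements arrive one at a time. A minimal sketch follows, assuming limits are computed from a stable baseline period and then applied to new observations; 2.66 is the standard XmR constant (3/d2 with d2 = 1.128), and the data are hypothetical.

```python
# Minimal individuals (XmR) control chart sketch:
# centre line = mean of baseline values,
# limits = centre +/- 2.66 * average moving range (moving ranges of size 2).
# Data are hypothetical.
from statistics import mean


def xmr_limits(values):
    if len(values) < 2:
        raise ValueError("need at least two observations")
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    centre = mean(values)
    mr_bar = mean(moving_ranges)
    return centre - 2.66 * mr_bar, centre, centre + 2.66 * mr_bar


def out_of_control(values, limits):
    """Indices of points outside the control limits."""
    lcl, _, ucl = limits
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


baseline = [9, 10, 8, 9, 11, 10, 9, 8, 10, 9]  # e.g. weekly turnaround (days)
limits = xmr_limits(baseline)
new_points = [9, 10, 14, 8]

print("LCL = {:.2f}, CL = {:.2f}, UCL = {:.2f}".format(*limits))
print("signals at:", out_of_control(new_points, limits))
```

A point outside the limits flags possible special-cause variation that should trigger the response plan; points inside the limits reflect common-cause variation and do not call for individual adjustment.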

DMAIC Process Flow with Key Tools

Comparative Analysis: DMAIC vs. Traditional QC Methods

Methodological Comparison

The DMAIC methodology differs substantially from traditional quality control approaches in both structure and application. Whereas traditional methods may lack a formalized framework, Six Sigma provides "a structured and systematic approach through DMAIC, ensuring a clear path to process improvement" [22].

Table 2: Methodological Comparison: DMAIC vs. Traditional QC

| Aspect | Traditional QC Methods | DMAIC Methodology |
|---|---|---|
| Framework Structure | Often ad-hoc or based on PDCA cycles | Structured 5-phase approach [22] |
| Data Utilization | Focuses less on data-driven insights [22] | Heavy reliance on data and statistical analysis [22] |
| Problem-Solving | Simpler problem-solving techniques [22] | In-depth root cause analysis [22] |
| Variability Approach | May accept inherent process variation | Focused on reducing process variation [22] |
| Project Scale | Suitable for smaller, incremental improvements [22] | Better suited for complex, large-scale projects [22] |
| Improvement Focus | Continuous incremental improvement | Breakthrough performance gains [5] |

Application in Pharmaceutical Development

In pharmaceutical contexts, the differences between these approaches become particularly significant. Traditional QC methods in pharma often emphasize compliance with regulatory standards through rigorous testing of final products, focusing on detecting deviations rather than preventing them.

By contrast, DMAIC has been successfully applied to a range of pharmaceutical and healthcare processes, including:

- Reducing wait times for radiology results in clinical trials [38]

- Improving safe administration of medications [38]

- Decreasing unnecessary antibiotic use [38]

- Shortening production cycle times [37]

- Supporting continuous process improvement [37]

- Encouraging process automation [37]

One systematic review identified "196 manuscripts outlining Six Sigma use in the healthcare sector," most of which originated in the United States and were published as case studies [38].

Experimental Protocols and Implementation

DMAIC Implementation Framework

Successful DMAIC implementation follows either a team-based approach or a Kaizen event framework. In the team-based approach, individuals skilled in DMAIC tools lead a team that works on the project part-time alongside regular duties, and projects typically take several months to complete [39]. Kaizen events, by contrast, are an intense progression through DMAIC completed in about a week, with team members dedicated exclusively to the project during that period [39].

Before initiating DMAIC, proper project selection is crucial. Good DMAIC projects typically [40]:

- Address obvious problems within existing processes

- Are meaningful but manageable in scope

- Have the potential to reduce lead time or defects while producing cost savings

- Are amenable to data collection and measurable improvement

Methodological Tools for Quality Improvement

Table 3: Essential Methodological Tools for Quality Improvement Research

| Research Tool | Function | Application Context |
|---|---|---|
| Statistical Software | Advanced data analysis and visualization | All DMAIC phases, particularly Measure and Analyze |
| Control Charts | Monitor process behavior and variation | Measure and Control phases [36] |
| Design of Experiments | Structured approach to study multiple variables | Improve phase for optimizing solutions [39] [36] |
| Failure Mode and Effects Analysis | Identify potential failures preemptively | Analyze phase for risk assessment [39] [36] |
| Process Capability Analysis | Assess process ability to meet specifications | Measure phase for baseline assessment [39] |
| Root Cause Analysis Tools | Identify underlying causes of problems | Analyze phase (Fishbone, 5 Whys) [38] [36] |
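The process capability row can be illustrated with the two most common indices, Cp = (USL - LSL) / (6σ) and Cpk = min(USL - μ, μ - LSL) / (3σ). Here is a minimal sketch that estimates both from sample data. It uses the overall sample standard deviation, so the results are strictly closer to the long-term indices Pp and Ppk than to short-term Cp and Cpk. The specification limits and fill-weight data are hypothetical.

```python
from statistics import mean, stdev


def capability(values, lsl, usl):
    """Estimate Cp and Cpk from sample data and two-sided specification limits.

    Uses the overall sample standard deviation, so the results correspond
    more closely to the long-term indices Pp and Ppk.
    """
    if usl <= lsl:
        raise ValueError("USL must be greater than LSL")
    mu = mean(values)
    sigma = stdev(values)
    cp = (usl - lsl) / (6 * sigma)                 # spread only: ignores centring
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)    # penalizes an off-centre mean
    return cp, cpk


if __name__ == "__main__":
    # Hypothetical tablet fill weights (mg) against a 95-105 mg specification.
    weights = [100.4, 99.1, 101.2, 100.8, 99.6, 100.9, 101.5, 100.2, 99.8, 101.0]
    cp, cpk = capability(weights, lsl=95.0, usl=105.0)
    print(f"Cp = {cp:.2f}, Cpk = {cpk:.2f}")
```

A gap between Cp and Cpk indicates a centring problem rather than excess variation. In the Measure phase, this helps decide whether the project should shift the mean or reduce spread.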

Verification and Validation Protocols

The Control phase includes critical verification activities to ensure sustainability. These include:

- Developing monitoring plans to track the success of updated processes [40]

- Crafting response plans for performance deviations [40]

- Implementing process control plans documenting requirements to maintain improvements [39]

- Establishing ongoing process capability monitoring

In pharmaceutical applications, this typically involves establishing control strategies for critical process parameters that impact critical quality attributes, ensuring consistent drug quality throughout the product lifecycle.

Comparative Performance Data

Quantitative Outcomes Comparison

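Six Sigma's DMAIC methodology demonstrates significant advantages over traditional approaches in quantitative performance measures. While traditional quality management might operate at two to three sigma levels, Six Sigma aims for a yield of 99.99966%, or 3.4 defects per million opportunities (DPMO) [37]. Using the conventional 1.5-sigma long-term shift, a two-sigma process produces roughly 308,500 DPMO (about 31% defective) and a three-sigma process about 66,800 DPMO (about 6.7% defective). Moving from two sigma to six sigma therefore represents a defect reduction from approximately 30% to about 0.00034% [37].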
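The relationship between sigma level, DPMO, and yield can be checked with a short calculation. Here is a minimal sketch that uses the standard normal distribution with the conventional 1.5-sigma shift assumed in standard sigma-level tables, counting only the one-sided tail. The function names are illustrative. At six sigma, it reproduces approximately 3.4 DPMO.

```python
import math
from statistics import NormalDist

# Conventional 1.5-sigma long-term shift used in standard sigma-level tables.
SHIFT = 1.5
MILLION = 1_000_000


def dpmo_from_sigma(sigma_level: float, shift: float = SHIFT) -> float:
    """Defects per million opportunities for a short-term sigma level.

    Counts only the one-sided normal tail beyond (sigma_level - shift)
    standard deviations, as conventional sigma tables do.
    """
    z = sigma_level - shift
    tail = 0.5 * math.erfc(z / math.sqrt(2))  # P(Z > z) for a standard normal variable
    return tail * MILLION


def sigma_from_dpmo(dpmo: float, shift: float = SHIFT) -> float:
    """Inverse of dpmo_from_sigma: short-term sigma level implied by an observed DPMO."""
    if not 0 < dpmo < MILLION:
        raise ValueError("dpmo must be strictly between 0 and 1,000,000")
    return NormalDist().inv_cdf(1 - dpmo / MILLION) + shift


def yield_percent(dpmo: float) -> float:
    """Percentage of opportunities that are defect-free."""
    return 100 * (1 - dpmo / MILLION)


if __name__ == "__main__":
    for level in (2, 3, 4, 5, 6):
        d = dpmo_from_sigma(level)
        print(f"{level} sigma: {d:,.1f} DPMO, yield {yield_percent(d):.5f}%")
```

Without the 1.5-sigma shift, a six-sigma process would correspond to about 0.001 DPMO (one-sided). Quoted sigma levels therefore only compare meaningfully when they use the same convention.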

Table 4: Quantitative Performance Comparison: Traditional QC vs. DMAIC

| Performance Metric | Traditional QC | DMAIC Methodology |
|---|---|---|
| Target Defect Rate | Varies; often 2-3 sigma (roughly 6.7% to 31% defective, assuming the conventional 1.5-sigma shift) | 3.4 defects per million opportunities [37] |
| Primary Focus | Output inspection (detection) | Process input control (prevention) [5] |
| Cost Impact | Higher cost of poor quality | Significant cost savings through defect reduction [37] |
| Cycle Time | Potential for extended timelines | Reduced production cycle times [37] |
| Approach to Variation | May accept inherent variation | Focused on variation reduction [22] |
| Cultural Impact | Quality as a separate function | Cultural change with widespread involvement [5] |

Pharmaceutical Industry Case Data

Pharmaceutical industry implementations demonstrate DMAIC's tangible benefits. Companies applying Six Sigma principles have achieved [37]:

- Reduced cycle times in production processes

- Enhanced continuous improvement capabilities

- More effective risk management and quality control

- Streamlined processes through waste elimination

The methodology has proven particularly valuable for addressing core processes that deliver fundamental products to external or internal customers, selected based on their ability to influence organizational objectives [37].

The DMAIC roadmap represents a fundamentally different approach to quality management compared to traditional QC methods. While traditional approaches focus on detecting defects through output inspection, DMAIC employs a preventive, data-driven methodology that controls process inputs to eliminate defects at their source. This paradigm shift from detection to prevention offers significant advantages for the pharmaceutical industry, where quality failures can have serious consequences.

For drug development professionals and researchers, DMAIC provides a structured framework for achieving measurable, sustainable improvements in processes ranging from manufacturing to clinical development. The methodology's rigorous, data-driven approach aligns well with the scientific discipline inherent in pharmaceutical research while offering the potential for breakthrough improvements in quality, efficiency, and cost-effectiveness.

As the pharmaceutical industry continues to face pressure to improve quality while controlling costs, DMAIC offers a proven methodology for achieving these competing objectives. By adopting this systematic approach, organizations can transition from reactive quality control to proactive quality management, potentially reducing defect rates from approximately 30% to about 0.00034% and moving toward the ultimate goal of zero defects in pharmaceutical products [37].

This guide provides an objective comparison of three foundational Six Sigma Define- and Measure-phase tools (Project Charters, SIPOC, and Voice of the Customer (VOC)) against traditional Quality Control (QC) methods.

Comparative Analysis: Six Sigma vs. Traditional QC Methods

The Define and Measure phases of the Six Sigma DMAIC framework (Define, Measure, Analyze, Improve, Control) introduce a structured, proactive, and customer-centric approach to quality management, which contrasts with the reactive nature of traditional QC [30] [42] [8].

Table 1: High-Level Methodology Comparison

| Feature | Traditional QC Methods | Six Sigma Define/Measure Approach |
|---|---|---|
| Primary Focus | Reactive detection of defects in final products or services [8]. | Proactive problem-solving and defect prevention through process understanding [43] [30]. |
| Problem Definition | Often vague, based on general quality complaints or high-level defect rates. | Precise, using a structured Project Charter and Problem Statement with baseline data [43]. |
| Process Understanding | Limited; focuses on inspection points. | Comprehensive; uses high-level maps like SIPOC to visualize the entire process flow [44] [45]. |
| Customer Focus | Implicit; assumes conformance to internal specifications equals quality. | Explicit; uses Voice of the Customer (VOC) to drive requirements and project goals [30] [46]. |
| Data Usage | Tracks defect counts; used for final product acceptance or rejection. | Establishes baseline performance metrics; used for statistical analysis and root cause investigation [43] [47]. |
| Structured Framework | Lacks a unified, mandatory structure for projects. | Follows the disciplined, sequential DMAIC roadmap [30] [8]. |

Table 2: Quantitative Outcomes Comparison

| Metric | Traditional QC Outcomes | Six Sigma Define/Measure Outcomes |
|---|---|---|
| Error/Defect Rate | Variable; often measured in defects per hundred or thousand [8]. | Aims for a quality level of 3.4 defects per million opportunities (DPMO) [30] [8]. |
| Cost Savings | Reduces costs of scrap and rework. | Reported average cost reduction of 22% and productivity increase of 28% [30]. |
| Project Scope Clarity | Unclear scope can lead to scope creep and wasted effort. | SIPOC and the Project Charter reduce scope creep by defining boundaries upfront [43] [44]. |
| Solution Sustainability | Solutions may not address root causes, leading to recurring issues. | VOC and data-driven charters ensure solutions address true customer needs, improving sustainability [43] [46]. |

Experimental Protocols and Methodologies

The following protocols detail the standard methodologies for implementing the three key Six Sigma tools, providing a reproducible framework for researchers.

Protocol for Developing a Project Charter

A Project Charter formally authorizes a project and provides the foundational blueprint for a Six Sigma initiative [43] [46].

Detailed Methodology:

- Draft Problem Statement: Define the issue concisely without assigning blame or suggesting solutions. Include supporting data on frequency, quantity, duration, and financial impact [43]. Example: "Product defect rates have increased by 15% over the last quarter, leading to a 20% reduction in customer satisfaction and an estimated $250,000 in warranty costs." [43]

- Define Business Case and Goals: State the project's purpose and set SMART objectives (Specific, Measurable, Achievable, Relevant, Time-bound) [43] [30]. Example: "Reduce product defect rates by 20% within six months to improve customer satisfaction and reduce annual warranty costs by $200,000."

- Establish Project Scope: Use a SIPOC diagram (see the SIPOC protocol below) to define process boundaries. Clearly state what is included and, critically, what is excluded from the project [43].

- Identify Stakeholders and Team: List key stakeholders and assign roles and responsibilities to team members, including the project sponsor, team lead (e.g., Black Belt), and subject matter experts [46] [8].

- Outline Timeline and Resources: Develop a high-level project plan and document required resources and budget.

- Gain Formal Authorization: The completed charter must be reviewed and signed by the project sponsor to secure resource commitment and organizational support [43].
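Teams that track charters electronically sometimes encode them as structured records so they can check for completeness before sign-off. Here is a minimal sketch of that idea. The class `ProjectCharter`, its fields, and the helper methods `missing_elements` and `ready_to_launch` are hypothetical names chosen for illustration, not a standard Six Sigma template. The checks mirror the protocol steps above: a problem statement, a business case, a measurable goal, explicit exclusions, named roles, and sponsor authorization.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class ProjectCharter:
    """Hypothetical record of the charter elements listed in the protocol."""
    problem_statement: str
    business_case: str
    goal_metric: str              # e.g. "product defect rate (%)"
    baseline_value: float
    target_value: float
    target_date: date             # the "time-bound" part of a SMART goal
    in_scope: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)
    team: dict[str, str] = field(default_factory=dict)  # role -> person
    sponsor_signed: bool = False

    def missing_elements(self) -> list[str]:
        """Return charter elements that still need attention before sign-off."""
        gaps = []
        if not self.problem_statement.strip():
            gaps.append("problem statement")
        if not self.business_case.strip():
            gaps.append("business case")
        if self.target_value == self.baseline_value:
            gaps.append("measurable goal (target equals baseline)")
        if not self.out_of_scope:
            gaps.append("explicit out-of-scope items")
        for role in ("sponsor", "team lead"):
            if role not in self.team:
                gaps.append(f"{role} assignment")
        return gaps

    def ready_to_launch(self) -> bool:
        """Treat the charter as authorized only when complete and signed by the sponsor."""
        return not self.missing_elements() and self.sponsor_signed


if __name__ == "__main__":
    # All values below are hypothetical.
    charter = ProjectCharter(
        problem_statement="Product defect rates have increased by 15% over the last quarter.",
        business_case="Reduce warranty costs and restore customer satisfaction.",
        goal_metric="product defect rate (%)",
        baseline_value=4.6,
        target_value=3.68,  # a 20% relative reduction
        target_date=date(2025, 6, 30),
        in_scope=["final assembly"],
        out_of_scope=["supplier qualification"],
        team={"sponsor": "Site quality head", "team lead": "Black Belt"},
    )
    print("Missing:", charter.missing_elements())
    print("Ready to launch:", charter.ready_to_launch())
```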

Protocol for Creating a SIPOC Diagram

A SIPOC (Suppliers, Inputs, Process, Outputs, Customers) diagram provides a high-level process map to align stakeholders on project scope and key elements before detailed work begins [44] [45].

Detailed Methodology:

- Define the Process Boundaries: Agree on the starting and ending points of the process to be improved [44].

- Map the High-Level Process Steps: In the center of the diagram, list 4-7 key steps that constitute the process. Use verb-noun format (e.g., "Receive order," "Verify component," "Assemble unit") [44] [45].

- Identify the Outputs: Define the end products, services, or deliverables generated by the process (e.g., "Tested software application," "Shipped product") [44].

- Identify the Customers: List the individuals or entities, internal or external, that receive the outputs (e.g., "End-users," "Stakeholders," "Next department in the assembly line") [44].

- Identify the Inputs: List the materials, information, or resources required for the process to function (e.g., "Raw materials," "Customer requirements," "Code modules") [44].

- Identify the Suppliers: List the individuals, departments, or entities that provide the identified inputs (e.g., "Raw material vendor," "Marketing department," "Software development team") [44].

- Verify the Diagram: Review the completed SIPOC with the project sponsor, champion, and key stakeholders to ensure accuracy and shared understanding [45].
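Because a SIPOC is essentially five parallel lists, it can be drafted as a simple data structure and rendered as a table for stakeholder review. Here is a minimal sketch assuming a hypothetical `Sipoc` class with illustrative `issues` and `to_markdown` methods. It checks the 4-7 step guideline from the protocol. The verb-noun check is only a crude heuristic (at least two words per step), not a real grammatical test. The example entries reuse the illustrations from the protocol, plus one hypothetical step ("Ship product").

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Sipoc:
    """Hypothetical container for a high-level SIPOC map."""
    suppliers: list[str]
    inputs: list[str]
    process_steps: list[str]
    outputs: list[str]
    customers: list[str]

    def issues(self) -> list[str]:
        """Check the map against the protocol guidelines (crude heuristics only)."""
        problems = []
        if not 4 <= len(self.process_steps) <= 7:
            problems.append(f"expected 4-7 process steps, found {len(self.process_steps)}")
        for step in self.process_steps:
            if len(step.split()) < 2:  # a verb-noun step needs at least two words
                problems.append(f"step '{step}' does not look like verb-noun")
        for name in ("suppliers", "inputs", "outputs", "customers"):
            if not getattr(self, name):
                problems.append(f"no {name} listed")
        return problems

    def to_markdown(self) -> str:
        """Render the SIPOC as a five-column Markdown table, padding shorter columns."""
        columns = [self.suppliers, self.inputs, self.process_steps, self.outputs, self.customers]
        rows = max(len(c) for c in columns)
        lines = ["| Suppliers | Inputs | Process | Outputs | Customers |", "|---|---|---|---|---|"]
        for i in range(rows):
            cells = [c[i] if i < len(c) else "" for c in columns]
            lines.append("| " + " | ".join(cells) + " |")
        return "\n".join(lines)


if __name__ == "__main__":
    sipoc = Sipoc(
        suppliers=["Raw material vendor", "Marketing department"],
        inputs=["Raw materials", "Customer requirements"],
        process_steps=["Receive order", "Verify component", "Assemble unit", "Ship product"],
        outputs=["Shipped product"],
        customers=["End-users", "Next department in the assembly line"],
    )
    print(sipoc.issues() or "No issues found")
    print(sipoc.to_markdown())
```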

Protocol for Capturing and Analyzing the Voice of the Customer (VOC)

VOC is a systematic process for capturing customer needs and expectations and translating them into actionable, measurable project goals [30] [46].

Detailed Methodology:

- Gather Customer Data: Collect raw data from customers using a mix of methods [46]:

  - Direct Methods: Surveys, interviews, and focus groups.

  - Indirect Methods: Analysis of complaint logs, warranty claims, and online reviews.

- Interpret and Organize Raw Data: Analyze qualitative data to identify recurring themes, specific complaints, and desired features. Organize these into clear customer need statements.