Optimizing Sample Loading to Prevent Artifacts: A Strategic Guide for Robust Drug Development Data

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on preventing data-distorting overloading artifacts through strategic sample loading optimization. Covering foundational concepts to advanced applications, it details how improper loading amounts can introduce critical errors in analytical techniques from histopathology to chromatography. The content outlines a fit-for-purpose methodology for determining optimal load, practical troubleshooting workflows for identifying and rectifying overload, and robust validation frameworks to ensure data integrity and reproducibility. By integrating these principles, scientists can significantly enhance the reliability of their data in preclinical and clinical research, accelerating the path to successful regulatory approval.

Understanding Overloading Artifacts: Origins, Impact, and Detection in Biomedical Analysis

In scientific research and analysis, "overloading" occurs when the amount of sample introduced into an analytical system exceeds its operational capacity. This creates "overloading artifacts"—observable anomalies in data that do not represent the true nature of the sample but are instead byproducts of system overload. These artifacts can compromise data integrity, leading to inaccurate quantification, misidentification of components, and ultimately, erroneous conclusions. Understanding how to define, identify, and prevent these artifacts is fundamental to optimizing sample loading and ensuring the reliability of experimental results across various methodologies, from chromatography to electrophoresis. This guide provides a structured, technical framework for troubleshooting overloading artifacts within the critical context of optimizing sample load.

Technical Troubleshooting Guides

Diagnosing Column Overload in Liquid Chromatography (LC)

In Liquid Chromatography, column overload happens when the mass of analyte exceeds the binding capacity of the stationary phase.

- Primary Symptoms: The most characteristic signs are a decrease in retention time and a change in peak shape, where the peak develops a sharp, steep front and a trailing edge, resulting in a right-triangle or shark-fin appearance [1] [2]. This occurs because the center of mass of the analyte band moves through the column more quickly than under ideal conditions [1].

- Diagnostic Test: The definitive test for column overload is to reduce the injected sample mass. A 10-fold reduction is a standard starting point. If the peak retention time increases and the peak shape becomes more symmetrical and Gaussian, column overload is confirmed [1] [2].

- Underlying Mechanism: The column's stationary phase has a finite number of "active sites" for interaction. When these are saturated, additional sample molecules cannot be retained and must travel further to find free sites, leading to broader peaks and reduced retention [1] [2].

- Complicating Factors: Overload is not solely dependent on the mass injected; it is also a function of peak concentration. Using a column with a smaller diameter reduces peak volume, increasing the concentration at the peak apex and making overload more likely at the same injected mass. Methods designed to save solvent by using narrower columns often require a proportional reduction in injection volume and mass to avoid this effect [2].
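As a rough illustration of the proportional reduction mentioned above, the sketch below scales an injected mass or volume by the ratio of column cross-sectional areas. The squared-diameter rule and the function name `scale_injection` are assumptions made for illustration (columns of equal length and packing are assumed); they are not taken from the cited sources.

```python
def scale_injection(original_amount, original_id_mm, new_id_mm):
    """Scale an injected mass or volume to a column of different internal diameter.

    Assumption: capacity scales with cross-sectional area (diameter squared)
    for columns of equal length and packing.
    """
    if original_id_mm <= 0 or new_id_mm <= 0:
        raise ValueError("column diameters must be positive")
    return original_amount * (new_id_mm / original_id_mm) ** 2


# Example: a 10 uL injection on a 4.6 mm i.d. column moved to a 2.1 mm i.d. column.
print(round(scale_injection(10.0, 4.6, 2.1), 2))  # about 2.08 uL
```

The result is only a starting point; the reduced-load diagnostic test described above remains the way to confirm that the narrower column is not overloaded.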

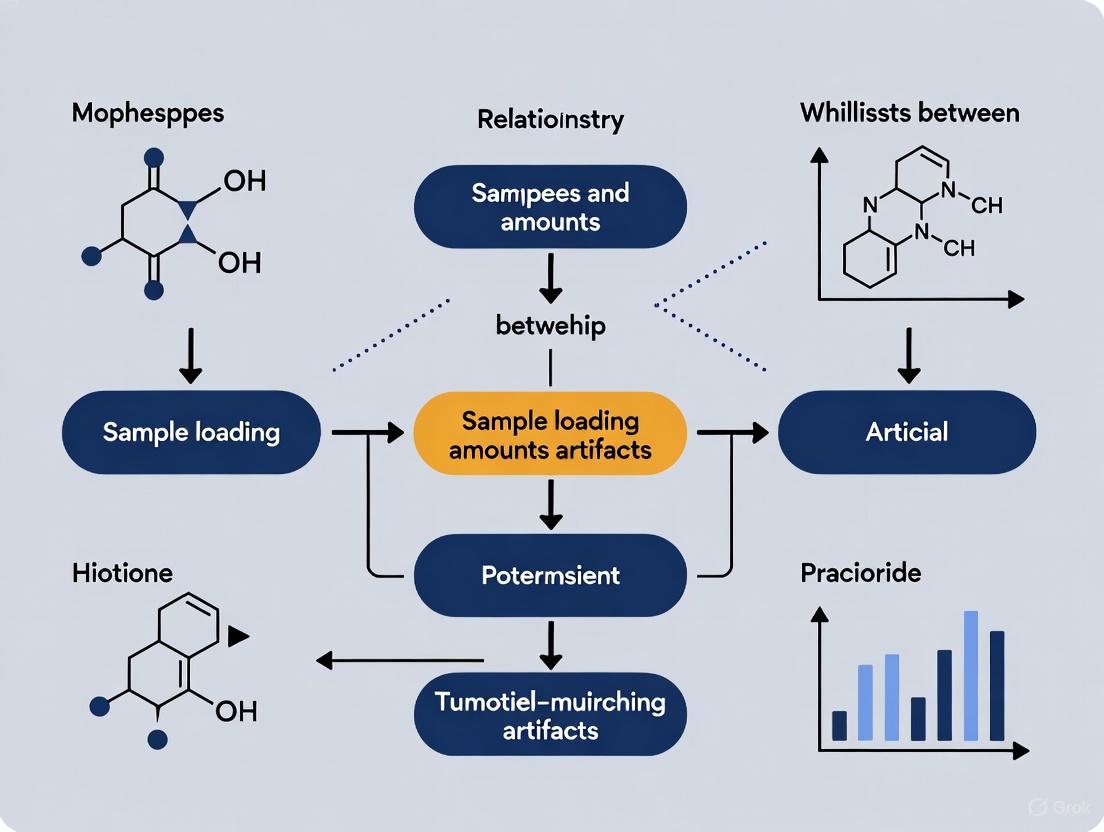

Diagram: Liquid Chromatography Overload Diagnosis Workflow
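The reduced-load diagnostic test can be expressed as a small comparison of two chromatograms. The sketch below is a minimal illustration, not a validated method: it assumes each trace is a baseline-subtracted window containing a single peak, uses the common asymmetry factor measured at 10% of apex height, and the function names and tolerances are hypothetical.

```python
def apex_index(signal):
    """Index of the highest point in the trace."""
    return max(range(len(signal)), key=signal.__getitem__)


def asymmetry_factor(times, signal, height_fraction=0.10):
    """Asymmetry factor (back width / front width) at a fraction of apex height.

    Values well above 1 indicate a tailing peak.
    """
    i_apex = apex_index(signal)
    level = height_fraction * signal[i_apex]
    # Walk outward from the apex until the signal drops below the level.
    i_front = i_apex
    while i_front > 0 and signal[i_front - 1] >= level:
        i_front -= 1
    i_back = i_apex
    while i_back < len(signal) - 1 and signal[i_back + 1] >= level:
        i_back += 1
    front_width = times[i_apex] - times[i_front]
    back_width = times[i_back] - times[i_apex]
    if front_width <= 0:
        return float("inf")  # vertical front: treat as extreme asymmetry
    return back_width / front_width


def looks_like_column_overload(full, reduced, rt_tol=0.01, as_tol=0.1):
    """Compare (times, signal) for the original and the roughly 10x reduced load.

    Returns True when the reduced load elutes later AND is more symmetrical,
    which is the pattern described above for column overload.
    """
    rt_full = full[0][apex_index(full[1])]
    rt_reduced = reduced[0][apex_index(reduced[1])]
    as_full = asymmetry_factor(*full)
    as_reduced = asymmetry_factor(*reduced)
    rt_increased = rt_reduced > rt_full * (1 + rt_tol)
    more_symmetric = abs(as_reduced - 1) < abs(as_full - 1) - as_tol
    return rt_increased and more_symmetric
```

If the function returns False because the tailing is unchanged at the lower load, the co-elution tests described later in this guide are the next step.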

Identifying Detector Overload in Liquid Chromatography (LC)

Detector overload is a distinct issue where the analyte concentration at the detector exceeds its linear response range.

- Primary Symptom: The key indicator is a flat-topped peak [2]. As the analyte concentration reaches the upper limit of the detector's dynamic range, the signal response plateaus, resulting in a truncated peak apex.

- Diagnostic Test: Serial dilution of the sample will cause the peak height to decrease proportionally, and the flat top will revert to a normal, rounded shape once the concentration falls within the detector's linear range [2].

- Important Consideration: The effective linear range of a UV detector can be reduced if the mobile phase itself has significant background absorbance. Even if the baseline is auto-zeroed, the absolute absorbance may consume part of the detector's available range, causing overload at a lower-than-expected analyte concentration [2].
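A flat-topped peak and a reduced usable range can both be checked numerically. The sketch below is one possible heuristic under stated assumptions: the trace is a baseline-subtracted window around a single peak, and the 0.5% tolerance, the 0.3 threshold, and the function names are illustrative choices rather than values from the cited sources.

```python
def plateau_ratio(signal, tolerance=0.005):
    """Ratio of points near the maximum to points above half height.

    A rounded peak has few points within `tolerance` of its maximum;
    a truncated (flat-topped) peak has many.
    """
    peak_max = max(signal)
    if peak_max <= 0:
        raise ValueError("signal has no positive peak")
    near_max = sum(1 for v in signal if v >= (1 - tolerance) * peak_max)
    above_half = sum(1 for v in signal if v >= 0.5 * peak_max)
    return near_max / above_half


def looks_flat_topped(signal, threshold=0.3):
    """Heuristic flag for detector saturation (threshold is an assumption)."""
    return plateau_ratio(signal) >= threshold


def usable_range_au(linear_limit_au, background_au):
    """Absorbance left for the analyte after mobile-phase background.

    Auto-zeroing hides the background on the display but does not
    return this part of the detector's range.
    """
    return max(linear_limit_au - background_au, 0.0)


# Example: a detector linear to 2.0 AU with 0.5 AU of mobile-phase background.
print(usable_range_au(2.0, 0.5))  # 1.5
```

The linear limit itself must come from the detector's specification or a linearity check; the 2.0 AU figure in the example is a placeholder.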

Troubleshooting Gel Electrophoresis Overloading

Overloading in polyacrylamide gel electrophoresis (PAGE) manifests as distortions in the migration pattern of protein or DNA bands.

Symptoms and Causes:

- Distorted Bands ("Smiling" or "Frowning"): This is often caused by uneven heat dissipation (Joule heating) across the gel. The center of the gel becomes hotter, causing samples in middle lanes to migrate faster, creating a "smile" pattern. Overloading wells can exacerbate this by creating local regions of high conductivity and heat [3].

- Poor Band Resolution: Overloading the well with too much protein or DNA causes bands to become thick, merge, and fail to separate cleanly. This is often coupled with vertical smearing [4] [3] [5].

- Horizontal Smearing and Streaking: This can be caused by excess salt in the sample (e.g., exceeding 100 mM), which increases conductivity and distorts the electric field, leading to lane widening and dumbbell-shaped bands [5]. Viscous samples due to genomic DNA contamination can also cause smearing and aggregation [5].

Solutions:

- Reduce Sample Load: For mini-gels, a maximum of 0.5 μg per protein band or 10–15 μg of cell lysate per lane is recommended for optimal resolution [5].

- Reduce Voltage: Running the gel at a lower voltage for a longer duration minimizes Joule heating and improves resolution [3].

- Desalt Samples: Use dialysis, concentrators, or desalting columns to ensure salt concentrations do not exceed 100 mM [5].

- Remove Insoluble Material: Centrifuge samples after heat treatment to prevent streaking from precipitated material [4].
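The load and salt limits above can be turned into a quick pre-run check. The sketch below is illustrative only: the default limits are the 15 μg per lane and 100 mM figures quoted above, while the function name `plan_lane`, the 4x loading buffer, and the well capacity argument are assumptions that should be replaced with the values for your own gel system.

```python
def plan_lane(conc_ug_per_ul, target_ug, sample_salt_mm, well_capacity_ul,
              buffer_fold=4, max_ug=15.0, max_salt_mm=100.0):
    """Compute sample and loading-buffer volumes for one lane and flag risks.

    buffer_fold is the concentration of the loading buffer stock (4 means 4x).
    """
    sample_ul = target_ug / conc_ug_per_ul
    # With an N-fold buffer stock, buffer makes up 1/N of the final volume.
    total_ul = sample_ul * buffer_fold / (buffer_fold - 1)
    buffer_ul = total_ul / buffer_fold

    warnings = []
    if target_ug > max_ug:
        warnings.append("protein load exceeds the recommended maximum")
    if sample_salt_mm > max_salt_mm:
        warnings.append("salt too high: desalt or dialyze before loading")
    if total_ul > well_capacity_ul:
        warnings.append("volume exceeds well capacity: concentrate the sample")

    return {
        "sample_ul": sample_ul,
        "buffer_ul": buffer_ul,
        "total_ul": total_ul,
        "warnings": warnings,
    }


# Example: 15 ug of lysate at 2 ug/uL, 50 mM salt, 20 uL wells.
print(plan_lane(2.0, 15.0, 50.0, 20.0))
```

An empty `warnings` list only means the numeric guidelines are met; band quality on the gel remains the final check.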

The table below summarizes quantitative guidelines for protein gel electrophoresis.

| Artifact | Recommended Maximum Load (Coomassie) | Recommended Maximum Load (Silver Stain) | Critical Buffer Concentration |

|---|---|---|---|

| Poor Resolution / Streaking | 0.5 μg per band; 10-15 μg crude lysate [5] | ~100x less than Coomassie [4] | Salt < 100 mM [5] |

| Smiling/Frowning Bands | N/A (load volume dependent) | N/A (load volume dependent) | N/A |

| Remedial Action | Protocol Adjustment | Key Parameter to Monitor | Additional Tools |

|---|---|---|---|

| Reduce load by 50% | Lower voltage, extend run time | Band sharpness & straightness | Prestained protein ladder [5] |

Table: Protein Gel Electrophoresis Overloading Guidelines and Solutions

Diagram: Gel Electrophoresis Overload Diagnosis Workflow

Differentiating a Tailing Peak from a Co-eluting Minor Peak

In chromatography, a tailing peak can mask the presence of a small, co-eluting impurity, which is a critical issue in purity and stability-indicating assays.

- The Challenge: A minor peak (e.g., 0.1-1% of the main peak area) eluting just after a large, tailing peak can be indistinguishable from the tail itself [1].

- Diagnostic Tests:

  - Inject a Smaller Sample: This is the first step to rule out column overload. If the tailing persists at a 10-fold lower concentration, it suggests a co-eluting peak rather than overload [1].

  - Collect and Reinject Fractions: This is a powerful technique. Collect a fraction from the tailing region of the peak and reinject it. If the minor component is present, the reinjected chromatogram will be enriched for it, making the two peaks more obvious, especially if their areas are more equal [1].

  - Advanced Detection (MS): Mass spectrometry can definitively identify the presence of a second component by detecting two different masses. However, converting a method to be MS-compatible can be non-trivial [1].

  - Peak Purity with Diode-Array Detector (DAD): This technique compares UV spectra across the peak. Its utility is often limited because structurally related compounds (like impurities or degradants) have very similar UV spectra, making discrimination difficult, especially at low relative concentrations [1].

Frequently Asked Questions (FAQs)

Q1: How can I quickly tell if my LC peak is tailing due to column overload or because of a hidden minor peak? The most straightforward test is to inject a 10-fold smaller sample amount. If the tailing disappears and the retention time increases, the issue was column overload. If the tailing persists on a normalized scale, it suggests a co-eluting minor peak [1].

Q2: Why are my protein bands on my western blot faint, even though I loaded a lot of protein? This can be a sign of overloading. Too much protein can lead to poor transfer efficiency to the membrane, increased nonspecific binding, and diffuse bands that are difficult to detect. Try reducing the amount of protein loaded by 50% and ensure you are using a positive control, like a prestained protein ladder, to assess transfer and detection [5].

Q3: My DNA gel bands are "smiling." Is this a sign of overloading? Yes, indirectly. "Smiling" is primarily caused by uneven heating across the gel, with the center being hotter. However, overloading the wells can exacerbate this effect by increasing the local conductivity and heat production. Solutions include reducing the voltage, using a constant current power supply, and loading less sample per well [3].

Q4: What is the single most important factor for preventing overloading artifacts in gel electrophoresis? Using the correct sample mass for the gel size and detection method. Overloading wells is a primary cause of poor resolution, smearing, and distorted bands. Always determine your protein concentration with a reliable assay before loading and follow guidelines for your specific gel system (e.g., 0.5 μg per band for a purified protein on a mini-gel with Coomassie stain) [4] [5].

Q5: My LC detector doesn't seem to be overloaded (no flat tops), but my peaks are broad and retention is shifting. What's happening? This is a classic description of column overload [2]. Detector overload and column overload are separate issues. Your detector may be functioning within its linear range, but the mass of analyte has exceeded the capacity of the column. Perform the diagnostic test of reducing the injection volume/mass to confirm.

The Scientist's Toolkit: Essential Reagents & Materials

| Tool / Reagent | Primary Function in Preventing Overloading Artifacts |

|---|---|

| Desalting Columns / Dialysis Devices | Removes excess salts from samples to prevent lane distortion and smearing in electrophoresis [5]. |

| Slide-A-Lyzer MINI Dialysis Device | A specific example of a device used to decrease salt concentration in small-volume samples [5]. |

| Prestained Protein Ladder | Serves as a critical positive control to monitor electrophoresis run, transfer efficiency, and molecular weight estimation; helps diagnose general system failures [5]. |

| Ultra-Pure Urea & Mixed-Bed Resins | High-purity urea and resins (e.g., AG 501-X8) remove cyanate contaminants that cause protein carbamylation, an artifact that creates charge heterogeneity and spurious bands [4]. |

| Detergent Removal Columns | Removes excess nonionic detergents (Triton X-100, NP-40) that can interfere with SDS binding and cause lane widening and streaking in SDS-PAGE [5]. |

| Benzonase Nuclease | Degrades genomic DNA and RNA in crude cell extracts to reduce sample viscosity, preventing protein aggregation and smearing during electrophoresis [4]. |

| Formate/Acetate Buffers | MS-compatible buffers that can replace non-volatile buffers (e.g., phosphate) when LC-UV methods need to be adapted for LC-MS to investigate peak purity [1]. |

Frequently Asked Questions

What are the most common types of artifacts in gel electrophoresis? The most common artifacts include distorted bands ("smiling" or "frowning"), band smearing, poor resolution between bands, and faint or absent bands [3].

Why is optimizing sample loading amount critical? Overloading wells with too much sample is a primary cause of several artifacts, including distorted bands, smearing, and poor resolution, which can compromise data interpretation and lead to incorrect conclusions [3].

My gel has "smiling" bands. Is this a sample loading issue? While "smiling" bands are primarily caused by uneven heat dissipation during the run, overloading a well with a high salt concentration can create local heating and exacerbate this distortion [3].

No bands are visible on my gel after staining. Could this be related to sample amount? Yes, one potential cause is that the sample concentration loaded into the well was too low to be detected. However, the first step is to check your electrophoresis setup and staining protocol, as the issue may not be with the sample itself [3].

How can I prevent smearing in my protein gel? To prevent smearing, ensure you are not overloading the gel, handle samples gently to avoid degradation, run the gel at a lower voltage, and verify that protein samples are properly denatured [3].

Troubleshooting Guide: Common Electrophoresis Artifacts

The following table outlines common artifacts, their specific causes, and methodological solutions.

| Artifact Type | Primary Causes | Impact on Data Integrity | Corrective Methodologies |

|---|---|---|---|

| Distorted Bands ("Smiling" or "Frowning") | Uneven heat distribution; High salt in samples; Overloaded wells [3]. | Distorts apparent molecular weight and quantity, leading to incorrect sample analysis. | Reduce voltage; Use constant current power supply; Desalt samples; Load smaller sample volumes [3]. |

| Band Smearing & Fuzziness | Sample degradation; Excessive voltage; Incorrect gel concentration; Incomplete digestion or denaturation [3]. | Obscures true band identity and purity, compromising assessments of sample integrity. | Handle samples on ice; Use correct gel concentration; Run gel at lower voltage; Verify complete sample digestion/denaturation [3]. |

| Poor Band Resolution | Suboptimal gel concentration; Overloaded wells; Incorrect run time; Voltage too high [3]. | Prevents clear distinction between molecules of similar sizes, hindering accurate analysis. | Optimize gel concentration for target size; Load less sample; Run gel longer at lower voltage [3]. |

| Faint or Absent Bands | Insufficient sample concentration; Sample degradation; Incorrect staining; Gel loading error [3]. | Can lead to false negative results or failure to detect critical components. | Increase starting material; Verify staining protocol; Check power supply and connections [3]. |

Experimental Protocol: Optimizing Sample Loading to Prevent Artifacts

1. Objective: To determine the optimal sample loading amount that provides clear, well-resolved bands without distortion or smearing for a specific target molecule and gel system.

2. Materials

- Purified sample

- Appropriate DNA/RNA or protein ladder/marker

- Electrophoresis system (gel tank, power supply)

- Pre-cast gels or materials for gel casting (acrylamide, agarose)

- Fresh running buffer

- Staining solution (e.g., Coomassie Blue, SYBR Safe)

3. Methodology

- Step 1: Loading Series: Prepare a series of sample loads that brackets your usual amount. For instance, if a single "standard" load is 10 µL, prepare loads of 5 µL, 10 µL, 15 µL, and 20 µL, adding buffer so that the total volume is the same in every well [3].

- Step 2: Gel Electrophoresis: Load the dilution series alongside an appropriate molecular weight ladder/marker onto the gel. Perform the run at a constant voltage recommended for your gel type, avoiding excessively high voltages that cause heating [3].

- Step 3: Post-Run Analysis: Stain and destain the gel according to your standard protocol. Document the results.
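The loading series in Step 1 can be tabulated before pipetting. The sketch below is a minimal helper under stated assumptions: the function name `loading_series` is hypothetical, every well is topped up with buffer to the largest sample volume unless another total is given, and the "standard" load is used only to express each well as a relative amount.

```python
def loading_series(sample_volumes_ul, standard_ul, total_volume_ul=None):
    """Return per-well sample and buffer volumes at a constant total volume."""
    if total_volume_ul is None:
        total_volume_ul = max(sample_volumes_ul)
    rows = []
    for sample_ul in sample_volumes_ul:
        if sample_ul > total_volume_ul:
            raise ValueError("sample volume exceeds the total well volume")
        rows.append({
            "sample_ul": sample_ul,
            "buffer_ul": total_volume_ul - sample_ul,
            "relative_load": sample_ul / standard_ul,
        })
    return rows


# Example from the protocol: 5, 10, 15 and 20 uL around a 10 uL standard load.
for row in loading_series([5, 10, 15, 20], standard_ul=10):
    print(row)
```

Keeping the total volume constant means that differences between lanes reflect the amount of sample rather than how full each well is.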

4. Data Interpretation and Optimization

- Optimal Load: The load that yields a sharp, well-defined band with high intensity without causing smearing, distortion, or merging with adjacent bands.

- Under-loaded: Band is faint or absent, risking false negatives [3].

- Over-loaded: Band is smeared, distorted, or thick with poor resolution, compromising accuracy and leading to potential misidentification [3].

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function | Considerations for Preventing Artifacts |

|---|---|---|

| Acrylamide/Bis-Acrylamide | Forms the polyacrylamide gel matrix for protein or small nucleic acid separation. | Concentration is critical; must be optimized for the size range of target molecules to ensure proper resolution [3]. |

| Agarose | Forms the gel matrix for separation of larger nucleic acid fragments. | Gel percentage determines pore size; higher percentages provide better resolution for smaller fragments [3]. |

| Running Buffer (e.g., TAE, TBE, SDS-PAGE Buffer) | Conducts current and maintains stable pH during electrophoresis. | Must be fresh and at the correct concentration; depleted or incorrect buffer alters system resistance, causing heating and distortion [3]. |

| Molecular Weight Ladder/Marker | Provides a reference for estimating the size of unknown sample fragments. | Essential for validating that the electrophoresis run was successful and for troubleshooting failed experiments [3]. |

| Staining Solution (e.g., Coomassie, SYBR Safe) | Visualizes separated biomolecules on the gel. | Must be prepared correctly and given adequate staining time; otherwise, bands may not be visible even if present [3]. |

| Sample Loading Buffer | Contains dye to track migration and density agent (e.g., glycerol) to sink sample into well. | Should not contribute excessively to sample salt concentration, which can cause local heating and distorted bands [3]. |

Troubleshooting Guides

This section provides solutions to common problems encountered during the histology workflow, from tissue collection to processing.

Biopsy and Prefixation Guide

| Problem | Causes | Solutions |

|---|---|---|

| Hemorrhage Artifact [6] | Extravasated erythrocytes from the biopsy procedure itself, unrelated to underlying pathology. | Apply local hemostatic agents properly; distinguish artifact from true pathological hemorrhage. |

| Thermal/Heat Artifact [6] | Use of excessive heat during tissue removal with lasers or electrosurgery. | Use optimized power settings for surgical tools; minimize thermal exposure to specimen edges. |

| Tissue Shrinkage (Prefixation) [7] | Exposure to hyperosmolar fixatives or prolonged fixation times. | Use isotonic fixatives where possible; limit fixation time; rehydrate shrunken tissue with distilled water. [7] |

| Tissue Swelling [7] | Use of hypotonic fixatives or overhydration during the initial processing steps. | Use isotonic or slightly hypertonic fixatives; gently blot swollen specimens on absorbent paper to reduce excess moisture. [7] |

| Injected Material Artifact [6] | Presence of substances like intralesional corticosteroids, which can appear as light blue pools of mucin. | Document injection history; be aware of this pitfall to avoid misdiagnosis as a mucinous lesion. |

Fixation Guide

| Problem | Causes | Solutions |

|---|---|---|

| Incomplete Fixation [6] | Delay in fixation; use of an inadequate volume of formalin; insufficient fixation time. | Place tissue in fixative immediately after removal; use at least a 10:1 ratio of fixative to tissue volume; allow 24-48 hours for fixation (formalin penetrates at approx. 1 mm of tissue thickness per hour). [6] |

| Artifact Formation [7] | Overhandling of the specimen, poor sectioning technique, or contamination during fixation. | Handle tissues with care; use clean, well-maintained equipment; practice good laboratory hygiene. [7] |

| Inconsistent Fixation [7] | Uneven distribution of fixative, variability in tissue thickness, or inconsistent handling. | Ensure specimens are fully immersed in fixative; maintain uniform tissue thickness during trimming; standardize handling techniques. [7] |

| Microwave Fixation Artifact [6] | Accelerated fixation using microwave can alter tissue texture and cellular appearance, producing vacuolization, cytoplasmic overstaining, or pyknotic nuclei. | Follow optimized protocols for microwave use. |

| Improper Fixation (Saline) [6] | Storing tissue in saline for transport instead of fixative, which causes vacuolization of basal layer epithelium, separation of collagen, and eventual cell lysis. | Always use an appropriate fixative. |

Tissue Processing & Embedding Guide

| Problem | Causes | Solutions |

|---|---|---|

| Shrinkage of Tissue [8] | Inadequate fixation; rapid dehydration (e.g., jumping from 70% to 100% ethanol too quickly); excessive heat during wax infiltration (>60°C). | Optimize fixation with buffered formalin; use a gradual ethanol series (e.g., 70%, 90%, 100%); keep wax baths at or below 60°C. [8] |

| Retained Air in Samples [8] | Incomplete submersion during fixation; inadequate vacuum cycles in processors; common in porous tissues (e.g., lung). | Submerge tissues fully; use vacuum chambers for porous samples; employ modern processors with vacuum/pressure cycles. [8] |

| Poor Embedding or Infiltration [8] | Incomplete dehydration leaves residual water blocking wax; clearing agents fail to displace ethanol; low-quality paraffin. | Ensure thorough dehydration with a graded alcohol series; use multiple changes of clearing agent; invest in high-quality paraffin with additives. [8] |

| Overprocessed Tissue [6] | Excessive dehydration or clearing, leading to hardened, brittle tissue that is difficult to cut. | Follow recommended times for dehydration and clearing steps; monitor tissue consistency. |

| Underprocessed Tissue [6] | Inadequate dehydration or clearing, resulting in poor paraffin penetration. This leads to tissue that is difficult to cut and causes staining artifacts. | Ensure proper processing time, especially for fatty or thick tissues; prevent water contamination in reagents. |

Frequently Asked Questions (FAQs)

Q1: What is the single most critical step to avoid artifacts in histology? The most critical step is proper and timely fixation [6]. Tissue should be placed in an adequate volume of an appropriate fixative, such as 10% neutral buffered formalin, immediately after collection. Inadequate fixation initiates a cascade of problems that cannot be reversed in later stages.

Q2: How can I prevent tissue shrinkage during processing? Adopt a controlled, gradual processing protocol [8]:

- Fixation: Use phosphate-buffered formalin for 6-24 hours with gentle agitation.

- Dehydration: Follow a gradual ethanol series (e.g., 70%, 90%, 100%) with sufficient time at each step.

- Infiltration: Keep paraffin wax temperature at or below 60°C to prevent over-hardening and shrinkage.

Q3: Our lab is seeing uneven fixation across tissue samples. What could be the cause? This is often due to inconsistent tissue handling [7]. Key things to check are:

- Size: Ensure tissues are trimmed to a uniform thickness (ideally ≤4mm) [8].

- Immersion: Verify that all specimens are fully submerged in fixative without being overcrowded.

- Agitation: Use gentle agitation during fixation to ensure even fixative distribution.

Q4: What are the clear signs of poor tissue infiltration with paraffin? Poor infiltration results in a soft, mushy, or uneven block that is challenging to section with a microtome. The tissue may appear opaque, and sections may crumble or form ribbons that break easily [8] [6].

Q5: How does sample overload during processing manifest, and how can it be prevented? "Overloading" a processor can lead to inconsistent fixation and processing [7]. This occurs when too many or overly large samples are processed together, preventing proper fluid exchange. It manifests as variable tissue quality within the same batch. Prevent it by matching processing schedules and reagent volumes to the tissue type, size, and quantity [8].

Experimental Protocols for Optimization

Protocol 1: Standardized Fixation for Optimal Morphology

This protocol is designed to prevent common fixation artifacts like shrinkage, swelling, and incomplete penetration [7] [6].

- Tissue Trimming: Immediately after biopsy, trim specimen to a uniform thickness not exceeding 4 mm [8].

- Fixative Solution: Immerse tissue completely in 10% neutral buffered formalin. Use a container with a fixative-to-tissue volume ratio of at least 10:1 [6].

- Fixation Time: Allow fixation to proceed for 6 to 24 hours at room temperature, with gentle agitation if possible. For larger samples, extend time accordingly (approximately 1 hour per mm of thickness) [6].

- Post-Fixation Handling: After fixation, transfer tissue to a labeled cassette and rinse briefly in an appropriate buffer before proceeding to dehydration.
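The two numeric limits in this protocol (fixative-to-tissue ratio and specimen thickness) lend themselves to a simple check at accessioning. The sketch below is illustrative: the function name `fixation_check` and the idea of logging tissue volume in millilitres are assumptions, while the 10:1 ratio and 4 mm limit are the values quoted above.

```python
def fixation_check(tissue_volume_ml, thickness_mm, fixative_volume_ml,
                   min_ratio=10.0, max_thickness_mm=4.0):
    """Return the required fixative volume and a list of protocol deviations."""
    required_ml = min_ratio * tissue_volume_ml
    issues = []
    if fixative_volume_ml < required_ml:
        issues.append("fixative volume below the 10:1 fixative-to-tissue ratio")
    if thickness_mm > max_thickness_mm:
        issues.append("specimen thicker than 4 mm: trim before fixation")
    return required_ml, issues


# Example: a 0.5 mL specimen, 3 mm thick, placed in 10 mL of formalin.
print(fixation_check(0.5, 3.0, 10.0))  # (5.0, [])
```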

Protocol 2: Graduated Processing for Delicate Tissues

This protocol minimizes shrinkage and ensures complete infiltration, which is critical for preventing overloading artifacts in downstream analysis [8].

- Dehydration:

  - Process tissues through a series of ethanol baths with increasing concentrations.

  - Recommended Series: 70% ethanol → 90% ethanol → 100% ethanol → 100% ethanol.

  - Timing: 15 to 45 minutes per bath, depending on tissue size and density [8].

- Clearing:

  - Transfer tissue through two changes of xylene, 20 minutes each, followed by a longer bath of 45 minutes to ensure complete removal of ethanol [8].

  - Alternative: Consider xylene-free protocols using agents like isopropanol.

- Infiltration (Wax Impregnation):

  - Immerse tissue in two to three changes of molten paraffin wax.

  - Critical Parameter: Maintain wax temperature at or below 60°C.

  - Duration: 45-60 minutes per bath to ensure thorough infiltration [8].
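Writing the schedule down as data makes it easy to see the total run time and to check the wax temperature limit. The sketch below encodes the steps and time ranges listed above; the variable and function names are hypothetical, and the layout is one possible representation rather than a processor's actual program format.

```python
# (step name, minimum minutes, maximum minutes), taken from the protocol above.
DEHYDRATION_AND_CLEARING = [
    ("70% ethanol", 15, 45),
    ("90% ethanol", 15, 45),
    ("100% ethanol, bath 1", 15, 45),
    ("100% ethanol, bath 2", 15, 45),
    ("xylene, change 1", 20, 20),
    ("xylene, change 2", 20, 20),
    ("xylene, long bath", 45, 45),
]


def paraffin_steps(changes):
    """Two or three changes of molten paraffin, 45-60 minutes each."""
    if changes not in (2, 3):
        raise ValueError("protocol calls for two to three changes of paraffin")
    return [(f"paraffin, change {i + 1}", 45, 60) for i in range(changes)]


def total_minutes(steps):
    """Shortest and longest total duration for a list of steps."""
    return sum(s[1] for s in steps), sum(s[2] for s in steps)


def wax_temperature_ok(temp_c, limit_c=60.0):
    """True when the wax bath is at or below the protocol limit."""
    return temp_c <= limit_c


schedule = DEHYDRATION_AND_CLEARING + paraffin_steps(2)
print(total_minutes(schedule))   # (235, 385)
print(wax_temperature_ok(58.0))  # True
```

With three paraffin changes the range becomes 280 to 445 minutes, which helps when deciding whether a batch fits within a working day or should run overnight.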

Visual Guide: Tissue Processing Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function |

|---|---|

| Phosphate-Buffered Formalin [8] | A standard fixative that stabilizes tissue by cross-linking proteins, preserving cellular morphology in a neutral pH environment. |

| Gradual Ethanol Series [8] | A sequence of ethanol solutions (e.g., 70%, 90%, 100%) used to slowly remove water from tissue during dehydration, preventing warping and excessive shrinkage. |

| Xylene [8] | A clearing agent used to remove alcohol from dehydrated tissue, making it miscible with paraffin wax for the infiltration step. |

| High-Quality Paraffin Wax with Additives [8] | The embedding medium; premium wax with polymers (e.g., styrene) provides hardness and elasticity, enabling thin, high-quality sections. |

| Hemostatic Agents (e.g., Aluminum Chloride) [6] | Used during biopsy to control bleeding, but can introduce granular deposits in tissue, which must be recognized as an artifact. |

Troubleshooting Guides

Why is my experimental data producing unreliable or highly variable outputs in my quantitative model?

This problem often stems from issues with the initial sample quality and preparation, which introduce artifacts that corrupt the data used for model building [4].

- Problem: Unreliable or highly variable model outputs.

- Primary Cause: Degraded or artifact-laden experimental data used as model input.

- Solution: Implement rigorous sample preparation and quality control protocols.

Diagnosis and Solutions Table

| Problem Manifestation | Potential Root Cause | Corrective & Preventive Actions |

|---|---|---|

| Multiple unexpected bands on SDS-PAGE; protein degradation [4] | Protease activity in sample buffer prior to heating [4] | Add sample buffer and immediately heat to 95-100°C for 5 minutes. Alternatively, heat at 75°C for 5 minutes to inactivate proteases while being gentler on proteins [4]. |

| Specific protein cleavage fragments on SDS-PAGE [4] | Cleavage of acid- and heat-labile Asp-Pro bond during heating [4] | Reduce heating temperature to 75°C for 5 minutes to avoid this specific cleavage while still denaturing the protein [4]. |

| Heterogeneous cluster of contaminating bands (~55-65 kDa) [4] | Keratin contamination from skin, hair, or dander in sample or buffer [4] | Run sample buffer alone on a gel to identify contamination source. Remake contaminated buffers, aliquot, and store at -80°C. Use clean gloves and pre-cleaned labware [4]. |

| Distorted, poorly resolved bands; streaking [4] | Overloading or presence of insoluble material [4] | Determine accurate protein concentration. Centrifuge sample (17,000 x g, 2 min) after heating to remove insolubles. For purified protein, load 0.5–4.0 μg; for crude samples, load 40–60 μg for Coomassie staining [4]. |

| Altered protein mass/charge, affecting analysis [4] | Protein carbamylation from urea solution contaminants [4] | Treat urea solutions with a mixed-bed resin to remove cyanate. Add chemical scavengers (e.g., 5-25 mM glycylglycine) or 25-50 mM ammonium chloride. Limit protein exposure time to urea [4]. |

How do I optimize sample loading to prevent column overloading in my analytical assays?

Column overloading occurs when too much sample is injected, saturating the system and leading to distorted data that is unsuitable for quantitative modeling [9].

- Problem: Column overloading causing distorted peaks and inaccurate data.

- Primary Cause: Injecting too high a mass or volume of analyte onto the chromatographic system [9].

- Solution: Systematically determine the loading capacity for your specific column and analytes.

Diagnosis and Solutions Table

| Problem Manifestation | Potential Root Cause | Corrective & Preventive Actions |

|---|---|---|

| Peak broadening, often symmetrical, sometimes with tailing [9] | Volume overload: Injecting too much sample volume [9] | Concentrate the sample if possible so that a smaller volume can be injected. Use an injection technique with a higher split ratio to create a narrower initial sample band [9]. |

| Broad, distorted, non-Gaussian peaks with shifted retention times [9] | Mass overload: Injecting too high a concentration of analyte, saturating interaction sites [9] | Dilute the sample or inject a smaller volume. Increase the split ratio. Consider a column with a larger internal diameter or a thicker stationary phase film [9]. |

| Loss of resolution impacting data quality for modeling [9] | Exceeding the column's sample loading capacity [9] | Determine the "capacity cup-full point" – the amount of solute that increases peak width at half-height by 10%. Use this as the maximum loading limit [9]. |

Experimental Protocol: Determining Sample Loading Capacity

This protocol is adapted from methodologies used to evaluate PLOT columns [9].

- Preparation: Prepare a series of samples with a gradual increase in the concentration of your target analyte.

- Injection: Inject each sample onto your chromatographic system under consistent, optimized conditions.

- Measurement: For each resulting peak, measure the peak width at half height.

- Analysis: Plot the peak width against the amount of analyte loaded on the column.

- Determination: Identify the point at which the peak width increases by 10% compared to its value at very low, non-overloading concentrations. This is the practical loading capacity for your system [9].
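The determination step can be sketched as a simple interpolation over the measured widths. The example below is a minimal illustration under stated assumptions: the lowest load in the series is taken as the non-overloading baseline, the crossing point is found by linear interpolation between neighbouring measurements, and the function name and the example data are hypothetical.

```python
def loading_capacity(loads, half_height_widths, increase=0.10):
    """Estimate the load at which peak width at half height rises by `increase`.

    Returns None if the width never reaches the threshold in the tested range.
    """
    pairs = sorted(zip(loads, half_height_widths))
    baseline_width = pairs[0][1]  # assumed to be a non-overloading load
    threshold = baseline_width * (1 + increase)
    for (load_lo, width_lo), (load_hi, width_hi) in zip(pairs, pairs[1:]):
        if width_lo < threshold <= width_hi:
            # Linear interpolation between the two bracketing measurements.
            fraction = (threshold - width_lo) / (width_hi - width_lo)
            return load_lo + fraction * (load_hi - load_lo)
    return None


# Made-up example data: load in ng on column, width at half height in seconds.
loads = [1, 2, 5, 10, 20]
widths = [2.0, 2.0, 2.1, 2.3, 3.0]
print(loading_capacity(loads, widths))  # close to 7.5
```

A return value of None means the series did not reach overload, so higher loads would need to be tested before a limit can be stated.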

How can I systematically troubleshoot general problems in my experimental research?

A structured approach to troubleshooting is essential for maintaining the integrity of the data used in Model-Informed Drug Development (MIDD).

(Troubleshooting Workflow for Robust Experiments)

Frequently Asked Questions (FAQs)

What is the single most critical step in sample preparation for reliable data?

The most critical step is the immediate and proper denaturation of your sample. Adding sample buffer and then delaying the heating step can allow proteases to digest your proteins of interest at room temperature, generating irreproducible data and misleading degradation profiles. Always heat your samples immediately after adding buffer [4].

My protein of interest is difficult to dissolve. How can I prepare it for electrophoresis?

Some proteins, such as histones and membrane proteins, may not dissolve completely in standard SDS sample buffer alone. You can add 6–8 M urea or a non-ionic detergent like Triton X-100 to the sample buffer to aid in solubilization. After heating, always centrifuge the sample (e.g., 2 minutes at 17,000 x g) to remove any remaining insoluble material before loading the gel to prevent streaking [4].

How does sample overloading in an experiment negatively impact MIDD?

Model-Informed Drug Development relies on high-quality, quantitative data to build and validate mathematical models. Sample overloading in analytical assays (e.g., chromatography, electrophoresis) produces distorted, non-linear, and inaccurate data [9]. Feeding this corrupted data into a model compromises its predictive power, leading to poor decisions about dose selection, trial design, and efficacy evaluation [10]. Ensuring optimal sample loading is a foundational step in generating the reliable inputs required for quantitative predictions.

Where can I find regulatory guidance on applying MIDD in my drug development program?

The FDA's Center for Drug Evaluation and Research (CDER) and Center for Biologics Evaluation and Research (CBER) run a MIDD Paired Meeting Program. This program affords selected sponsors the opportunity to meet with Agency staff to discuss MIDD approaches for a specific drug development program. You can submit a meeting request to the relevant Investigational New Drug (IND) application. Detailed procedures, eligibility criteria, and submission deadlines are provided on the FDA's website [11].

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |

|---|---|

| Dithiothreitol (DTT) / β-mercaptoethanol | Reducing agent that breaks disulfide bonds in proteins, ensuring complete denaturation and linearization for accurate molecular weight analysis on SDS-PAGE [4]. |

| Benzonase Nuclease | Recombinant endonuclease added to viscous cell extracts to degrade DNA and RNA. Reduces viscosity without proteolytic activity, preventing smearing and improving sample resolution in gels [4]. |

| Mixed-Bed Resin (e.g., AG 501-X8) | Used to deionize urea solutions by removing ammonium cyanate, a contaminant that causes protein carbamylation. This prevents unwanted mass and charge alterations [4]. |

| Urea with Stabilizers | A denaturing agent. Using it with stabilizers like 25-50 mM ammonium chloride or glycylglycine pushes the chemical equilibrium away from cyanate formation, minimizing protein carbamylation during preparation [4]. |

| Protease Inhibitor Cocktails | Added to lysis buffers to inhibit a broad spectrum of proteases, preserving protein integrity from the moment of cell lysis and preventing generation of spurious cleavage fragments [4]. |

Strategic Methodologies for Determining Optimal Sample Load Across Techniques

FAQs and Troubleshooting Guides

FAQ 1: What does a "Fit-for-Purpose" framework mean in the context of experimental design?

A "Fit-for-Purpose" framework ensures that a system, policy, or experimental design is operationalized in a manner best suited to local needs and specific contexts [12]. It moves away from a "one-size-fits-all" approach and instead advocates for designing processes that are a "best fit" for their local environment, resources, and analytical goals. This approach is aligned with emerging models that espouse decentralization and is particularly crucial for ensuring that different systems are "optimally adapted to [their] political, social and economic context" [12]. In practice, this means that your sample loading strategies, data collection efforts, and analytical methods should be tailored to your specific research question and the context in which the data will be used, rather than blindly following standardized protocols that may not be optimal for your unique situation.

FAQ 2: How can I systematically determine the optimal sample loading amount to prevent overloading artifacts?

Determining optimal sample loading requires a systematic, data-oriented approach rather than an intuitive, concept-oriented one [13] [14]. The core principle is to minimize data collection and sample loading efforts while preserving analytical efficiency and validity. Follow this decision workflow to establish your optimal load:

A successful application of this framework in EEG research demonstrated that the number of artifact tasks could be reduced from twelve to three, and repetitions of isometric contraction tasks could be decreased from ten to three or even just one, without compromising the detection efficiency [13] [14].

FAQ 3: What are the most common overloading artifacts and how can I identify them?

Overloading artifacts manifest differently across analytical platforms. The table below summarizes common artifacts, their causes, and detection methods in different research contexts:

Table 1: Common Overloading Artifacts and Identification Strategies

| Analytical Context | Common Overloading Artifacts | Primary Causes | Detection Methods |

|---|---|---|---|

| Flow Cytometry | Spectral spillover, high background autofluorescence, false positives from fluorescent spillover spreading error [15]. | Supraoptimal antibody concentration, incorrect detector sensitivity/PMT voltage, inadequate compensation [15]. | Use FMO controls, check stain index, analyze single-stained compensation controls [15] [16]. |

| EEG Recordings | Electromyography (EMG) artifacts from masseter, temporalis, frontalis, and occipitalis muscle groups [13]. | Jaw tensing, biting, teeth grinding, frowning, head turning [13]. | Binary classification using neural architectures to differentiate artifact epochs from non-artifact epochs [13]. |

| High-Dimensional Flow Cytometry | Excessive autofluorescence obscuring weak signals, photon-counting statistical errors at detection limits [15]. | Overly sensitive detector settings, failure to titrate reagents, ignoring cellular autofluorescence characteristics [15]. | Titrate all reagents, adjust detector sensitivity to distinguish autofluorescence from background noise [15]. |

FAQ 4: What controls are essential for validating that my sample load is within optimal range?

Proper controls are non-negotiable for validating optimal sample loading. The specific controls required depend on your analytical platform:

For Flow Cytometry:

- Fluorescence Minus One (FMO) Controls: Critical for establishing accurate gate boundaries and assessing false positives from fluorescent spillover in multicolor panels [15] [16].

- Single-Stained Compensation Controls: Essential for calculating accurate spillover matrices and correcting for spectral overlap [15].

- Isotype Controls: Helpful for measuring non-specific antibody binding, though Fc receptor blockade is often a superior approach [15].

For EEG/EMG Studies:

- Non-Artifact Epoch Controls: Recordings without voluntary artifact generation are necessary to establish a baseline for binary classification models [13].

- Task Repetition Controls: Systematic variation of task repetitions (e.g., from 10 down to 3 or 1) to determine the minimum sufficient data for reliable artifact detection [14].

FAQ 5: My data is noisy despite following protocols. What key parameters should I re-optimize?

When facing persistent noise, systematically re-optimize these key parameters:

Table 2: Key Parameter Optimization for Noise Reduction

| Parameter | Optimization Goal | Practical Application |

|---|---|---|

| Reagent Titration | Find saturating but not supraoptimal concentration [15]. | Determine the best stain index value for each fluorescent reagent on target cells [15]. |

| Detector Sensitivity (PMT Voltage/APD Gain) | Position cell populations centrally in the plot; increase sensitivity to distinguish autofluorescence from background noise [15]. | Fine-tune voltages to clearly distinguish populations (e.g., G0/G1 in cell cycle); keep brightest fluorochrome within linear detection range [17] [15]. |

| Data Collection Scope | Minimize collection efforts while preserving efficiency [13]. | Reduce artifact tasks from 12 to 3 and task repetitions from 10 to 3 or 1, as demonstrated in EEG/EMG research [13] [14]. |

| Gating Strategy | Isolate target populations while excluding debris, dead cells, and doublets [17]. | Use sequential, hierarchical gating: exclude debris (FSC-A vs. SSC-A), select single cells (FSC-A vs. FSC-W), then define target phenotype with fluorescence [17]. |

Experimental Protocols for Key Experiments

Protocol 1: Systematic Optimization of Data Collection to Prevent Overloading

This protocol provides a generalizable framework for determining the minimum sufficient sample load, based on research that successfully optimized EEG data collection [13] [14].

Objective: To minimize data collection and sample loading efforts while preserving analytical efficiency and preventing overloading artifacts.

Materials:

- Standard equipment specific to your analytical domain (e.g., flow cytometer, EEG recording system)

- Necessary reagents or task protocols for your study

- Data recording and analysis software

Methodology:

- Define Artifact Spectrum: Identify all potential artifacts or variables relevant to your analysis. In the EEG study, this included 12 artifact types from masseter, temporalis, and frontalis muscle groups [13].

- Establish Baseline Data Collection: Begin with conventional or literature-based data collection levels (e.g., 12 artifact tasks with 10 repetitions each) [14].

- Iterative Reduction: Systematically reduce the number of variables and repetitions while monitoring detection performance.

- Cross-Validation: Test whether training on a reduced set of artifacts (e.g., 3 instead of 12) can adequately detect other types [13].

- Generalization Testing: Validate the optimized model on unknown subjects to ensure robustness [13].

- Performance Thresholding: Establish minimum data collection levels that maintain >95% of original performance metrics.

Expected Outcomes: A validated, optimized data collection protocol that significantly reduces sample loading (demonstrated reduction of 75% in tasks and 70-90% in repetitions) while preserving analytical integrity [13] [14].
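The iterative reduction and performance thresholding steps can be expressed as a simple selection rule. The sketch below assumes you have already measured a performance metric for each candidate configuration; the function name `minimal_config`, the use of tasks × repetitions as the effort measure, and the scores shown are hypothetical and are not taken from [13] or [14].

```python
def minimal_config(results, baseline_key, retain=0.95):
    """Pick the lowest-effort configuration that keeps > `retain` of baseline performance.

    results: dict mapping (n_tasks, n_repetitions) -> performance metric (higher is better)
    """
    floor = retain * results[baseline_key]
    acceptable = [cfg for cfg, score in results.items() if score > floor]
    # Effort is approximated as tasks x repetitions; ties are broken by the tuple itself.
    return min(acceptable, key=lambda cfg: (cfg[0] * cfg[1], cfg))


# Hypothetical detection scores for each (tasks, repetitions) configuration.
results = {
    (12, 10): 0.90,  # conventional baseline
    (6, 10): 0.89,
    (3, 10): 0.88,
    (3, 3): 0.87,
    (3, 1): 0.86,
    (1, 1): 0.70,
}
chosen = minimal_config(results, baseline_key=(12, 10))
print("Smallest acceptable configuration (tasks, repetitions):", chosen)
```

The selected configuration should still be confirmed on unseen subjects, as the generalization testing step requires, before it replaces the baseline protocol.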

Protocol 2: Flow Cytometry Panel Optimization to Prevent Spectral Overloading

Objective: To develop a high-dimensional fluorescent flow cytometry panel that avoids spectral overlap and overloading artifacts while maintaining signal clarity.

Materials:

- Flow cytometer with multiple lasers and detection capabilities

- Titrated antibody-fluorochrome conjugates

- Single-stained compensation controls

- FMO controls

- Viability dye (e.g., PI or 7-AAD)

Methodology:

- Antibody Titration: For each antibody, determine the optimal concentration that provides the best stain index (signal-to-noise ratio) on target cells. Avoid supraoptimal concentrations that increase background [15].

- Detector Optimization: Adjust PMT voltages to position negative populations clearly away from axis origins while keeping the brightest signals within the linear detection range. Do not decrease sensitivity merely to minimize autofluorescence [15].

- Compensation Setup: Run single-stained controls for each fluorochrome to calculate spillover matrices. Modern instruments can compute these rapidly and precisely with appropriate controls [15].

- Gating Strategy Implementation:

- Step 1: Exclude debris and dead cells using FSC-A vs. SSC-A plot and viability dye [17].

- Step 2: Remove doublets by plotting FSC-A against FSC-W (or FSC-H vs. FSC-W) and gating on the linear cluster representing single cells [17].

- Step 3: Define target phenotype using specific fluorescence markers with thresholds set using unstained and FMO controls [17] [16].

- Validation with FMO Controls: Use FMO controls for each channel to establish accurate positive/negative boundaries, especially for dim populations or those with significant spillover spreading [15].

Expected Outcomes: An optimized flow cytometry panel that minimizes spectral overloading artifacts, provides clear population separation, and generates reproducible, reliable data across experiments.
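To make the antibody titration step concrete, the sketch below computes a stain index for each tested concentration and picks the highest. It assumes one commonly used definition, (median positive − median negative) / (2 × SD of the negative population); the source does not specify a formula, and the concentrations and event intensities are hypothetical.

```python
from statistics import median, stdev


def stain_index(positive, negative):
    """Stain index under the assumed definition: separation / (2 x SD of negatives)."""
    return (median(positive) - median(negative)) / (2 * stdev(negative))


# Hypothetical titration: antibody concentration (ug/mL) -> (positive, negative) intensities.
titration = {
    0.25: ([400, 420, 410], [100, 110, 90]),
    0.5: ([900, 920, 910], [100, 110, 90]),
    1.0: ([1000, 1020, 1010], [150, 180, 120]),
    2.0: ([1050, 1070, 1060], [300, 380, 220]),
}

indices = {conc: stain_index(pos, neg) for conc, (pos, neg) in titration.items()}
best_conc = max(indices, key=indices.get)
print("Concentration with the highest stain index:", best_conc)
```

In this made-up series the two highest concentrations raise the positive signal only slightly while broadening the negative population, which is the supraoptimal behavior the titration is meant to expose.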

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Preventing Overloading Artifacts

| Reagent/Material | Function/Purpose | Application Notes |

|---|---|---|

| Fluorescence Minus One (FMO) Controls | Essential for accurate gating in multicolor flow cytometry; helps resolve ambiguous populations and set boundaries by omitting one fluorochrome from the full panel [15] [16]. | Critical for high-dimensional panels (>10 colors); use for each channel where precise gating is required, especially for dim markers [15]. |

| Single-Stained Compensation Controls | Enable accurate calculation of spectral spillover matrices; necessary for correcting fluorescence overlap between channels [15]. | Must be included for every fluorochrome in the panel; use compensation particles or cells with known antigen expression [15]. |

| Viability Dyes (PI, 7-AAD) | Distinguish live cells from dead cells; dead cells can cause nonspecific binding and increase background noise [17]. | Include in every flow cytometry experiment; gate out dead cells early in the gating strategy to improve data quality [17]. |

| Fc Receptor Blocking Reagents | Reduce nonspecific antibody binding through Fc receptors; superior to isotype controls for assessing specificity [15]. | Particularly important when working with immune cells; use commercial blocking reagents prior to antibody staining [15]. |

| Isometric Contraction Task Protocols | Generate controlled EMG artifacts for EEG data collection optimization; includes jaw tensing, frowning, etc. [13]. | Enable systematic study of artifacts; can be reduced from 10 to 3 repetitions once optimal loading is determined [13] [14]. |

Advanced Technical Diagrams

Flow Cytometry Gating Hierarchy for Optimal Load Validation

The following diagram illustrates the sequential, hierarchical gating strategy essential for ensuring data quality and preventing analytical "overloading" by progressively refining the population of interest.

Data Collection Optimization Pathway

This workflow outlines the systematic approach to reducing data collection efforts while maintaining analytical integrity, moving from concept-oriented to data-oriented design.

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the most common indicators of sample overloading in western blotting? The most common visual indicators are saturated or smeared bands, high background noise, and a loss of resolution between adjacent bands. Quantitatively, when the signal intensity of your target protein ceases to increase linearly with the amount of protein loaded, you have likely reached overloading [18].

Q2: My protein signals are faint even after increasing exposure time. Could this be due to underloading? Yes, faint signals are a classic sign of underloading. Before drastically increasing your load, confirm that your transfer was efficient and your antibodies are working correctly. A systematic approach is to run a scouting gel with a wide range of loading amounts (e.g., 5 µg to 40 µg) to identify the linear range for your specific protein and detection system [19].

Q3: How does a systematic load scouting protocol prevent artifacts in quantitative analysis? Overloading leads to a non-linear relationship between sample amount and signal intensity, which violates the basic assumption of most quantitative assays. A load scouting protocol establishes the dynamic range and the linear response zone for your assay, ensuring that all subsequent quantitative comparisons are made within a range where signal accurately reflects quantity [20]. This is fundamental for generating reliable and reproducible data in drug development.

Q4: What is the first step I should take if I suspect my loading amount is suboptimal? The first step is to establish a performance baseline [19] [20]. Run your assay using your current standard loading amount and document the results, including signal strength, background, and any artifacts. This baseline becomes your reference point for measuring the impact of any adjustments you make during the systematic scouting process.

Q5: Why is it recommended to test a wide range of concentrations during load scouting? Testing a wide range (e.g., from clear underloading to definite overloading) allows you to map the entire response curve of your assay. This visualization helps you pinpoint the precise inflection point where linearity is lost and confidently select an optimal, robust loading amount situated safely within the linear range [18].

Troubleshooting Common Load-Related Issues

| Problem | Potential Causes | Recommended Solution | Verification Method |

|---|---|---|---|

| Smeared Bands | Sample overloading; Improper transfer; Poor gel polymerization. | Perform a load scout (e.g., 5-30 µg); Check gel quality; Optimize transfer conditions. | Bands are sharp and well-resolved on the scouting blot. |

| High Background | Overloading; Non-specific antibody binding; Blocking issues. | Reduce loading amount; Titrate antibodies; Optimize blocking buffer and duration. | Clean background with high signal-to-noise ratio. |

| Non-Linear Standard Curve | Overloading at higher concentrations; Assay dynamic range exceeded. | Extend the scouting range to lower concentrations; Use a different detection method (e.g., a more sensitive chemiluminescent substrate that permits lower loads). | Signal intensity shows a linear increase (R² > 0.98) across the used range. |

| Faint or No Signal | Severe underloading; Protein degradation; Failed transfer or inactive reagents. | Increase load based on scouting results; Check sample preparation; Validate reagents with a positive control. | Clear, detectable signal appears within the linear range. |

| Inconsistent Replicates | Inaccurate sample measurement; Pipetting errors; Gel well artifacts. | Use a highly accurate pipette; Practice consistent loading technique; Include an internal loading control. | Low coefficient of variation (<10%) between technical replicates. |
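The replicate criterion in the last row can be checked with a short calculation. This is a minimal sketch using the sample standard deviation; the band intensities are hypothetical.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """Return the sample coefficient of variation in percent."""
    return 100.0 * stdev(values) / mean(values)


replicates = [2500, 2550, 2600]  # hypothetical band intensities for one sample
cv = coefficient_of_variation(replicates)
passes = cv < 10.0  # threshold from the troubleshooting table
print(f"CV = {cv:.2f}% -> {'acceptable' if passes else 'investigate loading technique'}")
```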

Experimental Protocols for Key Load Scouting Experiments

Protocol 1: Baseline Establishment and Load Scouting

Objective: To determine the linear dynamic range and optimal loading amount for a specific protein-detection system combination.

Materials:

- Protein samples (e.g., cell lysate)

- BCA or Bradford Assay Kit

- 5X Laemmli Sample Buffer

- Precast SDS-PAGE Gel

- Running Buffer

- Transfer System

- Primary and Secondary Antibodies

- Chemiluminescent Substrate

- Imaging System

Methodology:

- Sample Preparation: Quantify your protein sample accurately. Prepare a dilution series in duplicate. A recommended starting range is 5, 10, 15, 20, 25, and 30 µg of total protein per lane [20].

- Electrophoresis and Transfer: Load the samples onto an SDS-PAGE gel and run at constant voltage. Perform a standard wet or semi-dry transfer to a PVDF membrane.

- Immunodetection: Block the membrane, then incubate with your target primary antibody and corresponding HRP-conjugated secondary antibody under optimized conditions.

- Image Acquisition: Develop the blot with chemiluminescent substrate and capture images at multiple exposure times (e.g., 1s, 10s, 60s) to avoid camera saturation [18].

- Data Analysis: Use image analysis software to quantify the band intensity. Plot the signal intensity against the protein amount loaded. The optimal load is the highest amount within the linear portion of the curve (R² > 0.98).
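For the sample preparation step, the per-lane volumes follow from the measured lysate concentration. The sketch below assumes a fixed 20 µL final volume per lane and the 5X sample buffer listed in the materials; the function name `lane_volumes` and the 2 µg/µL lysate concentration are illustrative assumptions.

```python
def lane_volumes(target_ug, lysate_ug_per_ul, final_ul=20.0, buffer_x=5.0):
    """Return (lysate, sample buffer, water) volumes in uL for one lane."""
    lysate = target_ug / lysate_ug_per_ul
    buffer = final_ul / buffer_x  # a 5X buffer makes up one fifth of the final volume
    water = final_ul - lysate - buffer
    if water < 0:
        raise ValueError("Lysate too dilute for this load; concentrate it or raise final_ul.")
    return lysate, buffer, water


# Scouting series from the protocol, with a hypothetical 2 ug/uL lysate.
for load in (5, 10, 15, 20, 25, 30):
    lysate, buffer, water = lane_volumes(load, lysate_ug_per_ul=2.0)
    print(f"{load:>2} ug: {lysate:.1f} uL lysate + {buffer:.1f} uL 5X buffer + {water:.1f} uL water")
```

Keeping the final volume constant across lanes means any band differences reflect the amount of protein loaded rather than differences in buffer composition or well filling.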

Protocol 2: Validation of Optimal Load

Objective: To confirm that the selected optimal loading amount provides reproducible and quantitative results across different sample types and experimental days.

Materials: (Same as Protocol 1, plus additional test samples)

Methodology:

- Experimental Design: Prepare a set of samples, including a standard curve using a control lysate (from the optimal load range) and your experimental samples.

- Parallel Processing: Run the standard curve and experimental samples on the same gel to eliminate inter-gel variability.

- Quantification and Normalization: Quantify band intensities. Normalize the signal of experimental samples against the standard curve to calculate relative abundance, ensuring quantification remains within the validated linear range [19].

Table 1: Load Scouting Results for Hypothetical Protein X (~50 kDa)

| Total Protein Loaded (µg) | Band Intensity (Arbitrary Units) | Background Intensity (Arbitrary Units) | Signal-to-Noise Ratio | Within Linear Range? (Y/N) |

|---|---|---|---|---|

| 5 | 1,250 | 105 | 11.9 | Y |

| 10 | 2,550 | 115 | 22.2 | Y |

| 15 | 4,100 | 125 | 32.8 | Y |

| 20 | 7,900 | 380 | 20.8 | N (Saturation) |

| 25 | 8,200 | 850 | 9.6 | N (High Background) |

| 30 | 8,150 | 1,200 | 6.8 | N (Severe Background) |

Based on the data above, the optimal loading range for Protein X is 10-15 µg, where the signal-to-noise ratio is high and the relationship between load and signal is linear.
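The signal-to-noise and linearity checks behind Table 1 can be reproduced with a short script. This is a sketch under stated assumptions: it starts from the three lowest loads and extends the fit one point at a time until R² falls to 0.98 or below, which is one of several reasonable ways to locate the end of the linear range. The function names are illustrative.

```python
def r_squared(x, y):
    """Coefficient of determination for a simple least-squares line through (x, y)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy ** 2 / (sxx * syy)


def highest_linear_load(loads, signals, min_r2=0.98, min_points=3):
    """Extend the fit from the lowest loads upward while R^2 stays above min_r2."""
    best = None
    for end in range(min_points, len(loads) + 1):
        if r_squared(loads[:end], signals[:end]) > min_r2:
            best = loads[end - 1]
        else:
            break
    return best


# Values from Table 1 (hypothetical Protein X).
loads = [5, 10, 15, 20, 25, 30]
signal = [1250, 2550, 4100, 7900, 8200, 8150]
background = [105, 115, 125, 380, 850, 1200]

snr = [round(s / b, 1) for s, b in zip(signal, background)]
upper_limit = highest_linear_load(loads, signal)
print("Signal-to-noise per lane:", snr)
print("Highest load within the linear range (ug):", upper_limit)
```

With the table values, the fit through 5 to 15 µg meets the R² criterion and adding the 20 µg lane breaks it, which agrees with the range stated above.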

Workflow and Signaling Pathways

Systematic Load Scouting Workflow

Research Reagent Solutions

Table 2: Essential Materials for Load Scouting Experiments

| Item | Function in Load Scouting | Key Consideration |

|---|---|---|

| Protein Quantification Assay (e.g., BCA) | Accurately determines protein concentration for preparing precise loading series. | Choose an assay compatible with your sample buffer (e.g., BCA for SDS-containing samples). |

| Precast SDS-PAGE Gels | Provides consistent pore size and separation quality, reducing gel-to-gel variability. | Select an appropriate percentage gel for your target protein's molecular weight. |

| Validated Primary Antibody | Binds specifically to the target protein for detection. | Antibody specificity and titer must be confirmed to avoid non-specific signals. |

| Chemiluminescent Substrate | Generates light signal upon reaction with the HRP enzyme for detection. | Linearity of the substrate's signal response over time is critical for quantification. |

| Image Analysis Software | Quantifies band intensity and facilitates the generation of signal vs. load plots. | Ensure the software can detect and avoid pixel saturation for accurate quantitation. |

FAQs: Troubleshooting Common Instrumental Issues

Q1: What are the top practices to minimize contamination in my LC-MS/MS system? Contamination is a major source of downtime and unreliable data. Key preventative measures include [21]:

- Solvents and Mobile Phases: Use high-quality LC/MS-grade solvents. Prepare aqueous mobile phases fresh each week and do not "top off" old mobile phase bottles. Adding a minimum of 5% organic content can prevent bacterial growth [21].

- System Configuration: Employ a divert valve to direct initial column effluent away from the mass spectrometer, preventing non-volatile contaminants from entering the source. Use scheduled ionization to only apply voltage when your analytes are eluting [21].

- Sample Preparation: Centrifuge samples (e.g., 21,000 x g for 15 minutes) to pellet particulates. Ensure the autosampler needle aspirates from the top of the vial, not the bottom, to avoid injecting the pellet [21].

- Routine Maintenance: Implement a shutdown method to flush the system with a high organic solvent at the end of each batch. Regular cleaning and replacement of guard columns are paramount [21].

Q2: My chromatographic peaks are tailing, splitting, or broadening. What could be the cause? Poor peak shape directly impacts quantification accuracy. The following table outlines common symptoms, causes, and solutions [22].

Table 1: Troubleshooting Guide for Chromatographic Peak Issues

| Symptom | Primary Cause | Corrective Action |

|---|---|---|

| Peak Tailing [22] | Column overloading / contamination / active silanol sites | Dilute sample or reduce injection volume; replace guard column; add buffer to mobile phase (e.g., ammonium formate with formic acid). |

| Peak Fronting [22] | Solvent-sample mismatch / column degradation | Dilute sample in a solvent that matches the initial mobile phase strength; replace or regenerate the analytical column. |

| Peak Splitting [22] | Solvent incompatibility / solubility issues | Ensure sample is fully soluble and dissolved in a solvent compatible with the mobile phase. |

| Broad Peaks [22] | High extra-column volume / low flow rate / low temperature | Use shorter, narrower tubing; increase flow rate or column temperature. |

Q3: I am experiencing a significant loss of sensitivity in my LC-MS/MS analysis. How can I restore it? First, rule out simple issues like calculation errors, incorrect dilutions, or wrong detector settings [22]. If these are correct, proceed as follows:

- Diagnose with a Standard: Analyze a known standard. If the response is low, the issue is likely instrumental. If the standard is fine, the problem lies in sample preparation or handling [22].

- Check for Adsorption: If poor response is only in the first few injections, active sites in the system may be adsorbing analytes. Condition the system with several preliminary sample injections [22].

- Optimize Source Settings: Ensure the instrument is properly tuned for your compounds. Optimizing the curtain gas and other source parameters can significantly improve signal intensity [21].

Q4: When should I choose GC-MS over LC-MS for quantifying small molecules? GC-MS is an excellent choice for volatile, thermally stable, and non-polar compounds. It is inherently "greener" as it uses gaseous mobile phases, avoiding hazardous solvent waste. A key advantage is the universal and highly reproducible electron impact (EI) ionization, which facilitates easier library matching for compound identification [23]. For example, a 2025 method for paracetamol and metoclopramide achieved rapid, high-resolution separation in just 5 minutes using GC-MS [23].

Experimental Protocols for Precision Quantification

Protocol: A Validated GC-MS Method for Simultaneous Quantification

This protocol is adapted from a green analytical method for the rapid analysis of paracetamol (PAR) and metoclopramide (MET) [23].

1. Instrument Parameters:

- GC-MS System: Agilent 7890A GC coupled with a 5975C MSD.

- Column: Agilent 19091S-433 (5% Phenyl Methyl Silox), 30 m × 250 μm × 0.25 μm.

- Carrier Gas: Helium, constant flow rate of 2 mL/min.

- Injection & Source: Injector temperature: 280°C; Ion source temperature: 150°C.

- Oven Program: Optimized for a 5-minute total runtime.

- Detection: SIM mode monitoring m/z 109 for PAR and m/z 86 for MET.

2. Sample and Standard Preparation:

- Stock Solution: Prepare PAR and MET in ethanol at 500 μg/mL and 100 μg/mL, respectively.

- Working Solutions: Dilute stock solution with ethanol to create a calibration curve.

- Sample Prep: For tablet analysis, dissolve and extract powdered tablets in ethanol. For plasma, use a protein precipitation or solid-phase extraction (SPE) protocol.

3. Validation Data Summary:

Table 2: Validation Results for the GC-MS Assay of PAR and MET [23]

| Parameter | Paracetamol (PAR) | Metoclopramide (MET) |

|---|---|---|

| Linear Range | 0.2 - 80 μg/mL | 0.3 - 90 μg/mL |

| Correlation (r²) | 0.9999 | 0.9988 |

| Precision (RSD%) | < 3.605% | < 3.392% |

| Recovery in Tablets | 102.87% | 101.98% |

| Recovery in Plasma | 92.79% | 91.99% |

Workflow Diagram: Systematic Troubleshooting for LC/GC-MS

The following diagram outlines a logical, symptom-based workflow to diagnose and resolve common issues in your LC-MS or GC-MS analyses, connecting the FAQs and protocols into a single actionable guide.

The Scientist's Toolkit: Essential Research Reagent Solutions

Selecting the right consumables is critical for robust and reproducible results. The following table details key solutions for sample preparation and analysis.

Table 3: Key Research Reagent Solutions for LC/GC-MS Analysis

| Product / Solution | Function | Application Example |

|---|---|---|

| Phospholipid Removal (PLR) Plates [24] | Removes proteins and phospholipids from biological samples in a single step, significantly reducing matrix effects in LC-MS/MS. | Cleanup of serum, plasma, or whole blood for drug analysis. |

| Mixed-Mode SPE Sorbents [24] | Polymeric sorbents with ion-exchange groups provide superior sample cleanliness by retaining analytes via both hydrophobic and ionic interactions. | Extraction of a wide range of drugs from complex matrices prior to LC-MS/MS. |

| Microelution SPE [24] | Uses minimal sorbent and solvent volumes, eliminating the need for evaporation and reconstitution. Ideal for low sample volumes and greener protocols. | Concentrating analytes from small-volume biological samples. |

| Biphenyl/Phenyl-Hexyl LC Columns [24] | Offer complementary selectivity to C18 columns via π-π interactions with aromatic analytes, improving separation for many pharmaceuticals. | Differentiation of drug isomers or compounds with similar hydrophobicity. |

| Enhanced Matrix Removal (EMR) Cartridges [25] | Pass-through cartridges designed for selective removal of specific matrix interferences (e.g., lipids, pigments) without retaining target analytes. | Streamlined cleanup for PFAS in food or mycotoxins in feed. |

| Dual-Bed PFAS SPE Cartridges [25] | Combine sorbents like weak anion exchange and graphitized carbon black for comprehensive extraction and cleanup of PFAS from environmental samples. | Sample prep for EPA Method 1633 (water, soil, tissue). |

Integrating AI and Active Learning for Predictive Loading and Artifact Avoidance

Troubleshooting Guides

FAQ 1: How can I determine the optimal sample loading amount to prevent overloading artifacts in my imaging data?

Problem: Overloading artifacts degrade image quality, complicating quantitative analysis of peri-implant regions or tissue samples. These artifacts appear as bright and dark streaks in CT imaging, obscuring critical anatomical details [26].

Troubleshooting Steps:

Quick Fix (5 minutes): Implement a pre-imaging calibration series.

- Procedure: Run a short, low-resolution scan with a reduced sample load (approximately 50% of your standard protocol).

- Verification: Check for the absence of streaking or saturation artifacts in the calibration image. If the artifacts are gone, proceed with an adjusted load.

- Command Line Snippet (for automated systems):

./scanner_control --protocol calibrate_low_res --sample_load 0.5

Standard Resolution (15 minutes): Integrate an AI-based pre-screening step.

- Procedure: Use a trained deep learning model (e.g., a U-Net architecture) to predict potential artifact regions based on the sample's digital twin or a pre-scan [27] [26].

- Verification: The model should output a probability map of artifact risk. A risk score above 0.7 suggests a high probability of overloading.

- Expected Output: An overlay on your sample preview image highlighting high-risk zones in red (#EA4335).

Root Cause Fix (30+ minutes): Establish an Active Learning loop.

- Procedure: a. Start with a small set of known good and bad loading amounts. b. For each new experiment, let the AI model predict the optimal load. c. Incorporate the results (successful load or artifact) back into the training dataset. d. Periodically retrain the model to improve its predictions [28].

- When to Use: For long-term research projects to progressively minimize artifacts and resource waste.
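The loop in steps a to d can be illustrated with a deliberately simple stand-in for the AI model. The sketch below assumes the "model" is a single load threshold bracketed by the largest known-good and smallest known-bad loads, and that each round queries the midpoint, the load about which such a model is least certain. This reduces to a bisection search; a trained model as described in [28] would replace it in practice. The `simulated_scan` function and its 0.62 limit are hypothetical placeholders for a real experiment.

```python
def active_learning_load(run_experiment, known_good, known_bad, rounds=6):
    """Narrow the safe-load boundary by repeatedly testing the most uncertain load.

    run_experiment(load) -> True if the run shows an overloading artifact.
    Returns (largest artifact-free load, smallest artifact load, labeled history).
    """
    history = [(known_good, False), (known_bad, True)]  # step a: initial labeled set
    good, bad = known_good, known_bad
    for _ in range(rounds):
        query = (good + bad) / 2.0           # step b: model proposes the next load
        artifact = run_experiment(query)
        history.append((query, artifact))    # step c: feed the outcome back
        if artifact:                         # step d: "retrain" by updating the bracket
            bad = query
        else:
            good = query
    return good, bad, history


def simulated_scan(load):
    """Hypothetical experiment: artifacts appear above a relative load of 0.62."""
    return load > 0.62


safe_load, first_bad, log = active_learning_load(simulated_scan, known_good=0.25, known_bad=1.0)
print(f"Largest artifact-free load tested: {safe_load}")
print(f"Smallest load with artifacts: {first_bad}")
```

Each round costs one experiment, so the number of rounds is a direct trade-off between resource use and how tightly the safe loading limit is bracketed.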

Diagram: Active Learning Workflow for Load Optimization

FAQ 2: My AI model for artifact prediction is not generalizing well to new sample types. How can I improve its performance with limited data?

Problem: Models trained on limited or homogeneous data fail when encountering new sample geometries, densities, or material compositions, leading to inaccurate load predictions and persistent artifacts.

Troubleshooting Steps:

Quick Fix (5 minutes): Apply data augmentation to your existing training set.

- Procedure: Use affine transformations (rotation, scaling, shearing) on your existing sample images to simulate variations. For tabular data (e.g., sample features), add Gaussian noise [28].

- Verification: Retrain your model on the augmented dataset and validate on a held-out test set. Look for a 5-10% improvement in accuracy.
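For the tabular case, Gaussian-noise augmentation can be sketched in a few lines. The example assumes numeric feature rows and scales the noise to each value's magnitude; the 5% relative standard deviation, the number of copies, and the feature values are illustrative assumptions.

```python
import random
from typing import List, Sequence


def augment_with_gaussian_noise(rows: Sequence[Sequence[float]],
                                copies: int = 2,
                                relative_sigma: float = 0.05,
                                seed: int = 0) -> List[List[float]]:
    """Return the original rows followed by `copies` noisy versions of each.

    Noise for each value v is drawn from N(0, (relative_sigma * |v|)^2).
    """
    rng = random.Random(seed)  # seeded for reproducibility
    augmented = [list(row) for row in rows]
    for row in rows:
        for _ in range(copies):
            augmented.append(
                [v + rng.gauss(0.0, relative_sigma * abs(v)) for v in row]
            )
    return augmented


# Hypothetical sample features: (mass in mg, density in g/cm^3).
training_rows = [[12.0, 1.05], [30.0, 1.80], [45.0, 2.10]]
augmented_rows = augment_with_gaussian_noise(training_rows)
print(len(training_rows), "->", len(augmented_rows))  # 3 -> 9
```

Augment only the training split. If noisy copies of a sample end up in the held-out test set, the measured improvement will be inflated.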

Standard Resolution (15 minutes): Employ a Multimodal Learning (MM-Net) approach.

- Procedure: Instead of relying on a single data type (e.g., sample weight), integrate multiple data modalities.

- Input 1: Gene expression profiles or material properties of the sample.

- Input 2: Histology Whole-Slide Images (WSIs) or micro-CT scout views.

- Input 3: Molecular descriptors or physical parameters of the sample [28].

- Verification: The MM-Net should outperform unimodal models. Monitor the Matthews Correlation Coefficient (MCC); a value above 0.7 indicates strong performance [28].
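The MCC check can be computed directly from a binary confusion matrix using the standard definition, MCC = (TP·TN − FP·FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN)). The counts in the example are hypothetical.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews Correlation Coefficient for a binary confusion matrix.

    Returns 0.0 when any marginal total is zero, the usual convention
    for an undefined denominator.
    """
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        return 0.0
    return (tp * tn - fp * fn) / denominator


# Hypothetical validation counts for an artifact / no-artifact classifier.
mcc = matthews_corrcoef(tp=40, tn=45, fp=5, fn=10)
print(f"MCC = {mcc:.3f}")
print("strong performance" if mcc > 0.7 else "needs improvement")
```

Unlike accuracy, MCC stays informative when the two classes are imbalanced, which is common when artifact-producing loads are rare in the training data.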

Root Cause Fix (30+ minutes): Utilize Federated Learning (FL) for privacy-preserving model improvement.

- Procedure: a. Develop a base model on your local data. b. Collaborate with other labs to train the model on their local data without sharing raw data. c. Only share model parameter updates. d. Aggregate updates to create a robust, generalized model [27].

- When to Use: In multi-institutional studies where data privacy is a concern but model generalization is critical.
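Step d, the aggregation of parameter updates, is often implemented as a sample-weighted average in the style of federated averaging. The source does not specify the aggregation rule, so the sketch below is one common choice rather than the method used in [27]; the lab parameters and sample counts are made up.

```python
from typing import List, Sequence, Tuple

ClientUpdate = Tuple[Sequence[float], int]  # (model parameters, n_samples)


def federated_average(client_updates: List[ClientUpdate]) -> List[float]:
    """Average client parameters, weighting each client by its sample count."""
    total_samples = sum(n for _, n in client_updates)
    if total_samples == 0:
        raise ValueError("clients reported no training samples")
    n_params = len(client_updates[0][0])
    aggregated = [0.0] * n_params
    for params, n_samples in client_updates:
        if len(params) != n_params:
            raise ValueError("all clients must share the same model shape")
        for i, value in enumerate(params):
            aggregated[i] += value * n_samples / total_samples
    return aggregated


# Only parameters and counts leave each lab; raw data stays local.
lab_a = ([1.0, 2.0], 100)
lab_b = ([3.0, 4.0], 300)
print(federated_average([lab_a, lab_b]))  # [2.5, 3.5]
```

Weighting by sample count lets larger datasets contribute proportionally more, which is why the result sits closer to lab B's parameters.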

Diagram: Multimodal Neural Network (MM-Net) Architecture

Experimental Protocols & Data

Table 1: Quantitative Comparison of Artifact Correction Techniques in CT Imaging

This table summarizes a preclinical validation study comparing a novel Deep Learning-based Metal Artifact Correction (AI-MAC) algorithm against conventional methods, using a metal-free scan as the ground truth [26].

| Technique | Subjective Image Quality Score (1-5) | Signal-to-Noise Ratio (SNR) | Contrast-to-Noise Ratio (CNR) | Soft Tissue Segmentation Completeness (%) |

|---|---|---|---|---|

| Reference (Metal-Free) | 5.0 | 12.1 ± 0.8 | 8.5 ± 0.5 | 100.0 |

| AI-MAC | 4.5 ± 0.3 | 11.8 ± 0.7 | 8.3 ± 0.6 | 99.1 ± 0.5 |

| Virtual Monochromatic Imaging (VMI) | 4.2 ± 0.4 | 9.5 ± 0.6 | 6.1 ± 0.4 | 94.5 ± 0.7 |

| Conventional MAC | 3.1 ± 0.5 | 8.9 ± 0.9 | 5.8 ± 0.7 | 94.0 ± 0.6 |

Note: The AI-MAC algorithm most closely approximates the metal-free reference across all metrics, particularly in characterizing soft tissue [26].
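To make the comparison in Table 1 concrete, the shortfall of each technique relative to the metal-free reference can be expressed as a percentage. The sketch below uses only the mean SNR and CNR values from the table and ignores the reported standard deviations, so it is a rough ranking aid, not a statistical test.

```python
REFERENCE = {"SNR": 12.1, "CNR": 8.5}  # metal-free scan, Table 1 means

TECHNIQUES = {
    "AI-MAC": {"SNR": 11.8, "CNR": 8.3},
    "VMI": {"SNR": 9.5, "CNR": 6.1},
    "Conventional MAC": {"SNR": 8.9, "CNR": 5.8},
}


def percent_shortfall(value: float, reference: float) -> float:
    """How far `value` falls below `reference`, as a percentage of reference."""
    return 100.0 * (reference - value) / reference


shortfalls = {
    name: {metric: percent_shortfall(metrics[metric], REFERENCE[metric])
           for metric in REFERENCE}
    for name, metrics in TECHNIQUES.items()
}

for name, result in shortfalls.items():
    print(f"{name}: SNR -{result['SNR']:.1f}%, CNR -{result['CNR']:.1f}%")
```

On these means, AI-MAC falls roughly 2 to 3% short of the reference on both metrics, while VMI and conventional MAC fall more than 20% short.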

Protocol 1: Preclinical Validation of a Deep Learning-based Artifact Correction Algorithm

Methodology: [26]

- Phantom Design: Two sets of pedicle screws (Φ 6.5 × 30 mm and Φ 7.5 × 40 mm) were inserted consecutively into an ovine vertebral specimen placed in a water base to simulate metal implantation.

- Image Acquisition:

- A metal-free reference was scanned first using a 320-row CT scanner (120 kVp, 100 mAs).

- Scans were repeated with metal inserts using both single-energy and dual-energy protocols.

- Image Reconstruction & Processing:

- The single-energy data with inserts were reconstructed using a Hybrid Iterative Reconstruction (HIR) algorithm combined with conventional MAC and the novel AI-MAC.

- Dual-energy data were reconstructed to generate Virtual Monochromatic Images (VMI) at optimal keV levels.

- Analysis: Images were compared qualitatively via radiologist scoring and quantitatively via CT attenuation, image noise, SNR, CNR, and segmentation accuracy of the paraspinal muscle and vertebral body.
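The quantitative metrics in the analysis step can be computed from region-of-interest (ROI) pixel values. Definitions of SNR and CNR vary between studies; the sketch below assumes one common convention (mean ROI attenuation divided by the standard deviation of a background region) and may not match the exact formulas used in [26]. All pixel values are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def snr(roi: Sequence[float], background: Sequence[float]) -> float:
    """Signal-to-noise ratio: mean ROI value over background noise (SD)."""
    return mean(roi) / stdev(background)


def cnr(roi_a: Sequence[float],
        roi_b: Sequence[float],
        background: Sequence[float]) -> float:
    """Contrast-to-noise ratio between two tissues, using the same noise SD."""
    return abs(mean(roi_a) - mean(roi_b)) / stdev(background)


# Hypothetical CT attenuation samples in Hounsfield units.
muscle = [58.0, 61.0, 60.0, 59.0, 62.0]
vertebral_body = [240.0, 250.0, 245.0, 255.0, 235.0]
water_background = [-4.0, 3.0, 1.0, -2.0, 2.0]

print(f"SNR (muscle) = {snr(muscle, water_background):.1f}")
print(f"CNR (bone vs. muscle) = {cnr(vertebral_body, muscle, water_background):.1f}")
```

Using the same background ROI for every reconstruction keeps the comparison between techniques fair, since the noise estimate is then the only quantity that changes with the algorithm.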

Table 2: Impact of Data Augmentation on Drug Response Prediction (PDX Models)

This table demonstrates how data augmentation techniques can improve model performance when sample size is limited, a common challenge in preclinical research [28].

| Training Data Scenario | Matthews Correlation Coefficient (MCC) | Accuracy | F1-Score |

|---|---|---|---|

| Baseline (Single-drug treatments only) | 0.45 | 0.78 | 0.72 |

| With Augmented Drug-Pairs | 0.61 | 0.85 | 0.81 |

| Multimodal NN (GE + Histology + Augmented Data) | 0.73 | 0.91 | 0.89 |

Note: GE = Gene Expression. Combining multimodal data with augmentation yielded the most significant performance boost for predicting drug response in Patient-Derived Xenograft (PDX) models [28].

Protocol 2: Multimodal Learning with Data Augmentation for Drug Response Prediction

Methodology: [28]

- Data Source: Unpublished PDX drug response data from the NCI Patient-Derived Models Repository (PDMR).

- Data Augmentation:

- Homogenization: Single-drug and drug-pair treatments were combined into a single dataset by standardizing their drug representation.

- Drug-Pair Augmentation: The sample size of all drug-pair treatments was doubled by augmenting the data.

- Multimodal Network (MM-Net):

- Inputs: The model was designed to take four feature sets as input: Gene Expression (GE), Histology Whole-Slide Images (WSIs), and molecular descriptors for two drugs.

- Training: The MM-Net was trained on the combined and augmented dataset to predict a binary representation of treatment response.

- Validation: The MM-Net's performance was benchmarked against baselines, including a unimodal NN using only GE and a LightGBM model, using the MCC metric.
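The homogenization and drug-pair augmentation steps can be sketched with plain records. The source does not say how the drug representation was standardized or how the drug-pair samples were doubled, so the sketch makes two labeled assumptions: a single-drug treatment is encoded as a pair with the same drug in both slots, and each drug-pair sample is duplicated with the drug order swapped. The record fields and sample identifiers are hypothetical.

```python
from typing import Dict, List


def homogenize(record: Dict) -> Dict:
    """Encode any treatment as a (drug_1, drug_2) pair.

    Assumption: single-drug treatments repeat the drug in both slots.
    """
    drugs = record["drugs"]
    drug_1 = drugs[0]
    drug_2 = drugs[1] if len(drugs) > 1 else drugs[0]
    return {"sample": record["sample"], "drug_1": drug_1,
            "drug_2": drug_2, "response": record["response"]}


def augment_drug_pairs(rows: List[Dict]) -> List[Dict]:
    """Double the drug-pair rows by adding an order-swapped copy of each.

    Assumption: the response does not depend on drug order.
    """
    augmented = list(rows)
    for row in rows:
        if row["drug_1"] != row["drug_2"]:
            augmented.append(dict(row, drug_1=row["drug_2"], drug_2=row["drug_1"]))
    return augmented


raw_records = [
    {"sample": "PDX-001", "drugs": ["drugA"], "response": 1},
    {"sample": "PDX-002", "drugs": ["drugA", "drugB"], "response": 0},
    {"sample": "PDX-003", "drugs": ["drugC", "drugD"], "response": 1},
]

combined = [homogenize(r) for r in raw_records]
training_set = augment_drug_pairs(combined)
print(len(combined), "->", len(training_set))  # 3 -> 5
```

Because every row now has the same four-part shape (sample features plus two drug slots), a single network can be trained on single-drug and drug-pair treatments together.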

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function/Benefit |

|---|---|

| Polymeric Nanoparticles (PNPs) | Used as nanodrug carriers (e.g., for Resveratrol). They offer higher structural stability, biocompatibility, and controlled release of encapsulated drugs, enhancing therapeutic efficacy and stability [29]. |

| Biodegradable Polymers (e.g., PVP, PVA, PLGA) | Form the matrix of PNPs. Their biodegradable nature makes them safe for in-vivo delivery, and drugs can be encapsulated within their core or dispersed in the matrix [29]. |

| Stabilizers/Surfactants (e.g., Poloxamer 407, Polysorbates) | Added to nanoparticle formulations to maintain nanoparticle size and shape, preventing aggregation and ensuring uniformity [29]. |

| Patient-Derived Xenograft (PDX) Models | Created by grafting human tumor tissue into immunodeficient mice. These models preserve tumor heterogeneity better than in-vitro cell lines, providing a more reliable platform for preclinical drug response studies [28]. |

| AI-Integrated Quality by Design (QbD) | A systematic approach to pharmaceutical development. Machine Learning is integrated with QbD to model non-linear relationships between material/process parameters and product quality, optimizing formulations more accurately than traditional methods [29]. |

Troubleshooting Workflow: Identifying, Diagnosing, and Correcting Overloading Artifacts

Diagnostic Signatures of System Overload

System overload occurs when an experimental sample or a biological model is pushed beyond its functional capacity, leading to characteristic artifacts and performance degradation. The tables below summarize the key visual, quantitative, and neurophysiological signatures of overload across different experimental systems.

Table 1: Quantitative Signatures of Cognitive Overload in an N-back Task [30]

| Load Condition | Performance Accuracy (Mean) | Reaction Time (Median) | fMRI Activation Signature |

|---|---|---|---|

| 0-back (Control) | Baseline (Target: 'a') | Baseline | Reference level |

| 2-back (Optimal Load) | High | Moderate | Adaptive increase in DLPFC activation |

| 4-back (Overload) | Significant decline | Slowed | Inverted U-shaped response; decreased DLPFC activation |

Table 2: Neurophysiological Signatures of Sensory Processing Overload [31]

| EEG Metric | Correlation with High Sensitivity | Group Difference (High vs. Low SPS) | Topographic Pattern |

|---|---|---|---|

| Beta Power (12.5-25 Hz) | Positive correlation | Significantly higher in HSP group | Most pronounced in central, parietal, temporal regions |

| Gamma Power (25.5-45 Hz) | Not specified | Significantly higher in HSP group | Associated with increased cognitive processing |

| Global EEG Power (1-45 Hz) | Positive correlation | Significantly higher in HSP group | Suggests overall increased information processing |

Troubleshooting Guides & FAQs

Problem 1: Performance Decline Under High Cognitive Load

Q: During my cognitive task (e.g., N-back), subject performance drops sharply at high loads. How can I confirm this is system overload and not poor task design?

A: A specific pattern distinguishes true overload from poor design. In a parametric working memory fMRI study [30], exposure to a capacity-exceeding 4-back load caused not only immediate failure but also a subsequent decline in performance on an otherwise manageable 2-back task. This carryover effect is a key signature of overload.

Diagnostic Protocol [30]:

- Design: Use a block design with varying load levels (e.g., 0-back, 1-back, 2-back, 4-back).

- Counterbalance: Ensure that blocks of moderate load (e.g., 2-back) immediately follow both control (1-back) and overload (4-back) blocks.

- Measure:

- Behavioral: Accuracy and reaction time for each block.

- Neural (fMRI): Brain activation, particularly in the Dorsolateral Prefrontal Cortex (DLPFC) and amygdala.

- Interpretation: True overload is confirmed if:

- Performance on the moderate task is worse after an overload block (2-back/4) compared to after a control block (2-back/1).

- This performance decline is independently predicted by increased amygdala activation and reduced DLPFC activation during the overload condition.
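The behavioral half of this interpretation reduces to a paired comparison: for each subject, 2-back accuracy after a control block minus 2-back accuracy after an overload block. The sketch below computes that carryover effect; the accuracy values are hypothetical, and a formal analysis would add a paired significance test and the neural predictors described in [30].

```python
from statistics import mean
from typing import List, Sequence


def carryover_differences(acc_after_control: Sequence[float],
                          acc_after_overload: Sequence[float]) -> List[float]:
    """Per-subject drop in 2-back accuracy following an overload block."""
    if len(acc_after_control) != len(acc_after_overload):
        raise ValueError("both conditions need one value per subject")
    return [c - o for c, o in zip(acc_after_control, acc_after_overload)]


def carryover_summary(differences: Sequence[float]) -> dict:
    """Mean drop and the share of subjects who performed worse after overload."""
    return {
        "mean_drop": mean(differences),
        "fraction_declined": sum(d > 0 for d in differences) / len(differences),
    }


# Hypothetical per-subject 2-back accuracies.
two_back_after_1back = [0.92, 0.88, 0.95, 0.90]  # condition 2-back/1
two_back_after_4back = [0.85, 0.86, 0.90, 0.83]  # condition 2-back/4

diffs = carryover_differences(two_back_after_1back, two_back_after_4back)
summary = carryover_summary(diffs)
print(f"mean drop = {summary['mean_drop']:.4f}, "
      f"declined in {summary['fraction_declined']:.0%} of subjects")
```

A consistently positive drop across subjects points to a carryover effect, whereas poor performance at 4-back alone would not separate overload from a badly designed task.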

Problem 2: Identifying Neural Correlates of Overload

Q: What are the reliable neural biomarkers I should measure to diagnose overload in a neuroimaging experiment?

A: Overload is characterized by a breakdown in the brain's executive control network and engagement of limbic regions [30]. In EEG studies, a general increase in global power may additionally indicate heightened, potentially inefficient, information processing [31].

Experimental Protocol [30]:

- fMRI Acquisition: Collect Blood Oxygen Level-Dependent (BOLD) data on a 3-Tesla scanner. Use an EPI pulse sequence (e.g., TR = 2 s, TE = 30 ms, whole-brain coverage).

- Preprocessing: Perform standard steps including slice timing correction, motion correction, normalization to a standard template (e.g., MNI), and spatial smoothing.

- Analysis:

- Use a general linear model (GLM) to model task condition effects.

- Apply a random-effects model to individual subject data for group analysis.

- Examine activation in pre-defined Regions of Interest (ROIs): DLPFC and amygdala.

- Conduct a functional connectivity analysis (e.g., psychophysiological interaction - PPI) to test for inverse coupling between the amygdala and DLPFC.
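The core idea of the GLM step is to regress each voxel's (or ROI's) time series on a model of the task. The sketch below is heavily simplified: it fits a single boxcar regressor plus an intercept by ordinary least squares, with no hemodynamic response convolution, drift terms, or noise model. Real analyses should rely on a dedicated neuroimaging package; the synthetic signal and block lengths are assumptions chosen for illustration.

```python
from typing import List, Sequence, Tuple


def boxcar(n_volumes: int, block_length: int) -> List[float]:
    """Alternating rest (0) and task (1) blocks, starting with rest."""
    return [float((i // block_length) % 2) for i in range(n_volumes)]


def fit_glm_single_regressor(signal: Sequence[float],
                             regressor: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of signal = intercept + beta * regressor."""
    n = len(signal)
    mean_x = sum(regressor) / n
    mean_y = sum(signal) / n
    sxx = sum((x - mean_x) ** 2 for x in regressor)
    if sxx == 0:
        raise ValueError("regressor is constant; beta is not identifiable")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(regressor, signal))
    beta = sxy / sxx
    return mean_y - beta * mean_x, beta


# 40 volumes at TR = 2 s, with 10-volume (20 s) blocks.
task = boxcar(n_volumes=40, block_length=10)

# Synthetic ROI signal: baseline of 100 with a 2-unit task-related increase.
roi_signal = [100.0 + 2.0 * t for t in task]

intercept, beta = fit_glm_single_regressor(roi_signal, task)
print(f"baseline = {intercept:.2f}, task effect (beta) = {beta:.2f}")
```

In the overload protocol, one beta per load condition would be estimated for each ROI, and the DLPFC and amygdala betas compared across 2-back and 4-back blocks.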

Problem 3: Optimizing Sample Loading in Drug Discovery

Q: In AI-driven drug discovery, how can I prevent "overloading" generative models to ensure they produce viable, synthesizable molecules?

A: Generative models can fail by producing molecules with poor target engagement or low synthetic accessibility. An effective solution is to implement a structured, iterative workflow with nested active learning cycles that act as a filter [32].

Diagnostic & Optimization Protocol [32]:

- Initial Training: Train a generative model (e.g., Variational Autoencoder) on a general and a target-specific set of molecules.

- Inner Active Learning Cycle:

- Generate new molecules.

- Use chemoinformatic oracles to evaluate drug-likeness and Synthetic Accessibility (SA).

- Fine-tune the model with molecules that pass these filters.

- Outer Active Learning Cycle:

- After several inner cycles, use a physics-based oracle (e.g., molecular docking simulations) to evaluate the accumulated molecules for target affinity.

- Fine-tune the model with high-scoring molecules.

- Validation: Select top candidates for rigorous simulation (e.g., absolute binding free energy calculations) and experimental synthesis and testing.
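The control flow of the nested cycles can be sketched independently of any particular model or chemistry toolkit. In the skeleton below, the generator, the chemoinformatic filter, the affinity oracle, and the fine-tuning step are all passed in as callbacks; the toy stand-ins, the cutoff values, and the convention that a higher affinity score is better are assumptions for illustration only and do not reproduce the implementation in [32].

```python
import random
from typing import Callable, Dict, List

Molecule = Dict[str, float]


def nested_active_learning(
    generate: Callable[[int], List[Molecule]],
    passes_chem_filters: Callable[[Molecule], bool],
    affinity_score: Callable[[Molecule], float],
    fine_tune: Callable[[List[Molecule]], None],
    outer_cycles: int = 2,
    inner_cycles: int = 3,
    batch_size: int = 20,
    affinity_cutoff: float = 0.8,
) -> List[Molecule]:
    """Run inner (cheap filter) cycles inside outer (expensive oracle) cycles."""
    selected: List[Molecule] = []
    for _ in range(outer_cycles):
        accumulated: List[Molecule] = []
        for _ in range(inner_cycles):
            batch = generate(batch_size)
            passed = [m for m in batch if passes_chem_filters(m)]
            fine_tune(passed)           # inner-cycle fine-tuning
            accumulated.extend(passed)
        # The expensive oracle only sees molecules that survived the filters.
        high_scoring = [m for m in accumulated
                        if affinity_score(m) >= affinity_cutoff]
        fine_tune(high_scoring)         # outer-cycle fine-tuning
        selected.extend(high_scoring)
    return sorted(selected, key=affinity_score, reverse=True)


# Toy stand-ins: random scores replace a real generator and real oracles.
rng = random.Random(0)
fine_tune_calls: List[int] = []


def toy_generate(n: int) -> List[Molecule]:
    return [{"sa": rng.random(), "affinity": rng.random()} for _ in range(n)]


def toy_filter(m: Molecule) -> bool:
    return m["sa"] >= 0.5


def toy_affinity(m: Molecule) -> float:
    return m["affinity"]


def toy_fine_tune(molecules: List[Molecule]) -> None:
    fine_tune_calls.append(len(molecules))


candidates = nested_active_learning(toy_generate, toy_filter,
                                    toy_affinity, toy_fine_tune)
print(f"{len(candidates)} candidates after {len(fine_tune_calls)} fine-tuning steps")
```

Placing the cheap filters in the inner loop keeps the number of expensive docking evaluations small, which is the filtering role the nested design is meant to play.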

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Overload Diagnostics

| Item / Reagent | Function / Application | Key Characteristics / Purpose |

|---|---|---|

| N-back Task Paradigm | To parametrically apply cognitive load and induce overload in human subjects [30]. | Letters/numbers presented sequentially; subject indicates match to N steps back. Allows for load manipulation (0-back to 4-back). |

| Functional MRI (fMRI) | To non-invasively measure brain activity correlates of overload (e.g., DLPFC deactivation) [30]. | Blood Oxygen Level-Dependent (BOLD) contrast. Reveals load-dependent activation changes in key neural circuits. |