Maximizing Value in Drug Development: A Strategic Cost-Benefit Analysis of QC Validation Procedures

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to evaluate the economic and operational impact of Quality Control (QC) validation procedures. It bridges foundational economic principles with practical laboratory applications, detailing how methodologies like Six Sigma and Analytical Quality by Design (AQbD) can be leveraged for significant cost savings and enhanced data integrity. Through troubleshooting guides, validation case studies comparing techniques like UFLC-DAD and spectrophotometry, and quantitative analysis of internal versus external failure costs, this resource offers a strategic roadmap for optimizing laboratory efficiency, ensuring regulatory compliance, and maximizing return on investment in biomedical research.

The Economics of Quality Control: Foundational Principles for Effective Validation

Defining Cost-Benefit Analysis in a Regulatory Context

Cost-Benefit Analysis (CBA) serves as a systematic analytical framework within regulatory science, enabling objective evaluation of projects, policies, or procedures by quantifying their expected costs against anticipated benefits. In regulatory contexts, particularly in pharmaceutical development and clinical laboratory medicine, CBA provides a critical decision-making tool that transforms complex trade-offs into comparable financial metrics. This methodology moves beyond simple financial calculations to encompass broader economic, social, and regulatory impacts, offering a structured approach to justify investments in quality control (QC) validation procedures and technological innovations [1].

The core principle of CBA involves calculating a Benefit-Cost Ratio (BCR), where the present value of benefits is divided by the present value of costs. A BCR exceeding 1.0 indicates that benefits surpass costs, justifying the regulatory investment. Supplementary metrics including Net Present Value (NPV), Internal Rate of Return (IRR), and payback period provide additional dimensions for evaluation. Regulatory bodies worldwide increasingly mandate robust CBA to ensure efficient resource allocation, with agencies like the U.S. Department of Transportation and HM Treasury continually updating their frameworks to address modern priorities including climate change, equity, and digital infrastructure [1].

For researchers, scientists, and drug development professionals, CBA offers an evidence-based approach to navigate complex regulatory decisions, from implementing new QC validation procedures to evaluating pharmaceutical supply chain innovations. This guide compares the application and outcomes of CBA methodologies across different regulatory and quality control scenarios, providing experimental data and analytical frameworks to support superior regulatory decision-making.

Core Principles and Methodological Framework

The application of Cost-Benefit Analysis in regulatory contexts follows a standardized methodological framework that ensures comprehensive evaluation and comparability across different QC validation procedures.

Foundational Concepts and Calculation Methods

At its core, CBA in regulatory science relies on several key financial calculations that account for the time value of money and provide objective metrics for comparison:

- Benefit-Cost Ratio (BCR): Calculated as the sum of present value benefits divided by the sum of present value costs. A BCR > 1 indicates a financially viable project [1] [2].

- Net Present Value (NPV): The difference between the present value of benefits and costs, with positive NPV indicating economic viability [1].

- Present Value Formula: PV = FV/(1+r)^n, where FV is future value, r is the discount rate, and n is the number of periods [2].

- Discount Rates: Regulatory agencies specify appropriate discount rates (e.g., USDOT recommends 7% base rate with 3% for sensitivity analysis) to convert future costs and benefits to present values [1].
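The formulas above can be illustrated with a minimal Python sketch. The function names and the 100,000 future benefit are hypothetical and chosen only for illustration. The BCR example reuses the residential construction figures reported in the comparative table later in this article, on the assumption that the stated project cost is already expressed in present-value terms.

```python
def present_value(future_value: float, rate: float, periods: int) -> float:
    """Discount a single future amount: PV = FV / (1 + r)^n."""
    return future_value / (1.0 + rate) ** periods


def benefit_cost_ratio(pv_benefits: float, pv_costs: float) -> float:
    """BCR = present value of benefits / present value of costs."""
    if pv_costs <= 0:
        raise ValueError("pv_costs must be positive")
    return pv_benefits / pv_costs


# Hypothetical benefit of 100,000 received in 5 years, discounted at the
# 7% base rate and the 3% sensitivity rate mentioned above.
pv_at_7 = present_value(100_000, 0.07, 5)
pv_at_3 = present_value(100_000, 0.03, 5)

# Residential construction example [2]: PV benefits 288,000, costs 65,000
# (cost assumed to be a present value).
bcr = benefit_cost_ratio(288_000, 65_000)
npv = 288_000 - 65_000

print(f"PV at 7%: {pv_at_7:,.2f}  PV at 3%: {pv_at_3:,.2f}")
print(f"BCR: {bcr:.2f}  NPV: {npv:,}")  # BCR: 4.43  NPV: 223,000
```

A lower discount rate yields a higher present value for the same future benefit, which is why agencies ask for results at more than one rate.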

The fundamental CBA process employs a systematic seven-step approach: (1) define project scope and baseline; (2) identify and categorize costs and benefits; (3) monetize costs and benefits; (4) apply discount rates; (5) calculate BCR, NPV, and IRR; (6) conduct sensitivity and scenario analysis; and (7) compile and report findings [1]. This structured methodology ensures that regulatory decisions consider both immediate and long-term impacts while accounting for uncertainty through sophisticated risk assessment techniques.

Regulatory CBA Workflow

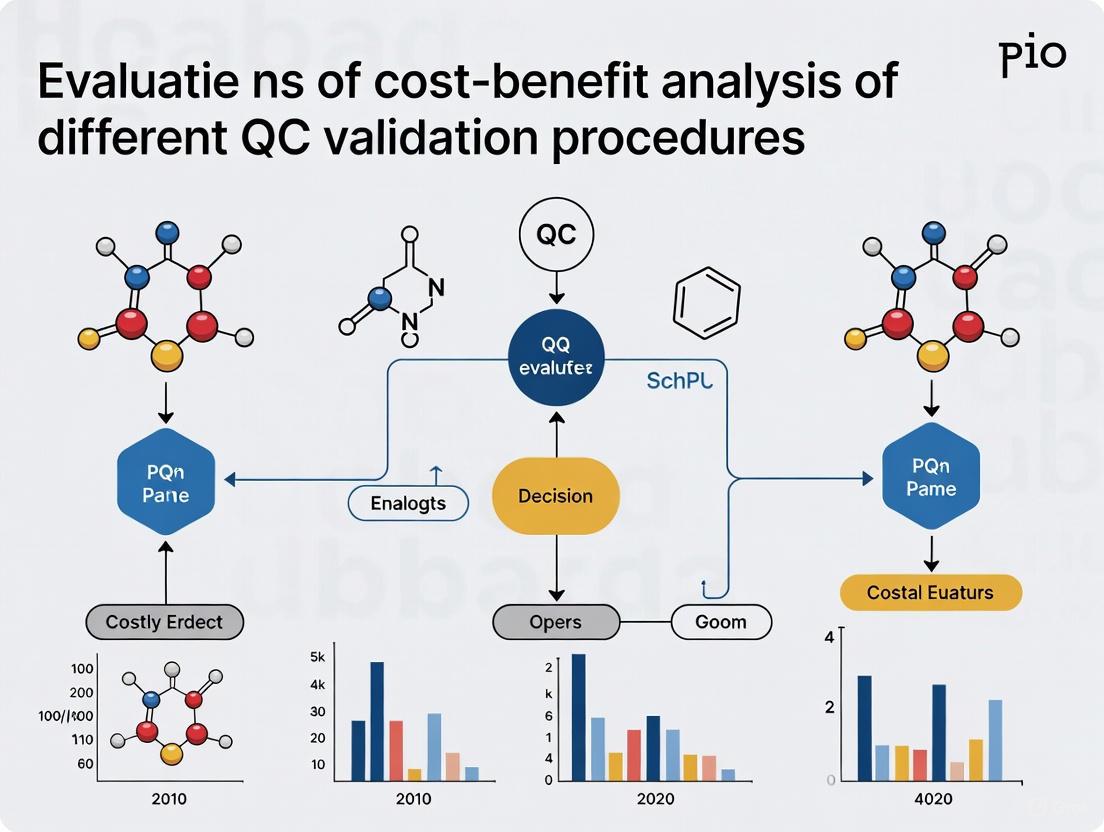

The following diagram illustrates the standardized workflow for conducting cost-benefit analysis in regulatory contexts, particularly for QC validation procedures:

Essential Research Reagent Solutions for CBA Implementation

Successfully implementing CBA in regulatory research requires specific analytical tools and frameworks:

Table: Essential Research Reagent Solutions for CBA Implementation

| Tool/Framework | Primary Function | Application Context |
|---|---|---|
| Six Sigma Methodology | Quality control optimization through statistical process control | Biochemistry lab performance improvement [3] |
| Bias% and CV% Calculations | Quantify measurement accuracy and precision | Sigma metric calculation for QC validation [3] |
| Social Cost of Carbon (SCC) | Monetize environmental impacts ($190/metric ton CO2 in 2025) | Environmental regulation CBA [1] |
| Monte Carlo Simulation | Statistical modeling for uncertainty assessment | Risk analysis in regulatory forecasting [1] |
| Westgard Sigma Rules | QC validation through statistical quality control | Clinical laboratory test validation [3] |
| Distributional Weights | Incorporate equity considerations into analysis | Social impact assessment in regulatory CBA [1] |

Comparative Experimental Data: CBA Applications Across Regulatory Contexts

Experimental applications of CBA across different regulatory environments demonstrate its versatility and impact on decision-making processes.

CBA Outcomes in Healthcare and Pharmaceutical Contexts

Recent research provides quantitative evidence of CBA effectiveness across multiple regulatory domains:

Table: Comparative CBA Outcomes in Regulatory Contexts

| Regulatory Context | CBA Methodology | Key Quantitative Findings | Benefit-Cost Ratio |
|---|---|---|---|
| Biochemistry Lab QC [3] | Six Sigma with Westgard rules | Absolute savings of INR 750,105.27; 50% reduction in internal failure costs | Not specified |
| Korean Pharmaceutical Information Service [4] | Net present value calculation over 12 years | Net financial benefit: $37.2 million; Social benefit: $571.6 million | 2.6 (financial), 24.8 (with social benefits) |
| Pharmaceutical Innovation [5] | Elasticity modeling of revenue impact | 10% revenue reduction leads to 2.5-15% innovation decline | Not applicable |
| Residential Construction Project [2] | Present value benefit calculation | Project costs: $65,000; Present value benefits: $288,000 | 4.43 |

Experimental Protocols and Methodologies

Six Sigma QC Validation Protocol

A yearlong study evaluating biochemistry lab performance implemented the following experimental protocol [3]:

- Sigma Metric Calculation: Six sigma values for 23 routine chemistry parameters were calculated over one year using bias% and coefficient of variation (CV%)

- QC Validation: Application of New Westgard sigma rules using Biorad Unity 2.0 software

- Comparative Analysis: Evaluation of false rejection rates, probability of error detection, and costs of reruns/repeats before and after implementing new sigma rules

- Cost Assessment: Calculation of internal failure costs (rework, repeats) and external failure costs (incorrect results impacting patient care)

- Savings Calculation: Computation of relative and absolute annual savings comparing traditional QC approaches with optimized Six Sigma methods

This protocol demonstrated that carefully planned quality control techniques could achieve significant cost reductions by lowering both internal and external failure costs through effective prevention and appraisal cost planning [3].

Pharmaceutical Supply Chain Monitoring Protocol

The Korean Pharmaceutical Information Service (KPIS) implemented this experimental framework to evaluate its pharmaceutical tracking system [4]:

- Data Integration: Established unified system connecting manufacturing, distribution, and dispensing data

- Serialization: Implemented 13-digit product codes for individual drug tracking

- Real-time Monitoring: Daily reporting of shipping, returns, and disposal information

- Discrepancy Investigation: Automated comparison of wholesaler supply data against NHI claims data from hospitals/pharmacies

- Cost-Benefit Calculation: Assessment over 12-year period including labor, system development, and operational costs versus financial and social benefits

The study conducted sensitivity analyses on annual benefits, demonstrating how net benefit varied according to program implementation year, with results ranging from -$1.5 million to $24.7 million [4].

Advanced Methodological Considerations in Regulatory CBA

Addressing Uncertainty and Equity in Regulatory Analysis

Modern CBA applications in regulatory contexts must account for complex factors beyond direct financial calculations:

Risk and Uncertainty Management: Regulatory CBA employs multiple techniques to address forecasting uncertainties. Sensitivity analysis adjusts one variable at a time to assess its impact on results, while scenario analysis models best-case, worst-case, and most likely scenarios. Monte Carlo simulations run thousands of iterations over randomly sampled inputs, producing probability distributions of results rather than single-point estimates [1]. These approaches matter in pharmaceutical regulation for the same reason they matter elsewhere: forecasts frequently miss. Among infrastructure projects, for instance, approximately 28% experience cost overruns and 17% face benefit shortfalls [1].
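A Monte Carlo treatment of the BCR might look like the sketch below. The triangular input ranges, iteration count, and function name are hypothetical and serve only to show how a distribution of outcomes replaces a single-point estimate.

```python
import random
import statistics


def simulate_bcr(n_iter: int = 10_000, seed: int = 0) -> list[float]:
    """Sample uncertain PV benefits and PV costs and return the simulated BCRs."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    results = []
    for _ in range(n_iter):
        # Hypothetical triangular distributions: (low, high, most likely).
        pv_benefits = rng.triangular(80_000, 160_000, 120_000)
        pv_costs = rng.triangular(50_000, 90_000, 60_000)
        results.append(pv_benefits / pv_costs)
    return results


bcrs = simulate_bcr()
mean_bcr = statistics.mean(bcrs)
# Share of iterations in which benefits exceed costs.
p_viable = sum(b > 1.0 for b in bcrs) / len(bcrs)
# quantiles(n=20) returns 19 cut points; first and last are the 5th and 95th percentiles.
cuts = statistics.quantiles(bcrs, n=20)
p5, p95 = cuts[0], cuts[-1]

print(f"mean BCR {mean_bcr:.2f}, 5th-95th percentile {p5:.2f}-{p95:.2f}, "
      f"P(BCR > 1) = {p_viable:.1%}")
```

Reporting the percentile range and the probability that the BCR exceeds 1 gives decision-makers a sense of downside risk that a single ratio hides.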

Equity and Distributional Weights: Contemporary regulatory CBA increasingly incorporates equity considerations through distributional weights. This approach assigns higher value to benefits received by disadvantaged populations, recognizing that a dollar gained by marginalized groups has more societal value than the same dollar earned by high-income populations. For example, a health intervention benefiting low-income communities may receive a distributional weight of 1.5, effectively amplifying its impact in overall CBA [1]. This methodological evolution aligns regulatory analysis with modern ESG-focused investor expectations and policy frameworks promoting equitable development.
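As a small worked example of distributional weighting, the sketch below applies the 1.5 weight mentioned above to one group's benefits. The group names and benefit amounts are hypothetical.

```python
def weighted_benefits(benefits_by_group: dict[str, float],
                      weights: dict[str, float]) -> float:
    """Sum benefits after applying each group's distributional weight (default 1.0)."""
    return sum(amount * weights.get(group, 1.0)
               for group, amount in benefits_by_group.items())


# Hypothetical present-value benefits by population group.
benefits = {"low_income": 40_000.0, "other": 60_000.0}
weights = {"low_income": 1.5}  # weight from the example in the text

unweighted = sum(benefits.values())              # 100,000
weighted = weighted_benefits(benefits, weights)  # 40,000 * 1.5 + 60,000 = 120,000

print(f"Unweighted: {unweighted:,.0f}  Weighted: {weighted:,.0f}")
```

Presenting both totals side by side keeps the effect of the weighting choice visible to reviewers.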

Decision Framework for Regulatory CBA

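The final stage of regulatory CBA converts analytical results into actionable decisions through a structured decision framework that weighs financial metrics alongside qualitative factors such as strategic alignment and stakeholder concerns.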

This balanced approach prevents over-reliance on pure financial metrics while maintaining analytical rigor. Companies that effectively implement regulatory CBA typically create cross-functional decision teams including both financial analysts and operational experts, helping identify blind spots in the analysis and increasing buy-in for the ultimate regulatory decision [6].

Cost-Benefit Analysis represents an indispensable methodological framework for regulatory decision-making, particularly in pharmaceutical development and quality control validation. The comparative experimental data presented demonstrates that systematically applied CBA methodologies can generate substantial financial and social returns across diverse regulatory contexts.

From the 50% reduction in internal failure costs achieved through Six Sigma implementation in clinical biochemistry [3] to the $571.6 million in social benefits generated by Korea's integrated pharmaceutical information system [4], the quantitative evidence confirms that structured CBA approaches deliver measurable value. However, successful implementation requires more than simple financial calculations—it demands careful consideration of uncertainty, equity impacts, strategic alignment, and stakeholder concerns.

For researchers, scientists, and drug development professionals, mastering CBA methodologies provides a critical competitive advantage in navigating increasingly complex regulatory environments. By applying the frameworks, experimental protocols, and analytical tools outlined in this guide, regulatory professionals can optimize quality control validation procedures, justify strategic investments, and ultimately enhance both economic efficiency and public health outcomes through evidence-based decision-making.

In the landscape of drug development and clinical research, effective financial management is paramount. A critical aspect of this management involves understanding and categorizing the various costs associated with laboratory operations, particularly in Quality Control (QC) validation procedures. Within the context of cost-benefit analysis, laboratory costs are systematically classified into three fundamental components: direct costs, indirect costs, and intangible costs [7]. This framework enables researchers and laboratory managers to accurately evaluate the true economic impact of their QC strategies, from routine biochemistry analyses to complex drug development protocols. A comprehensive grasp of these cost components is not merely an accounting exercise; it forms the essential foundation for optimizing resource allocation, justifying investments in new technologies, and ultimately ensuring the financial sustainability of research endeavors while maintaining the highest standards of data integrity and patient safety.

Defining the Core Cost Components

Understanding the distinct nature of each cost category is the first step toward effective cost management and analysis in a laboratory setting.

Direct Costs

Direct costs are expenses that can be specifically and exclusively identified with a particular project, test, or analysis [8]. These costs are incurred as a direct result of medical or analytical management and can be traced to a specific cost object (e.g., a specific assay, validation project, or drug trial) with high accuracy [7]. In laboratory operations, direct costs are often the most visible and easily quantifiable.

Examples in Laboratory Context:

- Reagents and Consumables: Costs of chemicals, antibodies, enzymes, and other disposable materials used in specific tests [9].

- Specialized Labor: Salaries and benefits for technicians and scientists directly engaged in performing the QC validation or specific assays.

- Equipment Depreciation: Cost of specialized analytical instruments (e.g., spectrometers, autoanalyzers) dedicated to a particular project [9].

- Calibration Materials: Purchase of third-party controls and calibrators used for specific analytical runs [9].

Indirect Costs

Indirect costs, also known as facilities and administrative (F&A) costs, are general institutional expenditures incurred for common or joint objectives that benefit multiple projects or activities [8]. These costs cannot be readily identified with a single sponsored project or specific test but are necessary for the overall operation of the laboratory.

Examples in Laboratory Context:

- Utilities: Costs of electricity, water, and gas required to maintain the laboratory environment.

- Administrative Salaries: Salaries for laboratory managers, procurement staff, and safety officers not directly working on a single project.

- Facility Maintenance: Costs for cleaning, waste disposal, and maintenance of the general laboratory infrastructure.

- IT Infrastructure: Laboratory Information Management System (LIMS) licenses and network costs shared across multiple projects.

Intangible Costs

Intangible costs are expenditures or losses that are difficult to measure and quantify objectively in monetary terms [10]. These costs represent real economic impacts that affect laboratory efficiency and value but are not captured in traditional accounting systems. In QC procedures, they often manifest as productivity losses or opportunity costs [11].

Examples in Laboratory Context:

- Productivity Loss: Decreases in output or efficiency when implementing a new QC procedure or during staff training periods [12].

- Opportunity Cost: Lost research opportunities when highly skilled personnel are occupied with troubleshooting QC failures instead of pursuing innovative work.

- Reputational Damage: Long-term impact on a laboratory's credibility and brand value due to erroneous results or delayed deliverables [11].

- Morale and Turnover: Costs associated with reduced employee morale, increased stress, and potential turnover due to inefficient workflows or constant firefighting of QC issues [10].

Table 1: Comparative Overview of Laboratory Cost Components

| Cost Category | Definition | Quantifiability | Examples in Laboratory Context |
|---|---|---|---|
| Direct Costs | Expenses directly identifiable with a specific project or test [8] | High | Reagents, specialized labor, dedicated equipment, calibration materials [9] |
| Indirect Costs | Overhead expenses supporting multiple projects [8] | Moderate | Utilities, administrative salaries, facility maintenance, shared IT infrastructure |
| Intangible Costs | Non-monetary costs affecting efficiency and value [10] | Low | Productivity loss, opportunity cost, reputational damage, morale issues [11] |

Experimental Case Study: Cost-Benefit Analysis of QC Validation in a Biochemistry Lab

A 2025 study provides compelling experimental data on the financial impact of optimizing QC validation procedures in a clinical biochemistry laboratory, demonstrating the practical application of cost component analysis [9].

Methodology and Experimental Protocol

The retrospective study analyzed 23 routine biochemistry parameters on a Beckman Coulter AU680 autoanalyzer over one year [9].

Experimental Protocol:

- Sigma Metric Calculation: Sigma metrics were calculated for each analyte using the formula: σ = (TEa% - bias%) / CV%, where TEa is total allowable error, bias% indicates inaccuracy, and CV% represents imprecision [9].

- QC Validation: Using Biorad Unity 2.0 software, researchers applied New Westgard Sigma Rules to identify optimal QC procedures based on each analyte's sigma performance [9].

- Cost Assessment: Internal and external failure costs were calculated for both existing and candidate QC procedures using specialized financial worksheets [9].

- Comparative Analysis: The financial impact was assessed by comparing total costs before and after implementing the optimized QC rules, with savings expressed in both absolute (Indian Rupees) and relative terms [9].
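The sigma metric in the first step can be computed as in the sketch below. The analyte names and their TEa, bias, and CV values are hypothetical, and taking the absolute value of the bias is an assumption (a common convention) rather than something stated in the study.

```python
def sigma_metric(tea_pct: float, bias_pct: float, cv_pct: float) -> float:
    """Sigma = (TEa% - |bias%|) / CV%.

    The absolute value of bias is an assumption; the formula in the text
    is written with bias% directly.
    """
    if cv_pct <= 0:
        raise ValueError("cv_pct must be positive")
    return (tea_pct - abs(bias_pct)) / cv_pct


# Hypothetical analytes: (TEa%, bias%, CV%).
analytes = {
    "analyte_A": (10.0, 2.0, 2.0),
    "analyte_B": (10.0, 1.0, 1.5),
}

sigmas = {name: sigma_metric(*values) for name, values in analytes.items()}
for name, sigma in sigmas.items():
    print(f"{name}: sigma = {sigma:.1f}")  # analyte_A: 4.0, analyte_B: 6.0
```

Analytes with higher sigma values tolerate simpler QC rules, which is the basis for the rule selection described in the second step.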

Results and Financial Impact

The implementation of sigma-based QC rules yielded substantial financial improvements, demonstrating the value of a systematic approach to QC optimization [9].

Table 2: Financial Outcomes of Optimized QC Procedures

| Cost Category | Pre-Optimization | Post-Optimization | Reduction | Financial Impact |
|---|---|---|---|---|
| Internal Failure Costs | Baseline | 50% lower [9] | 50% | INR 501,808.08 saved [9] |
| External Failure Costs | Baseline | 47% lower [9] | 47% | INR 187,102.80 saved [9] |
| Total Annual Savings | - | - | - | INR 750,105.27 [9] |

Key Findings:

- Internal Failure Costs: These are costs associated with re-analyzing controls and patient specimens, including reagents, controls, and labor for repeats and reruns. The 50% reduction indicates significantly improved operational efficiency and resource utilization [9].

- External Failure Costs: These represent the expenses of incorrect results reaching physicians, including further confirmatory testing and potential impacts on patient care. The 47% reduction demonstrates enhanced quality and reliability of laboratory outputs [9].

- Methodology Impact: The study highlighted that selective addition of incoming sample variations to the calibration model, while reducing prediction performance slightly, resulted in considerable resource savings, representing an optimal trade-off between quality and cost [9].

The Cost-Benefit Analysis Framework for Laboratory Decision-Making

Cost-benefit analysis (CBA) provides a systematic approach to evaluating QC validation procedures and other laboratory investments by comparing projected costs and benefits [12].

The CBA Process

The CBA process consists of several key steps that enable laboratory managers to make data-driven decisions about their QC strategies [13]:

- Establish Analysis Framework: Define the scope, goals, and metrics for evaluation, ensuring all costs and benefits are measured in consistent monetary units [12].

- Identify Costs and Benefits: Compile comprehensive lists of all relevant cost categories (direct, indirect, intangible) and anticipated benefits [12].

- Assign Monetary Values: Quantify all cost and benefit elements, using historical data, market prices, and estimation techniques for intangible factors [13].

- Calculate Net Present Value (NPV): Account for the time value of money using the formula: NPV = Σ[(Bt - Ct) / (1+i)^t], where Bt is benefit at time t, Ct is cost at time t, i is discount rate [13].

- Analyze Results and Decide: Compare total benefits against total costs, with positive NPV indicating financial viability [13].
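The NPV step can be sketched as follows. The cash flows are hypothetical (an up-front QC investment followed by three years of benefits), and indexing the first period as t = 0 is an assumption; some analyses start at t = 1.

```python
def net_present_value(benefits: list[float], costs: list[float], rate: float) -> float:
    """NPV = sum over t of (B_t - C_t) / (1 + i)^t, with t starting at 0 (assumption)."""
    if len(benefits) != len(costs):
        raise ValueError("benefits and costs must cover the same periods")
    return sum((b - c) / (1.0 + rate) ** t
               for t, (b, c) in enumerate(zip(benefits, costs)))


# Hypothetical project: 50,000 up front, then 5,000/year running cost
# against 25,000/year in avoided failure costs.
costs = [50_000.0, 5_000.0, 5_000.0, 5_000.0]
benefits = [0.0, 25_000.0, 25_000.0, 25_000.0]

npv_7 = net_present_value(benefits, costs, 0.07)
npv_10 = net_present_value(benefits, costs, 0.10)

print(f"NPV at 7%: {npv_7:,.2f}")
print(f"NPV at 10%: {npv_10:,.2f}")
```

With these illustrative numbers the project is viable at a 7% discount rate but not at 10%, which shows why the choice of discount rate should be stated alongside the result.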

Visualizing the Cost-Benefit Relationship in QC Strategy

The following diagram illustrates the logical workflow for conducting a cost-benefit analysis of QC procedures, incorporating the three core cost components:

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing effective QC strategies requires specific materials and reagents designed to ensure analytical accuracy and precision. The following table outlines key research reagent solutions essential for laboratory QC validation.

Table 3: Essential Research Reagent Solutions for QC Validation

| Reagent/Material | Function | Application Context |
|---|---|---|
| Third-Party Quality Controls | Monitor analytical performance independent of manufacturer calibrators [9] | Daily verification of assay precision and accuracy across multiple instruments |
| Calibration Materials | Establish the relationship between instrument response and analyte concentration [9] | Initial method validation and periodic recalibration of analytical systems |
| Spectrophotometric Standards | Provide known absorbance values for verification of spectrophotometer accuracy | Wavelength accuracy and photometric linearity checks in spectroscopic methods |
| Certified Reference Materials | Serve as definitive standards with well-characterized composition and uncertainty | Method validation, trueness verification, and meeting regulatory requirements |
| Lyophilized Quality Controls | Stable, reconstitutable controls for monitoring long-term assay performance [9] | Internal Quality Control (IQC) programs across clinical chemistry parameters |

The systematic categorization and analysis of direct, indirect, and intangible costs provide laboratory managers and researchers with a powerful framework for evaluating QC validation procedures and other strategic investments. The experimental data demonstrates that optimized, sigma-based QC protocols can generate substantial cost savings—up to 50% reduction in internal failure costs and 47% in external failure costs—while maintaining or improving quality outcomes [9]. As laboratories face increasing pressure to deliver accurate results faster and more cost-effectively, a comprehensive understanding of these cost components becomes essential for sustainable operation and continued innovation in drug development and clinical research.

In the competitive landscapes of pharmaceuticals and clinical diagnostics, quality control (QC) validation has evolved from a regulatory necessity to a strategic asset. The rigorous quantification of QC benefits—spanning error reduction, cost savings, and accelerated turnaround times—provides organizations with a critical evidence base for justifying investments in modern quality systems. Research and industry benchmarks now consistently demonstrate that proactive, data-driven QC validation models yield substantial returns, transforming quality from a cost center into a source of competitive advantage [14] [15]. This guide objectively compares the performance of traditional QC procedures against modern, optimized approaches, focusing on experimental data that quantify their impact on operational and financial outcomes.

The fundamental shift in quality management, often termed Quality 4.0, integrates big data, artificial intelligence (AI), and machine learning with traditional quality processes [14]. This integration enables a move from reactive detection to predictive analytics, where errors can be prevented before they occur. Companies that excel in this area document a 23% reduction in operational costs and a 31% faster time-to-market for new products [14]. Furthermore, within agile software development environments, teams that integrate quality assurance and quality control (QAQC) early report a 37% reduction in bug-fixing time and ship products 22% faster with 41% fewer post-release patches [14]. These figures establish a compelling baseline for the quantitative benefits explored in this guide.

Experimental Protocols & Methodologies for QC Evaluation

To objectively compare QC procedures, researchers employ structured experimental protocols that generate quantifiable performance data. The following sections detail two foundational methodologies: the Sigma Metrics Methodology for analytical processes and the Digital Biomarker Impact Model for clinical development.

Sigma Metrics Methodology for Analytical QC

This protocol is widely used in clinical and manufacturing laboratories to quantify analytical performance and design statistically appropriate QC rules. A recent study applied it to 23 routine biochemistry parameters on an autoanalyzer platform [9].

- Primary Objective: To calculate the sigma metric for each analytical process and use it to select an optimal QC procedure that minimizes false rejections while maximizing error detection, thereby reducing costs and improving turnaround time (TAT) [9].

- Key Performance Indicators (KPIs): The core metrics calculated were:

  - Bias%: The inaccuracy of the method, calculated as (Observed Value - Target Value) / Target Value × 100%. The target value was derived from the manufacturer's mean or an External Quality Assessment Scheme (EQAS) [9].

  - CV%: The imprecision of the method, calculated as Standard Deviation / Laboratory Mean × 100% from daily Internal Quality Control (IQC) data [9].

  - Total Allowable Error (TEa%): The maximum error that can be tolerated without affecting the medical utility of a result, defined by standards such as the Clinical Laboratory Improvement Amendments (CLIA) [9].

- Sigma Calculation: The sigma metric for each parameter was determined using the formula: σ = (TEa% - Bias%) / CV% [9].

- QC Validation and Rule Selection: Using software (e.g., Biorad Unity 2.0), researchers characterized existing QC rules and identified candidate rules based on the calculated sigma value. Optimal rules were selected to achieve a high Probability of Error Detection (Ped ≥ 90%) and a low Probability of False Rejection (Pfr ≤ 5%) [9].

- Cost-Benefit Analysis: Finally, internal failure costs (e.g., costs of rerunning tests, reagents, labour for rework) and external failure costs (e.g., costs of incorrect diagnoses, additional patient care) were calculated for both existing and candidate QC procedures. The difference represented the quantified savings [9].
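The bias and imprecision inputs, together with the rule-selection thresholds, can be sketched in Python as below. The IQC readings, target value, and Ped/Pfr figures are hypothetical, and the use of the sample standard deviation is an assumption, since the text does not say which estimator the study used.

```python
import statistics


def bias_percent(observed_mean: float, target: float) -> float:
    """Bias% = (observed - target) / target * 100."""
    return (observed_mean - target) / target * 100.0


def cv_percent(iqc_values: list[float]) -> float:
    """CV% = standard deviation / mean * 100 (sample SD assumed)."""
    return statistics.stdev(iqc_values) / statistics.mean(iqc_values) * 100.0


def meets_design_goals(ped: float, pfr: float) -> bool:
    """Check a candidate QC rule against Ped >= 90% and Pfr <= 5%."""
    return ped >= 0.90 and pfr <= 0.05


# Hypothetical daily IQC readings for one control level and its target value.
iqc = [98.0, 102.0, 100.0, 101.0, 99.0]
target_value = 99.0

bias = bias_percent(statistics.mean(iqc), target_value)
cv = cv_percent(iqc)

print(f"Bias% = {bias:.2f}, CV% = {cv:.2f}")
print("Candidate rule acceptable:", meets_design_goals(ped=0.95, pfr=0.02))
```

In practice the Ped and Pfr values for each candidate rule would come from QC validation software or power function charts rather than being entered by hand.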

Digital Biomarker Impact Model in Clinical Trials

This methodology uses statistical modeling to quantify how digital biomarkers can improve the efficiency and success probability of clinical trials, particularly in drug development for complex diseases like Parkinson's.

- Primary Objective: To quantify the contribution of digitally-enabled measurements (e.g., from wearables) as enrichment tools (for patient selection) and endpoints (for outcome measurement) on clinical study success [16].

- Modeling Framework: A Monte Carlo simulation is run to model the "Probability of Study Success (PoSS)," defined as the probability that a study achieves a p-value below 0.05 for its primary endpoint [16].

- Key Variables: The model focuses on three components of trial design:

  - The choice of endpoint (e.g., a digital vs. a traditional clinical endpoint).

  - The choice of study population (e.g., enriched using a digital biomarker).

  - The sample size per treatment arm [16].

- Quantifying Impact: The model illustrates the magnitude of improvement these technologies provide. For example, in a Duchenne muscular dystrophy trial, using a wearable-derived digital endpoint called stride velocity 95th centile (SV95C) was estimated to reduce the required pivotal trial sample size by 70% compared to using traditional endpoints like the 6-minute walk test [16]. This directly translates to massive cost savings and faster trial completion.
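To make the PoSS idea concrete, the sketch below estimates it for a simple two-arm trial by simulation. This is a deliberately simplified stand-in, not the model used in [16]: it assumes normally distributed outcomes, a two-sided z-test approximation, and hypothetical effect sizes and sample sizes.

```python
import random
from statistics import NormalDist, mean, stdev


def probability_of_study_success(effect: float, sd: float, n_per_arm: int,
                                 n_sims: int = 1000, alpha: float = 0.05,
                                 seed: int = 0) -> float:
    """Fraction of simulated trials whose primary endpoint reaches p < alpha."""
    rng = random.Random(seed)
    std_normal = NormalDist()
    successes = 0
    for _ in range(n_sims):
        control = [rng.gauss(0.0, sd) for _ in range(n_per_arm)]
        treated = [rng.gauss(effect, sd) for _ in range(n_per_arm)]
        # Standard error of the difference in means (unequal-variance form).
        se = (stdev(control) ** 2 / n_per_arm + stdev(treated) ** 2 / n_per_arm) ** 0.5
        z = (mean(treated) - mean(control)) / se
        p_value = 2.0 * (1.0 - std_normal.cdf(abs(z)))  # two-sided, normal approximation
        if p_value < alpha:
            successes += 1
    return successes / n_sims


# Hypothetical standardized effect of 0.5 at two sample sizes per arm.
poss_small = probability_of_study_success(effect=0.5, sd=1.0, n_per_arm=20)
poss_large = probability_of_study_success(effect=0.5, sd=1.0, n_per_arm=80)

print(f"PoSS with 20 per arm: {poss_small:.2f}")
print(f"PoSS with 80 per arm: {poss_large:.2f}")
```

Under these assumptions, an endpoint that measures the treatment effect with less noise (a smaller `sd` for the same `effect`) raises PoSS at a fixed sample size, which is the mechanism by which a more sensitive digital endpoint can reduce the number of participants required.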

Diagram 1: Experimental workflows for comparing QC procedures, showing the parallel paths of the Sigma Metrics and Digital Biomarker methodologies.

Comparative Performance Data & Analysis

The following tables synthesize quantitative data from experimental studies, providing a clear comparison of performance outcomes between traditional and optimized QC procedures.

Financial and Operational Impact of Sigma-Based QC

A one-year retrospective study in a clinical biochemistry lab analyzed 23 parameters, comparing existing multi-rules with a new, sigma-based candidate rule. The financial results are summarized below [9].

Table 1: Cost-Benefit Analysis of Sigma-Based QC Rules in a Clinical Lab [9]

| Cost Category | Existing QC Rule (INR) | Candidate QC Rule (INR) | Absolute Savings (INR) | Relative Savings |
|---|---|---|---|---|
| Internal Failure Costs | 1,003,616.16 | 501,808.08 | 501,808.08 | 50% |
| External Failure Costs | 400,205.07 | 213,102.27 | 187,102.80 | 47% |
| Total Annual Costs | 1,403,821.23 | 653,715.96 | 750,105.27 | 53% |

Key Findings: The implementation of sigma-based rules led to dramatic cost reductions. Internal failure costs, which include reagents, controls, and labour for rerunning tests due to false rejections, were cut in half. External failure costs, which are associated with the more severe consequences of undetected errors (e.g., misdiagnosis, additional patient care), were reduced by 47% [9]. This demonstrates that optimized QC is not just about internal efficiency but directly impacts patient care and associated costs.
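The savings columns follow directly from the existing and candidate costs, as the short sketch below shows. It uses the figures reported in the table [9]; each row is evaluated on its own reported values, and the total row is taken as reported rather than recomputed from the component rows.

```python
# (existing cost, candidate cost) in INR, as reported in the table [9].
reported_costs = {
    "internal_failure": (1_003_616.16, 501_808.08),
    "external_failure": (400_205.07, 213_102.27),
    "total_annual": (1_403_821.23, 653_715.96),
}


def savings(existing: float, candidate: float) -> tuple[float, float]:
    """Return (absolute savings, relative savings as a fraction of existing cost)."""
    absolute = existing - candidate
    return absolute, absolute / existing


results = {name: savings(*pair) for name, pair in reported_costs.items()}
for name, (absolute, relative) in results.items():
    print(f"{name}: INR {absolute:,.2f} saved ({relative:.0%})")
```

The same two-line calculation can be applied to any laboratory's own failure-cost worksheets once existing and candidate QC costs have been estimated.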

Broader Industry Performance Benchmarks

Beyond the clinical lab, data from manufacturing and software development highlight the cross-industry value of modern QC and QA practices.

Table 2: Cross-Industry Performance Benchmarks for Modern QC/QA Practices [14]

| Industry / Domain | QC Intervention | Quantitative Benefit | Impact Context |
|---|---|---|---|
| Agile Software Development | Integrated QAQC | 15% higher success rates; 37% less bug-fixing time | Compared to teams using traditional approaches |
| General Manufacturing | Proactive QC Systems | $4.30 saved for every $1 spent on prevention | Cost ratio of proactive vs. reactive fixes |
| Automotive Manufacturing | Real-time Monitoring | 52% fewer warranty claims | Compared to manual inspections |
| Pharmaceuticals | Predictive & Proactive Methods | 67% reduction in batch rejections | Annual performance data |
| Electronics Production | AI-Powered Inspection + Engineer Review | 40% reduction in defects | Case study result |

The data suggest that the benefits of modern QC extend across industries. The electronics case study, which combined AI with human expertise, is a prime example of the hybrid approach championed by Quality 4.0 [14]. Furthermore, the high return on prevention spending ($4.30 saved per $1 spent) makes a compelling financial case for investing in advanced QC systems [14].

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful experimentation in QC validation relies on a set of fundamental tools and materials. The following table details key items referenced in the featured studies.

Table 3: Essential Research Reagents and Solutions for QC Experiments

| Item Name | Function & Application | Example from Research |
|---|---|---|
| Third-Party Assayed Controls | Used to independently verify analyzer accuracy and calculate Bias%. These controls have predefined target values. | Bio-Rad Lyphochek clinical chemistry controls were used to determine Bias% for 23 parameters [9]. |
| QC Validation / Sigma Software | Software platforms that automate the calculation of sigma metrics and recommend optimal, cost-effective QC rules. | Bio-Rad Unity 2.0 software was used to identify candidate QC rules based on sigma metrics [9]. |
| Digital Wearable Sensors | Used in clinical trials to collect objective, continuous physiological data (digital biomarkers) from patients in real-world settings. | Wearable-derived stride velocity 95th centile (SV95C) was used as a digital endpoint in a Duchenne muscular dystrophy trial [16]. |
| Monte Carlo Simulation Platform | Statistical software used to model complex systems, such as clinical trials, to quantify the probability of success under different design scenarios. | The Captario SUM platform was used to model the impact of digital biomarkers on trial success in Parkinson's disease [16]. |
| Experiment Tracking & Comparison Tools | Platforms that log all experiment metadata (parameters, metrics, outcomes) to enable consistent comparison of different training runs or QC strategies. | Neptune.ai is used by researchers to track, group, and compare thousands of model training runs to identify best-performing configurations [17]. |

The experimental data presented indicate that modern, data-driven QC validation procedures can significantly outperform traditional methods. The key takeaways are:

- Quantifiable Financial Returns: Organizations can achieve substantial annual savings, as demonstrated by the 53% reduction in total failure costs from the clinical lab study [9]. The return on investment is accelerated by reducing both internal waste and costly external errors.

- Enhanced Operational Efficiency: Optimized QC directly improves key performance indicators like turnaround time (TAT) by reducing false rejections and unnecessary reruns [14] [9].

- Increased Project Success Rates: In fields like drug development, the use of advanced tools like digital biomarkers can dramatically reduce sample size requirements and increase the probability of phase 3 success, de-risking the entire R&D pipeline [16].

To capture these benefits, organizations should adopt a structured implementation workflow: First, scope the change and perform a quick risk assessment to determine data needs. Second, conduct focused studies (e.g., using sigma metrics or simulations) to generate quantitative evidence for the new procedure. Third, choose the appropriate reporting and implementation path, ensuring regulatory compliance. Finally, file, monitor, and track post-implementation results to confirm the expected benefits and foster a culture of continuous improvement [18] [15]. By following this evidence-based approach, researchers, scientists, and drug development professionals can transform their quality control processes from a cost center into a powerful engine for efficiency, reliability, and value creation.

In the pharmaceutical and biotech industries, quality failure costs are the expenses incurred when products or services fail to meet quality standards. These costs are categorized within the Prevention, Appraisal, and Failure (PAF) model, a fundamental quality cost framework [19] [20]. Failure costs, the focus of this analysis, are themselves divided into two distinct types: internal failure costs, which are identified before a product reaches the customer (e.g., rework and scrap), and external failure costs, which arise after the product has been shipped (e.g., warranty claims and recalls) [19] [20] [21]. For drug development professionals, understanding this distinction is not merely an accounting exercise; it is critical for performing accurate cost-benefit analyses of Quality Control (QC) validation procedures. A robust validation strategy invests in prevention and appraisal to avoid the substantially higher costs, both financial and reputational, associated with internal and particularly external failures [19] [22].

Defining and Comparing Internal and External Failure Costs

Internal and external failure costs represent different stages and magnitudes of quality failure impact. Their comparison is essential for strategic resource allocation.

Internal Failure Costs

Internal failure costs are those incurred to remedy defects discovered before the product or service is delivered to the customer [20]. These costs occur when work outputs fail to reach design quality standards and are detected by internal controls [19] [23].

- Rework or Rectification: The correction of defective material or errors to make them fit for use [19] [20]. In electronics, rework introduces risks like thermal stress and contamination that can compromise long-term reliability [24]. In construction, rework can cost 4-6% of the total project value [25].

- Scrap: Defective product or material that cannot be repaired, used, or sold [19] [20].

- Failure Analysis: The activity required to establish the causes of internal product or service failure [20].

- Waste: Performance of unnecessary work or holding of stock as a result of errors, poor organization, or communication [20].

External Failure Costs

External failure costs are incurred to remedy defects discovered after the customer has received the product or service [26] [20]. These are often the most severe category of quality costs.

- Complaints and Returns: All work and costs associated with handling and servicing customer complaints, including the processing of returned goods [26] [20].

- Warranty Claims: The costs associated with repairing or replacing failed products under warranty agreements [26] [21].

- Product Recalls: The significant expenses related to identifying, retrieving, and managing defective products from the market, including logistics and communications [26] [21].

- Legal Costs and Liabilities: Costs arising from lawsuits, settlements, or regulatory fines due to product defects causing harm [26] [21].

- Loss of Reputation and Sales: The long-term financial impact of a damaged brand image, reduced customer loyalty, and lost market share [26] [21]. This is a potentially devastating but difficult-to-quantify cost.

Comparative Analysis

The table below summarizes the key characteristics of internal and external failure costs for easy comparison.

Table 1: Comparative Analysis of Internal and External Failure Costs

| Aspect | Internal Failure Costs | External Failure Costs |
|---|---|---|
| Detection Point | Within the organization, before delivery to the customer [20] [23] | After delivery to the customer [26] [20] |
| Primary Examples | Scrap, rework, failure analysis [19] [20] | Warranty claims, product recalls, complaint handling [26] [20] |
| Financial Impact | Typically lower and more quantifiable [21] | Often substantially higher; can be catastrophic [21] |
| Reputational Impact | Generally contained internally | Severe, damaging brand image and customer trust [26] [21] |
| Containment | Easier to contain and manage | More complex and costly to contain (e.g., market-wide recalls) [26] |

A critical principle is that external failure costs tend to be substantially higher than internal failure costs [21]. While internal failures like rework represent a direct financial loss, external failures compound direct costs like recalls with profound indirect costs like loss of customer trust and potential litigation [26] [21]. One analysis suggests that in many companies, the total cost of poor quality can be 10-15% of operations, with effective quality improvement programs capable of substantially reducing this figure [20].

The Cost-Benefit Analysis of QC Validation and Method Maintenance

In drug development, QC validation is a primary prevention cost, while ongoing method maintenance is a form of appraisal cost. Investing in these areas is a strategic decision to minimize the risk of far greater internal and external failure costs.

The "Fit-for-Purpose" Validation Strategy

A rational approach to method validation is the "fit-for-purpose" strategy, which aligns the rigor and resource investment of validation with the stage of product development and the intended use of the method [22]. This strategy optimizes the cost-benefit ratio of prevention activities.

- Early-Stage Development: Validation is simpler and more provisional, as less is known about the product and processes. The goal is to be "fit-for-purpose" without over-investing in a product that may not advance [22].

- Commercialization (BLA Stage): A full validation according to ICH Q2(R1) is required. The higher investment is justified by the severe external failure costs (e.g., product recalls, regulatory actions) that a validated method helps prevent [22].

- Generic Validation for Platform Assays: For non-product-specific methods (e.g., for monoclonal antibodies), a single validation on representative material can be applied to similar products. This approach saves considerable resources while maintaining quality standards, offering an excellent return on investment [22].

Cost-Benefit of Model Maintenance

The principle of cost-benefit extends to the ongoing maintenance of analytical methods and calibration models. A 2021 study highlights a framework for cost-benefit analysis of calibration model maintenance [27]. The research evaluated strategies like continuously adding new samples to the calibration set versus selectively adding only samples with new variations.

- Finding: Continuously adding samples improved prediction performance and model robustness, but at a higher cost. Selectively adding samples reduced prediction performance but saved considerable resources, making it the more cost-efficient strategy in some contexts [27].

- Implication: For researchers, this demonstrates that the most rigorous maintenance is not always the most cost-effective. The optimal strategy depends on a balance between the required performance and the cost of resources, always weighed against the potential failure costs of an under-performing model [27].

Experimental Protocol: A Spiking Study for SEC Validation

A key experimental protocol in biopharmaceutical QC is the spiking study, used to validate impurity assays like Size-Exclusion Chromatography (SEC) for accuracy [22].

1. Objective: To determine the accuracy of a SEC method in quantifying aggregates and low-molecular-weight (LMW) impurities in a biological product by assessing recovery of known, spiked amounts of these species [22].

2. Methodology:

- Generation of Spiking Material: Stable aggregates and LMW species are generated. This can be achieved through controlled chemical reactions (e.g., oxidation for aggregates, reduction for LMW species) or by collecting fractions from a purification process [22].

- Sample Preparation: The generated impurity materials are spiked into the main product at multiple, known concentration levels (e.g., low, medium, high) to create a series of test samples [22].

- Analysis and Calculation: All spiked samples are analyzed using the SEC method. The observed percentage of impurities (from the chromatogram) is compared to the expected percentage based on the spike [22].

- Recovery Calculation: Accuracy is measured by calculating the percentage recovery: Recovery (%) = (Observed % Impurity / Expected % Impurity) × 100. Good accuracy is typically demonstrated by recoveries of 90-100% for aggregates and 80-100% for LMW species [22].

3. Application in Method Selection: This study can reveal critical performance differences between methods. As cited, two SEC methods may both pass a simple linearity study, but a spiking study can show that one method has a significantly more sensitive and accurate response to actual impurities, making it the more reliable and cost-effective choice in the long run by reducing the risk of incorrect results [22].
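The recovery calculation in the protocol above can be sketched in a few lines of Python. This is a minimal illustration, not the cited study's procedure: the spike levels and observed values are hypothetical, the names `SpikeResult`, `recovery_pct`, and `passes` are invented for the example, and the acceptance windows are simply the ranges quoted in the text.

```python
from dataclasses import dataclass

# Acceptance windows (percent recovery) as quoted in the text.
ACCEPTANCE = {"aggregate": (90.0, 100.0), "lmw": (80.0, 100.0)}


@dataclass
class SpikeResult:
    species: str         # "aggregate" or "lmw"
    expected_pct: float  # impurity % expected from the spike
    observed_pct: float  # impurity % read from the SEC chromatogram


def recovery_pct(observed_pct: float, expected_pct: float) -> float:
    """Recovery (%) = observed / expected * 100."""
    if expected_pct <= 0:
        raise ValueError("expected impurity level must be positive")
    return observed_pct / expected_pct * 100.0


def passes(result: SpikeResult) -> bool:
    """True if the recovery falls inside the window for that species."""
    low, high = ACCEPTANCE[result.species]
    recovery = recovery_pct(result.observed_pct, result.expected_pct)
    return low <= recovery <= high


# Hypothetical spike levels, for illustration only.
results = [
    SpikeResult("aggregate", expected_pct=2.0, observed_pct=1.9),
    SpikeResult("aggregate", expected_pct=5.0, observed_pct=4.2),
    SpikeResult("lmw", expected_pct=1.0, observed_pct=0.85),
]

for r in results:
    rec = recovery_pct(r.observed_pct, r.expected_pct)
    print(f"{r.species}: recovery {rec:.1f}% -> {'pass' if passes(r) else 'fail'}")
```

Running the same check on data from two candidate SEC methods gives a direct, like-for-like view of which method recovers spiked impurities more faithfully.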

Essential Research Reagent Solutions for QC

The execution of robust QC experiments relies on specific reagents and materials. The following table details key solutions used in the featured fields.

Table 2: Key Research Reagent Solutions for Quality Control Experiments

| Research Reagent / Material | Function in QC Experiments |
|---|---|
| Forced-Degradation Samples | Chemically or physically stressed samples used to generate impurities (aggregates, LMW species) for specificity and accuracy studies, such as SEC spiking [22]. |
| Process-Related Impurities | Isolated impurities collected from purification process cut-offs, used as reference materials in assay validation [22]. |
| Calibration Standards | Characterized materials with known properties used to build and maintain multivariate calibration models for process analytical technology (PAT) [27]. |
| Ionic Contamination Standards | Solutions with known ionic concentrations used to calibrate Ion Chromatography (IC) systems for testing board cleanliness and detecting corrosive residues [24]. |
| Surface Insulation Resistance (SIR) Test Coupons | Standardized test boards used to evaluate the electrical reliability of assemblies and detect the presence of conductive contaminants that could cause failure [24]. |

Strategic Pathways: Quality Investment and Cost Avoidance

The relationship between quality expenditures and failure costs is not linear; strategic investment in prevention and appraisal causes a disproportionate reduction in costly failures. The following diagram illustrates this core cost-benefit relationship and the decision pathways in QC method strategy.

Diagram 1: Strategic Pathways in Quality Cost Management. This diagram shows how investments in Prevention and Appraisal costs influence strategic decisions regarding QC validation and maintenance, ultimately driving a cost-benefit analysis to balance upfront investment against the risk of internal and external failure costs.

For drug development professionals, the meticulous understanding of internal versus external failure costs provides a powerful framework for justifying investments in quality. While internal failures like rework represent a clear, quantifiable loss, the data and case studies confirm that external failures such as recalls, litigation, and reputational damage carry a vastly higher potential cost [26] [21]. The experimental protocols and cost-benefit analyses of validation strategies demonstrate that a "fit-for-purpose" but rigorous approach to QC is not an expense but a critical safeguard [22] [27]. By strategically allocating resources to robust prevention and appraisal activities, such as method validation and maintenance, organizations can directly reduce the frequency and severity of both internal and external failure costs, thereby protecting patients, ensuring regulatory compliance, and securing long-term profitability.

The Role of CBA in Strategic Decision-Making for Drug Development

Cost-Benefit Analysis (CBA) serves as a systematic analytical framework for evaluating the economic viability of projects, programs, or policies by comparing total expected costs against total anticipated benefits [1]. In the context of drug development, this methodology transforms complex strategic decisions into quantifiable assessments that transcend personal bias and organizational politics. The fundamental metric in CBA is the Benefit-Cost Ratio (BCR), calculated by dividing the present value of benefits by the present value of costs [1]. A BCR exceeding 1.0 indicates that benefits surpass costs, signaling a project that delivers positive economic value.

The drug development landscape faces increasing pressure to justify investments not only in terms of financial return but also broader public health value. Regulatory bodies are updating their CBA frameworks to address contemporary priorities, including accelerated therapy development, patient-centric trial designs, and efficient resource allocation [1]. For researchers, scientists, and drug development professionals, mastering CBA principles provides an evidence-based foundation for strategic portfolio decisions, resource allocation, and stakeholder communications, ensuring that limited resources are directed toward developments that maximize both economic and therapeutic value.

CBA Methodologies and Quantitative Frameworks

The Core CBA Process

A robust, defensible CBA follows a structured methodology with seven essential steps [1]:

- Step 1: Define Project Scope and Baseline - Clearly articulate project boundaries, stakeholders, and success criteria, establishing what would happen if no action is taken.

- Step 2: Identify and Categorize Costs and Benefits - Comprehensively identify direct costs, indirect benefits, revenue increases, operational cost savings, productivity improvements, risk reduction, and environmental benefits.

- Step 3: Monetize Costs and Benefits - Transform identified elements into measurable monetary values using market data, shadow pricing, or accepted proxies like the Social Cost of Carbon.

- Step 4: Apply Discount Rates - Convert future costs and benefits to present values using appropriate discount rates (e.g., 3-7% depending on project type and agency guidelines).

- Step 5: Calculate BCR, NPV, and IRR - Apply established formulas to determine key financial metrics, where BCR = Present Value of Benefits ÷ Present Value of Costs.

- Step 6: Conduct Sensitivity and Scenario Analysis - Test how variations in assumptions affect results through sensitivity analysis, scenario modeling, or Monte Carlo simulations.

- Step 7: Compile and Report Findings - Present methodology, results, assumptions, and limitations transparently in formal reports.
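Steps 4 to 6 lend themselves to a short numerical sketch. The Python below discounts yearly cost and benefit streams, computes BCR and NPV, and repeats the calculation across the 3-7% discount-rate range mentioned in Step 4 as a crude sensitivity check. The cash flows are hypothetical and the function names are illustrative; IRR is omitted to keep the example small.

```python
def present_value(cash_flows, rate):
    """Discount yearly amounts to present value; index 0 is year 0."""
    return sum(cf / (1.0 + rate) ** year for year, cf in enumerate(cash_flows))


def bcr_and_npv(benefits, costs, rate):
    """Return (benefit-cost ratio, net present value) at a discount rate."""
    pv_benefits = present_value(benefits, rate)
    pv_costs = present_value(costs, rate)
    return pv_benefits / pv_costs, pv_benefits - pv_costs


# Hypothetical project: an upfront validation investment followed by
# running costs, with benefits arriving from year 1 onward.
costs = [100_000, 10_000, 10_000, 10_000]
benefits = [0, 50_000, 50_000, 50_000]

# Simple sensitivity analysis over the discount rate.
for rate in (0.03, 0.05, 0.07):
    bcr, npv = bcr_and_npv(benefits, costs, rate)
    print(f"rate={rate:.0%}  BCR={bcr:.3f}  NPV={npv:,.0f}")
```

A BCR that stays above 1.0 across the plausible range of discount rates is a stronger result than one that clears the threshold only at the most favourable rate.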

The following workflow diagram illustrates the strategic application of CBA in drug development decision-making:

Advanced CBA Applications in Healthcare Settings

Beyond basic financial calculations, modern CBA in drug development incorporates sophisticated elements that reflect the sector's complexities. Distributional weights represent a significant evolution in CBA methodology, assigning higher value to benefits received by disadvantaged populations [1]. For instance, a health intervention benefiting low-income communities may receive a distributional weight of 1.5, effectively amplifying its impact in the overall analysis [1]. This approach aligns with regulatory trends emphasizing equitable healthcare access.

Furthermore, CBA now systematically integrates social and environmental costs through standardized valuation metrics. The Social Cost of Carbon (SCC), currently valued at approximately $190 per metric ton in federal analyses, allows drug developers to quantify environmental impacts of manufacturing and distribution processes [1]. A project that reduces emissions by 50,000 tons thus yields a quantified benefit of $9.5 million in a CBA [1]. Such comprehensive costing enables more socially responsible portfolio decisions.
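The arithmetic behind these two adjustments is simple enough to show directly. The sketch below uses the figures quoted in the text (an SCC of $190 per metric ton and a distributional weight of 1.5); the function names and the $1 million unweighted benefit are hypothetical.

```python
SCC_USD_PER_TON = 190.0  # Social Cost of Carbon value quoted in the text


def emissions_benefit(tons_avoided, scc=SCC_USD_PER_TON):
    """Monetized benefit of avoided emissions."""
    return tons_avoided * scc


def weighted_benefit(benefit, weight):
    """Apply a distributional weight to a monetized benefit."""
    return benefit * weight


# 50,000 tons avoided at $190 per ton, as in the example above.
carbon_benefit = emissions_benefit(50_000)

# Hypothetical $1M health benefit to a low-income community, weighted by 1.5.
equity_benefit = weighted_benefit(1_000_000, 1.5)

print(f"Carbon benefit: ${carbon_benefit:,.0f}")
print(f"Weighted health benefit: ${equity_benefit:,.0f}")
```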

CBA of QC Validation Procedures in Laboratory Medicine

Experimental Protocol for QC Validation Assessment

A rigorous methodology for evaluating the cost-benefit analysis of quality control (QC) validation procedures involves the following experimental protocol, adapted from clinical laboratory practice [9]:

Sigma Metric Calculation: For each analytical parameter, calculate sigma metrics using the formula: Sigma (σ) = (TEa% - Bias%) / CV%, where TEa represents total allowable error, Bias% indicates inaccuracy, and CV% represents imprecision [9]. These calculations should be performed over a substantial period (e.g., one year) to ensure statistical reliability.

QC Procedure Optimization: Apply appropriate QC rules (e.g., Westgard Sigma Rules) using specialized software (e.g., Bio-Rad Unity 2.0) to identify optimal control rules and numbers of control measurements based on the calculated sigma metrics [9]. The selection criteria should prioritize procedures with high probability of error detection (Ped > 90%) and low probability of false rejection (Pfr < 5%).

Cost Assessment: Compute internal failure costs (false rejection test costs, false rejection control costs, rework labor costs) and external failure costs (patient rerun costs, extra patient care costs due to undetected errors) for both existing and candidate QC procedures using standardized worksheets [9]. These should incorporate all relevant cost components: reagents, controls, calibrators, technologist time, and potential impacts on patient care.

Benefit Quantification: Calculate financial savings from reduced reagent consumption, fewer control materials, decreased repeat analyses, and reduced labor requirements after implementing optimized QC procedures [9]. Compare these against implementation costs to determine net benefits and payback period.

Statistical Analysis: Perform comparative analysis of false rejection rates, error detection rates, and financial metrics before and after implementation of new QC procedures, using both relative and absolute savings calculations to determine statistical and practical significance [9].
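The absolute and relative savings named in the protocol can be computed as in the sketch below. The per-category inputs are the existing and candidate failure costs reported for the clinical lab study [9]. The implementation cost used for the payback estimate is a hypothetical placeholder, since the source does not report one, and the function names are illustrative.

```python
def savings(existing_cost, candidate_cost):
    """Return (absolute savings, relative savings as a fraction)."""
    absolute = existing_cost - candidate_cost
    return absolute, absolute / existing_cost


def payback_years(implementation_cost, annual_savings):
    """Years needed for annual savings to cover a one-off cost."""
    return implementation_cost / annual_savings


# Failure costs in INR (existing rule, candidate rule) from the study [9].
internal_abs, internal_rel = savings(1_003_616.16, 501_808.08)
external_abs, external_rel = savings(400_205.07, 213_102.27)

print(f"Internal: INR {internal_abs:,.2f} saved ({internal_rel:.0%})")
print(f"External: INR {external_abs:,.2f} saved ({external_rel:.0%})")

# Hypothetical one-off implementation cost (software, training) in INR,
# set against the total annual savings reported by the study.
HYPOTHETICAL_IMPLEMENTATION_COST = 150_000.0
REPORTED_TOTAL_ANNUAL_SAVINGS = 750_105.27
print(f"Payback: {payback_years(HYPOTHETICAL_IMPLEMENTATION_COST, REPORTED_TOTAL_ANNUAL_SAVINGS):.2f} years")
```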

Quantitative Results from QC Optimization Implementation

The table below summarizes financial outcomes from implementing CBA-optimized QC procedures in a clinical biochemistry laboratory setting, demonstrating substantial cost savings:

Table 1: Financial Outcomes of QC Optimization in Clinical Laboratory

| Cost Category | Savings Amount (INR) | Savings Percentage | Primary Savings Drivers |
|---|---|---|---|
| Total Annual Savings | 750,105.27 | - | Combined internal & external failure cost reduction [9] |
| Internal Failure Costs | 501,808.08 | 50% | Reduced reagent use, fewer repeats, labor efficiency [9] |
| External Failure Costs | 187,102.80 | 47% | Fewer erroneous results impacting patient care [9] |

These financial outcomes demonstrate that strategically planned quality control techniques in clinical laboratories can achieve significant cost reductions while maintaining or improving quality outcomes [9]. The implementation of Six Sigma methodology in QC validation created a framework for optimizing resource utilization while preserving analytical quality, with savings realization dependent on testing volume and frequency of QC operations [9].

Research Reagent Solutions for QC Validation Studies

Table 2: Essential Research Reagents and Materials for QC Validation Experiments

| Reagent/Material | Function in QC Validation | Application Example |
|---|---|---|
| Third-Party Assayed Controls | Provides independent target values for bias calculation | Bio-Rad Lyphochek controls used for sigma metric computation [9] |
| QC Validation Software | Applies statistical rules and calculates performance metrics | Bio-Rad Unity 2.0 for implementing Westgard Sigma Rules [9] |
| Precision Materials | Determines analytical imprecision (CV%) | Commercial control materials with established stability [9] |
| External Quality Assurance | Provides peer group comparison for accuracy assessment | External Quality Assessment Scheme (EQAS) materials [9] |

CBA Applications in Broader Drug Development Context

Regulatory Science and Accelerated Development Pathways

Cost-Benefit Analysis plays a critical role in optimizing regulatory science initiatives and drug development tools. The Critical Path Institute (C-Path), a public-private partnership, exemplifies how strategic resource allocation can accelerate therapy development through quantitative assessment of development methodologies [28] [29]. C-Path's various clinical trial simulation tools and disease databases represent strategic investments whose benefits include reduced development timelines, optimized trial designs, and more efficient regulatory pathways [28].

Recent regulatory innovations like the Critical Path Innovation Meetings (CPIMs) provide a forum for discussing novel drug development tools, where CBA frameworks help evaluate their potential impact [30]. These meetings have addressed topics ranging from artificial intelligence for clinical trial efficiency to digital health technologies for endpoint measurement, all requiring careful assessment of implementation costs against potential benefits in accelerated development [30].

Strategic Budgeting and Financial Planning in Drug Development

Modern financial planning methodologies in healthcare and drug development increasingly incorporate CBA principles through several advanced approaches:

Zero-Based Budgeting (ZBB): This approach requires justifying all expenses for each new period rather than simply adjusting previous budgets, potentially reducing costs by 20-40% through elimination of unnecessary expenditures [31]. ZBB establishes cost awareness and accountability, enabling more informed financial decisions aligned with organizational goals.

Rolling Forecasts: These provide flexibility by allowing organizations to update financial projections regularly (typically quarterly or monthly) based on real-time data and trends [32] [31]. This enables drug development organizations to respond swiftly to unforeseen challenges in clinical trials, changes in regulatory requirements, or shifts in development priorities.

Automated Budgeting Tools: These enhance accuracy and efficiency, with finance teams saving an average of 500 hours annually through automated processes while reducing human error [31]. Automation streamlines data collection and analysis, providing real-time insights into financial performance across complex drug development portfolios.

The following diagram illustrates how these modern financial approaches integrate CBA principles into the drug development budgeting process:

Cost-Benefit Analysis provides an indispensable framework for strategic decision-making throughout the drug development continuum. From optimizing quality control procedures in analytical laboratories to informing portfolio decisions and regulatory strategy, CBA transforms complex trade-offs into quantifiable assessments. The methodology's evolution to incorporate social values, environmental impacts, and distributional equity reflects the expanding responsibilities of drug developers to multiple stakeholders.

As healthcare systems face increasing cost pressures and demands for demonstrated value, the rigorous application of CBA principles will become even more critical. By systematically evaluating both costs and benefits across financial, clinical, and social dimensions, drug development professionals can allocate scarce resources to maximize therapeutic innovation while maintaining fiscal sustainability. The integration of advanced analytical approaches—including sensitivity analysis, scenario modeling, and real-time data integration—will further enhance CBA's value in guiding the high-stakes decisions that characterize modern drug development.

From Theory to Lab Bench: Methodologies for Quantifying QC Value

Applying Six Sigma Metrics (σ) for QC Procedure Validation

This guide compares the performance of traditional Quality Control (QC) procedures against those optimized with Six Sigma metrics, providing an objective analysis grounded in experimental data and industry case studies. The evaluation is framed within a broader research thesis on the cost-benefit analysis of different QC validation procedures.

Six Sigma is a data-driven methodology that uses statistical analysis to reduce defects and process variation, with a performance target of no more than 3.4 defects per million opportunities [33]. In the context of QC procedure validation, it provides a rigorous framework to quantify analytical performance and tailor control rules accordingly, moving from a one-size-fits-all approach to a risk-based strategy [34] [35]. The core metric, the Sigma metric (σ), is calculated by comparing the inherent precision (CV%), accuracy (Bias%), and the required quality specification—the Total Allowable Error (TEa)—for a given test: σ = (TEa% - Bias%) / CV% [9] [35].

A 2025 global survey of QC practices highlights the critical need for such optimization, revealing that one-third of laboratories experience out-of-control events every day, and a majority (80%) still use some form of the overly sensitive 2SD control rule, which contributes to high false rejection rates [36]. Implementing Six Sigma metrics directly addresses these inefficiencies by balancing the probability of error detection (Ped) with a low probability of false rejection (Pfr), leading to more robust and cost-effective quality systems [9].
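A rough calculation shows why a 2SD limit produces so many false rejections. The sketch below assumes in-control results are Gaussian and that control measurements in a run are independent. Under those assumptions, the chance that at least one of N controls falls outside ±k SD is 1 - (1 - p)^N, where p is the two-sided tail probability. The function names are illustrative.

```python
import math


def single_control_tail(k):
    """Two-sided probability that one in-control Gaussian result lies beyond +/- k SD."""
    return math.erfc(k / math.sqrt(2.0))


def false_rejection_probability(k, n_controls):
    """Probability that at least one of n independent in-control results exceeds +/- k SD."""
    return 1.0 - (1.0 - single_control_tail(k)) ** n_controls


# Compare common single-rule limits with two control measurements per run.
for k in (2.0, 3.0, 3.5):
    pfr = false_rejection_probability(k, n_controls=2)
    print(f"1:{k:g}s, N=2 -> Pfr = {pfr:.2%}")
```

With two controls per run, the 2SD limit rejects roughly 9% of in-control runs under these assumptions, while wider limits reject well under 1%.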

Performance Comparison: Traditional vs. Six Sigma QC

The table below summarizes a head-to-head performance comparison based on experimental data from clinical laboratory studies.

Table 1: Performance Comparison of Traditional vs. Six Sigma-Based QC Procedures

| Performance Metric | Traditional QC (e.g., 1:2s rule or multi-rules) | Six Sigma-Optimized QC | Data Source & Context |
|---|---|---|---|
| False Rejection Rate (Pfr) | Higher (e.g., 5-10% range for multi-rules) | Significantly Lower (e.g., <0.5% for a 1:3.5s rule) | Laboratory case study showing reduced nuisance alarms [35] |
| Error Detection (Ped) | Inconsistent; may be excessive for stable assays | Tailored to assay performance; high Ped for low-σ assays | Principle of selecting QC rules based on Sigma score [9] [35] |
| Annual QC Material Consumption | Baseline | 75% reduction | Case study: Sint Antonius Hospital over 4 years [35] |
| Annual Cost Savings (Internal & External Failures) | Baseline | 47-50% reduction; Absolute saving of INR 750,105 (~$9,000 USD) | Clinical chemistry lab study of 23 parameters [9] |
| Labor Efficiency | High time spent troubleshooting false alarms | Estimated 10 minutes saved per avoided rerun | Operational time analysis from a laboratory case study [35] |

Analysis of Comparative Data

The data demonstrates that Six Sigma-optimized QC procedures deliver superior outcomes across all key metrics. The most significant benefits are observed in cost reduction and operational efficiency. The 75% reduction in QC material consumption [35] directly translates to lower reagent costs and is complemented by a near 50% reduction in the costs associated with internal rework and external failures [9]. Furthermore, by drastically reducing the false rejection rate, technologists spend less time on unnecessary troubleshooting, which improves workflow and staff morale [35].

Experimental Protocols for Sigma Metric Validation

The following workflow details the standard methodology for validating and implementing a Sigma-based QC procedure. This protocol is synthesized from established laboratory case studies [9] [35].

Diagram 1: Sigma Metric Validation Workflow

Detailed Experimental Methodology

Step 1: Define Quality Requirement (TEa) The first step is to establish the quality specification for the test, expressed as Total Allowable Error (TEa). This can be sourced from regulatory bodies like CLIA, from biological variation databases, or from peer-reviewed literature [9] [35]. TEa defines the maximum error that can be tolerated without affecting clinical utility.

Step 2: Collect Performance Data

Gather data to estimate the assay's accuracy (Bias%) and precision (CV%).

- Bias% Calculation: Bias% = (|Observed Value - Target Value| / Target Value) × 100. The target value can be derived from the mean of a reference method, peer group mean, or results from an External Quality Assessment (EQA) scheme [9].

- CV% Calculation: CV% = (Standard Deviation / Laboratory Mean) × 100. This is determined from internal quality control (IQC) data collected over time, ideally for a period of at least one year to capture long-term performance [9] [35].

Step 3: Calculate Sigma Metric

Use the formula σ = (TEa% - Bias%) / CV% to compute the Sigma metric for the assay. This calculation should be performed at two or more concentration levels. The lower of the resulting Sigma values is used for designing the QC strategy to ensure a conservative, risk-based approach [9] [35].
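The calculations in Steps 2 and 3 can be sketched in a few lines of Python. This is a minimal illustration, not the cited studies' implementation: the function names and the TEa, bias, and CV values for the two control levels are hypothetical, and the CV uses the sample standard deviation.

```python
from statistics import mean, stdev
from typing import Sequence


def bias_percent(observed: float, target: float) -> float:
    """Bias% = |observed - target| / target * 100 (Step 2)."""
    return abs(observed - target) / target * 100.0


def cv_percent(iqc_results: Sequence[float]) -> float:
    """CV% = sample standard deviation / mean * 100 (Step 2)."""
    return stdev(iqc_results) / mean(iqc_results) * 100.0


def sigma_metric(tea_pct: float, bias_pct: float, cv_pct: float) -> float:
    """Sigma = (TEa% - Bias%) / CV% (Step 3)."""
    return (tea_pct - bias_pct) / cv_pct


# Hypothetical values for two control levels of one assay (assumed, not from the source).
levels = {
    "level_1": {"tea": 10.0, "bias": 2.0, "cv": 2.0},
    "level_2": {"tea": 10.0, "bias": 1.0, "cv": 1.5},
}

sigmas = {
    name: sigma_metric(v["tea"], v["bias"], v["cv"]) for name, v in levels.items()
}

# The lower Sigma value drives the QC design, as described in Step 3.
design_sigma = min(sigmas.values())
print(sigmas, design_sigma)
```

With these assumed inputs, the two levels give Sigma values of 4.0 and 6.0, so the QC strategy would be designed around 4.0.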

Step 4: Select QC Procedure & Frequency

Map the calculated Sigma metric to an appropriate QC procedure. The following decision logic, based on the "Westgard Sigma Rules," is a standard industry approach [35]:

Diagram 2: QC Procedure Selection Logic

Step 5: Implement, Monitor, and Verify

After implementation, the performance of the new QC procedure must be continuously monitored. This includes tracking the frequency of out-of-control events, false rejection rates, and cost metrics to validate the projected benefits and ensure the procedure remains effective [34] [9].

The Scientist's Toolkit: Essential Reagents & Materials

The table below lists key materials and software solutions required for conducting a Sigma metric validation study.

Table 2: Essential Research Reagents and Solutions for QC Validation

| Item Name | Function / Explanation | Example in Context |
|---|---|---|
| Third-Party QC Materials | Lyophilized or liquid control samples used to independently assess precision (CV%) and accuracy (Bias%) without manufacturer influence. | Bio-Rad Lyphochek controls used in a clinical chemistry study [9]. |
| QC Validation Software | Specialized software used to analyze QC data, calculate Sigma metrics, and simulate the performance of different QC rules (Pfr, Ped). | Bio-Rad Unity 2.0 software and Westgard EZ Rules 3 [9] [35]. |
| Statistical Analysis Software | Tools for performing advanced statistical calculations, including Measurement System Analysis (MSA) and regression analysis. | Tools like Minitab and JMP are cited for data analysis in Six Sigma projects [33]. |
| Reference Materials | Certified materials with assigned target values, used to determine the Bias% of an analytical method. | Manufacturer mean or peer group mean used as a target value [9]. |
| Total Allowable Error (TEa) Source | A defined quality specification from an authoritative source, serving as the benchmark for calculating Sigma metrics. | CLIA criteria, the Biological Variation database, or RCPA guidelines [9]. |

The objective comparison demonstrates that applying Six Sigma metrics for QC procedure validation provides a scientifically rigorous and financially sound alternative to traditional methods. By tailoring QC rules and frequency to the actual performance of each assay, laboratories can achieve a significant reduction in operational costs—up to 75% in QC material consumption and 50% in failure-related costs—while maintaining or improving the quality of patient results. This data-driven approach aligns with modern quality management systems, fulfilling regulatory requirements through a risk-based lifecycle model and delivering a compelling cost-benefit profile for research and development in the pharmaceutical and biotechnology industries.

Implementing New Westgard Sigma Rules for Cost-Effective Quality Control

In clinical laboratory science, quality control is a significant operational cost center, yet it is non-negotiable for patient safety. Traditional QC practices, particularly in the United States, have created a scenario where nearly 46% of laboratories experience out-of-control events daily [37]. This high frequency of QC failures triggers costly repeat testing, increases reagent consumption, and prolongs turnaround times, an economically unsustainable pattern that calls for cost reduction without compromising quality. The 2025 Great Global QC Survey reveals that a majority of laboratories worldwide have done nothing to manage their QC costs, highlighting a critical need for smarter, data-driven approaches [36].

The implementation of Sigma-based Westgard rules represents a paradigm shift from one-size-fits-all QC procedures toward a risk-based, method-specific validation strategy. Rather than applying uniform multirules across all analytical platforms, this approach tailors QC rules to the actual performance characteristics of each method, quantified through Sigma metrics. This technical guide provides researchers and laboratory professionals with experimental data, implementation protocols, and cost-benefit analyses to support the transition to more efficient, cost-effective QC validation procedures.

Theoretical Foundation: Sigma Metrics as a QC Optimization Tool

The Fundamental Principle

Sigma metrics provide a standardized measurement of process performance that enables laboratories to match the rigor of their QC procedures to the actual quality of their analytical methods. The Sigma metric is calculated as Sigma = (TEa - |Bias|) / CV, where TEa represents the total allowable error specification, Bias represents the accuracy estimate, and CV represents the precision estimate, all expressed as percentages [38]. This single value expresses how many standard deviations of analytical imprecision fit within the allowable error once bias is accounted for, and therefore how much QC effort a method needs to detect errors without excessive false rejections.

Methods with higher Sigma metrics (≥6) demonstrate excellent performance and require less stringent QC procedures, while methods with lower Sigma metrics (<3) exhibit poor performance and demand more robust QC strategies with increased error detection capability. This graduated approach prevents the economic waste of applying maximal QC rules to methods that don't require them while ensuring sufficient oversight for problematic methods.

Clarifying Purpose and Limitations

A critical understanding for effective implementation is that Sigma metrics and QC rules function as performance indicators and error detectors—not performance improvers [39]. As articulated in a 2025 methodological critique, "A statistic, on its own, cannot improve (or degrade) a method's stable performance. An analytical Sigma metric, on its own, cannot improve the performance of a method. Quality control, on its own, cannot improve the performance of a method" [39]. This distinction is crucial—the benefit comes from appropriately matching QC effort to method performance, not from the rules themselves enhancing analytical quality.

Table 1: Sigma Metric Performance Classification and Recommended QC Strategy

| Sigma Level | Performance Assessment | Recommended QC Strategy | Error Detection Priority |
|---|---|---|---|
| ≥6 | World-class | Minimal rules (1:3s) | High specificity, low false rejection |
| 5-6 | Excellent | Moderate rules (1:3s/2:2s/R:4s) | Balanced error detection |
| 4-5 | Good | Full multirules (1:3s/2:2s/R:4s/4:1s) | Increased sensitivity |
| <4 | Marginal to poor | Maximum rules with increased QC frequency | Maximum error detection |
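The mapping in Table 1 can be written as a small lookup function. The sketch below is illustrative only: the function name is hypothetical, and the treatment of boundary values (a Sigma of exactly 5 falls in the 5-6 row, exactly 4 in the 4-5 row) is an assumption, since the table does not state which row a boundary value belongs to.

```python
def recommend_qc_strategy(sigma: float) -> str:
    """Return the Table 1 QC strategy for a given Sigma metric.

    Boundary handling is an assumption: each row includes its lower bound.
    """
    if sigma >= 6:
        return "1:3s"
    if sigma >= 5:
        return "1:3s/2:2s/R:4s"
    if sigma >= 4:
        return "1:3s/2:2s/R:4s/4:1s"
    return "maximum rules with increased QC frequency"


# Hypothetical Sigma values for three assays.
for assay, sigma in {"assay_a": 6.4, "assay_b": 4.7, "assay_c": 3.1}.items():
    print(assay, sigma, recommend_qc_strategy(sigma))
```

Keeping the thresholds in one function makes it straightforward to re-run the assignment whenever Sigma metrics are recalculated from new bias and CV data.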

Global QC Landscape: The Cost of Inefficient Practices

Prevalence of Suboptimal QC Methods

The 2025 Great Global QC Survey data reveals persistent reliance on statistically flawed QC approaches across international laboratories. In the United States, the use of 2 SD limits for rejection—despite generating false rejection rates of 9% (for 2 controls) and 14% (for 3 controls)—has actually increased [37]. This practice directly contributes to operational inefficiency, as laboratories waste resources investigating false alerts and repeating acceptable runs.
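The false rejection rates for 2 SD limits follow from basic probability. The sketch below assumes independent, normally distributed control results from a stable, in-control method; the function names are hypothetical. Under those assumptions, the chance that at least one of N controls falls outside ±k SD is 1 - P(|Z| ≤ k)^N.

```python
from math import erf, sqrt


def prob_within(k_sd: float) -> float:
    """P(|Z| <= k_sd) for a standard normal variable."""
    return erf(k_sd / sqrt(2.0))


def false_rejection_rate(n_controls: int, k_sd: float = 2.0) -> float:
    """Probability that at least one of n in-control results exceeds +/- k_sd."""
    return 1.0 - prob_within(k_sd) ** n_controls


for n in (1, 2, 3):
    print(f"N={n}: 2 SD limits -> {100 * false_rejection_rate(n):.1f}%, "
          f"3 SD limits -> {100 * false_rejection_rate(n, 3.0):.2f}%")
```

With exact normal probabilities this gives roughly 9% for two controls and 13% for three, in line with the figures quoted above; small differences are expected from rounding conventions. The same calculation with 3 SD limits stays below 1%, which illustrates why wider limits sharply reduce nuisance rejections.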

Globally, 75% of laboratories routinely repeat controls following an out-of-control event, with 5% admitting they "keep repeating as much as necessary to get in-control" [36]. This "casino" approach to quality control represents a significant, unquantified cost center in laboratory operations through unnecessary reagent consumption, technologist time allocation, and instrument utilization.

Accreditation Standards and QC Practices

Comparative analysis between CLIA and ISO 15189 accredited laboratories reveals striking similarities in QC practices despite different regulatory frameworks [40]. CLIA-accredited laboratories demonstrate higher rates of out-of-control events (44% daily versus 29% in ISO labs), potentially attributable to their stronger preference for 2 SD rejection rules and higher rates of control repetition [40]. Neither regulatory framework appears to sufficiently incentivize the adoption of more efficient, risk-based QC approaches, indicating that improvement initiatives must come from within laboratory operations rather than external mandates.

Experimental Evidence: Quantitative Benefits of Sigma-Based Implementation

Year-Long Cost-Benefit Analysis in Indian Laboratory

A comprehensive yearlong study evaluating the implementation of Sigma-based Westgard rules across 23 routine chemistry parameters demonstrated significant financial benefits [3]. Researchers calculated Sigma metrics for each parameter using bias% and CV%, then applied optimized QC rules using Bio-Rad Unity 2.0 software with comparison of pre- and post-implementation performance indicators.

Table 2: Cost Savings After Sigma-Based Rule Implementation [3]

| Cost Category | Pre-Implementation | Post-Implementation | Reduction | Annual Savings (INR) |
|---|---|---|---|---|
| Internal Failure Costs | Baseline | 50% reduction | 50% | 501,808.08 |
| External Failure Costs | Baseline | 47% reduction | 47% | 187,102.80 |
| Total QC Costs | Baseline | Combined reduction | - | 750,105.27 |

The study documented a false rejection rate decrease from 5.6% to 2.5% after implementing Sigma-based rules, directly translating to reduced reagent consumption and technologist time [3]. Additionally, the rate of out-of-turnaround-time reports during peak hours decreased from 29.4% to 15.2%, representing a significant operational improvement beyond direct cost savings.
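To put these before-and-after figures on a common scale, the relative reduction can be computed directly from the reported percentages. The helper name below is hypothetical; the input values are the ones reported above.

```python
def relative_reduction(before: float, after: float) -> float:
    """Relative reduction in percent: (before - after) / before * 100."""
    return (before - after) / before * 100.0


false_rejection_drop = relative_reduction(5.6, 2.5)   # false rejection rate, %
late_report_drop = relative_reduction(29.4, 15.2)     # out-of-TAT reports, %

print(f"False rejections: {false_rejection_drop:.1f}% relative reduction")
print(f"Out-of-TAT reports: {late_report_drop:.1f}% relative reduction")
```

On these figures, false rejections fall by about 55% and out-of-turnaround-time reports by about 48% in relative terms.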

Efficiency Study on Biochemical Tests

Research on 26 biochemical tests demonstrated that transitioning from uniform QC rules (1-3s, 2-2s, 2/3-2s, R-4s, 4-1s, and 12-x) to customized Sigma-based rules reduced QC repeats from 5.6% to 2.5% [38]. This gain in operational efficiency was accompanied by better proficiency testing performance: cases exceeding a Standard Deviation Index (SDI) of 2 fell from 67 to 24, and cases exceeding 3 SDI fell from 27 to 4 [38].

The correlation between optimized QC rules and improved proficiency testing performance suggests that reducing unnecessary QC repetition allows technologists to focus attention on legitimate quality issues rather than statistical false alarms.

Implementation Protocol: Methodological Framework

Workflow for Sigma-Based Rule Implementation

The following diagram illustrates the systematic approach for transitioning from uniform QC rules to Sigma-based optimized procedures:

Phase 1: Performance Assessment and Sigma Calculation

The implementation begins with comprehensive data collection for each analytical method. Precision (CV%) should be determined using at least 20-30 days of internal quality control data, preferably across multiple reagent lots and calibrations. Bias estimation should incorporate method comparison studies, proficiency testing results, or both. Total allowable error (TEa) goals should be selected based on clinical requirements, using sources such as CLIA, RiliBÄK, or biological variation databases.

For each method, calculate Sigma metrics using the formula: Sigma = (TEa - |Bias|) / CV. Categorize methods into performance tiers: World-class (Sigma ≥6), Excellent (Sigma 5-6), Good (Sigma 4-5), Marginal (Sigma 3-4), and Poor (Sigma <3). This stratification enables appropriate resource allocation, with problematic methods flagged for improvement initiatives rather than simply increasing QC surveillance.

Phase 2: Rule Selection and Validation

Utilize Westgard Advisor software or manual algorithms to select appropriate QC rules based on each method's Sigma metric. For high-performing methods (Sigma ≥6), consider reducing to 2 controls per run with simple 1:3s rules. For methods with Sigma 5-6, implement 1:3s/2:2s/R:4s rules. Lower performing methods require progressively more sophisticated multirule procedures with potentially increased QC frequency.

Validate selected rules using historical QC data to confirm appropriate error detection and acceptable false rejection rates. Establish baseline metrics for QC repeat rates, turnaround time compliance, and reagent consumption before full implementation to enable quantitative benefit analysis.
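One way to sketch this retrospective check is to express each historical control result as a z-score (result minus control mean, divided by control SD) and replay candidate rules over past runs. The example below is a simplified illustration: it evaluates rules within a single run only, the function names and z-scores are hypothetical, and it is not the algorithm used by any specific QC software.

```python
from typing import List, Sequence


def evaluate_run(z_scores: Sequence[float]) -> List[str]:
    """Return the rules violated by the control z-scores of one run.

    Simplification: rules are checked within the run only, not across runs.
    """
    violations = []
    # 1:3s - any single control beyond +/- 3 SD.
    if any(abs(z) > 3 for z in z_scores):
        violations.append("1:3s")
    # 2:2s - two controls beyond 2 SD on the same side of the mean.
    if sum(z > 2 for z in z_scores) >= 2 or sum(z < -2 for z in z_scores) >= 2:
        violations.append("2:2s")
    # R:4s - one control beyond +2 SD and another beyond -2 SD.
    if max(z_scores) > 2 and min(z_scores) < -2:
        violations.append("R:4s")
    return violations


def rejection_rate(runs: Sequence[Sequence[float]]) -> float:
    """Fraction of historical runs that would have been rejected."""
    rejected = sum(1 for run in runs if evaluate_run(run))
    return rejected / len(runs)


# Hypothetical z-scores for five historical runs with two controls each.
historical_runs = [(0.5, -0.3), (2.4, 2.1), (3.2, 0.1), (2.3, -2.2), (1.9, -1.0)]

for run in historical_runs:
    print(run, evaluate_run(run))
print("Rejection rate:", rejection_rate(historical_runs))
```

When the historical period is known to have been analytically stable, the replayed rejection rate approximates the false rejection rate of the candidate rule set, which gives a baseline to compare against after implementation.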

Phase 3: Implementation and Monitoring

Roll out new QC procedures method-by-method with comprehensive staff education on the revised rules and troubleshooting protocols. Monitor key performance indicators including: out-of-control events, false rejection rates, QC repeat rates, turnaround time compliance, and proficiency testing performance. Document cost savings through reduced reagent usage, technologist time allocation, and decreased repeat testing.

The Researcher's Toolkit: Essential Materials and Methods

Table 3: Essential Research Reagents and Software Solutions

| Tool Category | Specific Examples | Research Function | Implementation Role |

|---|---|---|---|