Machine Learning for Enzyme Commission Number Prediction: Methods, Applications, and Future Directions


Abstract

Accurate prediction of Enzyme Commission (EC) numbers is crucial for annotating the function of the millions of uncharacterized proteins in genomic databases. This article explores the transformative role of machine learning (ML) in overcoming the limitations of traditional homology-based methods for EC number prediction. We provide a comprehensive analysis of the field, covering foundational concepts, state-of-the-art methodological approaches—including contrastive learning, graph neural networks, and ensemble models—and the critical challenges of data quality and model interpretability. Aimed at researchers, scientists, and drug development professionals, this review also offers a comparative evaluation of existing tools and discusses future directions, highlighting how advanced ML models are accelerating enzyme discovery for applications in synthetic biology, metabolic engineering, and therapeutic development.

The EC Number Prediction Challenge: From Sequence to Function

A substantial portion of enzymes encoded in microbial genomes remain functionally uncharacterized, creating a critical gap in our understanding of cellular metabolism and limiting opportunities in drug development and synthetic biology. The Enzyme Commission (EC) number system provides a standardized hierarchical classification for enzyme functions, yet experimental determination of these identifiers remains time-consuming and costly [1] [2]. This annotation deficit is particularly pronounced in microbial communities, where up to 70% of proteins lack functional characterization [3]. Machine learning (ML) technologies have emerged as powerful tools to address this challenge, enabling high-throughput annotation of uncharacterized enzyme sequences with increasing accuracy and coverage.

Comparative Analysis of Machine Learning Approaches for EC Number Prediction

Performance Metrics of State-of-the-Art Models

Advanced computational approaches have demonstrated remarkable capabilities in predicting EC numbers from protein sequences and structures. The table below summarizes the performance of leading models on independent benchmark datasets.

Table 1: Performance comparison of EC number prediction tools on independent test datasets

| Model | Approach | Test Dataset | Precision | Recall | F1-Score | Key Features |

|---|---|---|---|---|---|---|

| CLEAN-Contact [4] | Contrastive learning + contact maps | NEW-392 | 0.652 | 0.555 | 0.566 | Integrates sequence & structure data |

| CLEAN [4] | Contrastive learning | NEW-392 | 0.561 | 0.509 | 0.504 | Sequence-based contrastive learning |

| DeepECtransformer [1] | Transformer neural network | Proprietary test set | 0.854* | 0.794* | 0.809* | Uses transformer architecture |

| ProteEC-CLA [5] | Contrastive learning + agent attention | Standard dataset | - | - | 0.947 | Enhanced feature extraction |

| GraphEC [2] | Geometric graph learning | Price-149 | Superior to baselines | - | - | Uses ESMFold-predicted structures |

| BEC-Pred [6] | BERT-based reaction analysis | Reaction dataset | 0.916 | - | - | Predicts from reaction SMILES |

Macro averages; *EC4 level accuracy
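Because most rows of Table 1 report macro averages, it helps to see how such averages are formed. The sketch below is a minimal illustration, not the evaluation code of any cited tool: the function name `macro_prf` and the choice to average over every EC number seen in either the truth or the predictions are assumptions. Precision, recall, and F1 are computed per EC number and then averaged with equal weight, which is why a macro F1 need not equal the harmonic mean of the macro precision and macro recall.

```python
from collections import defaultdict


def macro_prf(true_labels, pred_labels):
    """Macro-averaged precision, recall and F1 over EC numbers.

    true_labels / pred_labels: lists of sets of EC strings, one set per protein.
    Averaging over all EC numbers seen in truth or predictions is an assumption;
    published benchmarks may average over a different class set.
    """
    tp, fp, fn = defaultdict(int), defaultdict(int), defaultdict(int)
    for truth, pred in zip(true_labels, pred_labels):
        for ec in pred & truth:
            tp[ec] += 1
        for ec in pred - truth:
            fp[ec] += 1
        for ec in truth - pred:
            fn[ec] += 1

    classes = sorted(set(tp) | set(fp) | set(fn))
    if not classes:
        return 0.0, 0.0, 0.0

    precisions, recalls, f1s = [], [], []
    for ec in classes:
        p = tp[ec] / (tp[ec] + fp[ec]) if tp[ec] + fp[ec] else 0.0
        r = tp[ec] / (tp[ec] + fn[ec]) if tp[ec] + fn[ec] else 0.0
        f = 2 * p * r / (p + r) if p + r else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)

    n = len(classes)
    return sum(precisions) / n, sum(recalls) / n, sum(f1s) / n
```

Rare EC numbers weigh as much as common ones under this scheme, so weak performance on sparsely populated classes lowers the macro scores noticeably.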

Addressing Dataset Imbalances and Rare EC Numbers

A significant challenge in EC number prediction stems from the inherent imbalance in training datasets. The EC:1 class (oxidoreductases) demonstrates the lowest average number of sequences per EC number (4,352 compared to 6,819-16,525 for other classes), resulting in comparatively lower prediction performance (F1-score: 0.699) [1]. CLEAN-Contact shows particular promise in addressing this limitation, demonstrating a 30.4% improvement in precision for rare EC numbers (occurring 5-10 times in training data) compared to CLEAN [4].

Experimental Protocols for Model Implementation and Validation

Protocol: Implementing DeepECtransformer for Genome Annotation

Purpose: Predict EC numbers for uncharacterized genes in microbial genomes using protein sequences.

Materials:

- Computational Environment: Linux server with Python 3.8+, PyTorch, and DeepECtransformer package

- Input Data: FASTA file containing amino acid sequences of uncharacterized proteins

- Reference Databases: UniProtKB/Swiss-Prot for homology search fallback

Procedure:

- Data Preprocessing:

  - Input protein sequences in FASTA format

  - Remove redundant sequences using CD-HIT (90% identity cutoff)

  - Split sequences into segments of 1,000 residues with 200-residue overlap

- Neural Network Prediction:

  - Load pre-trained DeepECtransformer model with transformer architecture

  - Generate sequence embeddings for each input protein

  - Compute probability distributions over 2,802 EC number classes

  - Apply threshold of 0.5 for positive predictions

- Homology-Based Validation:

  - For sequences with no neural network prediction: Perform BLASTP against UniProtKB/Swiss-Prot

  - Transfer EC numbers from top hits with E-value < 1e-5 and sequence identity > 40%

  - Combine predictions from both approaches

- Result Interpretation:

  - Apply integrated gradients method to identify functional motifs

  - Cross-reference predictions with known metabolic pathways

  - Generate annotation report with confidence scores [1]
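The segmentation, thresholding, and homology-fallback steps of this protocol can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the DeepECtransformer package API: all function names, the dictionary layout of `blast_hits`, and the use of `>=` at the 0.5 threshold are hypothetical. Only the numeric cutoffs are taken from the protocol.

```python
def split_into_segments(seq, window=1000, overlap=200):
    """Split a sequence into windows of `window` residues sharing `overlap` residues."""
    if len(seq) <= window:
        return [seq]
    step = window - overlap
    segments, start = [], 0
    while True:
        segments.append(seq[start:start + window])
        if start + window >= len(seq):
            break
        start += step
    return segments


def call_ec_numbers(probabilities, threshold=0.5):
    """Keep EC numbers whose predicted probability reaches the threshold."""
    return sorted(ec for ec, p in probabilities.items() if p >= threshold)


def homology_fallback(blast_hits, max_evalue=1e-5, min_identity=40.0):
    """Transfer the EC number of the best hit passing both cutoffs.

    blast_hits: list of dicts with keys 'ec', 'evalue', 'identity'
    (a hypothetical layout for parsed BLASTP output).
    """
    passing = [h for h in blast_hits
               if h["evalue"] < max_evalue and h["identity"] > min_identity]
    if not passing:
        return []
    return [min(passing, key=lambda h: h["evalue"])["ec"]]


def annotate(probabilities, blast_hits):
    """Use the neural network call when present, otherwise fall back to homology."""
    predicted = call_ec_numbers(probabilities)
    if predicted:
        return predicted, "neural_network"
    return homology_fallback(blast_hits), "homology"
```

Returning the source of each annotation alongside the EC number keeps the two evidence types distinguishable in the final report.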

Protocol: Structural Annotation with GraphEC

Purpose: Leverage protein structural information for improved EC number prediction.

Materials:

- Software Requirements: ESMFold for structure prediction, PyTorch Geometric

- Hardware: GPU with ≥16GB memory (recommended)

- Input: Amino acid sequences in FASTA format

Procedure:

- Structure Prediction:

  - Process each sequence through ESMFold to generate 3D coordinates

  - Calculate TM-scores to assess prediction quality (accept >0.8)

- Active Site Prediction:

  - Construct protein graph with residues as nodes

  - Incorporate geometric features and ProtTrans sequence embeddings

  - Run GraphEC-AS to identify active site residues (AUC: 0.958)

- EC Number Prediction:

  - Apply attention mechanism weighted by active site predictions

  - Generate initial EC number assignments

  - Refine predictions using label diffusion algorithm with homology information [2]
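The "protein graph with residues as nodes" step can be illustrated with a simple distance-cutoff graph over Cα coordinates. This is a generic sketch, not GraphEC's actual graph definition: the function name and the 10 Å cutoff are assumptions, and the real method adds geometric features and ProtTrans embeddings that are not shown here.

```python
import math


def residue_contact_graph(ca_coords, cutoff=10.0):
    """Build directed edges (i, j, distance) between residues closer than `cutoff`.

    ca_coords: list of (x, y, z) C-alpha coordinates in angstroms, one per residue.
    The 10 angstrom cutoff is an illustrative assumption.
    """
    edges = []
    for i, a in enumerate(ca_coords):
        for j, b in enumerate(ca_coords):
            if i == j:
                continue
            d = math.dist(a, b)
            if d < cutoff:
                edges.append((i, j, d))
    return edges
```

The edge list maps directly onto the `edge_index` and edge-attribute tensors expected by graph learning libraries, once converted to the appropriate array type.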

Protocol: Experimental Validation of Computational Predictions

Purpose: Biochemically validate computational predictions for uncharacterized enzymes.

Materials:

- Cloning: pET expression vector, E. coli BL21(DE3) cells

- Protein Purification: Ni-NTA affinity chromatography, size exclusion chromatography

- Enzyme Assays: Relevant substrates, cofactors, spectrophotometer/fluorometer

Procedure:

- Heterologous Expression:

  - Clone candidate genes into pET expression vector

  - Transform E. coli BL21(DE3) with recombinant plasmid

  - Induce expression with 0.1-1.0 mM IPTG at 16-37°C

- Protein Purification:

  - Lyse cells via sonication in appropriate buffer

  - Purify His-tagged proteins using Ni-NTA affinity chromatography

  - Further purify using size exclusion chromatography

  - Verify purity by SDS-PAGE

- Enzyme Activity Assays:

Implementation Framework for Research and Development

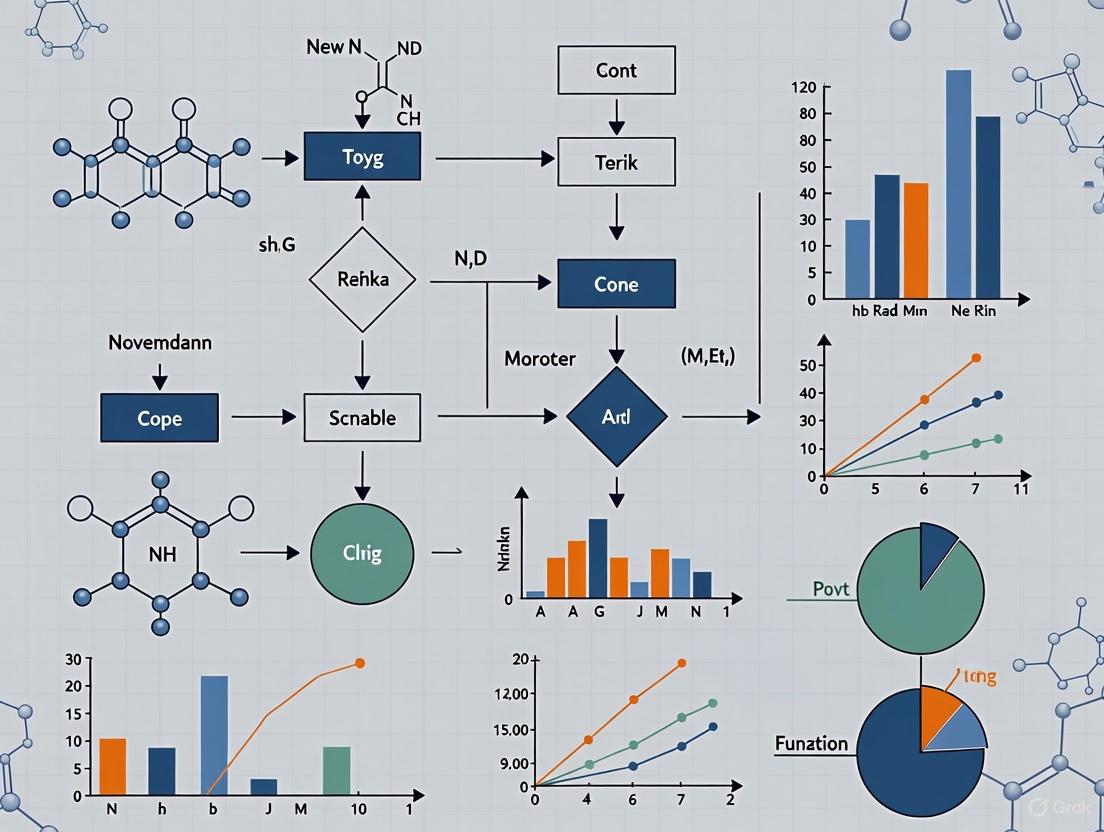

Visualization of the Integrated Annotation Pipeline

Figure 1: Integrated computational and experimental workflow for enzyme function annotation

Essential Research Reagent Solutions

Table 2: Key reagents and computational tools for enzyme annotation research

| Category | Item | Specifications | Application |

|---|---|---|---|

| Expression Systems | pET Vectors | T7 promoter, His-tag | Heterologous protein production |

| Expression Systems | E. coli BL21(DE3) | T7 RNA polymerase expression | Recombinant protein expression |

| Purification | Ni-NTA Resin | High affinity for His-tagged proteins | Immobilized metal affinity chromatography |

| Purification | Size Exclusion Columns | S200, S300 media | Protein polishing and complex analysis |

| Analysis | Spectrophotometer | UV-Vis capability | Enzyme kinetic measurements |

| Analysis | Substrate Libraries | Diverse metabolic intermediates | Enzyme activity screening |

| Computational | ESMFold | Language model-based | Rapid protein structure prediction |

| Computational | ProtTrans | Protein language model | Sequence embedding generation |

| Computational | UniProtKB | Comprehensive protein database | Homology searches and validation |

Machine learning approaches have dramatically advanced our ability to annotate uncharacterized enzyme sequences, with models like DeepECtransformer, CLEAN-Contact, and GraphEC demonstrating exceptional performance in EC number prediction. The integration of multiple data modalities—including protein sequences, predicted structures, and reaction information—represents the most promising direction for further improving annotation accuracy, particularly for rare EC classes. As these computational tools continue to evolve, they will play an increasingly vital role in illuminating the functional dark matter of the enzyme universe, accelerating drug discovery and metabolic engineering efforts.

Traditional sequence similarity search tools, such as the Basic Local Alignment Search Tool (BLAST), have long served as fundamental resources in bioinformatics for identifying homologous sequences and inferring protein function [7]. These tools operate on the principle that significant sequence similarity implies evolutionary relatedness (homology) and, by extension, functional similarity. However, the rapid expansion of genomic databases and the advent of sophisticated machine learning approaches for enzyme function prediction have revealed critical limitations in these traditional methods.

A primary challenge lies in the "detection horizon" of sequence-based methods—a threshold beyond which sequences have diverged so substantially that their common evolutionary origin becomes undetectable by standard metrics [7]. This limitation is particularly problematic for enzyme commission (EC) number prediction, where accurate functional annotation requires detecting distant evolutionary relationships that may lack significant sequence similarity. Furthermore, the foundational assumption that structural similarity always indicates homology has been challenged by evidence of convergent evolution at the structural level, where analogous proteins with nearly identical structures lack detectable sequence similarity [8].

This Application Note examines these limitations within the context of modern enzyme function prediction research, providing quantitative analyses of BLAST parameters, experimental protocols for overcoming sequence-based detection limits, and visualization of integrated workflows that combine traditional and next-generation approaches for accurate EC number annotation.

Quantitative Analysis of BLAST Limitations

Current BLAST Search Constraints

The National Center for Biotechnology Information (NCBI) has implemented specific technical limitations on web BLAST services to maintain system performance as biological databases continue to grow exponentially. Table 1 summarizes these critical constraints, which directly impact the scope and sensitivity of homology detection for enzyme sequences [9].

Table 1: Default Parameters and Limits for NCBI Web BLAST

| Parameter | Current Setting | Impact on Enzyme Analysis |

|---|---|---|

| Expect Value Threshold | 0.05 (reduced from previous defaults) | Increases stringency, potentially missing distant homologs with E-values between previous threshold and 0.05 |

| Max Target Sequences | 5,000 | Limits comprehensive analysis for large enzyme families with numerous members |

| Nucleotide Query Length | 1,000,000 bp | Generally sufficient for most enzyme gene sequences |

| Protein Query Length | 100,000 amino acids | Adequate for virtually all enzyme sequences |

| Filtering | Low complexity and repetitive regions masked by default | Reduces false positives but may obscure functionally important regions in certain enzyme classes |

These constraints reflect practical necessities for managing computational load but inevitably affect the sensitivity of enzyme function prediction. The reduced E-value threshold of 0.05 increases statistical stringency, potentially excluding valid but evolutionarily distant homologs that could provide crucial insights into enzyme function. Additionally, the masking of low-complexity regions, while reducing spurious matches, may obscure functionally important segments in certain enzyme classes [9].
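The interaction between a fixed E-value threshold and database growth follows from the standard BLAST relation between bit score and expect value, E = m · n · 2^(−S'), where m and n are the query and database lengths. The sketch below uses that relation with raw rather than effective lengths, which is a simplifying assumption; the function names are illustrative.

```python
import math


def expect_value(bit_score, query_len, db_len):
    """E-value for a given bit score: E = m * n * 2^(-S')."""
    return query_len * db_len * 2.0 ** (-bit_score)


def min_bit_score(evalue_threshold, query_len, db_len):
    """Smallest bit score that still passes the E-value threshold."""
    return math.log2(query_len * db_len / evalue_threshold)


# The same 40-bit alignment of a 300-residue query passes a 0.05 threshold
# against 1e8 database residues but fails against 1e9.
small_db = expect_value(40, 300, 1e8)
large_db = expect_value(40, 300, 1e9)
```

Each tenfold increase in database size raises the required bit score by log2(10), roughly 3.3 bits, so a distant homolog that was reportable in a smaller database can drop below the threshold as the database grows.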

The Remote Homology Detection Problem

The core limitation of traditional BLAST searches lies in their diminishing sensitivity for detecting remote homologs as sequences diverge beyond a certain threshold. Coevolution-based structure prediction methods have emerged to extend this detection horizon by inferring three-dimensional constraints from correlated substitutions in multiple sequence alignments [7]. These methods can identify structural relationships even when sequences appear devoid of all annotated domains and repeats, effectively pushing back the homology detection horizon.

Recent evidence suggests that strong structural matches do not guarantee homology. A 2025 study analyzing Foldseek clusters found that approximately 2.6% of structure matches lacked sequence-level support for homology, including about 1% of strong structure matches with Template Modeling Score (TM-score) ≥ 0.5 [8]. This subset of matches was significantly enriched in structures with predicted repeats that could induce spurious matches. Phylogenetic analysis of tandem repeat units revealed genealogies inconsistent with shared common ancestry, demonstrating that convergent evolution can produce highly similar protein structures independently [8].
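For readers unfamiliar with the TM-score used as the threshold above, the following sketch evaluates the standard formula, TM = (1/L) Σ 1/(1 + (d_i/d0)²) with d0 = 1.24·(L − 15)^(1/3) − 1.8, for a fixed superposition. It assumes the per-residue distances have already been obtained from an alignment tool. Dedicated programs additionally search for the superposition that maximizes the score, which is not reproduced here, and the 0.5 Å floor on d0 for short chains is an assumed convention.

```python
def tm_d0(target_len):
    """Length-dependent distance scale d0 in angstroms (floored at 0.5)."""
    if target_len <= 15:
        return 0.5
    return max(1.24 * (target_len - 15) ** (1.0 / 3.0) - 1.8, 0.5)


def tm_score(aligned_distances, target_len):
    """TM-score for one fixed superposition.

    aligned_distances: distance in angstroms for each aligned residue pair.
    target_len: length of the reference protein used for normalization.
    """
    d0 = tm_d0(target_len)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in aligned_distances) / target_len
```

Because the sum is divided by the full reference length, unaligned residues contribute zero, so a perfect match over half of a protein yields a score of 0.5.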

Next-Generation Solutions for Enzyme Function Prediction

Machine Learning Approaches

Machine learning methods have dramatically advanced enzyme function prediction by integrating diverse features beyond primary sequence similarity. Table 2 compares several state-of-the-art computational tools that address the limitations of traditional homology-based approaches.

Table 2: Machine Learning Tools for Enzyme Commission Number Prediction

| Tool | Approach | Input Data | Reported Performance | Advantages |

|---|---|---|---|---|

| ProteEC-CLA [5] | Contrastive Learning + Agent Attention | Protein sequence | 98.92% accuracy (EC4 level) on standard dataset | Enhanced feature extraction; improved utilization of unlabeled data |

| TopEC [10] | 3D Graph Neural Networks + Localized 3D Descriptor | Protein structure | F-score: 0.72 on fold-split dataset | Robust to uncertainties in binding site locations; learns biochemical and shape-dependent features |

| SOLVE [11] | Ensemble Learning (RF, LightGBM, DT) | Protein sequence | High accuracy on independent datasets (specific metrics not provided) | Interpretable via Shapley analyses; identifies functional motifs |

These tools demonstrate several advantages over traditional homology-based methods. ProteEC-CLA leverages contrastive learning to construct positive and negative sample pairs, enhancing sequence feature extraction and improving utilization of unlabeled data [5]. TopEC represents a significant advancement by utilizing 3D structural information through graph neural networks, focusing on localized binding site descriptors rather than global fold similarity, thereby addressing the fold bias problem common in structure-based function prediction [10]. The SOLVE framework provides interpretability through Shapley analyses, identifying functional motifs at catalytic and allosteric sites—a crucial feature for drug development applications [11].

Advanced Alignment Technologies

Next-generation sequence alignment tools have emerged to address the scalability limitations of traditional BLAST when searching against exponentially growing genomic databases. LexicMap, a recently developed nucleotide sequence alignment tool, enables efficient querying of moderate-length sequences (>250 bp) against millions of prokaryotic genomes [12].

Unlike BLAST, LexicMap employs an innovative probing and seeding algorithm that uses a small set of 20,000 probe k-mers to capture seeds across entire genome databases. This approach guarantees seed coverage every 250 bp while supporting variable-length prefix and suffix matching for increased sensitivity to divergent sequences [12]. The method demonstrates particular strength in maintaining robustness as sequence divergence increases beyond 10%, a threshold where many k-mer-based prefiltering methods fail.

Experimental Protocols

Protocol: Detecting Structural Analogs with Tandem Repeat Analysis

This protocol outlines a method for distinguishing truly homologous structures from analogous ones using tandem repeat analysis, based on approaches described in [8].

Materials

- Protein structures (experimental or predicted)

- Foldseek structural alignment software

- US-align for pairwise structural alignment

- Tandem repeat prediction software (e.g., RepeatsDB-based tools)

- Multiple sequence alignment software (e.g., MAFFT)

- Phylogenetic inference package (e.g., IQ-TREE)

Procedure

- Identify Strong Structural Matches: Using Foldseek, cluster protein structures at an E-value of 0.01 with at least 90% coverage. Calculate TM-scores for all pairs using US-align. Retain pairs with TM-score ≥ 0.5 for further analysis.

- Assess Sequence-Level Homology: For each structure pair, extract corresponding sequences and perform bootstrap analysis of amino acid substitution scores. Calculate the proportion of bootstrap replicates where the substitution score exceeds random expectation. Pairs with bootstrap support < 0.99 are considered to lack sequence-level support for homology.

- Detect Structural and Sequence Repeats: Use RepeatsDB to classify structures with predicted repeats. Identify sequence-level tandem repeats underlying the structural repeats.

- Construct Repeat Unit Alignments: Using structural alignments as a guide, manually create multiple sequence alignments of the repeat units from both proteins.

- Perform Phylogenetic Analysis: Build phylogenetic trees from repeat unit alignments. Assess whether tree topology supports homology (repeat units diverging from common ancestral repeats) or analogy (repeat units clustering by protein rather than common ancestry).

- Interpret Results: Structure pairs where repeat units show high bootstrap support (≥0.80) for genealogies inconsistent with shared common ancestry provide evidence for analogous rather than homologous relationships.
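The bootstrap step of this protocol can be sketched as follows. The exact statistic used in [8] is not specified in this note, so the code is a loose illustration under explicit assumptions: `column_scores` is taken to hold one substitution score per aligned column, columns are resampled with replacement, and the `random_expectation` baseline of 0.0 is a placeholder. Only the 0.5 and 0.99 cutoffs come from the protocol.

```python
import random


def bootstrap_support(column_scores, n_replicates=1000,
                      random_expectation=0.0, seed=0):
    """Fraction of bootstrap replicates whose summed score beats the baseline.

    column_scores: one substitution score per aligned column (assumed input).
    random_expectation: baseline total score for unrelated sequences (assumed).
    """
    rng = random.Random(seed)
    n = len(column_scores)
    wins = 0
    for _ in range(n_replicates):
        total = sum(rng.choice(column_scores) for _ in range(n))
        if total > random_expectation:
            wins += 1
    return wins / n_replicates


def lacks_sequence_support(tm, support, tm_min=0.5, support_min=0.99):
    """Flag strong structural matches without sequence-level support."""
    return tm >= tm_min and support < support_min
```

Pairs flagged by `lacks_sequence_support` would be the candidates carried forward to the repeat detection and phylogenetic steps.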

Protocol: EC Number Prediction with TopEC

This protocol describes the process of predicting Enzyme Commission numbers using the 3D graph neural network framework TopEC [10].

Materials

- Protein structures (experimental or predicted via AlphaFold2, etc.)

- TopEC software (https://github.com/IBG4-CBCLab/TopEC)

- NVIDIA GPU with at least 40GB memory (for full structure analysis)

- Binding site annotation (experimental or via P2Rank prediction)

Procedure

- Structure Preparation: Obtain protein structures either experimentally or through prediction. For enzymes of unknown function, use AlphaFold2 to generate predicted structures.

- Binding Site Identification: Annotate binding sites using experimental evidence when available. For novel structures, use P2Rank to predict potential binding sites.

- Graph Construction:

  - Atom Resolution: Create graph nodes for each heavy atom position, using atom type definitions from force field ff19SB.

  - Residue Resolution: Create graph nodes for each Cα atom position of the enzyme backbone.

- Localized Descriptor Generation: For memory-efficient processing, focus on the binding site region by including either:

  - The closest n atoms to the binding site center, OR

  - All atoms within a defined radius r of the binding site.

- Model Application:

  - Use TopEC-distances (based on SchNet) for both atom and residue resolution.

  - Use TopEC-distances+angles (based on DimeNet++) for residue resolution only.

- EC Number Prediction: The model outputs predictions across all four levels of EC classification. The highest probability class at the fourth level (EC4) represents the specific enzyme function prediction.

- Validation: For novel predictions, consider experimental validation through enzymatic assays targeting the predicted function.
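The two options for the localized descriptor, the closest n atoms or all atoms within radius r, amount to simple geometric selections around the binding site center. The sketch below illustrates both; the function names are hypothetical and are not part of the TopEC code base, and values for n and r are left to the caller.

```python
import math


def closest_n_atoms(coords, center, n):
    """Indices of the n atoms nearest to the binding site center."""
    order = sorted(range(len(coords)), key=lambda i: math.dist(coords[i], center))
    return order[:n]


def atoms_within_radius(coords, center, radius):
    """Indices of all atoms within `radius` angstroms of the binding site center."""
    return [i for i, xyz in enumerate(coords) if math.dist(xyz, center) <= radius]
```

The first option yields graphs of fixed size, which makes memory use predictable, whereas the second yields a fixed spatial extent whose node count varies with local atom density.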

Workflow Visualization

Workflow comparing traditional and next-generation approaches for enzyme function prediction.

The Scientist's Toolkit

Research Reagent Solutions

Table 3: Essential Computational Tools for Advanced Enzyme Function Analysis

| Tool/Resource | Type | Primary Function | Application in Enzyme Research |

|---|---|---|---|

| Foldseek [8] | Structural alignment tool | Fast protein structure search | Identify structural analogs and homologs beyond sequence detection limits |

| TopEC [10] | 3D Graph Neural Network | EC number prediction from structure | Predict enzyme function for structurally characterized proteins of unknown function |

| ProteEC-CLA [5] | Protein language model | EC number prediction from sequence | High-throughput annotation of enzyme sequences from genomic data |

| LexicMap [12] | Nucleotide alignment tool | Scalable sequence search against massive databases | Identify homologous genes across millions of prokaryotic genomes |

| AlphaFold Database [8] | Protein structure database | Predicted structures for proteomes | Source of structural models for enzymes without experimental structures |

| RepeatsDB [8] | Tandem repeat database | Annotation of protein tandem repeats | Identify repetitive structural elements that may indicate convergent evolution |

The limitations of traditional BLAST and sequence similarity searches necessitate a paradigm shift in enzyme function prediction. While these tools remain valuable for identifying close homologs, their inability to detect remote homology and distinguish structural analogs from true homologs constrains their utility for comprehensive EC number annotation.

Integration of machine learning approaches—particularly those leveraging structural information through graph neural networks—represents a promising path forward. Tools such as TopEC demonstrate how localized 3D descriptors can capture functional determinants missed by sequence-based or global fold similarity methods. Similarly, ensemble learning frameworks like SOLVE provide interpretable predictions that identify functionally important motifs.

For researchers investigating enzyme function, we recommend a hybrid approach that combines traditional sequence analysis with next-generation structural comparison and machine learning. This integrated strategy maximizes the strengths of each method while mitigating their individual limitations, ultimately leading to more accurate EC number predictions and facilitating drug discovery efforts targeting specific enzyme functions.

The Enzyme Commission (EC) number is a numerical classification scheme for enzymes, established by the International Union of Biochemistry and Molecular Biology (IUBMB). This system provides a standardized framework for classifying enzymes based on the chemical reactions they catalyze, rather than based on the individual enzymes themselves [13] [14]. Each EC number is associated with a recommended name for the corresponding enzyme-catalyzed reaction, bringing much-needed order to the field of enzymology [13].

The development of this system in the 1950s and its first publication in 1961 addressed a critical problem: the arbitrary and chaotic naming of newly discovered enzymes, which often provided little clue about the reaction catalyzed (e.g., "old yellow enzyme") [13]. The EC system works analogously to library classification systems, organizing enzymatic knowledge in a logical, hierarchical structure that has become foundational for biochemical research, database curation, and the emerging field of machine learning-based enzyme function prediction [15] [14].

The Structure and Hierarchy of EC Numbers

The Four-Level Classification System

Every EC number consists of the letters "EC" followed by four numbers separated by periods (e.g., EC 3.4.11.4). These numbers represent a progressively finer classification of the enzyme function [13]. The table below details the meaning of each level in the hierarchy.

Table 1: The Four-Level Hierarchy of the EC Number System

| EC Number Level | Description | Example: EC 3.4.11.4 (Tripeptide Aminopeptidase) |

|---|---|---|

| First Number (Class) | The general type of reaction catalyzed [13] [14]. There are seven main classes. | 3 - Hydrolase (uses water to break a molecule) [13] |

| Second Number (Sub-class) | Further defines the general type of bond or group acted upon [13] [14]. | 4 - Acts on peptide bonds [13] |

| Third Number (Sub-sub-class) | Further specifies the nature of the reaction or the substrates [13] [14]. | 11 - Cleaves off the amino-terminal amino acid from a polypeptide [13] |

| Fourth Number (Serial Identifier) | A unique serial number assigned to a specific enzyme-substrate combination [13] [14]. | 4 - Cleaves the amino-terminal end from a tripeptide [13] |
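The four-level structure maps naturally onto a small data type. The sketch below parses strings such as "EC 3.4.11.4" into their levels and also accepts the common partial notation with dashes (for example "3.4.-.-"). The type and function names are illustrative, and preliminary identifiers with non-numeric serial parts are deliberately rejected rather than handled.

```python
from typing import NamedTuple, Optional

CLASS_NAMES = {
    1: "Oxidoreductases", 2: "Transferases", 3: "Hydrolases", 4: "Lyases",
    5: "Isomerases", 6: "Ligases", 7: "Translocases",
}


class ECNumber(NamedTuple):
    ec_class: int
    subclass: Optional[int]
    sub_subclass: Optional[int]
    serial: Optional[int]


def parse_ec(text):
    """Parse 'EC 3.4.11.4' or '3.4.-.-' into an ECNumber; '-' becomes None."""
    body = text.strip()
    if body.upper().startswith("EC"):
        body = body[2:].lstrip(" :")
    parts = body.split(".")
    if len(parts) != 4:
        raise ValueError(f"expected four levels, got {text!r}")
    levels = [None if p == "-" else int(p) for p in parts]
    if levels[0] not in CLASS_NAMES:
        raise ValueError(f"unknown EC class in {text!r}")
    return ECNumber(*levels)


def prefix(ec, level):
    """Return the EC number truncated to `level` levels, e.g. level 2 -> '3.4'."""
    return ".".join(str(x) for x in ec[:level])
```

For the example in Table 1, `parse_ec("EC 3.4.11.4")` gives class 3, which `CLASS_NAMES` resolves to Hydrolases, and `prefix(..., 3)` gives "3.4.11".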

The Seven Major Enzyme Classes

The first digit of an EC number places the enzyme into one of seven fundamental classes based on the type of reaction catalyzed.

Table 2: The Seven Major Classes of Enzymes

| EC Class | Class Name | Reaction Catalyzed | Example Reaction | Example Enzymes (Trivial Names) |

|---|---|---|---|---|

| EC 1 | Oxidoreductases | Catalyze oxidation-reduction reactions; transfer of H and O atoms or electrons [13] [15]. | AH + B → A + BH (reduced) [13] | Dehydrogenase, Oxidase [13] |

| EC 2 | Transferases | Transfer a functional group (e.g., methyl, acyl, amino, phosphate) from one substance to another [13] [15]. | AB + C → A + BC [13] | Transaminase, Kinase [13] |

| EC 3 | Hydrolases | Form two products from a substrate by hydrolysis (cleavage of a bond by water) [13] [15]. | AB + H₂O → AOH + BH [13] | Lipase, Amylase, Peptidase [13] |

| EC 4 | Lyases | Catalyze non-hydrolytic addition or removal of groups from substrates, often forming double bonds [13] [15]. | RCOCOOH → RCOH + CO₂ [13] | Decarboxylase [13] |

| EC 5 | Isomerases | Catalyze intramolecular rearrangement (isomerization changes within a single molecule) [13] [15]. | ABC → BCA [13] | Isomerase, Mutase [13] |

| EC 6 | Ligases | Join two molecules by synthesizing new C-O, C-S, C-N or C-C bonds with simultaneous breakdown of ATP [13] [15]. | X + Y + ATP → XY + ADP + Pᵢ [13] | Synthetase [13] |

| EC 7 | Translocases | Catalyze the movement of ions or molecules across membranes or their separation within membranes [13] [15]. | — | Transporter [13] |

The Role of EC Numbers in Machine Learning Research

The systematic and hierarchical nature of the EC number makes it an ideal target for machine learning (ML) models aimed at high-throughput enzyme function annotation. With the rapid discovery of new protein sequences far outpacing experimental characterization, computational prediction of EC numbers has become crucial [16] [17].

The Computational Challenge

The primary task is to assign a four-level EC number to a given protein sequence. This is a complex, multi-label classification problem with significant challenges [16] [18]:

- Class Imbalance: The distribution of known sequences across EC numbers is highly skewed; some EC numbers have thousands of associated sequences, while others have only a handful [18].

- Data Scarcity: For reaction-level prediction, the number of curated enzyme-reaction pairs is much smaller than the number of enzyme sequences [18].

- Hierarchical Prediction: Accurate prediction requires correct classification at each of the four hierarchical levels.
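The hierarchical requirement can be made concrete by scoring predictions level by level. The sketch below is a simplified illustration that assumes one true and one predicted EC number per protein (the multi-label case needs set-based matching), and the function name is hypothetical. A prediction counts as correct at level k only if all levels up to k agree.

```python
def level_accuracy(true_ecs, pred_ecs):
    """Accuracy at each of the four EC levels for single-label predictions.

    Returns a list [acc_level1, acc_level2, acc_level3, acc_level4].
    """
    hits = [0, 0, 0, 0]
    for truth, pred in zip(true_ecs, pred_ecs):
        t_parts, p_parts = truth.split("."), pred.split(".")
        for level in range(4):
            if t_parts[:level + 1] == p_parts[:level + 1]:
                hits[level] += 1
            else:
                break  # a mismatch at one level invalidates all deeper levels
    n = len(true_ecs)
    return [h / n for h in hits] if n else [0.0] * 4
```

Accuracy can only stay level or fall from level 1 to level 4, which makes the depth at which a model starts to fail easy to read off.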

Evolution of ML Approaches for EC Number Prediction

Early methods relied heavily on sequence homology, but these fail for novel enzymes without close relatives [16] [17]. Traditional machine learning models (e.g., SVM, K-Nearest Neighbors, Random Forests) required manual feature extraction from sequences, which limited their performance [17]. The field has now transitioned to deep learning, which can automatically learn relevant features directly from raw amino acid sequences [17].

Modern frameworks, such as HDMLF (Hierarchical Dual-core Multitask Learning Framework), treat the problem in a multi-task manner: first predicting if a sequence is an enzyme, then predicting if it is multifunctional, and finally predicting the precise EC number(s) [16]. State-of-the-art models like ProteEC-CLA and CLAIRE leverage several advanced techniques [5] [18]:

- Protein Language Models (e.g., ESM): These transformer-based models, pre-trained on millions of protein sequences, generate informative, context-aware numerical embeddings (vector representations) of a protein sequence, drastically improving downstream prediction accuracy [16] [5].

- Contrastive Learning: This technique helps the model learn by comparing positive and negative sample pairs, which improves feature extraction and is particularly effective in overcoming data imbalance [5] [18].

- Attention Mechanisms: These allow the model to focus on the most relevant parts of the protein sequence for making a functional prediction, also adding a degree of interpretability [5].
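The idea behind contrastive learning with positive and negative pairs can be illustrated with a triplet margin loss, one common formulation. This sketch is not the objective of ProteEC-CLA or CLAIRE, whose exact losses are not described here; the function name and the margin of 1.0 are assumptions. The anchor and positive embeddings would come from proteins sharing an EC number, the negative from a protein with a different one.

```python
import math


def triplet_margin_loss(anchor, positive, negative, margin=1.0):
    """Penalize triplets where the negative is not at least `margin` farther
    from the anchor than the positive (Euclidean distance)."""
    d_pos = math.dist(anchor, positive)
    d_neg = math.dist(anchor, negative)
    return max(0.0, d_pos - d_neg + margin)
```

The loss is zero once same-function embeddings sit closer together than different-function embeddings by the margin, so training effort concentrates on the triplets that are still confused. This depends on distances between individual examples rather than on class frequencies.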

The performance of these models is benchmarked using metrics like accuracy and F1-score. The following table summarizes the performance of several recent models.

Table 3: Performance Comparison of Recent EC Number Prediction Models

| Model Name | Key Methodology | Reported Performance | Key Advantage |

|---|---|---|---|

| HDMLF [16] | Protein language model (ESM), Gated Recurrent Unit (GRU), multi-task hierarchy | Improves accuracy and F1 score by 60% and 40% over previous state-of-the-art, respectively [16]. | High performance on newly discovered proteins. |

| ProteEC-CLA [5] | Contrastive Learning, ESM2 protein model, Agent Attention | 98.92% accuracy on standard dataset; 93.34% accuracy on challenging clustered split dataset [5]. | Enhanced ability to capture local and global sequence features. |

| CLAIRE [18] | Contrastive Learning, pre-trained reaction language model (rxnfp), data augmentation | Weighted average F1 scores of 0.861 and 0.911 on two different testing sets [18]. | Predicts EC numbers from reaction data, useful for synthetic biology. |

EC Number Prediction Workflow

Experimental Protocols for ML-Driven EC Number Prediction

This section outlines a generalized protocol for developing and validating a deep learning model to predict EC numbers from protein sequences, reflecting methodologies used in recent studies [16] [5].

Data Curation and Preprocessing

Objective: To construct a high-quality, chronologically-segregated dataset for training and evaluating prediction models.

- Data Source: Extract enzyme sequences with experimentally verified EC numbers from a reference database such as UniProt/Swiss-Prot [16] [19].

- Temporal Splitting: To simulate a real-world prediction scenario and avoid data leakage, split the data chronologically.

  - Training Set: Use a snapshot of the database from an earlier date (e.g., February 2018).

  - Testing Set 1: Use a snapshot from a later date (e.g., June 2020), filtering out any sequences present in the training set.

  - Testing Set 2: Use an even more recent snapshot (e.g., February 2022) for a second, more challenging validation of model stability over time [16].

- Data Augmentation: For reaction-based predictors, augment the training data by shuffling the order of reactants and products in the reaction SMILES strings to improve model robustness [18].
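The temporal filtering and the reaction SMILES augmentation described above can be sketched as follows. Both functions are illustrations with hypothetical names: snapshots are assumed to be dictionaries mapping accession to sequence, and the augmentation relies only on the standard reaction SMILES layout `reactants>agents>products` with `.` separating molecules.

```python
import random


def temporal_test_set(train_snapshot, later_snapshot):
    """Keep entries of the later snapshot that are new by accession and sequence.

    Both arguments map accession -> amino acid sequence (assumed layout).
    """
    seen_sequences = set(train_snapshot.values())
    return {acc: seq for acc, seq in later_snapshot.items()
            if acc not in train_snapshot and seq not in seen_sequences}


def shuffle_reaction_smiles(rxn, rng):
    """Randomly reorder molecules within the reactant and product sides."""
    reactants, agents, products = rxn.split(">")

    def shuffled(side):
        molecules = side.split(".") if side else []
        rng.shuffle(molecules)
        return ".".join(molecules)

    return ">".join([shuffled(reactants), agents, shuffled(products)])
```

A usage example: `shuffle_reaction_smiles("CCO.CC(=O)O>>CC(=O)OCC.O", random.Random(0))` returns the same esterification with the molecules on each side in a possibly different order. Passing an explicit `random.Random` instance keeps the augmentation reproducible.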

Feature Extraction with Protein Language Models

Objective: To convert raw amino acid sequences into numerical embeddings that capture structural and functional information.

- Model Selection: Choose a pre-trained protein language model, such as Evolutionary Scale Modeling (ESM) [16] or UniRep [16].

- Embedding Generation: Pass each protein sequence through the pre-trained model.

- Layer Selection: Extract the hidden layer outputs as the feature vector for the sequence. Empirical testing is required to identify the optimal layer (e.g., ESM-32), as performance does not always improve with layer depth [16].

Model Training with a Hierarchical Framework

Objective: To train a neural network that predicts EC numbers accurately.

- Architecture:

- Multi-Task Training:

- Task 1 (Binary Classification): Train the model to distinguish between enzyme and non-enzyme sequences.

- Task 2 (Multifunction Detection): Train the model to predict if an enzyme catalyzes multiple reactions.

- Task 3 (EC Number Prediction): Train the model to predict the full EC number, often treated as a multi-label classification problem [16].

- Optimization: Use a greedy strategy to integrate and fine-tune the tasks, maximizing final EC prediction performance [16].

Model Validation and Experimental Confirmation

Objective: To rigorously assess the model's predictions and avoid propagation of errors.

- In Silico Validation:

- In Vitro Experimental Validation:

- Cloning and Expression: Clone the gene encoding the predicted enzyme into an expression vector and express it in a suitable host (e.g., E. coli) [19].

- Protein Purification: Purify the recombinant protein using affinity chromatography.

- Enzyme Activity Assay: Incubate the purified enzyme with its predicted substrate(s) under optimized buffer conditions. Measure the formation of products or the consumption of substrates using techniques like spectrophotometry or mass spectrometry [19].

- Kinetics Analysis: Determine the enzyme's catalytic efficiency (kcat/Km) and compare it to known related enzymes. A very weak activity (e.g., orders of magnitude lower) may indicate enzyme promiscuity rather than true physiological function, highlighting a common pitfall in prediction [19].
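As a worked illustration of the kinetics comparison, the sketch below computes kcat/Km for a candidate and a reference enzyme and reports the gap in orders of magnitude. All numeric values and the three-orders cutoff are hypothetical choices for illustration, not figures from [19].

```python
import math

def catalytic_efficiency(kcat_per_s, km_molar):
    """Return kcat/Km in M^-1 s^-1."""
    return kcat_per_s / km_molar

def orders_below_reference(candidate_eff, reference_eff):
    """Return how many orders of magnitude the candidate falls below the reference."""
    return math.log10(reference_eff / candidate_eff)

# Hypothetical measurements, for illustration only
candidate = catalytic_efficiency(kcat_per_s=0.02, km_molar=2e-3)  # about 1e1 M^-1 s^-1
reference = catalytic_efficiency(kcat_per_s=50.0, km_molar=5e-5)  # about 1e6 M^-1 s^-1

gap = orders_below_reference(candidate, reference)
likely_promiscuous = gap >= 3  # illustrative cutoff, not a published threshold
```

A gap of several orders of magnitude is a prompt for further experiments (alternative substrates, in vivo evidence) rather than a verdict on its own.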

Essential Research Tools and Reagents

Table 4: The Scientist's Toolkit for EC Number and ML Research

| Item | Function / Application |

|---|---|

| Databases | |

| UniProt/Swiss-Prot [16] [19] | A comprehensive, high-quality resource for protein sequences and their curated functional annotations, including EC numbers. |

| ENZYME Database (Expasy) [20] | A dedicated repository of information related to enzyme nomenclature, based on IUBMB recommendations. |

| Rhea [18] | An expert-curated database of biochemical reactions, used for training reaction-based EC predictors. |

| Computational Tools & Models | |

| ESM (Evolutionary Scale Modeling) [16] [5] | A state-of-the-art protein language model used to generate powerful numerical embeddings from amino acid sequences. |

| HDMLF & ProteEC-CLA [16] [5] | Examples of advanced deep learning frameworks designed specifically for hierarchical EC number prediction. |

| CLAIRE [18] | A contrastive learning model that predicts EC numbers from chemical reaction data. |

| Experimental Reagents | |

| Expression Vectors & Host Cells (e.g., E. coli) [19] | For cloning and expressing the genes of putative enzymes for functional validation. |

| Affinity Chromatography Kits | For purifying recombinant enzymes after expression. |

| Spectrophotometric Assay Kits/Reagents | For measuring enzyme activity and kinetic parameters in vitro. |

The integration of machine learning with the established EC numbering system is revolutionizing enzyme annotation. Future research will likely focus on several key areas:

- Incorporating Structural Data: Using 3D protein structures or predicted structures from tools like AlphaFold to provide additional context for function prediction [16] [21].

- Predicting Enzyme Promiscuity and Specificity: Developing models like EZSpecificity that can predict an enzyme's exact substrate preferences, going beyond the broad categorization of the EC number [21].

- De Novo Enzyme Design: Leveraging generative AI models to design entirely new enzymes with desired catalytic activities, guided by EC classification principles [17].

- Improved Data Curation and Validation: As highlighted by critical analyses, the community must prioritize data quality and rigorous, domain-expert-led validation to prevent the propagation of errors in databases and models [19].

In conclusion, the EC numbering system provides the essential, structured vocabulary for enzyme function. When this vocabulary is combined with modern machine learning techniques, it creates a powerful tool for deciphering the functional dark matter of the protein universe, with profound implications for basic biochemical research, drug discovery, and synthetic biology.

The Role of Machine Learning in Scaling Functional Annotation

The exponential growth of genomic data has created a critical bottleneck in the life sciences: the functional annotation of enzymes. Accurate annotation is crucial for elucidating disease mechanisms, identifying drug targets, and advancing metabolic engineering [5]. The Enzyme Commission (EC) number system provides a standardized hierarchical classification for enzyme functions, but experimental determination of EC numbers remains slow and resource-intensive. Machine learning (ML) now offers powerful computational approaches to scale this functional annotation process, leveraging patterns in protein sequences, structures, and evolutionary relationships to predict enzyme functions with increasing accuracy. This application note examines current ML methodologies for EC number prediction, provides experimental protocols for their implementation, and offers resources for researchers seeking to apply these tools in drug discovery and basic research.

Current Machine Learning Approaches for EC Number Prediction

Recent advances in machine learning have produced diverse computational frameworks for enzyme function prediction, each with distinct architectural strengths and data requirements. The table below summarizes several state-of-the-art tools and their performance characteristics.

Table 1: Machine Learning Tools for Enzyme Commission Number Prediction

| Tool Name | ML Approach | Input Data | Key Features | Reported Performance |

|---|---|---|---|---|

| ProteEC-CLA [5] | Contrastive Learning + Agent Attention | Protein Sequences | Utilizes ESM-2 protein language model; enhanced feature extraction | 98.92% accuracy (EC4 level, standard dataset); 93.34% accuracy (clustered split) |

| TopEC [10] | 3D Graph Neural Network | Protein Structures | Uses localized 3D descriptors from binding sites; message-passing networks | F-score: 0.72 (fold split dataset); Robust to binding site uncertainties |

| DeepECtransformer [22] | Transformer Neural Network | Protein Sequences | Covers 5,360 EC numbers; identifies functional motifs; interpretable predictions | Precision: 0.76-0.95; Recall: 0.68-0.94 across EC classes |

| SOLVE [11] | Ensemble Learning (RF, LightGBM, DT) | Protein Sequences | Addresses class imbalance with focal loss; provides Shapley interpretability | Outperforms existing tools across all metrics on independent datasets |

| CLEAN-Contact [4] | Contrastive Learning | Sequences + Contact Maps | Combines ESM-2 and ResNet50; integrates sequence and structural information | 16.22% higher precision than CLEAN; superior on understudied EC numbers |

These tools demonstrate that different computational strategies offer complementary strengths. Sequence-based methods like ProteEC-CLA and DeepECtransformer provide broad applicability even when structural data is unavailable [5] [22]. Structure-aware approaches like TopEC leverage spatial information for improved accuracy on challenging cases [10], while hybrid methods like CLEAN-Contact aim to capture the benefits of both sequence and structure information [4].

Quantitative Performance Comparison

To facilitate tool selection for specific research needs, we provide a detailed comparison of model performance across standardized benchmark datasets.

Table 2: Performance Comparison on Benchmark Datasets

| Tool | Precision | Recall | F1-Score | AUROC | Test Dataset |

|---|---|---|---|---|---|

| CLEAN-Contact [4] | 0.652 | 0.555 | 0.566 | 0.777 | New-392 |

| CLEAN [4] | 0.561 | 0.509 | 0.504 | 0.753 | New-392 |

| CLEAN-Contact [4] | 0.621 | 0.513 | 0.525 | 0.756 | Price-149 |

| CLEAN [4] | 0.531 | 0.434 | 0.452 | 0.717 | Price-149 |

| DeepEC [4] | ~0.238 | N/A | N/A | N/A | Price-149 |

| ProteInfer [4] | ~0.243 | N/A | N/A | N/A | Price-149 |

Performance varies significantly across enzyme classes. For example, DeepECtransformer shows lower performance for the EC:1 class (oxidoreductases), largely due to dataset imbalance, with fewer sequences available per EC number than in other classes [22]. CLEAN-Contact demonstrates particular strength on understudied EC numbers, showing a 30.4% improvement in precision over CLEAN for rare enzymes (those occurring 5-10 times in the training data) [4].

Experimental Protocols

Protocol: Implementing ProteEC-CLA for High-Accuracy EC Prediction

Purpose: To predict EC numbers from protein sequences using contrastive learning and agent attention mechanisms.

Materials:

- Protein sequences in FASTA format

- Python 3.8+

- PyTorch deep learning framework

- Pretrained ProteEC-CLA model [5]

- GPU resources (recommended for rapid inference)

Procedure:

- Data Preparation:

- Input protein sequences in FASTA format

- Preprocess sequences using the ESM-2 tokenizer

- Generate sequence embeddings using the pretrained ESM-2 model

- Model Setup:

- Load the pretrained ProteEC-CLA model architecture

- Initialize with published weights

- Configure Agent Attention mechanisms for enhanced feature extraction

- Inference:

- Feed sequence embeddings through the contrastive learning framework

- Apply Agent Attention to capture local and global sequence features

- Generate predictions at all four EC number levels

- Result Interpretation:

- Extract probability scores for each EC number assignment

- Apply threshold of ≥0.95 for high-confidence predictions [5]

- Output final EC number assignments with confidence metrics
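The result-interpretation step can be sketched as a simple filter over per-EC probabilities. The dictionary format and function name below are assumptions made for illustration; the actual output structure of the published ProteEC-CLA model may differ.

```python
def high_confidence_calls(probabilities, threshold=0.95):
    """Keep EC numbers whose probability meets the threshold, highest first."""
    kept = [(ec, p) for ec, p in probabilities.items() if p >= threshold]
    return sorted(kept, key=lambda item: item[1], reverse=True)

# Hypothetical per-EC probabilities for a single protein
scores = {"2.7.10.1": 0.97, "2.7.11.1": 0.62, "3.1.3.48": 0.96}
calls = high_confidence_calls(scores)
```

Predictions that fall below the threshold are better reported as low-confidence candidates than silently discarded, since they may still guide experimental follow-up.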

Validation: The model achieves 98.92% accuracy at the EC4 level on standard datasets and maintains 93.34% accuracy on challenging clustered split datasets [5].

Protocol: Structure-Based EC Prediction with TopEC

Purpose: To predict EC numbers from protein structures using 3D graph neural networks.

Materials:

- Protein structures in PDB format

- Python 3.7+

- DimeNet++ or SchNet frameworks

- TopEC software package [10]

- P2Rank for binding site prediction (if experimental sites unknown)

Procedure:

- Structure Preprocessing:

- Input experimental or predicted protein structures

- Identify binding sites using experimental evidence, homology, or P2Rank prediction [10]

- Extract regional representations focusing on binding site vicinity

- Graph Construction:

- Option A (Residue-level): Create nodes for each Cα atom

- Option B (Atom-level): Create nodes for each heavy atom

- Build graphs using closest n atoms or atoms within radius r from binding site

- Model Application:

- Apply message-passing neural networks (SchNet for distances; DimeNet++ for distances+angles)

- Utilize localized 3D descriptors for function classification

- Generate EC number predictions across hierarchy

- Output Analysis:

- Review F-score metrics against the reported benchmark (0.72 on the fold-split dataset [10])

- Assess model confidence based on structural features

- Export predictions with structural rationales
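The graph-construction step (nodes chosen by radius r or by the closest n atoms around a binding site) can be sketched in plain Python. This is an illustrative simplification, not the TopEC implementation: the `(label, (x, y, z))` tuple layout, the function names, the edge cutoff, and the coordinates are all hypothetical.

```python
import math

def select_by_radius(atoms, center, radius):
    """Return atoms whose coordinates lie within `radius` of `center`."""
    return [a for a in atoms if math.dist(a[1], center) <= radius]

def select_nearest(atoms, center, n):
    """Return the n atoms closest to `center`."""
    return sorted(atoms, key=lambda a: math.dist(a[1], center))[:n]

def build_edges(nodes, cutoff):
    """Connect every node pair within `cutoff`; return (i, j, distance) tuples."""
    edges = []
    for i in range(len(nodes)):
        for j in range(i + 1, len(nodes)):
            d = math.dist(nodes[i][1], nodes[j][1])
            if d <= cutoff:
                edges.append((i, j, d))
    return edges

# Hypothetical residue-level (C-alpha) coordinates in angstroms
atoms = [
    ("ALA1", (0.0, 0.0, 0.0)),
    ("GLY2", (3.8, 0.0, 0.0)),
    ("SER3", (7.6, 0.0, 0.0)),
    ("HIS40", (30.0, 0.0, 0.0)),  # far from the binding site
]
site_center = (3.8, 0.0, 0.0)

nodes = select_by_radius(atoms, site_center, radius=10.0)
edges = build_edges(nodes, cutoff=5.0)
nearest_two = select_nearest(atoms, site_center, n=2)
```

The edge distances are the quantities a distance-based message-passing network such as SchNet consumes; angle-aware networks such as DimeNet++ additionally use triplets of connected nodes.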

Validation: TopEC achieves robust performance (F-score: 0.72) even with uncertainties in binding site locations and similar functions in distinct binding sites [10].

Workflow Visualization

EC Number Prediction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for ML-Based Enzyme Annotation

| Resource | Type | Function | Example Tools |

|---|---|---|---|

| Protein Language Models | Software | Generate informative sequence embeddings for functional analysis | ESM-2 [5] [4], ProtBert [4] |

| Structure Prediction | Software | Generate 3D protein models when experimental structures unavailable | AlphaFold2, RoseTTAFold [10] |

| Contact Map Generators | Software | Create 2D representations of residue contacts for hybrid models | Various structure processors [4] |

| Curated Enzyme Datasets | Data | Training and benchmarking datasets with validated EC numbers | UniProtKB [22], Binding MOAD [10], TopEnzyme [10] |

| Graph Neural Networks | Software Framework | Process 3D structural data as graphs for structure-based prediction | SchNet, DimeNet++ [10] |

| Interpretability Tools | Software | Explain model predictions and identify important features | Shapley analysis [11], Attention visualization [22] |

Implementation Considerations

Data Quality and Curation

High-quality functional annotation requires rigorously curated training data. Research indicates that erroneous functions in databases like UniProt can be propagated by ML models, leading to systematic errors [19]. Implementation should include:

- Careful inspection of training data sources and quality

- Regular updates to annotation protocols when systematic errors are detected [23]

- Validation of novel predictions against biological context and existing literature

Addressing Dataset Imbalance

EC number classes are naturally imbalanced, with some functions being extensively characterized while others are rare. This imbalance can significantly impact model performance [22]. Effective strategies include:

- Implementing focal loss functions to mitigate class imbalance [11]

- Utilizing contrastive learning to improve performance on understudied EC numbers [4]

- Employing clustered splits (30% sequence identity) during evaluation to remove fold bias [10]
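To make the first strategy concrete, the sketch below implements the standard focal loss for a single example, FL(p) = -alpha * (1 - p)^gamma * log(p), where p is the predicted probability of the true class. The default gamma and alpha values are common choices and are assumptions here; the hyperparameters used by SOLVE [11] are not stated in this article.

```python
import math

def focal_loss(p_true, gamma=2.0, alpha=1.0):
    """Focal loss for one example, given the predicted probability of the true class.

    With gamma = 0 and alpha = 1 this reduces to ordinary cross-entropy.
    """
    return -alpha * (1.0 - p_true) ** gamma * math.log(p_true)

easy = focal_loss(0.9)  # well-classified example, strongly down-weighted
hard = focal_loss(0.1)  # misclassified example, keeps most of its loss
```

Because well-classified examples from abundant EC classes contribute little to the total, gradient updates are dominated by the hard and typically rare classes.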

Model Interpretability

Beyond prediction accuracy, understanding model reasoning is crucial for biological insight. Tools like DeepECtransformer can identify functional motifs and important regions through attention mechanisms [22]. SOLVE provides Shapley analysis to highlight the contribution of specific sequence regions to functional predictions [11]. These interpretability features help build trust in predictions and can provide novel biological insights.

Machine learning approaches are dramatically accelerating the scale and accuracy of enzyme functional annotation. Sequence-based methods offer broad applicability, structure-based approaches provide enhanced accuracy for challenging cases, and hybrid methods leverage complementary data types for improved performance. As these tools continue to evolve, integration with experimental validation remains essential to ensure biological relevance and address limitations such as dataset bias and error propagation. The protocols and resources provided here offer researchers a pathway to implement these advanced computational methods in drug discovery and basic enzyme research.

Architectures and Algorithms: A Deep Dive into Modern EC Prediction Models

Leveraging Protein Language Models for State-of-the-Art Sequence Embeddings

Protein Language Models (PLMs) have emerged as a transformative technology for extracting meaningful representations from amino acid sequences. These sequence embeddings encapsulate intricate structural, functional, and evolutionary patterns, making them exceptionally powerful for downstream predictive tasks in bioinformatics. Within the specific research context of machine learning for predicting Enzyme Commission (EC) numbers, PLMs provide a critical foundation for developing accurate, scalable, and rapid functional annotation tools. This Application Note details the methodology for generating and utilizing state-of-the-art sequence embeddings, provides protocols for their application in EC number prediction, and presents a comparative analysis of leading PLMs to guide researcher selection.

Protein Language Models (PLMs) are deep learning models, typically based on the transformer architecture, that are pre-trained on millions of protein sequences to learn the fundamental "language" of proteins [24]. Analogous to how large language models for text learn from vast corpora of words, PLMs learn from the statistical patterns and dependencies between amino acids in sequences from databases like UniRef [24]. This self-supervised pre-training, often done via a masked language modeling objective where the model learns to predict randomly hidden amino acids, allows the model to internalize complex biological principles without explicit manual labeling [24] [25].

The primary output of a PLM is a sequence embedding—a high-dimensional, numerical vector representation that captures the semantic and syntactic meaning of a protein sequence. These embeddings can be generated for an entire sequence (per-protein embedding) or for each individual amino acid position (per-residue embedding). For EC number prediction, which is a protein-level functional classification task, per-protein embeddings serve as powerful feature vectors that can be used to train supervised machine learning classifiers, capturing information that is often more informative than hand-crafted features like physicochemical properties or k-mer frequencies [24].

Generating Protein Sequence Embeddings: A Step-by-Step Protocol

This protocol describes the process of generating per-protein embeddings using the ESM2 model via the TRILL platform, a framework designed to democratize access to various PLMs [24]. The workflow is summarized in Figure 1.

Pre-requisites and Environment Setup

- Computing Environment: A computing environment with Python 3.8+ and access to a GPU is recommended for faster inference, especially with larger models.

- Software Installation: Install the necessary Python packages. The TRILL platform can be a convenient starting point.

Input Data Preparation

- Sequence Collection: Compile the protein sequences of interest in a FASTA format file. Ensure sequences are valid and contain only standard amino acid characters.

- Data Cleaning: Remove redundant sequences or sequences with ambiguous residues if necessary, depending on the research objective.

Embedding Generation with ESM2

The following Python code demonstrates how to generate per-protein embeddings using the Hugging Face transformers library, which provides direct access to ESM2 models.
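A minimal sketch of this step is given here, assuming the `torch` and `transformers` packages are installed and using the small `facebook/esm2_t6_8M_UR50D` checkpoint as a stand-in for whichever ESM2 size is chosen. The helper names `mean_pool` and `embed_proteins` are illustrative, and the pooling is written in plain Python for readability rather than speed.

```python
def mean_pool(token_vectors, attention_mask):
    """Average the token vectors whose mask value is 1 (padding is ignored)."""
    kept = [vec for vec, m in zip(token_vectors, attention_mask) if m == 1]
    dim = len(kept[0])
    return [sum(vec[i] for vec in kept) / len(kept) for i in range(dim)]

def embed_proteins(sequences, model_name="facebook/esm2_t6_8M_UR50D", max_length=1024):
    """Return one mean-pooled embedding (list of floats) per input sequence."""
    # Heavy dependencies are imported lazily so the pooling helper can be used alone.
    import torch
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModel.from_pretrained(model_name)
    model.eval()

    batch = tokenizer(sequences, return_tensors="pt", padding=True,
                      truncation=True, max_length=max_length)
    with torch.no_grad():
        hidden = model(**batch).last_hidden_state  # shape: (batch, tokens, dim)

    # Note: the mask also covers the special <cls> and <eos> tokens,
    # so they are included in the average in this simple version.
    return [mean_pool(h.tolist(), m.tolist())
            for h, m in zip(hidden, batch["attention_mask"])]

# Example usage (downloads the checkpoint on first call):
# embedding_array = embed_proteins(["MKTAYIAKQRQISFVKSHFSRQ"])[0]
```

For large datasets, a vectorized masked mean on the tensors and batched inference on a GPU would be the more practical choice.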

Critical Steps and Parameters:

- Model Selection: The ESM2 model family comes in various sizes (e.g., esm2_t12_35M_UR50D with 35M parameters to esm2_t48_15B_UR50D with 15B parameters). Larger models are more powerful but computationally intensive [24].

- Tokenization: The tokenizer converts the amino acid string into model-ingestible tokens. The max_length parameter should be set to accommodate the longest sequence in your dataset.

- Pooling Strategy: The example uses mean pooling over the sequence length to create a single vector per protein. This is a standard approach for protein-level classification tasks. Alternatively, you can use the embedding of the special <cls> token if the model provides one.

Output and Storage

The final output is a numerical vector (the embedding_array in the code) whose dimensionality depends on the chosen model (e.g., 2560 dimensions for the esm2_t36_3B_UR50D model). Store these vectors in an efficient format (e.g., NumPy .npy or a matrix in a CSV file) for subsequent machine learning analysis.

Performance Benchmarking of Key PLMs

Selecting the appropriate PLM is crucial for project success. Below is a comparative analysis of leading open-source PLMs based on benchmarking studies for protein property prediction tasks, including crystallization propensity, which shares similarities with EC number prediction as a sequence-based classification problem [24].

Table 1: Benchmarking of Open-Source Protein Language Models for Sequence Embedding

| Model | Key Architecture | Embedding Dimension (per-protein) | Notable Strengths | Considerations |

|---|---|---|---|---|

| ESM2 [24] | Transformer Encoder | Varies by size (e.g., 640 for t30, 2560 for t36) | Superior performance in crystallization prediction benchmarks (3-5% gains in AUC/AUPR) [24]. Broadly effective. | Compute and memory cost grow with model size. |

| ProtT5-XL [24] | T5 Encoder-Decoder | 1024 | Strong performer in multiple benchmarks. | Computational demand of encoder-decoder architecture. |

| Ankh [24] | T5-style Encoder-Decoder | Varies by size (e.g., 1536 for Large) | General-purpose PLM optimized for parameter and compute efficiency. | Performance in benchmarks slightly behind ESM2 [24]. |

| ProstT5 [24] | T5-based | 1024 | Trained to translate between amino acid sequences and 3Di structural tokens, potentially yielding structure-aware embeddings. | Benchmark performance behind ESM2 for crystallization [24]. |

| xTrimoPGLM [24] | Generalized Language Model | Varies | A unified model designed for both protein understanding and protein generation tasks. | Comprehensive benchmarking data is less extensive. |

| SaProt [24] | Transformer with structure-aware vocabulary | Varies | Incorporates structural vocabulary, potentially bridging sequence-structure gap. | Requires structure-derived inputs for full capability. |

Table 2: Performance of PLM-based Classifiers on an Independent Crystallization Test Set (Adapted from [24])

| Model | AUC | AUPR | F1 Score |

|---|---|---|---|

| ESM2 (t36, 3B params) + LightGBM [24] | 0.89 | 0.90 | 0.82 |

| ESM2 (t30, 150M params) + LightGBM [24] | 0.87 | 0.88 | 0.80 |

| ProtT5-XL + LightGBM [24] | 0.84 | 0.85 | 0.77 |

| Ankh-Large + LightGBM [24] | 0.83 | 0.84 | 0.76 |

| DeepCrystal (CNN-based) [24] | 0.82 | 0.83 | 0.75 |

Integration of PLM Embeddings for EC Number Prediction

The application of PLM embeddings has proven highly effective for EC number prediction. Researchers can integrate these embeddings into a standard machine learning workflow, as illustrated in Figure 2.

- Embedding Generation: Generate per-protein embeddings for all enzyme sequences in the dataset using a chosen PLM (e.g., ESM2) as described in the protocol.

- Classifier Training: Use the generated embeddings as input features to train a supervised classifier. Gradient Boosting Machines (e.g., LightGBM, XGBoost) and simple neural networks are common and effective choices [24].

- Hierarchical Prediction: EC numbers form a hierarchical tree. It is often beneficial to train separate classifiers for each level (e.g., first digit: class; second digit: subclass; etc.) or to use a multi-label, multi-class setup that respects this hierarchy [2] [5].

- Advanced Integration with Specialized Models: For maximum performance, PLM embeddings can be fused with other data sources. For instance:

- GraphEC: This model uses ESMFold-predicted structures to construct 3D graphs of the enzyme's active site. It then augments these structural graphs with sequence embeddings from ProtTrans (a family that includes ProtT5) to achieve state-of-the-art EC number prediction [2].

- ProteEC-CLA: This predictor integrates the pre-trained ESM2 model with contrastive learning and an agent attention mechanism to deeply analyze sequence features, achieving high accuracy (e.g., 93.34% on a challenging clustered dataset) [5].
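The hierarchical-prediction step in this workflow depends on splitting each EC number into its four levels so that a classifier can be trained per level. A minimal sketch, with hypothetical function names and assuming fully specified four-part EC numbers (no dashes for undetermined levels):

```python
def ec_levels(ec_number):
    """Expand an EC number such as '2.7.10.1' into its four hierarchical prefixes."""
    parts = ec_number.split(".")
    if len(parts) != 4:
        raise ValueError(f"expected four dot-separated fields, got {ec_number!r}")
    return [".".join(parts[:depth]) for depth in range(1, 5)]

def labels_per_level(ec_numbers):
    """Build one label list per hierarchy level, aligned with the input order."""
    levels = [[], [], [], []]
    for ec in ec_numbers:
        for depth, prefix in enumerate(ec_levels(ec)):
            levels[depth].append(prefix)
    return levels

class_labels, subclass_labels, subsubclass_labels, full_labels = labels_per_level(
    ["1.1.1.1", "2.7.10.1", "2.7.11.1"]
)
```

Each label list can then be paired with the same embedding matrix to train one classifier per level; multifunctional enzymes with several EC numbers would need a multi-label extension of this scheme.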

Figure 1: Workflow for generating protein sequence embeddings and using them for EC number prediction.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Resources for Leveraging PLMs in Research

| Resource Name | Type | Function/Benefit | URL/Reference |

|---|---|---|---|

| ESM2 [24] | Pre-trained Model | Provides state-of-the-art sequence embeddings for protein sequences. | Hugging Face Hub: facebook/esm2_t*_* |

| TRILL [24] | Software Platform | Democratizes access to multiple PLMs (ESM2, Ankh, ProtT5) via a command-line interface, simplifying embedding generation. | https://github.com/raghvendra5688/crystallization_benchmark |

| Hugging Face Transformers | Python Library | The primary library for loading and using pre-trained transformer models, including ESM2 and ProtT5. | https://github.com/huggingface/transformers |

| LightGBM / XGBoost [24] | Machine Learning Library | High-performance gradient boosting frameworks that are highly effective for building classifiers on top of PLM embeddings. | https://github.com/Microsoft/LightGBM |

| ProteEC-CLA [5] | Specialized Predictor | An example of a state-of-the-art EC number predictor built using ESM2 embeddings, contrastive learning, and agent attention. | N/A |

| GraphEC [2] | Specialized Predictor | An example of a predictor that combines ESMFold-predicted structures with ProtTrans sequence embeddings for EC number prediction. | N/A |

Troubleshooting and Optimization Guidelines

- Low Predictive Performance:

- Potential Cause: The chosen PLM embeddings may not be optimal for your specific enzyme family or task.

- Solution: Benchmark multiple PLMs (see Table 1) on a validation set. Consider using a larger model (e.g., ESM2 3B instead of 150M) if computationally feasible. Fine-tuning the PLM on a related task can also improve performance.

- Long Computation Time:

- Potential Cause: Using very large models or processing extremely long sequences.

- Solution: Utilize a GPU for embedding generation. For long sequences, consider using a model with a longer context window or truncating sequences if biologically reasonable. Start with a smaller, faster model for prototyping.

- Handling Out-of-Distribution Sequences:

- Potential Cause: The PLM was not exposed to similar sequences during pre-training.

- Solution: Models like METL, which are pre-trained on biophysical simulation data, can offer an alternative or complementary approach to evolution-based models like ESM2, potentially improving generalization [25]. Ensemble methods combining multiple PLMs can also be robust.

Accurately predicting Enzyme Commission (EC) numbers is a fundamental challenge in bioinformatics, with significant implications for understanding disease mechanisms, identifying drug targets, and advancing synthetic biology [5] [18]. The EC number system provides a hierarchical classification (e.g., EC 2.7.10.1) that precisely defines an enzyme's catalytic function across four levels of specificity. However, experimental determination of enzyme function is complex, time-consuming, and resource-intensive, creating a substantial gap between the rapid accumulation of protein sequences and their functional annotation [26]. While traditional homology-based methods and emerging deep learning approaches have shown promise, they often struggle with data scarcity, class imbalance across thousands of EC categories, and an inherent inability to identify truly novel functions beyond their training distribution [18] [19]. Contrastive learning has emerged as a powerful framework to address these limitations by learning representations that map enzyme sequences with similar functions closer in embedding space while pushing dissimilar functions apart, thereby improving both prediction accuracy and generalization capability for enzyme function annotation.

Contrastive Learning Fundamentals for Biological Sequences

Contrastive learning is a machine learning paradigm that teaches models to recognize similarities and differences by contrasting positive and negative sample pairs [27] [28]. In biological contexts, this approach mimics how human experts compare sequences or structures to infer functional relationships. The core principle involves learning an embedding space where similar instances (positive pairs) are positioned close together while dissimilar instances (negative pairs) are separated [29]. For enzyme function prediction, this translates to mapping sequences with identical or similar EC numbers closer in the latent space while separating those with different functions.

Key Components of Contrastive Learning Frameworks:

- Anchor, Positive, and Negative Samples: The anchor is a reference data point, the positive sample shares the same functional class as the anchor, while the negative sample belongs to a different class [27].

- Encoder Network: Typically a deep neural network that maps input sequences to a latent representation space [28].

- Projection Head: A non-linear transformation that further refines representations for contrastive objectives [29].

- Loss Functions: Specialized functions that quantify similarity and guide the learning process [27].

Critical Loss Functions for Enzyme Function Prediction:

- InfoNCE (Noise-Contrastive Estimation): Maximizes agreement between positive samples while minimizing agreement with multiple negative samples [27] [28].

- Triplet Loss: Ensures the anchor is closer to positive samples than to negative samples by a defined margin [27].

- N-Pair Loss: Extends triplet loss to consider multiple negative samples simultaneously for more stable training [27].

- Contrastive Loss: A margin-based loss that directly penalizes positive pairs that are distant and negative pairs that are close in embedding space [28].
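Two of these losses can be written out in a few lines for a single anchor. The sketch below uses plain Python lists as embeddings and follows the standard textbook formulations; the margin and temperature defaults are illustrative assumptions, not values taken from ProteEC-CLA or CLAIRE.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))

def triplet_loss(anchor, positive, negative, margin=1.0):
    """max(0, d(anchor, positive) - d(anchor, negative) + margin), Euclidean d."""
    return max(0.0, math.dist(anchor, positive) - math.dist(anchor, negative) + margin)

def info_nce(anchor, positive, negatives, temperature=0.1):
    """InfoNCE for one anchor: -log of the softmax weight given to the positive."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))

# Toy 2-D embeddings: the positive is aligned with the anchor, the negative opposes it
anchor, positive, negative = [1.0, 0.0], [0.9, 0.1], [-1.0, 0.0]

well_separated = info_nce(anchor, positive, [negative])
poorly_separated = info_nce(anchor, negative, [positive])
violation = triplet_loss([0.0, 0.0], [0.0, 3.0], [0.0, 1.0])  # positive farther than negative
```

In practice these losses are averaged over a batch and computed with an autodiff framework; the scalar versions here only show what each term rewards and penalizes.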

Table 1: Contrastive Loss Functions for Enzyme Function Prediction

| Loss Function | Key Mechanism | Advantages | Typical Applications |

|---|---|---|---|

| InfoNCE | Contrasts against multiple negative samples | Excellent for multi-class scenarios | ProteEC-CLA [5], CLAIRE [18] |

| Triplet Loss | Uses anchor-positive-negative triplets | Effective with carefully selected hard negatives | Fine-grained functional discrimination |

| N-Pair Loss | Multiple positive and negative pairs | Captures nuanced relationships | Multi-label enzyme functions |

| Contrastive Loss | Margin-based separation | Simple implementation | Binary similarity learning |

Implementation Protocols for EC Number Prediction

Protocol 1: Sequence-Based Contrastive Learning with ProteEC-CLA

ProteEC-CLA demonstrates how contrastive learning can be applied directly to protein sequences for EC number prediction by combining contrastive learning with agent attention mechanisms [5].

Experimental Workflow:

Step-by-Step Methodology:

- Input Representation: Convert raw protein sequences into numerical embeddings using the pre-trained ESM-2 language model, which captures evolutionary patterns and biochemical properties [5].

- Contrastive Sample Selection: Construct positive and negative pairs based on EC number hierarchy. Sequences sharing identical EC numbers at the target level form positive pairs, while sequences with different EC numbers form negative pairs.

- Feature Enhancement: Process embeddings through agent attention mechanisms to capture both local details and global features critical for functional discrimination [5].

- Contrastive Optimization: Apply contrastive loss (typically InfoNCE variant) to maximize agreement between positive pairs and minimize agreement between negative pairs in the embedding space.

- Hierarchical Classification: Implement multi-level classifiers that leverage the learned representations to predict EC numbers across all four hierarchical levels.
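The contrastive sample selection step can be sketched as pair construction over labeled sequences. The identifiers, the `(protein_id, ec_number)` layout, and the exhaustive pairing are illustrative assumptions; the sampling strategy actually used by ProteEC-CLA is not detailed here, and each protein is assumed to carry a single EC number.

```python
from itertools import combinations

def ec_prefix(ec_number, level):
    """Return the first `level` fields of an EC number, e.g. level 2 of '2.7.10.1' is '2.7'."""
    return ".".join(ec_number.split(".")[:level])

def build_pairs(labeled, level=4):
    """Split all sequence pairs into positives (same EC prefix at `level`) and negatives."""
    positives, negatives = [], []
    for (id_a, ec_a), (id_b, ec_b) in combinations(labeled, 2):
        same = ec_prefix(ec_a, level) == ec_prefix(ec_b, level)
        (positives if same else negatives).append((id_a, id_b))
    return positives, negatives

# Hypothetical labeled set
labeled = [("P1", "2.7.10.1"), ("P2", "2.7.10.1"), ("P3", "2.7.11.1"), ("P4", "1.1.1.1")]

pos4, neg4 = build_pairs(labeled, level=4)  # strict: full EC number must match
pos2, neg2 = build_pairs(labeled, level=2)  # looser: subclass match is enough
```

Enumerating every pair grows quadratically with dataset size, so real training loops usually sample pairs per batch and often favor hard negatives that share the upper EC levels with the anchor.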

Key Advantages: This approach achieves 98.92% accuracy at the EC4 level on standard benchmarks and 93.34% accuracy on more challenging clustered split datasets, demonstrating robust performance even for enzymes with distant evolutionary relationships [5].

Protocol 2: Multi-Modal Contrastive Learning with MAPred

MAPred introduces a multi-modal approach that integrates both sequence and structural information through an autoregressive prediction network, addressing limitations of sequence-only methods [26].

Experimental Workflow:

Step-by-Step Methodology:

- Multi-Modal Input Encoding: Generate both sequence embeddings (using ESM) and structural tokens (3Di sequences from ProstT5) from the primary amino acid sequence [26].

- Cross-Attention Fusion: Employ interlaced sequence-to-3Di cross-attention mechanisms to integrate structural and sequence information bidirectionally.

- Multi-Scale Feature Extraction: Implement parallel global and local feature extraction pathways, with CNN-based architectures capturing conserved functional sites [26].

- Autoregressive Prediction: Decompose EC number prediction into a sequential process that first predicts the first digit, then uses this prediction as context for subsequent digits, respecting the intrinsic hierarchy of the EC classification system [26].
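The autoregressive step can be illustrated with a toy decoder. The sketch below assumes a hypothetical scorer that returns a probability distribution over the next EC field given the fields decoded so far, and it uses greedy decoding; the source does not specify MAPred's decoding strategy, so both are assumptions made for illustration.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical scorer type: maps the EC prefix decoded so far to a
# probability distribution over the next field.
NextFieldScorer = Callable[[Tuple[str, ...]], Dict[str, float]]

def greedy_decode_ec(score_next: NextFieldScorer, depth: int = 4) -> Tuple[str, float]:
    """Decode an EC number field by field, conditioning each step on the prefix."""
    prefix: List[str] = []
    joint = 1.0
    for _ in range(depth):
        probs = score_next(tuple(prefix))
        field, p = max(probs.items(), key=lambda kv: kv[1])
        prefix.append(field)
        joint *= p  # chain rule: P(d1) * P(d2 | d1) * ...
    return ".".join(prefix), joint

# Toy conditional table standing in for a trained network (illustrative values).
TOY_TABLE = {
    (): {"1": 0.7, "2": 0.3},
    ("1",): {"1": 0.6, "2": 0.4},
    ("1", "1"): {"1": 0.9, "3": 0.1},
    ("1", "1", "1"): {"1": 0.8, "27": 0.2},
}

def toy_scorer(prefix: Tuple[str, ...]) -> Dict[str, float]:
    return TOY_TABLE[prefix]
```

Calling `greedy_decode_ec(toy_scorer)` returns the decoded EC string together with the product of the per-step probabilities, which can serve as a rough confidence score.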

Performance Characteristics: This approach demonstrates state-of-the-art performance on challenging benchmark datasets including New-392, Price, and New-815, particularly for enzymes with limited sequence homology but conserved structural features [26].

Protocol 3: Structure-Aware Contrastive Learning with TopEC

TopEC addresses scenarios where 3D structural information is available, leveraging graph neural networks to incorporate spatial relationships directly into the contrastive learning framework [10].

Experimental Workflow:

- Structure Representation: Convert enzyme structures into graph representations at either residue (Cα atoms) or atomic (heavy atoms) resolution [10].

- Localized Descriptor Extraction: Focus on binding site regions using experimental evidence, homology annotation, or P2Rank predictions to create localized 3D descriptors.

- 3D Graph Neural Networks: Apply message-passing networks (SchNet for distances, DimeNet++ for distances and angles) to capture spatial and chemical interactions.

- Contrastive Objective: Optimize representations such that enzymes with similar functions cluster in 3D-aware embedding space regardless of overall fold similarity.
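The structure-representation and localization steps can be sketched with plain NumPy. This is an illustration under assumptions, not TopEC's code: the cutoff, the binding-site radius, the number of radial basis functions, and the function names are all hypothetical, and the coordinates are assumed to be Cα positions in Å.

```python
import numpy as np

def localize(coords, site_center, radius=12.0):
    """Indices of residues whose Cα lies within `radius` Å of the binding-site center."""
    return np.nonzero(np.linalg.norm(coords - site_center, axis=1) <= radius)[0]

def radius_graph(coords, cutoff=10.0):
    """Directed edges between all residue pairs closer than `cutoff` Å (no self-loops)."""
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    adjacency = (dist < cutoff) & ~np.eye(len(coords), dtype=bool)
    src, dst = np.nonzero(adjacency)
    return np.stack([src, dst]), dist[src, dst]

def gaussian_rbf(dist, cutoff=10.0, n_centers=16, gamma=10.0):
    """Expand each edge length into Gaussian radial basis features (one row per edge)."""
    centers = np.linspace(0.0, cutoff, n_centers)
    return np.exp(-gamma * (dist[:, None] - centers[None, :]) ** 2)
```

Expanding distances on a set of Gaussian centers is a common way to feed edge lengths to distance-based message-passing networks; the resulting edge features depend only on interatomic distances and are therefore unchanged by rotating or translating the structure.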

Performance Metrics: TopEC achieves an F-score of 0.72 for EC classification, significantly outperforming regular 2D graph neural networks and demonstrating particular strength in identifying similar functions across distinct structural folds [10].

Table 2: Performance Comparison of Contrastive Learning Frameworks for EC Prediction

| Framework | Input Modality | Key Innovation | Reported Performance | Dataset |

|---|---|---|---|---|

| ProteEC-CLA [5] | Sequence | Agent Attention + Contrastive Learning | 98.92% accuracy (EC4); 93.34% accuracy (clustered split) | Standard benchmark |

| CLAIRE [18] | Chemical Reactions | Contrastive Learning + Data Augmentation | F1: 0.861 (test set); F1: 0.911 (yeast metabolism) | ECREACT (n=61,817) |

| MAPred [26] | Sequence + Structure | Multi-modal + Autoregressive Prediction | State-of-the-art on New-392, Price, New-815 | Multiple benchmarks |

| TopEC [10] | 3D Structure | Localized 3D Descriptors + GNNs | F-score: 0.72 | PDB300 + TopEnzyme |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Contrastive Learning in Enzyme Informatics

| Tool/Resource | Type | Function | Application Context |

|---|---|---|---|

| ESM-2 [5] [26] | Pre-trained Language Model | Protein sequence embedding | General-purpose sequence representation |

| ProstT5 [26] | Protein Language Model | Translates amino acid sequences into 3Di structural tokens | Structural feature extraction |

| DRFP [18] | Reaction Fingerprint | Reaction representation | Chemical reaction encoding |

| RxnFP [18] | Pre-trained Model | Reaction embeddings | Reaction property prediction |

| SchNet [10] | Graph Neural Network | 3D distance-based learning | Spatial relationship modeling |

| DimeNet++ [10] | Graph Neural Network | Distance and angle learning | Geometric feature extraction |

| UniProt [21] [19] | Database | Annotated enzyme sequences | Training data and benchmarking |

| Rhea [18] | Database | Enzyme-reaction mappings | Reaction-EC relationship training |

Validation and Best Practices

Experimental Validation Protocols

Rigorous validation is essential for reliable enzyme function prediction. Recommended protocols include:

Computational Validation:

- Fold Split Evaluation: Cluster datasets at 30% sequence identity to remove fold bias and ensure generalization to structurally diverse enzymes [10].

- Temporal Split Validation: Split data chronologically to simulate real-world scenarios where models predict functions for newly discovered enzymes [10].

- Cross-Family Validation: Evaluate performance across diverse enzyme families to detect over-specialization to particular protein folds.
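The first two split strategies can be sketched as follows. The code assumes that cluster assignments at the chosen identity threshold have already been computed by an external clustering tool and that each sequence carries a database entry date; the function names and the test fraction are illustrative assumptions.

```python
import random
from datetime import date

def cluster_split(cluster_ids, test_fraction=0.2, seed=0):
    """Hold out whole clusters so no test sequence shares a cluster with training data."""
    clusters = sorted(set(cluster_ids))
    rng = random.Random(seed)
    rng.shuffle(clusters)
    n_test = max(1, round(len(clusters) * test_fraction))
    test_clusters = set(clusters[:n_test])
    train = [i for i, c in enumerate(cluster_ids) if c not in test_clusters]
    test = [i for i, c in enumerate(cluster_ids) if c in test_clusters]
    return train, test

def temporal_split(dates, cutoff):
    """Train on entries deposited before `cutoff`; test on entries from `cutoff` onward."""
    train = [i for i, d in enumerate(dates) if d < cutoff]
    test = [i for i, d in enumerate(dates) if d >= cutoff]
    return train, test
```

Splitting by cluster rather than by individual sequence is what prevents near-identical proteins from appearing on both sides of the split.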

Experimental Validation:

- In Vitro Assays: Express and purify predicted enzymes, then measure catalytic activity against hypothesized substrates [21].

- Kinetic Characterization: Determine Michaelis-Menten parameters (Km, kcat) to quantify catalytic efficiency and compare with known enzymes in the same EC class.

- Negative Controls: Include enzymes known not to perform the predicted function to test specificity claims.
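For reference, the kinetic parameters mentioned above are related by the standard Michaelis-Menten equations, where $v_0$ is the initial rate, $[S]$ the substrate concentration, and $[E]_0$ the total enzyme concentration:

```latex
\begin{align}
v_0 &= \frac{V_{\max}\,[S]}{K_m + [S]}, &
V_{\max} &= k_{cat}\,[E]_0, &
\text{catalytic efficiency} &= \frac{k_{cat}}{K_m}.
\end{align}
```

At $[S] = K_m$ the rate is $v_0 = V_{\max}/2$, which is why $K_m$ is read off as the substrate concentration giving half-maximal velocity.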

Critical Implementation Considerations

Data Quality and Curation:

- Address Database Errors: Check predictions against existing annotations before reporting them as novel. In some studies, approximately 30% of predictions presented as novel were already present in databases or contained biologically implausible repetitions [19].

- Combat Class Imbalance: Utilize focal loss penalties or specialized sampling strategies to address extreme imbalance across EC categories [11].

- Data Augmentation: For reaction-based prediction, shuffle participant order within reactants and products to increase robustness [18].
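As an illustration of the class-imbalance point, the sketch below implements a multi-class focal loss in NumPy. The default `gamma` and the optional per-class `alpha` weights are assumptions for illustration; the source does not state which values the cited work uses.

```python
import numpy as np

def focal_loss(probs, targets, gamma=2.0, alpha=None):
    """Multi-class focal loss.

    probs:   (n, C) array of predicted class probabilities.
    targets: (n,) array of integer class labels.
    gamma:   focusing parameter; gamma=0 recovers standard cross-entropy.
    alpha:   optional length-C array of per-class weights.
    """
    p_t = np.clip(probs[np.arange(len(targets)), targets], 1e-12, 1.0)
    weights = (1.0 - p_t) ** gamma  # down-weight well-classified examples
    if alpha is not None:
        weights = weights * np.asarray(alpha)[targets]
    return float(np.mean(-weights * np.log(p_t)))
```

Because the factor `(1 - p_t) ** gamma` shrinks toward zero for confidently correct predictions, abundant and easy EC classes contribute less to the gradient than rare or hard ones.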

Biological Context Integration:

- Evolutionary Context: Account for gene duplication and functional diversification events that create structural similarities without functional conservation [19].

- Cellular Context: Consider metabolic pathways, gene neighborhood, and organism-specific biochemistry to validate predictions [19].

- Multi-Modal Evidence: Integrate structural, sequence, and contextual evidence rather than relying on any single information type [26] [10].

Contrastive learning frameworks map enzyme sequences to functional similarity by learning representations that explicitly encode functional relationships. This lets them go beyond traditional homology-based approaches and address the challenges of data scarcity and class imbalance. Architectures that integrate sequence, structure, and reaction information through agent attention, cross-modal fusion, and graph neural networks have demonstrated significant improvements in prediction accuracy and generalization. As predicted structures from tools such as AlphaFold and ESMFold become more widely available, these frameworks should become more useful for annotating the vast landscape of uncharacterized enzymes, accelerating discovery in biotechnology, drug development, and fundamental biological research.

The accurate prediction of Enzyme Commission (EC) numbers is a fundamental challenge in computational biology, with significant implications for understanding cellular metabolism, drug discovery, and synthetic biology. Traditional prediction methods have primarily relied on protein sequence homology, often overlooking the critical three-dimensional structural information that directly determines enzyme function and catalytic activity. The emergence of geometric graph learning represents a paradigm shift in the field, enabling researchers to directly leverage protein structural data for highly accurate function annotation. This approach is particularly powerful for annotating enzymes with limited sequence homology to characterized proteins, thereby expanding the functional space of predictable enzymes.

Tools such as GraphEC exemplify this structure-aware approach by integrating predicted protein structures with advanced neural network architectures to achieve state-of-the-art prediction performance. These methods recognize that enzyme active sites—typically located on the protein surface and responsible for catalyzing reactions—exhibit high evolutionary conservation and are more reliably identified through structural analysis than sequence alignment alone. By focusing on the spatial arrangement of atoms and residues, geometric graph learning captures the physical and chemical constraints that govern enzymatic function, leading to more biologically meaningful predictions.

This protocol details the implementation, application, and validation of structure-aware EC number prediction methods, with specific emphasis on GraphEC. It provides researchers with comprehensive guidance for utilizing these advanced computational techniques, along with performance benchmarks against alternative approaches and practical considerations for experimental design.

Performance Comparison of EC Number Prediction Tools

Table 1: Comparative performance of EC number prediction tools across independent test sets

| Method | Approach | Key Features | Test Set | Performance Metrics |

|---|---|---|---|---|

| GraphEC [30] [31] | Geometric graph learning | ESMFold-predicted structures, active site prediction, ProtTrans embeddings, label diffusion | NEW-392; Price-149 | Outperformed competing methods on both test sets |

| TopEC [10] | 3D graph neural network | Localized 3D descriptor, message-passing networks (SchNet, DimeNet++), binding site focus | Fold-split dataset | F-score: 0.72 |

| CLEAN [32] | Contrastive learning | Protein sequence embeddings, contrastive learning framework | Benchmark tests | High accuracy, predicts promiscuous activity |

| DeepEC [33] | Convolutional Neural Networks (CNNs) | Three specialized CNNs, homology analysis fallback | Benchmark tests | High precision, high-throughput |

| HDMLF [16] | Hierarchical dual-core multitask learning | Protein language model embedding, GRU framework, attention mechanism | Testset20 & Testset22 | Accuracy improved by 60%, F1 by 40% over previous state-of-the-art |

| BEC-Pred [6] | Transformer-based model | Uses reaction SMILES (substrates/products), transfer learning | Reaction dataset | Accuracy: 91.6% |

Table 2: GraphEC-AS active site prediction performance on the TS124 independent test

| Method | AUC | MCC | Recall | Precision | F1 Score |

|---|---|---|---|---|---|

| GraphEC-AS [30] | 0.9583 | 0.4145 | 0.7126 | 0.2336 | 0.4698 |

| PREvaIL_RF [30] | - | 0.2939 | 0.6223 | 0.1487 | 0.2400 |

| BiLSTM (without structural info) [30] | - | - | - | - | Performance lower than GraphEC-AS |

Application Notes

Advantages of Structure-Aware Approaches

Structure-aware prediction methods offer several distinct advantages over traditional sequence-based approaches. GraphEC applies geometric graph learning to ESMFold-predicted structures, augmented by embeddings from a pre-trained protein language model (ProtTrans). It first predicts enzyme active sites (GraphEC-AS) and then uses them to guide EC number prediction. This active-site-first approach is biologically intuitive because these regions are highly conserved and directly determine function [30]. GraphEC-AS achieves an AUC of 0.9583 on the TS124 independent test and outperforms PREvaIL_RF on MCC, recall, precision, and F1 score (Table 2) [30]. Visualization of the learned embeddings shows that GraphEC-AS clearly separates active sites from non-active sites in the structural space, a distinction not achievable with sequence-only methods [30].

The TopEC framework employs 3D graph neural networks with localized 3D descriptors based on enzyme binding sites. By using message-passing networks (SchNet, DimeNet++) that incorporate distance and angle information, TopEC achieves an F-score of 0.72 on a fold-split dataset, significantly outperforming regular 2D graph neural networks [10]. This approach is robust to uncertainties in binding site locations and can recognize similar functions occurring in distinct structural binding sites. The model learns from an interplay between biochemical features and local shape-dependent features, capturing subtle structural determinants of function that evade sequence-based detection [10].
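Distances and angles are attractive inputs because they do not change when a structure is rotated or translated. The sketch below computes the angle at a central node between two neighbors and is intended only to illustrate the kind of geometric quantity that angle-aware message passing consumes; it is not DimeNet++ itself, and the function name is a hypothetical helper.

```python
import numpy as np

def triplet_angle(coords, i, j, k):
    """Angle in radians at node j between the directions j->i and j->k."""
    a = coords[i] - coords[j]
    b = coords[k] - coords[j]
    cos = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))
    return float(np.arccos(np.clip(cos, -1.0, 1.0)))

# Three points forming a right angle at the middle node.
coords = np.array([[1.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 1.0, 0.0]])

# Rotate by 30 degrees about z and translate: the angle should be unchanged.
theta = np.pi / 6
rotation = np.array([
    [np.cos(theta), -np.sin(theta), 0.0],
    [np.sin(theta),  np.cos(theta), 0.0],
    [0.0,            0.0,           1.0],
])
moved = coords @ rotation.T + np.array([5.0, -2.0, 1.0])
```

Comparing `triplet_angle(coords, 0, 1, 2)` with `triplet_angle(moved, 0, 1, 2)` shows the invariance directly.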

Limitations and Considerations

Despite their strong performance, structure-aware methods have limitations. Predicting and processing protein structures requires substantial computational resources, although ESMFold runs up to 60 times faster than AlphaFold2 at inference [30]. The quality of the predicted structures also directly affects results: GraphEC's performance improves as the TM-score of the ESMFold-predicted structure increases [30].

These methods also depend on training data quality and coverage. While structure-based models are less affected by sequence bias, they may still struggle with enzyme classes underrepresented in structural databases. Furthermore, the interpretation of complex geometric graph learning models can be challenging, requiring additional validation to build biological trust in the predictions [32].

Experimental Protocols

Protocol 1: EC Number Prediction Using GraphEC

Objective: Predict EC numbers for a set of protein sequences using the GraphEC framework.

Materials:

- Computing Environment: Linux system with NVIDIA GPU (≥8GB memory recommended)