How Secondary Structures Hinder PCR Efficiency: Mechanisms, Solutions, and Modern Validation Strategies


Abstract

This article provides a comprehensive analysis of how DNA secondary structures such as hairpins, G-quadruplexes, and stable GC-rich domains negatively impact Polymerase Chain Reaction (PCR) efficiency. Tailored for researchers and drug development professionals, we explore the foundational mechanisms of PCR failure, present established and novel methodological workarounds, detail systematic troubleshooting protocols, and discuss advanced validation techniques using real-time PCR and deep learning. By synthesizing foundational knowledge with cutting-edge research, this guide serves as a critical resource for optimizing assays, ensuring accurate gene quantification, and advancing molecular diagnostics and synthetic biology applications.

The Hidden Hurdles: Understanding How DNA Secondary Structures Disrupt PCR

Within the context of gene regulation and polymerase chain reaction (PCR) efficiency, the secondary structures of nucleic acids—specifically hairpins and G-quadruplexes (G4s)—present both intriguing regulatory mechanisms and significant technical challenges. These stable, non-B DNA structures can form transiently or constitutively in genomic sequences, influencing fundamental cellular processes including gene transcription and replication. For researchers aiming to amplify or manipulate genetic sequences, these structures can act as formidable physical barriers, impeding polymerase progression and leading to assay failure, non-specific amplification, or biased results. A comprehensive understanding of their formation principles, structural characteristics, and experimental handling is therefore paramount for the design of robust and reproducible genetic experiments in drug development and basic research.

Structural Characteristics and Formation Principles

Hairpin Structures

Hairpins, also known as stem-loop structures, are among the most common secondary structures in nucleic acids. They form when a single-stranded DNA or RNA molecule folds back on itself, creating a double-stranded stem of complementary base pairs and a single-stranded loop. The formation is driven by Watson-Crick base pairing, and stability is primarily influenced by the length and GC content of the stem, as well as the size of the loop. In PCR and other enzymatic assays, hairpins within primers or templates can prevent efficient annealing and extension, particularly when the 3' end of a primer is involved in the stem structure, rendering it unavailable for polymerase extension [1].
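As a rough illustration of the 3'-end problem described above, the following sketch scans a primer for perfect inverted repeats that could pair into a stem. It is a minimal screen under stated assumptions: the function names, the 4 bp minimum stem, and the 3 nt minimum loop are illustrative choices, and the code ignores mismatches, wobble pairs, and thermodynamics, so it does not replace a proper folding tool.

```python
def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA sequence (A/C/G/T only)."""
    comp = {"A": "T", "C": "G", "G": "C", "T": "A"}
    return "".join(comp[base] for base in reversed(seq.upper()))


def find_hairpin_stems(seq: str, min_stem: int = 4, min_loop: int = 3):
    """Find perfect-match stems of exactly `min_stem` bases.

    A stem is reported when a k-mer and its reverse complement both occur
    in the sequence, separated by at least `min_loop` bases.
    Returns (left_start, right_start, stem_length) tuples, 0-based.
    """
    seq = seq.upper()
    hits = []
    for i in range(len(seq) - 2 * min_stem - min_loop + 1):
        target = reverse_complement(seq[i:i + min_stem])
        # The right arm must start after the left arm plus the loop.
        j = seq.find(target, i + min_stem + min_loop)
        while j != -1:
            hits.append((i, j, min_stem))
            j = seq.find(target, j + 1)
    return hits


def three_prime_in_stem(seq: str, min_stem: int = 4, min_loop: int = 3) -> bool:
    """True if any detected stem's right arm includes the final (3') base."""
    n = len(seq)
    return any(j + k == n
               for _, j, k in find_hairpin_stems(seq, min_stem, min_loop))


if __name__ == "__main__":
    primer = "GGGCAAAAGCCC"  # hypothetical sequence: GGGC pairs with GCCC
    print(find_hairpin_stems(primer))
    print(three_prime_in_stem(primer))
```

A primer flagged by `three_prime_in_stem` is a candidate for redesign, since its 3' end may be sequestered in the stem rather than free for extension.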

G-Quadruplexes (G4s)

G-quadruplexes are higher-order DNA or RNA structures formed in guanine-rich sequences. Their core unit is the G-quartet, a planar array of four guanines held together by Hoogsteen hydrogen bonding. Stacking of multiple G-quartets leads to the formation of a stable G-quadruplex, with the intervening nucleotide sequences forming loops of varying lengths and configurations [2]. The stability and conformational polymorphism of G4 DNA are governed by the length, composition, and structure of these loops [2]. Conventional G4s contain loops of 1–7 nucleotides, but increasing evidence highlights the biological significance of unconventional G4s with long loops, which can be stabilized by the formation of nested secondary structures like hairpins within these loops [2].
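The conventional motif with 1–7 nt loops can be searched for with a simple regular expression. The sketch below assumes the widely used pattern of four runs of at least three guanines separated by three short loops; the function name is illustrative, only the strand supplied is scanned (a C-rich match on the complementary strand would need the reverse complement), and a sequence match indicates folding potential, not proof of a folded G4.

```python
import re

# Four G-runs (>= 3 G each) separated by three loops of 1-7 nucleotides.
G4_PATTERN = re.compile(r"G{3,}(?:[ACGT]{1,7}?G{3,}){3}")


def find_canonical_g4(seq: str):
    """Return (start, end, matched_sequence) for each non-overlapping
    canonical G4 motif on the given strand (0-based, end exclusive)."""
    seq = seq.upper()
    return [(m.start(), m.end(), m.group()) for m in G4_PATTERN.finditer(seq)]


if __name__ == "__main__":
    # Human telomeric repeat, a well-known G4-forming sequence.
    print(find_canonical_g4("TTAGGGTTAGGGTTAGGGTTAGGGTTA"))
```

Long-loop and hairpin-G4 candidates would require relaxing the loop bound, which also increases the number of false positives.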

Hybrid and Transition Structures: Hairpin-G4

The boundary between hairpins and G-quadruplexes is not always distinct. A single G-rich sequence can adopt multiple stable conformations. A prominent example is the conformational transition between a hairpin and a G-quadruplex, as identified in the promoter region of the WNT1 gene [3]. The native G-rich sequence (WT22) from the WNT1 promoter was shown to form both hairpin and G-quadruplex topologies. The potassium ion-induced transition from the hairpin to the G4 structure was found to be remarkably slow, occurring on a time scale of about 4800 seconds, underscoring the complex kinetic landscape that can govern these structural interconversions [3]. Furthermore, so-called hairpin-G4s—G-quadruplexes where a long loop folds into a stable hairpin—have been systematically studied and found to be more stable than G4s with unstructured long loops [2]. This synergy between structures expands the functional and structural diversity of non-B DNA conformations in the genome.

Impact on PCR Efficiency and Gene Expression

The formation of secondary structures in template DNA or primers directly challenges PCR efficiency. These structures act as physical barriers, causing polymerase pausing, stalling, or premature dissociation. This can result in truncated products, reduced yield, or complete amplification failure. The issue is particularly acute with GC-rich templates, which have a high propensity to form both stable hairpins and G-quadruplexes [4].

- Hairpin-Induced Problems: Hairpins within a primer, especially near its 3' end, can prevent proper annealing to the template. If a hairpin forms in the template within the amplicon, it can slow down or halt polymerase progression during extension [1].

- G-Quadruplex-Induced Problems: G4 structures are exceptionally stable physical barriers. High-throughput G4 sequencing (G4-Seq) is based on the principle that G4s cause polymerase pause sites [2]. During PCR, this pausing can lead to incomplete synthesis of the target strand.

Beyond their role as technical obstacles in PCR, these structures are recognized as key regulatory elements in biology. Hairpin-G4s identified in promoter regions have been shown to form stable structures and regulate gene expression, as confirmed by in-cell reporter assays [2]. The ability of a single sequence to interconvert between a hairpin and a G-quadruplex, as seen in the WNT1 promoter, provides a potential mechanism for ligand-mediated or condition-dependent gene modulation [3].

Quantitative Data on Structure Stability

The stability of secondary structures is quantifiable through various biophysical parameters, which helps researchers predict their potential impact on experimental outcomes. The following table summarizes key stability metrics for hairpins and G-quadruplexes based on current research.

Table 1: Quantitative Stability Metrics for Hairpins and G-Quadruplexes

| Structure Type | Key Stability Factor | Typical Stable Range | Measurement Technique | Reported Stability Data |
|---|---|---|---|---|
| PCR Primer | Melting Temp (Tm) | 50–72°C | Spectrophotometry / Calculator | Primer pairs should have Tms within 5°C of each other [1]. |
| PCR Primer | GC Content | 40–60% | Sequence Analysis | Prevents secondary structures; high GC increases stability [4] [1]. |
| Hairpin Loop | Loop Size | Variable | NMR, CD Spectroscopy | Smaller loops generally increase hairpin stability. |
| Conventional G4 | Loop Length | 1–7 nt | Thermal Denaturation, CD, NMR | Defined as canonical; widely studied and predicted [2]. |
| Long-Loop G4 | Loop Length | >10 nt (up to 20+ nt) | G4-Seq, Thermal Denaturation | Less stable than conventional G4s, but stability increases with internal hairpin [2]. |
| Hairpin-G4 | Thermal Stability | Variable ΔTm | CD Melting Curve | More stable than long-loop G4s with unstructured loops [2]. |
| Kinetic Transition | Hairpin-to-G4 | ~4800 s | NMR Kinetics | Slow conformational transition observed in WNT1 promoter sequence [3]. |

Experimental Protocols for Structural Analysis

Circular Dichroism (CD) Spectroscopy for G-Quadruplex Topology

CD spectroscopy is a primary technique for characterizing the secondary structure and thermal stability of G-quadruplexes in solution.

- Sample Preparation: Dissolve the oligonucleotide in an appropriate buffer (e.g., 10 mM lithium cacodylate, pH 7.0) with or without stabilizing cations (e.g., 100 mM KCl). Anneal the sample by heating to 95°C for 5–10 minutes and then allow it to cool slowly to room temperature.

- Data Acquisition: Acquire CD spectra over a wavelength range (e.g., 220–320 nm) at a constant temperature. For thermal stability analysis, monitor the CD signal at a specific wavelength (e.g., 264 nm for parallel G4s) while increasing the temperature at a controlled rate (e.g., 1°C per minute). The melting temperature (Tm) is determined as the point of the steepest transition in the melting curve.

- Interpretation: Parallel G-quadruplexes typically show a positive peak at ~264 nm and a negative peak at ~240 nm. The Tm value provides a comparative measure of structural stability [2] [3] [5].
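The Tm definition used above (the point of steepest transition in the melting curve) can be sketched as a finite-difference calculation. This is a minimal example assuming clean, monotonically sampled data; the function name and the synthetic sigmoid curve are illustrative, and real CD traces would normally be smoothed or fitted before taking a derivative.

```python
import math


def melting_temperature(temps, signal):
    """Estimate Tm as the midpoint of the sampling interval with the
    largest absolute slope |d(signal)/dT|."""
    if len(temps) != len(signal) or len(temps) < 2:
        raise ValueError("need two equal-length series with >= 2 points")
    best_slope, tm = -1.0, None
    for k in range(len(temps) - 1):
        slope = abs((signal[k + 1] - signal[k]) / (temps[k + 1] - temps[k]))
        if slope > best_slope:
            best_slope = slope
            tm = (temps[k] + temps[k + 1]) / 2
    return tm


if __name__ == "__main__":
    # Synthetic two-state unfolding curve centered at 60 degrees C.
    temps = list(range(21, 100, 2))
    signal = [1 / (1 + math.exp((t - 60) / 3)) for t in temps]
    print(melting_temperature(temps, signal))
```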

Nuclear Magnetic Resonance (NMR) Spectroscopy for High-Resolution Structure

NMR is used for determining the atomic-resolution structure and for characterizing conformational dynamics, such as the hairpin-to-G4 transition.

- Sample Preparation: Prepare a relatively concentrated DNA sample (e.g., 0.5–2 mM) in a buffer, often in D2O or a 90% H2O/10% D2O mixture. Site-specific isotope labeling (e.g., with 15N or 13C) can be employed to resolve complex spectra.

- Data Acquisition: Collect a series of one-dimensional (1D) and two-dimensional (2D) NMR spectra (e.g., NOESY, TOCSY, COSY) at various temperatures. Non-exchangeable proton assignments are made in D2O, while imino proton assignments, crucial for observing G-quartet formation, are made in H2O-based buffers.

- Interpretation: Imino proton signals in the 10–12 ppm region are characteristic of Hoogsteen hydrogen bonds in G-quartets. NOE cross-peaks provide distance restraints for calculating the 3D structure. Kinetic studies of structural transitions can be performed by time-resolved NMR after initiating the change (e.g., by adding K+ ions) [3] [5].

Polymerase Stop Assay for Functional Impact

The polymerase stop assay directly evaluates the ability of a secondary structure to halt polymerase elongation, modeling its impact on processes like PCR or replication.

- Reaction Setup: An end-labeled DNA template containing the potential structure-forming sequence is incubated with a DNA polymerase (e.g., Taq polymerase) under conditions that favor structure formation (e.g., with KCl for G4s).

- Gel Electrophoresis: The reaction products are separated on a denaturing polyacrylamide gel. A sequencing ladder run in parallel helps identify the precise stop site.

- Interpretation: A strong pause or stop signal immediately upstream or within the structure-forming sequence indicates an impediment to polymerase progression. The intensity of the stop band correlates with the stability and potency of the secondary structure [2].

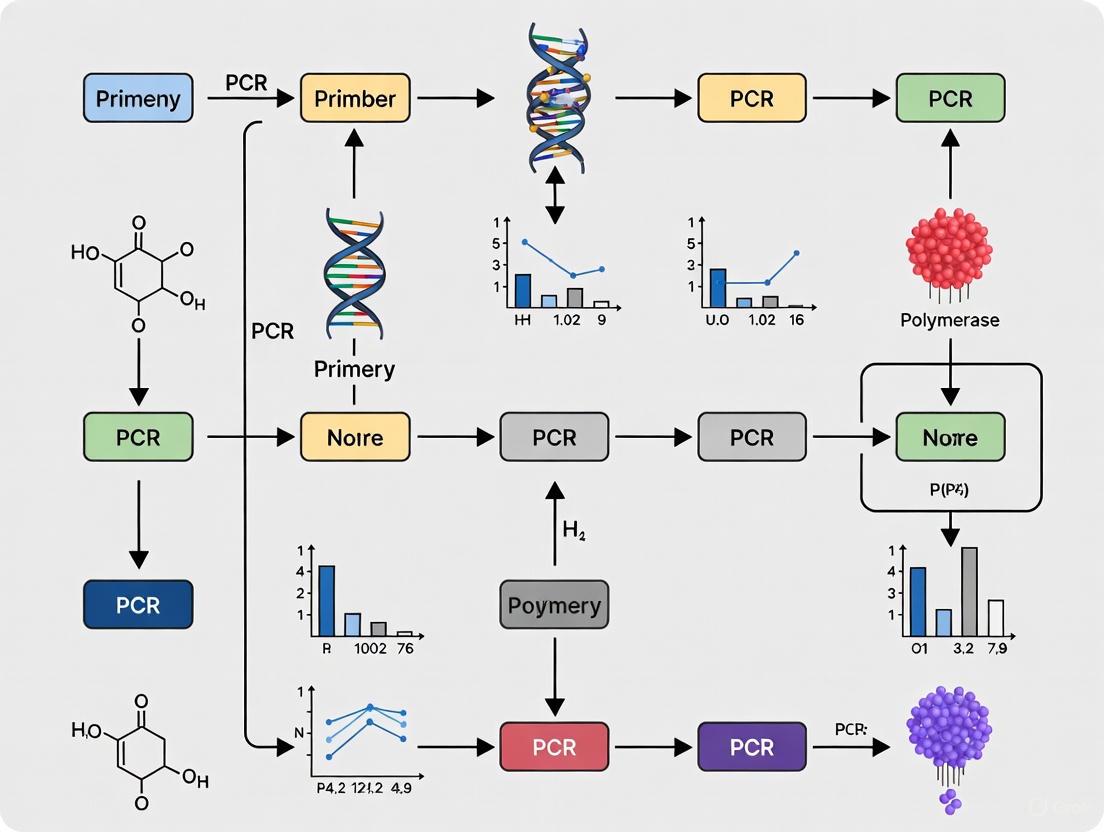

Experimental Workflow for Secondary Structure Analysis

The following diagram illustrates a generalized workflow for characterizing a problematic DNA sequence, integrating the techniques described above.

The Scientist's Toolkit: Research Reagent Solutions

Successfully navigating research involving secondary structures requires a suite of specialized reagents and tools. The table below details key solutions for analyzing and mitigating their effects.

Table 2: Essential Research Reagents and Tools for Secondary Structure Studies

| Reagent / Tool | Function / Purpose | Application Notes |
|---|---|---|
| DMSO (Dimethyl Sulfoxide) | A chemical additive that disrupts secondary structures, particularly effective for GC-rich and G-quadruplex sequences. | Typically used at 5–10% concentration in PCR to improve yield from structured templates [4]. |
| Betaine | A stabilizing osmolyte that equalizes the stability of GC and AT base pairs, reducing the formation of hairpins and other secondary structures. | Used in PCR to enhance amplification of GC-rich targets [4]. |
| Hot-Start DNA Polymerase | A modified enzyme inactive until a high-temperature activation step, preventing non-specific priming and primer-dimer formation at lower temperatures. | Crucial for improving specificity, especially when primers have secondary structure [4]. |
| HPLC-Purified Primers | High-purity oligonucleotides free from truncated synthesis products and salts. | Ensures accurate concentration and reduces failed reactions due to primer impurities [1]. |
| Site-Specific Isotope Labeling (¹⁵N, ¹³C) | Incorporation of stable isotopes into DNA oligonucleotides at specific positions for NMR resonance assignment. | Essential for determining high-resolution structures of complex folds like G-quadruplexes [3]. |
| G4-Stabilizing Cations (K⁺, Na⁺) | Ions that coordinate in the central channel of G-quartets, stabilizing the G-quadruplex structure. | K⁺ generally confers higher stability than Na⁺. Used in buffer for in vitro studies [2] [5]. |

Strategies for Mitigating PCR Interference

Overcoming the inhibitory effects of secondary structures is critical for successful genetic analysis. The following strategies are recommended:

- Primer Design Optimization: Design primers that are 20–30 nucleotides long with a GC content of 40–60%. Avoid stretches of 4 or more of the same nucleotide and ensure the 3' end ends in a G or C to strengthen binding. Most importantly, use software to check for and avoid self-complementarity and hairpin formation within the primers themselves [4] [1].

- Chemical Additives: Incorporate DMSO (5-10%), formamide, or betaine into the PCR mix. These compounds interfere with hydrogen bonding and base stacking, destabilizing secondary structures in the template and allowing polymerase access [4].

- PCR Protocol Modifications:

  - Touchdown PCR: Begin with an annealing temperature several degrees above the calculated primer Tm and gradually decrease it in subsequent cycles. This favors the amplification of the specific target when it first anneals, before non-specific products can form [4] [1].

  - Hot-Start PCR: Use a modified polymerase that requires thermal activation. This prevents primer-dimer formation and non-specific extension during reaction setup at low temperatures [4].

  - Extended Denaturation and Higher Annealing Temperatures: Increase the initial denaturation time and use a higher annealing temperature to help melt stable structures [1].

- Template Manipulation: Use high-quality, clean template DNA to avoid contaminants that can exacerbate polymerase pausing. For extremely problematic structured regions, consider using a reverse transcriptase enzyme or a polymerase with high processivity and strand-displacement activity, which are better equipped to unwind secondary structures [4].
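The primer design rules listed above (20–30 nt, 40–60% GC, no runs of four or more identical bases, a G or C at the 3' end) can be turned into a quick screen. The sketch below is illustrative only: the function names are assumptions, and it does not check self-complementarity or hairpins, which still call for dedicated software.

```python
import re


def gc_fraction(seq: str) -> float:
    """Fraction of G and C bases in the sequence."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)


def check_primer(seq: str):
    """Return a list of rule violations; an empty list means all four
    rules from the text are satisfied."""
    seq = seq.upper()
    problems = []
    if not 20 <= len(seq) <= 30:
        problems.append("length outside 20-30 nt")
    if not 0.40 <= gc_fraction(seq) <= 0.60:
        problems.append("GC content outside 40-60%")
    if re.search(r"(.)\1{3,}", seq):
        problems.append("run of 4 or more identical bases")
    if seq[-1] not in "GC":
        problems.append("3' end is not G or C")
    return problems


if __name__ == "__main__":
    # Both sequences are hypothetical examples.
    print(check_primer("ACGTGCTAGCTAGGATCCAG"))
    print(check_primer("AAAATTTTAAAATTTTAAAA"))
```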

In the realm of molecular biology, the polymerase chain reaction (PCR) stands as a transformative technology that has catapulted the discipline into a golden age of discovery [6]. However, despite its widespread adoption, PCR efficiency remains susceptible to various molecular impediments, with stable intramolecular secondary structures in DNA templates representing a particularly formidable challenge [7] [8]. Because intramolecular folding is kinetically favored, these structures form during the annealing step before any intermolecular interactions take place, and they can adversely impact PCR performance through multiple proposed mechanisms, including polymerase stalling, polymerase jumping, and endonucleolytic cleavage [8]. In practice, the consequences are higher error rates, reduced sensitivity, decreased specificity, and sometimes complete amplification failure [8]. Understanding how these stable structures directly impede two fundamental processes, primer annealing and polymerase processivity, is therefore crucial for researchers, scientists, and drug development professionals seeking to optimize molecular assays, particularly when working with difficult templates such as GC-rich regions or complex viral vectors.

Molecular Mechanisms of PCR Inhibition by Secondary Structures

How Stable Secondary Structures Physically Block Primer Access

Stable intramolecular secondary structures within DNA templates, particularly hairpins and stem-loop formations, create significant physical barriers to effective primer annealing. During the PCR annealing step, intramolecular folding is kinetically favored, so these structures form before any intermolecular interaction, including primer hybridization, can occur [8]. When a template sequence folds back upon itself through complementary base pairing, it creates a double-stranded region that effectively "hides" the primer binding site, making it inaccessible for hybridization with the PCR primer.

The thermal stability of these secondary structures directly correlates with their inhibitory effects on PCR [8]. For instance, the inverted terminal repeat (ITR) sequences of adeno-associated virus (AAV) vectors form exceptionally stable T-shaped hairpin structures with a melting temperature (Tm) of approximately 85.3°C [8]. This remarkable stability means these structures remain intact at standard PCR annealing temperatures (typically 40-60°C), physically preventing primers from accessing their complementary sequences. The result is either complete amplification failure or significantly reduced yield, making ITRs among the most challenging templates for PCR amplification and Sanger sequencing [8].

Impediment of DNA Polymerase Processivity

Beyond blocking primer access, stable secondary structures create formidable barriers to polymerase progression during the extension phase of PCR. Research using HIV-1 reverse transcriptase has demonstrated that DNA polymerases encounter significant pause sites when traversing stable hairpin structures [9]. These pause sites correlate directly with high free energy barriers required to melt stem base pairs ahead of the polymerase.

Pre-steady state kinetic analyses reveal that polymerization at these pause sites occurs through biphasic kinetics—a fast phase (10–20 s⁻¹) and a slow phase (0.02–0.07 s⁻¹) during a single binding event [9]. At non-pause sites, polymerization proceeds through a single phase with a fast nucleotide incorporation rate (33–87 s⁻¹). This suggests that DNA substrates at pause sites exist in both productive and non-productive states at the polymerase active site, with the non-productively bound DNA slowly converting to a productive state upon melting of the next stem base pair without dissociation from the enzyme [9].
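To make the contrast between the two phases concrete, the sketch below evaluates a double-exponential time course, a common way to describe biphasic single-turnover kinetics. The functional form is an assumption for illustration and is not taken from [9]; the rates and amplitudes are mid-range values picked from the intervals quoted in this section.

```python
import math


def product_fraction(t, a_fast, k_fast, a_slow, k_slow):
    """Fraction of primer extended at time t (seconds) for two parallel
    first-order phases with amplitudes a_* and rate constants k_* (1/s)."""
    return (a_fast * (1 - math.exp(-k_fast * t))
            + a_slow * (1 - math.exp(-k_slow * t)))


def phase_half_time(k):
    """Half-time (seconds) of a first-order phase with rate constant k."""
    return math.log(2) / k


if __name__ == "__main__":
    # Illustrative pause-site parameters within the reported ranges.
    a_fast, k_fast = 0.07, 15.0    # fast phase: 4-10%, 10-20 per second
    a_slow, k_slow = 0.27, 0.045   # slow phase: 14-40%, 0.02-0.07 per second
    for t in (0.05, 0.5, 5.0, 50.0):
        print(t, round(product_fraction(t, a_fast, k_fast, a_slow, k_slow), 4))
    print(phase_half_time(k_fast), phase_half_time(k_slow))
```

With these values the fast phase is essentially complete within a fraction of a second, while the slow phase has a half-time of roughly 15 seconds, which is long compared with normal incorporation at a non-pause site.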

The situation is further complicated by the finding that Taq polymerase possesses endonucleolytic activity that can cleave within stable secondary structures, leading to template degradation and amplification failure [8]. This mechanism adds another dimension to how secondary structures inhibit PCR beyond the more commonly recognized mechanisms of polymerase stalling and jumping.

Table 1: Quantitative Effects of DNA Secondary Structures on Polymerase Function

| Parameter | Non-Pause Sites | Pause Sites (within hairpins) | Experimental System |
|---|---|---|---|
| Incorporation Rate | 33–87 s⁻¹ | Fast phase: 10–20 s⁻¹; Slow phase: 0.02–0.07 s⁻¹ | HIV-1 RT with synthetic hairpin template [9] |
| Reaction Amplitudes | 32–50% (single phase) | Fast phase: 4–10%; Slow phase: 14–40% | Pre-steady state kinetic analysis [9] |
| Dissociation Rates | 0.14–0.29 s⁻¹ | 0.14–0.29 s⁻¹ | DNA binding studies [9] |

Experimental Evidence and Quantitative Analysis

The inhibitory effects of secondary structures on PCR efficiency have been quantitatively demonstrated through systematic studies. Deep learning approaches using one-dimensional convolutional neural networks (1D-CNNs) have predicted sequence-specific amplification efficiencies from sequence information alone, reaching an AUROC of 0.88 and an AUPRC of 0.44 [10]. These models, trained on synthetic DNA pools, revealed that approximately 2% of sequences exhibit very poor amplification efficiency (as low as 80% relative to the population mean), equivalent to a halving in relative abundance every 3 cycles [10]. This progressive skewing of coverage distributions during multi-template PCR results directly from sequence-specific amplification efficiencies that are independent of GC content [10].
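The link between an 80% relative efficiency and a halving every 3 cycles follows from simple compounding. The sketch below assumes that "80% relative to the mean" means a sequence gains 0.8 times as much as the average sequence in each cycle, an interpretation that is consistent with the quoted halving time; the function names are illustrative.

```python
import math


def relative_abundance(rel_eff: float, cycles: int) -> float:
    """Abundance relative to the pool mean after `cycles` cycles, for a
    sequence amplifying at `rel_eff` times the mean per-cycle factor."""
    return rel_eff ** cycles


def halving_cycles(rel_eff: float) -> float:
    """Number of cycles after which relative abundance has halved."""
    if not 0 < rel_eff < 1:
        raise ValueError("rel_eff must be between 0 and 1 (exclusive)")
    return math.log(0.5) / math.log(rel_eff)


if __name__ == "__main__":
    print(halving_cycles(0.8))          # close to 3 cycles
    print(relative_abundance(0.8, 30))  # roughly a thousandfold depletion
    print(relative_abundance(0.8, 60))  # roughly a millionfold depletion
```

Under this assumption a sequence is depleted about a thousandfold after 30 cycles and about a millionfold after 60, which matches the picture of sequences being drowned out in deeply amplified pools.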

Experimental validation using orthogonal qPCR confirmed that sequences identified as having low amplification efficiency in sequencing data also showed significantly lower amplification efficiencies in single-template qPCR [10]. Furthermore, when 1000 sequences from original experiments were resynthesized in a new oligo pool, sequences with low attributed amplification efficiencies were drastically underrepresented after just 30 PCR cycles and effectively drowned out completely by cycle 60, demonstrating that poor amplification is reproducible and independent of pool diversity [10].

Table 2: Quantitative Impact of Secondary Structures on PCR Amplification Efficiency

| Template Type | Amplification Efficiency | Experimental Outcome | Reference |
|---|---|---|---|
| Random sequences (2% subset) | ~80% relative to mean | Halving in relative abundance every 3 cycles; complete dropout after 60 cycles | [10] |
| EGFR Target A (structured) | Severe reduction | Significantly impaired amplification compared to unstructured Target D | [8] |
| rAAV ITR sequences | Near-complete failure | Unable to amplify without specialized methods | [7] [8] |
| HIV-1 template with hairpin | Polymerase pausing at 4 primary sites | Identified within first half of hairpin stem | [9] |

Diagram Title: Mechanisms of PCR Inhibition by Secondary Structures

Methodologies for Studying Secondary Structure Effects

Experimental Workflow for Assessing Structure-Induced Amplification Bias

Diagram Title: Workflow for Quantifying Amplification Efficiency

Research Reagent Solutions for Secondary Structure Challenges

Table 3: Research Reagents and Their Applications in Mitigating Secondary Structure Effects

| Reagent / Method | Composition / Mechanism | Application Context | Effectiveness |
|---|---|---|---|
| Disruptors | Three components: anchor (template binding), effector (strand displacement), 3' blocker (prevents elongation) [7] [8] | rAAV ITR amplification; templates with ultra-stable hairpins (Tm = 85.3°C) [8] | Successfully amplifies otherwise unamplifiable templates; superior to DMSO/betaine [8] |
| DMSO | Believed to reduce thermal stability of secondary structures [8] | GC-rich templates; general secondary structure issues | Effects vary greatly depending on template; ineffective for rAAV ITRs [8] |
| Betaine | Reduces strength of hydrogen bonds between guanosine and cytosine [8] | GC-rich templates; general secondary structure issues | Effects vary depending on template; ineffective for rAAV ITRs [8] |
| 7-deaza-dGTP | Modified nucleotide that reduces hydrogen bonding strength [8] | Extremely stable structures (e.g., rAAV ITRs) | Only reported success for full-length rAAV ITR amplification prior to disruptors [8] |
| Polymerase Mixtures | Combination of non-proofreading and proofreading enzymes [11] | Long-range PCR; structured regions | Improves yield by correcting misincorporations that may stall synthesis [11] |

Advanced Solutions and Optimization Strategies

Disruptor Technology: A Novel Approach

Disruptors represent a novel class of oligonucleotide reagents specifically designed to overcome stable intramolecular secondary structures in PCR templates [7] [8]. These engineered oligonucleotides consist of three functional components: (1) an anchor sequence designed to initiate template binding, (2) an effector region that disrupts intramolecular secondary structure through strand displacement, and (3) a 3' blocker to prevent its elongation by DNA polymerase [8].

The proposed mechanism of action involves the anchor sequence first binding to the template, followed by effector-mediated strand displacement that unwinds the intramolecular secondary structure [8]. This mechanism is consistent with experimental observations that the anchor plays a more critical role in disruptor function than the effector component [8]. In practical applications, disruptors have enabled successful amplification of inverted terminal repeat sequences of recombinant adeno-associated virus vectors despite their well-known reputation as some of the most difficult templates for PCR amplification due to ultra-stable T-shaped hairpin structures [7] [8]. Notably, in stark contrast to the effectiveness of disruptors, both DMSO and betaine—two PCR additives routinely used to facilitate amplification of GC-rich templates—demonstrated no improving effect on these challenging templates [8].

PCR Protocol Modifications and Additives

Beyond specialized reagents like disruptors, several PCR protocol modifications can help mitigate the effects of secondary structures. Touchdown PCR, which starts with an annealing temperature higher than the optimal Tm and gradually reduces it in later cycles, promotes selective amplification of the desired product by initially increasing stringency [4]. Hot-start PCR prevents nonspecific amplification and primer-dimer formation by keeping the polymerase inactive until high temperatures are reached, thereby increasing the stringency of primer annealing [11].
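A touchdown program can be written down as a per-cycle list of annealing temperatures. The sketch below is a minimal illustration: the 5°C starting offset, the 1°C step per cycle, and the choice to hold at the primer Tm once it is reached are assumptions for the example, not values prescribed by the cited sources.

```python
def touchdown_schedule(primer_tm, start_offset=5.0, step=1.0, total_cycles=30):
    """Return the annealing temperature for each cycle.

    The program starts `start_offset` degrees above `primer_tm`, drops by
    `step` degrees per cycle, and holds at `primer_tm` once it is reached.
    """
    temps = []
    temp = primer_tm + start_offset
    for _ in range(total_cycles):
        temps.append(temp)
        temp = max(primer_tm, temp - step)
    return temps


if __name__ == "__main__":
    schedule = touchdown_schedule(primer_tm=60.0)
    print(schedule[:8])   # stringent early cycles
    print(schedule[-1])   # plateau temperature for the remaining cycles
```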

The use of additives represents another strategy. Dimethylsulfoxide (DMSO) at a final concentration of 1-10%, formamide (1.25-10%), bovine serum albumin (10-100 μg/ml), and betaine (0.5 M to 2.5 M) have all been employed as PCR enhancers to address various challenges including secondary structures [6]. However, it is crucial to note that the effectiveness of these additives varies greatly depending on template sequences, and they may themselves interfere with Taq polymerase activity at higher concentrations [8].
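When setting up reactions with these enhancers, the volume of stock to add follows from the dilution relation C1·V1 = C2·V2. The sketch below encodes the concentration ranges quoted above and computes pipetting volumes; the dictionary keys, function names, and the stock concentrations in the example (neat DMSO, 5 M betaine) are assumptions for illustration.

```python
# Final-concentration ranges quoted in the text for common PCR enhancers.
REPORTED_RANGES = {
    "dmso_percent": (1.0, 10.0),
    "formamide_percent": (1.25, 10.0),
    "bsa_ug_per_ml": (10.0, 100.0),
    "betaine_molar": (0.5, 2.5),
}


def within_reported_range(additive: str, final_conc: float) -> bool:
    """True if `final_conc` lies inside the quoted range for `additive`."""
    low, high = REPORTED_RANGES[additive]
    return low <= final_conc <= high


def stock_volume_ul(final_conc, stock_conc, reaction_volume_ul):
    """Volume of stock (microliters) giving `final_conc` in the reaction.

    `final_conc` and `stock_conc` must share the same unit.
    """
    if final_conc > stock_conc:
        raise ValueError("final concentration exceeds stock concentration")
    return final_conc * reaction_volume_ul / stock_conc


if __name__ == "__main__":
    # 5% DMSO from a 100% stock, and 1 M betaine from a 5 M stock,
    # each in a 50 microliter reaction.
    print(stock_volume_ul(5.0, 100.0, 50.0))
    print(stock_volume_ul(1.0, 5.0, 50.0))
    print(within_reported_range("betaine_molar", 3.0))
```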

The direct impact of stable secondary structures on PCR efficiency manifests through two primary mechanisms: physical blocking of primer access to template binding sites and impairment of polymerase processivity during extension. These molecular challenges translate to very practical problems in laboratory settings—including reduced sensitivity, lower yields, and sometimes complete amplification failure—particularly when working with difficult templates such as GC-rich regions or complex viral vectors.

The continuing evolution of solutions, from traditional additives to novel technologies like disruptors and deep learning-based prediction tools, reflects the ongoing importance of this challenge in molecular biology research and diagnostic applications. As PCR continues to be a cornerstone technique in fields ranging from basic research to drug development and clinical diagnostics, understanding and addressing the impediments posed by secondary structures remains crucial for researchers seeking reliable and efficient amplification of challenging DNA templates. The integration of computational prediction models with advanced biochemical interventions represents a promising direction for preemptively identifying and resolving amplification challenges before they compromise experimental results.

The polymerase chain reaction (PCR) stands as a foundational technique in molecular biology, yet its efficiency is profoundly influenced by template sequence characteristics that are often overlooked in experimental design. Sequence-specific determinants—including GC content, repetitive elements, and specific motif locations—create secondary structures and other biochemical challenges that dramatically impact amplification success. These factors cause significant issues in multi-template PCR applications essential to modern genomics, from next-generation sequencing library preparation to DNA data storage systems, where non-homogeneous amplification results in skewed abundance data and compromised analytical accuracy [10]. Even with optimized traditional parameters (e.g., primer concentration, magnesium levels, and annealing temperature), inherent sequence properties can cause amplification failure or bias, leading to false negatives in diagnostic applications, inaccurate quantification in gene expression studies, and incomplete representation in metagenomic surveys [10] [12]. This technical guide examines how secondary structures and other sequence-specific features affect PCR efficiency, providing researchers with a framework for predicting and mitigating these effects through advanced design strategies and experimental optimizations.

Quantitative Impact of Sequence Features on PCR Efficiency

GC Content and Amplification Bias

GC content significantly influences amplification efficiency through its effect on duplex stability and secondary structure formation. Templates with extremely high or low GC content present distinct challenges:

- High GC content (typically >60%) promotes formation of stable secondary structures and intramolecular hairpins that hinder polymerase progression during extension. These structures increase local melting temperatures, requiring specialized cycling conditions or additives for successful amplification [13]. Research demonstrates that GC-rich sequences exhibit particularly problematic behavior in multi-template PCR, where standardized conditions apply to diverse sequences simultaneously [10].

- Low GC content (<40%) results in low duplex stability, complicating primer annealing and potentially reducing processivity of DNA polymerase. These AT-rich sequences may fail to amplify efficiently under standard conditions optimized for average GC content [13].

Experimental data from deep learning models trained on synthetic DNA pools reveals that GC content alone does not fully explain poor amplification efficiency. In controlled studies, constraining random sequences to 50% GC content did not eliminate amplification biases, suggesting more complex sequence-specific factors beyond overall GC percentage [10].
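One simple way to see why an overall GC percentage can be uninformative is to compute GC content in sliding windows. The sketch below is illustrative only: the window size, step, and function names are assumptions, the 40% and 60% cut-offs are the ones used in this section, and local GC content is just one of several sequence features that may matter.

```python
def windowed_gc(seq: str, window: int = 20, step: int = 5):
    """Return (start, gc_fraction) for each window along the sequence."""
    seq = seq.upper()
    result = []
    for start in range(0, len(seq) - window + 1, step):
        chunk = seq[start:start + window]
        result.append((start, (chunk.count("G") + chunk.count("C")) / window))
    return result


def flag_extreme_windows(seq, low=0.40, high=0.60, window=20, step=5):
    """Return (start, gc_fraction, label) for windows outside low..high."""
    return [(start, gc, "high" if gc > high else "low")
            for start, gc in windowed_gc(seq, window, step)
            if gc < low or gc > high]


if __name__ == "__main__":
    # Hypothetical template: 50% GC overall, but split into an AT-only
    # half and a GC-only half.
    template = "AT" * 10 + "GC" * 10
    print(flag_extreme_windows(template, window=20, step=20))
```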

Repetitive Sequences and Structural Challenges

Repetitive elements, including short tandem repeats (STRs) and homopolymer regions, introduce multiple challenges for PCR amplification:

- Secondary structure formation: Repetitive sequences facilitate intra-strand folding that creates physical barriers to polymerase progression. These structures include hairpins, cruciforms, and G-quadruplexes that stall DNA synthesis [14].

- Polymerase slippage: In homopolymer tracts and STR regions, DNA polymerase can dissociate and re-associate misaligned on the template strand, resulting in insertion/deletion errors that compromise sequence fidelity and potentially create premature termination sites [14].

- Accessibility issues: Repeat-rich regions often exhibit unusual chromatin organization or DNA compaction in genomic contexts, further reducing amplification efficiency [15].

Recent research on STRs has revealed that approximately 7% of STRs exhibit sequence variability in human populations, with these variable repeats demonstrating distinct amplification properties and greater propensity for expansion during replication [14]. This variability directly impacts PCR efficiency in genotyping studies and clinical assays targeting these regions.
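Homopolymer tracts and short tandem repeats can be located with regular expressions before primers are designed around them. The sketch below is a minimal screen under stated assumptions: the function names, the 8-base homopolymer threshold, and the definition of an STR as a 2–6 nt unit repeated at least four times are illustrative choices, and only perfect repeats are detected.

```python
import re

# A 2-6 nt unit followed by at least three further copies of itself.
STR_PATTERN = re.compile(r"([ACGT]{2,6}?)\1{3,}")


def homopolymer_runs(seq: str, min_len: int = 8):
    """Return (start, base, length) for runs of one base >= min_len."""
    return [(m.start(), m.group()[0], len(m.group()))
            for m in re.finditer(r"A+|C+|G+|T+", seq.upper())
            if len(m.group()) >= min_len]


def tandem_repeats(seq: str):
    """Return (start, unit, copies) for perfect short tandem repeats,
    skipping units made of a single repeated base."""
    hits = []
    for m in STR_PATTERN.finditer(seq.upper()):
        unit = m.group(1)
        if len(set(unit)) > 1:
            hits.append((m.start(), unit, len(m.group()) // len(unit)))
    return hits


if __name__ == "__main__":
    # Both sequences are hypothetical examples.
    print(homopolymer_runs("ACGT" + "A" * 10 + "CG"))
    print(tandem_repeats("CC" + "GATA" * 5 + "CC"))
```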

Motif Location and Primer-Proximal Effects

The location of specific sequence motifs relative to primer binding sites critically influences amplification success. Research utilizing convolutional neural networks to predict sequence-specific amplification efficiencies has identified that particular motifs adjacent to adapter priming sites strongly correlate with poor amplification [10]. The CluMo (Motif Discovery via Attribution and Clustering) interpretation framework has elucidated adapter-mediated self-priming as a major mechanism causing low amplification efficiency, challenging conventional PCR design assumptions [10].

Specifically, certain 5'-proximal promoter and 5' exon regions of protein-coding genes and long non-coding RNAs exhibit particular susceptibility to amplification failure when they contain specific motif patterns [15]. These problematic motifs often involve inverted repeats capable of forming stable secondary structures that interfere with primer binding or polymerase initiation.

Table 1: Sequence Features and Their Impact on PCR Efficiency

| Sequence Feature | Optimal Range | Problematic Extremes | Primary Impact Mechanism | Common Solutions |
|---|---|---|---|---|
| GC Content | 40-60% | <40% or >60% | Melting temperature variation; Secondary structure formation | Additives (DMSO, BSA); Temperature optimization |
| Homopolymer Runs | <8 bp | >12 bp | Polymerase slippage; Stalling | Proofreading enzymes; Buffer optimization |
| Repeat Density | Low | High | Secondary structure; Primer misalignment | Betaine; Increased extension time |
| Motif Location | Distant from primers | Adjacent to priming sites | Self-priming; Competitive structures | Primer redesign; Hot-start polymerase |
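
Two of the features in Table 1 (GC content and homopolymer runs) are easy to check programmatically. The sketch below uses the table's thresholds as defaults (40-60% GC, runs longer than 12 bp flagged). The function names are hypothetical, and the thresholds should be treated as rules of thumb rather than hard limits.

```python
from itertools import groupby


def gc_fraction(seq: str) -> float:
    """Fraction of G and C bases in the sequence."""
    seq = seq.upper()
    if not seq:
        raise ValueError("empty sequence")
    return (seq.count("G") + seq.count("C")) / len(seq)


def longest_homopolymer(seq: str) -> int:
    """Length of the longest run of a single repeated base."""
    return max((len(list(run)) for _, run in groupby(seq.upper())), default=0)


def screen_amplicon(seq, gc_range=(0.40, 0.60), max_run=12):
    """Return a list of human-readable warnings (empty if none apply)."""
    flags = []
    gc = gc_fraction(seq)
    if not (gc_range[0] <= gc <= gc_range[1]):
        flags.append(f"GC content {gc:.0%} outside {gc_range[0]:.0%}-{gc_range[1]:.0%}")
    run = longest_homopolymer(seq)
    if run > max_run:
        flags.append(f"homopolymer run of {run} bp exceeds {max_run} bp")
    return flags


print(screen_amplicon("ACGT" * 5 + "A" * 13 + "GCGC" * 4))
```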

Experimental Analysis of Sequence-Specific Amplification

Systematic Evaluation of PCR Efficiency

Methodological approach for quantifying sequence-specific amplification efficiency:

Serial amplification protocol: A robust experimental design involves tracking amplicon coverage for thousands of synthetic DNA sequences with common terminal primer binding sites across multiple PCR cycles (e.g., 90 cycles divided into six consecutive reactions of 15 cycles each) [10]. This approach enables precise quantification of amplicon composition throughout the amplification trajectory.

Efficiency calculation: Sequence-specific amplification efficiency (ε_i) can be quantified by fitting sequencing coverage data to an exponential PCR amplification model that accounts for both initial coverage bias (from synthesis) and PCR-induced bias [10]. This dual-parameter model accurately identifies sequences with poor amplification characteristics.

Orthogonal validation: Efficiency measurements should be validated using single-template qPCR with selected sequences representing different efficiency categories [10]. This confirmation ensures that observed biases reflect true amplification differences rather than sequencing artifacts.

Experimental data from such systematic analyses reveal that approximately 2% of random sequences exhibit severely compromised amplification efficiency (as low as 80% relative to the population mean), resulting in their effective disappearance from the pool after 60 amplification cycles [10]. This dropout occurs reproducibly across different pool compositions, confirming the sequence-specific nature of the phenomenon.
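
The scale of this dropout follows from simple compounding. The sketch below assumes that "efficiency relative to the mean" acts as a per-cycle multiplicative factor on a sequence's relative abundance. That reading is an assumption made for illustration, and the function names are hypothetical.

```python
import math


def relative_abundance(rel_efficiency: float, cycles: int) -> float:
    """Abundance relative to an average sequence after a number of cycles."""
    return rel_efficiency ** cycles


def cycles_to_fraction(rel_efficiency: float, fraction: float = 0.5) -> float:
    """Cycles needed for relative abundance to fall to the given fraction."""
    return math.log(fraction) / math.log(rel_efficiency)


# A sequence at 95% of mean efficiency, after 12 cycles
print(round(relative_abundance(0.95, 12), 2))
# A sequence at 80% of mean efficiency, after 60 cycles
print(relative_abundance(0.80, 60))
# Cycles for a 95%-efficiency sequence to halve in relative abundance
print(round(cycles_to_fraction(0.95), 1))
```

Under this assumption, a 5% deficit costs roughly half the relative abundance within a dozen or so cycles, while a 20% deficit sustained over 60 cycles leaves a sequence about a million-fold underrepresented, which is consistent with it becoming undetectable at practical sequencing depths.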

Positional Effects and Predictive Modeling

Deep learning approaches, particularly one-dimensional convolutional neural networks (1D-CNNs), can predict sequence-specific amplification efficiencies from sequence information alone, achieving an AUROC of 0.88 and an AUPRC of 0.44 [10]. These models confirm that positional sequence information, especially motifs adjacent to primer binding sites, provides critical predictive power for identifying problematic sequences.

The interpretation of these models through frameworks like CluMo has identified specific motif classes associated with poor amplification, enabling proactive sequence design to avoid amplification failures in applications such as DNA data storage and amplicon sequencing [10]. This approach reduces the required sequencing depth to recover 99% of amplicon sequences by fourfold, offering significant efficiency improvements for genomics applications.

Table 2: Quantitative Data on Sequence-Specific Amplification Efficiency

| Parameter | Value/Observation | Experimental Context | Impact |
|---|---|---|---|
| Poorly amplifying sequences | ~2% of random pools | Synthetic DNA pools with common adapters | Effective dropout after 60 cycles |
| Efficiency range | 80-100% (relative to mean) | Multi-template PCR | A 5% efficiency deficit roughly halves relative abundance within about 12 cycles |
| Predictive model performance | AUROC: 0.88; AUPRC: 0.44 | 1D-CNN trained on synthetic sequences | Accurate identification of problematic sequences |
| GC-constrained pools | Comparable skewing in 50% GC pools | GCfix vs GCall experiments | Confirms factors beyond GC content drive efficiency |
| Required sequencing depth reduction | 4-fold | Using efficiency-informed design | More cost-effective sequencing |

Research Reagent Solutions for Challenging Sequences

Table 3: Essential Reagents for Optimizing Amplification of Problematic Sequences

| Reagent Category | Specific Examples | Mechanism of Action | Application Context |
|---|---|---|---|
| Polymerase Selection | Pfu, Vent (high-fidelity); Taq (high-yield) | Proofreading (3'-5' exonuclease) vs. processivity tradeoffs | GC-rich templates; Long amplicons; Cloning applications |
| Additives | DMSO (1-10%), formamide (1.25-10%), BSA (400 ng/μL), betaine | Reduce secondary structure; Neutralize inhibitors | High GC content; Complex templates; Inhibitor-containing samples |
| Enhanced Buffer Systems | Commercial enhancers; Non-ionic detergents (Tween-20, Triton X-100) | Stabilize polymerase; Prevent secondary structure | Problematic motifs; Repeat-rich sequences |
| Hot-Start Components | Antibody-based, chemical modification, aptamer-based | Inhibit polymerase at low temperatures | Prevent primer-dimers; Improve specificity |
| Magnesium Optimization | MgCl₂ (0.5-5.0 mM range) | Cofactor for polymerase; Affects duplex stability | Fine-tuning specific primer-template pairs |

Advanced Methodologies for Sequence-Specific PCR Challenges

DNA Extraction and Template Preparation Protocols

Effective PCR amplification of challenging sequences begins with optimized DNA extraction protocols tailored to specific sample types. Research on endangered species identification demonstrates that extraction method significantly impacts downstream amplification success [16]. Key considerations include:

Method selection: Comparative evaluation of Chelex-100, sodium chloride (NaCl) precipitation, modified CTAB protocols, and commercial silica-based kits (e.g., NucleoSpin Tissue Kit) for specific sample types [16]. For calcified tissues like mollusk shells, harsh extraction conditions may be necessary despite potential DNA degradation.

Inhibitor removal: Shells and environmental samples often contain calcium carbonate, pigments, and other PCR inhibitors that require specialized purification [16]. Incorporating additional wash steps or alternative lysis buffers can significantly improve amplification efficiency for problematic templates.

Quality assessment: Beyond standard spectrophotometry, using fluorometric methods and PCR-based quality checks ensures template suitability for amplifying difficult sequences [16].

Stepwise Optimization of Amplification Conditions

A systematic approach to PCR optimization is essential for challenging templates. Research on real-time RT-PCR analysis establishes that stepwise optimization of primer sequences, annealing temperatures, primer concentrations, and template concentration ranges dramatically improves efficiency, specificity, and sensitivity [17].

Primer design strategy: For sequence-specific amplification, primer design should leverage single-nucleotide polymorphisms (SNPs) present in homologous sequences to ensure specificity [17]. This approach is particularly valuable for differentiating between highly similar sequences in multi-gene families.

Temperature optimization: Implementing thermal gradient experiments to identify optimal annealing temperatures for specific primer-template combinations, especially for templates with extreme GC content or secondary structures [17].

Concentration titration: Methodical optimization of primer concentrations (typically 0.1-1 μM) and magnesium levels (0.5-5.0 mM) to maximize specificity and yield while minimizing primer-dimer formation [13] [17].

Cycle number adjustment: Increasing the number of amplification cycles from the standard 28 to 34 for low-copy-number templates, while being mindful of increased background and non-specific amplification [13].

Visualization of Sequence Determinants and PCR Efficiency Relationships

Figure 1: Sequence determinants and their impact on PCR efficiency through molecular mechanisms. This diagram illustrates how specific sequence features influence amplification success through defined molecular pathways.

Sequence determinants including GC content, repetitive elements, and motif location play critical roles in PCR efficiency through their influence on secondary structure formation and polymerase accessibility. Traditional optimization approaches that focus solely on reaction conditions provide incomplete solutions for the inherent challenges posed by certain sequence architectures. The emerging paradigm leverages deep learning models trained on comprehensive synthetic sequence libraries to predict amplification efficiency directly from sequence information, enabling proactive design of amplicons with more uniform amplification characteristics [10]. This approach, combined with mechanistic insights from interpretation frameworks like CluMo, allows researchers to identify and avoid problematic sequence motifs before synthesis and amplification.

For researchers working with challenging templates, a multifaceted strategy incorporating sequence-informed design, specialized reagent selection, and systematic optimization protocols offers the most reliable path to robust amplification. As genomic applications increasingly rely on parallel amplification of diverse templates—from metagenomic studies to DNA data storage systems—understanding and addressing these sequence determinants becomes essential for generating accurate, reproducible results. The quantitative relationships and methodological frameworks presented in this guide provide a foundation for developing PCR assays that successfully navigate the challenges posed by extreme sequence architectures.

Multi-template Polymerase Chain Reaction is a foundational technique in molecular biology, enabling the parallel amplification of diverse DNA sequences from a single sample. This process is crucial for applications ranging from microbial community profiling in metabarcoding studies to library preparation in next-generation sequencing and DNA data storage systems [10] [18]. However, the simultaneous amplification of multiple homologous templates introduces significant technical challenges that can compromise the accuracy and reliability of downstream analyses.

The core issue lies in the phenomenon of non-homogeneous amplification, where different DNA templates amplify at varying efficiencies within the same reaction [10]. This efficiency bias systematically distorts the original template ratios, leading to skewed abundance data in the final amplification products. Even minor differences in amplification efficiency can result in dramatic representation biases due to the exponential nature of PCR—a template with an efficiency just 5% below the average can be underrepresented by a factor of two after only 12 cycles [10]. This distortion has profound implications for quantitative analyses across biological disciplines, potentially leading to false conclusions in microbial ecology, diagnostics, and synthetic biology applications.

Molecular Mechanisms of Amplification Bias

Sequence-Specific Factors Affecting Amplification Efficiency

The efficiency with which a DNA template amplifies in multi-template PCR is influenced by several sequence-intrinsic properties that affect polymerase processivity and primer binding kinetics.

Secondary Structure Formation: Intramolecular secondary structures within single-stranded DNA templates represent a major impediment to efficient amplification. These stable structures, including hairpins and stem-loops, can cause polymerase stalling, premature termination, or template switching [8]. Their thermal stability directly correlates with inhibitory potency, with more stable structures exerting stronger inhibitory effects on PCR amplification [8]. In extreme cases, such as the inverted terminal repeat (ITR) sequences of adeno-associated viruses, these structures form ultra-stable T-shaped hairpins (Tm = 85.3°C) that render amplification and sequencing exceptionally challenging [8].

Adapter-Mediated Self-Priming: Recent research employing deep learning interpretation frameworks has identified specific motifs adjacent to adapter priming sites as closely associated with poor amplification efficiency [10]. These motifs facilitate adapter-mediated self-priming, where primers aberrantly bind to template regions beyond their intended binding sites, leading to inefficient amplification and generating artifactual products.

GC Content and Primer Binding Energies: While traditionally considered a primary factor in amplification bias, experimental evidence suggests GC content alone cannot fully explain observed efficiency variations [10]. However, differences in binding energies between permutations of degenerate primers significantly impact amplification efficiency, with GC-rich primer permutations typically amplifying with higher efficiency [19].

Template Concentration Effects: The relative amplification efficiency for each template is not constant but varies nonlinearly with its proportional representation within the community [20]. Low-abundance templates are particularly susceptible to under-representation during amplification, compounding the challenges of detecting rare variants in diverse communities.

PCR Artifacts in Multi-Template Systems

The complex composition of multi-template reactions creates favorable conditions for the formation of specialized artifacts that further distort abundance ratios.

Heteroduplex Formation: When amplifying homologous sequences, single-stranded DNA products from different templates can cross-hybridize during annealing phases, forming heteroduplex molecules containing mismatched base pairs [18]. The potential number of heteroduplexes increases quadratically with template diversity, with n distinct sequences potentially forming n(n-1) heteroduplex combinations [18]. These heteroduplexes migrate separately from parental molecules during analysis, creating false signals and leading to overestimation of sample complexity.

Chimeric Amplicons: Chimeric sequences arise when a partially extended primer from one template dissociates and anneals to a different template during amplification, creating recombinant molecules that do not exist in the original sample [18]. Template switching is particularly prevalent when stable secondary structures cause polymerase pausing or premature termination, increasing the probability of incomplete extension products engaging in recombination events.

Table 1: Major Artifacts in Multi-Template PCR and Their Consequences

| Artifact Type | Formation Mechanism | Impact on Analysis |
|---|---|---|
| Heteroduplexes | Cross-hybridization between amplicons from different homologous templates | Overestimation of sample diversity; additional bands/clusters in separation methods |
| Chimeras | Template switching between partially extended primers and heterologous templates | False sequence variants; inflation of phylogenetic diversity |
| Primer Dimers | Self-annealing of primers at their 3' ends | Competition for reagents; reduced target amplification efficiency |
| Self-Priming Products | Aberrant primer binding to non-target sites on templates | Reduction in intended products; skewed abundance ratios |

Quantitative Assessment of Amplification Bias

Experimental Measurement of Amplification Efficiencies

Systematic investigation of sequence-specific amplification efficiencies requires carefully controlled experimental designs that track abundance changes throughout the amplification process.

Serial Amplification Protocol: One robust approach involves performing consecutive PCR reactions with limited cycle numbers (e.g., 15 cycles per reaction) with sequencing-based quantification of amplicon composition at each stage [10]. This serial amplification across many cycles (e.g., 90 total cycles) enables precise tracking of coverage distribution broadening and sequence dropout over time.

Efficiency Calculation: The exponential nature of PCR amplification allows researchers to model the process using the equation $N_n = N_0 (1 + \varepsilon)^n$, where $N_n$ represents the amplicon quantity after $n$ cycles, $N_0$ is the initial template quantity, and $\varepsilon$ is the per-cycle amplification efficiency. By fitting sequencing coverage data to this model across multiple cycle thresholds, researchers can derive quantitative efficiency estimates ($\varepsilon$) for individual templates [10].

Orthogonal Validation: Efficiency measurements derived from bulk sequencing should be validated using orthogonal methods such as single-template qPCR on selected sequences [10]. This confirmation ensures that observed under-representation reflects true amplification inefficiency rather than measurement artifacts.

Experimental data from synthetic DNA pools demonstrate that approximately 2% of random sequences exhibit severe amplification deficiencies, with efficiencies as low as 80% relative to the population mean [10]. Such templates can be effectively drowned out after 60 amplification cycles, becoming undetectable in sequencing data despite their initial presence.
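
The exponential model can be fitted with ordinary least squares after taking logarithms, since log N_n = log N_0 + n·log(1 + ε). The following is a minimal sketch under that assumption. It is not the fitting procedure of the cited work, the name `fit_efficiency` is hypothetical, and it assumes strictly positive coverage values (dropouts with zero reads would need separate handling).

```python
import math


def fit_efficiency(cycles, coverage):
    """Estimate (N0, epsilon) from coverage measured at several cycle counts.

    Fits log(coverage) = log(N0) + cycles * log(1 + epsilon) by least squares.
    """
    xs = list(cycles)
    ys = [math.log(c) for c in coverage]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), math.exp(slope) - 1


# Synthetic trajectory: N0 = 100 and epsilon = 0.9, sampled every 15 cycles
timepoints = [0, 15, 30, 45]
observed = [100 * (1.9 ** n) for n in timepoints]
n0, eps = fit_efficiency(timepoints, observed)
print(round(n0, 3), round(eps, 3))
```

Because sequencing coverage is relative rather than absolute, applying such a fit to real read counts yields efficiencies relative to the pool average. The intercept then plays the role of the initial coverage bias.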

Impact of Compositional Effects on Data Interpretation

The compositional nature of sequencing data introduces additional complexities in interpreting amplification results. Because sequencing platforms typically normalize the total amount of genetic material from each sample, the resulting data represents relative abundances rather than absolute quantities [21]. This compositional effect means that an increase in one component's abundance necessarily causes the relative decrease of all other components, even if their absolute amounts remain unchanged [20].

This compositional property has critical implications for differential abundance analysis, as changes in relative abundance do not necessarily correlate with changes in absolute abundance [21]. In extreme cases, widespread changes in absolute abundance across features can lead to false positive differential abundance calls when interpreting relative count data [21].
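
A toy calculation makes the compositional effect concrete. The template names and copy numbers below are invented purely for illustration.

```python
def relative_abundances(absolute):
    """Convert absolute quantities into fractions that sum to 1."""
    total = sum(absolute.values())
    return {name: value / total for name, value in absolute.items()}


# Hypothetical absolute copy numbers before and after template A expands
before = {"A": 100, "B": 100, "C": 100}
after = {"A": 700, "B": 100, "C": 100}

rel_before = relative_abundances(before)
rel_after = relative_abundances(after)

# B and C are unchanged in absolute terms, yet their relative share drops
print(round(rel_before["B"], 3), round(rel_after["B"], 3))
```

Template B falls from one third to one ninth of the reads even though its absolute amount did not change, which is exactly the pattern that can be misread as a decrease.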

Table 2: Quantitative Impacts of Amplification Bias in Multi-Template PCR

| Parameter | Typical Range | Experimental Evidence |
|---|---|---|
| Efficiency variation between templates | 80-120% (relative to mean) | Deep learning prediction from synthetic DNA pools [10] |
| Sequences with severe efficiency deficits | ~2% of random sequences | Experimental data from GC-controlled oligonucleotide pools [10] |
| Sequencing depth needed to recover 99% of amplicons | About 4-fold lower with efficiency-informed design | Model predictions from efficiency-corrected library design [10] |
| Sensitivity of differential abundance calling | Median: 0.91 (range: 0.47-0.98) | Analysis of 16S, bulk RNA-seq, and single-cell RNA-seq datasets [21] |
| Specificity of differential abundance calling | Median: 0.89 (range: 0.76-0.97) | Analysis of 16S, bulk RNA-seq, and single-cell RNA-seq datasets [21] |

Experimental Protocols for Bias Characterization

Protocol 1: Serial Amplification with Sequencing Quantification

This protocol enables systematic tracking of amplification efficiency across multiple templates throughout the amplification process.

Materials:

- Synthetic DNA pool with known sequence composition

- High-fidelity DNA polymerase with proofreading activity

- Platform-specific adapter primers

- Next-generation sequencing platform

Procedure:

- Sample Preparation: Divide the synthetic DNA pool into aliquots for serial amplification. Use synthetic pools with either random sequence composition or GC-controlled sequences (e.g., fixed at 50% GC content) to control for GC-specific effects [10].

- Serial Amplification: Perform multiple consecutive PCR reactions, each with a limited number of cycles (e.g., 15 cycles). After each reaction, remove an aliquot for sequencing library preparation while using the remainder as template for the subsequent amplification round [10].

- Library Preparation and Sequencing: Prepare sequencing libraries at each timepoint (e.g., after 15, 30, 45, 60, 75, and 90 total cycles) using standard protocols for the sequencing platform.

- Data Analysis:

  - Map sequencing reads to the reference sequences to determine coverage for each template.

  - Fit coverage trajectories to exponential amplification models to estimate initial abundance biases and sequence-specific amplification efficiencies.

  - Categorize sequences by amplification efficiency (e.g., low: <90%, average: 90-110%, high: >110% relative to mean) [10].

Protocol 2: Disruptor-Mediated Secondary Structure Interruption

This protocol tests the specific contribution of secondary structures to amplification bias using specially designed oligonucleotide disruptors.

Materials:

- Target DNA templates predicted to form stable secondary structures

- Disruptor oligonucleotides with anchor, effector, and 3' blocker domains

- Standard PCR reagents including dNTPs and DNA polymerase

- Control additives (DMSO, betaine) for comparison

Procedure:

- Disruptor Design: Design disruptor oligonucleotides containing three functional components:

  - Anchor: A sequence complementary to a region adjacent to the secondary structure to initiate template binding.

  - Effector: A sequence designed to overlap with the duplex region of the secondary structure to mediate strand displacement.

  - 3' Blocker: A modification (e.g., C3 spacer) at the 3' end to prevent polymerase extension [8].

- PCR Setup: Set up parallel reaction series with:

  - No additives (control)

  - Standard additives (DMSO or betaine) at recommended concentrations

  - Disruptor oligonucleotides at optimized concentrations

- Amplification: Perform PCR using cycling conditions appropriate for the target templates. For challenging templates like rAAV ITR sequences, use manufacturer-recommended cycling parameters [8].

- Product Analysis:

  - Quantify amplification yield using gel electrophoresis or fluorometric methods.

  - Compare amplification efficiency across conditions.

  - Validate product sequence fidelity by Sanger sequencing.
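
The three-part disruptor layout from the design step can be sketched in code. This is a deliberately simplified illustration, not the published design procedure. It assumes the anchor site and effector site are contiguous on the template, that the oligo is the reverse complement of that window, and that the 3' modification is represented by a placeholder label. The function name, argument names, and blocker label are all hypothetical. Which side of the structure the anchor should sit on, and suitable segment lengths, must be taken from the primary reference [8].

```python
def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA string (A/C/G/T only)."""
    comp = {"A": "T", "C": "G", "G": "C", "T": "A"}
    return "".join(comp[b] for b in reversed(seq.upper()))


def design_disruptor(template, site_start, anchor_len, effector_len,
                     blocker="[3'-C3 spacer]"):
    """Build a disruptor against template[site_start : site_start + anchor_len + effector_len].

    The first anchor_len bases of the window are treated as the anchor site
    (next to the structure) and the following effector_len bases as the
    effector site (inside the structured duplex).
    """
    if site_start < 0:
        raise ValueError("site_start must be non-negative")
    anchor_site = template[site_start:site_start + anchor_len]
    effector_end = site_start + anchor_len + effector_len
    effector_site = template[site_start + anchor_len:effector_end]
    if len(anchor_site) < anchor_len or len(effector_site) < effector_len:
        raise ValueError("target window runs past the end of the template")
    anchor = reverse_complement(anchor_site)
    effector = reverse_complement(effector_site)
    # Written 5'->3', the oligo is the reverse complement of the whole window,
    # followed by the non-extendable 3' modification.
    return {"anchor": anchor, "effector": effector,
            "oligo": effector + anchor + blocker}


print(design_disruptor("AAACCCGGGTTT", 0, 3, 3))
```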

Diagram 1: Experimental workflows for quantifying and mitigating amplification bias. Protocol 1 tracks efficiency through serial amplification, while Protocol 2 directly targets secondary structures with disruptor oligonucleotides.

Computational Approaches for Predicting and Mitigating Bias

Deep Learning for Efficiency Prediction

Recent advances in deep learning have enabled the prediction of sequence-specific amplification efficiencies directly from DNA sequence information, offering a powerful approach for bias mitigation.

Model Architecture: One-dimensional convolutional neural networks (1D-CNNs) can be trained on reliably annotated datasets derived from synthetic DNA pools to predict amplification efficiency based solely on sequence information [10]. These models achieve high predictive performance, with demonstrated AUROC of 0.88 and AUPRC of 0.44 in classifying poorly amplifying sequences [10].

Interpretation Frameworks: Model interpretation techniques such as CluMo (Motif Discovery via Attribution and Clustering) identify specific sequence motifs adjacent to adapter priming sites that are associated with poor amplification efficiency [10]. This approach has elucidated adapter-mediated self-priming as a major mechanism causing low amplification efficiency, challenging conventional PCR design assumptions.

Application to Library Design: The predictive capability of these models enables the design of inherently homogeneous amplicon libraries by excluding or modifying sequences predicted to amplify poorly. This approach can reduce the required sequencing depth to recover 99% of amplicon sequences by fourfold, significantly improving the efficiency of sequencing projects [10].

Compositional Data Analysis Strategies

The compositional nature of sequencing data requires specialized statistical approaches to avoid misinterpretation of amplification results.

Reference-Based Normalization: Where feasible, incorporating external reference standards (spike-ins) of known concentration provides an absolute scaling factor that helps mitigate compositional effects [21]. These references should cover a range of abundances and be introduced prior to amplification to control for both extraction and amplification biases.

Differential Abundance Testing: Methods designed specifically for compositional data, such as those implemented in the R package ALDEx2 or Songbird, can provide more robust differential abundance analysis by accounting for the relative nature of the data [21]. These approaches help distinguish true biological changes from technical artifacts introduced during amplification.

Efficiency-Aware Abundance Estimation: Incorporating sequence-specific efficiency estimates into abundance quantification models can correct for systematic biases. Bayesian approaches that jointly estimate initial template concentrations and amplification efficiencies from multi-cycle sequencing data show particular promise for accurate absolute quantification [20].
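
Reference-based normalization can be illustrated with a single spike-in. The sketch below is a simplification with hypothetical names and numbers. It assumes one spike-in of known copy number and, importantly, that the spike-in amplifies with the same efficiency as the targets, which the preceding sections show is not guaranteed.

```python
def absolute_from_spike_in(counts, spike_id, spike_copies):
    """Rescale read counts to estimated copies using one spike-in standard.

    counts: mapping of feature name -> read count (including the spike-in)
    spike_id: key of the spike-in within counts
    spike_copies: known number of spike-in copies added before amplification
    """
    spike_reads = counts[spike_id]
    if spike_reads <= 0:
        raise ValueError("spike-in was not detected; cannot scale")
    copies_per_read = spike_copies / spike_reads
    return {name: reads * copies_per_read
            for name, reads in counts.items() if name != spike_id}


# Hypothetical read counts with 1000 spike-in copies added to the sample
reads = {"spike": 50, "taxon_A": 200, "taxon_B": 25}
print(absolute_from_spike_in(reads, "spike", 1000))
```

Using several spike-ins across a range of abundances, as recommended above, would allow a fitted calibration curve in place of a single scaling factor.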

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Studying and Mitigating Multi-Template PCR Bias

| Reagent/Category | Function | Application Notes |
|---|---|---|
| High-Fidelity DNA Polymerases | Reduces misincorporation errors and template switching | Essential for minimizing chimeras; preferred over standard Taq for complex mixtures [18] |
| Disruptor Oligonucleotides | Unwinds stable secondary structures in templates | Three-component design: anchor, effector, and 3' blocker; more effective than DMSO/betaine for challenging structures [8] |
| GC-Rich Resolution Enhancers | Reduces secondary structure stability | Betaine, DMSO, or 7-deaza-dGTP; effectiveness varies by template [8] |
| Synthetic DNA Pools | Reference standards for efficiency calibration | GC-controlled pools (e.g., fixed 50% GC) help isolate sequence effects from GC content effects [10] |
| Molecular Barcodes (UMIs) | Tags individual molecules pre-amplification | Enables computational correction for amplification bias; essential for absolute quantification [10] |
| Proofreading Exonucleases | Degrades single-stranded DNA | Reduces heteroduplex formation; may disproportionately affect rare templates [18] |
| Hot-Start Polymerases | Prevents non-specific amplification during setup | Critical for multiplex reactions; reduces primer-dimers and spurious products [22] |
| Phosphorothioate-Modified Primers | Protects against exonuclease degradation | Incorporation at 3' end inhibits degradation by proofreading activity [22] |

Diagram 2: Mechanism of how template secondary structures cause amplification bias (red) and how disruptor oligonucleotides mitigate these effects (green). Disruptors prevent the cascade of molecular events that lead to skewed abundance ratios.

The journey from amplification dropout to skewed abundance in multi-template PCR represents a critical challenge in molecular biology with far-reaching implications for research and diagnostic applications. Through systematic investigation, researchers have identified secondary structure formation as a primary molecular mechanism driving amplification bias, with specific sequence motifs adjacent to priming sites playing a disproportionately important role in efficiency reduction.

The quantitative frameworks and experimental protocols presented in this work provide researchers with robust tools for characterizing and mitigating these biases in their own systems. By integrating deep learning predictions, disruptor technologies, and compositional data analysis, scientists can significantly improve the accuracy and reliability of multi-template PCR applications. As these methodologies continue to mature, they promise to enhance the validity of quantitative molecular analyses across diverse fields including microbial ecology, clinical diagnostics, and synthetic biology.

Moving forward, the field would benefit from standardized reference materials and benchmarking protocols to enable cross-laboratory validation of bias mitigation strategies. Furthermore, the integration of efficiency-aware computational models into standard analysis pipelines will help bridge the gap between relative measurements and biologically meaningful absolute abundances. Through continued methodological refinement and validation, the scientific community can overcome the challenges of amplification bias, unlocking the full potential of multi-template PCR for quantitative biological investigation.

Polymerase Chain Reaction (PCR) is a foundational technique in molecular biology, yet its efficiency and accuracy can be severely compromised by sequence-specific biases, particularly in multi-template amplification. Traditional explanations for PCR failure have centered on factors such as GC-content, amplicon length, and primer annealing temperatures. However, recent research employing advanced deep learning models has uncovered a more precise and previously underappreciated mechanism: adapter-mediated self-priming. This technical guide synthesizes recent findings that utilize convolutional neural networks to elucidate how specific sequence motifs adjacent to primer binding sites facilitate self-priming, leading to significant amplification inefficiencies and skewed quantitative results. This insight, framed within a broader thesis on how secondary structures dictate PCR efficiency, challenges long-standing design assumptions and provides a new roadmap for optimizing nucleic acid amplification in research and diagnostic applications.

The Problem of Non-Homogeneous Amplification in Multi-Template PCR

Multi-template PCR, essential for high-throughput sequencing and DNA data storage, is plagued by non-homogeneous amplification. This results in skewed abundance data that compromises the accuracy and sensitivity of downstream analyses [10]. During serial amplification of complex templates, a progressive broadening of coverage distribution is observed, where a subset of sequences becomes severely depleted or drops out entirely [10].

The exponential nature of PCR means that even small, sequence-specific differences in amplification efficiency are dramatically compounded over multiple cycles. For instance, a template with an amplification efficiency just 5% below the mean will be underrepresented by approximately half after only 12 cycles—a common cycle number in Illumina library preparation [10]. While factors like GC-content, amplicon length, and polymerase choice have historically been blamed, their mitigation often fails to resolve the imbalance, suggesting the involvement of other, more specific sequence-based factors [10].

Table 1: Traditional Factors Affecting PCR Efficiency and Common Optimization Strategies

| Factor | Effect on PCR | Common Optimization Strategy |
|---|---|---|
| GC-Rich Content [23] | Strong hydrogen bonding and secondary structures hinder polymerase progression and primer annealing. | Use of additives (DMSO, betaine), specialized polymerases, adjusted thermal cycling [23]. |
| Primer Design [12] | Poorly designed primers lead to mispriming, primer-dimers, and non-specific amplification. | Optimization of primer concentration (0.2-1.0 µM), annealing temperature, and 3'-end stability [12] [24]. |
| Template Quality & Length [12] | Degraded template or very long/short fragments lead to low yield or false negatives. | Use of high-quality, intact DNA; fragment size selection (200-500 bp recommended) [12]. |
| Mg²⁺ Concentration [12] | Affects primer annealing, duplex stability, and polymerase activity. | Titration around a standard starting point of 2.0 mM [12]. |

Deep Learning Approach to Predict Amplification Efficiency

Model Architecture and Training

To systematically investigate sequence-specific amplification efficiency, researchers employed a one-dimensional convolutional neural network (1D-CNN). This model was trained on large, reliably annotated datasets derived from synthetic DNA pools, which contained thousands of random sequences with common terminal primer binding sites [10]. The use of synthetic pools precluded biases from biological sequence motifs, allowing the model to focus on intrinsic sequence properties affecting PCR.
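
To make the core operation of a 1D-CNN concrete, the sketch below one-hot encodes a DNA sequence and slides a single filter along it. This shows only the convolution step in plain Python. It is not the architecture, layer count, or trained weights of the model in [10], and the "GGC" filter is an arbitrary hand-made example.

```python
BASES = "ACGT"


def one_hot(seq: str):
    """Encode a DNA string as a list of 4-element rows (A, C, G, T)."""
    return [[1.0 if base == b else 0.0 for b in BASES] for base in seq.upper()]


def conv1d_scan(encoded, kernel):
    """Slide a (k x 4) filter over a (L x 4) encoding; returns L - k + 1 scores."""
    k = len(kernel)
    scores = []
    for i in range(len(encoded) - k + 1):
        score = sum(encoded[i + j][c] * kernel[j][c]
                    for j in range(k) for c in range(4))
        scores.append(score)
    return scores


# Hypothetical filter that responds most strongly to the motif "GGC"
motif_filter = one_hot("GGC")
activations = conv1d_scan(one_hot("ATGGCA"), motif_filter)
print(activations)  # [0.0, 1.0, 3.0, 1.0]
```

In a trained network the filter weights are learned rather than hand-set, and many filters are stacked with nonlinearities. The position of the peak activation is what lets such a model capture where along the sequence a motif occurs.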

The model was designed to predict sequence-specific amplification efficiencies from sequence information alone. It achieved an Area Under the Receiver Operating Characteristic curve (AUROC) of 0.88 and an Area Under the Precision-Recall Curve (AUPRC) of 0.44, successfully identifying the worst-amplifying sequences [10]. This demonstrates the power of deep learning in deciphering complex sequence-property relationships that elude traditional analysis.
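To make the two metrics more concrete, the following sketch computes AUROC on a toy dataset by direct pair counting and reports the AUPRC expected from a random classifier, which equals the prevalence of the positive class. The scores and labels are invented for illustration, and treating "poor amplifier" as the positive class is an assumption about how the study scored its model.

```python
def auroc(scores, labels):
    """Probability that a random positive is scored above a random negative.

    Ties count as half a win. `labels` uses 1 for the positive class
    (assumed here to be "poor amplifier") and 0 otherwise.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

def random_auprc_baseline(labels):
    """Expected AUPRC of a random classifier: the positive-class prevalence."""
    return sum(labels) / len(labels)

# Toy example: 2 poor amplifiers among 8 sequences (hypothetical values).
toy_scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
toy_labels = [1, 0, 1, 0, 0, 0, 0, 0]
print(round(auroc(toy_scores, toy_labels), 3))  # 0.917
print(random_auprc_baseline(toy_labels))        # 0.25
```

Because the random baseline for AUPRC equals the class prevalence, an AUPRC of 0.44 is best read against how rare the poorly amplifying sequences are in the pool.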

Experimental Validation of Predictions

The model's predictions were rigorously validated through orthogonal experiments. When sequences categorized by the model as having low efficiency were tested in single-template qPCR, they showed significantly lower amplification efficiencies [10]. Furthermore, when a subset of these poorly performing sequences was re-synthesized into a new oligo pool and amplified, they were consistently and reproducibly under-represented, effectively "drowned out" after 60 PCR cycles [10]. This confirmed that the failure is an intrinsic property of the sequence itself, independent of the pool's composition.

The CluMo Framework: Identifying Self-Priming as a Key Failure Mechanism

The CluMo Interpretation Framework

A key innovation in this research was the development of CluMo (Motif Discovery via Attribution and Clustering), a deep learning interpretation framework designed to move beyond the "black box" nature of neural networks [10]. CluMo identifies specific sequence motifs that are closely associated with poor amplification by analyzing the attribution scores from the trained 1D-CNN. It streamlines global motif discovery by aggregating individual nucleotide-level attributions into shared, interpretable motifs, overcoming challenges associated with variable motif lengths and clustering decisions [10].

The Self-Priming Mechanism

The application of CluMo revealed that specific motifs adjacent to adapter priming sites were strongly associated with poor amplification efficiency [10]. The analysis led to the elucidation of adapter-mediated self-priming as a major mechanism causing PCR failure.

In this mechanism, a segment of the template sequence itself, near the 3' end of the intended primer binding site, acts as an unintended internal primer. This occurs when a region within the template is complementary to the adapter sequence. During the annealing step, the adapter can bind to this internal site instead of its intended target at the sequence terminus. The polymerase then begins extension, which is futile as it does not generate the correct amplicon. This mis-priming event effectively sequesters reagents and inhibits the proper amplification of the template, leading to its severe under-representation in the final product [10].
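A simple screen for this kind of internal complementarity can be sketched as follows. This is not the CluMo method; it is a plain string search that assumes a perfect match to the last `k` bases at the adapter's 3' end is enough to flag a candidate site. The adapter sequence, the insert, and the default `k = 6` are all hypothetical placeholders.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA sequence (A, C, G, T only)."""
    return seq.upper().translate(COMPLEMENT)[::-1]

def find_internal_priming_sites(insert: str, adapter: str, k: int = 6) -> list:
    """Return 0-based positions in `insert` that are perfectly complementary
    to the last `k` bases (3' end) of `adapter`.

    Both sequences are written 5'->3'. The choice of `k` is an assumption,
    not a value taken from the cited study.
    """
    target = reverse_complement(adapter[-k:])
    insert = insert.upper()
    hits = []
    start = insert.find(target)
    while start != -1:
        hits.append(start)
        start = insert.find(target, start + 1)  # allow overlapping matches
    return hits

# Hypothetical adapter and insert, for illustration only.
adapter = "GATTACAGGCTTCA"
insert = "AAATGAAGCCCC"
print(find_internal_priming_sites(insert, adapter))  # [3]
```

A production screen would more plausibly score partial matches or duplex stability rather than require exact complementarity, so this should be read as a first-pass filter only.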

Diagram 1: Deep learning and motif discovery workflow.

Impact and Practical Applications

Quantifiable Improvements in Amplicon Library Design

The practical application of these deep learning insights is significant. By enabling the design of inherently homogeneous amplicon libraries, the approach reduces the required sequencing depth to recover 99% of amplicon sequences by fourfold [10]. This translates directly into cost savings and increased efficiency for sequencing projects. Furthermore, the ability to identify and avoid sequences prone to self-priming opens new avenues for improving DNA amplification in genomics, diagnostics, and synthetic biology [10].

Table 2: Key Quantitative Findings from the Deep Learning Study

| Metric | Value/Result | Interpretation |
|---|---|---|
| Model Performance (AUROC) [10] | 0.88 | High predictive performance in classifying amplification efficiency. |
| Model Performance (AUPRC) [10] | 0.44 | Good performance in identifying the rare class (poorly amplifying sequences). |
| Fraction of Low-Efficiency Sequences [10] | ~2% | A small but significant subset of sequences has very poor efficiency (~80% of mean). |
| Sequencing Depth Improvement [10] | 4-fold reduction | Drastic increase in library efficiency by avoiding self-priming sequences. |
| Efficiency of Worst Sequences [10] | As low as 80% | Relative to population mean; leads to halving of relative abundance every ~3 cycles. |

Integration with Existing Optimization Strategies

This new understanding complements rather than replaces traditional optimization methods. For instance, while addressing self-priming tackles a major cause of failure, optimizing factors like Mg²⁺ concentration (typically 1.5-4.0 mM) and using specialized polymerases (e.g., Pfu for fidelity, Taq for yield) remains crucial for overall success [12]. The deep learning model provides a targeted, pre-emptive design strategy, while wet-lab optimizations fine-tune the reaction conditions.

Detailed Experimental Protocols

Protocol 1: Generating Training Data via Serial Amplification

This protocol was used to create the large-scale dataset for training the deep learning model [10].

- Pool Design: Synthesize a pool of DNA oligonucleotides (e.g., 12,000 sequences) containing random insert sequences flanked by common, truncated TruSeq adapter sequences.

- Serial PCR Amplification: Perform a series of six consecutive PCR reactions, with 15 cycles each.

- Sequencing: After each 15-cycle reaction, purify the product and prepare a sample for high-throughput sequencing.

- Efficiency Calculation: Map sequencing reads back to the reference pool. For each sequence, fit the coverage trajectory over the serial amplifications to an exponential PCR model to derive its sequence-specific amplification efficiency \( \varepsilon_i \). This creates a labeled dataset of sequences and their corresponding efficiencies.
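The efficiency calculation in the last step can be approximated with a log-linear fit. The sketch below assumes that a sequence's coverage relative to the pool average changes by a constant factor per cycle, so that the slope of log(relative coverage) against cycle number gives the log of its relative efficiency. The study's actual exponential model may differ in detail, and the data here are synthetic.

```python
import math

def fit_relative_efficiency(cycles, relative_coverage):
    """Least-squares fit of log(relative coverage) against cycle number.

    Returns the per-cycle efficiency relative to the pool average
    (1.0 = average amplifier). Coverage values must be positive; sequences
    that drop to zero reads would need separate handling.
    """
    logs = [math.log(c) for c in relative_coverage]
    n = len(cycles)
    mean_x = sum(cycles) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in cycles)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(cycles, logs))
    return math.exp(sxy / sxx)

# Synthetic trajectory sampled after each of six 15-cycle reactions,
# generated with a known relative efficiency of 0.9 (illustrative only).
cycles = [15, 30, 45, 60, 75, 90]
coverage = [0.9 ** c for c in cycles]
print(round(fit_relative_efficiency(cycles, coverage), 3))  # 0.9
```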

Protocol 2: Validating Self-Priming with Orthogonal qPCR

This protocol validates the amplification efficiency of sequences predicted to be poor amplifiers by the model [10].

- Sequence Selection: Arbitrarily select sequences from the pool that were categorized by the model as having high, average, or low amplification efficiency.

- Single-Template qPCR: Design target-specific primers for each selected sequence. Perform qPCR reactions using serial dilutions of the individual template.

- Efficiency Calculation: For each sequence, generate a standard curve by plotting the Ct values of the dilution series against the log10 of the template amount. Calculate the amplification efficiency (E) from the slope of the curve: \( E = 10^{-1/\text{slope}} - 1 \) [25].

- Correlation: Compare the qPCR-derived efficiencies with the model's predictions to confirm that computationally low-efficiency sequences also perform poorly in a controlled, single-template experiment.
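The standard-curve calculation in Protocol 2 can be sketched as a linear regression of Ct against log10 template amount, followed by the slope-to-efficiency conversion. The dilution series and Ct values below are made up for illustration; a slope near -3.32 corresponds to an efficiency near 100%.

```python
import math

def qpcr_efficiency(template_amounts, ct_values):
    """Fit Ct = slope * log10(amount) + intercept and convert the slope
    to an amplification efficiency E = 10**(-1/slope) - 1.

    Returns (slope, efficiency); efficiency 1.0 means perfect doubling.
    """
    xs = [math.log10(a) for a in template_amounts]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ct_values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ct_values))
    slope = sxy / sxx
    efficiency = 10 ** (-1.0 / slope) - 1.0
    return slope, efficiency

# Hypothetical 10-fold dilution series (copies per reaction) and Ct values.
amounts = [1e6, 1e5, 1e4, 1e3, 1e2]
cts = [15.0, 18.32, 21.64, 24.96, 28.28]
slope, eff = qpcr_efficiency(amounts, cts)
print(round(slope, 2))  # -3.32
print(round(eff, 2))    # 1.0
```

Comparing the efficiency obtained this way for sequences from each predicted category is one way to carry out the correlation step.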

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Kits for PCR and Efficiency Analysis

| Item / Category | Function / Application | Example / Specification |
|---|---|---|
| Specialized PCR Master Mixes [24] | Optimized buffer systems and enzymes for challenging templates (high GC, long amplicons). | Hieff Ultra-Rapid II HotStart PCR Master Mix. |
| PCR Enhancers / Additives [23] | Disrupt secondary structures, improve polymerase processivity on complex templates. | DMSO, Betaine. |
| High-Fidelity DNA Polymerases [12] | Provide superior accuracy for applications requiring low error rates. | Vent or Pfu polymerase. |
| Hot-Start Taq Polymerase [24] | Reduces non-specific amplification and primer-dimer formation at low temperatures. | Standard for routine, high-yield amplification. |
| Synthetic DNA Pools [10] | Generation of controlled, bias-free datasets for model training and validation. | Custom oligo pools with defined adapter sequences. |
| qPCR Reagents & Standards [25] [26] | For absolute or relative quantification and precise measurement of amplification efficiency. | Intercalating dyes (SYBR Green) or probe-based kits. |

Practical Strategies to Overcome Structural Barriers in Your PCR Assays

Polymerase chain reaction (PCR) is a foundational technique in molecular biology, but its efficiency is critically compromised by difficult DNA templates, particularly those prone to forming stable secondary structures. Sequences with high guanine-cytosine (GC) content (>60%) present a significant challenge because each G-C base pair is held together by three hydrogen bonds (versus two for A-T), which raises melting temperatures and promotes the formation of secondary structures such as hairpins, knots, and tetraplexes [27]. These structures hinder DNA polymerase progression and primer annealing, resulting in PCR failure, truncated products, or nonspecific amplification [27] [28]. The core thesis of this research is that these physicochemical barriers can be systematically overcome by employing advanced DNA polymerases engineered for high processivity and robust proofreading activity, thereby restoring amplification efficiency and ensuring sequence accuracy.
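As a quick pre-screen, GC content can be computed both for the whole amplicon and in sliding windows, since a template with moderate overall GC content can still contain a locally GC-rich stretch. The sketch below uses the 60% threshold mentioned above; the 20-nt window size and the example sequence are arbitrary assumptions.

```python
def gc_fraction(seq: str) -> float:
    """Fraction of G and C bases in a DNA sequence."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)

def gc_rich_windows(seq: str, window: int = 20, threshold: float = 0.60) -> list:
    """Return (start, gc_fraction) for every window whose GC fraction
    exceeds `threshold`. Window size is an illustrative choice."""
    hits = []
    for start in range(len(seq) - window + 1):
        gc = gc_fraction(seq[start:start + window])
        if gc > threshold:
            hits.append((start, gc))
    return hits

# Hypothetical 60-nt template: AT-rich flanks around a 20-nt GC-only core.
template = "AT" * 10 + "GC" * 10 + "AT" * 10
print(round(gc_fraction(template), 2))  # 0.33
windows = gc_rich_windows(template)
print(windows[0][0], windows[-1][0])    # 13 27
```

Here the overall GC content is only about 33%, yet windows starting at positions 13 through 27 exceed the threshold, which is the kind of local feature a whole-amplicon average would miss.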

The limitations of traditional polymerases like Taq are pronounced with such templates. Their low processivity, meaning that they add relatively few nucleotides per binding event, requires longer extension times and often leaves strands incomplete when the enzyme dissociates at structured regions [29]. Furthermore, they lack a proofreading mechanism, leading to higher error rates that are unacceptable for applications like cloning, sequencing, and functional studies where sequence fidelity is paramount [30]. The engineering of novel polymerases that integrate high processivity with proofreading functions represents a direct solution to the problem of secondary structures, enabling successful amplification of long, GC-rich, and complex targets.

Mechanism of Action: How Advanced Polymerases Overcome Amplification Barriers

The Role of Proofreading (3'→5' Exonuclease) Activity

Fidelity in DNA replication is a critical attribute of high-performance polymerases. The fidelity of a DNA polymerase is defined by its ability to accurately replicate a template, which involves correct nucleotide selection and insertion to maintain canonical Watson-Crick base pairing [30]. High-fidelity polymerases have a strong binding preference for the correct nucleotide during polymerization. When an incorrect nucleotide is incorporated, these enzymes leverage a dedicated 3'→5' exonuclease domain, often called the proofreading function. This domain recognizes the mismatched base and excises it, allowing the polymerase to resume synthesis with the correct nucleotide [30]. This proofreading activity drastically reduces error rates. For example, Q5 High-Fidelity DNA Polymerase possesses an ultra-low error rate of less than 1 error per million bases [30]. This is particularly crucial for applications downstream of amplification, such as cloning, SNP analysis, and next-generation sequencing, where sequence accuracy is non-negotiable [30].
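The practical weight of an error rate can be estimated with a back-of-the-envelope calculation. The sketch below assumes the error rate is expressed per base per template doubling and that errors are independent, so the number of errors per product molecule is approximately Poisson-distributed. The 1 kb amplicon, 25 doublings, and the 100-fold higher comparison rate are illustrative assumptions, not measured values.

```python
import math

def error_free_fraction(error_rate: float, amplicon_length: int, doublings: int) -> float:
    """Approximate fraction of product molecules carrying no errors.

    Assumes `error_rate` is per base per doubling and that errors are
    independent (Poisson approximation).
    """
    expected_errors = error_rate * amplicon_length * doublings
    return math.exp(-expected_errors)

# Illustrative comparison for a 1 kb amplicon after 25 doublings.
print(round(error_free_fraction(1e-6, 1000, 25), 3))  # 0.975
print(round(error_free_fraction(1e-4, 1000, 25), 3))  # 0.082
```

Under these assumptions, an error rate of one per million bases leaves almost all 1 kb products error-free, whereas a rate 100 times higher leaves fewer than one in ten.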

Enhanced Processivity Through Protein Fusion Technology

Processivity is the ability of a polymerase to incorporate a high number of nucleotides without dissociating from the DNA template. Low processivity is a major limitation of traditional polymerases, leading to incomplete synthesis, especially through regions of DNA secondary structure [29]. Inspired by natural replication systems, protein engineers have developed fusion polymerases to address this challenge.

These chimeric enzymes combine a DNA polymerase with a double-stranded DNA (dsDNA) binding protein. A widely adopted strategy involves fusing the polymerase to the Sso7d protein, a 7 kDa, sequence-independent dsDNA binding protein derived from the archaeon Sulfolobus solfataricus [30] [29]. The Sso7d domain binds tightly to the dsDNA backbone behind the polymerase, effectively tethering the enzyme to the template. This physical stabilization dramatically increases processivity, allowing the polymerase to read through long amplicons and GC-rich structures that would otherwise cause dissociation [30] [29]. The benefits of this fusion technology are multifold:

- Longer Amplicons: Capable of amplifying up to 20 kb from plasmid templates [30].

- Faster Extension Rates: Less extension time is required per kb synthesized [31].

- Improved Tolerance to Inhibitors: The strong DNA binding allows the polymerase to function in the presence of common PCR inhibitors found in crude samples [29].

The following diagram illustrates the synergistic mechanism of a fusion polymerase like Q5 or Phusion, combining proofreading and enhanced processivity to overcome secondary structures.

Comparative Analysis of High-Performance DNA Polymerases

The biotechnology market offers several engineered polymerases that incorporate the principles of high fidelity and processivity. Their performance varies, making certain enzymes more suitable for specific challenges like GC-rich amplification. The table below summarizes key commercial polymerases and their attributes.

Table 1: Comparison of High-Processivity and Proofreading DNA Polymerases

| Polymerase Name | Key Technology / Features | Reported Fidelity (vs. Taq) | Recommended Amplicon Length | Best Suited For |
|---|---|---|---|---|
| Q5 High-Fidelity [30] [28] | Sso7d fusion, strong proofreading | >100x higher [30] | Up to 10 kb (gDNA), 20 kb (plasmid) [30] | GC-rich templates, cloning, NGS library prep |
| Phusion High-Fidelity [29] [28] | Sso7d fusion, proofreading | >100x higher [29] | Up to 20 kb [29] | Long-range PCR, difficult templates, high yield |
| KAPA HiFi [32] | Engineered for intrinsic processivity | 100x higher [32] | Up to 11 kb (genomic) [32] | Extremely high fidelity (lowest error rate), GC-rich targets up to 84% GC [32] |