Harnessing Neural Networks for Enzyme Engineering: From Stability Optimization to Functional Discovery

Abstract

This article provides a comprehensive overview of the transformative role neural networks are playing in enzyme engineering and stability optimization. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of machine learning in biocatalysis, detailing advanced methodologies from graph neural networks for substrate specificity prediction to self-driving labs for automated optimization. The content addresses critical troubleshooting aspects like data scarcity and model generalization, while rigorously evaluating model performance through experimental validation and comparative analysis. By synthesizing the latest research, this review serves as a strategic guide for leveraging artificial intelligence to accelerate the development of robust, efficient enzymes for biomedical and industrial applications.

The New Paradigm: How Neural Networks Are Reshaping Enzyme Engineering

The field of biocatalyst development is undergoing a profound transformation, moving from traditional directed evolution approaches toward sophisticated artificial intelligence (AI)-driven design. Directed evolution (DE), long the workhorse of protein engineering, mimics natural selection by applying iterative rounds of mutagenesis and screening to accumulate beneficial mutations [1]. However, this approach functions as a greedy hill-climbing optimization on the protein fitness landscape, often becoming trapped in local optima when mutations exhibit non-additive epistatic behavior [1] [2]. The limitations of DE are particularly pronounced when engineering epistatic residues in enzyme active sites or binding interfaces, where synergistic mutational effects are critical for function but difficult to navigate via stepwise mutagenesis [1] [2].

The integration of machine learning (ML) is overcoming these limitations by enabling predictive modeling of sequence-function relationships across vast combinatorial spaces. This computational shift represents more than just an acceleration of existing processes; it constitutes a fundamental change in engineering philosophy from empirical optimization to predictive design [3] [4]. AI-driven methods can now leverage patterns learned from millions of natural protein sequences and structures, augmented with experimental data, to navigate fitness landscapes more intelligently and escape local optima [3] [4]. This paradigm shift is unlocking new possibilities in biocatalyst development, from optimizing natural enzymes for industrial conditions to creating entirely new-to-nature enzymatic functions through de novo design [5] [4].

Quantitative Comparison of Engineering Methods

The performance advantages of ML-assisted methods can be quantitatively assessed across multiple metrics, as shown in Table 1. These comparisons highlight the efficiency gains achievable through computational approaches.

Table 1: Performance Comparison of Enzyme Engineering Methods

| Method | Typical Screening Effort | Key Advantages | Reported Efficiency Gains | Best For |
|---|---|---|---|---|
| Traditional Directed Evolution | 10³-10⁴ variants per round | Simple implementation; No prior knowledge needed | Baseline (1x) | Initial optimization of highly active starting scaffolds |
| Active Learning-assisted DE (ALDE) [1] | ~100s of variants per round | Efficient exploration of epistatic landscapes; Uncertainty quantification | 12% to 93% product yield in 3 rounds [1] | Challenging landscapes with strong epistasis |
| DeepDE [6] | ~1,000 variants per round | Explores triple mutants; Mitigates data sparsity | 74.3-fold activity increase in 4 rounds [6] | Maximizing activity improvements with limited screening |
| ML-guided Cell-Free Engineering [7] | 1,217 variants mapped in parallel | Ultra-high throughput; Multiple reactions simultaneously | 1.6- to 42-fold improved activity across 9 pharmaceuticals [7] | Multi-objective optimization and substrate scope engineering |
| Full Computational Design [5] | Dozens of designs | No experimental optimization required; Novel active sites | Catalytic efficiency of 12,700 M⁻¹s⁻¹ for Kemp eliminase [5] | Creating entirely new enzymes not found in nature |

The quantitative advantages extend beyond simple efficiency metrics. A comprehensive evaluation across 16 diverse protein fitness landscapes revealed that ML-assisted directed evolution (MLDE) provides the greatest advantage on landscapes that are most challenging for traditional DE, particularly those with fewer active variants and more local optima [2]. Furthermore, the incorporation of focused training using zero-shot predictors that leverage evolutionary, structural, and stability knowledge consistently outperforms random sampling for both binding interactions and enzyme activities [2].

Methodologies and Experimental Protocols

Active Learning-Assisted Directed Evolution (ALDE)

ALDE represents a significant advancement over traditional DE by incorporating iterative model updating and uncertainty quantification to guide exploration of the fitness landscape [1]. The protocol consists of four key phases:

Design Space Definition: Select 3-5 epistatic residues that form functional units (e.g., active site residues). For the ParPgb optimization campaign, researchers selected five active-site residues (W56, Y57, L59, Q60, and F89; WYLQF) positioned above the distal face of the heme cofactor, which were known to display epistatic effects and impact non-native activity [1].

Initial Library Construction: Generate an initial combinatorial library using NNK degenerate codons via PCR-based mutagenesis. In the ParPgb case study, this involved simultaneous mutation at all five positions under study through sequential rounds of PCR-based mutagenesis [1].

Iterative ALDE Cycles:

- Wet-lab Assay: Express and screen variants for target function(s)

- Model Training: Train supervised ML models (Gaussian processes or random forests) on collected sequence-fitness data

- Uncertainty Quantification: Apply frequentist uncertainty metrics to identify promising regions

- Variant Selection: Choose next batch balancing exploration vs. exploitation

- The ParPgb implementation required only three rounds of wet-lab experimentation to improve the yield of a desired product from 12% to 93% [1]

Validation: Test top-performing variants under relevant conditions

Key Implementation Details: The method uses an objective function that explicitly optimizes for the desired property. For the cyclopropanation reaction, this was defined as the difference between the yield of the desired cis-product and the yield of the trans-product [1]. The computational component can be implemented using the codebase at https://github.com/jsunn-y/ALDE [1].
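
The objective and the variant-selection step can be sketched in a few lines of Python. This is a minimal illustration, not the ALDE codebase: the upper-confidence-bound (mean plus beta times standard deviation) acquisition rule, the `select_batch` helper, the ensemble predictions, and the variant names are all assumptions made for the example.

```python
from statistics import mean, stdev


def cyclopropanation_objective(cis_yield, trans_yield):
    """Objective described in the text: cis-product yield minus trans-product yield."""
    return cis_yield - trans_yield


def select_batch(ensemble_predictions, batch_size, beta=1.0):
    """Rank candidates by mean + beta * std over ensemble members (UCB-style).

    ensemble_predictions maps a variant name to the objective values predicted
    by each member of a hypothetical model ensemble. beta = 0 is pure
    exploitation; larger beta favours uncertain (unexplored) variants.
    """
    scores = {
        variant: mean(preds) + beta * stdev(preds)
        for variant, preds in ensemble_predictions.items()
    }
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:batch_size]


# Made-up predictions for three hypothetical five-residue variants.
toy_predictions = {
    "WYLQF": [0.10, 0.12, 0.11],  # low predicted objective, low uncertainty
    "AYLQF": [0.40, 0.42, 0.41],  # confidently good
    "WGLQF": [0.10, 0.70, 0.40],  # uncertain, worth exploring
}

exploring_batch = select_batch(toy_predictions, batch_size=2, beta=1.0)
greedy_batch = select_batch(toy_predictions, batch_size=1, beta=0.0)
print(exploring_batch, greedy_batch)
```

With `beta=1.0` the uncertain variant is ranked first, while `beta=0.0` picks the variant with the highest mean prediction, which is the exploration versus exploitation trade-off named in the protocol.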

DeepDE for Iterative Protein Optimization

DeepDE addresses the data sparsity problem in protein engineering by combining supervised learning on approximately 1,000 mutants with a mutation radius of three, enabling exploration of a much larger sequence space than single or double mutant approaches [6]. The protocol involves:

Library Design:

- Start with a training dataset of 1,000 single or double mutants

- Set mutation radius to three for each evolution round

- The vast combinatorial library of ~1.5×10¹⁰ variants exceeds practical screening limits but enables computational prioritization [6]
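
The library size quoted above can be reproduced with a short calculation. The sketch assumes a 238-residue protein (the usual length given for avGFP, which is not stated in the text) and counts variants carrying exactly three substitutions; `count_variants` is a name introduced here for illustration.

```python
from math import comb


def count_variants(seq_length, n_mutations, alphabet_size=20):
    """Number of variants with exactly n_mutations substitutions.

    Choose which positions are mutated, then pick one of the
    (alphabet_size - 1) non-wild-type residues at each of them.
    """
    return comb(seq_length, n_mutations) * (alphabet_size - 1) ** n_mutations


AVGFP_LENGTH = 238  # assumption: sequence length is not given in the text

triple_mutants = count_variants(AVGFP_LENGTH, 3)
print(f"{triple_mutants:.2e}")  # on the order of 1.5e10
```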

Model Training:

- Utilize three deep learning methods: unsupervised, weak-positive only, and supervised learning

- Train on the 1,000-mutant dataset with a 1:9 ratio of single to double mutants

- Evaluate using Spearman rank correlation and normalized discounted cumulative gain (NDCG) metrics [6]
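
Both evaluation metrics can be written with the standard library alone. The sketch below is illustrative: NDCG has several variants, and the one shown (measured fitness as the gain, log2 position discount, non-negative fitness values) is an assumption rather than the exact definition used in [6].

```python
import math


def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def spearman(measured, predicted):
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(average_ranks(measured), average_ranks(predicted))


def ndcg(measured, predicted):
    """DCG of the predicted ordering divided by DCG of the ideal ordering."""
    by_prediction = sorted(range(len(measured)), key=lambda i: predicted[i], reverse=True)
    dcg = sum(measured[i] / math.log2(pos + 2) for pos, i in enumerate(by_prediction))
    ideal = sorted(measured, reverse=True)
    idcg = sum(gain / math.log2(pos + 2) for pos, gain in enumerate(ideal))
    return dcg / idcg


measured_fitness = [1.0, 2.0, 3.0, 4.0]   # toy measurements
predicted_fitness = [0.1, 0.4, 0.3, 0.9]  # toy model scores
rho = spearman(measured_fitness, predicted_fitness)
score = ndcg(measured_fitness, predicted_fitness)
```

Spearman correlation weights all rank errors equally, whereas NDCG rewards placing the best variants at the top of the list, which is closer to how predictions are used when only a few variants can be screened.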

Design Strategies:

- Direct Mutagenesis (DM): Direct prediction of beneficial triple mutants with specific amino acid substitutions

- Screening-coupled Mutagenesis (SM): Prediction of beneficial triple mutation sites followed by experimental construction of 10 libraries for screening [6]

Iterative Evolution:

- Implement 4-5 rounds of evolution

- For GFP engineering, Path III (SM only) consistently delivered the most promising results, outperforming other paths and achieving a 74.3-fold increase in activity by round 4 [6]

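Like ALDE, this workflow is iterative and human-in-the-loop: each round of wet-lab screening supplies the data used to retrain the model, which then proposes the next set of variants.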

ML-Guided Cell-Free Protein Engineering

This approach integrates cell-free DNA assembly, cell-free gene expression, and functional assays to rapidly map fitness landscapes [7]. The protocol enables ultra-high throughput screening:

Cell-Free DNA Assembly:

- Design primers containing nucleotide mismatches to introduce desired mutations via PCR

- Digest parent plasmid with DpnI

- Perform intramolecular Gibson assembly to form mutated plasmid

- Amplify linear DNA expression templates (LETs) via second PCR [7]

Cell-Free Protein Synthesis:

- Express mutated proteins directly from LETs using cell-free systems

- This bypasses transformation and cloning steps, enabling thousands of sequence-defined mutants to be built in a day [7]

Functional Screening:

- Test enzyme variants against multiple substrates in parallel

- For amide synthetase engineering, the platform evaluated substrate preference for 1,217 enzyme variants in 10,953 unique reactions [7]

Machine Learning Modeling:

- Build augmented ridge regression ML models with evolutionary zero-shot fitness predictors

- Extrapolate to higher-order mutants with increased activity

- This approach delivered 1.6- to 42-fold improved activity across nine pharmaceutical compounds [7]

Successful implementation of computational enzyme engineering requires both wet-lab and dry-lab resources, as detailed in Table 2.

Table 2: Essential Research Reagents and Computational Tools

| Category | Resource | Application | Key Features |
|---|---|---|---|
| Wet-Lab Systems | Cell-free gene expression (CFE) systems [7] | Ultra-high throughput protein synthesis | Bypasses cloning; enables 1,000+ variants/day |
| Wet-Lab Systems | NNK degenerate codon libraries [1] | Initial combinatorial library generation | Covers all 20 amino acids with one stop codon |
| Computational Tools | ALDE codebase [1] | Active learning-assisted directed evolution | Implements uncertainty quantification; https://github.com/jsunn-y/ALDE |
| Computational Tools | ProteinMPNN [4] | Protein sequence design | Generates sequences optimized for given 3D backbone |
| Computational Tools | RFdiffusion [4] | De novo backbone design | Diffusion-based generative model for protein structures |
| Computational Tools | ESM3 [4] | Sequence-structure-function co-generation | Large-scale protein language model for property prediction |
| Model Organisms | avGFP library [6] | Deep learning validation | Well-characterized fitness landscape for benchmarking |
| Model Organisms | ParPgb variants [1] | Epistatic landscape studies | Five active-site residues with known epistasis |
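
The NNK entry in the table can be checked by enumerating the codons directly. The sketch below builds the standard genetic code from its conventional compact string (base order T, C, A, G) and applies the NNK pattern (N = any base, K = G or T). The five-site totals at the end correspond to a five-position library like the ParPgb design space; the variable names are introduced here for illustration.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ... GGG.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO_ACIDS[16 * i + 4 * j + k]
    for (i, a), (j, b), (k, c) in product(enumerate(BASES), repeat=3)
}

# NNK: any base at positions 1 and 2, G or T at position 3.
nnk_codons = [n1 + n2 + k for n1 in BASES for n2 in BASES for k in "GT"]
encoded = [CODON_TABLE[codon] for codon in nnk_codons]

amino_acids_covered = sorted(set(encoded) - {"*"})
stop_codons = [codon for codon in nnk_codons if CODON_TABLE[codon] == "*"]

n_sites = 5
dna_combinations = len(nnk_codons) ** n_sites            # codon-level library size
protein_combinations = len(amino_acids_covered) ** n_sites  # distinct full-length proteins

print(len(nnk_codons), len(amino_acids_covered), stop_codons)
print(dna_combinations, protein_combinations)
```

Because several codons encode the same residue, the DNA-level library is larger than the protein-level one, and amino acids with more NNK codons are over-represented when sampling clones at random.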

Integrated Workflow for AI-Driven Biocatalyst Development

The most powerful implementations combine multiple computational approaches into integrated design pipelines that leverage both physics-based and knowledge-based predictions.

The computational shift in biocatalyst development represents a fundamental transformation in how we engineer enzymatic function. By moving from directed evolution to AI-driven approaches, researchers can now navigate protein fitness landscapes with unprecedented efficiency, particularly for challenging epistatic landscapes [1] [2]. The methods described here—ALDE, DeepDE, and ML-guided cell-free engineering—provide tangible protocols for implementing these approaches in practical laboratory settings.

Future developments will likely focus on multimodal AI systems that integrate diverse data types including sequence, structure, and dynamical information [3] [4]. The emergence of foundation models for proteins, such as ESM3, points toward a future where enzyme design becomes increasingly predictive and less dependent on extensive experimental screening [3] [4]. Furthermore, the integration of de novo design tools like RFdiffusion with active learning methodologies may ultimately enable the full computational design of high-efficiency enzymes for reactions not known in nature [5] [4].

As these computational methods continue to mature, they promise to accelerate the development of biocatalysts for sustainable chemistry, pharmaceutical manufacturing, and biomedical applications, ultimately establishing a new paradigm of predictable, data-driven enzyme engineering.

Enzyme kinetics is the study of the rates of chemical reactions catalyzed by enzymes, providing a quantitative framework for understanding catalytic efficiency and specificity. The parameters Km (Michaelis constant) and kcat (turnover number) are fundamental to this analysis, serving as critical indicators of how an enzyme interacts with its substrate and converts it to product. Within the context of modern enzyme engineering and neural network-based optimization, these kinetic parameters provide the essential ground-truth data for training models to predict enzyme function and design improved biocatalysts [8] [9]. The ratio kcat/Km, known as the specificity constant or catalytic efficiency, combines these individual parameters into a single metric that describes an enzyme's overall effectiveness under specific conditions [10] [11].

This application note details the core concepts of enzyme stability, specificity, and kinetic parameters, providing structured protocols for their determination. The integration of these classical biochemical principles with emerging artificial intelligence (AI) methodologies is revolutionizing the field, enabling the prediction and design of enzymes with tailored properties for applications in drug development, synthetic biology, and industrial biocatalysis [8] [12] [9].

Defining Core Kinetic Parameters

Km (Michaelis Constant)

Km is the Michaelis constant, defined as the substrate concentration at which the reaction rate is half of the maximal velocity (Vmax) [13]. It is mathematically represented as Km = (k₋₁ + kcat)/k₁, where k₁ and k₋₁ are the rate constants for the formation and dissociation of the enzyme-substrate (ES) complex, and kcat is the catalytic rate constant.

- Functional Interpretation: Km is commonly read as a measure of the enzyme's apparent affinity for a given substrate [13]. Strictly, it equals the dissociation constant of the ES complex (Kd = k₋₁/k₁) only when kcat is much smaller than k₋₁; otherwise Km is larger than Kd. With that caveat, a low Km indicates high apparent affinity, meaning the enzyme reaches half-maximal velocity at a lower substrate concentration. Conversely, a high Km signifies low apparent affinity [13].

- Significance in Engineering: In enzyme engineering projects, lowering the Km for a desired substrate is often a target, as it allows for efficient catalysis at lower substrate concentrations, which can be critical for industrial processes [12].
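
The relationship between Km and the dissociation constant of the ES complex follows directly from Km = (k₋₁ + kcat)/k₁. The sketch below compares the two quantities for made-up rate constants; the function names and numerical values are illustrative assumptions, not measured data.

```python
def michaelis_constant(k_on, k_off, kcat):
    """Km = (k_off + kcat) / k_on, with k_on = k1 and k_off = k-1."""
    return (k_off + kcat) / k_on


def dissociation_constant(k_on, k_off):
    """Kd of the ES complex = k_off / k_on."""
    return k_off / k_on


K_ON = 1e7    # M^-1 s^-1, hypothetical association rate constant
K_OFF = 100.0  # s^-1, hypothetical dissociation rate constant

kd = dissociation_constant(K_ON, K_OFF)
km_slow_catalysis = michaelis_constant(K_ON, K_OFF, kcat=1.0)     # kcat << k_off
km_fast_catalysis = michaelis_constant(K_ON, K_OFF, kcat=1000.0)  # kcat >> k_off

print(km_slow_catalysis / kd)  # close to 1: Km approximates Kd
print(km_fast_catalysis / kd)  # much larger than 1: Km overstates Kd
```

When catalysis is slow relative to substrate release, Km and Kd nearly coincide; when catalysis is fast, Km is dominated by kcat/k₁ and no longer reports binding affinity alone.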

kcat (Turnover Number)

kcat, also known as the turnover number, is defined as the maximal number of substrate molecules converted to product per enzyme molecule per second when the enzyme is fully saturated with substrate [10] [13].

- Functional Interpretation: kcat is the first-order rate constant for the conversion of the ES complex to free enzyme and product. In multi-step mechanisms it reflects the slowest (rate-limiting) step or steps of the catalytic cycle, and it is a direct measure of the enzyme's maximum intrinsic catalytic rate [13].

- Significance in Engineering: A high kcat is desirable as it indicates a fast-acting catalyst. In directed evolution and AI-driven design, optimizing kcat is a primary goal for enhancing the throughput of enzymatic reactions [12] [14].

kcat/Km (Catalytic Efficiency or Specificity Constant)

The ratio kcat/Km is a composite parameter that describes an enzyme's catalytic efficiency or specificity for a substrate [10] [11] [13].

- Functional Interpretation: kcat/Km is the apparent second-order rate constant for the reaction between free enzyme and free substrate at low substrate concentrations ([S] << Km) [10] [13]. It incorporates both binding affinity (Km) and catalytic rate (kcat) into a single measure.

- Meaning of the Ratio: Dividing kcat by Km is meaningful because it defines the enzyme's effectiveness when it is not saturated with substrate, a common scenario in physiological conditions. It answers the question: "How good is the free enzyme at performing a reaction with a scarce substrate?" [10].

- Catalytic Perfection: The value of kcat/Km has an upper limit imposed by the rate at which enzyme and substrate can diffuse together in solution, known as the diffusion limit, which is ~10⁸–10⁹ M⁻¹s⁻¹. Enzymes with a kcat/Km approaching this range, such as triosephosphate isomerase, are said to have achieved 'catalytic perfection' [11].

Table 1: Summary of Core Enzyme Kinetic Parameters

| Parameter | Symbol | Definition | Interpretation | Engineering Goal |
|---|---|---|---|---|
| Michaelis Constant | Km | Substrate concentration at half Vmax | Apparent affinity; approximates the ES dissociation constant only when kcat ≪ k₋₁ | Lower Km for higher apparent affinity |
| Turnover Number | kcat | Maximum conversions per enzyme per second at saturation | Intrinsic catalytic rate | Increase kcat for faster rate |
| Catalytic Efficiency | kcat/Km | Ratio of kcat to Km | Specificity constant; overall efficiency under non-saturating conditions | Maximize kcat/Km |

Quantitative Analysis of Kinetic Parameters

The following data, compiled from scientific literature, provides representative examples of Km and kcat values for various enzymes and substrates, illustrating how these parameters define specificity and efficiency.

Table 2: Experimentally Determined Kinetic Parameters for Selected Enzymes

| Enzyme | Substrate | Km | kcat (s⁻¹) | kcat/Km (M⁻¹s⁻¹) | Reference & Context |
|---|---|---|---|---|---|
| C1s Serine Protease | Complement C4 | 0.4 µM | 2.28 | 5.7 × 10⁶ | [11] |
| C1s Serine Protease | Complement C2 | 2.7 µM | 3.51 | 1.3 × 10⁶ | [11] |
| C1s Serine Protease | Ac-Gly-Lys-OMe | 6.7 mM | 0.13 | 1.98 × 10⁴ | [11] |
| C1s Serine Protease | Bz-Arg-OEt | 4.4 mM | 0.0024 | 5.4 × 10² | [11] |
| Beta-Secretase 1 | GLTNIKTEEISEISY-EVEFRWKK* | 4.9 µM | 0.344 | 7.04 × 10⁴ | [11] (Cleaved substrate) |
| Beta-Secretase 1 | SEISY-EVEFRWKK* | 52 µM | 0.234 | 4.5 × 10³ | [11] (Cleaved substrate) |
| N-Myristoyltransferase | Big ET-1 | 0.4 µM | 0.0002 | 5.0 × 10² | [11] |
| N-Myristoyltransferase | Bradykinin | 27.4 µM | 5.75 | 2.1 × 10⁵ | [11] |

*Synthetic peptide substrate. The dash (-) in the sequence indicates the cleavage site.

Analysis of Tabulated Data:

- Specificity Determination: The C1s serine protease shows a clear substrate preference. Its kcat/Km for natural substrate Complement C4 is about 10,000 times higher than for the small synthetic substrate Bz-Arg-OEt, demonstrating a strong specificity for its physiological partner [11].

- Interplay of Km and kcat: For Beta-Secretase 1, the first substrate has both a lower Km (higher affinity) and a higher kcat (faster conversion) than the second, resulting in a significantly greater catalytic efficiency [11]. This highlights how the kcat/Km ratio integrates both factors for a meaningful comparison.

- Context of "Catalytic Perfection": While the kcat/Km values for C1s protease with its natural substrates are high (on the order of 10⁶ M⁻¹s⁻¹), they remain roughly two to three orders of magnitude below the diffusion limit (~10⁸–10⁹ M⁻¹s⁻¹), indicating there is still room for theoretical optimization [11].
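
Catalytic efficiencies of this kind are straightforward to recompute once Km is converted to molar units. The sketch below does this for three of the tabulated enzyme-substrate pairs; the helper name `catalytic_efficiency` and the unit table are illustrative assumptions.

```python
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9}


def catalytic_efficiency(kcat_per_s, km_value, km_unit):
    """Return kcat/Km in M^-1 s^-1 after converting Km to molar units."""
    return kcat_per_s / (km_value * UNIT_TO_MOLAR[km_unit])


# kcat (s^-1) and Km values taken from the table above.
c1s_c4 = catalytic_efficiency(2.28, 0.4, "uM")
c1s_c2 = catalytic_efficiency(3.51, 2.7, "uM")
bace1_long = catalytic_efficiency(0.344, 4.9, "uM")

# Ratio of specificity constants = preference for one substrate over another
# when both compete for the same enzyme at low concentration.
preference_c4_over_c2 = c1s_c4 / c1s_c2

print(f"{c1s_c4:.2e} {c1s_c2:.2e} {bace1_long:.2e} {preference_c4_over_c2:.1f}")
```

Forgetting the unit conversion is a common source of thousand-fold errors, so it is worth keeping Km units explicit whenever values from different sources are combined.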

Experimental Protocol: Determining kcat and Km

This section provides a standardized protocol for determining the kinetic parameters kcat and Km via initial rate velocity measurements.

Principle

The protocol is based on the Michaelis-Menten model of enzyme kinetics. By measuring the initial rate of reaction (v₀) at a series of substrate concentrations ([S]), the parameters Vmax and Km can be determined by fitting the data to the Michaelis-Menten equation. The kcat is then calculated from Vmax [13].

Materials and Equipment

Table 3: Research Reagent Solutions and Essential Materials

| Item | Specification/Function |
|---|---|
| Purified Enzyme | >95% purity, accurately quantified (e.g., via Bradford assay). |
| Substrate | High-purity, prepared as a concentrated stock solution. |
| Reaction Buffer | Physiologically relevant pH and ionic strength; may include essential cofactors. |
| Stop Solution | Halts the reaction at precise timepoints (e.g., acid, denaturant). |
| Detection System | Spectrophotometer, fluorometer, or HPLC-MS to quantify product formation. |
| Temperature Control | To maintain constant temperature throughout the assay. |
| Cuvettes/Microplates | Reaction vessels compatible with the detection system. |

Step-by-Step Procedure

- Reaction Setup: Prepare a master mix containing buffer, cofactors, and a fixed, limiting concentration of enzyme.

- Substrate Dilution Series: Create a series of substrate solutions covering a range typically from 0.2Km to 5Km. It is critical to include concentrations both below and above the expected Km.

- Initiation and Timing: Initiate the reactions by adding the enzyme master mix to each substrate solution. For each reaction, allow it to proceed for a predetermined, short time interval within the initial linear phase of the reaction.

- Reaction Quenching: Stop each reaction at its precise timepoint using the stop solution.

- Product Quantification: Measure the amount of product formed in each quenched reaction using the appropriate detection system.

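- Data Calculation: For each [S], calculate the initial velocity (v₀) as the concentration of product formed per unit time (e.g., µM/s). Do not divide by the enzyme concentration at this stage; that normalization is applied once, when kcat is calculated from Vmax.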

Data Analysis and Fitting

- Plot Data: Plot v₀ versus [S]. The plot should resemble a hyperbolic curve.

- Non-Linear Regression: Use software (e.g., GraphPad Prism, Python/SciPy) to fit the data directly to the Michaelis-Menten equation: v₀ = (Vmax * [S]) / (Km + [S]).

- Extract Parameters: From the fit, obtain the values for Vmax and Km.

- Calculate kcat: Using the relationship kcat = Vmax / [Eₜ], where [Eₜ] is the total molar concentration of active enzyme in the reaction.
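
The fitting steps above can be sketched without third-party packages. In practice `scipy.optimize.curve_fit` is the usual choice; the stand-in below is an assumption made to keep the example self-contained. For any trial Km, the least-squares Vmax has a closed form, so the fit reduces to a one-dimensional golden-section search over log(Km). The substrate concentrations, velocities, and enzyme concentration are synthetic.

```python
import math


def michaelis_menten(s, vmax, km):
    return vmax * s / (km + s)


def _best_vmax(km, s_vals, v_vals):
    # For fixed Km the model is linear in Vmax, so least squares is closed-form.
    x = [s / (km + s) for s in s_vals]
    return sum(xi * vi for xi, vi in zip(x, v_vals)) / sum(xi * xi for xi in x)


def _sse(km, s_vals, v_vals):
    vmax = _best_vmax(km, s_vals, v_vals)
    return sum((v - michaelis_menten(s, vmax, km)) ** 2 for s, v in zip(s_vals, v_vals))


def fit_michaelis_menten(s_vals, v_vals, iterations=100):
    """Return (Vmax, Km) minimizing the sum of squared residuals."""
    lo = math.log(min(s_vals) / 100.0)  # search bounds on log(Km)
    hi = math.log(max(s_vals) * 100.0)
    ratio = (math.sqrt(5.0) - 1.0) / 2.0
    for _ in range(iterations):
        left = hi - ratio * (hi - lo)
        right = lo + ratio * (hi - lo)
        if _sse(math.exp(left), s_vals, v_vals) < _sse(math.exp(right), s_vals, v_vals):
            hi = right
        else:
            lo = left
    km = math.exp((lo + hi) / 2.0)
    return _best_vmax(km, s_vals, v_vals), km


# Synthetic, noise-free data: Vmax = 1.2 uM/s, Km = 4 uM, [E] = 0.01 uM.
substrate_uM = [0.5, 1.0, 2.0, 5.0, 10.0, 20.0]
velocity_uM_per_s = [michaelis_menten(s, 1.2, 4.0) for s in substrate_uM]
enzyme_uM = 0.01

vmax_fit, km_fit = fit_michaelis_menten(substrate_uM, velocity_uM_per_s)
kcat_fit = vmax_fit / enzyme_uM  # s^-1, since uM/s divided by uM
print(round(vmax_fit, 3), round(km_fit, 3), round(kcat_fit, 1))
```

Fitting the untransformed data avoids the error distortion of linearized plots such as Lineweaver-Burk. With real, noisy measurements it is also worth reporting confidence intervals, which a full regression package provides.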

The Role of Kinetic Parameters in AI-Driven Enzyme Engineering

The precise determination of kcat and Km provides the foundational dataset for developing and training neural networks to predict and design enzyme function. AI models use these parameters to learn the complex relationships between enzyme sequence/structure and catalytic output [9].

Kinetic Data as Training Input

- High-Quality Data Requirement: The performance of AI models is directly dependent on the quality and quantity of kinetic data. Sparse and noisy experimental kcat data in public databases like BRENDA and SABIO-RK has historically been a major limitation [9].

- Feature Input for Models: Modern deep learning approaches, such as the DLKcat model, use substrate structures (represented as molecular graphs) and protein sequences as direct input to predict kcat values across a wide range of organisms, filling gaps in experimental data [9].

AI Applications in Kinetic Parameter Prediction and Optimization

- kcat Prediction: The DLKcat model combines a graph neural network (GNN) for substrates and a convolutional neural network (CNN) for protein sequences to achieve high-throughput kcat prediction, capturing trends such as enzyme promiscuity and the effects of mutations [9].

- Substrate Specificity Prediction: Models like EZSpecificity use cross-attention graph neural networks trained on enzyme-substrate interaction databases to predict substrate specificity with high accuracy, outperforming previous state-of-the-art models [8].

- Informing Enzyme-Constrained Models: Predicted kcat values on a genome scale are used to reconstruct enzyme-constrained genome-scale metabolic models (ecGEMs), which more accurately simulate cellular metabolism, growth phenotypes, and proteome allocation [9].

The integration of classical kinetics with AI modeling creates a powerful feedback loop for enzyme engineering: measured kinetic parameters train the models, and model predictions prioritize the next variants to characterize experimentally.

A rigorous understanding of Km, kcat, and kcat/Km remains fundamental to quantifying enzyme function. These parameters provide an unambiguous language for describing catalytic efficiency and substrate specificity. As the field of enzyme engineering progresses, the integration of classical kinetic profiling with advanced neural network models is creating a powerful paradigm. The accurate data generated by the protocols outlined herein directly fuel AI systems, enabling the predictive design of next-generation enzymes with optimized stability, specificity, and kinetic performance for transformative applications in biotechnology and medicine.

The integration of artificial intelligence with structural biology and enzymology is fundamentally transforming enzyme engineering. The ability to predict enzyme function, stability, and kinetics from sequence and structural data is accelerating the development of novel biocatalysts for therapeutic and industrial applications. This paradigm shift relies on an expanding universe of structured biological data, encompassing protein sequences, three-dimensional structures, and kinetic parameters, that serves as the foundational training ground for sophisticated neural network models [15]. Without these comprehensive datasets, machine learning approaches would lack the necessary context to make accurate predictions for enzyme engineering.

This Application Note details practical methodologies for leveraging these data resources within AI-driven workflows for enzyme stability optimization and kinetic property prediction. We provide structured comparisons of essential databases, step-by-step protocols for implementing cutting-edge deep learning tools, and visual workflows to guide researchers in navigating this complex landscape. The protocols are specifically framed within the context of neural network applications for enzyme engineering, enabling researchers to effectively harness these resources for therapeutic enzyme development.

Kinetic Parameter Databases

Table 1: Primary Databases for Enzyme Kinetic Parameters

| Database | Key Features | Data Points | Data Sources | Primary Applications |
|---|---|---|---|---|
| BRENDA [16] | Most comprehensive enzyme resource; includes kcat, Km values | ~8,500 kinetic values (2016 version); continually updated | Literature mining via KENDA automated text mining | Training data for kinetic prediction models; enzyme function analysis |
| SABIO-RK [16] | High-quality curated enzyme kinetics | Not specified | Manual literature curation | Biochemical modeling; network biology; quality-sensitive applications |
| SKiD [16] | Integrated structural & kinetic data; 3D enzyme-substrate complexes | 13,653 unique enzyme-substrate complexes | BRENDA integration with structural mapping | Structure-activity relationship studies; molecular docking |
| EnzyExtractDB [17] | LLM-extracted kinetic data; expands beyond existing resources | 218,095 enzyme-substrate-kinetics entries (218,095 kcat, 167,794 Km) | Automated extraction from 137,892 publications | Augmenting training data for improved model generalization |

Structural and Sequence Databases

Table 2: Structural and Sequence Resources for Enzyme Engineering

| Resource | Data Type | Key Features | Applications in AI Models |
|---|---|---|---|
| UniProtKB [16] | Protein sequences & annotations | Standardized enzyme identifiers; functional annotations | Sequence embedding generation; feature extraction |
| Protein Data Bank (PDB) [16] | 3D protein structures | Experimental structures of enzyme-ligand complexes | Structural feature input; molecular environment learning |
| PubChem [16] | Substrate structures | Chemical compound database with SMILES representations | Substrate representation in kinetic prediction models |

Experimental Protocols

Protocol 1: Implementing Deep Learning for Kinetic Parameter Prediction with CataPro

Application: Predicting enzyme turnover number (kcat), Michaelis constant (Km), and catalytic efficiency (kcat/Km) for enzyme discovery and engineering.

Principle: CataPro leverages pre-trained protein language models (ProtT5) for enzyme sequence representation and molecular fingerprints (MolT5 + MACCS) for substrate characterization, combining these features in a neural network framework to predict kinetic parameters [18].

Materials:

- Software Requirements: Python 3.8+, CataPro model implementation, RDKit for cheminformatics, PyTorch or TensorFlow

- Data Requirements: Enzyme amino acid sequences in FASTA format, substrate structures in SMILES notation

- Computational Resources: GPU recommended for accelerated inference (≥8GB VRAM)

Procedure:

Data Preparation

- Obtain enzyme amino acid sequences from UniProtKB in FASTA format

- Convert substrate chemical structures to canonical SMILES notation using PubChem or Open Babel

- For mutant enzymes, generate sequences with specific point mutations
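
Generating mutant sequences is easy to get wrong by one position, so a small checked helper is useful. The sketch assumes mutations are written in the common wild-type residue, 1-based position, mutant residue form (for example V3L); `apply_point_mutation` and the toy sequence are hypothetical.

```python
import re

MUTATION_PATTERN = re.compile(r"([A-Z])(\d+)([A-Z])")


def apply_point_mutation(sequence, mutation):
    """Return the sequence carrying one substitution such as 'V3L' (1-based)."""
    match = MUTATION_PATTERN.fullmatch(mutation)
    if match is None:
        raise ValueError(f"Unrecognized mutation format: {mutation!r}")
    wild_type, position, mutant = match.group(1), int(match.group(2)), match.group(3)
    if not 1 <= position <= len(sequence):
        raise ValueError(f"Position {position} is outside the sequence")
    if sequence[position - 1] != wild_type:
        # Guards against numbering offsets (signal peptides, tags, isoforms).
        raise ValueError(
            f"Expected {wild_type} at position {position}, found {sequence[position - 1]}"
        )
    return sequence[: position - 1] + mutant + sequence[position:]


toy_sequence = "MKVLAT"  # made-up six-residue example
mutant_sequence = apply_point_mutation(toy_sequence, "V3L")
print(mutant_sequence)
```

Checking the wild-type residue before substituting catches the frequent case where the numbering in a publication does not match the numbering of the downloaded sequence.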

Feature Generation

- Enzyme Representation: Process enzyme sequences through ProtT5-XL-UniRef50 model to generate 1024-dimensional embedding vectors [18]

- Substrate Representation:

  - Compute MolT5 embeddings (768-dimensional) from SMILES strings

  - Generate MACCS keys fingerprints (167-dimensional binary vectors)

  - Concatenate both representations into a 935-dimensional substrate feature vector

- Feature Integration: Concatenate enzyme and substrate representations to form a 1959-dimensional input vector for the neural network
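
The dimension bookkeeping for the combined input can be sketched as follows. The vectors here are zero-filled placeholders standing in for real ProtT5, MolT5, and MACCS outputs; no model is called. The helper name `build_input_vector` and the concatenation order (enzyme, then MolT5, then MACCS) are assumptions for illustration.

```python
ENZYME_DIM = 1024  # ProtT5 embedding size stated in the text
MOLT5_DIM = 768    # MolT5 embedding size stated in the text
MACCS_DIM = 167    # MACCS keys fingerprint length stated in the text


def build_input_vector(enzyme_embedding, molt5_embedding, maccs_bits):
    """Concatenate enzyme and substrate features after checking their sizes."""
    expected = (
        ("enzyme", enzyme_embedding, ENZYME_DIM),
        ("MolT5", molt5_embedding, MOLT5_DIM),
        ("MACCS", maccs_bits, MACCS_DIM),
    )
    for name, vector, size in expected:
        if len(vector) != size:
            raise ValueError(f"{name} features have length {len(vector)}, expected {size}")
    substrate_features = list(molt5_embedding) + [float(bit) for bit in maccs_bits]
    return list(enzyme_embedding) + substrate_features


# Placeholder features; real values would come from the respective models.
enzyme_placeholder = [0.0] * ENZYME_DIM
molt5_placeholder = [0.0] * MOLT5_DIM
maccs_placeholder = [0] * MACCS_DIM

model_input = build_input_vector(enzyme_placeholder, molt5_placeholder, maccs_placeholder)
print(len(model_input))
```

Validating lengths before concatenation makes it obvious when a different embedding model or fingerprint type has been substituted, which would otherwise silently shift every downstream feature.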

Model Inference

- Load pre-trained CataPro model weights

- Feed the combined enzyme-substrate feature vector through the neural network architecture

- Output predicted kcat (s⁻¹), Km (mM), and/or kcat/Km values

- For comparative analysis, run predictions across multiple enzyme variants or substrates

Validation and Interpretation

- Compare predictions with experimental values when available

- Perform sensitivity analysis on key residues through in silico mutagenesis

- Rank enzyme variants by predicted catalytic efficiency for experimental testing

Troubleshooting:

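- For poor generalization, check how similar the query enzyme is to the training data. When retraining or benchmarking, split the data so that test enzymes share <40% sequence identity with training enzymes; otherwise near-duplicate sequences leak between sets and the reported accuracy overstates how well the model generalizes [18]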

- For substrate representation issues, verify SMILES validity and consider alternative tautomers

- For low confidence predictions, employ ensemble methods or confirm with complementary tools (DLKcat, UniKP)

Protocol 2: Protein Stability Optimization Using RaSP

Application: Predicting ΔΔG changes for single amino acid substitutions to guide stability engineering of therapeutic enzymes.

Principle: RaSP combines self-supervised learning of protein structural environments with supervised fine-tuning on Rosetta-derived stability changes, enabling rapid and accurate prediction of mutation effects [19].

Materials:

- Software Requirements: RaSP implementation (available via web interface or local installation), PDB structure files

- Data Requirements: High-resolution protein structures (<2.5Å resolution recommended), mutation specifications (wild-type residue, position, mutant residue)

- Computational Resources: Standard CPU sufficient for predictions (∼1 second per residue)

Procedure:

Structure Preparation

- Obtain crystal structure or high-quality predicted structure of target enzyme

- Preprocess structure: add missing heavy atoms, optimize side-chain rotamers for residues with poor electron density

- For structures with missing loops, consider homology modeling or AlphaFold2 prediction to complete structure

Mutation Specification

- Prepare a list of single-point mutations to evaluate (e.g., saturation mutagenesis at specific positions)

- Format mutations as: Wild-type residue + position + Mutant residue (e.g., V12L, K45R)

- For comprehensive analysis, generate all 19 possible substitutions at each target position
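
A mutation list in this format can be generated programmatically. The sketch below assumes 1-based residue numbering that matches the input sequence; the sequence itself is a made-up example, and the exact input format expected by RaSP should be checked against its documentation.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def saturation_mutations(sequence, positions):
    """Return all 19 substitutions at each 1-based position, e.g. 'V3L'."""
    mutations = []
    for pos in positions:
        wild_type = sequence[pos - 1]
        if wild_type not in AMINO_ACIDS:
            raise ValueError(f"non-standard residue {wild_type!r} at {pos}")
        for mutant in AMINO_ACIDS:
            if mutant != wild_type:
                mutations.append(f"{wild_type}{pos}{mutant}")
    return mutations


toy_sequence = "MKVLAAGIVGL"  # hypothetical sequence
muts = saturation_mutations(toy_sequence, [3, 5])
print(len(muts))  # 38
```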

Stability Prediction

- Input the protein structure and mutation list to RaSP

- The model processes local atomic environments using a pre-trained 3D convolutional neural network

- Predict ΔΔG values in kcal/mol (negative values indicate stabilization, positive values indicate destabilization)

- For increased reliability, use ensemble prediction (median of 10 model instances)

Result Analysis and Variant Selection

- Filter mutations based on predicted ΔΔG thresholds (typically < -0.5 kcal/mol for stabilizing mutations)

- Avoid strongly destabilizing mutations (ΔΔG > +2.0 kcal/mol) which may compromise protein folding

- Consider structural context: surface residues tolerate more diverse substitutions than buried residues

- Prioritize mutations with predicted stability improvements for experimental validation
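
The thresholds above translate directly into a filtering step. This is a sketch with hypothetical ΔΔG values; the cutoffs are the ones quoted in the protocol and should be tuned to the target protein.

```python
def classify_mutations(ddg_by_mutation, stabilizing_cutoff=-0.5,
                       destabilizing_cutoff=2.0):
    """Split predictions into stabilizing candidates and mutations to avoid.

    ddg_by_mutation maps a mutation label to predicted ddG in kcal/mol
    (negative = stabilizing).
    """
    stabilizing = sorted(
        (m for m, ddg in ddg_by_mutation.items() if ddg < stabilizing_cutoff),
        key=lambda m: ddg_by_mutation[m],  # most stabilizing first
    )
    avoid = sorted(
        m for m, ddg in ddg_by_mutation.items() if ddg > destabilizing_cutoff
    )
    return stabilizing, avoid


# Hypothetical predictions, for illustration only.
predicted_ddg = {"V12L": -0.8, "K45R": 0.3, "G77P": 3.1, "A90S": -1.2}
stabilizing, avoid = classify_mutations(predicted_ddg)
print(stabilizing, avoid)
```

Mutations that fall between the two cutoffs (here K45R) are neither prioritized nor excluded and can be judged on structural context.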

Troubleshooting:

- For unreliable predictions on flexible regions, consider incorporating molecular dynamics simulations

- When working with engineered mutants, ensure the input structure reflects the actual mutated sequence

- For multi-mutant variants, treat summed single-mutation ΔΔG values as a first approximation only, since epistasis can break additivity; run combinatorial predictions when feasible

Protocol 3: Generative AI-Assisted Enzyme Design with VAEs

Application: Engineering therapeutic enzyme variants with enhanced stability and catalytic activity using generative neural networks.

Principle: Variational autoencoders (VAEs) trained on multiple sequence alignments of enzyme families capture co-evolutionary constraints and enable sampling of novel, functional sequences with minimal mutations relative to wild-type [20].

Materials:

- Software Requirements: Python with deep learning libraries (PyTorch/TensorFlow), homology search tools (BLAST, HMMER), multiple sequence alignment tools (MAFFT, ClustalOmega)

- Data Requirements: Comprehensive multiple sequence alignment of target enzyme family, wild-type sequence of therapeutic enzyme

- Computational Resources: GPU recommended for training (≥11GB VRAM), significant RAM for large alignments

Procedure:

Dataset Curation

- Collect homologous sequences of target enzyme using BLAST search against non-redundant protein databases

- Perform multiple sequence alignment using MAFFT or ClustalOmega

- Filter alignment to remove fragments and sequences with excessive gaps (>20% gaps)

- Balance sequence diversity: for therapeutic applications, include weighting to favor human-like sequences
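
The gap-filtering step is simple to express in code. The sketch below assumes the alignment has already been parsed into a name-to-sequence mapping with `-` as the gap character; the toy alignment is invented.

```python
def filter_alignment(aligned, max_gap_fraction=0.20):
    """Keep aligned sequences whose gap fraction is at most the cutoff."""
    kept = {}
    for name, seq in aligned.items():
        if not seq:
            continue  # ignore empty records
        gap_fraction = seq.count("-") / len(seq)
        if gap_fraction <= max_gap_fraction:
            kept[name] = seq
    return kept


toy_msa = {
    "seq1": "MKVLAAGIVG",  # 0% gaps
    "seq2": "MKV-AAGIVG",  # 10% gaps
    "seq3": "M---AAG--G",  # 50% gaps, likely a fragment
}
filtered_msa = filter_alignment(toy_msa)
print(sorted(filtered_msa))  # ['seq1', 'seq2']
```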

Model Training

- Implement VAE architecture with encoder, stochastic latent space, and decoder components

- Train model to reconstruct sequences from the multiple sequence alignment

- For therapeutic applications, use weighted training that inversely correlates with Hamming distance from human wild-type sequence [20]

- Validate model by comparing mutual information patterns in training data versus generated samples
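
One way to realize the weighting step is shown below. The functional form 1 / (1 + d) is an assumption made for illustration; the protocol only states that weights should fall as Hamming distance from the human wild-type grows, and the cited work may use a different formula.

```python
def hamming(a, b):
    """Number of mismatched columns between two aligned sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to the same length")
    return sum(x != y for x, y in zip(a, b))


def training_weights(aligned_seqs, reference):
    """Normalized weights that shrink with distance from the reference.

    Uses 1 / (1 + d), an assumed form chosen only for illustration.
    """
    raw = [1.0 / (1.0 + hamming(seq, reference)) for seq in aligned_seqs]
    total = sum(raw)
    return [w / total for w in raw]


reference = "MKVLAAGIVG"  # stands in for the human wild-type
msa = ["MKVLAAGIVG", "MKVLSAGIVG", "MRVLSAGLVG"]  # distances 0, 1, 3
weights = training_weights(msa, reference)
print([round(w, 3) for w in weights])
```

The resulting weights can be passed to a weighted sampler or used to scale the per-sequence reconstruction loss during training.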

Sequence Generation

- Encode human wild-type enzyme sequence into the latent space representation

- Sample novel variants by adding scaled random noise to the latent vector (control mutation rate via variance scaling)

- Decode perturbed latent vectors to generate novel enzyme sequences

- Filter generated sequences by similarity to human wild-type (typically >95% identity)
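
The sampling and filtering steps can be sketched as follows. The encoder and decoder are omitted because they depend on the trained model; `perturb_latent` and `keep_close_variants` are hypothetical helper names, and the sequences are invented.

```python
import random


def perturb_latent(z, scale, rng):
    """Add Gaussian noise to a latent vector; larger scale = more mutations."""
    return [value + rng.gauss(0.0, scale) for value in z]


def percent_identity(a, b):
    """Percent of identical positions between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must have the same length")
    matches = sum(x == y for x, y in zip(a, b))
    return 100.0 * matches / len(a)


def keep_close_variants(candidates, wild_type, min_identity=95.0):
    """Keep decoded sequences above the identity threshold to wild-type."""
    return [s for s in candidates if percent_identity(s, wild_type) > min_identity]


rng = random.Random(0)
noisy = perturb_latent([0.1, -0.3], scale=0.5, rng=rng)

wild_type = "MKVLAAGIVG" * 4                  # 40 residues, hypothetical
one_mutation = "A" + wild_type[1:]            # 97.5% identity
three_mutations = "AAA" + wild_type[3:]       # 92.5% identity
kept = keep_close_variants([one_mutation, three_mutations], wild_type)
print(len(kept))  # 1
```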

Experimental Prioritization

- Express and purify top candidate variants for biochemical characterization

- Measure thermal stability (melting temperature Tm) and catalytic activity (kcat, Km)

- Compare performance against wild-type enzyme and consensus-designed variants

Troubleshooting:

- If generated sequences are insufficiently human-like, increase weight on human wild-type during training

- If mutation rate is too high, reduce the variance scaling during latent space sampling

- For poor expression, prioritize variants with conserved structural motifs and active site residues

Workflow Visualization

Integrated AI-Driven Enzyme Engineering Workflow

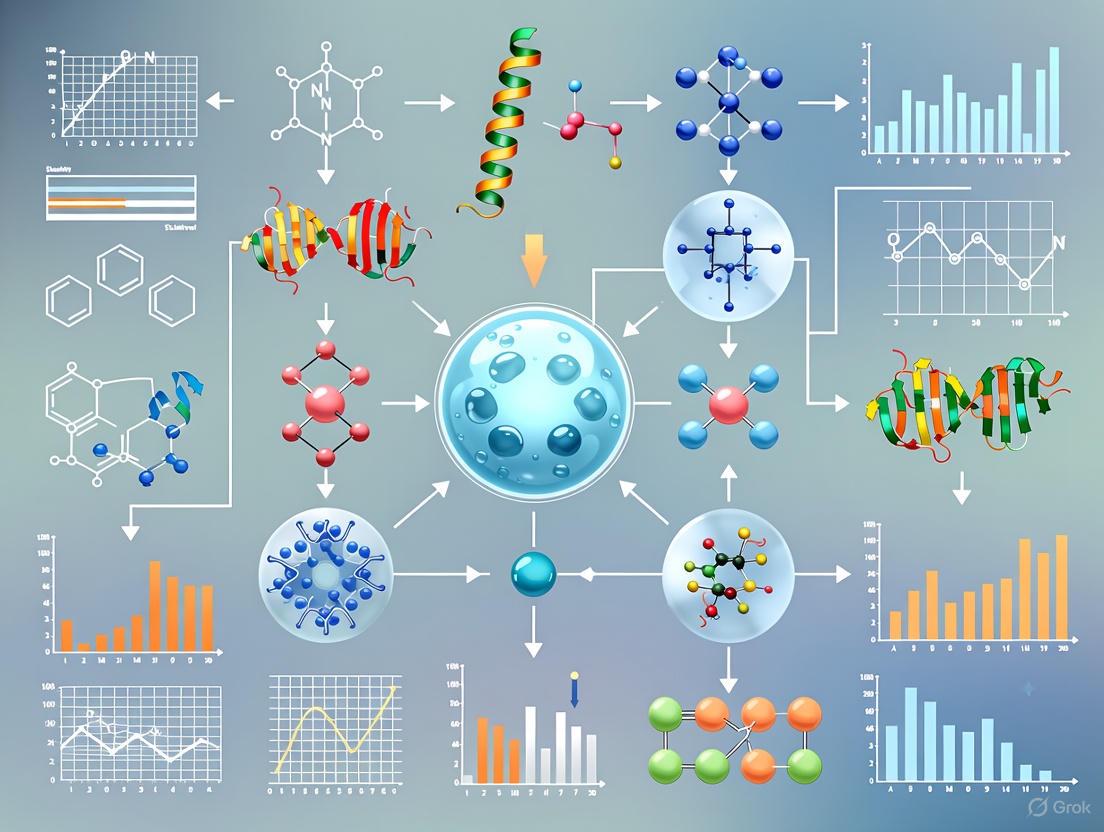

Figure 1: Integrated AI-driven enzyme engineering workflow showing the iterative process between data acquisition, computational modeling, and experimental validation.

Kinetic Parameter Prediction with CataPro

Figure 2: CataPro workflow for enzyme kinetic parameter prediction from sequence and substrate structure inputs.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for AI-Driven Enzyme Engineering

| Tool Name | Type | Function | Access |

|---|---|---|---|

| CataPro [18] | Deep Learning Model | Predicts kcat, Km, and kcat/Km from enzyme sequences and substrate structures | Open source |

| RaSP [19] | Stability Prediction Tool | Rapid prediction of ΔΔG changes for single-point mutations | Web interface & local installation |

| Pythia [21] | Graph Neural Network | Zero-shot ΔΔG prediction with exceptional computational speed | Web server |

| EnzyExtract [17] | Data Extraction Pipeline | LLM-powered extraction of kinetic data from literature | Open source |

| SKiD [16] | Integrated Database | Structure-kinetics mapped database for 13,653 enzyme-substrate complexes | Open access |

| VAE for Enzymes [20] | Generative Model | Samples novel, functional enzyme sequences with minimal mutations | Custom implementation |

| ProtT5 [18] | Protein Language Model | Generates semantic embeddings from amino acid sequences | Open source |

| Rosetta [19] | Modeling Suite | Physics-based protein design and stability calculations | Academic license |

The application of advanced neural network architectures is revolutionizing enzyme engineering and stability optimization research. These models provide powerful tools for predicting enzyme function, designing novel biocatalysts, and understanding structure-function relationships. Graph Neural Networks (GNNs) excel at modeling the complex 3D structure of enzymes as molecular graphs, capturing atomic interactions and spatial relationships critical for catalytic activity. Transformers, with their self-attention mechanisms, process sequential data to model protein sequences and identify patterns governing folding and function. Protein Language Models (pLMs), built on transformer architectures, leverage evolutionary information from massive protein sequence databases to predict functional properties and guide protein design. Together, these architectures form a complementary toolkit for addressing key challenges in biocatalysis, metabolic engineering, and therapeutic development, enabling researchers to move beyond traditional experimental approaches that are often time-consuming and resource-intensive [22] [23] [24].

Graph Neural Networks (GNNs) for Enzyme Structure Analysis

Core Architecture and Principles

Graph Neural Networks are specialized deep learning architectures designed to operate on graph-structured data, making them ideally suited for representing and analyzing enzyme molecules. In GNN-based enzyme modeling, atoms are represented as nodes and chemical bonds as edges, creating a comprehensive molecular graph that preserves structural topology [25] [26]. The key innovation in GNNs is the message-passing mechanism, where nodes iteratively update their representations by exchanging information with their neighboring nodes. This allows the model to capture both local atomic environments and long-range interactions within the enzyme structure—a critical capability for understanding allosteric effects and catalytic mechanisms [26] [27].

GNN architectures exhibit several fundamental properties that make them appropriate for biomolecular data:

- Permutation Invariance: Predictions remain unchanged regardless of how nodes are ordered, ensuring consistent output for identical molecular structures [25] [27].

- Multi-scale Representation: Through multiple message-passing layers, GNNs capture increasingly broader structural contexts, from immediate atomic neighborhoods to domain-level interactions [27].

- Adaptive Receptive Fields: Unlike grid-based models with fixed kernels, GNNs naturally adapt to variable molecular sizes and connectivity patterns [25].
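
Message passing and permutation invariance can be demonstrated on a toy graph. The sketch below uses mean aggregation and a sum readout with scalar node features; real GNN layers add learned weights and nonlinearities, which are left out here to keep the mechanism visible.

```python
import math


def message_passing_step(features, neighbors):
    """One round: each node averages its own feature with its neighbors'."""
    updated = {}
    for node, value in features.items():
        pool = [value] + [features[n] for n in neighbors[node]]
        updated[node] = sum(pool) / len(pool)
    return updated


def graph_readout(features):
    """Order-independent graph-level summary: sum over all nodes."""
    return sum(features.values())


# Toy three-node path graph a-b-c with scalar node features.
features = {"a": 1.0, "b": 2.0, "c": 4.0}
neighbors = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
original = graph_readout(message_passing_step(features, neighbors))

# The same graph with nodes and neighbors listed in a different order.
shuffled_features = {"c": 4.0, "a": 1.0, "b": 2.0}
shuffled_neighbors = {"c": ["b"], "a": ["b"], "b": ["c", "a"]}
shuffled = graph_readout(message_passing_step(shuffled_features, shuffled_neighbors))

print(math.isclose(original, shuffled))  # True
```

Stacking several such steps widens each node's receptive field by one hop per layer, which is the multi-scale behavior described above.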

GNN Variants for Enzyme Engineering

Several specialized GNN architectures have been developed to address specific challenges in enzyme informatics:

Table: GNN Architectures for Enzyme Research

| Architecture | Key Mechanism | Enzyme Engineering Applications | Advantages |

|---|---|---|---|

| Graph Convolutional Networks (GCNs) [26] [27] | Spectral graph convolutions with normalized adjacency matrix | Molecular property prediction, Functional classification | Computationally efficient, Suitable for large graphs |

| Graph Attention Networks (GATs) [26] [27] | Self-attention mechanisms weighting neighbor importance | Active site analysis, Substrate specificity prediction | Handles variable importance of different molecular regions |

| Message Passing Neural Networks (MPNNs) [26] | Generalized framework for neighbor aggregation | Quantum chemical property prediction, Reaction outcome forecasting | Flexible message functions, Incorporates edge features |

| Center-Anchored Hierarchical GNN (CAAH-GNN) [22] | Adaptive hierarchical sampling around active sites | Catalytic specificity recognition, Functional residue identification | Focuses computational resources on catalytically relevant regions |

Application Protocol: Enzyme Specificity Prediction with GNNs

Protocol Title: Structure-Based Enzyme Specificity Prediction Using Graph Neural Networks

Purpose: Predict enzyme substrate specificity from 3D structural data to guide enzyme selection and engineering for biocatalytic applications.

Input Data Requirements:

- Enzyme 3D structure (from PDB or homology modeling)

- Active site annotation (catalytic residues)

- Optional: Substrate structure for docking

Methodology:

Graph Construction [22]:

- Represent enzyme structure as a graph with amino acid residues as nodes

- Define edges based on spatial proximity (e.g., <8Å distance) or chemical interactions

- Encode node features: amino acid type, secondary structure, solvent accessibility, physicochemical properties

- Encode edge features: distance, bond type, interaction strength
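
A residue contact graph of this kind can be built from coordinates alone. The sketch assumes one representative atom per residue (for example Cα, which the protocol does not specify) and uses invented coordinates; node feature encoding is omitted.

```python
import math


def build_contact_graph(coords, cutoff=8.0):
    """Return edges (i, j, distance) for residue pairs closer than cutoff (in Å).

    coords holds one (x, y, z) tuple per residue, e.g. the C-alpha atom
    (an assumption; the protocol does not name the atom).
    """
    edges = []
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            distance = math.dist(coords[i], coords[j])
            if distance < cutoff:
                edges.append((i, j, distance))
    return edges


# Hypothetical coordinates: three residues in a row and one far away.
toy_coords = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (20.0, 0.0, 0.0)]
edges = build_contact_graph(toy_coords)
print([(i, j) for i, j, _ in edges])  # [(0, 1), (0, 2), (1, 2)]
```

The stored distance doubles as an edge feature. For large proteins the quadratic loop is usually replaced with a spatial index such as a k-d tree.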

Model Architecture [22]:

- Implement center-anchored sampling to focus on active site region

- Use Graph Attention Network layers to weight importance of different residues

- Apply hierarchical pooling to capture multi-scale structural features

- Include global readout layer for graph-level predictions

Training Configuration:

- Loss Function: Cross-entropy for specificity classification

- Optimization: Adam optimizer with learning rate 0.001

- Regularization: Dropout (0.2), Weight decay (1e-5)

- Batch Size: 32 (adjust based on GPU memory)

Interpretation and Validation:

- Compute attention weights to identify critical residues

- Compare predictions with experimental mutagenesis data

- Validate on held-out enzyme families to assess generalizability

Transformer Architectures and Protein Language Models

Core Architecture and Principles

Transformers represent a fundamental shift in sequence processing through the self-attention mechanism, which allows the model to weigh the importance of different elements in a sequence when making predictions. The core innovation lies in the multi-head self-attention layer, which processes entire sequences in parallel (unlike recurrent networks) and captures long-range dependencies more effectively [28]. Each attention head can learn to focus on different types of relationships—some capturing local syntactic patterns while others track broader semantic context [28].

The transformer architecture consists of three key components:

- Embedding Layer: Converts input tokens (amino acids) into dense vector representations while incorporating positional information [28].

- Transformer Block: Contains multi-head self-attention and feed-forward networks with residual connections and layer normalization [28].

- Output Head: Projects processed representations into task-specific outputs (e.g., probability distributions over possible next tokens) [28].
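
The attention computation at the heart of the transformer block can be written out by hand. This is a single-head sketch of scaled dot-product attention without learned projection matrices, multi-head splitting, or positional encoding; the input vectors are arbitrary.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, attention weights)."""
    dim = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys
        ]
        row = softmax(scores)
        weights.append(row)
        # Each output is the attention-weighted average of the value vectors.
        outputs.append(
            [sum(w * v[c] for w, v in zip(row, values)) for c in range(len(values[0]))]
        )
    return outputs, weights


# Three toy "residue" vectors used as queries, keys and values at once.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
print([round(w, 3) for w in weights[0]])
```

Every position attends to every other position in one step, which is how the architecture captures long-range dependencies without recurrence.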

For protein modeling, transformers have been adapted into specialized Protein Language Models (pLMs) that treat amino acid sequences as sentences in a "protein language" and learn evolutionary patterns from millions of natural sequences [24]. These models capture fundamental principles of protein structure and function without explicit structural information.

Protein Language Model Variants

Protein Language Models can be categorized based on their architectural approach and training objectives:

Table: Protein Language Models for Enzyme Research

| Model Type | Architecture | Training Objective | Enzyme Applications |

|---|---|---|---|

| Encoder-only (BERT-like) [24] | Bidirectional Transformer Encoder | Masked Language Modeling (MLM) | Function prediction, Stability effect of mutations |

| Decoder-only (GPT-like) [24] | Autoregressive Transformer Decoder | Next Token Prediction | De novo enzyme design, Sequence generation |

| Encoder-Decoder [24] | Full Transformer Architecture | Sequence-to-Sequence Learning | Enzyme optimization, Scaffold grafting |

| Specialized Models (Finenzyme) [23] | Conditional Transformer | Transfer Learning + Fine-tuning | EC-specific enzyme generation, Functional annotation |

Application Protocol: Enzyme Function Prediction with pLMs

Protocol Title: Transfer Learning with Protein Language Models for Enzyme Function Prediction

Purpose: Leverage pre-trained pLMs to predict Enzyme Commission (EC) numbers and functional properties from amino acid sequences.

Input Data Requirements:

- Enzyme amino acid sequences (FASTA format)

- EC number annotations for training

- Optional: Structural features, phylogenetic profiles

Methodology:

Model Selection and Setup [23] [24]:

- Select appropriate base model (ESM, ProtTrans, Finenzyme)

- Configure model for transfer learning (partial/full fine-tuning)

- Set up task-specific output heads for multi-label EC classification

Fine-Tuning Strategy [23]:

- Use progressive unfreezing of layers to prevent catastrophic forgetting

- Apply discriminative learning rates (lower for early layers)

- Implement gradient accumulation for effective batch sizes

Training Configuration:

- Loss Function: Focal loss for handling class imbalance in EC numbers

- Optimization: AdamW with cosine annealing learning rate schedule

- Regularization: Weight decay, Layer-wise adaptive rate scaling (LARS)

- Batch Size: 16-64 depending on model size and GPU memory
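
Focal loss down-weights examples the model already classifies confidently, which is why it helps with rare EC classes. The sketch below implements the standard binary form, -(1 - p_t)^γ · log(p_t), averaged over labels for a multi-label setting; the default γ = 2.0 is an assumed value, and the optional class-balancing factor α is omitted.

```python
import math


def focal_loss(probs, targets, gamma=2.0, eps=1e-7):
    """Mean binary focal loss over labels.

    probs:   predicted probabilities, one per label
    targets: 0/1 ground-truth labels
    gamma:   focusing parameter (gamma = 0 recovers cross-entropy)
    """
    total = 0.0
    for p, y in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)      # avoid log(0)
        p_t = p if y == 1 else 1.0 - p       # probability of the true class
        total += -((1.0 - p_t) ** gamma) * math.log(p_t)
    return total / len(probs)


easy = focal_loss([0.9], [1])   # confident and correct
hard = focal_loss([0.1], [1])   # confident and wrong
print(easy < hard)  # True
```

With γ = 2, the well-classified example contributes one hundredth of its cross-entropy loss, so gradient updates concentrate on the hard and rare cases.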

Interpretation and Analysis:

- Analyze attention maps to identify functionally important regions

- Compare embeddings with known functional families

- Validate predictions against independent test sets and experimental data

Integrated Architectures and Emerging Approaches

Hybrid Models for Enhanced Enzyme Modeling

Recent advances combine the strengths of multiple architectures to overcome limitations of individual approaches:

GNN-Transformer Hybrids integrate structural awareness from GNNs with sequence modeling capabilities of transformers. These models first process 3D structural information through graph networks, then fuse these representations with sequence embeddings from pLMs, creating comprehensive molecular representations that capture both evolutionary and physical constraints [24] [22].

Multimodal pLMs incorporate diverse data types beyond sequence information, including co-evolutionary signals from Multiple Sequence Alignments (MSAs), structural features, and functional annotations. This enriched input enables more accurate prediction of enzyme properties and catalytic mechanisms [29].

Equivariant GNNs explicitly incorporate geometric constraints and symmetry principles (e.g., SE(3)-equivariance) that are fundamental to molecular systems. Models like EZSpecificity use these architectures to predict enzyme-substrate interactions with high accuracy, considering the spatial arrangement of active sites and transition states [8].

Application Protocol: Enzyme Design with Conditional Generation

Protocol Title: Conditional Generation of Novel Enzyme Sequences Using Fine-tuned Transformers

Purpose: Generate novel enzyme sequences with desired catalytic activities and stability properties for biocatalyst development.

Input Data Requirements:

- Curated enzyme family multiple sequence alignment

- Functional annotations (EC numbers, substrate specificity)

- Stability data (Tm, half-life) if available

Methodology:

Model Preparation [23]:

- Initialize with pre-trained decoder-only transformer (e.g., ProGen)

- Implement conditional generation using EC numbers as control tokens

- Set up masking strategies to preserve catalytic motifs

Training Protocol:

- Phase 1: Fine-tune on target enzyme family with low learning rate

- Phase 2: Reinforcement learning with structural stability rewards

- Phase 3: Adversarial training to improve naturalness of generated sequences

Generation and Filtering:

- Use nucleus sampling (top-p=0.9) for diverse but coherent sequences

- Apply structural consistency filters (predicted secondary structure, disorder)

- Implement catalytic site preservation checks
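
Nucleus (top-p) sampling keeps the smallest set of tokens whose cumulative probability reaches p and samples from that set only. The sketch below applies it to a single next-residue distribution; the probabilities are invented, and in practice the distribution comes from the model's output logits at each generation step.

```python
import random


def nucleus_filter(probs, top_p=0.9):
    """Keep the most probable tokens until their mass reaches top_p, then renormalize."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for token, p in ranked:
        kept.append((token, p))
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(p for _, p in kept)
    return {token: p / total for token, p in kept}


def sample_token(probs, top_p, rng):
    """Draw one token from the nucleus of the distribution."""
    filtered = nucleus_filter(probs, top_p)
    tokens = list(filtered)
    return rng.choices(tokens, weights=[filtered[t] for t in tokens], k=1)[0]


# Hypothetical next-residue distribution.
next_residue = {"A": 0.50, "L": 0.30, "G": 0.15, "W": 0.05}
nucleus = nucleus_filter(next_residue, top_p=0.9)
choice = sample_token(next_residue, 0.9, random.Random(0))
print(sorted(nucleus))  # ['A', 'G', 'L']
```

Lowering top-p makes generation more conservative, while raising it admits rarer residues and increases sequence diversity.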

Validation Pipeline:

- Predict structures of generated sequences (AlphaFold2, ESMFold)

- Assess catalytic competence through active site geometry

- Evaluate stability through molecular dynamics simulations

- Experimental validation of top candidates

Software Libraries and Frameworks

Table: Essential Software Tools for Architecture Implementation

| Tool Name | Application Domain | Key Features | Implementation Considerations |

|---|---|---|---|

| PyTorch Geometric [27] | GNN Development | Specialized graph data loaders, GNN layers | Excellent for custom architecture development, Python ecosystem |

| Deep Graph Library (DGL) [27] | Cross-framework GNNs | Framework-agnostic, High-performance message passing | Good for production deployment, Multi-backend support |

| ESM & HuggingFace [24] | Protein Language Models | Pre-trained pLMs, Fine-tuning utilities | Extensive model zoo, Transfer learning workflows |

| TensorFlow GNN [27] | Industrial-scale GNNs | Distributed training, Production readiness | TensorFlow ecosystem integration, Scalability |

| JAX/Flax for Proteins | Research pLMs | Composable function transformations, Accelerated computing | Flexibility for research, Growing protein-specific tools |

Critical Datasets for Enzyme Engineering

Table: Essential Datasets for Training and Validation

| Dataset | Data Type | Application | Access Considerations |

|---|---|---|---|

| UniProtKB [23] | Protein sequences & annotations | Pre-training pLMs, Functional prediction | Comprehensive but requires filtering for enzyme-specific subsets |

| Protein Data Bank (PDB) | 3D structures | GNN training, Structure-function mapping | Quality variation, Requires preprocessing |

| BRENDA [8] | Enzyme functional data | Specificity prediction, Kinetic parameter modeling | Manual curation, Rich functional annotations |

| Catalytic Site Atlas | Active site residues | GNN attention guidance, Functional site prediction | Limited coverage, High-quality annotations |

Experimental Validation Reagents and Materials

Table: Essential Research Reagents for Experimental Validation

| Reagent/Material | Function in Validation | Application Context | Considerations |

|---|---|---|---|

| Halogenase Enzymes [8] | Specificity validation | Testing computational predictions | 91.7% accuracy achieved in EZSpecificity validation |

| Terpene Synthases [22] | Catalytic specificity studies | Structure-function relationship mapping | Diverse product profiles, Structural data available |

| Site-Directed Mutagenesis Kits | Functional residue validation | Testing computational attention maps | Gold standard for hypothesis testing |

| Thermal Shift Assays | Stability measurement | Validating stability predictions | High-throughput capability, Correlates with thermostability |

Performance Benchmarks and Comparative Analysis

Quantitative Performance Metrics

Table: Architecture Performance on Enzyme Engineering Tasks

| Architecture | Task | Performance Metric | Result | Reference |

|---|---|---|---|---|

| CAAH-GNN [22] | Enzyme specificity classification | Accuracy | ~10% improvement over baselines | [22] |

| EZSpecificity [8] | Substrate identification | Accuracy | 91.7% (vs. 58.3% previous model) | [8] |

| Finenzyme [23] | EC number prediction | F1-score | Significant improvement over generalist pLMs | [23] |

| GAT-based Models [22] | Active site identification | Attention alignment | High correlation with experimental data | [22] |

| ESM Models [24] | Mutation effect prediction | Spearman correlation | Competitive with structure-based methods | [24] |

The integration of these neural network architectures represents a paradigm shift in enzyme engineering, moving from traditional hypothesis-driven approaches to data-driven predictive and generative methods. As these models continue to evolve, they promise to accelerate the design of novel biocatalysts for sustainable chemistry, therapeutic development, and industrial applications.

Enzyme engineering is a cornerstone of modern biotechnology, with applications ranging from the synthesis of pharmaceuticals to the development of sustainable industrial processes. For decades, traditional directed evolution has served as the workhorse method for optimizing enzyme properties, functioning through iterative cycles of mutagenesis and high-throughput screening. However, the vastness of protein sequence space presents fundamental limitations for these conventional approaches. This application note delineates the specific bottlenecks inherent in traditional enzyme engineering methods and frames them within the emerging paradigm of neural network-guided optimization, which offers transformative solutions to these long-standing challenges.

The Core Bottlenecks of Traditional Enzyme Engineering

Traditional directed evolution, while responsible for numerous engineering successes, faces several interconnected bottlenecks that constrain its efficiency and scope. The table below summarizes the primary limitations and their operational consequences.

Table 1: Key Bottlenecks in Traditional Enzyme Engineering Methods

| Bottleneck | Description | Impact on Engineering Workflow |

|---|---|---|

| Low-Throughput Screening | Experimental assays for enzyme activity are often limited to ~10^3-10^6 variants, a tiny fraction of sequence space. [7] | Severely restricts the exploration of combinatorial mutations and epistatic interactions. |

| Local Search Trapping | Greedy hill-climbing in fitness landscapes often converges on local optima, not global peaks. [30] | Prevents discovery of superior variants requiring multiple, co-dependent mutations. |

| Fitness-Diversity Trade-off | Focusing on "winning" variants for a single transformation fails to generate rich negative data. [7] | Limits the ability to build generalizable sequence-function models for forward design. |

| Cold-Start Problem | No fitness data is available for engineering new-to-nature functions not found in biology. [31] | Makes supervised model training impossible, forcing reliance on random sampling. |

| Epistatic Constraints | Beneficial mutations are often not additive and can be neutral or deleterious in isolation. [7] [30] | Simple site-saturation mutagenesis campaigns can miss critically important synergistic mutations. |

The fundamental challenge is the astronomically vast search space of possible protein sequences. For example, a modest library that varies only 10 positions, with 20 possible amino acids at each, contains 20^10 (over 10 trillion) theoretical variants. Conventional screening methods can sample only a minuscule fraction of this space, leading to suboptimal outcomes. [30] Furthermore, up to 70% of random single-amino acid substitutions can reduce activity or yield non-functional proteins, rendering a large proportion of randomly generated libraries ineffective. [32]
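
The arithmetic is easy to verify. The short calculation below takes the upper end of the screening range quoted in Table 1 (10^6 variants) as the assumed screening capacity.

```python
positions = 10
alphabet = 20

library_size = alphabet ** positions   # all combinations at 10 positions
screen_capacity = 10 ** 6              # upper end of the ~10^3-10^6 range
fraction = screen_capacity / library_size

print(f"{library_size:,}")   # 10,240,000,000,000
print(f"{fraction:.2e}")     # 9.77e-08
```

Even at the most optimistic throughput, fewer than one in ten million variants of this ten-position library can be measured.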

Machine Learning-Guided Solutions and Experimental Protocols

Neural networks and other machine learning (ML) models are overcoming these bottlenecks by learning the complex mappings between protein sequence and function. The following workflow details a protocol for an ML-guided engineering campaign, integrating cell-free expression for rapid data generation.

Protocol: ML-Guided Cell-Free Engineering for Amide Synthetase Specialization

This protocol is adapted from a study that engineered amide bond-forming enzymes, achieving 1.6- to 42-fold improved activity for pharmaceutical synthesis. [7]

1. Objective: Convert a generalist amide synthetase (McbA) into multiple specialist enzymes for distinct chemical reactions.

2. Key Reagent Solutions:

- Parent Enzyme: Wild-type McbA from Marinactinospora thermotolerans.

- Cell-Free Protein Expression (CFE) System: A commercial or homemade system for rapid, cell-free transcription and translation.

- DNA Assembly Reagents: PCR reagents, DpnI restriction enzyme, and Gibson assembly master mix.

- Substrate Library: A diverse array of carboxylic acids and amines, including target pharmaceutical precursors.

3. Experimental Workflow:

4. Detailed Methodology:

Step 1: Substrate Promiscuity Exploration

- Express and purify wild-type McbA.

- Set up 1100 unique reactions combining diverse acids and amines at high concentration (e.g., 25 mM) with low enzyme loading (~1 µM).

- Analyze reaction conversions using HPLC or MS to identify target molecules with low but detectable activity for engineering.

Step 2: High-Throughput Sequence-Function Mapping

- Design Primers: For each of the 64 target residues within 10 Å of the active site, design primers to introduce all 19 possible mutations.

- Cell-Free DNA Assembly & Expression:

- Use PCR with mutagenic primers to amplify gene fragments.

- Digest parent plasmid with DpnI.

- Perform intramolecular Gibson assembly to form mutated plasmid.

- Amplify linear DNA expression templates (LETs) via a second PCR.

- Express mutated proteins directly using the CFE system.

- Functional Assay: Perform enzymatic reactions in a high-throughput microplate format directly using the CFE lysate or purified protein. Collect conversion data for all 1216 variants.

Step 3: Machine Learning Model Training

- Encode protein variants (e.g., one-hot encoding of mutations).

- Integrate fitness data from Step 2.

- Train an augmented ridge regression model to predict enzyme activity based on sequence. The model can be augmented with zero-shot fitness predictors from evolutionary data.
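
The encoding step can be sketched as below. It covers only the one-hot featurization of selected positions; the ridge regression fit and the zero-shot augmentation are not shown, and the sequence and positions are invented.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def one_hot_variant(sequence, positions):
    """Concatenate 20-dimensional one-hot vectors for the given 1-based positions."""
    vector = []
    for pos in positions:
        residue = sequence[pos - 1]
        if residue not in AMINO_ACIDS:
            raise ValueError(f"non-standard residue {residue!r} at {pos}")
        vector.extend(1.0 if aa == residue else 0.0 for aa in AMINO_ACIDS)
    return vector


# Hypothetical variant; only the engineered positions are encoded.
vec = one_hot_variant("MKVLA", [2, 4])
print(len(vec), sum(vec))  # 40 2.0
```

Encoding only the mutated positions keeps the feature matrix small, which matters when the training set contains roughly a thousand single mutants.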

Step 4: Model Prediction & Validation

- Use the trained model to predict the activity of all possible double and higher-order mutants within the defined sequence space.

- Select top-predicted variants for synthesis and experimental testing using the CFE platform.

- Validate model accuracy by comparing predicted vs. measured activity fold-improvements.

Advanced ML Frameworks for Overcoming Specific Bottlenecks

For challenges like the cold-start problem, more advanced frameworks like MODIFY have been developed. MODIFY uses an ensemble of protein language models (ESM-1v, ESM-2) and sequence density models (EVmutation, EVE) for zero-shot fitness prediction, requiring no experimental fitness data upfront. [31] It then co-optimizes the predicted fitness and sequence diversity of starting libraries by solving a Pareto optimization problem of the form max fitness + λ · diversity, where λ sets the weight given to diversity relative to fitness. This ensures the designed library is enriched in functional variants while maximizing the exploration of sequence space, facilitating the engineering of new-to-nature enzyme functions.
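
The effect of λ can be seen on a toy example. The sketch below scores a candidate library as mean predicted fitness plus λ times a diversity term. Using mean pairwise Hamming distance as the diversity measure is an assumption made for illustration and is not necessarily MODIFY's definition; the sequences and fitness values are invented.

```python
from itertools import combinations


def mean_pairwise_hamming(library):
    """Average number of differing positions over all sequence pairs."""
    pairs = list(combinations(library, 2))
    if not pairs:
        return 0.0
    total = sum(sum(a != b for a, b in zip(x, y)) for x, y in pairs)
    return total / len(pairs)


def library_objective(library, fitness, lam):
    """Mean predicted fitness plus lam times diversity (assumed measure)."""
    mean_fitness = sum(fitness[s] for s in library) / len(library)
    return mean_fitness + lam * mean_pairwise_hamming(library)


# Hypothetical zero-shot fitness scores.
fitness = {"AAAA": 1.0, "AAAC": 0.9, "CCCC": 0.6}
narrow = ["AAAA", "AAAC"]   # high fitness, low diversity
broad = ["AAAA", "CCCC"]    # lower fitness, high diversity

for lam in (0.0, 0.2):
    best = max((narrow, broad), key=lambda lib: library_objective(lib, fitness, lam))
    print(lam, best)
```

With λ = 0 the narrow, high-fitness library wins; with λ = 0.2 the broader library is preferred, which shows how sweeping λ traces out the trade-off between the two objectives.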

Another critical advancement is the development of robust kinetic prediction models. The CataPro deep learning model uses pre-trained protein language model embeddings (ProtT5) and molecular fingerprints (MolT5, MACCS) to predict enzyme kinetic parameters (kcat, Km) with high accuracy and generalization. [33] This allows for in silico screening and ranking of enzyme variants based on predicted catalytic efficiency, drastically reducing experimental burden.

Table 2: Quantitative Performance of Advanced ML Models in Enzyme Engineering

| Model / Framework | Primary Function | Reported Performance / Outcome |

|---|---|---|

| ML-Guided Cell-Free Platform [7] | Predicts high-order mutants from single-mutant data | 1.6- to 42-fold activity improvement for 9 pharmaceutical compounds. |

| MODIFY [31] | Zero-shot library design balancing fitness & diversity | Outperformed baselines in zero-shot prediction on 87 DMS benchmarks; engineered generalist C–B and C–Si bond-forming enzymes. |

| CataPro [33] | Predicts enzyme kinetic parameters (kcat, Km) | Identified an enzyme (SsCSO) with 19.53x increased activity; further engineering improved activity 3.34-fold. |

| COMPSS Filter [32] | Computational filter to select generated sequences | Improved the experimental success rate of generated sequences by 50–150%. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents and Resources for Modern ML-Guided Enzyme Engineering

| Item | Function / Description | Example Use Case |

|---|---|---|

| Cell-Free Protein Expression (CFE) System | Enables rapid synthesis of thousands of protein variants without cellular transformation. [7] | High-throughput generation of sequence-function data for ML training. |

| Pre-trained Protein Language Models (pLMs) | Deep learning models (e.g., ESM-1v, ESM-2, ProtT5) that convert amino acid sequences into numerical embeddings rich with evolutionary and structural information. [31] [33] | Used for zero-shot fitness prediction and as feature inputs for supervised models like CataPro. |

| Machine Learning Framework (e.g., MODIFY, CataPro) | Algorithms designed to predict fitness, design optimized libraries, or forecast kinetic parameters. | Overcoming the cold-start problem and guiding the engineering of new-to-nature activities. |

| Deep Mutational Scanning (DMS) Data | Comprehensive experimental datasets mapping single mutations in a protein to their fitness effects. | Serves as a critical benchmark for developing and validating new fitness prediction models. [31] |

The limitations of traditional enzyme engineering—constrained search, experimental bottlenecks, and the inability to navigate complex epistatic landscapes—are no longer insurmountable. The integration of neural networks and machine learning creates a new engineering paradigm. By leveraging cell-free systems for rapid data generation, protein language models for zero-shot prediction, and sophisticated frameworks for fitness-diversity co-optimization, researchers can now systematically overcome these bottlenecks. This shift enables the efficient design of specialized and generalist biocatalysts for applications from drug development to green chemistry, propelling the field into a new era of data-driven protein design.

Advanced Architectures and Practical Implementations in Biocatalysis

Application Notes

Enzyme substrate specificity—the ability of an enzyme to recognize and selectively act on particular substrates—is a fundamental property governing biological function. This specificity originates from the three-dimensional structure of the enzyme's active site and the complicated transition state of the reaction [8]. A significant challenge in enzymology is the prevalence of enzyme promiscuity, where enzymes can catalyze reactions or act on substrates beyond those for which they were originally evolved [8] [34]. Furthermore, millions of known enzymes lack reliable substrate specificity annotation, creating a substantial bottleneck for their practical application and for understanding the full scope of biocatalytic diversity in nature [8]. Traditional computational methods have struggled to predict specificity reliably, especially for novel enzymes or substrates not represented in training datasets.

The EZSpecificity Model: A Paradigm Shift

EZSpecificity represents a breakthrough in computational enzymology. It is a cross-attention-empowered SE(3)-equivariant graph neural network architecture specifically designed to predict enzyme-substrate interactions [8] [34]. The model's design directly addresses core biochemical principles by representing enzymes and substrates as graphs where atoms and residues are nodes, connected by edges representing biochemical interactions [34]. Two innovative computational features underpin its performance:

- SE(3)-Equivariance: The network's internal geometric features transform consistently when the input is rotated or translated in 3D space, so the final scalar prediction is unchanged by such rigid motions. This is crucial for molecular systems because the absolute orientation of a molecule is arbitrary, while the relative spatial positioning of atoms determines function [34].

- Cross-Attention Mechanism: This allows for dynamic, context-sensitive communication between the enzyme and substrate representations during processing. This mechanism better mimics the "induced fit" and other subtle binding phenomena observed in experimental biochemistry, where both molecules adjust their conformations upon interaction [8] [34].
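
The symmetry property in the first bullet can be checked numerically. The sketch below is a minimal illustration using only the Python standard library, not code from EZSpecificity: the coordinates are random toy points, and `rotate_z_then_x` is a hypothetical helper. It shows that pairwise distances (scalar features) are unchanged by a rigid motion, while displacement vectors rotate together with the input, which is the behaviour an equivariant layer is built to respect.

```python
import math
import random

def rotate_z_then_x(p, a, b):
    """Rotate point p by angle a about the z-axis, then by angle b about the x-axis."""
    x, y, z = p
    x, y = x * math.cos(a) - y * math.sin(a), x * math.sin(a) + y * math.cos(a)
    y, z = y * math.cos(b) - z * math.sin(b), y * math.sin(b) + z * math.cos(b)
    return (x, y, z)

def pairwise_distances(coords):
    """Full matrix of Euclidean distances between all points."""
    return [[math.dist(p, q) for q in coords] for p in coords]

# Toy "molecule": six random points (illustrative only, not real atoms).
random.seed(0)
coords = [tuple(random.uniform(-5, 5) for _ in range(3)) for _ in range(6)]

# Apply an arbitrary rigid motion: a rotation followed by a translation.
angle_z, angle_x = 0.7, -1.3
shift = (3.0, -1.5, 7.2)
moved = [
    tuple(c + s for c, s in zip(rotate_z_then_x(p, angle_z, angle_x), shift))
    for p in coords
]

# Invariance: scalar quantities such as distances do not change.
d0 = pairwise_distances(coords)
d1 = pairwise_distances(moved)
max_diff = max(abs(a - b) for r0, r1 in zip(d0, d1) for a, b in zip(r0, r1))

# Equivariance: a displacement vector rotates exactly as the input did.
v0 = tuple(b - a for a, b in zip(coords[0], coords[1]))
v1 = tuple(b - a for a, b in zip(moved[0], moved[1]))
equivariant_ok = math.dist(v1, rotate_z_then_x(v0, angle_z, angle_x)) < 1e-9

coords_changed = any(math.dist(p, q) > 1e-6 for p, q in zip(coords, moved))
print(f"max distance change: {max_diff:.2e}, equivariant: {equivariant_ok}")
```

The absolute coordinates change under the motion, but every distance is preserved to numerical precision, which is why a prediction built from such quantities does not depend on how the structure happens to be oriented.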

The model was trained on a comprehensive, tailor-made database of enzyme-substrate interactions (ESIbank), which integrates sequence and structural-level data across 8,124 enzymes and 34,417 substrates—a dataset reported to be 25 times larger than those used for previous models [35].

Quantitative Performance Benchmarking

EZSpecificity has demonstrated superior performance compared to existing state-of-the-art models across multiple validation paradigms. The most compelling evidence comes from experimental validation.

Table 1: Performance Comparison of EZSpecificity Against a State-of-the-Art Model

| Validation Context | Model | Key Performance Metric | Result |
|---|---|---|---|
| Halogenase Experimental Validation [8] | EZSpecificity | Accuracy in identifying single reactive substrate | 91.7% |
| Halogenase Experimental Validation [8] | Previous Best Model (ESP) | Accuracy in identifying single reactive substrate | 58.3% |
| Generalizability Testing [8] [34] | EZSpecificity | Accuracy on unknown enzyme-substrate pairs | Superior Performance |
| Generalizability Testing [8] [34] | Existing Methods | Accuracy on unknown enzyme-substrate pairs | Lower Performance |

This performance gap, 91.7% versus 58.3% accuracy in identifying the single reactive substrate for halogenases [8], suggests that EZSpecificity has learned transferable features of molecular recognition rather than merely memorizing training examples. Its generalizability makes it particularly valuable for predicting the specificity of enzymes with no prior characterization [34].

Research Applications and Integration

The application of EZSpecificity extends across multiple domains of biotechnology and pharmaceutical research, often integrated into a larger workflow for enzyme discovery and engineering.

- Rational Enzyme Design and Engineering: EZSpecificity enables researchers to computationally screen thousands of enzyme variants against target substrates, rapidly identifying promising candidates for further experimental testing. This accelerates the process of engineering enzymes with desired specificities for industrial biocatalysis or therapeutic applications [34].

- Drug Discovery and Development: Understanding molecular recognition is central to drug design. EZSpecificity's architecture could in principle be adapted to model drug-target interactions, predict off-target effects, and assist in the design of small-molecule inhibitors or activators [34] [36].

- Metabolic Engineering and Synthetic Biology: By accurately predicting the substrates that a native or engineered enzyme will act upon, researchers can design more efficient and predictable biosynthetic pathways for the production of high-value chemicals, pharmaceuticals, and biofuels [15].

- Enzyme Discovery: The model can be used to annotate the putative functions of uncharacterized enzymes discovered in genomic or metagenomic sequencing projects, expanding the toolkit of available biocatalysts [8].

Table 2: Key Applications and Potential Impacts of EZSpecificity

| Application Domain | Specific Use Case | Potential Impact |
|---|---|---|
| Industrial Biocatalysis | Design of enzymes for green manufacturing | Sustainable chemical processes, reduced waste |
| Pharmaceutical Development | Prediction of drug metabolism; design of therapeutic enzymes | Faster drug development, personalized medicine |
| Environmental Biotechnology | Discovery of enzymes for plastic degradation (e.g., polyurethane [37]) | Novel solutions for plastic waste pollution |
| Basic Research | Functional annotation of novel enzymes | Deeper understanding of cellular processes and evolution |

Protocols

Protocol 1: Applying EZSpecificity for Substrate Specificity Prediction

This protocol outlines the steps for using a trained EZSpecificity model to predict the specificity of a given enzyme for a panel of candidate substrates.

1. Input Data Preparation

- Enzyme Input: Obtain the 3D structural data of the target enzyme. This can be an experimental structure from the Protein Data Bank (PDB) or a high-confidence predicted structure from sources like AlphaFold DB [38]. Ensure the structure contains coordinates for all heavy atoms.

- Substrate Input: For each candidate substrate, provide a SMILES string or a 2D/3D molecular structure file (e.g., MOL, SDF). If 3D structures are supplied, they should be energy-minimized to a stable conformation.

- Pre-processing: Convert the enzyme structure and each substrate structure into the graph representation required by EZSpecificity. This involves:

- Defining atoms as nodes.

- Establishing edges based on inter-atomic distances and bond types.

- Annotating nodes and edges with relevant chemical features (e.g., atom type, residue type, partial charge).
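
The pre-processing steps above can be sketched as follows. This is a minimal illustration under stated assumptions, not the EZSpecificity featurization: the atom records, the 4.0 Å cutoff, and the `build_graph` helper are all hypothetical, and a real pipeline would also encode bond types and richer chemical features.

```python
import math

# Hypothetical toy input: (element, residue, x, y, z) for a few heavy atoms.
atoms = [
    ("N", "HIS", 0.0, 0.0, 0.0),
    ("C", "HIS", 1.4, 0.0, 0.0),
    ("O", "ASP", 1.4, 3.0, 0.0),
    ("C", "ASP", 9.0, 9.0, 9.0),
]

def build_graph(atoms, cutoff=4.0):
    """Turn atom records into (nodes, edges).

    nodes: one feature dict per atom (element and residue type).
    edges: (i, j, distance) for every atom pair within `cutoff` angstroms.
    The cutoff value is an illustrative assumption.
    """
    nodes = [{"element": element, "residue": residue} for element, residue, *_ in atoms]
    edges = []
    for i in range(len(atoms)):
        for j in range(i + 1, len(atoms)):
            distance = math.dist(atoms[i][2:], atoms[j][2:])
            if distance <= cutoff:
                edges.append((i, j, round(distance, 3)))
    return nodes, edges

nodes, edges = build_graph(atoms)
for i, j, distance in edges:
    print(f"{nodes[i]['element']}({i}) - {nodes[j]['element']}({j}): {distance} A")
```

In this toy example the fourth atom lies far from the others and therefore has no edges, which is how a distance cutoff keeps the graph sparse for large proteins.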

2. Model Inference Execution

- Load the pre-trained EZSpecificity model. The source code is publicly available on Zenodo [8].

- For each enzyme-substrate pair, feed the respective graphs into the model.

- The model will process the graphs through its SE(3)-equivariant and cross-attention layers. The cross-attention mechanism allows the enzyme and substrate graphs to interact, simulating the binding event.

- The output layer produces a prediction score representing the likelihood of a catalytic interaction.
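
To make the interaction step more concrete, the following toy sketch shows the general shape of cross-attention between two sets of node features, followed by pooling into a single score. It is deliberately simplified and is not the EZSpecificity code or API: there are no learned projection weights and no equivariant layers, the two-dimensional node features are invented, and all function names are hypothetical.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def cross_attention(queries, keys_values):
    """Each query vector attends over all vectors of the other molecule.

    Keys and values are the same vectors here; a trained model would use
    separate learned projections for queries, keys, and values.
    """
    scale = math.sqrt(len(queries[0]))
    updated, weights = [], []
    for q in queries:
        w = softmax([dot(q, k) / scale for k in keys_values])
        weights.append(w)
        updated.append([
            sum(wi * kv[d] for wi, kv in zip(w, keys_values))
            for d in range(len(keys_values[0]))
        ])
    return updated, weights

def mean_pool(vectors):
    """Average a list of vectors into one graph-level vector."""
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]

# Invented node features for illustration only.
enzyme_nodes = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
substrate_nodes = [[0.9, 0.1], [0.2, 0.8]]

# Substrate atoms attend to enzyme nodes, and vice versa.
sub_ctx, sub_w = cross_attention(substrate_nodes, enzyme_nodes)
enz_ctx, enz_w = cross_attention(enzyme_nodes, substrate_nodes)

# Pool both sides and squash to a (0, 1) score with a sigmoid.
logit = dot(mean_pool(sub_ctx), mean_pool(enz_ctx))
score = 1.0 / (1.0 + math.exp(-logit))
print(f"toy interaction score: {score:.3f}")
```

Because nothing here is trained, the resulting score has no biochemical meaning; the point is only that each molecule's representation is updated using information from the other before a single prediction is produced.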

3. Output Analysis and Interpretation

- Rank the candidate substrates based on their prediction scores. A higher score indicates a higher predicted reactivity.

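- Set a score threshold, calibrated on held-out validation data, to classify predictions as high-confidence or low-confidence. Note that the reported 91.7% figure refers to accuracy in identifying the single reactive substrate for each enzyme [8], not to a fixed score cutoff, so a suitable threshold must be determined for the application at hand.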

- The results provide a specificity profile for the enzyme, highlighting its preferred substrates and revealing potential promiscuous activities.
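
The ranking and thresholding steps above amount to a few lines of post-processing. In this minimal sketch the substrate names, the scores, and the 0.7 cutoff are all invented for illustration; in practice the threshold would be calibrated on validation data.

```python
# Hypothetical prediction scores for one enzyme against four candidate substrates.
scores = {
    "substrate_A": 0.93,
    "substrate_B": 0.41,
    "substrate_C": 0.78,
    "substrate_D": 0.12,
}

# Assumed cutoff for illustration; calibrate on held-out data in real use.
THRESHOLD = 0.7

# Rank from most to least likely reactive.
ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Attach a confidence label to each ranked prediction.
profile = [
    (name, score, "high-confidence" if score >= THRESHOLD else "low-confidence")
    for name, score in ranked
]

for name, score, label in profile:
    print(f"{name}\t{score:.2f}\t{label}")
```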

[Diagram: EZSpecificity prediction workflow, from input data preparation through model inference to output analysis.]

Protocol 2: Experimental Validation of Computational Predictions

This protocol describes a method for experimentally validating the substrate specificity predictions generated by EZSpecificity, using halogenases as an example based on the model's validation study [8].

1. Reagent and Material Preparation

- Enzymes: Purify the target enzyme(s) (e.g., wild-type and variant halogenases) to homogeneity using standard protein purification techniques (e.g., affinity chromatography, size-exclusion chromatography).

- Substrates: Procure or synthesize the candidate substrates identified by the computational screen. Prepare a stock solution of each substrate at a known concentration in a suitable solvent (e.g., DMSO, water).

- Reaction Buffer: Prepare an appropriate assay buffer at a physiologically relevant pH (e.g., 50-100 mM phosphate buffer, pH 7.5) containing any necessary cofactors and co-substrates (e.g., Fe²⁺ and α-ketoglutarate for non-heme iron halogenases, or reduced flavin for flavin-dependent halogenases, plus a halide source).

2. Enzymatic Assay Setup

- Set up reactions in a final volume of 100-500 µL. Include the following components:

- Assay Buffer

- Target Enzyme (at a final concentration within the linear range of activity)

- Candidate Substrate (at a concentration around or above the predicted Km)

- Include appropriate negative controls: (1) a no-enzyme control to account for non-enzymatic substrate conversion, and (2) a no-substrate control to account for background signal from the enzyme preparation.

- Incubate the reactions at the optimal temperature for the enzyme (e.g., 30°C) for a predetermined time within the linear reaction rate period (e.g., 10-30 minutes).
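
When setting up the reactions above, component volumes follow from the dilution relation C1·V1 = C2·V2. The sketch below works through one example; the stock and final concentrations, the 200 µL reaction volume, and the `component_volume_ul` helper are assumptions chosen for illustration, not values from the validation study.

```python
def component_volume_ul(stock_conc, final_conc, final_volume_ul):
    """Volume of stock needed so the component reaches final_conc in the reaction.

    stock_conc and final_conc must be in the same units (C1*V1 = C2*V2).
    """
    if final_conc > stock_conc:
        raise ValueError("final concentration cannot exceed stock concentration")
    return final_conc * final_volume_ul / stock_conc

final_volume_ul = 200.0  # within the 100-500 uL range given in the protocol

# Assumed example values: 50 uM enzyme stock -> 5 uM final,
# 10 mM substrate stock in DMSO -> 0.5 mM final.
enzyme_ul = component_volume_ul(stock_conc=50.0, final_conc=5.0, final_volume_ul=final_volume_ul)
substrate_ul = component_volume_ul(stock_conc=10.0, final_conc=0.5, final_volume_ul=final_volume_ul)

# Buffer makes up the remaining volume.
buffer_ul = final_volume_ul - enzyme_ul - substrate_ul

# Co-solvent carried over with the substrate stock, as a percentage of the reaction.
dmso_percent = 100.0 * substrate_ul / final_volume_ul

print(f"enzyme {enzyme_ul} uL, substrate {substrate_ul} uL, buffer {buffer_ul} uL")
print(f"DMSO in reaction: {dmso_percent}% (v/v)")
```

Tracking the co-solvent percentage is useful because many enzymes lose activity at high DMSO concentrations, and the no-enzyme and no-substrate controls should contain the same amount.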

3. Reaction Monitoring and Product Detection

- Quenching: Stop the reactions at designated time points by adding a quenching agent (e.g., acid, organic solvent).

- Analysis: Analyze the reaction mixtures using a suitable analytical method to detect product formation. The choice of method depends on the substrate and product properties.

- Liquid Chromatography-Mass Spectrometry (LC-MS) is highly versatile for detecting and identifying most products based on mass and retention time.

- High-Performance Liquid Chromatography (HPLC) with UV/Vis or fluorescence detection can be used if the product has a distinct chromophore.

- Quantification: Quantify the amount of product formed against a standard curve prepared from an authentic product standard.
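
Quantification against a standard curve can be sketched as a least-squares line fit followed by inversion. The concentrations and peak areas below are invented and perfectly linear so that the arithmetic is easy to follow; real calibration data would include noise and should be checked for linearity over the working range.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Hypothetical calibration: product standard concentrations (uM) vs. peak area.
standards_uM = [0.0, 10.0, 20.0, 40.0, 80.0]
peak_areas = [2.0, 52.0, 102.0, 202.0, 402.0]

slope, intercept = fit_line(standards_uM, peak_areas)

def area_to_conc(area):
    """Convert a measured peak area into product concentration (uM)."""
    return (area - intercept) / slope

# Peak area measured for a quenched reaction sample (assumed value).
sample_conc = area_to_conc(127.0)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, sample={sample_conc:.2f} uM")
```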

4. Data Analysis and Model Correlation

- Calculate the enzymatic activity for each substrate (e.g., rate of product formation per mg of enzyme).

- Classify substrates as "reactive" or "non-reactive" based on a statistically significant increase in product formation over the no-enzyme control.

- Compare the experimental results to the EZSpecificity predictions to calculate the accuracy, precision, and recall of the computational model.
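
The comparison in the final step reduces to counting agreements between predicted and observed labels. The sketch below uses invented labels for eight enzyme-substrate pairs; `True` means "reactive".

```python
# Hypothetical labels for eight enzyme-substrate pairs (True = reactive).
predicted = [True, True, False, True, False, False, True, False]
observed = [True, False, False, True, False, True, True, False]

pairs = list(zip(predicted, observed))
tp = sum(1 for p, o in pairs if p and o)          # predicted reactive, confirmed
fp = sum(1 for p, o in pairs if p and not o)      # predicted reactive, not confirmed
fn = sum(1 for p, o in pairs if not p and o)      # missed reactive substrate
tn = sum(1 for p, o in pairs if not p and not o)  # correctly predicted non-reactive

accuracy = (tp + tn) / len(pairs)
precision = tp / (tp + fp) if (tp + fp) else 0.0
recall = tp / (tp + fn) if (tp + fn) else 0.0

print(f"TP={tp} FP={fp} FN={fn} TN={tn}")
print(f"accuracy={accuracy:.2f} precision={precision:.2f} recall={recall:.2f}")
```

Reporting precision and recall alongside accuracy matters when reactive substrates are rare, since a model that predicts "non-reactive" for everything can still score high accuracy.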

[Diagram: experimental validation workflow, from reagent preparation and assay setup through product detection to comparison with model predictions.]

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Enzyme Specificity Research

| Reagent / Material | Function / Application | Example Sources / Notes |
|---|---|---|
| Protein Data Bank (PDB) | Source of experimental 3D enzyme structures for model input. | Worldwide repository (PDB.org) [38]. |
| AlphaFold Protein Structure Database | Source of highly accurate predicted enzyme structures for enzymes without experimental structures. | EMBL-EBI database [38] [18]. |
| ESIbank Database | Comprehensive database of enzyme-substrate interactions used for training models like EZSpecificity. | Tailor-made database; 8,124 enzymes x 34,417 substrates [35]. |
| BRENDA / SABIO-RK Databases | Curated repositories of enzyme functional data, including kinetic parameters (kcat, Km), used for validation. | Essential for benchmarking and creating unbiased test sets [18]. |
| Halogenase Enzymes & Substrates | Model system for experimental validation of specificity predictions in a therapeutically relevant enzyme class. | Used in validation achieving 91.7% accuracy [8]. |
| LC-MS / HPLC Systems | Analytical instrumentation for detecting and quantifying substrate conversion and product formation in validation assays. | Critical for high-throughput experimental verification. |

In the field of enzyme engineering, the optimization of protein stability and fitness represents a central challenge for developing effective biocatalysts and therapeutics. Traditional methods for assessing the impact of mutations on protein stability often rely on labor-intensive experimental assays or physical force fields, which can be time-consuming and limited in scalability [39]. The recent emergence of protein language models (pLMs), trained on millions of natural protein sequences, has revolutionized computational protein modeling. These models, including ProtT5 and ESM (Evolutionary Scale Modeling), generate sequence embeddings: dense numerical vector representations that encapsulate complex evolutionary, structural, and functional information [39] [40]. This application note details how these pLM embeddings are being integrated into deep learning frameworks to create powerful, generalizable tools for predicting protein stability and fitness, thereby providing a data-driven guide for protein engineering campaigns.

The Role of pLM Embeddings in Stability and Fitness Prediction