Generative Adversarial Networks in Drug Discovery: A Guide to AI-Driven Molecular Design


Abstract

This article explores the transformative role of Generative Adversarial Networks (GANs) in designing novel drug molecules. It provides a comprehensive overview for researchers and drug development professionals, covering the foundational principles of GANs, their specific architectures and applications in de novo molecular design, strategies to overcome common training and optimization challenges, and a comparative analysis of their performance against traditional methods and other AI models. The content synthesizes the latest research and real-world case studies to offer a practical guide on leveraging GANs for efficient and diverse ligand design, multi-property optimization, and accelerating early-stage drug discovery.

The Generative AI Revolution in Pharmaceutical Research

The pharmaceutical industry faces a formidable challenge: developing a new drug costs approximately $2.8 billion and typically takes more than 12 years from discovery to market [1]. This investment is made even though only a minute fraction of the chemically feasible molecular space has been explored. That space is estimated to hold between 10^60 and 10^100 potential compounds [2]. This vast, unexplored territory is both the core challenge and a significant opportunity for modern drug discovery. In response, artificial intelligence (AI) has emerged as a transformative force. One projection held that 30% of new drugs would be discovered using AI by 2025, and the technology has shown potential to reduce preclinical discovery timelines and costs by 25-50% [3].

Among AI methodologies, Generative Adversarial Networks (GANs) have arisen as a particularly powerful architecture for the de novo design of molecular structures. GANs introduce a paradigm of generative modeling that moves beyond traditional virtual screening, enabling researchers to computationally "invent" novel, optimized chemical entities from scratch rather than merely filtering existing compound libraries [4] [1]. This application note details the implementation of GAN frameworks to navigate the immense chemical space efficiently, addressing the central challenges of cost and time in early-phase drug discovery [5].

GAN Architectures for Molecular Design

The foundational GAN architecture, introduced by Goodfellow et al., operates as an adversarial game between two neural networks: a Generator (G) and a Discriminator (D) [4]. The generator creates synthetic molecular instances from random noise. The discriminator evaluates these instances against real molecular data and assigns each sample a probability that it is authentic. Both networks are trained concurrently, and the generator strives to produce increasingly realistic molecules to fool the discriminator [4]. This minimax objective can be formalized as [4]:

$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))]$$
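As a quick numerical check of the objective, the sketch below estimates the value function from a batch of discriminator outputs. It is a minimal illustration that assumes the discriminator's probabilities on real and generated samples are already available as plain Python lists. The function name `gan_value` is hypothetical. At the theoretical optimum, the generator matches the data distribution and D outputs 1/2 everywhere, so the value equals -log 4.

```python
import math


def gan_value(d_real, d_fake):
    """Monte Carlo estimate of V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))].

    d_real: discriminator outputs D(x) on real molecules, each strictly in (0, 1).
    d_fake: discriminator outputs D(G(z)) on generated molecules, each strictly in (0, 1).
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


# A confident, correct discriminator pushes the value toward 0, its maximum.
print(gan_value([0.9, 0.95], [0.05, 0.1]))
# At equilibrium D outputs 1/2 everywhere and the value equals -log 4.
print(gan_value([0.5] * 4, [0.5] * 4), -math.log(4))
```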

For the complex, structured data of molecules, several advanced GAN variants have proven particularly effective:

- Wasserstein GAN (WGAN): Replaces the Jensen-Shannon divergence implicit in the original objective with the Earth-Mover (Wasserstein) distance. This gives more stable training dynamics and helps mitigate issues like mode collapse [4] [2].

- Graph-Transformer GAN: Leverages graph-based representations of molecular structures (atoms as nodes, bonds as edges) combined with transformer models to capture long-range dependencies, enabling the generation of target-specific drug candidates [6].

- Conditional GAN (cGAN): Conditions both the generator and discriminator on auxiliary information (e.g., a specific biological target or desired property), guiding the generation process toward molecules with predefined characteristics [4].
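The Earth-Mover distance has a simple form for two one-dimensional empirical distributions with the same number of samples. In that case, the Wasserstein-1 distance reduces to the mean absolute difference between the sorted samples. The sketch below applies this to a scalar molecular descriptor. The values are made-up illustrative numbers, for example a property such as LogP. The Jensen-Shannon divergence saturates at log 2 for non-overlapping distributions. This distance, by contrast, keeps growing with the gap between them, which is why a WGAN critic can still give the generator a useful signal when real and generated samples are far apart.

```python
def wasserstein_1d(samples_a, samples_b):
    """Wasserstein-1 (Earth-Mover) distance between two equal-size 1-D samples.

    With equal sample counts, the optimal transport plan pairs the i-th smallest
    value of one sample with the i-th smallest value of the other.
    """
    if len(samples_a) != len(samples_b):
        raise ValueError("this shortcut assumes equal sample sizes")
    a, b = sorted(samples_a), sorted(samples_b)
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


real_logp = [1.2, 2.5, 3.1, 0.8]   # illustrative descriptor values
near_fake = [1.0, 2.7, 3.0, 0.9]   # overlaps the real distribution
far_fake = [6.2, 7.5, 8.1, 5.8]    # no overlap, yet the distance stays informative

print(wasserstein_1d(real_logp, near_fake))
print(wasserstein_1d(real_logp, far_fake))
```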

Table 1: Key GAN Architectures in Drug Discovery

| Architecture | Core Mechanism | Advantage | Typical Molecular Representation |
|---|---|---|---|
| Wasserstein GAN (WGAN) | Uses Earth-Mover distance and gradient penalty | Stable training, mitigates mode collapse [2] | Graph, SMILES |
| Graph-Transformer GAN | Combines graph convolutions with self-attention | Captures complex topological patterns & long-range dependencies [6] | Graph |
| Conditional GAN (cGAN) | Conditions generation on labels (e.g., target class) | Enables target-specific or property-directed generation [4] | Graph, SMILES |

Performance Metrics and Quantitative Outcomes

Recent studies demonstrate the tangible output of GAN-based generative models. One case study focused on generating novel quinoline scaffolds. In it, MedGAN, an optimized Wasserstein GAN with a Graph Convolutional Network (GCN), generated 4,831 fully connected, novel, and unique quinoline molecules that were absent from the original training dataset [2]. This result underscores the potential of scaffold-focused generation to reduce the latent space required for learning, leading to more efficient and accurate models [2].

Table 2: Quantitative Performance of a Generative Model (MedGAN) for Quinoline Scaffolds [2]

| Performance Metric | Reported Result | Implication for Drug Discovery |
|---|---|---|
| Validity | 25% | A quarter of generated structures are chemically valid molecules. |
| Full Connectivity | 62% | The majority of valid molecules are single, connected structures. |
| Scaffold Fidelity | 92% | The overwhelming majority of outputs retain the desired quinoline core. |
| Novelty | 93% | Nearly all generated molecules are new, not present in the training data. |
| Uniqueness | 95% | High structural diversity among the generated molecules. |

Beyond specific scaffolds, broader industry analyses project a significant macroeconomic impact. The integration of AI, including generative models, is estimated to reduce drug discovery timelines and associated costs in preclinical stages by 25% to 50%, accelerating the delivery of new treatments to patients [3].

Experimental Protocol: Implementing a GAN for Molecular Generation

This protocol outlines the key steps for implementing a GAN-based molecular generation pipeline, exemplified by the MedGAN study [2].

Data Preparation and Molecular Representation

- Data Sourcing: Obtain molecular structures from public databases such as ChEMBL [1] or ZINC [2]. For a target-specific approach, select compounds with known activity against the target of interest.

- Data Curation: Filter the dataset on desired properties (e.g., molecular weight between 250 and 500 Da, LogP between -1 and 5) to enforce drug-likeness [2].

- Graph Representation: Convert each molecule into a graph representation. This involves creating:
  - An Adjacency Tensor: Encodes the bonds (edges) between atoms.
  - A Feature Tensor: Encodes atom (node) characteristics, including atom type, chirality, and formal charge [2].
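The sketch below shows one way to build these two tensors in plain Python. It makes several simplifying assumptions:

- The atom and bond vocabularies are hypothetical.
- The molecule is supplied as hand-written atom and bond lists rather than parsed from SMILES with RDKit.
- Hydrogens are implicit.
- Chirality is omitted for brevity.
- An extra channel marks atom pairs with no bond. This is a common convention in graph GANs, but not necessarily the one MedGAN uses.

```python
# Hypothetical vocabularies; a real pipeline would derive these from its dataset.
ATOM_TYPES = ["C", "N", "O", "F"]
BOND_TYPES = ["SINGLE", "DOUBLE", "TRIPLE", "AROMATIC"]


def encode_molecule(atoms, bonds, charges, max_atoms):
    """Encode a molecule as (adjacency, features) nested lists.

    atoms:   element symbols, e.g. ["C", "C", "O"]
    bonds:   (i, j, bond_type) tuples with 0-based atom indices
    charges: formal charge of each atom
    adjacency has shape [len(BOND_TYPES) + 1, max_atoms, max_atoms]; the last
    channel marks "no bond". features has shape [max_atoms, len(ATOM_TYPES) + 2]:
    one-hot atom type, a padding flag, then the formal charge.
    """
    n_channels = len(BOND_TYPES) + 1
    adjacency = [[[0] * max_atoms for _ in range(max_atoms)] for _ in range(n_channels)]
    # Mark every pair as "no bond" first, then overwrite the real bonds.
    for i in range(max_atoms):
        for j in range(max_atoms):
            adjacency[-1][i][j] = 1
    for i, j, bond_type in bonds:
        k = BOND_TYPES.index(bond_type)
        for a, b in ((i, j), (j, i)):  # bonds are undirected, so fill both entries
            adjacency[k][a][b] = 1
            adjacency[-1][a][b] = 0

    n_features = len(ATOM_TYPES) + 2
    features = [[0] * n_features for _ in range(max_atoms)]
    for idx in range(max_atoms):
        if idx < len(atoms):
            features[idx][ATOM_TYPES.index(atoms[idx])] = 1
            features[idx][-1] = charges[idx]
        else:
            features[idx][len(ATOM_TYPES)] = 1  # padding slot, no real atom
    return adjacency, features


# Acetaldehyde (CH3-CH=O) with implicit hydrogens, padded to 5 atom slots.
adj, feat = encode_molecule(
    ["C", "C", "O"], [(0, 1, "SINGLE"), (1, 2, "DOUBLE")], [0, 0, 0], max_atoms=5
)
print(adj[0][0][1], adj[1][1][2], adj[-1][0][2])  # single bond, double bond, no bond
print(feat[2], feat[4])                           # oxygen row, padding row
```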

Model Architecture and Training Configuration

- Generator Network (G): Design a network that maps a random noise vector (latent space of ~256 dimensions) to a molecular graph, using Graph Convolutional Network (GCN) layers to build the graph structure [2].

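- Discriminator/Critic Network (D): Design a network that takes a molecular graph (real or generated) and outputs a scalar score. For a standard GAN this score is a probability of being real. For a WGAN it is an unbounded critic score, and the gap between the mean scores on real and generated batches estimates the Wasserstein distance [2].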

- Hyperparameter Selection:
  - Optimizer: RMSprop has outperformed Adam for graph generation in some studies, including MedGAN, possibly because it copes better with complex loss landscapes [2].
  - Learning Rate: A value of 0.0001 is a typical starting point for stable training [2].
  - Activation Functions: LeakyReLU, tanh, and ReLU can be evaluated. The best-performing choice, or combination (e.g., tanh and ReLU), depends on the task [2].
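RMSprop scales each step by a running average of squared gradients. The sketch below implements that update rule for a single scalar parameter on a toy quadratic loss. Its purpose is to make the roles of the learning rate and decay factor concrete. It is not MedGAN's training code. The decay `rho=0.9` and the `eps` value are illustrative assumptions; in PyTorch the decay corresponds to the `alpha` argument of `torch.optim.RMSprop`.

```python
import math


def rmsprop_minimize(grad_fn, x0, lr=1e-4, rho=0.9, eps=1e-8, steps=1000):
    """Minimize a scalar function with the RMSprop update rule.

    v <- rho * v + (1 - rho) * g**2        (running mean of squared gradients)
    x <- x - lr * g / (sqrt(v) + eps)      (per-parameter normalized step)
    """
    x, v = x0, 0.0
    for _ in range(steps):
        g = grad_fn(x)
        v = rho * v + (1.0 - rho) * g * g
        x -= lr * g / (math.sqrt(v) + eps)
    return x


# Toy loss (x - 3)^2 with gradient 2(x - 3). A larger step than 1e-4 keeps the demo short.
x_final = rmsprop_minimize(lambda x: 2.0 * (x - 3.0), x0=0.0, lr=0.01, steps=2000)
print(round(x_final, 2))
```

Because the step is normalized by the gradient's recent magnitude, each update moves the parameter by roughly `lr`, regardless of how steep the loss is. This is the property usually credited for RMSprop's robustness on badly scaled objectives.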

Model Training and Validation

- Adversarial Training: Train the generator and discriminator in alternating cycles. The generator strives to produce molecules that the discriminator cannot distinguish from real data, while the discriminator continuously improves its ability to identify fakes [4] [2].

- Validation and Evaluation:
  - Validity: Check the percentage of generated molecular graphs that correspond to valid chemical structures using a toolkit like RDKit.
  - Uniqueness: Assess the structural diversity of the valid generated molecules.
  - Novelty: Determine the percentage of unique generated molecules not found in the training set.
  - Property Prediction: Use pre-trained models to predict ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties and synthetic accessibility of the generated molecules.
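The three generation metrics above can be computed from a list of generated molecule strings. The sketch below follows the definitions given in the list. It assumes the strings are already canonical SMILES, so that duplicates compare equal, and it takes the validity check as a caller-supplied predicate. With RDKit, that predicate would typically be `lambda s: Chem.MolFromSmiles(s) is not None`. In the usage example, the toy predicate that rejects strings containing "?" is a hypothetical stand-in.

```python
def generation_metrics(generated, training_set, is_valid):
    """Validity, uniqueness and novelty for generated molecule strings.

    validity   = valid / generated
    uniqueness = distinct valid / valid
    novelty    = distinct valid not in the training set / distinct valid
    """
    valid = [m for m in generated if is_valid(m)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


training = ["CCO", "c1ccccc1"]
generated = ["CCO", "CCN", "CCN", "C?C", "c1ccncc1"]
m = generation_metrics(generated, training, lambda s: "?" not in s)  # toy validity check
print(m)
```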

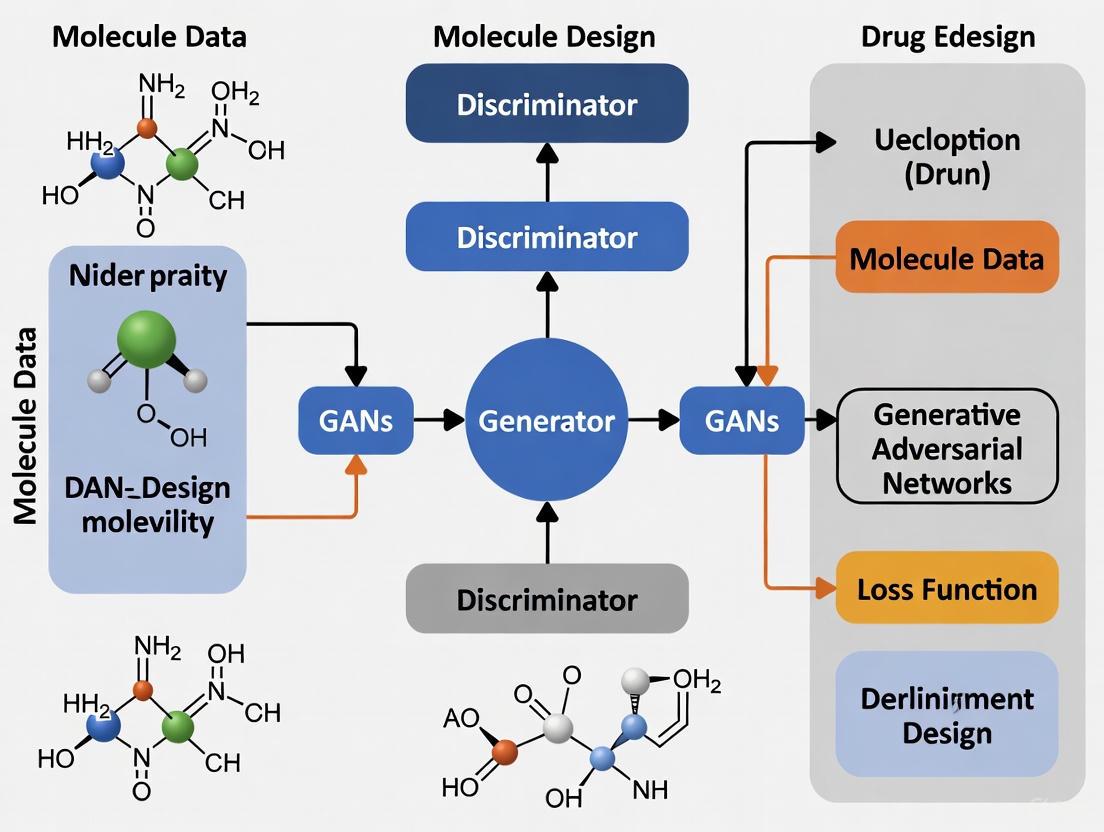

The following workflow diagram illustrates the complete MedGAN process:

The Scientist's Toolkit: Essential Research Reagents & Solutions

Successful implementation of a GAN pipeline for drug discovery relies on a suite of computational tools and data resources.

Table 3: Essential Research Reagents & Solutions for GAN-based Drug Discovery

| Tool/Resource | Type | Function in the Workflow |
|---|---|---|
| ZINC/ChEMBL | Chemical Database | Source of known bioactive molecules and building blocks for training generative models [2] [7]. |
| RDKit | Cheminformatics Toolkit | Handles molecular representation (e.g., SMILES, graph conversion), validity checks, and descriptor calculation [2]. |
| Graph Convolutional Network (GCN) | Deep Learning Layer | Processes molecular graph data, learning patterns from atoms (nodes) and bonds (edges) [2]. |
| Wasserstein GAN (WGAN) | Training Framework | Provides stable training for the generative model using Earth-Mover distance and gradient penalty [4] [2]. |
| RMSprop Optimizer | Optimization Algorithm | An adaptive learning rate optimizer that can outperform others in complex graph generation tasks [2]. |
| Pharmacophore Model | Virtual Screening Filter | Defines steric and electronic features necessary for bioactivity; used to constrain generation or post-filter outputs [8] [9]. |

Integrated Workflow for Target-Specific Molecule Design

For a comprehensive drug discovery campaign, GANs can be integrated into a larger workflow that combines generative and screening approaches. The LEGION framework provides a case study for this, focusing on extensive chemical space coverage around a specific biological target like NLRP3 [9]. This workflow integrates generative AI with AI-guided screening and state-of-the-art cheminformatics.

This integrated process begins with structure-based pharmacophore modeling, which uses the 3D structure of a target protein to define the essential steric and electronic features required for binding [8]. This is complemented by ligand-based design strategies, which extract common features from known active ligands [9]. These constraints then guide a generative AI model (GAN) to create novel molecular structures de novo and to enumerate a massive virtual library (e.g., ~110 million structures in the LEGION case study) [9]. Subsequent AI-guided virtual screening, which may use machine learning models to predict docking scores thousands of times faster than classical docking, prioritizes the most promising candidates for synthesis and in vitro validation [9] [7]. This end-to-end pipeline demonstrates a powerful new paradigm for the intelligent and scalable exploration of chemical space in drug discovery.
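The prioritization step reduces to ranking a very large library by a cheap predicted score and keeping only the best few for docking or synthesis. The sketch below is a minimal illustration built on a hypothetical surrogate `predict_score`, which here is just a lookup table. Lower, more negative scores indicate stronger predicted binding, as is conventional for docking scores. `heapq.nsmallest` accepts any iterable, including a generator that streams candidates from disk, and only keeps k items in its heap. This matters when the library holds on the order of 10^8 structures.

```python
import heapq


def prioritize(candidates, predict_score, k):
    """Return the k candidates with the lowest (best) predicted docking score."""
    return heapq.nsmallest(k, candidates, key=predict_score)


# Hypothetical surrogate: a lookup table standing in for a trained ML model.
fake_scores = {"mol_a": -7.2, "mol_b": -9.8, "mol_c": -5.1, "mol_d": -8.4}
shortlist = prioritize(list(fake_scores), fake_scores.get, k=2)
print(shortlist)
```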

What are GANs? Understanding the Adversarial Game Between Generator and Discriminator

Generative Adversarial Networks (GANs) represent a groundbreaking machine learning framework introduced by Ian Goodfellow and his colleagues in 2014 [10] [11]. This innovative approach operates within an unsupervised learning paradigm and utilizes deep learning techniques to generate realistic data by learning patterns from existing training datasets [11]. Unlike traditional models that only recognize or classify data, GANs take a creative approach by generating entirely new content that closely resembles real-world data [10]. The core innovation of GANs lies in their adversarial training process, where two neural networks—the generator and the discriminator—work in opposition to each other, continuously improving through competition [11] [12]. This unique architecture has transformed how computers generate images, videos, music, and more, making GANs particularly valuable in fields requiring synthetic data generation, including drug discovery and development [10] [13].

The Fundamental Architecture of GANs

The Adversarial Components

The GAN architecture consists of two deep neural networks that engage in an adversarial game [11] [12]:

Generator Model: The generator is a deep neural network that takes random noise as input and transforms it into synthetic data samples, such as images or molecular structures [10]. It learns the underlying data patterns by adjusting its internal parameters during training through backpropagation [10]. The generator's objective is to produce samples so realistic that the discriminator cannot distinguish them from genuine data [10] [12].

Discriminator Model: The discriminator acts as a binary classifier that distinguishes between real data from the training set and fake data produced by the generator [10] [11]. It learns to improve its classification ability through training, refining its parameters to detect fake samples more accurately [10]. For image data, the discriminator typically uses convolutional layers or other relevant architectures to extract features and enhance the model's discriminatory capabilities [10].

The Training Dynamics

The training process follows a competitive dynamic [12]:

- The generator starts with a random noise vector and transforms this noise into a fake data sample [10].

- The discriminator receives both real samples from the actual training dataset and fake samples created by the generator, then analyzes each input to determine whether it's real or fake [10].

- Through backpropagation, both networks update their parameters based on the outcome [11]. The generator uses feedback from the discriminator to improve, trying to create more realistic data, while the discriminator updates itself to better spot fake data [10] [11].

- This adversarial learning continues until the generator becomes highly proficient at producing realistic data and the discriminator struggles to distinguish real from fake samples, indicating the GAN has reached a well-trained state [10] [12].

Mathematical Foundation

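The GAN training process is formalized through a minimax objective between the generator and discriminator [10]:

$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))]$$

Where: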

- G is the generator network

- D is the discriminator network

- p_data(x) is the true data distribution

- p_z(z) is the distribution of random noise (usually normal or uniform)

- D(x) is the discriminator's estimate that x is real

- D(G(z)) is the discriminator's estimate that generated data is real

The generator loss focuses on how well the generator can deceive the discriminator into believing its data is real, while the discriminator loss measures how well the discriminator can distinguish between fake and real data [11].
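In practice, both losses are written as binary cross-entropy on the discriminator's outputs. The sketch below assumes those outputs are already available as probabilities strictly between 0 and 1. For the generator, it uses the non-saturating variant recommended in the original GAN paper. This variant maximizes log D(G(z)) instead of minimizing log(1 - D(G(z))), which gives stronger gradients early in training. When D outputs 1/2 for everything, the discriminator loss is log 4, the negative of the equilibrium value of the minimax objective.

```python
import math


def bce(p, target):
    """Binary cross-entropy for one predicted probability p and a 0/1 target."""
    return -math.log(p) if target == 1 else -math.log(1.0 - p)


def discriminator_loss(d_real, d_fake):
    # Real samples are labeled 1 and generated samples 0.
    real = sum(bce(p, 1) for p in d_real) / len(d_real)
    fake = sum(bce(p, 0) for p in d_fake) / len(d_fake)
    return real + fake


def generator_loss(d_fake):
    # Non-saturating form: the generator labels its own samples as real (1).
    return sum(bce(p, 1) for p in d_fake) / len(d_fake)


print(discriminator_loss([0.5, 0.5], [0.5, 0.5]), math.log(4))
print(generator_loss([0.5, 0.5]), math.log(2))
```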

GAN Framework Visualization

GAN Training Workflow

GAN Variations and Their Applications in Drug Discovery

Key GAN Architectures

Several GAN architectures have been developed with specific advantages for drug discovery applications:

Vanilla GAN: The simplest GAN type with both generator and discriminator built using multi-layer perceptrons (MLPs) [10] [11]. While foundational, vanilla GANs face problems like mode collapse (producing limited output types) and unstable training [10].

Conditional GAN (cGAN): Adds conditional parameters (labels) to guide the generation process, enabling controlled output generation [10] [11]. This allows researchers to generate molecules with specific characteristics or target affinities [10].
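In the simplest cGAN formulation, conditioning means concatenating an encoding of the label to the generator's noise vector, and likewise to the discriminator's input. The sketch below builds such a generator input. The target vocabulary and the latent size are hypothetical, chosen only for illustration.

```python
import random

# Hypothetical condition labels (e.g. target classes) for a conditional generator.
TARGETS = ["kinase", "GPCR", "protease"]


def conditional_input(target, latent_dim, rng):
    """Concatenate Gaussian noise with a one-hot encoding of the target label."""
    if target not in TARGETS:
        raise ValueError(f"unknown target: {target}")
    noise = [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]
    one_hot = [1.0 if t == target else 0.0 for t in TARGETS]
    return noise + one_hot


rng = random.Random(0)
z_c = conditional_input("GPCR", latent_dim=8, rng=rng)
print(len(z_c), z_c[-3:])
```

Sampling new noise while keeping the same label yields different molecules for the same target. Changing only the label steers generation toward a different target class.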

Deep Convolutional GAN (DCGAN): Uses convolutional neural networks (CNNs) for both generator and discriminator, replacing max-pooling layers with strided convolutions and removing fully connected layers [10] [11]. This architecture generates higher-quality, more realistic images and molecular representations [10].

Wasserstein GAN (WGAN): Employs the Wasserstein distance as the loss function, offering more stable training dynamics and helping to mitigate issues like mode collapse [2]. This approach is particularly valuable for generating complex molecular structures [2].

Advanced GAN Frameworks for Molecular Design

Recent research has developed specialized GAN frameworks optimized for drug discovery:

MedGAN: An optimized architecture combining Wasserstein GAN with Graph Convolutional Networks (GCNs) to generate novel quinoline-scaffold molecules from complex molecular graphs [2]. This approach preserves important molecular properties including chirality, atom charge, and favorable drug-like characteristics while generating novel structures [2].

VGAN-DTI: A framework that integrates GANs with variational autoencoders (VAEs) and multilayer perceptrons (MLPs) to improve drug-target interaction (DTI) predictions [14]. The model is reported to reach 96% accuracy, 95% precision, 94% recall, and a 94% F1 score in predicting drug-target interactions [14].

InstGAN: A novel GAN based on actor-critic reinforcement learning with instant and global rewards, designed to generate molecules at the token-level with multi-property optimization [15]. This approach addresses the significant challenge of optimizing multiple chemical properties simultaneously, which is essential for practical drug discovery applications [15].

GAN Performance Comparison in Drug Discovery

Table 1: Performance Metrics of GAN Frameworks in Drug Discovery

| GAN Framework | Primary Application | Key Performance Metrics | Unique Advantages |
|---|---|---|---|
| MedGAN [2] | Novel quinoline-scaffold molecule generation | 25% valid molecules; 62% fully connected; 92% quinolines; 93% novel; 95% unique | Preserves chirality, atom charge, and drug-like properties |
| VGAN-DTI [14] | Drug-target interaction prediction | 96% accuracy; 95% precision; 94% recall; 94% F1 score | Combines GANs, VAEs, and MLPs for enhanced prediction |
| InstGAN [15] | Multi-property molecular optimization | Comparable performance to SOTA models | Efficiently scales from single to multi-property optimization |

Table 2: Molecular Generation Outcomes from Optimized GAN Models

| Evaluation Metric | MedGAN Performance [2] | Industry Significance |
|---|---|---|
| Validity Score | 0.25 | 25% of generated molecules are chemically valid |
| Connectivity Score | 0.62 | 62% of molecules are fully connected |
| Scaffold Specificity | 92% | Success rate in generating target quinoline molecules |
| Novelty | 93% | Percentage of generated molecules not in training data |
| Uniqueness | 95% | Demonstrates diversity in generated molecules |
| Total Novel Molecules | 4,831 | Fully connected, novel quinoline structures generated |

Experimental Protocols for GAN Implementation in Drug Discovery

Protocol 1: Implementing Molecular GAN with PyTorch

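This protocol outlines the foundational steps for implementing a GAN with PyTorch. It is adapted from an image-generation tutorial on the CIFAR-10 dataset [10]. When applying it to molecules, the image-specific parts (the transformations in Step 2 and the convolutional layers in Steps 5 and 6) must be replaced by a molecular encoding, such as one-hot SMILES sequences or graph tensors: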

Step 1: Import Required Libraries

Step 2: Define Image Transformations

- Use PyTorch's transforms to convert images to tensors

- Normalize pixel values between -1 and 1 for better training stability

Step 3: Load and Prepare the Dataset

- Download and load the molecular dataset with defined transformations

- Use a DataLoader to process the dataset in mini-batches with size 32

- Shuffle the data to ensure robust training

Step 4: Define GAN Hyperparameters

- Set latent_dim: Dimensionality of the noise vector (typically 100)

- Set lr: Learning rate of the optimizer (typically 0.0002)

- Set beta1, beta2: Beta parameters for Adam optimizer (e.g., 0.5, 0.999)

- Set num_epochs: Number of training cycles (typically 10+)

Step 5: Build the Generator Network

- Create a neural network that converts random noise into molecular structures

- Use transpose convolutional layers, batch normalization, and ReLU activations

- Apply Tanh activation in the final layer to scale outputs to the range [-1, 1]
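For the generator in Step 5, the output size of each transposed convolution determines how a small latent "seed" grows to the target resolution. The sketch below applies the standard size formulas, as documented for PyTorch's `Conv2d` and `ConvTranspose2d` with dilation 1. The setting of kernel 4, stride 2, and padding 1 is a common DCGAN choice assumed here, not a value prescribed by the protocol. CIFAR-10 images are 32x32, which is why the upsampling chain ends at 32.

```python
def conv_out(n, kernel, stride, padding):
    """Spatial size after a strided convolution (dilation 1)."""
    return (n + 2 * padding - kernel) // stride + 1


def conv_transpose_out(n, kernel, stride, padding, output_padding=0):
    """Spatial size after a transposed convolution (dilation 1)."""
    return (n - 1) * stride - 2 * padding + kernel + output_padding


# Generator: upsample a 4x4 seed to 32x32, doubling the size at each layer.
sizes = [4]
for _ in range(3):
    sizes.append(conv_transpose_out(sizes[-1], kernel=4, stride=2, padding=1))
print(sizes)

# Discriminator: mirror the generator with strided convolutions instead of max pooling.
down = [32]
for _ in range(3):
    down.append(conv_out(down[-1], kernel=4, stride=2, padding=1))
print(down)
```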

Step 6: Build the Discriminator Network

- Implement a binary classifier to distinguish real from generated molecules

- Use convolutional layers with appropriate activation functions

- Output a probability score between 0 and 1

Step 7: Training Loop

- Alternate between training discriminator and generator

- Use appropriate loss functions (e.g., binary cross-entropy)

- Monitor training progress and adjust parameters as needed
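The alternating loop in Step 7 can be shown concretely without a deep learning framework. The sketch below trains a deliberately tiny 1-D GAN in plain Python. The "generator" only learns a shift, the "discriminator" is a logistic regression, and the gradients are derived by hand. It is a toy stand-in for the PyTorch networks described above, not an implementation of them. The data distribution, learning rate, and batch size are arbitrary assumptions. The shift b should move from 0 toward the real mean of 4. Plain GAN dynamics can oscillate, however, so it may circle that value rather than settle on it exactly.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_step(params, real_batch, noise_batch, lr):
    """One alternating update of a toy 1-D GAN with hand-derived gradients.

    Generator:     G(z) = z + b            (learns only a shift b)
    Discriminator: D(x) = sigmoid(w * x + c)
    """
    w, c, b = params["w"], params["c"], params["b"]
    fake = [z + b for z in noise_batch]

    # Discriminator step: minimize -mean log D(x_real) - mean log(1 - D(x_fake)).
    r_terms = [sigmoid(w * x + c) - 1.0 for x in real_batch]
    f_terms = [sigmoid(w * x + c) for x in fake]
    grad_w = (sum(r * x for r, x in zip(r_terms, real_batch)) / len(real_batch)
              + sum(f * x for f, x in zip(f_terms, fake)) / len(fake))
    grad_c = sum(r_terms) / len(real_batch) + sum(f_terms) / len(fake)
    w -= lr * grad_w
    c -= lr * grad_c

    # Generator step (non-saturating): minimize -mean log D(G(z)) under the updated D.
    grad_b = sum((sigmoid(w * (z + b) + c) - 1.0) * w for z in noise_batch) / len(noise_batch)
    b -= lr * grad_b
    return {"w": w, "c": c, "b": b}


rng = random.Random(0)
params = {"w": 0.0, "c": 0.0, "b": 0.0}
for _ in range(200):
    real = [rng.gauss(4.0, 1.0) for _ in range(32)]   # "real" data ~ N(4, 1)
    noise = [rng.gauss(0.0, 1.0) for _ in range(32)]
    params = train_step(params, real, noise, lr=0.05)
print(params)
```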

Protocol 2: Optimized MedGAN Framework for Quinoline Generation

This protocol details the specialized implementation for generating novel quinoline scaffolds, based on the optimized MedGAN architecture [2]:

Step 1: Data Preparation and Representation

- Represent molecules as graphs with adjacency and feature tensors

- Build feature tensors from chemical information including atom types, chirality, and atom charge

- Structure input data as graphs with nodes (atoms) and edges (bonds)

Step 2: Model Architecture Configuration

- Implement Wasserstein GAN (WGAN) with Gradient Penalty for training stability

- Incorporate Graph Convolutional Network (GCN) layers to analyze relationships between atoms and bonds

- Configure generator with 4,092 units and 63,451,470 trainable parameters

- Configure discriminator with 4,092 units and 22,831,617 trainable parameters
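The gradient penalty in Step 2 can be illustrated without autograd. The sketch below computes the WGAN-GP critic loss for a critic given as any Python function of a feature vector. It approximates the input gradient by central finite differences. Real implementations instead use automatic differentiation on batches of graph tensors. The linear critic and two-dimensional points are hypothetical, chosen so that the gradient norm (5) and hence the penalty can be checked by hand. The value λ = 10 is the default suggested by the WGAN-GP authors, not a value reported for MedGAN.

```python
import math
import random


def numerical_grad(f, x, h=1e-5):
    """Central-difference gradient of a scalar function f at point x (a list)."""
    grad = []
    for i in range(len(x)):
        up, down = list(x), list(x)
        up[i] += h
        down[i] -= h
        grad.append((f(up) - f(down)) / (2 * h))
    return grad


def wgan_gp_critic_loss(critic, real, fake, lam=10.0, rng=random):
    """E[f(fake)] - E[f(real)] + lam * E[(||grad f(x_hat)|| - 1)^2].

    x_hat is sampled uniformly on the segment between paired real and fake points.
    """
    wass = sum(critic(x) for x in fake) / len(fake) - sum(critic(x) for x in real) / len(real)
    penalty = 0.0
    for xr, xf in zip(real, fake):
        eps = rng.random()
        x_hat = [eps * a + (1 - eps) * b for a, b in zip(xr, xf)]
        norm = math.sqrt(sum(g * g for g in numerical_grad(critic, x_hat)))
        penalty += (norm - 1.0) ** 2
    return wass + lam * penalty / len(real)


def critic(x):
    # Hypothetical linear critic; its gradient is (3, 4), with norm 5 everywhere.
    return 3.0 * x[0] + 4.0 * x[1]


real = [[1.0, 0.0], [0.0, 1.0]]
fake = [[0.0, 0.0], [0.5, 0.5]]
print(wgan_gp_critic_loss(critic, real, fake, rng=random.Random(0)))
```

The penalty pulls the critic's gradient norm toward 1. This is how WGAN-GP enforces the Lipschitz constraint that the original WGAN imposed by weight clipping.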

Step 3: Hyperparameter Optimization

- Set latent space dimensions to 256 inputs

- Use RMSprop optimizer instead of Adam for better performance in graph generation

- Set learning rate to 0.0001

- Use combination of tanh and ReLU activation functions

- Implement LeakyReLU to mitigate the "dying ReLU" problem
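A ReLU unit is "dead" when its pre-activation is negative for every input. ReLU then passes zero gradient, so the unit's incoming weights stop updating. LeakyReLU keeps a small slope alpha for negative inputs. The sketch below compares the two derivatives. The value alpha = 0.2 is a common choice in GAN discriminators and is assumed here, not taken from [2].

```python
def relu(x):
    return max(0.0, x)


def relu_grad(x):
    return 1.0 if x > 0 else 0.0


def leaky_relu(x, alpha=0.2):
    return x if x > 0 else alpha * x


def leaky_relu_grad(x, alpha=0.2):
    return 1.0 if x > 0 else alpha


pre_activations = [-3.0, -0.5, 0.7]
print([relu_grad(x) for x in pre_activations])        # -> [0.0, 0.0, 1.0]: no gradient for negatives
print([leaky_relu_grad(x) for x in pre_activations])  # -> [0.2, 0.2, 1.0]: negatives still learn
```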

Step 4: Model Training and Validation

- Train generator and discriminator in alternating cycles

- Monitor validity, connectivity, and scaffold specificity scores

- Validate generated structures for chemical correctness and novelty

- Employ regularization techniques to prevent overfitting

Step 5: Molecular Evaluation and Selection

- Assess generated molecules for drug-likeness using standard metrics

- Evaluate pharmacokinetics, toxicity, and synthetic accessibility

- Select promising candidates for further experimental validation
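Drug-likeness screening often starts with Lipinski's rule of five. Its criteria are a molecular weight of at most 500 Da, a LogP of at most 5, at most 5 hydrogen-bond donors, and at most 10 acceptors, with one violation commonly tolerated. The sketch below applies the rule to precomputed descriptors. In practice these would come from a toolkit such as RDKit, for example `Descriptors.MolWt`, `Crippen.MolLogP`, `Lipinski.NumHDonors`, and `Lipinski.NumHAcceptors`. The candidate values shown are made up.

```python
def lipinski_violations(mw, logp, h_donors, h_acceptors):
    """Count violations of Lipinski's rule of five."""
    return sum([
        mw > 500,
        logp > 5,
        h_donors > 5,
        h_acceptors > 10,
    ])


def passes_ro5(props, max_violations=1):
    return lipinski_violations(**props) <= max_violations


# Hypothetical precomputed descriptors for two generated candidates.
candidates = {
    "cand_1": {"mw": 342.4, "logp": 2.9, "h_donors": 2, "h_acceptors": 5},
    "cand_2": {"mw": 612.7, "logp": 6.3, "h_donors": 4, "h_acceptors": 11},
}
print({name: passes_ro5(p) for name, p in candidates.items()})
```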

Table 3: Key Research Reagent Solutions for GAN-Based Drug Discovery

| Resource Category | Specific Tools & Databases | Function in GAN Implementation |
|---|---|---|
| Chemical Databases | ZINC15, ChEMBL, BindingDB | Provide training data of known molecules and their properties [14] [2] |
| Molecular Representations | SMILES, Molecular Graphs, Fingerprints | Standardized formats for representing chemical structures [14] [15] |
| Deep Learning Frameworks | PyTorch, TensorFlow, Keras | Provide building blocks for implementing GAN architectures [10] |
| Computational Resources | GPU Clusters, Cloud Computing | Accelerate training of computationally intensive GAN models [2] |
| Evaluation Metrics | Validity, Uniqueness, Novelty Scores | Quantify performance of molecular generation models [2] |
| Property Prediction | QSAR Models, Docking Simulations | Assess generated molecules for desired biological activity [14] |

GAN Integration in Drug Discovery Workflow

GANs in Drug Discovery Pipeline

Challenges and Future Directions

Despite their promising applications, GANs in drug discovery face several significant challenges. Training instability remains a persistent issue, where the generator and discriminator may not achieve equilibrium, leading to suboptimal performance [10] [15]. Mode collapse, where the generator produces limited diversity in outputs, is another common problem that requires specialized architectural solutions [10] [16]. The high computational cost associated with training GANs on large chemical databases presents practical limitations, particularly when incorporating reinforcement learning with techniques like Monte Carlo Tree Search [15].

Future research directions focus on improving training stability through novel architectures like Wasserstein GAN with gradient penalty [2], enhancing molecular diversity through techniques such as maximized information entropy [15], and developing more efficient multi-property optimization approaches [15]. As these technical challenges are addressed, GANs are poised to become increasingly valuable tools in accelerating drug discovery and reducing development costs, potentially generating between USD 60 billion and USD 110 billion annually in value for the pharmaceutical sector [14].

Why GANs for Molecules? Key Advantages Over Traditional Generative Models

The exploration of chemical space for designing new drug candidates is a monumental challenge due to its vastness and high dimensionality. Traditional inverse design approaches typically rely on heuristic rules or domain-specific knowledge, often encountering difficulties with novelty and generalizability [17]. While machine learning offers promise, its need for massive labeled datasets remains a significant constraint. Generative models provide a revolutionary solution by creating synthetic data that mimics real molecular characteristics, enabling researchers to generate novel compounds with desired properties without sole reliance on extensive experimental datasets [17].

Generative Adversarial Networks (GANs) represent a paradigm shift in this landscape. Unlike traditional generative models such as Gaussian Mixture Models and Hidden Markov Models, GANs employ an adversarial training process that allows them to produce highly realistic and complex molecular structures [18] [4]. This capability is particularly valuable in drug discovery, where researchers can create virtual libraries of molecules with tailored properties, thus accelerating the identification of promising drug candidates [13] [19]. The flexibility of GANs to handle various molecular representations, including SMILES strings and molecular graphs, further enhances their utility across different stages of the drug development pipeline [20] [4].

Key Advantages of GANs Over Traditional Generative Models

When evaluated against traditional generative models, GANs demonstrate distinct and powerful advantages for molecular design, primarily driven by their unique adversarial architecture and capacity for learning complex distributions.

Table 1: Comparative Analysis of Generative Models for Molecular Design

| Feature | Traditional Generative Models (e.g., Gaussian Mixture Models) | Generative Adversarial Networks (GANs) |
|---|---|---|
| Theoretical Foundation | Well-established statistical and probabilistic theories [18] | Adversarial game between generator and discriminator networks [4] |
| Expressive Power | Limited ability to model complex distributions and generate realistic data [18] | High-quality, realistic, and complex molecular structures [18] [14] |
| Handling High-Dimensionality | Struggles due to the curse of dimensionality [18] | Excels at modeling high-dimensional data like molecular structures [18] |
| Output Diversity | Limited by pre-defined distributions | Capable of generating structurally diverse compounds with desired pharmacological traits [14] [21] |
| Primary Training Challenge | Slow and difficult convergence with iterative optimization [18] | Training instability and mode collapse, mitigated by advanced variants [17] [21] |
| Interpretability | Generally more interpretable [18] | Often considered a "black box" [18] |

Generation of Highly Realistic and Diverse Molecular Structures

The most significant advantage of GANs lies in their ability to produce novel molecules that are not only chemically valid but also exhibit high realism and structural diversity. Traditional generative models are often limited in their expressiveness and struggle to capture the complex patterns inherent in molecular data [18]. In contrast, the adversarial training process enables GANs to learn the underlying data distribution of known chemicals with remarkable fidelity, allowing them to generate plausible new candidates that push the boundaries of known chemical space [14]. This diversity is crucial for exploring novel therapeutic mechanisms and avoiding regions of chemical space with known intellectual property constraints.

Superior Handling of High-Dimensional and Complex Chemical Space

The chemical space of possible drug-like molecules is astronomically large and inherently high-dimensional. Traditional generative models often succumb to the "curse of dimensionality," making it difficult for them to model this space effectively [18]. GANs, particularly modern architectures like Graph-Transformer GANs, are well suited to this task. They can natively process 2D molecular graphs, which represent atom connectivity explicitly [20] [22]. Linear notations like SMILES encode the same connectivity only implicitly, through branching and ring-closure syntax, so bonded atoms can end up far apart in the string. This capability allows for a more nuanced and biologically relevant generation process.

Flexibility and Integration into End-to-End Discovery Platforms

GANs offer remarkable flexibility, both in the types of data they can process and their ability to be integrated into larger, automated drug discovery workflows. They can be conditioned on specific properties, such as high potency or low toxicity, to guide the generation toward molecules with optimized profiles [19] [4]. Furthermore, as evidenced by industry-leading platforms like Insilico Medicine's Chemistry42, GANs form a core component of end-to-end AI-driven discovery systems. These platforms integrate target identification, molecular generation, and clinical outcome prediction, creating a holistic and efficient R&D pipeline [23]. This synergy between generative and predictive components underscores the transformative role of GANs in modern computational drug design.

Detailed Experimental Protocols for GAN Implementation

To leverage the advantages of GANs, researchers require robust and reproducible experimental protocols. The following sections detail a standard workflow for molecular generation using a GAN framework and a specific protocol for an adaptive training technique that enhances exploration.

Protocol 1: Standard GAN Training for De Novo Molecular Design

This protocol outlines the foundational steps for training a GAN to generate novel molecular structures, using SMILES strings or molecular graphs as input.

Objective: To train a generative adversarial network capable of producing valid, novel, and unique molecules with drug-like properties.

Materials & Reagents:

- Hardware: A high-performance computing workstation with a modern GPU (e.g., NVIDIA A100 or RTX 4090) with a minimum of 16GB VRAM.

- Software: Python 3.8+, PyTorch or TensorFlow, RDKit, Scikit-learn, and a specialized chemical informatics library (e.g., DeepChem or ChemPy).

- Datasets: A curated and pre-processed chemical database such as ZINC, ChEMBL, or PubChem [20] [21].

Table 2: Research Reagent Solutions for GAN Experiments

| Reagent / Resource | Function / Application | Example Sources |
|---|---|---|
| ZINC Database | A large, publicly available database of commercially available compounds for training generative models. | https://zinc.docking.org/ |
| RDKit | An open-source cheminformatics toolkit used for processing molecules, calculating descriptors, and validating generated structures. | https://www.rdkit.org/ |
| PyTorch | A deep learning framework used to build and train the generator and discriminator neural networks. | https://pytorch.org/ |
| BindingDB | A public database of protein-ligand binding affinities used for training conditional GANs or validating generated molecules. | https://www.bindingdb.org/ |

Procedure:

- Data Preprocessing: Standardize the molecular structures from the chosen dataset. For SMILES-based models, canonicalize the SMILES strings and remove duplicates. For graph-based models, convert molecules into graph representations where nodes are atoms and edges are bonds.

- Model Architecture Selection:

- Generator (G): Design a network that maps a random noise vector z to a molecular structure. For SMILES, this is typically a recurrent neural network (RNN) or Transformer. For graphs, use a graph neural network.

- Discriminator (D): Design a network that takes a molecular structure and outputs a probability of it being "real" (from the training data) versus "fake" (from the generator).

- Adversarial Training:

- Initialize the weights of both G and D.

- For each training iteration:

  a. Train Discriminator: Sample a mini-batch of real molecules from the training data and a mini-batch of generated molecules from G. Update D's parameters to maximize its ability to correctly classify real and fake molecules.

  b. Train Generator: Update G's parameters to minimize the discriminator's ability to detect its fakes (i.e., maximize the probability that D assigns to generated molecules being real).

- Validation and Sampling: Periodically sample molecules from the generator during training. Use RDKit to validate their chemical correctness and calculate key metrics (validity, uniqueness, novelty).

- Termination: Stop training when the performance on the validation set plateaus or the generated molecules meet predefined quality thresholds.
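The validity, uniqueness, and novelty metrics used in the validation step can be computed in a few lines. This is a minimal sketch that follows one common convention: uniqueness is measured over valid molecules, and novelty over unique valid molecules. The `canonicalize` callable is an assumption. In practice it would wrap RDKit's `Chem.MolFromSmiles`, which returns `None` for unparsable input, and `Chem.MolToSmiles`. The `toy_canonicalize` helper is hypothetical and only stands in for RDKit so the example runs without it.

```python
from __future__ import annotations

from typing import Callable, Iterable, Optional


def generation_metrics(
    generated: list[str],
    training: Iterable[str],
    canonicalize: Callable[[str], Optional[str]],
) -> dict[str, float]:
    """Validity, uniqueness and novelty of a batch of generated SMILES.

    `canonicalize` must return a canonical SMILES string, or None when the
    input cannot be parsed into a molecule.
    """
    # Canonicalize the training set once so novelty compares like with like.
    train_set = {c for c in map(canonicalize, training) if c is not None}

    # Validity: fraction of generated strings that parse into molecules.
    valid = [c for c in map(canonicalize, generated) if c is not None]
    validity = len(valid) / len(generated) if generated else 0.0

    # Uniqueness: fraction of valid molecules that are distinct.
    unique = set(valid)
    uniqueness = len(unique) / len(valid) if valid else 0.0

    # Novelty: fraction of unique valid molecules absent from the training set.
    novelty = len(unique - train_set) / len(unique) if unique else 0.0

    return {"validity": validity, "uniqueness": uniqueness, "novelty": novelty}


def toy_canonicalize(smiles: str) -> Optional[str]:
    """Hypothetical stand-in for an RDKit canonicalizer (illustration only):
    strings containing "X" count as invalid; everything else is upper-cased."""
    return None if "X" in smiles else smiles.upper()
```

For example, `generation_metrics(["CCO", "cco", "CCX", "CCN"], ["CCO"], toy_canonicalize)` treats one of the four strings as invalid, counts two distinct valid molecules, and finds one of those two absent from the training set.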

The following workflow diagram illustrates this standard training procedure:

Protocol 2: Adaptive Training with Genetic Algorithm-inspired Replacement

This advanced protocol addresses the common issue of mode collapse in GANs by incorporating concepts from Genetic Algorithms, which has been shown to drastically improve the exploration of chemical space [21].

Objective: To enhance the diversity and novelty of generated molecules by dynamically updating the training dataset with high-performing generated candidates.

Materials & Reagents: Same as Protocol 1, with the additional requirement of a defined fitness function (e.g., the quantitative estimate of drug-likeness, QED).

Procedure:

- Initialization: Begin with a standard GAN training loop (as in Protocol 1) on the initial training dataset (Training Data).

- Sampling and Evaluation: At regular intervals (e.g., every N epochs), use the current generator to produce a large set of candidate molecules.

- Fitness Assessment: Calculate a fitness score (e.g., drug-likeness) for each generated candidate using a predefined metric.

- Replacement Strategy: Select the top-performing generated molecules and use them to replace a portion of the least-fit molecules in the current Training Data. This creates an updated, evolved training set.

- Optional Recombination: Introduce further diversity by applying crossover (recombination) between generated molecules and molecules in the training set [21].

- Continued Training: Resume GAN training using the newly updated Training Data. This process continuously shifts the training distribution, encouraging the GAN to explore new regions of chemical space.

- Termination: Continue for a fixed number of cycles or until a satisfactory level of novelty and fitness is achieved in the generated molecules.
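The replacement strategy can be sketched as a pure function over SMILES strings. This is a minimal illustration, not the implementation from [21]. The `fitness` callable (for example, an RDKit-based QED scorer), the `fraction` parameter, and the function name are assumptions. Candidates are assumed to have been validated already.

```python
from __future__ import annotations

from typing import Callable


def replace_least_fit(
    training: list[str],
    candidates: list[str],
    fitness: Callable[[str], float],
    fraction: float = 0.1,
) -> list[str]:
    """Return an evolved training set of the same size.

    The least-fit `fraction` of the training molecules is swapped for the
    best-scoring generated candidates that are not already in the set.
    """
    # Current training set ordered from least to most fit.
    ranked = sorted(training, key=fitness)

    # Deduplicate candidates and drop any already in training, best first.
    # The inner sorted() makes tie-breaking deterministic.
    novel = sorted(sorted(set(candidates) - set(training)), key=fitness, reverse=True)

    # Never replace more molecules than there are novel candidates.
    n_replace = min(int(len(training) * fraction), len(novel))
    return ranked[n_replace:] + novel[:n_replace]
```

A random-replacement control, useful for checking whether fitness guidance matters, can be obtained by shuffling the novel candidates instead of sorting them by fitness.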

The adaptive training process, which significantly enhances exploration, is visualized below:

Case Study & Performance Validation

The practical efficacy of GANs in molecular design is best demonstrated through concrete examples and quantitative benchmarks. The VGAN-DTI framework, which integrates GANs with Variational Autoencoders (VAEs) and Multilayer Perceptrons (MLPs), serves as a compelling case study. This model was designed to enhance the prediction of drug-target interactions (DTI), a critical task in early-stage drug discovery [14].

In this architecture, the GAN is tasked with generating diverse molecular candidates, while the VAE focuses on producing synthetically feasible molecules by learning smooth latent representations. The MLP then predicts the binding affinity between the generated molecules and target proteins. This combination leverages the strengths of each component: the GAN's diversity mitigates the VAE's tendency to produce overly smooth distributions, while the VAE's stability aids the GAN's training. When evaluated on a benchmark dataset, this hybrid framework achieved a predictive accuracy of 96%, with a precision of 95% and a recall of 94%, substantially outperforming existing methods [14]. This result suggests that GAN-generated molecules can be not only structurally novel but also relevant to specific biological targets.

Studies on adaptive training methods have also provided quantitative evidence that GAN exploration can be substantially improved. One study showed that a standard GAN (the control) quickly plateaus in the number of novel molecules it produces. In contrast, a GAN employing a genetic algorithm-inspired replacement strategy continued to generate new molecules throughout training, ultimately producing roughly an order of magnitude more novel compounds (from ~10⁵ to ~10⁶) [21]. This approach also shifted the property distributions of generated molecules (e.g., toward higher drug-likeness) away from the original training data, demonstrating its ability to steer exploration toward more desirable regions of chemical space.

Generative Adversarial Networks have firmly established themselves as a transformative technology in computational molecular design. Their key advantages, including the generation of highly realistic and diverse molecular structures, effective handling of high-dimensional chemical space, and flexibility for integration and optimization, provide a powerful toolkit for addressing the inherent challenges of drug discovery. While challenges such as training instability and interpretability persist, advanced strategies like hybrid architectures (e.g., LM-GAN, VAE-GAN) and adaptive training protocols help mitigate these issues.

The ongoing development of more sophisticated GAN variants, such as Graph-Transformer GANs, promises to further enhance the fidelity and biological relevance of generated molecules. As the field progresses, the integration of GANs into end-to-end, automated drug discovery platforms signifies a paradigm shift toward a more efficient, data-driven future for pharmaceutical R&D. By enabling the rapid exploration and optimization of novel chemical entities, GANs are poised to significantly accelerate the journey from concept to clinic, reducing the time and cost associated with bringing new therapeutics to patients.

The application of Generative Adversarial Networks (GANs) has revolutionized de novo molecular design, offering a powerful method for exploring the vast chemical space estimated to contain up to 10^60 drug-like molecules [24]. However, GANs face significant challenges, including training instability, mode collapse, and difficulties in generating valid molecular structures from simplified molecular input line entry system (SMILES) representations [25] [26]. To address these limitations, researchers are increasingly turning to hybrid approaches that combine GANs with other powerful artificial intelligence frameworks. Variational Autoencoders (VAEs) provide a stable, probabilistic approach to learning smooth latent representations of molecular structures, while Reinforcement Learning (RL) introduces targeted optimization capabilities for specific chemical properties [27]. This article examines how the strategic integration of VAEs and RL with GAN frameworks is creating more robust and effective generative models, advancing the frontier of AI-driven drug discovery.

Architectural Foundations: VAEs and GANs

Variational Autoencoders (VAEs) for Molecular Representation

Variational Autoencoders employ an encoder-decoder architecture to learn continuous latent representations of molecular structures [14] [28]. The encoder network maps input molecular features (such as fingerprint vectors or SMILES strings) to a probabilistic latent space, characterized by mean (μ) and log-variance (log σ²) parameters [14]. The decoder network then reconstructs molecular structures from samples drawn from this latent space. A key feature of VAEs is their loss function, which combines reconstruction loss with Kullback-Leibler (KL) divergence, ensuring the learned latent distribution approximates a prior distribution (typically Gaussian) while maintaining accurate reconstructions [14] [29]. This probabilistic framework enables smooth interpolation in latent space and generates synthetically feasible molecules, making VAEs particularly valuable for initial exploration of chemical space [30] [31].
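Two ingredients described above are compact enough to write out: sampling from the latent distribution, and the closed-form KL divergence to a standard normal prior. The sketch below is plain Python and handles a single latent vector with a diagonal Gaussian posterior. It is illustrative only. A real model would compute the same quantities batched in PyTorch or TensorFlow, where the reparameterization lets gradients flow through μ and log σ².

```python
from __future__ import annotations

import math
import random


def reparameterize(mu: list[float], log_var: list[float], rng: random.Random) -> list[float]:
    """Draw z = mu + sigma * eps with eps ~ N(0, I), where sigma = exp(log_var / 2)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu: list[float], log_var: list[float]) -> float:
    """Closed-form KL divergence between N(mu, diag(sigma^2)) and the prior N(0, I)."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


def vae_loss(reconstruction_loss: float, mu: list[float], log_var: list[float]) -> float:
    """Loss to minimize: reconstruction loss plus the KL term (the negative ELBO)."""
    return reconstruction_loss + kl_to_standard_normal(mu, log_var)
```

The KL term is zero exactly when the encoder outputs μ = 0 and log σ² = 0, which is why it acts as a pull toward the prior. The reconstruction term pulls in the opposite direction, toward informative latent codes.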

Generative Adversarial Networks (GANs) for Realistic Synthesis

GANs operate on an adversarial principle, featuring a generator network that creates synthetic molecular structures from random noise vectors, and a discriminator network that distinguishes between real and generated molecules [28] [26]. The two networks engage in a minimax game, with the generator striving to produce increasingly realistic molecules that fool the discriminator [14]. This adversarial training process enables GANs to generate highly realistic and diverse molecular structures with desirable pharmacological characteristics [14] [31]. However, GAN training is notoriously unstable and susceptible to mode collapse, where the generator produces limited structural diversity [28] [26]. Additionally, applying GANs to discrete data representations like SMILES strings presents significant challenges [25].
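For reference, the minimax game described above is conventionally written as the original GAN value function. Here $p_{\text{data}}$ is the distribution of real (training) molecules and $p_z$ is the noise prior from which the generator's input vectors are drawn:

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

In practice the generator is often trained with the non-saturating variant, which maximizes $\mathbb{E}_{z \sim p_z}[\log D(G(z))]$ instead. This variant gives stronger gradients early in training, when the discriminator easily rejects generated molecules.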

Table 1: Comparative Analysis of VAE and GAN Architectures for Molecular Generation

| Feature | Variational Autoencoders (VAEs) | Generative Adversarial Networks (GANs) |
|---|---|---|
| Architecture | Encoder-decoder with probabilistic latent space [28] | Generator-discriminator in adversarial setup [28] |
| Training Stability | Generally stable and predictable [31] | Often unstable, requires careful tuning [31] [26] |
| Sample Quality | Can produce blurrier outputs [28] | High-quality, sharp molecular structures [31] |
| Latent Space | Explicit, probabilistic, interpretable [28] [31] | Implicit, less interpretable [31] |
| Diversity | Better coverage of data distribution [28] | Prone to mode collapse (limited diversity) [28] |
| Primary Strength | Smooth latent space interpolation, anomaly detection [31] | High realism, creative applications [31] |

Research Reagent Solutions for Molecular Generation

Table 2: Essential Research Tools and Resources for AI-Driven Molecular Generation

| Resource Type | Examples | Function in Molecular Generation |
|---|---|---|
| Molecular Representations | SMILES, SELFIES, DeepSMILES, Molecular Graphs [24] | Encodes chemical structures for machine learning processing [24] |
| Chemical Databases | BindingDB, ZINC, ChEMBL [14] [32] | Provides annotated bioactivity and compound data for training [32] |
| Benchmark Datasets | QM9, ZINC [25] | Standardized datasets for model validation and comparison [25] |
| Software Platforms | DeepChem, Schrödinger Glide, AutoDock [32] | Enables virtual screening, docking simulations, and property prediction [32] |
| ADMET Prediction Tools | ADMET Predictor, SwissADME [32] | Predicts pharmacokinetic and toxicity profiles early in design [32] |

Synergistic Integration: VAE-GAN Hybrid Frameworks

The VGAN-DTI Framework

The VGAN-DTI framework represents a cutting-edge approach that synergistically combines VAEs, GANs, and Multilayer Perceptrons (MLPs) for enhanced drug-target interaction (DTI) prediction [14]. In this architecture, VAEs serve as precise encoders of molecular features, generating latent representations and novel molecules for target protein interactions [14]. GANs then generate diverse drug-like molecules, enhancing compound efficacy and structural variety [14]. Finally, MLPs classify interactions and predict binding affinities using labeled datasets from sources like BindingDB [14]. This hybrid model has demonstrated exceptional performance, achieving 96% accuracy, 95% precision, 94% recall, and 94% F1 score in DTI prediction, outperforming existing methods [14]. The VAE component ensures synthetically feasible molecule generation, while the GAN component enhances structural diversity, together creating a more balanced and effective generative system.

Diagram 1: VGAN-DTI Framework Architecture. This workflow illustrates the synergistic integration of VAEs for latent space learning, GANs for molecular generation, and MLPs for interaction prediction.

Experimental Protocol: Implementing VGAN-DTI for Drug-Target Interaction Prediction

Objective: Predict drug-target interactions and generate novel molecular candidates with desired binding properties using the VGAN-DTI framework.

Materials and Software Requirements:

- Molecular datasets (BindingDB, ChEMBL)

- SMILES or molecular graph representations

- Python with TensorFlow/PyTorch

- RDKit or Open Babel for chemical handling

- High-performance computing resources (GPU recommended)

Methodology:

Data Preprocessing:

VAE Component Training:

- Configure encoder with 2-3 hidden layers (512 units each, ReLU activation)

- Implement latent space with mean (μ) and log-variance (log σ²) layers

- Set up decoder mirroring encoder architecture

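- Train by maximizing the evidence lower bound (ELBO), $\mathcal{L}_{\text{VAE}} = \mathbb{E}_{q_\theta(z \mid x)}[\log p_\phi(x \mid z)] - D_{\mathrm{KL}}(q_\theta(z \mid x) \,\|\, p(z))$. This is equivalent to minimizing the reconstruction loss plus the KL divergence [14]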

- Optimize with Adam optimizer, learning rate 0.001

GAN Component Training:

- Initialize generator network taking latent vectors as input

- Configure discriminator with leaky ReLU activation

- Implement adversarial training with alternating discriminator and generator updates

- Apply gradient penalty or Wasserstein distance to stabilize training [25]
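One common way to implement the gradient-penalty option is the WGAN-GP objective. It is shown here as a reference formula, not as the exact objective used in [25]. In it, $D$ is an unbounded critic rather than a probability and $p_g$ is the generator's distribution. The interpolates $\hat{x}$ are sampled uniformly on straight lines between real and generated samples, and $\lambda$ is the penalty weight (10 in the original WGAN-GP work):

```latex
L_D = \mathbb{E}_{\tilde{x} \sim p_g}\big[D(\tilde{x})\big]
    - \mathbb{E}_{x \sim p_{\text{data}}}\big[D(x)\big]
    + \lambda \, \mathbb{E}_{\hat{x} \sim p_{\hat{x}}}\Big[\big(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\big)^2\Big],
\qquad
L_G = -\,\mathbb{E}_{\tilde{x} \sim p_g}\big[D(\tilde{x})\big]
```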

MLP Integration:

- Design MLP with input layer (combined drug-target features)

- Implement 3 hidden layers with ReLU activation

- Configure output layer with sigmoid activation for interaction probability

- Train using Mean Squared Error (MSE) loss [14]
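The MLP described above is small enough to sketch without a framework. The pure-Python forward pass below is illustrative only. The hidden width, the uniform initialization, and all helper names are assumptions, and no training step is shown. A real implementation would more likely use `torch.nn.Sequential` with an optimizer. The sigmoid output and MSE loss follow the protocol [14], although binary cross-entropy is a common alternative for interaction labels.

```python
from __future__ import annotations

import math
import random
from typing import Callable, List, Tuple

Layer = Tuple[List[List[float]], List[float]]  # (weight matrix, bias vector)


def init_layer(n_in: int, n_out: int, rng: random.Random) -> Layer:
    """Uniform(-1/sqrt(n_in), 1/sqrt(n_in)) weights and zero bias for one dense layer."""
    bound = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-bound, bound) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def relu(v: float) -> float:
    return max(0.0, v)


def sigmoid(v: float) -> float:
    # Numerically stable logistic function.
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def dense(x: list[float], layer: Layer, activation: Callable[[float], float]) -> list[float]:
    """activation(W x + b) for a single input vector."""
    weights, bias = layer
    return [activation(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(weights, bias)]


def build_dti_mlp(n_in: int, hidden: int = 64, seed: int = 0) -> list[Layer]:
    """Three ReLU hidden layers followed by one sigmoid output unit."""
    rng = random.Random(seed)
    sizes = [n_in, hidden, hidden, hidden, 1]
    return [init_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]


def predict_interaction(mlp: list[Layer], drug: list[float], target: list[float]) -> float:
    """Interaction probability for one drug-target pair."""
    h = drug + target  # concatenate drug and target feature vectors
    for layer in mlp[:-1]:
        h = dense(h, layer, relu)
    return dense(h, mlp[-1], sigmoid)[0]


def mse(predictions: list[float], labels: list[float]) -> float:
    """Mean squared error, the training loss named in the protocol."""
    return sum((p - y) ** 2 for p, y in zip(predictions, labels)) / len(labels)
```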

Model Validation:

- Evaluate using 10-fold cross-validation

- Assess generated molecules for validity, uniqueness, and novelty

- Quantify performance with accuracy, precision, recall, and F1 score [14]
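The 10-fold cross-validation step can be sketched without any framework. This is a minimal sketch: the shuffling seed is an assumption, and the function name is illustrative. In practice, scikit-learn's `KFold(n_splits=10, shuffle=True, random_state=...)` provides the same behavior, and `StratifiedKFold` would keep the positive/negative interaction ratio balanced across folds.

```python
from __future__ import annotations

import random


def k_fold_indices(
    n_samples: int, k: int = 10, seed: int = 0
) -> list[tuple[list[int], list[int]]]:
    """Shuffle sample indices once and return (train_idx, test_idx) for each fold."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must satisfy 2 <= k <= n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)

    # Fold sizes differ by at most one when n_samples is not divisible by k.
    base, extra = divmod(n_samples, k)
    folds, start = [], 0
    for i in range(k):
        size = base + (1 if i < extra else 0)
        folds.append(indices[start:start + size])
        start += size

    # Each fold serves once as the test set; the remaining folds form the training set.
    return [
        ([j for f, fold in enumerate(folds) if f != i for j in fold], test)
        for i, test in enumerate(folds)
    ]
```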

Reinforcement Learning-Enhanced GANs

RL-MolGAN: Transformer-Based Molecular Generation

The RL-MolGAN framework addresses fundamental challenges in GAN-based molecular generation by integrating reinforcement learning with a transformer-based architecture [25]. This innovative approach features a first-decoder-then-encoder structure that facilitates both de novo and scaffold-based molecular design [25]. The model incorporates Monte Carlo Tree Search (MCTS) and policy gradient methods to optimize generated molecules against multi-property reward functions, which typically include objectives such as binding affinity, synthetic accessibility, and drug-likeness [25]. To further enhance training stability, the RL-MolWGAN extension incorporates Wasserstein distance with gradient penalty and mini-batch discrimination [25]. Experimental validation on QM9 and ZINC datasets has demonstrated the framework's effectiveness in producing high-quality molecular structures with diverse and desirable chemical properties [25].

Diagram 2: RL-MolGAN Architecture. This framework combines transformer-based generation with reinforcement learning for property-guided molecular optimization.

Experimental Protocol: Reinforcement Learning-Driven Molecular Optimization

Objective: Generate novel molecular structures with optimized chemical properties using RL-enhanced GAN frameworks.

Materials and Software Requirements:

- QM9 or ZINC datasets [25]

- Property prediction models (e.g., for binding affinity, solubility)

- RL frameworks (OpenAI Gym, Stable Baselines)

- Transformer architecture implementation

- Chemical validation tools (RDKit)

Methodology:

Base Model Setup:

- Implement transformer generator with first-decoder-then-encoder structure [25]

- Pre-train on QM9/ZINC datasets using maximum likelihood estimation

- Validate initial output for chemical correctness and diversity

Reinforcement Learning Integration:

- Define reward function incorporating multiple objectives:

- Bioactivity (docking scores or QSAR predictions)

- Drug-likeness (QED, Lipinski parameters)

- Synthetic accessibility (SA score)

- Structural novelty [27]

- Implement policy gradient method (e.g., REINFORCE) or proximal policy optimization

- Configure Monte Carlo Tree Search for exploration of promising molecular trajectories [25]

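The policy-gradient step can be illustrated on a deliberately tiny problem. In the sketch below, the "generator" is a single softmax over a small token vocabulary, with every position sampled independently. The reward (the fraction of `N` tokens in the usage example) is a hypothetical stand-in for the multi-objective reward defined above. This is a toy instance of REINFORCE with a running-mean baseline, not the RL-MolGAN training procedure. A real implementation would apply the same update to a Transformer's per-step token logits, and MCTS is not shown.

```python
from __future__ import annotations

import math
import random
from typing import Callable


def softmax(logits: list[float]) -> list[float]:
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce(
    vocab: list[str],
    reward_fn: Callable[[str], float],
    seq_len: int = 8,
    episodes: int = 2000,
    lr: float = 0.1,
    seed: int = 0,
) -> list[float]:
    """Train a toy token policy with REINFORCE and return its final probabilities.

    Every token of a sequence is drawn from the same softmax(logits), so the
    score function for one sampled token k is onehot(k) - probs.
    """
    rng = random.Random(seed)
    logits = [0.0] * len(vocab)
    baseline = 0.0  # running-mean reward, subtracted to reduce gradient variance
    for _ in range(episodes):
        probs = softmax(logits)
        actions = rng.choices(range(len(vocab)), weights=probs, k=seq_len)
        reward = reward_fn("".join(vocab[a] for a in actions))
        advantage = reward - baseline
        baseline += 0.05 * (reward - baseline)
        # Sum of per-token score functions: token counts minus seq_len * probs.
        grad = [-seq_len * p for p in probs]
        for a in actions:
            grad[a] += 1.0
        # Gradient ascent on the expected reward.
        logits = [w + lr * advantage * g for w, g in zip(logits, grad)]
    return softmax(logits)
```

For instance, `reinforce(["C", "N", "O"], lambda s: s.count("N") / len(s))` shifts probability mass toward `N`, because sequences containing more `N` tokens earn above-baseline rewards.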

Adversarial Training Enhancement:

Multi-objective Optimization:

- Employ weighted sum approaches or Pareto optimization for conflicting objectives

- Utilize Bayesian optimization for efficient exploration of chemical space [27]

- Implement reward shaping to guide learning process
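The two multi-objective strategies named above can be contrasted in a short sketch. A weighted sum collapses the objectives into one reward. A Pareto filter instead keeps every candidate that no other candidate beats on all objectives at once. The sketch assumes each score is already normalized so that higher is better. A raw synthetic accessibility score, where lower values mean easier synthesis, would first need to be inverted or rescaled. The objective names and weights are hypothetical.

```python
from __future__ import annotations


def weighted_sum(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Scalarize normalized objective scores (higher is better) into one reward."""
    return sum(w * scores[name] for name, w in weights.items())


def dominates(a: dict[str, float], b: dict[str, float]) -> bool:
    """True if `a` is no worse than `b` on every objective and strictly better on one."""
    return all(a[k] >= b[k] for k in a) and any(a[k] > b[k] for k in a)


def pareto_front(candidates: dict[str, dict[str, float]]) -> list[str]:
    """Names of candidates that no other candidate dominates."""
    return [
        name
        for name, scores in candidates.items()
        if not any(
            dominates(other, scores)
            for other_name, other in candidates.items()
            if other_name != name
        )
    ]
```

The weighted sum requires choosing trade-off weights up front. The Pareto front defers that choice and returns the whole set of non-dominated trade-offs for a chemist to review.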

Validation and Analysis:

- Evaluate generated molecules for validity, uniqueness, novelty

- Assess property distributions against desired objectives

- Conduct docking studies or in vitro testing for top candidates

The integration of VAEs and RL with GAN frameworks represents a paradigm shift in AI-driven molecular design, creating more robust and effective generative models. VAE-GAN hybrids leverage the stability and interpretability of VAEs with the high-quality generation capabilities of GANs, while RL-enhanced GANs enable targeted optimization of specific chemical properties [14] [25] [27]. Emerging trends include the incorporation of diffusion models for enhanced sample quality, federated learning approaches to address data privacy concerns while leveraging multi-institutional datasets, and explainable AI techniques to interpret model decisions and build trust among medicinal chemists [30] [32]. As these technologies continue to mature, hybrid generative models are poised to significantly accelerate the drug discovery pipeline, reducing both costs and development timelines while increasing the success rate of candidate compounds [14] [32]. The expanding toolbox of generative AI—encompassing VAEs, RL, GANs, and their synergistic combinations—offers unprecedented opportunities to navigate the vast chemical space and design novel therapeutic agents with precision and efficiency.

Architectures and Real-World Applications of GANs in Molecular Design

Application Notes

The integration of Generative Adversarial Networks (GANs) into molecular design has significantly accelerated the early stages of drug discovery. These models address the traditional bottlenecks of cost and time by enabling the in silico generation of novel molecular structures. The evolution from 1D/2D molecular representations to sophisticated 3D structure-based design marks a pivotal shift, enhancing the realism and potential efficacy of generated candidates [14] [33].

This document surveys three leading GAN architectures: VGAN-DTI, which excels in 2D drug-target interaction prediction; TopMT-GAN, a 3D topology-driven model for ligand design; and InstGAN, an actor-critic reinforcement learning GAN for multi-property optimization [15]. The notes below give detailed applications, performance, and experimental protocols for VGAN-DTI and TopMT-GAN.

Table 1: Quantitative Performance Overview of Featured Models

| Model Name | Primary Application | Key Performance Metrics | Dataset(s) Used |
|---|---|---|---|
| VGAN-DTI [14] | Drug-Target Interaction (DTI) Prediction | Accuracy: 96%, Precision: 95%, Recall: 94%, F1 Score: 94% [14] | BindingDB [14] |
| TopMT-GAN [33] | 3D Structure-Based Ligand Design | Enrichment up to 46,000-fold compared to high-throughput virtual screening [33] | Evaluated on five diverse protein pockets [33] |
| InstGAN [15] | Multi-property molecular optimization with actor-critic reinforcement learning [15] | Performance comparable to state-of-the-art models [15] | Not covered in these notes |

VGAN-DTI: Enhancing DTI Prediction with a Hybrid Generative Framework

VGAN-DTI is a generative framework designed to improve the prediction of drug-target interactions (DTIs), a critical step in identifying new therapeutic candidates. Its primary application is the accurate classification of interactions and prediction of binding affinities, which helps prioritize molecules for further experimental validation [14].

The model operates by first using a Variational Autoencoder (VAE) to create optimal latent representations of molecular structures. A GAN then leverages these representations to generate diverse and realistic drug-like molecules. Finally, a Multilayer Perceptron (MLP) classifier predicts the interaction between the generated molecules and target proteins [14]. This hybrid approach has demonstrated superior performance in predicting DTIs, as evidenced by its high accuracy and robustness in ablation studies [14].

TopMT-GAN: A 3D Topology-Driven Approach for Ligand Design

TopMT-GAN addresses the challenge of efficiently generating a focused library of diverse and potent candidate molecules with precise 3D poses for a given protein pocket. Its application is in early-stage drug discovery, specifically for hit and lead generation [33].

This model employs a novel two-step strategy. First, one GAN constructs the 3D molecular topology within the protein binding pocket. A second GAN then assigns atom and bond types to this topology. This integrated approach allows for the efficient generation of novel ligands that are both effective and synthetically feasible [33] [34]. A key feature is its subsequent "matching" step, which deconstructs the generated 3D structures into fragments available in commercial libraries (like Enamine REAL space), ensuring the molecules can be physically synthesized [34].

Experimental Protocols

Protocol for VGAN-DTI Model Training and Evaluation

This protocol details the procedure for training and evaluating the VGAN-DTI model for drug-target interaction prediction.

1. Data Preprocessing

- Molecular Representation: Represent drug molecules using fingerprint vectors or SMILES strings [14].

- Protein Representation: Represent target proteins using their amino acid sequences [14].

- Dataset: Use the BindingDB dataset. Split the data into training, validation, and test sets [14].

2. Model Training

- VAE Training:

- Encoder: Feed molecular features into an encoder network with 2-3 fully connected hidden layers (512 units each, ReLU activation) to produce parameters (μ, log σ²) for the latent distribution [14].

- Latent Sampling: Sample a latent vector z using the reparameterization trick, z = μ + σ ⋅ ε, where ε ~ N(0, I) [14].

- Decoder: Pass z through a decoder network (mirroring the encoder architecture) to reconstruct the molecular structure [14].

- Loss Function: Minimize the VAE loss, which is the sum of the reconstruction loss (binary cross-entropy) and the Kullback-Leibler (KL) divergence between the learned latent distribution and a standard normal prior [14].

- GAN Training:

- Generator: Input a random latent vector and pass it through a fully connected network with ReLU activation to generate a molecular representation [14].

- Discriminator: Input a molecular representation (real or generated) and pass it through a fully connected network with leaky ReLU activation to output a probability of it being real [14].

- Adversarial Loss: Train the generator and discriminator adversarially using the minimax objective [14].

- MLP Classifier Training:

- Input: Concatenate the latent representations of the drug (from VAE/GAN) and target protein.

- Architecture: Process the input through multiple hidden layers (e.g., three) with linear transformations and non-linear activations (e.g., ReLU) [14].

- Output Layer: Use a sigmoid activation function to produce a scalar value indicating the probability of interaction [14].

- Loss Function: Train the MLP using the Mean Squared Error (MSE) loss [14].

3. Model Evaluation

- Metrics: Calculate Accuracy, Precision, Recall, and F1 Score on the held-out test set [14].

- Ablation Study: Perform rigorous ablation studies to validate the contribution of each component (VAE, GAN, MLP) to the model's robustness [14].
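The four evaluation metrics follow directly from confusion-matrix counts. The sketch below assumes binary interaction labels (1 = interacts) and a 0.5 decision threshold on the MLP's sigmoid output. Both are assumptions, not details from [14]. scikit-learn's `accuracy_score`, `precision_score`, `recall_score`, and `f1_score` compute the same quantities from predicted labels.

```python
from __future__ import annotations


def classification_metrics(
    y_true: list[int], y_prob: list[float], threshold: float = 0.5
) -> dict[str, float]:
    """Accuracy, precision, recall and F1 for binary interaction labels."""
    y_pred = [1 if p >= threshold else 0 for p in y_prob]
    pairs = list(zip(y_true, y_pred))
    tp = pairs.count((1, 1))  # interacting pairs predicted as interacting
    tn = pairs.count((0, 0))
    fp = pairs.count((0, 1))
    fn = pairs.count((1, 0))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }
```

For an ablation study, the same function can be run on the test-set predictions of each reduced model (for example, without the GAN component) and compared with the full model.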

Protocol for TopMT-GAN Driven Ligand Design

This protocol outlines the workflow for using the TopMT-GAN model to generate and validate novel ligands for a specific protein target.

1. Target Preparation

- Input: Obtain the 3D structure of the target protein's binding pocket [33].

2. De Novo Ligand Generation with TopMT-GAN

- Topology Generation: The first GAN module constructs 3D molecular topologies within the defined protein pocket [33] [34].

- Atom and Bond Assignment: The second GAN module assigns specific atom and bond types to the generated topologies [33].

- Output: Generate an initial diverse pool of novel ligand structures (e.g., 50,000 molecules) with 3D poses [34].

3. Synthetic Feasibility and Library Expansion (TopMT-Matching)

- Fragment Deconstruction: Deconstruct the generated 3D ligand structures into molecular fragments [34].

- Fragment Matching: Search for the identified fragments within a database of in-stock building blocks (e.g., Enamine's 259K fragment library) to ensure synthetic feasibility [34].

- Library Expansion: Recombine the matched fragments to create a larger library of potential hits (e.g., 200,000 molecules) with well-defined synthetic pathways [34].
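The matching and expansion steps can be illustrated with plain set operations. This is a hypothetical simplification of TopMT-Matching, not its actual algorithm. Fragments are represented as opaque strings, and the fragmentation itself is assumed to have been done upstream (for example, with an RDKit decomposition such as BRICS). The building-block library is a toy set, and the recombination rule (a Cartesian product per fragment position) is an illustrative assumption.

```python
from __future__ import annotations

from itertools import product


def match_to_building_blocks(
    ligand_fragments: dict[str, list[str]], library: set[str]
) -> list[str]:
    """Names of ligands whose fragments are all available as building blocks."""
    return [
        name
        for name, frags in ligand_fragments.items()
        if frags and all(f in library for f in frags)
    ]


def expand_library(
    matched_fragments: list[list[str]], max_size: int = 1000
) -> list[tuple[str, ...]]:
    """Toy recombination of matched fragments.

    Ligands with the same number of fragments share one pool per position;
    new combinations are the Cartesian product of those pools, capped at
    max_size. Real recombination must also respect attachment chemistry.
    """
    pools_by_length: dict[int, list[set[str]]] = {}
    for frags in matched_fragments:
        pools = pools_by_length.setdefault(len(frags), [set() for _ in frags])
        for pool, frag in zip(pools, frags):
            pool.add(frag)

    combos: list[tuple[str, ...]] = []
    for pools in pools_by_length.values():
        for combo in product(*(sorted(pool) for pool in pools)):
            combos.append(combo)
            if len(combos) >= max_size:
                return combos
    return combos
```

With two matched two-fragment ligands, the expansion yields four combinations: the two originals plus two cross-combinations. This mirrors, on a tiny scale, how matched fragments can grow a generated pool into a larger, purchasable library.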

4. Hierarchical Virtual Screening

- Initial Docking (Glide SP): Dock the expanded library. Filter based on docking scores, drug-likeness, ADME properties, and structural diversity [34].

- Refined Docking (Glide XP): Perform a second round of docking on the most promising ligands from the initial screen to further validate binding affinities and interaction profiles [34].
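The two-stage funnel amounts to running cheap scoring on everything and expensive scoring only on a shortlist. In the sketch below, `fast_score` and `precise_score` are hypothetical wrappers around docking runs (standing in for Glide SP and XP, whose docking scores are more negative for more favorable predicted binding). `passes_filters` is a hypothetical drug-likeness and ADME check, and `keep_fraction` and `top_n` are assumed parameters. Diversity filtering and visual inspection are omitted.

```python
from __future__ import annotations

from typing import Callable


def hierarchical_screen(
    library: list[str],
    fast_score: Callable[[str], float],
    precise_score: Callable[[str], float],
    passes_filters: Callable[[str], bool],
    keep_fraction: float = 0.1,
    top_n: int = 10,
) -> list[str]:
    """Two-stage docking funnel; lower (more negative) scores are better."""
    # Stage 1: cheap scoring and property filters over the whole library.
    stage1 = sorted((m for m in library if passes_filters(m)), key=fast_score)
    shortlist = stage1[: max(1, int(len(stage1) * keep_fraction))]
    # Stage 2: expensive scoring only on the shortlist, best first.
    return sorted(shortlist, key=precise_score)[:top_n]
```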

5. Visual Inspection

- Expert Review: Manually review the binding poses and interactions of the top-ranked molecules within the binding pocket to ensure favorable geometries [34].

6. Experimental Validation

- MD Simulation: Use Molecular Dynamics (MD) simulations to validate the stability and strength of ligand-target interactions over time [34].

- Wet Lab Testing: Synthesize the top candidate molecules and validate their activity and binding through biochemical or cellular assays [34].

Model Architecture and Workflow Diagrams

VGAN-DTI Architectural Workflow

VGAN-DTI Architecture

TopMT-GAN Ligand Design Pipeline

TopMT-GAN Design Pipeline

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item | Function in Research | Application Context |
|---|---|---|
| BindingDB | A public database of measured binding affinities and interactions between drugs and target proteins [14]. | Used as a labeled dataset for training and evaluating DTI prediction models like VGAN-DTI [14]. |
| SMILES Strings | A line notation system for representing molecular structures in a string format that computers can process [14]. | Serves as a standard input for representing drug molecules in many AI models, including VGAN-DTI [14]. |
| Enamine REAL Space | A commercial collection of billions of readily synthesizable chemical compounds and building blocks [34]. | Used by TopMT-GAN's matching module to ensure generated molecules are synthetically feasible [34]. |
| Molecular Fingerprints | A way of representing a molecule as a bit string, encoding the presence or absence of specific substructures [14]. | Used as an alternative input representation for drug molecules in machine learning models [14]. |
| Glide (SP/XP) | A software for performing molecular docking simulations, predicting how a small molecule binds to a protein target [34]. | Used in the hierarchical virtual screening step of the TopMT-GAN workflow to filter and validate generated ligands [34]. |
| Molecular Dynamics (MD) Simulation | A computational method for simulating the physical movements of atoms and molecules over time [34]. | Used to validate the stability and strength of interactions between a generated ligand and its target protein [34]. |

Generative Artificial Intelligence (GenAI) is revolutionizing the field of drug discovery by providing powerful tools for the de novo design of novel molecular structures. This paradigm shift moves beyond traditional virtual screening of existing compound libraries to the on-demand creation of new chemical entities with tailored properties. Among various deep learning architectures, Generative Adversarial Networks (GANs) have emerged as a particularly promising framework for this inverse design problem. GANs facilitate the exploration of the vast chemical space (estimated at ~10⁶⁰ molecules) by learning the underlying data distribution of known compounds and generating novel, synthetically feasible candidates with optimized pharmacological profiles [17] [35]. This application note details the core methodologies, experimental protocols, and practical implementation strategies for employing GANs in de novo molecular generation within drug development research.

Core GAN Architectures for Molecular Generation

The standard GAN framework for molecular design consists of two competing neural networks: a Generator (G) that creates synthetic molecular structures from random noise vectors, and a Discriminator (D) that distinguishes these generated structures from real molecules in the training dataset [36] [14]. This adversarial training process pushes the generator to produce increasingly realistic and valid molecules. However, several specialized GAN architectures have been developed to address the unique challenges of molecular generation.

Table 1: Key GAN Architectures in De Novo Molecular Design

| Architecture | Key Mechanism | Advantages | Reported Performance |
|---|---|---|---|
| Hybrid LM-GAN [17] | Combines a masked Language Model (LM) with a GAN generator. | Enhances efficiency in optimizing properties; superior performance with smaller population sizes. | Outperforms standalone masked LMs; generates novel samples with structural diversity. |
| InstGAN [15] | Uses actor-critic Reinforcement Learning (RL) with instant and global rewards. | Alleviates mode collapse; enables multi-property optimization; faster training than MCTS-based models. | Achieves comparable performance to SOTA models; efficient multi-property optimization. |
| RRCGAN [37] | Integrates a Regressional & Conditional GAN with a reinforcement center and transfer learning. | Generates molecules with targeted, continuous property values; can extrapolate beyond training data range. | 75% of generated molecules have <20% relative error in targeted HOMO-LUMO gap; iteratively increases target property values. |
| VGAN-DTI [14] | Combines a VAE, GAN, and MLP for drug-target interaction (DTI) prediction. | Precisely encodes molecular features; generates diverse candidates; enhances predictive accuracy. | Achieves 96% accuracy, 95% precision, 94% recall, and 94% F1 score in DTI prediction. |
| GAN with Adaptive Training [21] | Incorporates concepts from Genetic Algorithms (GAs) by updating training data with generated molecules. | Promotes incremental exploration; limits mode collapse; drastically increases novel molecule production. | Over 10x improvement in novel molecule production compared to standard GANs. |

A significant challenge in molecular generation is the discrete nature of molecular representations, such as Simplified Molecular-Input Line-Entry System (SMILES) strings. To handle this, Reinforcement Learning (RL) algorithms, particularly Monte Carlo Tree Search (MCTS) and actor-critic methods, are often integrated into the GAN framework to guide the sequential generation of valid SMILES strings [38] [15].

Experimental Protocols & Workflows

Protocol 1: Implementing a GAN with Adaptive Training Data

This protocol, adapted from [21], uses an evolutionary strategy to combat mode collapse and enhance exploration.

- Initialization: Train a standard GAN (e.g., with LSTM-based generator and discriminator) on an initial dataset of known drug-like molecules (e.g., from ZINC or ChEMBL).

- Training Interval: Train the GAN for a predefined number of epochs (e.g., 100).

- Sample Generation & Validation: Use the trained generator to produce a large set of novel molecules. Validate the chemical correctness of the generated SMILES strings using a tool like RDKit.

- Training Data Update (Replacement): Replace a portion of the original training data with the novel, valid molecules generated in the previous step. Two primary strategies exist:

  - Random Replacement: Randomly select generated molecules for inclusion.

  - Guided Replacement: Select generated molecules based on a fitness function (e.g., the quantitative estimate of drug-likeness, QED).

- Recombination (Optional): Introduce further diversity by applying crossover operations between generated molecules and the current training data, simulating genetic recombination.

- Iteration: Repeat the training, sampling and validation, replacement, and (optional) recombination steps until a stopping criterion is met (e.g., a fixed number of iterations, or convergence of the generated property distributions).
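The control flow of this protocol can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the implementation from [21]: the trained GAN is replaced by a hypothetical `toy_generator` that mutates one character of a training string, `is_valid` is a placeholder for RDKit parsing (it only checks balanced parentheses), and `fitness` is a made-up stand-in for QED. Only the sequence of training, sampling, validation, replacement, and optional recombination mirrors the steps above.

```python
import random
from typing import List

ALPHABET = "CNOc()="  # hypothetical token set used by the toy generator


def toy_generator(training_data: List[str], n: int, rng: random.Random) -> List[str]:
    """Hypothetical stand-in for a trained GAN generator: copies a training
    string and mutates one character."""
    samples = []
    for _ in range(n):
        chars = list(rng.choice(training_data))
        chars[rng.randrange(len(chars))] = rng.choice(ALPHABET)
        samples.append("".join(chars))
    return samples


def is_valid(smiles: str) -> bool:
    """Placeholder validity check (balanced parentheses only); a real pipeline
    would parse the string with RDKit instead."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0


def fitness(smiles: str) -> float:
    """Hypothetical fitness standing in for QED: fraction of N/O characters."""
    return sum(ch in "NO" for ch in smiles) / len(smiles)


def crossover(a: str, b: str, rng: random.Random) -> str:
    """One-point crossover between two strings (simulated recombination)."""
    cut = rng.randrange(1, min(len(a), len(b)))
    return a[:cut] + b[cut:]


def adaptive_training(data: List[str], iterations: int = 5, n_samples: int = 50,
                      n_replace: int = 10, guided: bool = True,
                      recombine: bool = True, seed: int = 0) -> List[str]:
    rng = random.Random(seed)
    data = list(data)
    for _ in range(iterations):
        # Training interval: a real GAN would be retrained on `data` here.
        samples = toy_generator(data, n_samples, rng)
        # Sample generation & validation: keep valid strings not already in the data.
        novel = list(dict.fromkeys(s for s in samples if is_valid(s) and s not in data))
        if not novel:
            continue
        # Training data update: guided (by fitness) or random replacement.
        if guided:
            chosen = sorted(novel, key=fitness, reverse=True)[:n_replace]
        else:
            chosen = rng.sample(novel, min(n_replace, len(novel)))
        for mol in chosen:
            data[rng.randrange(len(data))] = mol
        # Recombination (optional): cross a generated string with a training string.
        if recombine:
            child = crossover(rng.choice(chosen), rng.choice(data), rng)
            if is_valid(child):
                data[rng.randrange(len(data))] = child
    return data
```

Calling `adaptive_training(seed_smiles, guided=False)` gives the random-replacement variant. The crossover helper assumes every string has at least two characters; with real molecules, recombination is usually done on molecular graphs or SELFIES, because naive splicing of SMILES strings often yields invalid structures.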

[Figure: Workflow of Protocol 1 (GAN with adaptive training data)]

Protocol 2: Multi-Property Optimization with Dynamic Reliability (DyRAMO)

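This protocol, based on [38], guards against reward hacking (the optimizer exploiting prediction errors of property models on molecules far from their training data) by ensuring that generated molecules remain within the reliable prediction domain of each property model.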

- Problem Formulation: Define the multiple target properties for optimization (e.g., inhibitory activity, metabolic stability, solubility).

- Model Setup: Train separate predictive models for each property and define their Applicability Domains (ADs) using a metric like the Maximum Tanimoto Similarity (MTS) to the training data.

- Bayesian Optimization (BO) Loop: Iterate the following steps to find the optimal reliability levels (ρ) for each property's AD:

  - Step 1 - Set Reliability Levels: BO proposes a set of reliability levels (ρ₁, ρ₂, ... ρₙ) for the n properties.

  - Step 2 - Molecular Generation & Evaluation: Use a generative model (e.g., RNN-based ChemTSv2) to design molecules that fall within the overlapping ADs defined by the ρ-values. Evaluate their multi-property reward.

  - Step 3 - Compute DSS Score: Calculate the Degree of Simultaneous Satisfaction (DSS) score (Eq. 1), which balances the desirability of the reliability levels and the top reward values of the generated molecules.

  - Step 4 - Update BO: The DSS score is fed back to the BO algorithm to refine its proposal for the next set of ρ-values.

- Final Generation: After the BO loop converges, use the optimal ρ-values to run a final, extensive molecular generation campaign, producing reliable, multi-property optimized candidates.
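The two quantitative pieces of this loop, the MTS-based applicability-domain check and the DSS score, can be sketched as follows. Assumptions: fingerprints are Python sets of on-bit indices (in practice they would come from, e.g., RDKit Morgan fingerprints); `rho_desirability` with bounds 0.3 and 0.9 is a hypothetical scaler; and the DSS form used here (geometric mean of the reliability desirabilities times the mean of the top-k rewards) illustrates the balance described in Step 3 rather than reproducing Eq. 1 from [38]. The BO step itself is omitted.

```python
import math
from typing import Sequence, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def max_tanimoto(fp: Set[int], training_fps: Sequence[Set[int]]) -> float:
    """Maximum Tanimoto similarity (MTS) of a molecule to one model's training set."""
    return max((tanimoto(fp, t) for t in training_fps), default=0.0)


def in_all_domains(fp: Set[int], training_sets: Sequence[Sequence[Set[int]]],
                   rhos: Sequence[float]) -> bool:
    """True if the molecule lies inside every property model's AD, i.e. its MTS
    to each training set is at least that property's reliability level rho_i."""
    return all(max_tanimoto(fp, ts) >= rho for ts, rho in zip(training_sets, rhos))


def rho_desirability(rho: float, low: float = 0.3, high: float = 0.9) -> float:
    """Hypothetical desirability of a reliability level: 0 at or below `low`,
    1 at or above `high`, linear in between."""
    return min(max((rho - low) / (high - low), 0.0), 1.0)


def dss(rhos: Sequence[float], rewards: Sequence[float], top_k: int = 3) -> float:
    """Assumed DSS form: geometric mean of the reliability desirabilities
    multiplied by the mean reward of the top-k generated molecules."""
    desirabilities = [rho_desirability(r) for r in rhos]
    if not rewards or min(desirabilities) == 0.0:
        return 0.0
    geo_mean = math.exp(sum(math.log(d) for d in desirabilities) / len(desirabilities))
    top = sorted(rewards, reverse=True)[:top_k]
    return geo_mean * sum(top) / len(top)
```

In a full loop, a BO library would propose `rhos`, the generator's output would be filtered with `in_all_domains`, and the value returned by `dss` would be fed back as the BO objective. Raising a ρ-value tightens that property's AD, which raises its desirability but typically lowers the achievable reward; the product captures that trade-off.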

[Figure: Logical relationship of the DyRAMO framework]

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of GAN-based molecular generation requires a suite of computational tools and resources.

Table 2: Key Research Reagents and Resources

| Tool/Resource | Type | Function in the Workflow |
|---|---|---|
| SMILES/SELFIES | Molecular Representation | Text-based string representations of molecular structure. SELFIES is more robust: every SELFIES string decodes to a valid molecular graph, guaranteeing 100% validity of generated strings [35]. |
| RDKit | Cheminformatics Toolkit | Open-source library for validating generated SMILES, calculating molecular descriptors (e.g., QED, LogP), and handling chemical data. |
| ZINC/ChEMBL/PubChemQC | Chemical Databases | Public repositories used for training generative models: ZINC (commercially available compounds), ChEMBL (bioactive molecules), and PubChemQC (quantum-chemical properties computed for PubChem molecules) [21] [37]. |
| TensorFlow/PyTorch | Deep Learning Framework | Open-source libraries for building and training the generator and discriminator neural networks. |
| ChemTSv2 | Generative Software | An example platform for de novo molecular generation using RNN and MCTS, which can be integrated into frameworks like DyRAMO [38]. |
| Applicability Domain (AD) | Validation Metric | Defines the chemical space region where a predictive model is reliable, crucial for avoiding reward hacking [38]. |

GANs represent a powerful and flexible framework for the de novo generation of novel drug candidates. By leveraging architectures such as hybrid LM-GANs, InstGAN, and RRCGAN, and by adhering to rigorous experimental protocols that address critical challenges like mode collapse and reward hacking, researchers can efficiently explore the chemical space. The integration of evolutionary strategies, multi-objective optimization with reliability assurance, and iterative transfer learning enables the targeted design of synthetically feasible molecules with desired properties, significantly accelerating the early stages of drug discovery.

Scaffold-Based Design and Multi-Property Optimization with Reinforcement Learning

The integration of artificial intelligence (AI) into drug discovery is reshaping traditional approaches to molecular design, with the potential to streamline the drug development process [39]. Within this landscape, generative adversarial networks (GANs) have emerged as transformative tools for generating novel molecular structures with desirable pharmacological characteristics [14]. This application note focuses specifically on the convergence of scaffold-based design principles with reinforcement learning (RL) techniques for multi-property optimization within GAN-driven molecular generation frameworks.

Scaffold-based design is a strategic approach in medicinal chemistry in which core molecular structures (scaffolds) are modified or replaced to improve drug properties while maintaining biological activity [40]. This approach is particularly valuable for addressing toxicity and patentability issues and for optimizing multiple physicochemical and biological properties simultaneously [41]. When combined with RL, a machine learning paradigm in which an agent learns optimal strategies by interacting with an environment, scaffold-based design shifts from a manual, intuition-driven process to an automated, data-driven workflow capable of navigating complex chemical spaces [42] [39].

The fusion of these methodologies within GAN architectures creates a powerful framework for inverse molecular design, where compounds are generated to meet specific target profiles rather than discovered through serendipity or exhaustive screening [30]. This document provides detailed application notes and protocols for implementing scaffold-based multi-property optimization with reinforcement learning, specifically contextualized within GAN frameworks for drug development research.

Theoretical Foundation

Scaffold-Based Design in Drug Discovery

Scaffold-based design operates on the principle of structural conservation while introducing strategic modifications to optimize molecular properties. The approach encompasses several key techniques:

- Scaffold Hopping: This involves replacing a core structural motif with a non-identical alternative while maintaining a similar spatial arrangement of key functional groups [40]. The process enables researchers to circumvent problematic structural elements responsible for toxicity or patent conflicts while preserving pharmacophoric features essential for target binding.

- Core Replacement: In structure-based design, specific molecular portions can be systematically replaced using vector-based approaches that maintain critical decoration patterns (side chains) while introducing novel scaffold architectures [40].

- Fuzzy Similarity: Advanced algorithms introduce controlled variability through "wild card" parameters that retain core functionality while generating structurally distinct motifs, effectively escaping the "gravitational field" of similarity associated with a reference molecule [40].

The biological rationale for scaffold-based approaches stems from the recognition that proteins often accommodate diverse molecular frameworks that present similar pharmacophoric patterns in three-dimensional space. Successful scaffold hopping demonstrates that bioactivity can be maintained across structurally distinct chemotypes through conservation of key interaction features [40].

Reinforcement Learning Fundamentals

Reinforcement learning formulates molecular design as a sequential decision-making process where an agent (generative model) interacts with an environment (scoring functions) to maximize cumulative rewards [42] [39]. The fundamental components include:

- States (S): Represent molecular structures, typically as SMILES strings or molecular graphs [42].

- Actions (A): Defined as structural modifications, such as adding atoms, bonds, or fragments [41] [42].

- Reward (r): A numerical value reflecting the desirability of a generated molecule, typically computed as a function of multiple predicted properties [42].

The objective is to find parameters Θ of a policy network G that maximize the expected reward of the completed molecule, J(Θ) = E[r(s_T)|s_0,Θ], where s_0 is the initial state and s_T the final state after T generation steps [42]. This formulation enables guided exploration of chemical space toward regions with enhanced multi-property profiles.
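The objective J(Θ) is commonly maximized with a policy-gradient estimator. The sketch below applies REINFORCE, ∇J(Θ) ≈ (r(s_T) - b) ∇_Θ log p_Θ(s_T) with a running-mean baseline b, to a deliberately tiny problem: the policy is a single softmax over a hypothetical three-token vocabulary that ignores the prefix, every "molecule" has five tokens, and the made-up reward r(s_T) is the fraction of `N` tokens. It illustrates the update rule only; real generators condition each token on the prefix with an RNN or Transformer, and none of the cited models is reproduced here.

```python
import math
import random
from typing import List

VOCAB = ["C", "N", "O"]  # hypothetical token vocabulary
SEQ_LEN = 5              # every toy "molecule" has exactly T = 5 tokens


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reward(tokens: List[str]) -> float:
    """Hypothetical terminal reward r(s_T): fraction of 'N' tokens."""
    return tokens.count("N") / len(tokens)


def reinforce(steps: int = 2000, lr: float = 0.1, seed: int = 0) -> List[float]:
    rng = random.Random(seed)
    theta = [0.0] * len(VOCAB)  # policy parameters Θ: one logit per token
    baseline = 0.0              # running mean reward, reduces gradient variance
    for _ in range(steps):
        probs = softmax(theta)
        actions = rng.choices(range(len(VOCAB)), weights=probs, k=SEQ_LEN)
        r = reward([VOCAB[a] for a in actions])
        advantage = r - baseline
        baseline += 0.05 * (r - baseline)
        # For a softmax policy: d log p(s_T) / d theta_k = sum_t (1[a_t == k] - p_k)
        for k in range(len(VOCAB)):
            grad_k = sum((1.0 if a == k else 0.0) - probs[k] for a in actions)
            theta[k] += lr * advantage * grad_k
    return softmax(theta)
```

After training, `reinforce()` returns a token distribution concentrated on `N`. Subtracting the baseline b does not bias the gradient estimate but reduces its variance, which matters when most rewards are similar.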

GAN Architecture for Molecular Generation

Generative adversarial networks for molecular design typically consist of two neural networks: a generator that creates molecular structures and a discriminator that distinguishes between real and generated compounds [14]. When enhanced with reinforcement learning, the generator functions as a policy network that learns to produce molecules with desirable properties through reward signals [43] [14].

Advanced implementations such as InstGAN incorporate actor-critic RL with instant and global rewards to generate molecules at the token level with multi-property optimization [43]. These frameworks maximize information entropy to alleviate mode collapse, a common failure of GAN training in which the generator produces only a narrow range of outputs [43].
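The effect of an entropy term can be shown with a generic entropy-regularized objective. This is a minimal sketch, not InstGAN's actual objective (which combines instant and global rewards in an actor-critic setup [43]); the weight `beta` and the token rewards are hypothetical. When two tokens are equally rewarding, a distribution that collapses onto one of them scores lower than one that keeps both, which is the pressure against mode collapse.

```python
import math
from typing import Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy H(pi), in nats, of a next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def regularized_objective(probs: Sequence[float], token_rewards: Sequence[float],
                          beta: float = 0.1) -> float:
    """Expected per-token reward plus an entropy bonus beta * H(pi)."""
    expected = sum(p * r for p, r in zip(probs, token_rewards))
    return expected + beta * entropy(probs)


rewards = [1.0, 1.0, 0.0]                                    # two equally good tokens
collapsed = regularized_objective([1.0, 0.0, 0.0], rewards)  # all mass on one token
spread = regularized_objective([0.5, 0.5, 0.0], rewards)     # mass shared by both
```

Here `collapsed` evaluates to 1.0 and `spread` to about 1.069 (1.0 + 0.1·ln 2): both have the same expected reward, and the entropy bonus alone separates them.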

Quantitative Multi-Property Optimization Frameworks

Desirability Functions for MPO

Multi-parameter optimization (MPO) requires balancing various, often competing, molecular properties. Desirability functions provide a mathematical framework for combining multiple property values into a single composite score [41]. The Derringer function, a commonly used approach, transforms individual properties into desirability scores between 0 (undesirable) and 1 (fully desirable):

Table 1: Property Ranges and Desirability Functions for MPO

| Property | Abbreviation | Target Range | Desirability Function | Objective |
|---|---|---|---|---|
| Quantitative Estimate of Drug-likeness | QED | 0-1 | Y = x | Maximize |
| Synthetic Accessibility | SA | 1-10 | Y = (10 - x)/9 | Minimize |
| Partition Coefficient | cLogP | -0.7 to 5 | Y = (5 - x)/5.7 | Optimize range |
| Topological Polar Surface Area | TPSA | 20-130 Å² | Y = (130 - x)/110 | Optimize range |
| Molecular Weight | MW | 150-500 g/mol | Y = (500 - x)/350 | Optimize range |
| Number of Rotatable Bonds | nRotat | 0-9 | Y = (9 - x)/9 | Minimize |

The overall desirability score is the arithmetic mean of the weighted desirability scores, (∑_{i=1}^n d_i Y_i)/n, where Y_i is the desirability of property i and d_i the weight assigned to it [41]. This composite score serves as the reward signal in reinforcement learning frameworks.
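The composite score can be computed as below. This sketch assumes a linear ramp (upper - x)/(upper - lower), clipped to [0, 1], for every property except QED (used as-is), matching the form of the TPSA, MW, and nRotat rows of Table 1; the default weights d_i = 1 and the example property values are hypothetical. In practice, the inputs would be computed with a toolkit such as RDKit.

```python
from typing import Dict, Optional

# (lower, upper) bounds taken from the target ranges in Table 1.
RANGES = {
    "SA": (1.0, 10.0),
    "cLogP": (-0.7, 5.0),
    "TPSA": (20.0, 130.0),
    "MW": (150.0, 500.0),
    "nRotat": (0.0, 9.0),
}


def desirability(name: str, x: float) -> float:
    """Map one property value to [0, 1]; QED is used as-is (Y = x)."""
    if name == "QED":
        return min(max(x, 0.0), 1.0)
    lower, upper = RANGES[name]
    # Assumed linear ramp: 1 at the lower bound, 0 at the upper bound, clipped.
    return min(max((upper - x) / (upper - lower), 0.0), 1.0)


def composite_score(props: Dict[str, float],
                    weights: Optional[Dict[str, float]] = None) -> float:
    """(sum_i d_i * Y_i) / n, with per-property weights d_i defaulting to 1."""
    weights = weights or {}
    terms = [weights.get(k, 1.0) * desirability(k, v) for k, v in props.items()]
    return sum(terms) / len(terms)


example = {"QED": 0.7, "SA": 2.8, "cLogP": 2.15, "TPSA": 75.0, "MW": 325.0, "nRotat": 3}
score = composite_score(example)
```

For the example profile, the six desirabilities are 0.7, 0.8, 0.5, 0.5, 0.5, and about 0.667, giving a composite score of about 0.611. Clipping keeps out-of-range values from producing negative or greater-than-one desirabilities.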

Scaffold-Focused Optimization Algorithms

Scaffold-focused Markov molecular Sampling (ScaMARS) represents an advanced implementation of scaffold-based multi-property optimization [41]. This graph-based Markov chain Monte Carlo framework generates molecules with optimal properties while maintaining core scaffold features:

- Architecture: ScaMARS utilizes a message passing neural network (MPNN) that predicts the probabilities of three possible actions (addition, removal, or modification of molecular fragments) to increase the overall desirability score [41].

- Fragment Library: The algorithm employs a curated list of 1,000 commonly occurring fragments extracted from the ChEMBL database to ensure synthetic feasibility [41].

- Exploration-Exploitation Balance: Simulated annealing encourages compound diversity early in the process (exploration) before converging to globally optimal solutions (exploitation) [41].
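The data flow of a message passing network that scores the three actions can be sketched as follows. Everything here is an assumption for illustration: node features are hypothetical one-hot atom types, the weights are random and untrained, aggregation is a plain sum with a tanh update, and the readout is a sum over nodes. The architecture actually used by ScaMARS [41] is not reproduced; this only shows how atom-level messages become a probability over add, remove, and modify.

```python
import math
import random
from typing import Dict, List, Sequence, Tuple

Matrix = List[List[float]]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def matvec(w: Matrix, x: Sequence[float]) -> List[float]:
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def mpnn_action_probs(features: List[List[float]], edges: List[Tuple[int, int]],
                      n_rounds: int = 2, seed: int = 0) -> Dict[str, float]:
    """Untrained toy MPNN: sum neighbor messages, update node states with tanh,
    sum-pool to a graph vector, and map it to softmax probabilities over the
    three actions (add / remove / modify)."""
    rng = random.Random(seed)
    dim = len(features[0])
    w_self, w_msg = random_matrix(dim, dim, rng), random_matrix(dim, dim, rng)
    w_out = random_matrix(3, dim, rng)
    neighbors: Dict[int, List[int]] = {v: [] for v in range(len(features))}
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    h = [list(map(float, f)) for f in features]
    for _ in range(n_rounds):
        # Messages are computed from the current states before any node is updated.
        messages = [[sum(h[u][k] for u in neighbors[v]) for k in range(dim)]
                    for v in range(len(h))]
        # Update: h_v <- tanh(W_self h_v + W_msg m_v)
        h = [[math.tanh(a + b) for a, b in zip(matvec(w_self, h[v]), matvec(w_msg, messages[v]))]
             for v in range(len(h))]
    graph_vec = [sum(hv[k] for hv in h) for k in range(dim)]  # sum readout
    logits = matvec(w_out, graph_vec)
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return {a: e / total for a, e in zip(("add", "remove", "modify"), exps)}
```

For ethanol's heavy-atom graph (C-C-O), `mpnn_action_probs([[1, 0, 0], [1, 0, 0], [0, 0, 1]], [(0, 1), (1, 2)])` returns three probabilities that sum to 1; they carry no chemical meaning until the weights are trained.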

The self-training nature of ScaMARS eliminates the need for externally annotated data or pretraining, making it particularly suitable for optimization tasks where limited structure-activity relationship data is available [41].
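The exploration-to-exploitation schedule can be illustrated with a generic Metropolis sampler under simulated annealing. In this sketch a "molecule" is a hypothetical list of fragment labels attached to a fixed scaffold, each proposal adds, removes, or replaces one fragment (the three action types, drawn uniformly rather than from an MPNN), and `score` is a made-up desirability. It is not the ScaMARS implementation; it shows how a decaying temperature lets the chain accept worse moves early and behave greedily late.

```python
import math
import random
from typing import List

FRAGMENTS = ["F", "Cl", "OH", "NH2", "CH3", "OMe"]  # hypothetical fragment library


def score(decorations: List[str]) -> int:
    """Hypothetical desirability: +1 per polar group, -1 per fragment beyond three."""
    polar = sum(f in ("OH", "NH2") for f in decorations)
    return polar - max(0, len(decorations) - 3)


def propose(decorations: List[str], rng: random.Random) -> List[str]:
    """Random add / remove / replace move; returns a new list."""
    new = list(decorations)
    move = rng.choice(["add", "remove", "replace"])
    if move == "add" or not new:
        new.append(rng.choice(FRAGMENTS))
    elif move == "remove":
        new.pop(rng.randrange(len(new)))
    else:
        new[rng.randrange(len(new))] = rng.choice(FRAGMENTS)
    return new


def anneal(steps: int = 2000, t0: float = 2.0, t_min: float = 0.01,
           seed: int = 0) -> List[str]:
    rng = random.Random(seed)
    current: List[str] = []  # start from the bare scaffold
    best = current
    for step in range(steps):
        temp = max(t_min, t0 * (1 - step / steps))  # linear cooling schedule
        cand = propose(current, rng)
        delta = score(cand) - score(current)
        # Metropolis rule: accept improvements; accept worse moves with prob exp(delta / T).
        if delta >= 0 or rng.random() < math.exp(delta / temp):
            current = cand
            if score(current) > score(best):
                best = current
    return best
```

With this toy score the best reachable value is 3 (at least three fragments, all polar). At high temperature the chain freely accepts score-lowering moves, which plays the role of exploration; as the temperature approaches `t_min`, only non-worsening moves survive.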

Addressing Sparse Rewards in RL

A significant challenge in applying RL to molecular design is the sparse reward problem, where the majority of generated molecules receive zero or minimal rewards due to failure to meet desired property thresholds [44]. Several technical innovations address this limitation:

- Transfer Learning: Pre-training generative models on diverse chemical databases (e.g., ChEMBL, PubChem) provides initial competence in generating valid molecular structures before property optimization [44] [45].