From Code to Catalysis: Validating AI Predictions in Enzyme Engineering

Abstract

This article explores the critical process of experimentally validating AI-predicted enzyme functions, a pivotal step for applications in drug development and biotechnology. We first examine the foundational principles of AI-driven enzyme annotation, from traditional machine learning to advanced deep learning models. The discussion then progresses to methodological frameworks that integrate AI with robotic automation for high-throughput experimentation, illustrated with real-world case studies. We address common challenges and optimization strategies for improving prediction accuracy and experimental efficiency. Finally, we present rigorous validation protocols and comparative analyses of AI tools, providing researchers and scientists with a comprehensive guide to bridging the gap between computational prediction and experimental confirmation in enzyme engineering.

The AI Revolution in Enzyme Annotation: From Sequence to Function

Enzymes are the fundamental biocatalysts that drive biochemical processes, and accurately determining their function is critical for advances in biology, medicine, and biotechnology. The Enzyme Commission (EC) number system provides a hierarchical framework for this purpose, categorizing enzymes from broad reaction types (L1) to specific substrate interactions (L4). However, the exponential growth in genomic data has created an immense annotation gap: as of May 2024, only 0.64% of the 43.48 million enzyme sequences in UniProtKB had been manually reviewed and annotated in Swiss-Prot [1] [2]. This scarcity has accelerated the development of machine learning (ML) tools that predict enzyme function computationally, offering the promise of rapid, high-throughput annotation. Yet these powerful computational approaches create a critical dependency: without rigorous experimental validation, erroneous predictions can propagate through databases, misdirecting research and compromising scientific conclusions. This guide compares the performance of leading AI prediction tools against experimental benchmarks to show why wet-lab validation remains indispensable for conclusive enzyme characterization, and it provides researchers with a framework for integrating computational and experimental approaches.

State-of-the-Art AI Models for Enzyme Function Prediction

Comparative Performance of Leading Computational Tools

Recent years have seen significant advances in machine learning approaches for enzyme function prediction, with models evolving from sequence-based homology to geometric graph learning on predicted structures. The table below summarizes the key performance metrics of leading tools as reported in the cited studies.

Table 1: Performance Comparison of Enzyme Function Prediction Tools

| Model | Approach | Key Features | Reported Accuracy/Performance | Limitations |
|---|---|---|---|---|
| EZSpecificity [3] | Cross-attention SE(3)-equivariant GNN | Uses 3D enzyme structure & substrate information | 91.7% accuracy on halogenase experimental validation | Requires structural information |
| SOLVE [1] [2] | Ensemble ML (RF, LightGBM, DT) | Tokenized 6-mer subsequences from primary sequence | 0.97 precision, 0.95 recall for enzyme vs. non-enzyme | Performance decreases at EC L4 level |
| GraphEC [4] | Geometric graph learning | ESMFold-predicted structures & active site prediction | AUC 0.9583 for active site prediction; outperforms CLEAN and ProteInfer on NEW-392 test set | Dependent on structure prediction quality |
| CLEAN [4] [5] | Contrastive learning | Enzyme similarity network based on sequence embeddings | High accuracy for EC number identification | Struggles with novel functions unseen in training |
| ProteInfer [1] [4] | Dilated convolutional network | Direct mapping from sequence to function | Broad EC coverage | Lower accuracy on Price-149 experimental dataset |

These tools represent different methodological philosophies: EZSpecificity and GraphEC leverage structural information, while SOLVE and CLEAN operate primarily on sequence data. GraphEC employs a multi-stage pipeline that first predicts enzyme active sites (GraphEC-AS), reaching an AUC of 0.9583 on the TS124 test set, and then uses these predictions to guide EC number prediction [4]. SOLVE uses an ensemble approach with optimized 6-mer tokenization; it distinguishes enzymes from non-enzymes very well, but its accuracy decreases at more specific EC classification levels [1] [2].
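GraphEC-AS's AUC of 0.9583 is a ranking metric. Assuming it is computed over per-residue active-site scores (the usual setup for site predictors, not confirmed here), it can be read as the probability that a true active-site residue receives a higher score than a non-active-site residue. The minimal sketch below computes AUC in that pairwise (Mann-Whitney) form; the scores and labels are hypothetical.

```python
def roc_auc(scores, labels):
    """ROC AUC as P(score of a random positive > score of a random negative).
    labels: 1 = experimentally confirmed active-site residue, 0 = other residue.
    Ties count as half a win (Mann-Whitney formulation)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative residue")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical per-residue active-site probabilities and experimental labels
scores = [0.9, 0.8, 0.3, 0.1]
labels = [1, 0, 1, 0]
print(roc_auc(scores, labels))  # 0.75
```

The pairwise loop is quadratic in the number of residues, which is fine for a single protein; for large benchmarks a rank-based computation gives the same value faster.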

Inherent Limitations and "Hallucination" Risks

Despite sophisticated architectures, ML models face fundamental challenges. A critical assessment reveals that current methods "mostly fail to make novel predictions" and can make "basic logic errors" that human experts avoid by leveraging contextual knowledge [6]. These limitations stem from several factors:

- Training Data Constraints: Models learn from existing annotations in databases, inheriting and potentially amplifying historical errors that have propagated through homology-based transfers [6].

- Paralog Misannotation: Models struggle with functionally divergent paralogs, where high sequence similarity masks functional differences determined by subtle active site variations [6].

- Extrapolation Inability: ML tools excel at interpolating within known function space but cannot reliably predict truly novel enzymatic activities beyond their training data [6].

- Context Blindness: Computational predictions typically lack biological context regarding tissue specificity, metabolic conditions, or organism-specific adaptations [6].

The Critical Assessment of protein Function Annotation (CAFA) revealed that approximately 40% of computational enzyme annotations are erroneous, highlighting the substantial risk of relying solely on in silico predictions [1] [2].

Experimental Validation: Case Studies and Performance Benchmarks

Quantitative Performance Assessment Against Experimental Data

Rigorous experimental validation provides the essential ground truth for evaluating computational predictions. The table below summarizes experimental performance assessments from recent studies.

Table 2: Experimental Validation Results of AI Predictions

| Study Context | Validation Method | Model Performance | Control/Comparison Performance |
|---|---|---|---|
| Halogenase Specificity [3] | 8 halogenases tested against 78 substrates | EZSpecificity: 91.7% accuracy identifying single reactive substrate | State-of-the-art model: 58.3% accuracy |
| Price-149 Dataset [4] | Experimental validation of 149 sequences | GraphEC outperformed CLEAN, ProteInfer, DeepEC, ECPred, GrAPFI, and ECPICK | Multiple tools showed significantly reduced accuracy on experimentally-validated set |
| SOLVE Validation [1] [2] | Independent test sets with <50% sequence similarity | Precision: 0.97, Recall: 0.95 for enzyme vs. non-enzyme | Performance decreased at substrate (L4) level prediction |

The halogenase case study provides particularly compelling evidence: when tested against 78 potential substrates, EZSpecificity correctly identified the single reactive substrate with 91.7% accuracy, dramatically outperforming the previous state-of-the-art model at 58.3% [3]. This 33.4 percentage point improvement demonstrates how advanced models incorporating 3D structural information can approach experimental reliability for specific applications, while also highlighting that even the best computational tools have error rates (>8%) that necessitate experimental confirmation for definitive characterization.
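The halogenase benchmark scores a model by whether its top-ranked substrate is the one that actually reacts. A minimal sketch of that top-1 accuracy, assuming predictions are stored as a score per candidate substrate for each enzyme (the enzyme and substrate names are hypothetical placeholders):

```python
def top1_accuracy(predicted_scores, reactive_substrate):
    """Fraction of enzymes whose highest-scoring candidate is the
    experimentally confirmed reactive substrate."""
    hits = 0
    for enzyme, scores in predicted_scores.items():
        top_choice = max(scores, key=scores.get)  # substrate with the highest predicted score
        if top_choice == reactive_substrate[enzyme]:
            hits += 1
    return hits / len(predicted_scores)

# Hypothetical model outputs for two enzymes over three candidate substrates
predicted = {
    "halogenase_1": {"substrate_a": 0.91, "substrate_b": 0.20, "substrate_c": 0.05},
    "halogenase_2": {"substrate_a": 0.40, "substrate_b": 0.35, "substrate_c": 0.10},
}
confirmed = {"halogenase_1": "substrate_a", "halogenase_2": "substrate_b"}
print(top1_accuracy(predicted, confirmed))  # 0.5
```

Because the benchmark involves a small number of enzymes, each additional miss shifts accuracy by several percentage points, which is worth keeping in mind when comparing the 91.7% and 58.3% figures.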

The Experimental Validation Pipeline

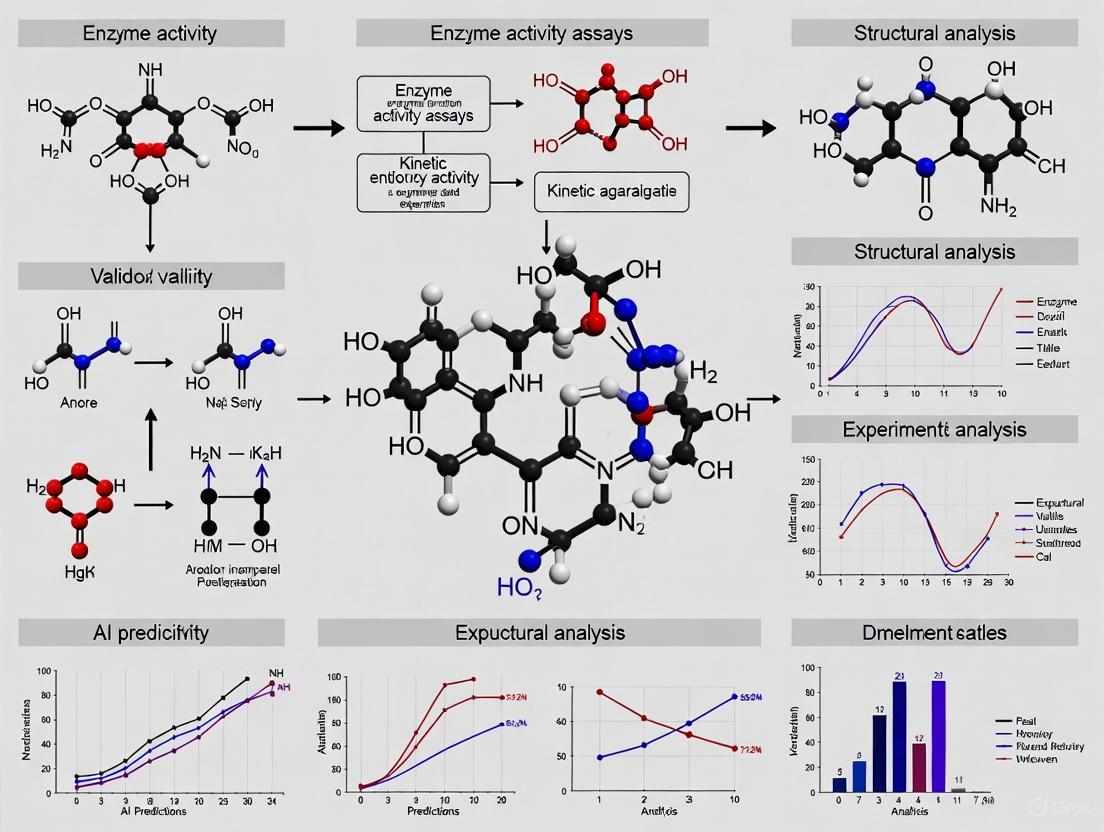

Experimental validation of AI-predicted enzyme functions follows a systematic workflow from computational screening to functional confirmation. The diagram below illustrates this multi-stage process.

Experimental Validation Workflow for AI-Predicted Enzyme Functions

This validation pipeline represents the essential pathway from computational prediction to experimental verification. Each stage requires specific reagents, controls, and methodological considerations to ensure conclusive results.

Essential Experimental Protocols for Validation

Core Methodologies for Functional Characterization

Validating computational predictions requires rigorous experimental protocols tailored to the specific enzyme class and predicted function. Below are detailed methodologies for key validation experiments.

Enzyme Activity Assays

Objective: Quantitatively measure catalytic activity against predicted substrates. Protocol:

- Protein Preparation: Clone target gene into expression vector, express in suitable host (E. coli, yeast, insect cells), purify using affinity chromatography (His-tag, GST-tag), and verify purity via SDS-PAGE.

- Reaction Conditions: Prepare reaction buffer optimized for predicted enzyme class (varying pH, ionic strength, cofactors based on in silico predictions).

- Substrate Incubation: Combine purified enzyme with predicted substrates at varying concentrations (typically 0.1-10× Km if known).

- Product Detection: Employ appropriate detection method (spectrophotometry, HPLC, MS, radioactivity) to measure product formation over time.

- Kinetic Analysis: Determine Michaelis-Menten parameters (Km, Vmax, kcat) from initial velocity measurements at multiple substrate concentrations.

Critical Controls: Heat-inactivated enzyme, no-enzyme controls, no-substrate controls, known positive control substrates.
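The kinetic-analysis step can be sketched as a least-squares fit of the Michaelis-Menten equation v = Vmax·[S]/(Km + [S]) to initial velocities. This dependency-free sketch is an illustration, not a replacement for dedicated fitting software: for a fixed Km the best Vmax has a closed form, so only log(Km) is searched, by golden-section search, assuming the error surface is unimodal within the search bounds (true for noise-free data; `km_lo` and `km_hi` are hypothetical defaults). kcat then follows as Vmax divided by the total enzyme concentration.

```python
import math

def fit_michaelis_menten(s, v, km_lo=1e-3, km_hi=1e3, iters=200):
    """Least-squares fit of v = Vmax * S / (Km + S); returns (Km, Vmax).

    For fixed Km the model is linear in Vmax, so Vmax = sum(x*v) / sum(x*x)
    with x = S / (Km + S). Km is found by golden-section search on log(Km),
    assuming a single minimum between km_lo and km_hi.
    """
    def vmax_and_sse(km):
        x = [si / (km + si) for si in s]
        vmax = sum(xi * vi for xi, vi in zip(x, v)) / sum(xi * xi for xi in x)
        sse = sum((vi - vmax * xi) ** 2 for xi, vi in zip(x, v))
        return vmax, sse

    a, b = math.log(km_lo), math.log(km_hi)
    g = (math.sqrt(5) - 1) / 2  # inverse golden ratio
    c, d = b - g * (b - a), a + g * (b - a)
    for _ in range(iters):
        if vmax_and_sse(math.exp(c))[1] < vmax_and_sse(math.exp(d))[1]:
            b, d = d, c          # minimum lies in [a, d]
            c = b - g * (b - a)
        else:
            a, c = c, d          # minimum lies in [c, b]
            d = a + g * (b - a)
    km = math.exp((a + b) / 2)
    return km, vmax_and_sse(km)[0]

def kcat_from_vmax(vmax, enzyme_conc):
    """kcat = Vmax / [E]total; e.g. uM/s divided by uM gives s^-1."""
    return vmax / enzyme_conc

# Synthetic, noise-free data: Km = 4 uM, Vmax = 10 uM/s, [E] = 0.05 uM (hypothetical values)
substrate = [0.5, 1, 2, 5, 10, 20, 50]
rates = [10 * si / (4 + si) for si in substrate]
km, vmax = fit_michaelis_menten(substrate, rates)
kcat = kcat_from_vmax(vmax, 0.05)
print(round(km, 3), round(vmax, 3), round(kcat, 1))
```

With real, noisy data the same fit should be accompanied by parameter uncertainties and a residual plot; the substrate range should bracket Km so both parameters are well determined.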

Substrate Specificity Profiling

Objective: Systematically evaluate enzyme activity across multiple potential substrates to test specificity predictions. Protocol:

- Substrate Library: Curate diverse substrate panel including AI-predicted substrates plus positive/negative controls.

- High-Throughput Screening: Implement microtiter plate-based assays with standardized reaction conditions across all substrates.

- Multiparameter Analysis: Simultaneously monitor multiple reaction parameters (product formation, substrate depletion, cofactor conversion).

- Dose-Response: For active substrates, determine relative activities across concentration gradients.

- Specificity Index: Calculate catalytic efficiency (kcat/Km) for each active substrate to establish specificity hierarchy.

This comprehensive profiling directly tests computational predictions of substrate scope, including models like EZSpecificity which explicitly predict substrate specificity patterns [3].
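The specificity-index step reduces to computing kcat/Km per substrate and ranking. A minimal sketch, assuming kinetic parameters have already been fitted for each active substrate (substrate names, values, and the "model top pick" are hypothetical). The ratio of kcat/Km values between two substrates is the standard measure of how strongly an enzyme discriminates between them when both compete for the active site.

```python
def specificity_profile(kinetics):
    """kinetics: {substrate: (kcat in s^-1, Km in uM)}.
    Returns [(substrate, kcat/Km, efficiency relative to the best substrate)], best first."""
    efficiency = {name: kcat / km for name, (kcat, km) in kinetics.items()}
    best = max(efficiency.values())
    ranked = sorted(efficiency.items(), key=lambda item: item[1], reverse=True)
    return [(name, eff, eff / best) for name, eff in ranked]

measured = {"substrate_a": (10.0, 2.0), "substrate_b": (5.0, 0.5), "substrate_c": (1.0, 10.0)}
profile = specificity_profile(measured)
for name, eff, rel in profile:
    print(f"{name}: kcat/Km = {eff:.2f} uM^-1 s^-1 (relative {rel:.2f})")

model_top_pick = "substrate_a"  # hypothetical AI prediction of the preferred substrate
print("prediction confirmed:", profile[0][0] == model_top_pick)
```

In this toy case the substrate with the highest kcat is not the most efficient one, which is why ranking by kcat/Km rather than by raw turnover is the fairer test of a specificity prediction.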

Active Site Verification

Objective: Experimentally confirm predicted active site residues critical for catalysis. Protocol:

- Site-Directed Mutagenesis: Design mutants for predicted critical residues (catalytic triad, substrate binding, metal coordination).

- Mutant Characterization: Express and purify mutant enzymes using identical conditions to wild-type.

- Activity Comparison: Measure catalytic activity of mutants versus wild-type enzyme.

- Structural Integrity Verification: Confirm proper folding via circular dichroism, thermal shift assays, or size-exclusion chromatography.

- Binding Studies: For inactive mutants, assess substrate binding ability via isothermal titration calorimetry or surface plasmon resonance.

This approach directly tests structural predictions from tools like GraphEC, which incorporates active site prediction into its EC number annotation pipeline [4].
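The activity-comparison and structural-integrity steps can be combined into a simple decision rule and then scored against predicted active-site residues. Everything in this sketch is hypothetical: the 10% residual-activity cutoff, the 3 °C melting-temperature tolerance (a thermal-shift proxy for correct folding), and the residue labels are illustrative choices, not values from the cited studies. Recall is only meaningful over residues that were actually mutated.

```python
def classify_mutants(wt_activity, wt_tm, mutants, activity_cutoff=0.10, tm_tolerance=3.0):
    """mutants: {residue: (activity, melting temperature in C)}.
    essential: activity lost while Tm stays near wild type (likely a catalytic role).
    ambiguous: activity lost but Tm shifted a lot (possible misfolding)."""
    essential, ambiguous = set(), set()
    for residue, (activity, tm) in mutants.items():
        if activity / wt_activity < activity_cutoff:
            if abs(tm - wt_tm) <= tm_tolerance:
                essential.add(residue)
            else:
                ambiguous.add(residue)
    return essential, ambiguous

def precision_recall(predicted, confirmed):
    """Precision and recall of predicted active-site residues against confirmed ones."""
    true_pos = len(predicted & confirmed)
    precision = true_pos / len(predicted) if predicted else 0.0
    recall = true_pos / len(confirmed) if confirmed else 0.0
    return precision, recall

# Hypothetical data: wild-type activity 100 (arbitrary units), wild-type Tm 55 C
mutants = {"res_45": (2.0, 54.5), "res_90": (5.0, 46.0), "res_130": (1.0, 55.0), "res_128": (80.0, 55.2)}
essential, ambiguous = classify_mutants(100.0, 55.0, mutants)
predicted_sites = {"res_45", "res_130", "res_128"}  # e.g. residues flagged by an active-site predictor
print(sorted(essential), sorted(ambiguous))
print(precision_recall(predicted_sites, essential))
```

Residues in the ambiguous set are the ones that call for the binding studies described above before drawing conclusions.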

Research Reagent Solutions for Enzyme Validation

Table 3: Essential Research Reagents for Experimental Validation

| Reagent Category | Specific Examples | Function in Validation | Considerations |
|---|---|---|---|
| Expression Systems | E. coli BL21(DE3), insect cell systems, yeast expression systems | Recombinant protein production | Match to enzyme origin (prokaryotic/eukaryotic); post-translational modifications |
| Purification Tools | His-tag systems, GST-tag, affinity resins, size exclusion columns | Obtain pure, functional enzyme | Balance between purity and activity retention; tag removal may be necessary |
| Activity Assay Kits | NAD(P)H-coupled assays, fluorogenic substrates, chromogenic substrates | Detect and quantify enzyme activity | Match detection method to predicted reaction; sensitivity requirements |
| Analytical Instruments | HPLC-MS, GC-MS, spectrophotometers, plate readers | Quantitative reaction monitoring | Resolution, sensitivity, and throughput needs |
| Substrate Libraries | Natural product collections, synthetic analogs, predicted substrate panels | Test specificity predictions | Chemical diversity, solubility, commercial availability |

The Computational-Experimental Partnership Framework

The most effective enzyme function discovery employs an iterative feedback loop between computational prediction and experimental validation. The diagram below illustrates this integrated framework.

Integrated Computational-Experimental Partnership Framework

This framework creates a virtuous cycle where experimental results continuously improve computational models, which in turn generate more accurate predictions for experimental testing. For example, the halogenase validation study [3] not only confirmed EZSpecificity's accuracy but provided curated enzyme-substrate interaction data that can refine future model training. Similarly, the GraphEC approach [4] demonstrates how active site validation can be explicitly incorporated into the computational prediction pipeline.

The dramatic advancement of AI tools for enzyme function prediction has created unprecedented opportunities for discovery, with models like EZSpecificity, SOLVE, and GraphEC achieving impressive accuracy on specific validation tasks. However, experimental validation remains non-negotiable for definitive functional assignment, serving three critical roles: (1) as the ultimate arbiter of prediction accuracy, (2) as a safeguard against model "hallucinations" and database error propagation, and (3) as a source of curated data for model improvement. The most productive path forward recognizes the complementary strengths of both approaches: computational methods for rapid hypothesis generation and prioritization, and experimental validation for conclusive functional characterization. By maintaining this rigorous standard while fostering greater integration between computational and experimental approaches, the scientific community can accelerate the discovery of novel enzymatic functions while ensuring the reliability of the biological knowledge base that underpins research in biochemistry, drug development, and synthetic biology.

The journey of AI tools in bioinformatics, from the foundational BLAST algorithm to sophisticated transformer models, represents a paradigm shift in how researchers approach biological data. This evolution is marked by significant leaps in prediction accuracy, functional understanding, and the ability to model complex biological relationships, fundamentally changing the process of validating AI-predicted enzyme functions.

Table 1: Evolution of Key Bioinformatics Tools

| Tool / Model | Primary Methodology | Key Application | Performance Highlights | Key Limitations |
|---|---|---|---|---|
| BLAST [7] | Local sequence alignment via heuristic search | Homology-based sequence comparison | N/A (widely used for decades) | Limited accuracy with low sequence homology; functional misannotations common [2] |
| SOLVE [2] | Ensemble machine learning (Random Forest, LightGBM, Decision Tree) | Enzyme vs. non-enzyme classification & EC number prediction | Accurately distinguishes enzymes from non-enzymes; predicts full EC number hierarchy | Relies on fixed k-mer tokenization rather than learned sequence representations |
| EZSpecificity [3] [8] | Cross-attention SE(3)-equivariant Graph Neural Network | Enzyme-substrate specificity prediction | 91.7% accuracy in identifying single reactive substrate; outperforms previous model (58.3% accuracy) [3] | Performance may vary across diverse enzyme classes not in training data |
| Transformer Models [9] | Attention-based neural network architecture | Blast (explosion) loading prediction, a non-biological benchmark cited only to illustrate the architecture | 3.5% relative error, outperforming MLP (6.0% error) [9] | High computational cost and data requirements [9] [10]; does not measure enzyme function prediction |

From Sequence Alignment to Functional Prediction with BLAST

For decades, the Basic Local Alignment Search Tool (BLAST) has been an indispensable cornerstone of bioinformatics. Its heuristic approach to local sequence alignment allows researchers to quickly find regions of similarity between biological sequences, often providing the first clue about a new protein's function by linking it to characterized homologs [7].

However, BLAST's reliability is intrinsically tied to sequence similarity. For novel enzymes with low homology to characterized proteins, transferring annotations from BLAST hits can be misleading, for example when the top hits are homologs that perform different functions [2]. This fundamental limitation highlighted the need for computational tools that move beyond pure sequence alignment and predict function from more complex patterns.
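BLAST's speed comes from heuristics (short word seeds extended into alignments and scored with substitution matrices such as BLOSUM62). The quantity it approximates is a local alignment score, which the exact Smith-Waterman dynamic program computes. The sketch below uses toy scoring (match +3, mismatch -3, linear gap -2) chosen for illustration, not BLAST's scoring scheme; a high score indicates sequence similarity, not shared function.

```python
def smith_waterman(a, b, match=3, mismatch=-3, gap=-2):
    """Best local alignment score (Smith-Waterman) with a linear gap penalty.
    Keeps only the previous DP row, so memory is O(len(b))."""
    prev = [0] * (len(b) + 1)
    best = 0
    for i in range(1, len(a) + 1):
        cur = [0] * (len(b) + 1)
        for j in range(1, len(b) + 1):
            diag = prev[j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
            # Local alignment: scores are floored at 0 so an alignment can start anywhere
            cur[j] = max(0, diag, prev[j] + gap, cur[j - 1] + gap)
            best = max(best, cur[j])
        prev = cur
    return best

print(smith_waterman("TGTTACGG", "GGTTGACTA"))  # 13 (best local alignment GTT-AC / GTTGAC)
```

Exact dynamic programming costs time proportional to the product of the sequence lengths, which is why database-scale search relies on BLAST-style heuristics instead.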

The Rise of Machine Learning and Ensemble Methods

Machine learning models addressed BLAST's limitations by learning complex patterns from protein sequences and structures. The SOLVE framework exemplifies this advance, using an ensemble of Random Forest, LightGBM, and Decision Tree models to distinguish enzymes from non-enzymes and to predict detailed Enzyme Commission (EC) numbers [2].

Experimental Protocol: Enzyme Function Prediction with SOLVE

- Feature Extraction: Convert raw protein primary sequences into numerical feature vectors using 6-mer tokenization, which optimally captures local sequence patterns [2].

- Model Training: Train the ensemble classifier using a soft-voting optimized learning strategy on curated enzyme function datasets [2].

- Class Imbalance Mitigation: Apply a focal loss penalty during training to handle uneven class distribution in enzyme functional classes [2].

- Validation: Evaluate model performance through stratified k-fold cross-validation and independent testing, measuring accuracy at each level of the EC hierarchy [2].
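The feature-extraction and soft-voting steps can be illustrated with a minimal, dependency-free sketch. It assumes overlapping 6-mer counts as features and one probability vector per base model; the probabilities and weights are hypothetical, and SOLVE's actual weight-optimization procedure is not reproduced. In practice, scikit-learn's `VotingClassifier(voting="soft", weights=...)` performs the same weighted probability averaging over fitted estimators.

```python
from collections import Counter

def kmer_counts(sequence, k=6):
    """Count overlapping k-mers; a sequence of length L yields L - k + 1 of them."""
    return Counter(sequence[i:i + k] for i in range(len(sequence) - k + 1))

def soft_vote(model_probabilities, weights):
    """Weighted average of per-model class probabilities.
    Returns (index of the winning class, averaged probability vector)."""
    total = sum(weights)
    n_classes = len(model_probabilities[0])
    averaged = [
        sum(w * probs[c] for w, probs in zip(weights, model_probabilities)) / total
        for c in range(n_classes)
    ]
    winner = max(range(n_classes), key=lambda c: averaged[c])
    return winner, averaged

features = kmer_counts("MKTAYIAKQR")  # toy sequence: 10 residues -> 5 overlapping 6-mers
# Hypothetical [non-enzyme, enzyme] probabilities from random forest, LightGBM, decision tree
label, probs = soft_vote([[0.3, 0.7], [0.4, 0.6], [0.9, 0.1]], weights=[0.4, 0.4, 0.2])
print(sum(features.values()), label, [round(p, 2) for p in probs])
```

Soft voting lets a confident model outweigh two lukewarm ones, unlike a hard majority vote; the weights control how much each base model is trusted.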

The Transformer Revolution in Biological Prediction

Transformer architectures have dramatically advanced AI capabilities in bioinformatics by using self-attention mechanisms to weigh the importance of different input elements, such as amino acids in a protein sequence or atoms in a molecular structure. This enables modeling of complex long-range dependencies that simpler models often miss [10].
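The self-attention mechanism can be written as softmax(QKᵀ/√d)·V. The minimal sketch below omits the learned projection matrices, multiple heads, and positional information that real transformers use, and its toy vectors are hypothetical. Passing the same vectors as queries, keys, and values gives self-attention; drawing queries from one entity (for example, substrate atoms) and keys/values from another (enzyme residues) gives the general kind of cross-attention that models such as EZSpecificity build on.

```python
import math

def softmax(scores):
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention, softmax(Q K^T / sqrt(d)) V, on plain lists.
    Returns (outputs, attention weights); each weight row sums to 1."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        w = softmax([sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys])
        weights.append(w)
        outputs.append([sum(wj * v[c] for wj, v in zip(w, values)) for c in range(len(values[0]))])
    return outputs, weights

enzyme_residues = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy residue embeddings
substrate_atoms = [[2.0, 0.0]]                           # toy substrate-atom embedding

# Self-attention: residues attend to each other
self_out, self_w = attention(enzyme_residues, enzyme_residues, enzyme_residues)
# Cross-attention: substrate atoms query the enzyme residues
cross_out, cross_w = attention(substrate_atoms, enzyme_residues, enzyme_residues)
print([round(w, 3) for w in cross_w[0]])
```

Every query attends to every key, which is what lets attention capture long-range dependencies, and also why its cost grows quadratically with sequence length.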

Case Study: Experimental Validation of EZSpecificity

The EZSpecificity model demonstrates the power of transformer-inspired architectures for biological prediction. Researchers developed a cross-attention-empowered SE(3)-equivariant graph neural network to predict enzyme-substrate specificity by learning from both sequence and structural data [3].

Experimental Workflow for Validation

Key Experimental Steps [3] [8]:

- Data Curation & Docking Simulations: Complement existing experimental data with millions of docking calculations to create a comprehensive database of enzyme-substrate interactions at atomic resolution.

- Model Architecture: Implement a cross-attention graph neural network that respects rotational and translational symmetry (SE(3)-equivariant) for accurate molecular modeling.

- Benchmarking: Test EZSpecificity against the leading model (ESP) across four scenarios mimicking real-world applications.

- Experimental Validation: Validate top predictions using eight halogenase enzymes and 78 substrates, measuring actual catalytic activity to confirm model predictions.

The experimental validation confirmed EZSpecificity's superior performance, with 91.7% accuracy in identifying reactive substrates compared with 58.3% for the previous state-of-the-art model [8]. This improvement shows how attention-based models can substantially reduce false leads in enzyme discovery.

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Research Reagent | Function in Experimental Validation |
|---|---|
| Halogenase Enzymes [8] | Model system for testing AI predictions; increasingly used to create bioactive molecules |
| Substrate Libraries [8] | Diverse sets of potential enzyme targets (78 substrates used in EZSpecificity validation) |
| Docking Simulation Software [8] | Generates atomic-level interaction data between enzymes and substrates for training models |
| Curated Enzyme-Substrate Databases [3] | Gold-standard datasets for training and benchmarking specificity prediction models |

Performance Comparison: Quantifying the AI Evolution

Table 2: Experimental Performance Comparison of AI Tools

| Tool / Model | Benchmark / Validation Method | Key Performance Metric | Result | Context & Significance |
|---|---|---|---|---|
| EZSpecificity [3] | Experimental testing with 8 halogenases & 78 substrates | Accuracy in identifying single reactive substrate | 91.7% | High accuracy (roughly 8% of calls still wrong); supports prioritizing experimental follow-up |
| Previous Model (ESP) [3] | Same experimental setup with halogenases | Accuracy in identifying single reactive substrate | 58.3% | Baseline for comparison; EZSpecificity improves on it by 33.4 percentage points |
| Transformer [9] | BLEVE (boiling liquid expanding vapor explosion) pressure prediction benchmark | Relative error | 3.5% | Non-biological benchmark; shows a transformer outperforming an MLP (6.0% error) but does not measure enzyme function prediction |
| SOLVE [2] | Stratified 5-fold cross-validation | Enzyme vs. non-enzyme classification accuracy | High (exact % not specified) | Effectively addresses critical limitation of previous tools |

The experimental data show that attention-based models such as EZSpecificity can achieve substantially higher accuracy than previous generations of tools. In the halogenase validation, accuracy rose from 58.3% to 91.7% [3]; this is a large gain within a specific validation scenario, not a general move from chance-level to near-perfect prediction.

The implications for drug development and biochemical research are substantial. With AI tools that predict enzyme-substrate relationships accurately, researchers can prioritize the most promising candidates for experimental validation, which can shorten development timelines, reduce costs, and raise the success rate of enzyme engineering and drug discovery projects [8].

Enzymes are fundamental biocatalysts that drive cellular metabolism, and accurately elucidating their functions is critical for advancing biochemical research, therapeutic drug design, and sustainable biomanufacturing [2] [11]. Traditional experimental methods for determining enzyme function are time-consuming, resource-intensive, and ill-suited to the omics era, in which sequencing technologies are rapidly expanding the volume of uncharacterized enzyme sequences [2]. As of May 2024, UniProtKB contained over 43 million enzyme sequences, yet only a tiny fraction (0.64%) had been manually annotated in Swiss-Prot [2]. This massive annotation gap has accelerated efforts to develop computational tools for high-throughput enzyme function prediction.

Artificial intelligence (AI) has emerged as a transformative solution, with machine learning (ML) and deep learning (DL) approaches at the forefront of this revolution [11] [5]. These data-driven methods learn patterns from known enzyme sequences and their associated functions, typically represented by Enzyme Commission (EC) numbers—a hierarchical classification system that organizes enzymes based on the reactions they catalyze across four levels (L1-L4) from broad reaction classes to specific substrate interactions [2]. This guide provides an objective comparison of core ML and DL approaches for enzyme function prediction, focusing on their methodological foundations, performance characteristics, and, crucially, their validation through experimental results.

Machine Learning Approaches: Engineered Features and Interpretable Models

Traditional machine learning approaches for enzyme function prediction rely on extracting informative features from protein sequences, which then serve as input to various classification algorithms. These methods typically require significant domain expertise for feature engineering but often yield more interpretable models.

Feature Extraction and Algorithm Selection

Feature extraction is a critical first step in traditional ML pipelines. Common approaches include:

- k-mer Composition: This method breaks protein sequences into overlapping subsequences of length k. In the SOLVE study, 6-mers gave the best performance for enzyme classification, capturing local sequence patterns that distinguish functional classes while balancing computational efficiency [2].

- Physicochemical Properties: These include manually selected descriptors such as amino acid composition, polarity, charge, and hydrophobicity [11].

- Evolutionary Information: Features derived from sequence homology and position-specific scoring matrices [11].
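A quick count shows why the choice of k matters for efficiency. Assuming the 20 standard amino acids, the number of distinct possible k-mers grows as 20^k, while any single sequence of length L contains only L - k + 1 overlapping k-mers:

$$
\#\{\text{possible 6-mers}\} = 20^{6} = 64{,}000{,}000, \qquad \#\{\text{6-mers in a sequence of length } L\} = L - 6 + 1 .
$$

For a 300-residue enzyme that is 295 observed 6-mers out of 64 million possible ones, so 6-mer feature vectors are extremely sparse and are best stored as sparse counts.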

These engineered features are then processed by various ML algorithms, including:

- Random Forests (RF): Ensemble method using multiple decision trees [2] [11].

- Support Vector Machines (SVM): Effective for high-dimensional data [2] [11].

- k-Nearest Neighbors (kNN): Instance-based learning that leverages similarity measures [2] [11].

- Light Gradient Boosting Machine (LightGBM): High-performance gradient boosting framework [2].

The SOLVE Framework: An Optimized Ensemble Approach

The SOLVE (Soft-Voting Optimized Learning for Versatile Enzymes) framework exemplifies the modern ML approach to enzyme function prediction. SOLVE employs an ensemble method integrating RF, LightGBM, and Decision Tree models with an optimized weighted strategy [2]. This framework addresses several critical challenges in enzyme annotation:

- Comprehensive Prediction: Distinguishes enzymes from non-enzymes and predicts EC numbers from L1 to L4 for both mono-functional and multi-functional enzymes [2].

- Class Imbalance Mitigation: Incorporates a focal loss penalty to address the uneven distribution of examples across enzyme classes [2].

- Interpretability: Utilizes Shapley analyses to identify functional motifs at catalytic and allosteric sites, providing biological insights beyond mere prediction [2].

SOLVE operates directly on tokenized subsequences from primary protein sequences, eliminating the need for complex feature extraction while maintaining high accuracy across all evaluation metrics on independent datasets [2].
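The focal loss mentioned above has a standard form, originally proposed for class-imbalanced object detection: FL(p_t) = -α(1 - p_t)^γ log(p_t), where p_t is the predicted probability of the true class. The sketch implements only that per-example formula; how SOLVE incorporates the penalty into its tree-based ensemble is not described here, so the parameter values (γ = 2, α = 1) are illustrative assumptions.

```python
import math

def focal_loss(p_true, gamma=2.0, alpha=1.0):
    """Per-example focal loss: -alpha * (1 - p_t)**gamma * log(p_t).
    p_true is the predicted probability of the correct class (0 < p_true <= 1).
    With gamma = 0 and alpha = 1 this reduces to ordinary cross-entropy."""
    return -alpha * (1.0 - p_true) ** gamma * math.log(p_true)

# Down-weighting factor relative to cross-entropy for an easy and a hard example
for p in (0.9, 0.1):
    ratio = focal_loss(p) / focal_loss(p, gamma=0.0)
    print(f"p_t = {p}: focal/cross-entropy = {ratio:.2f}")
```

With γ = 2 a well-classified example keeps about 1% of its cross-entropy while a badly classified one keeps about 81%, so training effort shifts toward hard cases, which in imbalanced data are often the rare classes.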

Figure 1: Machine Learning Workflow of the SOLVE Framework. The process begins with primary sequence tokenization, proceeds through multiple ML classifiers, and culminates in an optimized ensemble prediction with multiple output types.

Deep Learning Approaches: End-to-End Learning from Raw Sequences

Deep learning represents a paradigm shift in enzyme function prediction, eliminating the need for manual feature engineering by learning relevant representations directly from raw sequence data through multiple layers of neural network architectures.

Architectural Innovations in Deep Learning

Modern DL approaches for enzyme function prediction employ several sophisticated neural network architectures:

- Convolutional Neural Networks (CNNs): Effective at capturing local sequence patterns and motifs through convolutional filters that scan protein sequences [11].

- Recurrent Neural Networks (RNNs): Process sequential data and can model dependencies across different regions of protein sequences [11].

- Transformer Models: Utilize self-attention mechanisms to weigh the importance of different sequence regions, enabling modeling of long-range dependencies in protein sequences [11] [12].

- Graph Neural Networks (GNNs): Represent enzymes as graphs where nodes correspond to amino acids and edges represent spatial or functional relationships, particularly effective for incorporating structural information [3] [11].
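Representing an enzyme as a graph can be sketched in two steps: connect residues whose representative atoms (for example, C-alpha atoms) lie within a distance cutoff, then update each residue's features from its neighbours. The 8 Å cutoff and the plain mean aggregation are illustrative assumptions; real GNN layers apply learned weights and nonlinearities, and the coordinates are toy values.

```python
import math

def contact_graph(coords, cutoff=8.0):
    """Adjacency lists linking residues whose representative atoms are within `cutoff` angstroms."""
    n = len(coords)
    return {
        i: [j for j in range(n) if j != i and math.dist(coords[i], coords[j]) <= cutoff]
        for i in range(n)
    }

def message_passing_step(features, graph):
    """One round of mean aggregation over each residue and its neighbours (no learned weights)."""
    updated = []
    for i, own in enumerate(features):
        group = [own] + [features[j] for j in graph[i]]
        updated.append([sum(values) / len(group) for values in zip(*group)])
    return updated

ca_coords = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (20.0, 0.0, 0.0)]  # toy C-alpha positions
graph = contact_graph(ca_coords)
print(graph)                                              # {0: [1], 1: [0], 2: []}
print(message_passing_step([[1.0], [3.0], [5.0]], graph))  # [[2.0], [2.0], [5.0]]
```

Stacking several such rounds lets information travel across the structure, so residues that are far apart in sequence but close in space can influence each other's representations.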

EZSpecificity: A Cross-Attention Graph Neural Network

The EZSpecificity model exemplifies the advanced capabilities of DL approaches for predicting enzyme-substrate interactions. This architecture employs a cross-attention-empowered SE(3)-equivariant graph neural network trained on a comprehensive database of enzyme-substrate interactions at sequence and structural levels [3] [13].

Key architectural features of EZSpecificity include:

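- SE(3)-Equivariance: Makes the model's internal representations transform consistently with rotations and translations of the molecular structure, so that scalar predictions such as specificity scores do not depend on how the complex is positioned in space, which is crucial when working with 3D enzyme conformations [3].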

- Cross-Attention Mechanisms: Enable the model to learn which parts of an enzyme structure are most relevant for interacting with specific substrates [3].

- Structural Integration: Incorporates 3D structural information through extensive docking simulations that capture how enzymes conform around different substrates [13].
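The symmetry that SE(3)-equivariant models build in can be checked numerically. In the sketch below (toy coordinates; a rotation about the z-axis stands in for a general rotation), pairwise distances are invariant under a rigid motion, which is why scalar outputs built from them do not change, while the centroid is equivariant: it moves with exactly the same rotation and translation. This illustrates the property only and is not EZSpecificity's architecture.

```python
import math

def rotate_z(point, theta):
    """Rotate a 3D point by theta radians about the z-axis."""
    x, y, z = point
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y, s * x + c * y, z)

def rigid_motion(coords, theta, shift):
    """Apply the same rotation and translation (an element of SE(3)) to every point."""
    return [tuple(r + t for r, t in zip(rotate_z(p, theta), shift)) for p in coords]

def pairwise_distances(coords):
    return [[math.dist(p, q) for q in coords] for p in coords]

def centroid(coords):
    n = len(coords)
    return tuple(sum(p[i] for p in coords) / n for i in range(3))

atoms = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (0.0, 2.0, 1.0)]
moved = rigid_motion(atoms, theta=0.7, shift=(5.0, -3.0, 2.0))

# Invariance: distances are unchanged by the rigid motion
invariant = all(
    math.isclose(a, b, abs_tol=1e-9)
    for row_a, row_b in zip(pairwise_distances(atoms), pairwise_distances(moved))
    for a, b in zip(row_a, row_b)
)
# Equivariance: transforming then averaging equals averaging then transforming
expected = rigid_motion([centroid(atoms)], theta=0.7, shift=(5.0, -3.0, 2.0))[0]
equivariant = all(math.isclose(a, b, abs_tol=1e-9) for a, b in zip(centroid(moved), expected))
print(invariant, equivariant)
```

Building this symmetry into the architecture means the model never has to learn, from data, that a rotated copy of the same enzyme-substrate complex should receive the same score.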

EZSpecificity represents a significant advancement in predicting substrate specificity for enzymes relevant to both fundamental research and applied biotechnology [3].

Figure 2: Deep Learning Architecture of EZSpecificity. The model integrates multiple data types through specialized neural network components, with cross-attention mechanisms learning the relationships between enzyme and substrate representations.

Performance Comparison: Quantitative Metrics and Experimental Validation

Objective evaluation of AI prediction tools requires robust benchmarking against independent datasets and, most importantly, experimental validation to assess real-world performance.

Prediction Accuracy Across Enzyme Hierarchy Levels

Table 1: Performance Comparison of ML and DL Approaches for Enzyme Function Prediction

| Model | Approach | EC Level | Performance Metrics | Experimental Validation |
|---|---|---|---|---|
| SOLVE [2] | Ensemble ML (RF, LightGBM, DT) | Enzyme vs. non-enzyme; L1 (Class) | High accuracy with 6-mer features | N/A |
| | | L2 (Subclass) | Consistent performance across hierarchy | N/A |
| | | L3 (Sub-subclass) | Maintained accuracy | N/A |
| | | L4 (Substrate) | Moderate accuracy with class imbalance | N/A |
| EZSpecificity [3] [13] | Graph Neural Network with Cross-Attention | Enzyme-substrate specificity | 91.7% accuracy on halogenase enzymes | Validated with 8 halogenases and 78 substrates |
| ESP (Baseline) [3] [13] | Existing State-of-the-Art | Enzyme-substrate specificity | 58.3% accuracy | Same validation set |

Experimental Validation Protocols

Rigorous experimental validation is essential for establishing the real-world utility of AI predictions:

Peptide Array-Based Validation

For post-translational modification (PTM) enzyme specificity, researchers synthesized permutation arrays of peptides on cellulose membranes and exposed them to active enzyme constructs (e.g., SET8 residues 193-352). Methyltransferase activity was quantified by relative densitometry and analyzed with motif-generating software to identify sequence variants susceptible to methylation [14].
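Relative densitometry on a permutation array amounts to normalizing each spot to the unmodified (wild-type) peptide and asking which substitutions preserve activity. A minimal sketch, assuming background-subtracted normalization and a hypothetical 50% retention threshold; positions (relative to the target residue) and intensities are invented for illustration, and the cited work used dedicated motif-generating software rather than this rule.

```python
def tolerated_residues(spots, wt_signal, background=0.0, threshold=0.5):
    """spots: {(position, substituted residue): raw spot intensity}.
    Returns {position: residues whose background-corrected signal, relative to the
    wild-type peptide spot, is at least `threshold`}; positions with none are omitted."""
    tolerated = {}
    for (position, residue), signal in spots.items():
        relative = (signal - background) / (wt_signal - background)
        if relative >= threshold:
            tolerated.setdefault(position, []).append(residue)
    return {pos: sorted(res) for pos, res in sorted(tolerated.items())}

# Hypothetical spots at positions -1 and +1 around the methylated residue
spots = {(-1, "R"): 900, (-1, "A"): 200, (1, "K"): 1000, (1, "Q"): 550}
print(tolerated_residues(spots, wt_signal=1000, background=100))
```

The resulting per-position lists are the raw material for a specificity motif, which can then be compared against the substrate preferences a model predicts.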

Halogenase Substrate Specificity Validation

For EZSpecificity validation, researchers selected eight halogenase enzymes and 78 potential substrates. Predictions were tested experimentally, measuring enzyme activity with different substrates to confirm reactivity. This validation demonstrated the model's ability to identify single potential reactive substrates with high accuracy (91.7%) [3] [13].

Automated Enzyme Engineering Platforms

Integrated AI-biofoundry systems enable continuous validation through iterative Design-Build-Test-Learn (DBTL) cycles. These platforms automate the construction and characterization of protein variants, using high-throughput functional assays to validate AI predictions rapidly [15].

Research Reagent Solutions for Experimental Validation

Table 2: Essential Research Reagents and Platforms for AI Validation Studies

| Reagent/Platform | Function | Application in AI Validation |
|---|---|---|
| Peptide Arrays [14] | High-throughput representation of protein segments | Testing enzyme activity across numerous sequence variants |
| Twist Multiplexed Gene Fragments [16] | Synthesis of gene fragment libraries (up to 500 bp) | Testing AI-designed protein libraries in pooled format |
| Twist Oligo Pools [16] | Highly diverse single-stranded DNA oligonucleotides | Encoding peptide libraries or variable protein regions |
| iBioFAB Automated Platform [15] | End-to-end automated biological foundry | Executing continuous DBTL cycles for protein engineering |
| Site-Directed Mutagenesis Kits [15] | Introduction of specific mutations | Creating AI-predicted enzyme variants for functional testing |
| Mass Spectrometry [14] | Identification of proteins and their modifications | Confirming PTM status of predicted enzyme substrates |

Integrated Workflows: Combining AI with Automated Experimentation

The most advanced applications of AI for enzyme function prediction now integrate computational models with automated experimental systems, creating closed-loop workflows that accelerate discovery and validation.

The Illinois Biological Foundry for Advanced Biomanufacturing (iBioFAB) exemplifies this integration, combining AI models with robotic automation for autonomous enzyme engineering [15]. This platform employs a state-of-the-art protein language model (ESM-2) and an epistasis model (EVmutation) to design mutant libraries, which are then automatically constructed and screened through optimized modular workflows [15].

In a proof of concept, this integrated platform engineered Arabidopsis thaliana halide methyltransferase (AtHMT) for a 90-fold improvement in substrate preference and 16-fold improvement in ethyltransferase activity, and developed a Yersinia mollaretii phytase (YmPhytase) variant with 26-fold improvement in activity at neutral pH—all accomplished in four rounds over four weeks [15].

Figure 3: Integrated AI-Automation Workflow for Enzyme Engineering. This closed-loop system combines AI-powered design with robotic experimentation, enabling continuous improvement of enzyme variants through iterative DBTL cycles.

The choice between machine learning and deep learning approaches for enzyme function prediction depends on multiple factors, including the specific prediction task, available data resources, and interpretability requirements.

Traditional ML approaches like SOLVE offer advantages in interpretability, computational efficiency, and performance when training data is limited. Their ability to provide biological insights through feature importance metrics (e.g., Shapley values) makes them particularly valuable for exploratory research where understanding sequence-function relationships is as important as prediction itself [2].

Deep learning models like EZSpecificity excel at complex prediction tasks involving structural information and enzyme-substrate interactions, particularly when large-scale training data is available. Their ability to learn relevant features directly from raw data reduces the need for domain expertise in feature engineering but comes with increased computational requirements and reduced interpretability [3] [13].

For researchers seeking to implement these approaches, the following considerations are essential:

- Task Specificity: ML ensembles often suffice for standard enzyme classification, while DL approaches are superior for structural modeling and substrate specificity prediction.

- Data Availability: DL models typically require larger training datasets to reach their full potential.

- Interpretability Needs: ML models generally provide more transparent decision-making processes.

- Validation Imperative: Regardless of approach, experimental validation remains essential, particularly for novel predictions that lack close homologs in training data [12].

As the field advances, the integration of AI prediction with automated experimental validation—exemplified by platforms like iBioFAB—represents the most promising direction for accelerating enzyme discovery and engineering, potentially reducing development timelines from months to weeks while significantly improving success rates [15] [16].

The Enzyme Commission (EC) number system, established by the International Union of Biochemistry and Molecular Biology, provides a hierarchical classification scheme for enzymes based on the chemical reactions they catalyze [17]. Each EC number has four components (e.g., EC 1.1.1.1), and each component adds a level of catalytic specificity. The first denotes one of seven main enzyme classes, the second the subclass, the third the sub-subclass, and the fourth is a serial number within the sub-subclass [2] [18]. Accurate EC number assignment is fundamental to understanding cellular metabolism and enables advances in synthetic biology, drug discovery, and biocatalysis [19].
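The four-level hierarchy maps naturally onto a small data structure. The Python sketch below parses strings such as "EC 3.6.1.-", where "-" marks an unassigned level, and reports the main class and how many levels are specified. The `ECNumber` class and the `EC_CLASSES` table are illustrative names and are not part of any cited tool. Preliminary serial numbers that carry an "n" prefix are deliberately rejected here, so this is a simplification rather than a full implementation of the nomenclature.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Top-level EC classes, keyed by the first component of an EC number.
EC_CLASSES = {
    1: "Oxidoreductases",
    2: "Transferases",
    3: "Hydrolases",
    4: "Lyases",
    5: "Isomerases",
    6: "Ligases",
    7: "Translocases",
}


@dataclass(frozen=True)
class ECNumber:
    # One entry per level; None marks an unassigned level written as "-".
    levels: Tuple[Optional[int], ...]

    @classmethod
    def parse(cls, text: str) -> "ECNumber":
        body = text.strip()
        if body.upper().startswith("EC"):
            body = body[2:].strip()
        parts = body.split(".")
        if len(parts) != 4:
            raise ValueError(f"expected four components: {text!r}")
        levels = []
        for part in parts:
            if part == "-":
                levels.append(None)
            elif part.isdigit():
                levels.append(int(part))
            else:
                # Preliminary serials such as "n3" are not handled in this sketch.
                raise ValueError(f"unsupported component {part!r} in {text!r}")
        # A specific level cannot follow an unassigned one (e.g. "3.-.1.2").
        first_gap = levels.index(None) if None in levels else 4
        if any(level is not None for level in levels[first_gap:]):
            raise ValueError(f"specific level after '-': {text!r}")
        return cls(tuple(levels))

    @property
    def depth(self) -> int:
        """Number of assigned levels, from 0 (fully unassigned) to 4."""
        return sum(level is not None for level in self.levels)

    @property
    def main_class(self) -> str:
        return EC_CLASSES.get(self.levels[0], "unassigned or unknown")


ec = ECNumber.parse("EC 3.6.1.-")
print(ec.main_class, ec.depth)  # Hydrolases 3
```

A partially specified number such as "EC 3.6.1.-" is common in predictions. Keeping the depth explicit makes it easy to separate confident class-level calls from full four-level assignments.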

Despite the existence of millions of sequenced enzymes in databases like UniProtKB, over 99% lack high-quality functional annotations, creating a significant gap between sequence information and functional understanding [18]. Experimental determination of enzyme function remains time-consuming, costly, and impractical for characterizing this vast sequence space [2] [4]. Artificial intelligence (AI) approaches have emerged as powerful tools to address this challenge, leveraging machine learning to predict enzyme functions directly from amino acid sequences or predicted structures, thereby accelerating functional annotation and guiding experimental validation [4] [19] [17].

Comparative Analysis of AI Tools for Enzyme Function Prediction

Multiple AI-driven tools have been developed for EC number prediction, each employing distinct computational approaches and input data requirements. The table below summarizes key features of several state-of-the-art tools:

Table 1: Key Features of AI Tools for Enzyme Function Prediction

| Tool Name | Core Methodology | Input Data | Key Features |
|---|---|---|---|
| SOLVE | Optimized ensemble learning (RF, LightGBM, DT) | Protein sequence | Distinguishes enzymes from non-enzymes; uses 6-mer tokenization; provides interpretability via Shapley analysis [2] |
| GraphEC | Geometric graph learning | ESMFold-predicted structures | Predicts active sites and optimum pH; incorporates a label diffusion algorithm [4] |
| CLEAN | Contrastive learning | Protein sequence | Effective for poorly studied enzymes; identifies multi-functional enzymes [20] |
| DeepECtransformer | Transformer neural network | Protein sequence | Covers 5,360 EC numbers; identifies functional motifs; provides reasoning interpretation [17] |
| BEC-Pred | BERT-based model | Reaction SMILES | Predicts EC numbers from substrate-product pairs; transfer learning approach [18] |
| CAPIM | Integrated pipeline (P2Rank, GASS, AutoDock Vina) | Protein structure | Combines pocket detection, catalytic site annotation, and docking validation [21] |
| EZSpecificity | SE(3)-equivariant graph neural network | Enzyme-substrate structures | Specifically predicts substrate specificity; cross-attention architecture [3] |

Performance Comparison on Benchmark Datasets

Quantitative evaluation across independent test datasets reveals varying performance metrics for different tools. The following table summarizes reported performance measures:

Table 2: Performance Metrics of AI Tools on Independent Test Datasets

| Tool | Dataset | Accuracy/Precision | Recall | F1 Score | Specialized Strengths |
|---|---|---|---|---|---|
| SOLVE | Independent test | High across all metrics (specific values not provided) | High across all metrics | High across all metrics | Optimal with 6-mer features; handles class imbalance with focal loss [2] |
| GraphEC | NEW-392, Price-149 | Superior to other methods | Superior to other methods | Superior to other methods | Excellent active site prediction (AUC: 0.9583) [4] |
| CLEAN | Various | Better accuracy than alternatives | - | - | Works well on unstudied enzymes; corrects misannotations [20] |
| DeepECtransformer | Test dataset | 0.7589-0.9506 (varies by class) | 0.6830-0.9445 (varies by class) | 0.6990-0.9469 (varies by class) | Best for EC classes 3, 4, 5, and 6; covers translocases (EC 7) [17] |
| BEC-Pred | Reaction dataset | 91.6% | - | 6.6% improvement over alternatives | Predicts from substrate-product pairs only [18] |
| EZSpecificity | Halogenase validation | 91.7% (vs. 58.3% for previous tool) | - | - | Exceptional substrate specificity prediction [3] |

Performance variations across EC classes are notable, with DeepECtransformer showing lower performance for oxidoreductases (EC 1 class), likely due to dataset imbalance with fewer sequences per EC number [17]. SOLVE systematically evaluated different k-mer values and found 6-mers provided optimal performance, with t-SNE visualization showing better separation of enzyme functional classes compared to 5-mers [2].
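The sketch below tokenizes a protein sequence into overlapping 6-mers and counts them, which makes the k-mer comparison concrete. It assumes a sliding window with stride 1. The source does not describe how SOLVE builds its tokens or turns them into model features, so `kmer_tokens` and `kmer_counts` are hypothetical helpers. Over the 20 standard amino acids there are 20⁶ = 64 million possible 6-mers, compared with 20⁵ = 3.2 million possible 5-mers. Each 6-mer is therefore more specific to a sequence context, but the resulting feature space is also much sparser.

```python
from collections import Counter
from typing import List


def kmer_tokens(sequence: str, k: int = 6) -> List[str]:
    """Split a protein sequence into overlapping k-mers (sliding window, stride 1)."""
    seq = sequence.strip().upper()
    if len(seq) < k:
        return []
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


def kmer_counts(sequence: str, k: int = 6) -> Counter:
    """Bag-of-k-mers representation: how often each k-mer occurs."""
    return Counter(kmer_tokens(sequence, k))


print(kmer_tokens("MKTAYIAK"))  # ['MKTAYI', 'KTAYIA', 'TAYIAK']
```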

Methodological Workflows

The AI tools for enzyme function prediction can be categorized by their fundamental approaches, as illustrated in the following workflow diagram:

Experimental Validation of AI Predictions

Validation Methodologies

Validating AI-predicted enzyme functions requires robust experimental protocols to confirm catalytic activities. The following diagram illustrates a generalized workflow for experimental validation:

Case Studies of Experimental Validation

DeepECtransformer Validation with E. coli Y-ome Proteins

DeepECtransformer was used to predict EC numbers for 464 unannotated proteins in Escherichia coli K-12 MG1655, followed by experimental validation of three candidate enzymes [17]:

- YgfF: Predicted as a phosphatase (EC 3.6.1.-). Experimental validation confirmed its activity as a dinucleotide polyphosphate hydrolase.

- YciO: Predicted as a cysteine desulfhydrase (EC 4.4.1.15). Enzyme assays confirmed production of H₂S, pyruvate, and NH₃ from cysteine.

- YjdM: Predicted as a dihydroorotate dehydrogenase (EC 1.3.1.14). Activity was confirmed spectrophotometrically by monitoring NADH formation.

The validation workflow included heterologous gene expression, protein purification, and enzyme activity assays with appropriate substrates and detection methods.
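A spectrophotometric readout such as NADH formation can be converted into enzyme activity with the Beer-Lambert law, using the molar absorptivity of NADH at 340 nm (about 6,220 M⁻¹ cm⁻¹). The sketch below computes specific activity in U/mg from an initial-rate slope, where 1 U is 1 µmol of NADH formed per minute. The function name and the example numbers are hypothetical and are not values from the cited study.

```python
# Molar absorptivity of NADH at 340 nm, in M^-1 cm^-1.
NADH_EPSILON_340 = 6220.0


def specific_activity_u_per_mg(
    delta_a340_per_min: float,
    reaction_volume_ml: float,
    enzyme_mg: float,
    path_length_cm: float = 1.0,
    blank_delta_a340_per_min: float = 0.0,
) -> float:
    """Specific activity (U/mg; 1 U = 1 umol NADH formed per min) from an A340 slope."""
    # Subtract the no-enzyme background rate before converting.
    corrected = delta_a340_per_min - blank_delta_a340_per_min
    # Beer-Lambert: concentration change (M/min) = dA / (epsilon * path length).
    rate_molar_per_min = corrected / (NADH_EPSILON_340 * path_length_cm)
    # Convert M/min to umol/min using the reaction volume in litres.
    umol_per_min = rate_molar_per_min * (reaction_volume_ml / 1000.0) * 1e6
    return umol_per_min / enzyme_mg


# Hypothetical run: 1 mL cuvette, 10 ug enzyme, background slope 0.01/min.
print(specific_activity_u_per_mg(0.0722, 1.0, 0.01, blank_delta_a340_per_min=0.01))
```

The slope should come from the initial, linear part of the progress curve. Later time points are often affected by substrate depletion or product inhibition.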

Correction of Misannotated Enzymes

DeepECtransformer successfully corrected misannotated EC numbers in UniProtKB, including:

- P93052 from Botryococcus braunii: Originally annotated as L-lactate dehydrogenase (EC 1.1.1.27) but predicted and experimentally confirmed as malate dehydrogenase (EC 1.1.1.37) [17].

- Q8U4R3 from Pyrococcus furiosus: Misannotated as 1-aminocyclopropane-1-carboxylate deaminase but correctly predicted as D-cysteine desulfhydrase (EC 4.4.1.15).
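One simple way to triage conflicts like these is to count how many leading EC levels an existing annotation and a new prediction share. The helpers below (`shared_ec_depth` and `_ec_levels`) are hypothetical and are not part of DeepECtransformer. For P93052, the annotated EC 1.1.1.27 and the predicted EC 1.1.1.37 agree to depth 3. Both act on the CH-OH group of donors with NAD⁺ or NADP⁺ as acceptor, but they differ in substrate. A depth of 0 means the two calls disagree at the main-class level, which arguably deserves the closest scrutiny.

```python
from typing import List


def _ec_levels(ec: str) -> List[str]:
    """Split 'EC 1.1.1.27' or '1.1.1.27' into its four components."""
    text = ec.strip()
    if text.upper().startswith("EC"):
        text = text[2:].strip()
    parts = text.split(".")
    if len(parts) != 4:
        raise ValueError(f"not a four-level EC number: {ec!r}")
    return parts


def shared_ec_depth(annotated: str, predicted: str) -> int:
    """Count leading EC levels on which two annotations agree (0-4).

    An unassigned level ('-') ends the comparison, since it cannot confirm agreement.
    """
    depth = 0
    for a, b in zip(_ec_levels(annotated), _ec_levels(predicted)):
        if a == "-" or b == "-" or a != b:
            break
        depth += 1
    return depth


print(shared_ec_depth("EC 1.1.1.27", "EC 1.1.1.37"))  # 3
```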

Autonomous Engineering Platform Validation

An AI-powered autonomous enzyme engineering platform integrated machine learning with biofoundry automation to engineer two enzymes with dramatic improvements [15]:

- Arabidopsis thaliana halide methyltransferase (AtHMT): Achieved 90-fold improvement in substrate preference and 16-fold improvement in ethyltransferase activity.

- Yersinia mollaretii phytase (YmPhytase): Engineered a variant with 26-fold improvement in activity at neutral pH.

This platform completed four engineering rounds in 4 weeks while constructing and characterizing fewer than 500 variants for each enzyme, demonstrating the powerful synergy between AI prediction and automated experimental validation [15].

Essential Research Reagents and Solutions

Successful experimental validation of AI-predicted enzyme functions requires specific research reagents and methodologies. The following table details essential solutions used in the featured studies:

Table 3: Key Research Reagent Solutions for Experimental Validation

| Reagent/Method | Function/Purpose | Examples from Studies |
|---|---|---|
| Heterologous Expression Systems | Production of recombinant proteins for functional characterization | E. coli expression systems for enzyme production [17] |
| Protein Purification Methods | Isolation of enzymes for in vitro assays | Affinity chromatography for purified protein preparation [17] |
| Enzyme Activity Assays | Direct measurement of catalytic function | Spectrophotometric assays monitoring NADH formation [17] |
| Substrate Libraries | Profiling enzyme specificity and promiscuity | 78 substrates for halogenase specificity testing [3] |
| Analytical Instruments | Detection and quantification of reaction products | LC-MS, HPLC for product identification and quantification [15] |
| Automated Biofoundries | High-throughput construction and testing of enzyme variants | iBioFAB for autonomous enzyme engineering [15] |
| Docking Software | In silico validation of enzyme-substrate interactions | AutoDock Vina in CAPIM pipeline [21] |

The integration of AI prediction with experimental validation represents a paradigm shift in enzyme functional annotation. AI tools like SOLVE, GraphEC, CLEAN, and DeepECtransformer demonstrate complementary strengths, with performance varying across EC classes and prediction contexts. Structure-based approaches (GraphEC, CAPIM) provide insights into active sites and substrate specificity, while sequence-based methods (SOLVE, CLEAN, DeepECtransformer) offer broader applicability for high-throughput annotation.

Experimental validation remains essential for confirming AI predictions, as demonstrated by successful characterization of previously unannotated enzymes and correction of database misannotations. The emerging paradigm of autonomous enzyme engineering, which combines AI design with robotic experimentation, dramatically accelerates the engineering of improved enzymes while providing high-quality validation data for refining predictive models.

As AI tools continue to evolve, their integration with experimental workflows will play an increasingly crucial role in bridging the annotation gap for the millions of uncharacterized enzymes in sequence databases, ultimately advancing applications in drug discovery, metabolic engineering, and sustainable biocatalysis.

Computational methods for predicting protein and enzyme function are indispensable tools in modern biology, yet their limitations pose significant challenges for research and drug development. The Critical Assessment of Functional Annotation (CAFA) is a community-wide experiment that provides the most comprehensive evaluation of these methods, revealing critical insights into their performance and reliability. By benchmarking computational predictions against experimental results, CAFA has demonstrated that while prediction methods have improved over time, they still exhibit substantial limitations in accuracy, coverage, and reliability—particularly for novel enzyme functions. This guide examines these limitations through the lens of CAFA assessments and experimental validations, providing researchers with a realistic framework for utilizing computational predictions in biological research and therapeutic development.

What is the CAFA Challenge?

The Critical Assessment of Functional Annotation (CAFA) is a community-wide experiment designed to objectively evaluate the performance of computational protein function prediction methods. Established in 2010, CAFA employs a time-delayed evaluation framework where predictors submit functional annotations for proteins with unknown function, and these predictions are later assessed against experimental annotations that accumulate after the submission deadline [22] [23] [24]. This rigorous methodology provides an unbiased assessment of the state of the art in function prediction.

CAFA evaluates predictions using the Gene Ontology (GO) framework, which categorizes protein functions into three ontologies: Molecular Function (MF), Biological Process (BP), and Cellular Component (CC) [22]. The primary evaluation metric is the maximum F-measure (Fmax). At each score threshold, precision and recall are averaged across proteins and combined into their harmonic mean (the F-measure). Fmax is the highest F-measure obtained over all thresholds [22]. This and other metrics provide a standardized way to quantify prediction accuracy and compare methods.
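The sketch below computes a protein-centric Fmax along the lines CAFA describes. Precision at threshold t is averaged only over proteins with at least one prediction scoring at or above t, while recall is averaged over all benchmark proteins. The function name, the 0.01-step threshold grid, and the toy data are assumptions. Both predictions and ground truth are also assumed to be already propagated to ancestor GO terms, a step that the official evaluation handles and this sketch omits.

```python
from typing import Dict, Optional, Sequence, Set, Tuple


def fmax(
    predictions: Dict[str, Dict[str, float]],
    truth: Dict[str, Set[str]],
    thresholds: Optional[Sequence[float]] = None,
) -> Tuple[float, float]:
    """Return (Fmax, threshold) using protein-centric averaging.

    predictions: protein -> {GO term: score in [0, 1]}
    truth: protein -> non-empty set of experimentally supported GO terms
    """
    if thresholds is None:
        thresholds = [i / 100 for i in range(1, 101)]
    best_f, best_t = 0.0, 0.0
    for t in thresholds:
        precisions, recalls = [], []
        for protein, true_terms in truth.items():
            predicted = {
                term for term, score in predictions.get(protein, {}).items() if score >= t
            }
            hits = len(predicted & true_terms)
            # Precision is averaged only over proteins with >= 1 prediction at t.
            if predicted:
                precisions.append(hits / len(predicted))
            # Recall is averaged over every benchmark protein.
            recalls.append(hits / len(true_terms))
        if not precisions:
            continue
        pr = sum(precisions) / len(precisions)
        rc = sum(recalls) / len(recalls)
        if pr + rc == 0:
            continue
        f = 2 * pr * rc / (pr + rc)
        if f > best_f:
            best_f, best_t = f, t
    return best_f, best_t


# Toy benchmark with hypothetical GO term labels.
truth = {"P1": {"GO:A", "GO:B"}, "P2": {"GO:C"}}
predictions = {
    "P1": {"GO:A": 0.9, "GO:B": 0.4, "GO:D": 0.6},
    "P2": {"GO:C": 0.7, "GO:E": 0.2},
}
print(fmax(predictions, truth))  # (0.909..., 0.21)
```

Because the maximum is taken over thresholds, Fmax reports each method at its most favorable operating point. This is convenient for ranking methods but optimistic for any single fixed cutoff used in practice.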

Key Limitations of Computational Approaches

Incomplete Coverage and Accuracy Gaps

Computational function prediction methods consistently struggle to achieve comprehensive coverage and high accuracy across all functional categories. Data from successive CAFA challenges reveals a complex picture of methodological progress and persistent limitations.

Table 1: Performance Comparison Across CAFA Challenges (Fmax Scores)

| Ontology | CAFA1 Top Methods | CAFA2 Top Methods | CAFA3 Top Methods | Baseline (BLAST) |
|---|---|---|---|---|
| Molecular Function | 0.47-0.52 | 0.55-0.59 | 0.56-0.61 | 0.38-0.48 |
| Biological Process | 0.37-0.41 | 0.40-0.44 | 0.41-0.45 | 0.24-0.31 |
| Cellular Component | 0.45-0.50 | 0.52-0.56 | 0.50-0.54 | 0.45-0.52 |

Data synthesized from CAFA assessments [22] [23] [24]. Fmax scores range from 0 to 1, with higher values indicating better performance.

While Table 1 shows steady improvement from CAFA1 to CAFA2, progress slowed considerably by CAFA3, particularly for Biological Process and Cellular Component ontologies [23]. The performance gap between Molecular Function and Biological Process predictions is especially notable, reflecting the greater complexity of predicting pathway-level functions compared to basic biochemical activities [22] [24].

The CAFA2 assessment of 126 methods from 56 research groups found that while top methods outperformed baseline sequence similarity approaches like BLAST, "the interpretation of results and usefulness of individual methods remain context-dependent" [24]. This underscores the importance of matching method selection to specific prediction needs.

The Misannotation Crisis

Perhaps the most significant limitation of computational approaches is their tendency to propagate and amplify erroneous annotations. Experimental studies reveal alarming rates of misannotation across enzyme databases:

Table 2: Experimentally Validated Misannotation Rates

| Study Focus | Misannotation Rate | Key Findings |
|---|---|---|
| EC 1.1.3.15 enzyme class [25] | 78% | Only 22.5% of sequences contained canonical protein domains; 79% shared <25% sequence identity with characterized enzymes |
| BRENDA database analysis [25] | 18% overall | Nearly 1 in 5 sequences annotated to enzyme classes share no similarity or domain architecture with experimentally characterized representatives |
| E. coli unknowns evaluation [6] | High failure rate | Machine learning methods mostly failed to make novel predictions and made basic logic errors that human annotators avoid |

The experimental investigation of EC 1.1.3.15 (S-2-hydroxyacid oxidases) provides a particularly compelling case study. Researchers selected 122 representative sequences, expressed and purified the proteins, and tested their catalytic activity [25]. Surprisingly, only a small fraction exhibited the predicted function, with the majority showing either no activity or alternative enzymatic activities [25]. This misannotation problem increases over time as errors propagate through databases [25].

Failure to Predict Novel Functions

Machine learning models for enzyme function prediction struggle significantly when confronted with functions not represented in their training data. A recent evaluation of ML predictions for over 450 E. coli proteins of unknown function found that "current ML methods not only mostly fail to make novel predictions but also make basic logic errors in their predictions that human annotators avoid by leveraging the available knowledge base" [6].

This limitation stems from the fundamental operating principle of most ML methods, which excel at interpolating within known function space but lack the capability to extrapolate to truly novel functions [6]. The problem is compounded by what researchers term "hallucinations"—confident but incorrect predictions that arise from logical failures in the model [6].

Domain Architecture and Context Limitations

Computational methods often fail to account for critical biological context that determines enzyme function:

Multi-domain proteins: Prediction accuracy is significantly higher for single-domain proteins compared to multi-domain proteins, especially in molecular function prediction for eukaryotic targets (P = 1.4 × 10⁻⁵) [22]. This highlights the challenge of combining sequence information from multiple domains to produce accurate functional predictions.

Cellular context: Methods struggle to predict biological process terms because these often depend on cellular and organismal context rather than just amino acid sequence [22].

Active site recognition: Traditional sequence-based methods often miss crucial structural information about active sites, which are critical for determining enzyme function [4].

Experimental Validation: The Essential Corrective

Case Study: Validating EC 1.1.3.15 Predictions

The experimental workflow for validating computational predictions provides a template for assessing prediction reliability:

Experimental Validation Workflow

In the EC 1.1.3.15 study, researchers first mined the BRENDA database to obtain 1,058 unique sequences annotated as S-2-hydroxyacid oxidases [25]. They selected 122 representatives spanning the diversity of this enzyme class, with special attention to sequences with low similarity to characterized enzymes and non-canonical domain architectures [25].

The experimental protocol proceeded through several critical stages:

Gene Synthesis and Cloning: Selected genes were synthesized and cloned into expression vectors [25].

Protein Expression and Solubility Assessment: Proteins were expressed in E. coli and assessed for solubility. Only 65 of the 122 proteins (53%) were soluble, with archaeal and eukaryotic proteins showing proportionally lower solubility than bacterial proteins [25].

Activity Assay: Soluble proteins were tested for S-2-hydroxy acid oxidase activity using the Amplex Red peroxide detection assay, which measures hydrogen peroxide production during enzyme catalysis [25].
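Converting an Amplex Red readout into an amount of hydrogen peroxide usually relies on a standard curve. The sketch below fits an ordinary least-squares line to a set of H₂O₂ standards and then inverts it for a sample reading. The standard concentrations, fluorescence values, and function names are all invented for illustration. In practice one would also subtract a no-enzyme control and keep readings within the assay's linear range.

```python
from typing import Sequence, Tuple


def fit_standard_curve(
    h2o2_um: Sequence[float], signal: Sequence[float]
) -> Tuple[float, float]:
    """Least-squares fit of signal = slope * [H2O2] + intercept."""
    n = len(h2o2_um)
    if n != len(signal) or n < 2:
        raise ValueError("need at least two paired standards")
    mean_x = sum(h2o2_um) / n
    mean_y = sum(signal) / n
    sxx = sum((x - mean_x) ** 2 for x in h2o2_um)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(h2o2_um, signal))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def h2o2_from_signal(signal: float, slope: float, intercept: float) -> float:
    """Invert the standard curve to estimate H2O2 (uM) for a sample reading."""
    return (signal - intercept) / slope


# Hypothetical standards (uM H2O2) and fluorescence readings (arbitrary units).
slope, intercept = fit_standard_curve([0, 1, 2, 4, 8], [100, 600, 1100, 2100, 4100])
print(h2o2_from_signal(1600, slope, intercept))  # 3.0
```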

The results were striking: only a small minority of tested sequences exhibited the predicted activity, with the majority showing either no activity or alternative enzymatic functions [25]. This comprehensive experimental validation revealed the extensive misannotation within this enzyme class and led to the identification of four alternative activities among the misannotated sequences [25].

Case Study: Evaluating Generated Enzymes

A similar approach was used to evaluate computational metrics for predicting the functionality of AI-generated enzyme sequences [26]. Researchers expressed and purified over 500 natural and generated sequences with 70-90% identity to natural sequences, then tested them for in vitro enzyme activity [26].

The initial round of experiments revealed that only 19% of tested sequences (including natural sequences) were active, with performance varying significantly by generation method [26]. This study led to the development of COMPSS (Composite Metrics for Protein Sequence Selection), a computational filter that improved experimental success rates by 50-150% [26]. This demonstrates how iterative experimental validation can drive improvements in computational methods.

Emerging Solutions and Best Practices

Advanced Computational Approaches

Recent methodological advances aim to address some limitations of traditional computational approaches:

Geometric Graph Learning: GraphEC incorporates protein structural information predicted by ESMFold and uses geometric graph learning to predict enzyme active sites and EC numbers, achieving superior performance compared to sequence-only methods [4].

Ensemble Methods: SOLVE (Soft-Voting Optimized Learning for Versatile Enzymes) utilizes an ensemble of random forest, LightGBM, and decision tree models with an optimized weighted strategy, enhancing prediction accuracy for both mono- and multi-functional enzymes [1].

Active Site Integration: Methods that explicitly incorporate active site prediction, such as GraphEC-AS, show improved function prediction accuracy by focusing on functionally critical regions [4].

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Experimental Validation

| Reagent/Resource | Function in Validation | Application Examples |
|---|---|---|
| Amplex Red Assay | Detects hydrogen peroxide production | Oxidase activity validation [25] |
| ESMFold | Predicts protein structures from sequences | Structural-based function prediction [4] |
| COMPSS Framework | Computationally filters generated sequences | Prioritizing sequences for experimental testing [26] |
| Phobius | Predicts signal peptides and transmembrane domains | Identifying sequences with problematic domains for expression [26] |
| GraphEC-AS | Predicts enzyme active sites from structures | Guiding functional predictions [4] |

The CAFA challenges and accompanying experimental validations provide a clear-eyed assessment of computational function prediction: while methods have improved substantially and can guide experimental design, they cannot replace experimental validation. The limitations are systematic and significant, affecting accuracy, novelty detection, and biological relevance.

For researchers in drug development and biotechnology, these findings underscore the importance of a balanced approach that leverages computational predictions as hypotheses for experimental testing rather than established facts. The most effective functional annotation pipeline combines state-of-the-art computational methods with targeted experimental validation, particularly for enzyme functions that have direct relevance to therapeutic applications or metabolic engineering.

As computational methods continue to evolve—incorporating structural information, active site prediction, and more sophisticated machine learning architectures—their reliability will improve. However, the fundamental need for experimental validation will remain, ensuring that our understanding of enzyme function is built on a foundation of empirical evidence rather than computational inference alone.

Integrating AI with Experimentation: Frameworks for Validation

The field of enzyme engineering is undergoing a profound transformation, shifting from a labor-intensive, specialist-dependent craft to a streamlined, data-driven science. This revolution is powered by the integration of autonomous experimentation platforms that seamlessly combine artificial intelligence (AI), large language models (LLMs), and robotic biofoundries. These systems close the Design-Build-Test-Learn (DBTL) cycle, enabling rapid iteration without human intervention. For researchers and drug development professionals, this paradigm shift is particularly impactful for the critical task of validating AI-predicted enzyme functions with experimental results. By automating the entire workflow, these platforms accelerate the transition from computational predictions to experimentally verified enzymes, providing the robust, empirical data needed to advance therapeutic discovery and development [15] [27] [28].

This guide provides an objective comparison of the components and performance of these emerging platforms, with a specific focus on their application in validating enzyme function and optimizing catalytic properties.

Platform Comparison: Architecture and Performance

The core of an autonomous experimentation platform is its integration of computational design with robotic execution. The table below summarizes the performance of two distinct AI tools, one for general enzyme engineering and another for predicting substrate specificity, highlighting their validated experimental outcomes.

Table 1: Performance Comparison of AI Tools for Enzyme Engineering and Validation

| AI Tool / Platform | Primary Function | Key Architecture | Experimental Validation & Performance | Key Advantage |
|---|---|---|---|---|
| Generalized AI Platform [15] | Autonomous enzyme engineering | Protein LLM (ESM-2), Epistasis model (EVmutation), low-N machine learning, integrated with iBioFAB biofoundry | AtHMT Enzyme: 90-fold improvement in substrate preference; 16-fold improvement in ethyltransferase activity. YmPhytase Enzyme: 26-fold improvement in specific activity at neutral pH. (Achieved in 4 weeks, screening <500 variants each) | Full DBTL automation; requires only a protein sequence and a fitness metric |
| EZSpecificity [3] [29] | Enzyme-substrate specificity prediction | SE(3)-equivariant graph neural network trained on a comprehensive enzyme-substrate database | Halogenase Enzymes: 91.7% accuracy in identifying the single potential reactive substrate across 8 enzymes and 78 substrates, significantly outperforming the state-of-the-art model (58.3%) | High accuracy for predicting optimal enzyme-substrate pairing, even for poorly characterized enzyme classes |

The data demonstrates that while specialized tools like EZSpecificity offer high-precision predictions for specific tasks, generalized platforms provide an end-to-end solution for comprehensive enzyme optimization. The latter's ability to achieve significant functional improvements in enzymes with minimal human input and time marks a watershed moment for the field [15] [27].

Experimental Protocols for Autonomous Enzyme Validation

The validation of AI-predicted enzyme functions within an autonomous platform follows a rigorous, iterative protocol. The following workflow diagram outlines the key stages of this process.

Diagram 1: The Autonomous Design-Build-Test-Learn (DBTL) Cycle.

Module 1: Intelligent Library Design

- Objective: To generate a diverse and high-quality initial library of mutant enzyme sequences without prior experimental data for the specific target.

- Methodology:

  - Protein Language Model (protein LLM): A model such as ESM-2, a transformer trained on millions of diverse protein sequences, predicts the likelihood of each amino acid at specific positions. This likelihood is interpreted as a proxy for variant fitness and guides the selection of beneficial mutations based on the statistical patterns ("grammar") the model has learned from natural protein sequences [15].

  - Epistasis Model: A complementary model such as EVmutation, which is built from local homologs of the target protein, accounts for interactions between mutations [15].

  - Process: The two models are combined to generate a list of initial variants (e.g., 180 for each enzyme) that maximize diversity and quality, ensuring the first round of experiments explores a promising region of the vast sequence space [15].
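The source does not say exactly how the two zero-shot scores are combined. One hedged possibility is shown below: rank-normalize each model's scores so that outputs on different scales become comparable, average the ranks, and keep the top variants. This would apply, for example, to ESM-2 log-likelihood ratios and EVmutation scores. The function names, variant labels, and scores are hypothetical, and the diversity criterion mentioned above is not modeled.

```python
from typing import Dict, List


def rank_normalize(scores: Dict[str, float]) -> Dict[str, float]:
    """Map raw scores to ranks scaled to [0, 1], where 1 is the best score."""
    ordered = sorted(scores, key=lambda v: (scores[v], v))  # ascending, ties by name
    if len(ordered) == 1:
        return {ordered[0]: 1.0}
    return {v: i / (len(ordered) - 1) for i, v in enumerate(ordered)}


def select_initial_library(
    plm_scores: Dict[str, float],
    epistasis_scores: Dict[str, float],
    size: int,
) -> List[str]:
    """Average the two models' rank-normalized scores and keep the top `size` variants."""
    shared = plm_scores.keys() & epistasis_scores.keys()
    plm = rank_normalize({v: plm_scores[v] for v in shared})
    evo = rank_normalize({v: epistasis_scores[v] for v in shared})
    combined = {v: (plm[v] + evo[v]) / 2 for v in shared}
    return sorted(combined, key=lambda v: (-combined[v], v))[:size]


# Hypothetical zero-shot scores for four single mutants.
plm = {"A12G": -0.5, "K45R": 1.2, "L78F": 0.3, "S90T": -1.0}
evo = {"A12G": 0.1, "K45R": 0.8, "L78F": 1.5, "S90T": -2.0}
print(select_initial_library(plm, evo, 2))  # ['K45R', 'L78F']
```

Averaging ranks rather than raw scores keeps either model from dominating just because its scores span a wider numeric range.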

Module 2: Automated Build-and-Test

- Objective: To physically construct the designed library and quantitatively characterize the fitness of each variant.

- Methodology:

- Build Phase:

- Automated Cloning: The iBioFAB or similar automated biofoundry executes a high-fidelity, HiFi-assembly-based mutagenesis method. This method achieves ~95% accuracy, eliminating the need for time-consuming intermediate sequence verification and enabling a continuous workflow [15].

- Transformation and Culture: The platform automates microbial transformations, plates cells on agar plates (e.g., 8-well omnitrays), and picks colonies for protein expression [15].

- Test Phase:

- Protein Expression: The system manages cell growth and protein expression in a 96-well format [15].

- Fitness Assay: An automation-friendly, high-throughput assay is used to measure the desired enzymatic property. This could be a colorimetric, fluorometric, or other quantifiable assay that reflects the enzyme's function (e.g., methyltransferase or phytase activity). The platform automates crude cell lysate removal and the functional enzyme assays [15].


Module 3: Iterative Machine Learning and Design

- Objective: To learn from the experimental data and design an improved library for the next cycle.

- Methodology:

- Data Integration: The assay data (fitness scores) for all screened variants are collected.

- Model Training: A supervised "low-N" machine learning regression model is trained on the experimental data. This model learns the relationship between a variant's sequence (its set of mutations) and its measured fitness [15].

- Next-Generation Design: The trained model predicts the fitness of a new, larger set of in silico variants, often by proposing combinations of beneficial single mutations from the initial round into higher-order mutants. The top-predicted variants are then selected for the next "Build" phase [15].
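The Learn step can be illustrated with a deliberately simple stand-in for the study's low-N model. The sketch fits an additive ridge regression over one-hot mutation features by cyclic coordinate descent. The target is log-fitness relative to wild type, so wild type scores 0. The published work may use a different model class. `fit_additive_ridge`, `predict`, and `propose_combinations` are hypothetical names. Additivity also ignores epistasis, which is exactly what real data from later rounds would expose.

```python
from itertools import combinations


def fit_additive_ridge(variants, fitness, lam=0.1, sweeps=200):
    """Fit y ~ sum of per-mutation weights with an L2 penalty lam.

    variants: list of tuples of mutation strings, e.g. ("A10V", "K55R").
    fitness: log-fitness relative to wild type (wild type = 0, so no intercept).
    """
    features = sorted({m for v in variants for m in v})
    weights = {m: 0.0 for m in features}
    for _ in range(sweeps):
        for m in features:
            # Closed-form ridge update for one binary feature, holding the others fixed.
            num, count = 0.0, 0
            for v, y in zip(variants, fitness):
                if m in v:
                    num += y - sum(weights[o] for o in v if o != m)
                    count += 1
            weights[m] = num / (count + lam)
    return weights


def predict(weights, variant):
    """Additive prediction; unseen mutations contribute 0."""
    return sum(weights.get(m, 0.0) for m in variant)


def propose_combinations(weights, top_k=4, order=2, n_out=5):
    """Combine the top_k single mutations into higher-order mutants, skipping
    combinations that mutate the same position twice, ranked by predicted fitness."""
    best = sorted(weights, key=weights.get, reverse=True)[:top_k]
    candidates = []
    for combo in combinations(best, order):
        positions = {int(m[1:-1]) for m in combo}
        if len(positions) == order:
            candidates.append((predict(weights, combo), combo))
    candidates.sort(reverse=True)
    return [combo for _, combo in candidates[:n_out]]
```

Recombining beneficial singles into doubles or triples, as in the step described above, corresponds to calling `propose_combinations` with `order=2` or `order=3`.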

This cycle repeats autonomously, with each iteration refining the model's understanding of the fitness landscape, leading to rapid convergence on highly optimized enzyme variants.

Architectural Breakdown of the AI Platform

The power of the autonomous platform stems from a multi-stage AI architecture that systematically eliminates human decision-making bottlenecks. The following diagram illustrates the flow of information and decision-making between these AI components.

Diagram 2: Multi-stage AI Architecture for Autonomous Enzyme Engineering.

- Unsupervised Pre-training (Stage 1): The process begins with general-purpose models that require no prior experimental data on the target enzyme. The Protein LLM (e.g., ESM-2) provides a broad understanding of protein sequence grammar, while the epistasis model (e.g., EVmutation) adds evolutionary context. This combination ensures the initial library is both diverse and of high quality, de-risking the first experimental round [15].

- Supervised Fine-Tuning (Stage 2): Once the first round of experimental data is available, a supervised machine learning model takes over. This "low-N" model is specifically trained on the collected fitness data, allowing it to make highly accurate, context-aware predictions for the target enzyme's fitness landscape. This enables efficient hill-climbing towards optimal sequences [15].

- AI and Robotics Integration: The AI components are not standalone; they are fully integrated with the robotic biofoundry. The AI designs are automatically converted into robotic work instructions, and the resulting experimental data is automatically fed back to the AI, creating a truly closed-loop, self-driving laboratory [15] [28].
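The hand-off from design to robot can be pictured as turning a list of variant names into a plate map. The sketch below rests on three assumptions: a single 96-well plate, row-major filling, and a generic CSV worklist that reserves a few wells for wild-type and blank controls. Real biofoundry work instructions, including iBioFAB's, use their own formats, which the source does not describe. `plate_wells`, `build_worklist`, and `to_csv` are hypothetical helpers.

```python
import csv
import io
from string import ascii_uppercase

ROWS = ascii_uppercase[:8]  # rows A-H of a 96-well plate
COLS = range(1, 13)         # columns 1-12


def plate_wells():
    """Row-major list of well names: A1, A2, ..., H12."""
    return [f"{r}{c}" for r in ROWS for c in COLS]


def build_worklist(variants, n_wt_controls=4, n_blanks=2):
    """Assign designed variants plus wild-type and blank controls to wells.

    Returns (well, sample, role) rows; raises if the design does not fit on one plate.
    """
    samples = [(v, "variant") for v in variants]
    samples += [("WT", "control")] * n_wt_controls
    samples += [("EMPTY", "blank")] * n_blanks
    wells = plate_wells()
    if len(samples) > len(wells):
        raise ValueError(f"{len(samples)} samples do not fit on a 96-well plate")
    return [(well, name, role) for well, (name, role) in zip(wells, samples)]


def to_csv(worklist):
    """Serialize the worklist as CSV text that a liquid-handling script could read."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["well", "sample", "role"])
    writer.writerows(worklist)
    return buffer.getvalue()
```

Keeping wild-type and blank wells on every plate is a design choice. It lets each plate be normalized on its own before its data are fed back to the model.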

The Scientist's Toolkit: Essential Research Reagents and Materials

The experimental validation of AI-predicted enzymes relies on a suite of specific reagents and automated equipment. The following table details key components of this toolkit.

Table 2: Essential Research Reagents and Platforms for Autonomous Enzyme Engineering

| Item / Solution | Function / Description | Role in Experimental Validation |
|---|---|---|
| iBioFAB (Illinois Biological Foundry for Advanced Biomanufacturing) [15] | A fully automated, integrated biofoundry for biological experimentation. | Provides the robotic backbone for the "Build" and "Test" phases, enabling high-throughput, reproducible execution of protocols without human intervention. |
| High-Fidelity Mutagenesis Kit [15] | A specialized kit for DNA assembly with high accuracy (~95%). | Crucial for reliable, continuous construction of variant libraries without the need for slow intermediate sequence verification. |
| Protein LLM (ESM-2) [15] | A large language model trained on millions of protein sequences. | Informs the initial "Design" phase by predicting beneficial mutations based on learned evolutionary and structural patterns, bootstrapping the process without prior data. |
| EVmutation Model [15] | An unsupervised statistical model for analyzing epistatic interactions in proteins. | Complements the Protein LLM by providing evolutionary constraints and insights, enhancing the quality of the initial variant library. |
| Activity-Specific Assay Reagents [15] | Reagents tailored to measure a specific enzymatic function (e.g., methyltransferase or phytase activity). | Forms the core of the "Test" phase, providing the quantitative fitness data (e.g., absorbance, fluorescence) that the AI uses to learn and guide subsequent iterations. |
| SOLVE ML Framework [30] [2] | An interpretable ensemble ML model for predicting enzyme function from sequence. | Useful for independent prediction and validation of enzyme function (EC number), adding another layer of computational verification. |
| EZSpecificity Tool [3] [29] | A cross-attention graph neural network for predicting enzyme-substrate specificity. | Helps validate and rationalize why an engineered enzyme shows improved activity for a specific substrate, linking sequence changes to functional outcomes. |

The advent of autonomous experimentation platforms marks a pivotal shift in enzyme engineering and in the validation of AI predictions. By integrating AI, LLMs, and robotic biofoundries, these systems have demonstrated remarkable efficiency, achieving multi-fold enzyme improvements in weeks rather than years. For researchers and drug developers, this means an accelerated path from a promising protein sequence to an experimentally validated, high-performance biocatalyst. As these platforms become more accessible and their underlying models continue to improve, they promise to democratize and accelerate innovation across biotechnology. Ultimately, they could shorten the timeline for developing new therapeutic agents and sustainable bioprocesses.

Table of Contents

- Introduction to Halide Methyltransferase and the Engineering Goal

- The Autonomous AI-Powered Engineering Platform

- Experimental Protocol & Workflow

- Performance Results and Comparative Analysis

- Key Research Reagents and Solutions

- Conclusions and Outlook

Introduction to Halide Methyltransferase and the Engineering Goal

Halide methyltransferases (HMTs) are enzymes that catalyze the transfer of a methyl group from the cofactor S-adenosyl-L-methionine (SAM) to a halide ion, producing a methyl halide and S-adenosyl-L-homocysteine (SAH). The engineering case study focuses on Arabidopsis thaliana halide methyltransferase (AtHMT). Beyond its natural function, AtHMT exhibits promising promiscuous alkyltransferase activity. It can accept alkyl halides larger than methyl iodide (e.g., ethyl iodide) and work with SAM analogs, opening routes to compounds that are difficult to access via chemical synthesis [15].
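The chemistry can be summarized in two lines. The first is the native reaction described above. The second is an assumption on our part, since the source gives no reaction equations. We assume the promiscuous activity is exploited in the alkyl-transfer direction: SAH reacts with a larger alkyl iodide to form the corresponding SAM analog (for R = ethyl, S-adenosyl-L-ethionine).

```latex
\begin{aligned}
\text{SAM} + \text{X}^{-} &\rightleftharpoons \text{SAH} + \text{CH}_3\text{X}
  && \text{native methyl transfer (X = halide)} \\
\text{SAH} + \text{R-I} &\longrightarrow \text{R-SAM analog} + \text{I}^{-}
  && \text{assumed alkyl-transfer direction, e.g. R = ethyl}
\end{aligned}
```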

The primary engineering objective was to enhance this non-native activity. The specific goals were:

- Improve Substrate Preference: Engineer AtHMT for a 90-fold improvement in its preference for ethyl iodide over methyl iodide [15].

- Boost Ethyltransferase Activity: Achieve a 16-fold improvement in its ethyltransferase activity compared to the wild-type enzyme [15].
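Both goals are relative quantities, and the preference goal is a ratio of ratios, so it is easy to misread. The sketch below assumes preference means ethyl-iodide activity divided by methyl-iodide activity under the same conditions; the source does not spell out the definition. It shows how both fold changes follow from four activity values. The numbers are made up (arbitrary units) and chosen only so the arithmetic lands on 16-fold and 90-fold; they are not the study's measurements.

```python
def preference(ethyl_activity: float, methyl_activity: float) -> float:
    """Ethyl-over-methyl preference as a simple activity ratio (assumed definition)."""
    return ethyl_activity / methyl_activity


def fold_improvements(wt: dict, variant: dict) -> dict:
    """Fold changes of a variant relative to wild type for the two engineering goals."""
    return {
        "ethyl_activity": variant["ethyl"] / wt["ethyl"],
        "preference": preference(variant["ethyl"], variant["methyl"])
        / preference(wt["ethyl"], wt["methyl"]),
    }


# Illustrative activities only (arbitrary units), not data from the study.
wild_type = {"ethyl": 1.0, "methyl": 9.0}
engineered = {"ethyl": 16.0, "methyl": 1.6}
print(fold_improvements(wild_type, engineered))
```

The example also shows that a large preference gain can come partly from lower activity on the native substrate, not only from higher activity on the new one.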

Success in this endeavor validates a platform for creating tailored biocatalysts, which is significant for producing specialized SAM analogs for applications in biocatalytic alkylation, medicine, and sustainable chemistry [15].

The Autonomous AI-Powered Engineering Platform

This engineering feat was accomplished using a generalized platform for autonomous enzyme engineering. This platform integrates artificial intelligence (AI), large language models (LLMs), and full laboratory automation to execute iterative Design-Build-Test-Learn (DBTL) cycles with minimal human intervention [15].

The core components of the platform are:

- AI and Machine Learning Models:

- Protein LLM (ESM-2): A large language model trained on protein sequences was used to predict the likelihood of beneficial amino acid substitutions, maximizing the diversity and quality of the initial variant library [15].

- Epistasis Model (EVmutation): This model analyzed co-evolutionary patterns and residue-residue interactions within protein sequences to identify mutations with a high probability of improving function [15].

- Low-N Machine Learning Model: In subsequent rounds, experimental data from screened variants was used to train machine learning models capable of predicting variant fitness with high accuracy from limited data, guiding the selection of variants for the next cycle [15].

- Biofoundry Automation (iBioFAB): The Illinois Biological Foundry for Advanced Biomanufacturing is an automated robotic platform that executed all experimental steps, from DNA construction and microbial transformation to protein expression and functional assays [15].

Experimental Protocol & Workflow

The experimental campaign was conducted over four rounds in just four weeks. It required the construction and characterization of fewer than 500 variants, a fraction of what traditional methods would require [15]. The workflow is a continuous, automated DBTL cycle.

Experimental Workflow Diagram

The diagram below illustrates the integrated, autonomous workflow used in this study.

Detailed Methodologies:

1. AI-Driven Design (Design Phase):

- Protocol: The initial library of 180 AtHMT variants was designed by combining predictions from the ESM-2 protein language model and the EVmutation epistasis model. This combination ensured a high-quality, diverse starting point. In subsequent rounds, a machine learning model was retrained on the collected experimental data to predict the fitness of new variants [15].

- Rationale: This approach moves beyond random mutagenesis, using computational power to intelligently navigate the vast sequence space and prioritize mutations likely to enhance the desired ethyltransferase activity [15].

2. Automated Library Construction (Build Phase):

- Protocol: A high-fidelity (HiFi) assembly-based mutagenesis method was used to construct variant libraries. This method eliminated the need for intermediate sequencing verification, enabling an uninterrupted, fully automated workflow. The iBioFAB robotic system automated all steps, including mutagenesis PCR, DNA assembly, transformation, and colony picking [15].

- Rationale: This optimized high-fidelity approach was crucial for reliability and continuity, with sequencing confirmation showing around 95% accuracy for the targeted mutations [15].

3. Robotic Screening (Test Phase):

- Protocol: The functional screening assay was automated on the iBioFAB. This involved preparing crude cell lysates from the expressed variants, followed by a plate-based enzyme activity assay. The assay was designed to quantitatively measure the ethyltransferase activity of each variant, which served as its fitness score [15].

- Rationale: Automation provides high throughput, reproducibility, and scalability, generating the consistent, quantitative data required for effective machine learning [15] [31].

4. Machine Learning Analysis (Learn Phase):

- Protocol: The assay data from each cycle was fed back into the machine learning model. The model was retrained to learn the complex sequence-activity relationships, improving its predictive power for the next design cycle [15].

- Rationale: This closed-loop learning process allows the system to rapidly converge on high-performing variants, efficiently exploring the fitness landscape with minimal experimental effort [15].
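Raw absorbance or fluorescence varies from plate to plate, which makes it hard to compare across rounds before it reaches the Learn phase. A common approach, assumed here rather than taken from the study, expresses each variant relative to the wild-type and blank wells on the same plate. Wild type then scores about 1.0 and the blank scores 0.0. `normalize_plate` is a hypothetical helper.

```python
from statistics import mean


def normalize_plate(reads, wt_wells, blank_wells):
    """Convert raw plate-reader signals into fitness relative to wild type.

    reads: dict well -> raw signal for one plate.
    fitness = (signal - mean blank) / (mean wild type - mean blank).
    Control wells are excluded from the returned dict.
    """
    blank = mean(reads[w] for w in blank_wells)
    wt = mean(reads[w] for w in wt_wells) - blank
    if wt <= 0:
        raise ValueError("wild-type signal does not exceed blank; plate cannot be normalized")
    controls = set(wt_wells) | set(blank_wells)
    return {w: (s - blank) / wt for w, s in reads.items() if w not in controls}
```

Values from this helper can be log-transformed before they are used as regression targets. Log-transforming keeps fold changes of the same size symmetric around wild type.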

Performance Results and Comparative Analysis

The autonomous platform successfully engineered an improved AtHMT variant that met both engineering goals. The table below summarizes the key quantitative outcomes.

Table 1: Performance Metrics of Engineered AtHMT

| Metric | Wild-Type AtHMT (Baseline) | Engineered AtHMT Variant | Fold Improvement |
|---|---|---|---|
| Substrate Preference (Ethyl I vs. Methyl I) | 1x | 90x | 90-fold [15] |
| Ethyltransferase Activity | 1x | 16x | 16-fold [15] |
| Engineering Timeline | N/A | 4 Rounds / 4 Weeks | N/A [15] |
| Variants Screened | N/A | < 500 | N/A [15] |

To contextualize this achievement, it is helpful to compare the autonomous AI-driven platform against other established enzyme engineering methods.

Table 2: Engineering Platform Comparison

| Method / Platform | Key Features | Typical Throughput | Pros | Cons |
|---|---|---|---|---|
| Traditional Directed Evolution [32] | Random mutagenesis & high-throughput screening | High (10⁴ - 10⁶) | Well-established; no prior structural knowledge needed | Labor-intensive; can hit evolutionary dead ends; limited by screening capacity |
| Physics-Based Rational Design [32] | Uses molecular mechanics & quantum mechanics | Low (10¹ - 10²) | Provides atomic-level mechanistic insights | Computationally expensive; requires expert knowledge & high-quality structures |
| AI-Powered Autonomous Platform (This Study) [15] | Integrates AI/LLMs with robotic biofoundry | Medium (10² - 10³ variants/cycle) | Extremely fast and efficient; minimal human intervention; generalizable | High initial infrastructure cost; requires robust, automatable assays |

These results show that the autonomous platform offers a compelling balance of speed and efficiency. It screened orders of magnitude fewer variants than traditional directed evolution typically requires, while achieving specific, high-level engineering objectives within four weeks [15] [32].

Key Research Reagents and Solutions

The following table details essential reagents and tools used in this case study, which are also broadly applicable in the field of AI-driven enzyme engineering.

Table 3: Research Reagent Solutions for AI-Driven Enzyme Engineering

| Reagent / Tool | Function in the Experiment | Research Application |
|---|---|---|
| S-adenosyl-L-methionine (SAM) [15] [33] | Native methyl donor cofactor for methyltransferases. | Essential for studying and assaying methyltransferase enzyme activity. |