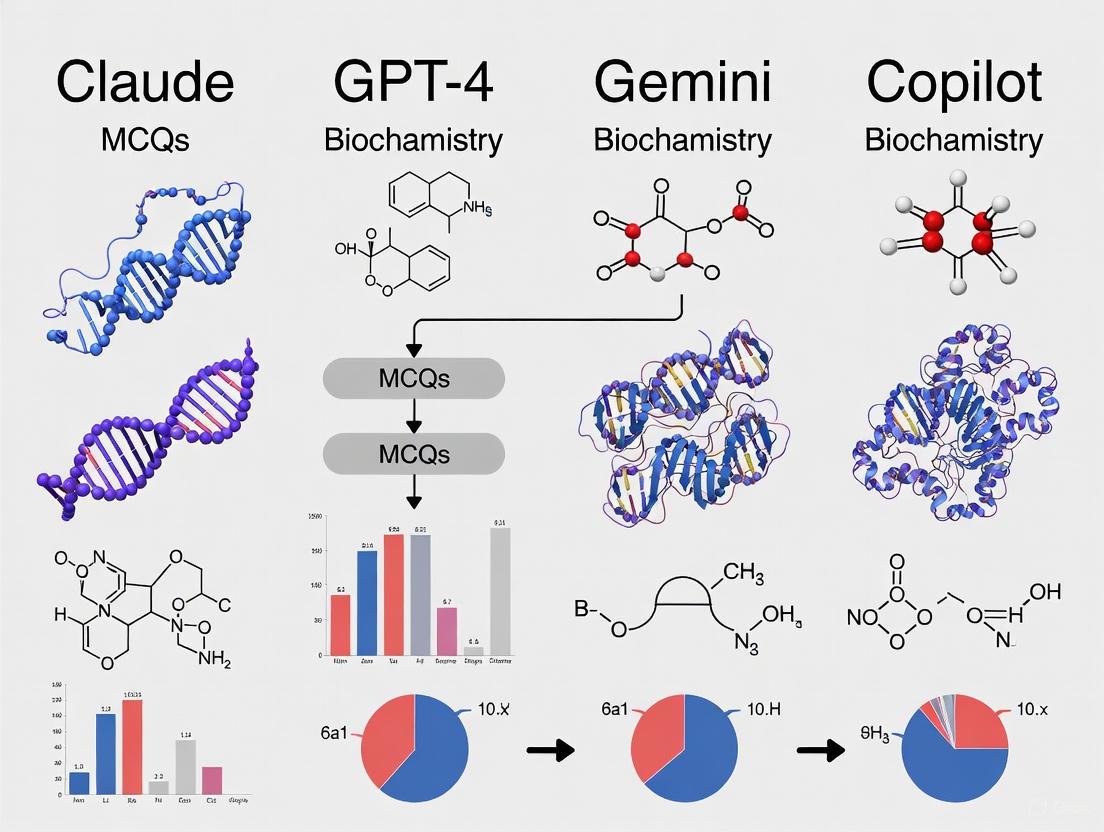

Comparative Analysis of Claude, GPT-4, Gemini, and Copilot on Biochemistry MCQs: Performance, Pitfalls, and Potential for Biomedical Research


Abstract

This article provides a comprehensive evaluation of four leading large language models (LLMs), namely Claude 3.5 Sonnet, GPT-4, Gemini 1.5 Flash, and Microsoft Copilot, in answering biochemistry multiple-choice questions (MCQs). Written for researchers, scientists, and drug development professionals, it covers the foundational capabilities, methodological applications, and optimization strategies for these AI tools. Drawing on recent comparative studies, it compares their performance against medical student benchmarks and examines topic-specific strengths and weaknesses. The analysis reveals a clear performance hierarchy, with Claude leading in accuracy, and highlights critical limitations and future directions for integrating LLMs into biomedical research and education workflows.

The Rise of AI in Biochemistry: Understanding LLM Capabilities and Core Strengths

Large language models (LLMs) are increasingly used in medical and biochemical education as tools for knowledge assessment and learning support. This guide provides a detailed, evidence-based comparison of four leading LLMs (Claude, GPT-4, Gemini, and Copilot), focusing specifically on their performance on biochemistry multiple-choice questions (MCQs). Recent research shows significant performance variation among these models, with Claude 3.5 Sonnet emerging as the top performer (92.5% accuracy) on USMLE-style biochemistry questions, ahead of both the medical student average and the other AI models [1] [2].

The integration of artificial intelligence into medical education represents a paradigm shift in how students access information and validate knowledge. As LLMs become increasingly sophisticated, understanding their respective strengths and limitations in specialized domains like biochemistry is essential for educators, researchers, and healthcare professionals. This analysis examines the comparative performance of major LLM platforms using rigorous experimental data, providing actionable insights for their effective implementation in educational contexts.

Performance Comparison Tables

Table 1: Comparative performance of LLMs on 200 biochemistry MCQs (USMLE-style)

| AI Model | Developer | Accuracy (%) | Ranking |

|---|---|---|---|

| Claude 3.5 Sonnet | Anthropic | 92.5% | 1 |

| GPT-4 | OpenAI | 85.0% | 2 |

| Gemini 1.5 Flash | Google | 78.5% | 3 |

| Copilot | Microsoft | 64.0% | 4 |

Source: Mavrych et al., 2025 [1] [2]

Performance by Biochemistry Topic Area

Table 2: Topic-wise performance analysis (% accuracy)

| Biochemistry Topic | Claude 3.5 | GPT-4 | Gemini | Copilot |

|---|---|---|---|---|

| Eicosanoids | 100% | 100% | 100% | 100% |

| Bioenergetics & Electron Transport Chain | 96.4% | 96.4% | 96.4% | 96.4% |

| Ketone Bodies | 93.8% | 93.8% | 93.8% | 93.8% |

| Hexose Monophosphate Pathway | 91.7% | 91.7% | 91.7% | 91.7% |

| Amino Acid Metabolism | 89.2% | 82.5% | 76.3% | 65.8% |

| Enzyme Kinetics | 87.6% | 84.1% | 79.5% | 62.3% |

| Lipoprotein Metabolism | 85.3% | 80.2% | 75.4% | 58.9% |

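Source: Adapted from Mavrych et al., 2025 [1] [2]. The identical values in the first four rows are the mean accuracies across the four models reported for those topics (the means for all but eicosanoids carry nonzero standard deviations), so they should not be read as individual model scores.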

Comparative Performance Across Medical Disciplines

Table 3: Cross-disciplinary performance analysis (% accuracy)

| Model | Biochemistry | Cardiovascular Pharmacology | Emergency Medicine | Overall USMLE-style |

|---|---|---|---|---|

| Claude 3.5 Sonnet | 92.5% | N/A | N/A | 81.2% |

| GPT-4 | 85.0% | 87-100% (MCQs) | 84.1% | 89.3% |

| Gemini | 78.5% | 20-87% (MCQs) | 77.1% | 82.7% |

| Copilot | 64.0% | 53-100% (MCQs) | 92.2% | N/A |

Sources: Mavrych et al., 2025; Ishaq et al., 2025; Aydin et al., 2025 [1] [3] [4]

Experimental Protocols and Methodologies

Standardized Biochemistry MCQ Evaluation

The primary comparative study evaluated four LLM chatbots using 200 United States Medical Licensing Examination (USMLE)-style multiple-choice questions randomly selected from a medical biochemistry course examination database [1] [2]. The experimental protocol included:

- Question Selection: 200 scenario-based MCQs with 4 options and single correct answers, validated by two independent experts

- Topic Coverage: Questions distributed across 23 distinctive biochemistry categories including structural proteins, enzyme kinetics, metabolic pathways, and signaling mechanisms

- Exclusion Criteria: Questions with images and tables were excluded to ensure text-only processing

- Testing Protocol: Each chatbot underwent five successive attempts to answer the complete question set in August 2024

- Prompt Standardization: Identical prompt - "generate the list of correct answers for the following MCQs" - applied to all models

- Statistical Analysis: Chi-square tests used to compare results among different chatbots with statistical significance level of P<.05

This rigorous methodology ensured fair comparison across platforms while focusing specifically on biochemistry knowledge representation and reasoning capabilities.
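The chi-square comparison in the protocol above can be illustrated with a short sketch. It assumes one count of correct answers per model, taken from the accuracies in Table 1 (92.5%, 85.0%, 78.5%, and 64.0% of 200 questions), and computes the Pearson statistic by hand in plain Python. The function name and the hard-coded critical value are choices made for this illustration; the study's own analysis pipeline is not reproduced here.

```python
def pearson_chi_square(correct_counts, total_per_model):
    """Pearson chi-square statistic for a models x {correct, incorrect} table."""
    n_models = len(correct_counts)
    grand_total = n_models * total_per_model
    total_correct = sum(correct_counts)
    total_incorrect = grand_total - total_correct
    # Under the null hypothesis every model shares the pooled accuracy.
    expected_correct = total_per_model * total_correct / grand_total
    expected_incorrect = total_per_model * total_incorrect / grand_total
    statistic = 0.0
    for correct in correct_counts:
        incorrect = total_per_model - correct
        statistic += (correct - expected_correct) ** 2 / expected_correct
        statistic += (incorrect - expected_incorrect) ** 2 / expected_incorrect
    degrees_of_freedom = (n_models - 1) * (2 - 1)
    return statistic, degrees_of_freedom


# Correct answers out of 200, derived from the accuracies in Table 1.
counts = {
    "Claude 3.5 Sonnet": 185,
    "GPT-4": 170,
    "Gemini 1.5 Flash": 157,
    "Copilot": 128,
}

# Standard chi-square critical value for df = 3 at alpha = 0.05.
CRITICAL_VALUE_DF3_ALPHA_05 = 7.815

chi2, dof = pearson_chi_square(list(counts.values()), total_per_model=200)
significant = chi2 > CRITICAL_VALUE_DF3_ALPHA_05
print(f"chi2 = {chi2:.2f}, df = {dof}, significant at 0.05: {significant}")
```

A statistic above the critical value indicates that the four accuracies are unlikely to come from a single shared success rate. It does not say which pairs of models differ; that would need pairwise follow-up tests.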

Cardiovascular Pharmacology Assessment Protocol

A separate study evaluated ChatGPT-4, Copilot, and Google Gemini on cardiovascular pharmacology questions using a stratified difficulty approach [3]:

- Question Design: 45 MCQs and 30 short-answer questions across easy, intermediate, and advanced difficulty levels

- Evaluation Framework: MCQ responses scored as correct/incorrect; SAQs rated on 1-5 scale for relevance, completeness, and correctness

- Expert Validation: Three pharmacology professors with cardiovascular specialization independently rated responses

- Statistical Analysis: Two-way ANOVA used to compare accuracy scores across AI tools and difficulty levels with Bonferroni correction

Visualizing Performance Patterns

Biochemistry Topic Difficulty for LLMs - Accuracy declines as topics become more integrative: mean accuracy is perfect on eicosanoids and above 90% on bioenergetics, ketone bodies, and the hexose monophosphate pathway, while the models diverge most on topics such as amino acid and lipoprotein metabolism.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential resources for LLM evaluation in biochemical education

| Research Tool | Function | Specifications |

|---|---|---|

| USMLE-style MCQs | Standardized knowledge assessment | 200 questions, 23 biochemistry topics, expert-validated |

| Biomedical NLP Benchmarks | Performance quantification | BLURB, BLUE benchmarks for specialized domain evaluation |

| Statistical Analysis Package | Data validation and significance testing | Chi-square tests, ANOVA with Bonferroni correction, P<0.05 threshold |

| Prompt Standardization Protocol | Experimental consistency | Identical prompts across all model evaluations |

| Domain Expert Validation | Ground truth establishment | Multiple pharmacology professors with cardiovascular specialization |

| Difficulty Stratification | Cognitive level assessment | Easy, intermediate, and advanced question classification |

Key Findings and Performance Analysis

The comparative analysis reveals several critical patterns in LLM performance for biochemical education:

Claude 3.5 Sonnet's 92.5% accuracy exceeds the medical students' average of 72.8% by 19.7 percentage points; the 8.3-point margin reported in the study refers to the chatbots' average (81.1%), not to Claude alone [1] [2]. The study does not establish why Claude leads, though the result is consistent with strong handling of metabolic pathway and enzymatic process questions.

GPT-4 maintains strong performance (85.0% in biochemistry) with remarkable consistency across diverse medical domains, achieving 89.3% accuracy on comprehensive USMLE-style examinations [5]. Its robust architecture appears well-suited for integrated clinical reasoning tasks.

Gemini shows intermediate performance (78.5% in biochemistry) with significant variability across domains, excelling in some areas while demonstrating notable limitations in complex pharmacological reasoning [3].

Copilot displays the most variable performance profile, ranking last in biochemistry (64.0%) while achieving top performance in emergency medicine (92.2%) [4] [1]. This suggests highly specialized rather than generalized medical knowledge representation.

Mean accuracy across the models was perfect or near-perfect on structured biochemical topics such as eicosanoids (100%) and bioenergetics (96.4%), while scores diverged increasingly on complex, integrated topics requiring multi-step reasoning [1] [2]. This pattern highlights the continuing challenge of contextual reasoning in AI systems for specialized educational domains.

The evidence clearly demonstrates that LLMs have achieved significant capability in biochemical education, with Claude 3.5 Sonnet currently leading in biochemistry-specific applications. However, performance variability across domains and question types indicates that model selection should be guided by specific educational objectives rather than presumed general superiority.

For biochemistry education and assessment applications that demand high accuracy on complex metabolic pathways, Claude 3.5 Sonnet was the strongest performer in the data reviewed here. For broader medical education spanning multiple disciplines, GPT-4 showed the most consistent performance. These tools should be viewed as complementary educational resources rather than replacements for traditional learning methodologies, with each deployment matched to its specific educational context and continuously validated against domain expertise.

Transformer-based models, introduced in the seminal 2017 paper "Attention is All You Need," have fundamentally reshaped the artificial intelligence landscape [6]. Originally developed for sequence-to-sequence tasks in natural language processing (NLP), their core self-attention mechanism allows for parallel processing of sequential data and superior capture of long-range dependencies compared to previous architectures like recurrent neural networks (RNNs) and convolutional neural networks (CNNs) [7] [6]. This architectural advantage has enabled transformers to transcend their original domain, achieving state-of-the-art performance across diverse scientific fields, from computational biology and medicine to time series forecasting and recommendation systems [7] [8].

This guide provides an objective comparison of transformer-based architectures and their performance against traditional alternatives in key scientific applications. It places particular emphasis on the context of biochemical research, framing the discussion around recent empirical findings on large language models (LLMs). The analysis synthesizes experimental data, detailed methodologies, and practical resources to inform researchers, scientists, and drug development professionals in their selection and implementation of these powerful AI tools.

Performance Comparison in Scientific Applications

Transformer-based models demonstrate versatile and superior performance across a range of scientific tasks. The quantitative results below facilitate a direct comparison with traditional machine learning and deep learning approaches.

Table 1: Performance of Transformer vs. Traditional Models in Classification and Forecasting

| Application Domain | Task | Best Performing Model | Key Metric | Performance | Traditional Model Benchmark |

|---|---|---|---|---|---|

| Breast Cancer Pathology [9] | Binary Classification | ConvNeXT (CNN) & UNI (Transformer) | AUC | 0.999 | Multiple CNNs & Transformers |

| Breast Cancer Pathology [9] | Eight-Class Classification | UNI (Transformer) | Accuracy | 95.5% | Multiple CNNs & Transformers |

| Career Satisfaction Prediction [10] | Classification | BERT (Transformer) | Accuracy | 98% | 80-85% (SVM, LR, RF, GRU) |

| Personalized Movie Recommendation [11] | Rating Prediction | MBT4R (Transformer) | RMSE | 0.62 | Higher (DT, KNN, RF, SVD, GRU) |

Table 2: LLM Performance on Biochemistry MCQ Examination (n=200 questions) [12]

| Large Language Model | Developer | Accuracy | Comparative Performance |

|---|---|---|---|

| Claude 3.5 Sonnet | Anthropic | 92.5% | 19.7 percentage points above the medical student average (72.8%) |

| GPT-4 | OpenAI | 85.0% | 12.2 percentage points above the medical student average |

| Gemini 1.5 Flash | Google | 78.5% | 5.7 percentage points above the medical student average |

| Copilot | Microsoft | 64.0% | 8.8 percentage points below the medical student average |

Detailed Experimental Protocols

Biochemistry MCQ Evaluation

A comparative study, conducted in August 2024 and published in 2025, evaluated the performance of advanced LLMs against medical students on a biochemistry examination [12].

- Objective: To conduct a comprehensive analysis comparing the performance of LLM chatbots (Claude, GPT-4, Gemini, Copilot) against the academic results of medical students in a medical biochemistry course.

- Question Bank: The study utilized 200 United States Medical Licensing Examination (USMLE)-style multiple-choice questions (MCQs) selected from a course exam database. The questions encompassed various complexity levels and were distributed across 23 distinctive topics. Questions containing tables and images were excluded.

- Model Testing: Each chatbot made five successive attempts to answer the full set of 200 questions in August 2024. The results were evaluated based on accuracy.

- Statistical Analysis: Data analysis was performed using Statistica 13.5.0.17. Given the binary nature of the data (correct/incorrect), the chi-square test was used to compare results among the different chatbots, with a statistical significance level of P < .05.

Computational Pathology Model Evaluation

A 2025 study trained and evaluated 14 deep learning models, including both CNN-based and Transformer-based architectures, on breast cancer pathology images from the BreakHis v1 dataset [9].

- Objective: To assess model performance in breast cancer diagnosis using key evaluation metrics, including accuracy, specificity, recall (sensitivity), F1-score, Cohen's Kappa coefficient, and the area under the ROC curve (AUC).

- Models: The study evaluated 14 models: AlexNet, VGG16, InceptionV3, ResNet50, DenseNet121, MobileNetV2, ResNeXt, RegNet, EfficientNet_B0, ConvNeXT, ViT, DINOV2, UNI, and GigaPath.

- Tasks: Models were evaluated on two tasks:

  - Binary Classification: Distinguishing between benign and malignant tissues.

  - Eight-Class Classification: Identifying specific breast cancer tissue subtypes.

- Foundation Model Fine-Tuning: The study also measured the zero-shot performance of foundation models such as UNI, then evaluated the same models after simple fine-tuning on the target task.
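Most of the evaluation metrics named in the objective above follow directly from a binary confusion matrix. The sketch below shows the standard formulas for accuracy, recall, specificity, F1-score, and Cohen's Kappa. The counts are hypothetical and do not come from the study, and AUC is omitted because it needs per-sample scores rather than counts. The function performs no zero-division checks, which a real evaluation script would need.

```python
def binary_metrics(tp, fp, tn, fn):
    """Common diagnostic metrics from binary confusion-matrix counts."""
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    recall = tp / (tp + fn)            # sensitivity
    specificity = tn / (tn + fp)
    precision = tp / (tp + fp)
    f1 = 2 * precision * recall / (precision + recall)
    # Cohen's kappa: observed agreement corrected for chance agreement.
    p_observed = accuracy
    p_chance = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / total ** 2
    kappa = (p_observed - p_chance) / (1 - p_chance)
    return {
        "accuracy": accuracy,
        "recall": recall,
        "specificity": specificity,
        "f1": f1,
        "kappa": kappa,
    }


# Hypothetical counts for a benign-vs-malignant classifier (illustration only).
metrics = binary_metrics(tp=40, fp=5, tn=45, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```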

Architectural Workflows and Signaling Pathways

The self-attention mechanism is the foundational component of the Transformer architecture. The following diagram illustrates the core workflow for processing sequential data, such as text or time-series information.

Self-Attention Data Flow
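The self-attention data flow can be sketched in plain Python as scaled dot-product attention. As a simplifying assumption, the example uses the token vectors directly as queries, keys, and values. Real transformers apply learned projection matrices and several attention heads, which are omitted here. The function names and the toy input are illustrative only.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with identity projections (Q = K = V)."""
    dim = len(tokens[0])
    scale = math.sqrt(dim)
    outputs, weights = [], []
    for query in tokens:
        # Similarity of this token to every token in the sequence.
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in tokens]
        row = softmax(scores)
        weights.append(row)
        # Each output is a weighted average of all value vectors.
        outputs.append(
            [sum(w * value[j] for w, value in zip(row, tokens)) for j in range(dim)]
        )
    return outputs, weights


# Toy sequence of three 2-dimensional token vectors (illustration only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Because every token's scores are computed independently of the others, the loop over queries could run in parallel, which is the property that distinguishes attention from recurrent processing.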

The application of transformers in scientific domains often involves hybrid architectures. The diagram below outlines a typical workflow for a transformer-based predictive model in a scientific context, such as classifying medical images or predicting career success from behavioral traits.

Scientific Model Pipeline

The Scientist's Toolkit: Research Reagent Solutions

For researchers seeking to implement or evaluate transformer-based models, the following table details essential computational "reagents" and their functions.

Table 3: Essential Tools for Transformer-Based Research

| Research Reagent | Category | Primary Function |

|---|---|---|

| Pre-trained Models (e.g., BERT, ViT, UNI) | Model Architecture | Provides a foundational model pre-trained on vast datasets, which can be fine-tuned for specific scientific tasks, reducing training time and data requirements [9] [6]. |

| FlashAttention | Optimization | A low-level GPU optimization that speeds up attention computation and reduces memory footprint, enabling work with longer sequences [8]. |

| Positional Encoding | Algorithmic Component | Injects information about the relative or absolute position of tokens in a sequence, crucial as the self-attention mechanism is otherwise permutation-invariant [13] [6]. |

| Layer Normalization | Training Stabilization | Stabilizes the activations and gradients throughout the network layers, facilitating faster and more stable training of deep transformer models [13]. |

| Fine-Tuning Dataset | Data | A smaller, domain-specific dataset (e.g., pathology images, biochemical questions) used to adapt a pre-trained model to a specialized scientific task [9] [12]. |
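As an illustration of the positional encoding entry in the table, the sketch below implements the fixed sinusoidal scheme from the original Transformer paper, in which each pair of dimensions shares one frequency and uses sine on the even index and cosine on the odd index. Many current models use learned or relative encodings instead, so this is one possible instance rather than the method of any model discussed here. The function name is illustrative.

```python
import math


def sinusoidal_positional_encoding(num_positions, dim):
    """Sinusoidal encodings: sine on even indices, cosine on odd indices."""
    table = []
    for pos in range(num_positions):
        row = []
        for i in range(dim):
            # Paired dimensions (2k, 2k + 1) share the exponent 2k / dim.
            angle = pos / (10000 ** ((i - i % 2) / dim))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


encoding = sinusoidal_positional_encoding(num_positions=4, dim=4)
for pos, row in enumerate(encoding):
    print(pos, [round(x, 4) for x in row])
```

Adding these vectors to the token embeddings gives each position a distinct signature, so the attention layers can distinguish the same token appearing at different places in the sequence.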

The evaluation of Large Language Models (LLMs) using United States Medical Licensing Examination (USMLE)-style Biochemistry Multiple Choice Questions (MCQs) provides a critical benchmark for assessing their capability in a specialized medical domain. Comparative studies reveal significant performance variations among leading models, offering researchers and professionals actionable insights into their respective strengths and weaknesses.

Table 1: Comparative Performance of LLMs on Biochemistry MCQs

| Large Language Model | Developer | Accuracy on Biochemistry MCQs | Key Strengths |

|---|---|---|---|

| Claude 3.5 Sonnet | Anthropic | 92.5% (185/200) [2] [14] [12] | Highest overall accuracy in biochemistry |

| GPT-4 | OpenAI | 85.0% (170/200) [2] [14] [12] | Strong all-around performer |

| Gemini 1.5 Flash | Google | 78.5% (157/200) [2] [14] [12] | - |

| Copilot | Microsoft | 64.0% (128/200) [2] [14] [12] | - |

Beyond overall scores, performance varies considerably across specific biochemistry topics. Models demonstrate particular proficiency in structured, pathway-based concepts.

Table 2: Model Performance by Biochemistry Topic

| Biochemistry Topic | Average Model Accuracy | Performance Notes |

|---|---|---|

| Eicosanoids | 100% [2] [14] | All models achieved perfect scores |

| Bioenergetics & Electron Transport Chain | 96.4% [2] [14] | High performance on energy metabolism |

| Ketone Bodies | 93.8% [2] [14] | Strong grasp of metabolic states |

| Hexose Monophosphate Pathway | 91.7% [2] [14] | Effective understanding of metabolic pathways |

Experimental Protocols for LLM Evaluation

The validity of LLM benchmarking relies on standardized, reproducible experimental protocols. The methodology outlined below, drawn from recent comparative studies, ensures a consistent and fair evaluation framework.

Question Bank Curation

- Source and Selection: Studies utilized 200 USMLE-style MCQs randomly selected from medical biochemistry course examination databases [2] [14] [12]. These questions encompassed various complexity levels and were distributed across 23 distinct topics within biochemistry.

- Content Validation: All questions were validated by two independent subject matter experts to ensure clarity and appropriateness [2].

- Exclusion Criteria: To ensure compatibility with text-based LLM interfaces, questions containing images, tables, or charts were systematically excluded from the evaluation dataset [5] [2].

Model Configuration and Prompting

- Model Versions: Evaluations tested publicly available versions: Claude 3.5 Sonnet, GPT-4-1106, Gemini 1.5 Flash, and Copilot [2] [14].

- Standardized Prompting: Each model received an identical, structured prompt: "generate the list of correct answers for the following MCQs" [2]. This approach minimizes variability introduced by prompt engineering.

- Parameter Settings: To ensure reproducibility, researchers conducted multiple independent runs (e.g., five successive attempts) for each model [2] [12]. The temperature parameter was typically set to 0.0 where possible to maximize deterministic outputs [5].
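The standardized prompting step can be sketched as a small helper that prepends the quoted instruction to a numbered list of text-only questions. Only the instruction string comes from the study. The question dictionary layout, the function name, and the placeholder item are assumptions made for this illustration, and no model API is called.

```python
STANDARD_PROMPT = "generate the list of correct answers for the following MCQs"


def build_prompt(questions):
    """Prepend the standardized instruction to a numbered question list."""
    blocks = []
    for number, question in enumerate(questions, start=1):
        options = "\n".join(
            f"{label}. {text}" for label, text in question["options"].items()
        )
        blocks.append(f"{number}. {question['stem']}\n{options}")
    return STANDARD_PROMPT + "\n\n" + "\n\n".join(blocks)


# Hypothetical placeholder item; the study's questions are not reproduced here.
sample_questions = [
    {
        "stem": "<question stem 1>",
        "options": {"A": "<option>", "B": "<option>", "C": "<option>", "D": "<option>"},
    },
]

prompt = build_prompt(sample_questions)
print(prompt)
```

Sending the same string to every model, unchanged across repeated attempts, keeps input phrasing from becoming a source of variation between models.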

Data Analysis and Validation

- Accuracy Calculation: The primary metric was the percentage of correctly answered questions from the total set [2] [14].

- Statistical Testing: Researchers employed Pearson chi-square tests to determine if performance differences between models were statistically significant, with a P-value of less than 0.05 considered significant [2] [14].

- Expert Verification: AI-generated answers were compared against established answer keys and reviewed by domain experts to confirm accuracy [2].
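The accuracy calculation can be sketched as a comparison of each attempt against an answer key, followed by an average across attempts. The key, the five attempts, and the function names below are hypothetical. The source does not state how the five attempts were aggregated, so the simple mean used here is an assumption.

```python
def score_run(answers, answer_key):
    """Fraction of questions in one run whose answer matches the key."""
    if len(answers) != len(answer_key):
        raise ValueError("answers and answer_key must have the same length")
    correct = sum(1 for given, expected in zip(answers, answer_key) if given == expected)
    return correct / len(answer_key)


def summarize_runs(runs, answer_key):
    """Per-run accuracies and their mean across repeated attempts."""
    accuracies = [score_run(run, answer_key) for run in runs]
    return accuracies, sum(accuracies) / len(accuracies)


# Hypothetical five-question key and five attempts (illustration only).
answer_key = ["A", "C", "B", "D", "A"]
runs = [
    ["A", "C", "B", "D", "A"],
    ["A", "C", "B", "D", "B"],
    ["A", "C", "D", "D", "A"],
    ["A", "C", "B", "D", "A"],
    ["A", "B", "B", "D", "A"],
]

accuracies, mean_accuracy = summarize_runs(runs, answer_key)
print(accuracies, round(mean_accuracy, 2))
```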

Diagram 1: Experimental workflow for benchmarking LLMs on biochemistry MCQs.

The Scientist's Toolkit: Key Research Reagents

The experimental benchmark for evaluating LLMs in biochemistry relies on a defined set of "research reagents" – essential components that ensure a valid, reproducible, and insightful comparison.

Table 3: Essential Reagents for LLM Biochemistry Evaluation

| Research Reagent | Function in the Experiment |

|---|---|

| USMLE-style Biochemistry MCQ Bank | Serves as the standardized stimulus to probe model knowledge and reasoning; ensures clinical relevance [2] [14]. |

| Standardized Prompt Protocol | Acts as the consistent "reaction condition" to eliminate variability in model responses caused by input phrasing [2]. |

| Predefined Scoring Rubric | Functions as the objective measurement tool, defining a correct/incorrect binary outcome for unambiguous performance tracking [2] [15]. |

| Statistical Analysis Package | The "analytical instrument" (e.g., Chi-square test) to determine if observed performance differences are statistically significant and not due to chance [5] [2] [14]. |

Interpreting the Benchmark Results

The collective data from these controlled evaluations leads to several key conclusions relevant for researchers and drug development professionals:

- Model Specialization: Claude 3.5 Sonnet's top performance suggests potential specialization in biochemistry, making it a prime candidate for applications in this domain [2] [14] [12].

- Performance Gap: The significant accuracy range (92.5% to 64.0%) underscores that LLMs are not interchangeable for specialized tasks [2] [14]. Model selection is critical.

- Topic-Specific Proficiency: Consistently high scores in pathway-based topics (e.g., bioenergetics) indicate that structured, logical biochemical concepts may be a current strength of LLMs [2] [14].

Diagram 2: Logical relationship defining the benchmark process from input to performance profile.

Recent advancements in artificial intelligence (AI) have ushered in a new era for medical education and assessment. Large language models (LLMs) are now demonstrating remarkable capabilities on standardized tests, often surpassing human performance in specialized medical subjects such as biochemistry. This guide provides an objective, data-driven comparison of four leading AI platforms—Claude (Anthropic), GPT-4 (OpenAI), Gemini (Google), and Copilot (Microsoft)—focusing on their performance on biochemistry multiple-choice questions (MCQs). The analysis is based on the latest published research, offering researchers, scientists, and drug development professionals a clear overview of the current landscape and the specific strengths of each model [1] [12].

Key Performance Metrics in Biochemistry

The core data for this comparison originates from a comprehensive study published in 2025, which evaluated these AI models using 200 USMLE-style biochemistry MCQs. The table below summarizes their overall performance, benchmarked against human medical students [1] [2] [12].

| AI Model (Developer) | Overall Accuracy (%) | Number of Correct Answers (Out of 200) | Performance Relative to Students |

|---|---|---|---|

| Claude (Anthropic) | 92.5% | 185 | Superior |

| GPT-4 (OpenAI) | 85.0% | 170 | Superior |

| Gemini (Google) | 78.5% | 157 | Superior |

| Copilot (Microsoft) | 64.0% | 128 | Inferior |

| Average of AI Chatbots | 81.1% | 162.2 | Superior by 8.3% |

| Medical Students | 72.8% | ~146 | Benchmark |

On average, the selected AI chatbots correctly answered 81.1% of the questions, 8.3 percentage points above the average score achieved by medical students (72.8%); the difference was statistically significant (P=.02) [1] [12].
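The same comparison can be made per model by subtracting the student average from each reported accuracy. The short sketch below does this in percentage points, using only the figures from the table above; the function and variable names are illustrative.

```python
STUDENT_AVERAGE = 72.8  # percent, as reported for medical students

model_accuracy = {
    "Claude 3.5 Sonnet": 92.5,
    "GPT-4": 85.0,
    "Gemini 1.5 Flash": 78.5,
    "Copilot": 64.0,
}


def gap_in_points(accuracy, baseline=STUDENT_AVERAGE):
    """Difference from the baseline in percentage points, rounded to one decimal."""
    return round(accuracy - baseline, 1)


gaps = {model: gap_in_points(acc) for model, acc in model_accuracy.items()}
for model, gap in gaps.items():
    direction = "above" if gap > 0 else "below"
    print(f"{model}: {abs(gap):.1f} points {direction} the student average")
```

Expressing the gaps in percentage points rather than as relative percentages avoids ambiguity, and it shows that Copilot is the only model of the four that falls below the student benchmark.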

Performance also varied considerably by topic. The following table reports the mean accuracy of the four AI models, with standard deviations, for the highest- and lowest-scoring topics [1].

| Biochemistry Topic | Mean AI Accuracy (%) | Standard Deviation (SD) |

|---|---|---|

| Eicosanoids | 100% | 0% |

| Bioenergetics & Electron Transport Chain | 96.4% | 7.2% |

| Ketone Bodies | 93.8% | 12.5% |

| Hexose Monophosphate Pathway | 91.7% | 16.7% |

| Amino Acid Metabolism | 76.0% | 17.4% |

| Nitrogen Metabolism | 72.9% | 22.2% |

| Fast and Fed State | 71.9% | 23.9% |

| Lysosomal Storage Diseases | 68.8% | 17.7% |

Comparative Performance Across Medical Disciplines

The trend of AI outperforming human benchmarks extends beyond biochemistry. Research in other medical subjects reveals a consistent pattern, though the ranking of models can vary by discipline.

- In Anatomy: A 2025 study evaluating 325 USMLE-style MCQs found that current LLMs achieved an average accuracy of 76.8%, significantly higher than the 44.4% accuracy of GPT-3.5 from the previous year. In this domain, GPT-4o led with 92.9% accuracy, followed by Claude (76.7%), Copilot (73.9%), and Gemini (63.7%) [16].

- In Cardiovascular Pharmacology: A 2025 study testing MCQs and short-answer questions (SAQs) across difficulty levels reported that ChatGPT-4 and Copilot maintained high accuracy (87-100%) on easy and intermediate MCQs. On advanced MCQs, however, accuracy fell to 53% for Copilot and 20% for Gemini. For SAQs, ChatGPT-4 demonstrated the highest overall accuracy, while Gemini's performance was markedly lower [15] [3].

Detailed Experimental Protocol

To ensure transparency and reproducibility, the methodology of the key biochemistry study is outlined below [1] [2].

1. Study Design and Question Selection

- Source: 200 USMLE-style multiple-choice questions were randomly selected from a medical biochemistry course's examination database.

- Validation: Questions were validated by two independent subject-matter experts.

- Scope: The questions encompassed various complexity levels and were distributed across 23 distinctive biochemistry topics.

- Exclusion Criteria: Questions containing tables and images were excluded from the analysis.

2. AI Models and Testing Parameters

- Models Tested: Claude 3.5 Sonnet (Anthropic), GPT-4-1106 (OpenAI), Gemini 1.5 Flash (Google), and Copilot (Microsoft).

- Testing Window: Data collection occurred over two weeks in August 2024.

- Prompting: Each chatbot was given the standardized prompt: “generate the list of correct answers for the following MCQs” followed by the set of questions.

- Repetition: The process was repeated five successive times for each model to ensure consistency and account for potential variability.

3. Data Analysis

- Performance Metric: Accuracy was calculated based on the proportion of correctly answered questions.

- Statistical Analysis: Basic statistics and chi-square tests were performed using Statistica 13.5.0.17 (TIBCO Software Inc.), with a statistical significance level of P<.05.

Visualizing High-Performance Biochemical Pathways

The AI models demonstrated exceptional accuracy on topics involving key metabolic pathways. Below are simplified diagrams of two pathways where AI performance exceeded 91%.

The Scientist's Toolkit: Key Research Reagents

For researchers aiming to replicate or build upon such AI performance evaluations, the following "research reagents" or essential components are critical.

| Item | Function in Experimental Protocol |

|---|---|

| USMLE-style MCQs | Standardized assessment tool to evaluate and compare AI knowledge and reasoning capabilities against a recognized medical education benchmark [1] [16]. |

| Validated Question Bank | A pre-existing database of questions, reviewed by subject-matter experts, to ensure content validity, appropriate difficulty, and freedom from errors [1] [17]. |

| Standardized Prompt | A consistent text instruction (e.g., "generate the list of correct answers...") used to query each AI model, minimizing variability introduced by prompt engineering [1] [15]. |

| Statistical Analysis Software | Software such as Statistica or GraphPad Prism used to perform rigorous statistical tests (e.g., chi-square) to determine the significance of performance differences [1] [15]. |

| Expert Review Panel | A team of human experts (e.g., licensed pharmacologists, medical professors) required to validate questions, create model answers, and evaluate open-ended AI responses [15] [17]. |

The collective evidence from recent studies indicates that large language models have reached a level of proficiency where they can not only compete with but also surpass the average performance of medical students on standardized biochemistry tests and other specialized medical subjects. Among the models compared, Claude 3.5 Sonnet demonstrated superior performance in biochemistry, while GPT-4 consistently ranks as a top contender across diverse medical disciplines. However, performance is not uniform; it varies significantly by the specific subject matter and the complexity of the questions, with all models showing declines when faced with advanced, complex scenarios [1] [15] [16]. This underscores that AI currently serves best as a powerful complementary tool in educational and research settings, rather than a replacement for deep expert knowledge and critical validation.

The integration of Artificial Intelligence (AI) into biochemistry represents a paradigm shift, revolutionizing how researchers approach complex biological systems. From predicting molecular interactions to analyzing metabolic pathways, AI tools are dramatically enhancing research capabilities across key biochemical domains [18]. This transformation is particularly evident in the educational and research sectors, where large language models (LLMs) are increasingly utilized to navigate complex biochemical concepts and multiple-choice questions (MCQs). As biochemistry encompasses vast and intricate knowledge areas—from the precise architecture of molecular structures to the interconnected networks of metabolic pathways—the ability of different AI models to accurately interpret and reason about this information varies significantly. This guide provides an objective, data-driven comparison of four leading AI models—Claude, GPT-4, Gemini, and Copilot—specifically evaluating their performance in handling biochemistry MCQs, a common assessment format in research and educational settings.

Experimental Protocols and Methodologies

Study Design and Question Selection

To ensure a comprehensive evaluation of AI model capabilities, researchers have employed rigorous experimental designs. In a study conducted in 2024, investigators utilized 200 United States Medical Licensing Examination (USMLE)-style multiple-choice questions specifically focused on medical biochemistry [2]. These questions encompassed various complexity levels and were distributed across 23 distinctive biochemical topics, including structural proteins and associated diseases, bioenergetics and electron transport chain, enzyme kinetics, metabolic pathways (e.g., glycolysis, glycogen metabolism, hexose monophosphate pathway), cholesterol metabolism, eicosanoids, fatty acid metabolism, and nitrogen metabolism [2]. The question selection process involved random selection from established medical biochemistry course examination databases, with validation by independent subject matter experts to ensure content accuracy and appropriate difficulty distribution [2].

To maintain methodological consistency, questions containing tables and images were excluded from the evaluation, focusing exclusively on text-based questions to eliminate potential confounding variables related to multimodal interpretation capabilities [2]. This approach allowed for a purified assessment of each model's biochemical knowledge retention and application skills without the complication of visual processing elements.

AI Model Testing Protocol

The testing protocol involved administering the identical set of 200 biochemistry MCQs to four advanced AI chatbots: Claude 3.5 Sonnet (Anthropic), GPT-4-1106 (OpenAI), Gemini 1.5 Flash (Google), and Copilot (Microsoft) [2]. Each model was provided with the prompt: "generate the list of correct answers for the following MCQs" [2]. To ensure statistical reliability and account for potential response variability, researchers conducted five successive attempts with each AI model using the same question set in August 2024 [2].

The experimental setup maintained consistency across all testing instances, using the same phrasing and question order for each model. Performance was evaluated based solely on answer accuracy, with responses compared against established correct answers. This systematic approach allowed for direct comparison of model capabilities while minimizing the influence of external variables on performance outcomes [2].
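
The scoring step described above can be sketched in a few lines of Python. This is a minimal illustration, not the study's actual pipeline: the function names and the toy answer key are hypothetical, and it assumes each attempt yields one answer letter per question in the same order as the key.

```python
from statistics import mean


def accuracy(answer_key: list[str], responses: list[str]) -> float:
    """Fraction of responses matching the answer key, position by position."""
    if len(answer_key) != len(responses):
        raise ValueError("answer key and responses must have the same length")
    correct = sum(
        k.strip().upper() == r.strip().upper()
        for k, r in zip(answer_key, responses)
    )
    return correct / len(answer_key)


def score_attempts(answer_key: list[str], attempts: list[list[str]]):
    """Return the accuracy of each attempt and the mean across attempts."""
    per_attempt = [accuracy(answer_key, attempt) for attempt in attempts]
    return per_attempt, mean(per_attempt)


# Hypothetical toy data: 4 questions, 2 attempts by one model.
key = ["A", "C", "B", "D"]
attempts = [["A", "C", "B", "A"], ["a", "C", "D", "A"]]
per_attempt, avg = score_attempts(key, attempts)
print(per_attempt, avg)
```

Averaging over repeated attempts, as in the five-attempt protocol, gives a more stable estimate than a single run because chatbot responses can vary between identical queries.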

Comparative Performance Analysis

The aggregate results from comprehensive testing reveal significant performance variations among the four AI models when handling biochemistry MCQs. Claude demonstrated superior performance, correctly answering 92.5% (185/200) of questions [2]. GPT-4 followed with 85% (170/200) accuracy, while Gemini achieved 78.5% (157/200) correct responses [2]. Copilot trailed the group with 64% (128/200) accuracy [2]. Collectively, the selected chatbots correctly answered an average of 81.1% of biochemistry questions, surpassing human medical student performance by 8.3% (P=.02) [2].

Table 1: Overall Performance on Biochemistry MCQs

| AI Model | Correct Answers | Accuracy (%) | Performance Ranking |

|---|---|---|---|

| Claude | 185/200 | 92.5% | 1 |

| GPT-4 | 170/200 | 85.0% | 2 |

| Gemini | 157/200 | 78.5% | 3 |

| Copilot | 128/200 | 64.0% | 4 |

| Average | 162.5/200 | 81.1% | - |
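
The accuracy column follows directly from the correct-answer counts, which can be checked with a short calculation. The sketch below uses only the counts in Table 1; the variable names are illustrative. Note that the unweighted mean of the four listed counts is 160/200 (80.0%), slightly below the 81.1% average reported in the study [2]. The reported figure may have been aggregated differently (for example, across the five attempts per model); the source text does not specify.

```python
# Correct-answer counts from Table 1 (out of 200 questions each).
correct_counts = {"Claude": 185, "GPT-4": 170, "Gemini": 157, "Copilot": 128}
TOTAL_QUESTIONS = 200

# Per-model accuracy in percent.
accuracies = {
    model: 100 * count / TOTAL_QUESTIONS
    for model, count in correct_counts.items()
}

# Unweighted mean across the four models.
mean_correct = sum(correct_counts.values()) / len(correct_counts)
mean_accuracy = 100 * mean_correct / TOTAL_QUESTIONS

for model, acc in accuracies.items():
    print(f"{model}: {acc:.1f}%")
print(f"Mean: {mean_correct:.1f}/{TOTAL_QUESTIONS} = {mean_accuracy:.1f}%")
```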

These findings only partly align with similar research in cardiovascular pharmacology. There, ChatGPT-4 demonstrated the highest accuracy on both MCQs and short-answer questions across all difficulty levels, but Copilot ranked second and Google Gemini showed significant limitations in handling complex medical content, reversing the Gemini-Copilot order observed here [3].

Performance by Biochemical Topic

The AI models demonstrated variable performance across different biochemical domains, excelling in some areas while showing limitations in others. The chatbots collectively achieved their highest accuracy in four specific topics: eicosanoids (mean 100%, SD 0%), bioenergetics and electron transport chain (mean 96.4%, SD 7.2%), hexose monophosphate pathway (mean 91.7%, SD 16.7%), and ketone bodies (mean 93.8%, SD 12.5%) [2]. This pattern suggests that AI models may particularly excel in biochemical domains characterized by systematic pathways and well-defined metabolic processes where training data is likely more comprehensive and consistent.

Table 2: AI Performance Across Key Biochemical Topics

| Biochemical Topic | Average Accuracy (%) | Standard Deviation | Top Performing Model |

|---|---|---|---|

| Eicosanoids | 100.0% | 0.0% | All models |

| Bioenergetics & ETC | 96.4% | 7.2% | Claude |

| Ketone Bodies | 93.8% | 12.5% | Claude |

| Hexose Monophosphate Pathway | 91.7% | 16.7% | Claude |

| Cholesterol Metabolism | Data not specified | Data not specified | Data not specified |

| Amino Acid Metabolism | Data not specified | Data not specified | Data not specified |

| Nitrogen Metabolism | Data not specified | Data not specified | Data not specified |

The statistically significant association between the answers of all four chatbots (P<.001 to P<.04) as indicated by Pearson chi-square testing suggests that certain biochemical question types present consistent challenges across AI platforms, while others are more universally mastered [2]. This performance pattern highlights how the structural complexity of biochemical knowledge influences AI model accuracy, with systematically organized information yielding better outcomes than topics requiring more nuanced contextual understanding.
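
To make the chi-square reasoning concrete, the sketch below computes a Pearson chi-square test of association for one pair of models from a 2x2 table of correct/incorrect answers. The cell counts are hypothetical (the study's per-question data are not given here), and the helper name is illustrative. For one degree of freedom the p-value has the closed form erfc(sqrt(chi2 / 2)), so only the standard library is needed.

```python
import math


def chi_square_2x2(a: int, b: int, c: int, d: int) -> tuple[float, float]:
    """Pearson chi-square (no continuity correction) for the table
    [[a, b], [c, d]]. Returns (statistic, p_value) with df = 1."""
    n = a + b + c + d
    row1, row2, col1, col2 = a + b, c + d, a + c, b + d
    if 0 in (row1, row2, col1, col2):
        raise ValueError("every row and column total must be positive")
    stat = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # Survival function of the chi-square distribution with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


# Hypothetical counts over 200 questions:
# rows = model A correct / incorrect, columns = model B correct / incorrect.
stat, p_value = chi_square_2x2(160, 25, 10, 5)
print(f"chi2 = {stat:.3f}, p = {p_value:.4f}")
```

A small p-value here means the two models' successes and failures are not independent, which is the sense in which some questions are hard for several chatbots at once.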

Advanced AI Applications in Biochemical Research

Molecular Structure Prediction and Analysis

Beyond educational applications, AI-driven tools are revolutionizing fundamental biochemical research, particularly in protein structure prediction. Tools like AlphaFold have achieved exceptional accuracy in predicting protein folding from amino acid sequences, addressing a longstanding challenge in structural biology [18]. These systems use deep learning techniques to model protein folding based on amino acid sequences, enabling researchers to predict structures of proteins that are difficult to study experimentally [19]. The implications for drug discovery are substantial, as accurate protein structure prediction facilitates more precise drug targeting and development.

Advanced systems like the Integrated Biosynthetic Inference Suite (IBIS) employ Transformer-based models to generate high-quality embeddings for individual enzymes, biosynthetic domains, and metabolic pathways [20]. These embedded representations enable rapid, large-scale comparisons of metabolic proteins and pathways, surpassing the capabilities of conventional methodologies [20]. Such AI-driven contextualization of enzyme function within numeric space accelerates the processing and comparison of genomic data, revealing encoded metabolic functions that traditional bioinformatic tools might overlook [20].
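
The idea of comparing enzymes in "numeric space" can be illustrated with cosine similarity between embedding vectors. This is a generic sketch only: the three-dimensional vectors and enzyme labels below are made up for illustration, and nothing here reflects the actual IBIS models, embedding dimensions, or API.

```python
import math


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("vectors must be non-zero")
    return dot / (norm_u * norm_v)


def most_similar(query: list[float], embeddings: dict[str, list[float]]) -> str:
    """Name of the stored embedding closest to the query vector."""
    return max(embeddings, key=lambda name: cosine_similarity(query, embeddings[name]))


# Hypothetical toy embeddings (real embeddings have hundreds of dimensions).
reference = {
    "glucokinase": [0.8, 0.2, 0.1],
    "citrate_synthase": [0.1, 0.9, 0.2],
}
hexokinase_like = [0.9, 0.1, 0.0]
print(most_similar(hexokinase_like, reference))
```

Because each comparison is a single dot product, this kind of lookup scales to large collections far more easily than pairwise sequence alignment.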

Metabolic Pathway Analysis and Integration

AI technologies are dramatically advancing the analysis of complex metabolic systems. Machine learning techniques are enhancing our understanding of metabolic pathways by predicting missing enzymes and metabolites, enabling the design of synthetic biological systems for applications in biofuel production and biopharmaceutical development [18]. The IBIS framework exemplifies this approach by integrating both primary and specialized metabolism within a knowledge graph, eliminating artificial dichotomies and highlighting interrelationships between metabolic pathways [20].

Knowledge graphs provide an effective framework for modeling relationships uncovered by comparative genomic studies, enabling efficient information retrieval, pattern discovery, and advanced reasoning [20]. This approach offers particular value for metabolic research, where heterogeneous and dynamic data must be harmonized to uncover insights into metabolic pathways and their genomic encodings [20]. The integration of multi-omics data (genomics, proteomics, metabolomics) using AI algorithms helps uncover complex biological interactions and biochemical underpinnings of diseases [18].

Visualization of AI Performance in Biochemical Domains

[Figure: AI Performance in Biochemical Domains]

Table 3: Research Reagent Solutions for AI Biochemistry Applications

| Tool/Resource | Function | Application Context |

|---|---|---|

| AlphaFold | Protein structure prediction | Molecular modeling & drug discovery [18] |

| IBIS (Integrated Biosynthetic Inference Suite) | Metabolic pathway analysis & enzyme annotation | Bacterial metabolism studies [20] |

| DeepVariant | Genomic variant identification | DNA sequencing & personalized medicine [19] |

| DeepECTransformer | Enzyme Commission number prediction | Enzyme classification & function prediction [20] |

| MultiverSeg | Medical image segmentation | Biomedical image analysis in clinical research [21] |

| H2O AutoML | Automated machine learning workflow | Clinical biomarker analysis [22] |

| SHAP Analysis | Model interpretability & feature importance | Explaining AI predictions in clinical diagnostics [22] |

| Knowledge Graphs | Data integration & relationship mapping | Metabolic pathway interrelation studies [20] |

The comparative analysis of AI models for biochemistry applications reveals a rapidly evolving landscape with significant implications for research and education. Claude's superior performance (92.5% accuracy) in biochemistry MCQs positions it as a potentially valuable tool for educational support and preliminary research inquiries [2]. However, the variable performance across biochemical topics suggests that researchers should consider domain-specific strengths when selecting AI tools for particular applications.

The expanding capabilities of AI systems in structural prediction (AlphaFold), metabolic analysis (IBIS), and diagnostic applications (ML-based biomarker prediction) demonstrate how artificial intelligence is transforming biochemical research beyond educational contexts [18] [20] [22]. As these technologies continue to evolve, their integration into biochemical research workflows promises to accelerate discovery in drug development, personalized medicine, and synthetic biology.

For optimal results, researchers and educators should adopt a complementary approach to AI integration, leveraging the distinct strengths of different models while maintaining traditional verification methods. This balanced strategy will help maximize the benefits of AI assistance while mitigating limitations, ultimately advancing both biochemical education and research innovation.

Implementing AI Tools: A Practical Framework for Biochemistry MCQ Analysis

The integration of Large Language Models (LLMs) into specialized domains such as biochemistry requires rigorous evaluation to ensure their reliability and accuracy. For researchers, scientists, and drug development professionals, the selection of an appropriate LLM can significantly impact the efficiency and validity of research outcomes. This guide provides a structured framework for evaluating the performance of leading LLMs—specifically Claude, GPT-4, Gemini, and Copilot—on biochemistry multiple-choice questions (MCQs). It details the experimental design, from question selection and topic categorization to data analysis, drawing on recent comparative studies to establish robust evaluation protocols. The objective is to equip professionals with a methodological toolkit for conducting systematic LLM assessments, ensuring that model selection is driven by empirical evidence tailored to the nuanced demands of biochemical research [15] [23].

Recent empirical studies have begun to quantify the performance of various LLMs on specialized biomedical tasks. The data below summarize key findings from controlled experiments, providing a baseline for model capabilities in interpreting complex biochemical data.

Table 1: Performance of LLMs on Biochemistry and Pharmacology Questions [15] [23]

| Model / LLM | Overall MCQ Accuracy (Cardiovascular Pharmacology) | SAQ Score (1-5 Scale, Cardiovascular Pharmacology) | Accuracy in Interpreting Biochemical Laboratory Data |

|---|---|---|---|

| ChatGPT (GPT-4) | 96% (Advanced: 87%) | 4.7 ± 0.3 | Lower accuracy (Median Score: 2/5) |

| Microsoft Copilot | 84% (Advanced: 53%) | 4.5 ± 0.4 | Highest accuracy (Median Score: 5/5) |

| Google Gemini | 84% (Advanced: 20%) | 3.3 ± 1.0 | Moderate accuracy (Median Score: 3/5) |

| Claude 3 Opus | Information Not Available | Information Not Available | Information Not Available |

Note: SAQ = Short-Answer Questions. The biochemical data interpretation task involved analyzing simulated patient data including serum urea, creatinine, glucose, and lipid profiles [15] [23]. Claude's performance in specific, direct comparisons within these particular studies was not available.

Core Components of an LLM Evaluation Study Design

A robust evaluation of LLMs for biochemistry requires a carefully constructed study design. The core components ensure the assessment is scientifically valid, replicable, and provides meaningful insights for professionals in the field.

Question Selection and Topic Categorization

The foundation of a reliable evaluation is a well-defined set of questions. The selection and categorization process should be methodical and reflect the domain's complexity [15].

1. Define the Biochemical Domain and Subtopics

- Action: Start by identifying a core area of biochemistry, such as Cardiovascular Pharmacology or Metabolic Pathways.

- Rationale: A focused domain allows for a deep and meaningful assessment of the LLMs' knowledge in a specific area, making the results more actionable for specialists [15].

2. Develop Questions Across Cognitive Levels

- Action: Create original questions or curate them from trusted academic sources. Categorize each question by difficulty level [15]:

- Easy: Tests simple recall of facts (e.g., definitions, basic drug applications).

- Intermediate: Requires deeper understanding, explanations, and comparisons.

- Advanced: Demands critical thinking, knowledge integration, and analysis of complex scenarios.

- Rationale: This stratification is crucial, as LLM performance can vary significantly with question difficulty. For instance, one study found a sharp decline in the performance of Copilot and Gemini on advanced questions, while ChatGPT (GPT-4) maintained high accuracy [15].

3. Validate Question Quality

- Action: All test questions and model answers should be prepared and validated by a panel of experienced pharmacology or biochemistry professors. The panel ensures clarity and that the questions accurately target the intended difficulty level [15].
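
The three steps above imply a simple data model: each question carries a topic, a difficulty label, and a validated answer, and accuracy is reported per difficulty level. A minimal sketch follows, with a hypothetical `Question` record and toy data; the field names are illustrative rather than taken from the cited study.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    qid: str
    topic: str
    difficulty: str  # "easy", "intermediate", or "advanced"
    answer: str      # validated correct option, e.g. "B"


def accuracy_by_difficulty(
    questions: list[Question], responses: dict[str, str]
) -> dict[str, float]:
    """Fraction of correct responses within each difficulty level.
    Unanswered questions count as incorrect."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for q in questions:
        totals[q.difficulty] += 1
        if responses.get(q.qid, "").strip().upper() == q.answer.upper():
            correct[q.difficulty] += 1
    return {level: correct[level] / totals[level] for level in totals}


# Hypothetical question bank and one model's responses.
bank = [
    Question("q1", "glycolysis", "easy", "A"),
    Question("q2", "glycolysis", "easy", "C"),
    Question("q3", "enzyme kinetics", "advanced", "B"),
    Question("q4", "enzyme kinetics", "advanced", "D"),
]
model_responses = {"q1": "A", "q2": "c", "q3": "B", "q4": "A"}
by_level = accuracy_by_difficulty(bank, model_responses)
print(by_level)
```

Reporting accuracy per level rather than as a single number is what exposes the kind of drop on advanced questions described above.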

Experimental Protocol for LLM Evaluation

A standardized protocol is essential to ensure a fair and consistent comparison between different LLMs. The following workflow outlines the key steps, from preparation to analysis.

[Diagram: LLM Evaluation Workflow]

The experimental protocol can be broken down into four distinct phases [15]:

Phase 1: Study Preparation. This initial phase involves defining the scope of the evaluation. Researchers must select the specific LLMs to be tested (e.g., Claude 3 Opus, GPT-4, Gemini, Copilot) and prepare the question set. The questions must be rigorously developed and validated by subject matter experts to ensure they are clear and appropriately categorized by difficulty [15].

Phase 2: Data Collection. To ensure consistency and minimize bias, the same set of questions is input into each LLM. A critical aspect of this phase is using only a single prompt per test without any follow-up questions or additional context. This approach standardizes the interaction and simulates a one-shot query, which is common in real-world use cases. All responses are meticulously recorded for subsequent analysis [15].

Phase 3: Expert Evaluation. The generated answers are then anonymized to prevent reviewer bias. A panel of at least three licensed and independent subject matter experts (e.g., pharmacology professors) reviews each response. They rate the answers based on a predefined scoring system. For short-answer questions, a 1-5 Likert scale is often used [15]:

- Score 5 (Excellent): Comprehensive understanding, no errors.

- Score 4 (Good): Solid understanding, minor errors.

- Score 3 (Satisfactory): Addresses key points but lacks depth, some errors.

- Score 2 (Below Average): Incomplete, notable errors.

- Score 1 (Unsatisfactory): Little to no understanding, numerous errors.

Phase 4: Data Analysis. In the final phase, the collected scores are analyzed quantitatively. For MCQs, the percentage of correct answers is calculated for each model, often broken down by difficulty level. For short-answer questions, the mean and standard deviation of the expert scores are computed. Statistical tests, such as the Friedman test with Dunn's post-hoc analysis for non-parametric data, are then employed to determine if the performance differences between the LLMs are statistically significant [15] [23].
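
The descriptive part of Phase 4 for short-answer questions can be sketched as follows. The sketch assumes that the raters' scores are averaged per question before computing the mean and sample standard deviation across questions; the source does not state how rater scores were combined, so this aggregation is an assumption. The function name and scores are hypothetical. Significance testing (Friedman, Dunn) is left to a statistics package.

```python
from statistics import mean, stdev


def summarize_scores(ratings: list[list[int]]) -> tuple[float, float]:
    """ratings[i] holds the rater scores (1-5) for question i.
    Returns (mean, sample SD) of the per-question average scores."""
    for scores in ratings:
        if not scores or any(s < 1 or s > 5 for s in scores):
            raise ValueError("each question needs at least one score in 1-5")
    per_question = [mean(scores) for scores in ratings]
    return mean(per_question), stdev(per_question)


# Hypothetical scores from three raters on three short-answer questions.
model_ratings = [[5, 5, 4], [4, 4, 4], [3, 4, 5]]
avg_score, sd_score = summarize_scores(model_ratings)
print(f"{avg_score:.2f} ± {sd_score:.2f}")
```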

The Scientist's Toolkit: Essential Reagents for LLM Evaluation

Conducting a rigorous LLM evaluation requires both methodological rigor and specific "research reagents"—the essential tools and frameworks used to measure performance.

Table 2: Key Research Reagent Solutions for LLM Evaluation [15] [24] [25]

| Research Reagent | Type | Function in Evaluation |

|---|---|---|

| Custom Biochemistry MCQ Bank | Dataset | Provides the ground truth and specific tasks for testing domain-specific knowledge and reasoning [15]. |

| MMLU (Massive Multitask Language Understanding) Benchmark | Benchmark | A general benchmark that tests broad knowledge and problem-solving abilities across 57 subjects, useful for establishing a baseline [24] [25]. |

| Human Expert Panel | Evaluation Method | Provides nuanced, qualitative assessment of LLM outputs for criteria like factuality, coherence, and completeness, serving as the gold standard [15] [26]. |

| LLM-as-a-Judge (e.g., G-Eval) | Evaluation Method | Uses a powerful LLM to automatically evaluate other LLM outputs based on natural language rubrics, offering a scalable alternative to human evaluation [24] [27]. |

| Statistical Analysis Software (e.g., SPSS, GraphPad Prism) | Tool | Used to perform statistical tests (e.g., ANOVA, Friedman test) to determine the significance of performance differences between models [15] [23]. |

| Semantic Similarity Metrics (e.g., BERTScore) | Metric | Evaluates the semantic similarity between an LLM's generated text and a reference answer, going beyond simple word overlap [27] [26]. |

A methodical study design for LLM evaluation, centered on deliberate question selection and rigorous topic categorization, is paramount for assessing the true capabilities of models like Claude, GPT-4, Gemini, and Copilot in biochemistry. The experimental data reveals that performance is not uniform and can vary significantly with task difficulty and type. By adhering to a structured protocol—encompassing careful question development, controlled data collection, blinded expert evaluation, and robust statistical analysis—researchers and drug development professionals can generate reliable, actionable evidence. This evidence-based approach ensures that the selection of an LLM is not based on brand recognition alone, but on a validated understanding of its performance in the complex and critical domain of biochemistry.

Prompt Engineering Strategies for Optimal Biochemistry Question Response

The integration of large language models (LLMs) into biochemical research represents a paradigm shift in how scientists access and process complex information. For researchers and drug development professionals, these tools offer the potential to rapidly retrieve specialized knowledge, from metabolic pathway details to pharmacodynamic principles. However, their performance varies significantly across different biochemical domains, necessitating strategic prompt engineering to optimize outputs. Recent comparative studies reveal that advanced LLMs including Claude (Anthropic), GPT-4 (OpenAI), Gemini (Google), and Copilot (Microsoft) demonstrate distinctive capabilities and limitations when handling biochemistry multiple-choice questions (MCQs), with performance directly influenced by prompt construction and domain specificity [2] [15].

Evidence from rigorous evaluations indicates that on average, these selected chatbots correctly answer 81.1% (SD 12.8%) of biochemistry questions, surpassing medical students' performance by 8.3% (P=.02) [2]. This performance advantage, however, masks significant variation between models and across biochemical subdisciplines, highlighting the critical importance of model selection and prompt engineering for research applications. This guide provides evidence-based strategies for maximizing LLM performance in biochemistry contexts through optimized prompt engineering, supported by comparative experimental data and methodological protocols.

Comparative Performance Analysis of Leading LLMs

Aggregate Performance Metrics

Comprehensive benchmarking studies provide crucial insights into the relative strengths of major LLMs in biochemistry domains. A 2024 study evaluating performance on 200 USMLE-style biochemistry MCQs revealed a clear performance hierarchy, with Claude demonstrating superior capabilities in this specialized domain [2].

Table 1: Overall Performance on Biochemistry MCQs (n=200 questions)

| AI Model | Developer | Correct Answers | Accuracy (%) |

|---|---|---|---|

| Claude 3.5 Sonnet | Anthropic | 185/200 | 92.5% |

| GPT-4-1106 | OpenAI | 170/200 | 85.0% |

| Gemini 1.5 Flash | Google | 157/200 | 78.5% |

| Copilot | Microsoft | 128/200 | 64.0% |

This ranking did not carry over unchanged to other study designs. A 2025 analysis of cardiovascular pharmacology questions, which did not include Claude, placed ChatGPT-4 first (87-100% accuracy across difficulty levels) and Copilot second, while Gemini demonstrated significant limitations, particularly on advanced questions where its accuracy dropped to 20% [15]. In the biochemistry study, a Pearson chi-square test indicated a significant association between the answers of all four chatbots (P<.001 to P<.04), suggesting that the models tended to succeed and fail on the same questions [2].

Topic-Specific Performance Variations

Beyond aggregate performance, research reveals striking variations in model capabilities across biochemical subdisciplines. Certain domains consistently yielded higher accuracy across all models, suggesting areas where LLMs may provide more reliable support for researchers.

Table 2: Topic-Specific Performance Variations (Mean Accuracy)

| Biochemistry Topic | Mean Accuracy (%) | Standard Deviation | Performance Notes |

|---|---|---|---|

| Eicosanoids | 100.0% | 0% | Perfect performance across all models |

| Bioenergetics & Electron Transport Chain | 96.4% | 7.2% | High consistency in complex systems |

| Hexose Monophosphate Pathway | 91.7% | 16.7% | Moderate variation between models |

| Ketone Bodies | 93.8% | 12.5% | Strong metabolic pathway understanding |

| Advanced Cardiovascular Pharmacology | 53.0% | - | Copilot performance drop on complex topics |

| Advanced Cardiovascular Pharmacology | 20.0% | - | Gemini performance drop on complex topics |

The remarkable consistency in eicosanoid biochemistry understanding (100% accuracy across all models) contrasts sharply with performance on advanced cardiovascular pharmacology, where Gemini's accuracy plummeted to 20% on complex questions [2] [15]. This pattern suggests that systematic biochemical pathways with well-defined transformations are more reliably modeled than complex, context-dependent pharmacological applications.

Experimental Protocols and Methodologies

Benchmarking Study Designs

The comparative performance data presented in this analysis derives from rigorously designed experimental protocols implemented in recent studies. Understanding these methodologies is essential for researchers seeking to evaluate or extend these findings.

The principal biochemistry MCQ study employed 200 USMLE-style questions selected from a medical biochemistry course examination database, encompassing various complexity levels distributed across 23 distinctive topics [2]. Questions incorporating tables and images were specifically excluded to isolate text-based reasoning capabilities. Each chatbot performed five successive attempts to answer the complete question set, with responses evaluated based on accuracy. The study utilized Statistica 13.5.0.17 for basic statistical analysis, employing chi-square tests to compare results among different chatbots with a statistical significance level of P<.05 [2].

Complementary research evaluating cardiovascular pharmacology understanding implemented a different methodological approach, administering 45 MCQs and 30 short-answer questions (SAQs) across three difficulty levels (easy, intermediate, and advanced) to ChatGPT-4, Copilot, and Gemini [15]. SAQ answers were graded on a 1-5 scale for accuracy, relevance, and completeness by three pharmacology experts. This mixed-format assessment provided insights beyond simple factual recall to include reasoning and explanation capabilities.

Response Validation Protocols

To ensure rigorous evaluation, studies implemented systematic validation protocols. In the cardiovascular pharmacology study, AI-generated answers to short-answer questions were evaluated using a standardized scoring rubric [15]:

- Score 5 (Excellent): Comprehensive understanding, fully addresses all question components, demonstrates strong critical thinking, contains no errors

- Score 4 (Good): Solid understanding, addresses most question components with minor errors, includes some critical insight

- Score 3 (Satisfactory): Reasonable understanding, addresses key points but lacks depth, contains some errors

- Score 2 (Below average): Gaps in understanding, incomplete or superficial treatment, notable errors

- Score 1 (Unsatisfactory): Little to no understanding, predominantly irrelevant information, numerous errors

This structured evaluation approach enabled quantitative comparison of reasoning capabilities beyond simple factual recall, with inter-rater reliability measures ensuring scoring consistency [15].

[Diagram: AI Response Generation Workflow]

Prompt Engineering Strategies for Biochemistry Questions

Domain-Specific Prompt Formulations

Evidence from comparative studies suggests several effective prompt engineering strategies for biochemistry questions:

- Explicit Domain Specification: Prompts should explicitly reference the biochemical subsystem (e.g., "This question involves the hexose monophosphate pathway in carbohydrate metabolism") to activate relevant knowledge structures within the model [2].

- Complexity Alignment: Prompt complexity should match question difficulty—simple factual recall for basic questions versus multi-step reasoning prompts for advanced scenarios [15].

- Structured Output Requests: Specific instructions for response format (e.g., "Provide the correct answer followed by a mechanistic explanation") improve output quality, particularly for Gemini which demonstrated significant performance improvements with structured prompting [15].

- Contextual Constraints: For applied pharmacology questions, constraints such as "Consider only the primary mechanism of action" help mitigate Gemini's tendency toward irrelevant information in complex scenarios [15] [28].

These strategies directly address the performance patterns observed in benchmarking studies, particularly the marked performance decrease on advanced questions requiring integrated knowledge application.

Model-Specific Optimization Approaches

Each LLM demonstrates distinct characteristics requiring tailored prompt strategies:

- Claude Optimization: Leverage its strength in systematic pathway analysis by framing questions around multi-step biochemical transformations. Claude achieved 92.5% accuracy on biochemistry MCQs, with particular strength in metabolic pathways [2].

- GPT-4 Enhancement: Capitalize on its balanced performance across domains (85% accuracy) by employing prompts that require both factual recall and conceptual explanation [2] [15].

- Gemini Limitations Mitigation: Implement explicit constraint prompts and iterative refinement for complex questions, addressing its performance drop to 20% accuracy on advanced cardiovascular pharmacology [15].

- Copilot Context Management: Use concise, focused prompts with clear scope boundaries to optimize its 64% baseline accuracy on general biochemistry questions [2].

Essential Research Reagent Solutions

Table 3: Key Experimental Resources for LLM Biochemistry Evaluation

| Research Reagent | Function/Application | Implementation Example |

|---|---|---|

| USMLE-Style Biochemistry MCQ Bank | Standardized question source for benchmarking | 200 questions across 23 topics [2] |

| Cardiovascular Pharmacology Question Set | Specialized assessment for pharmacological reasoning | 45 MCQs + 30 SAQs across difficulty levels [15] |

| Expert Validation Panel | Objective response quality assessment | Three pharmacology professors using 1-5 scale [15] |

| Statistical Analysis Package (Statistica/GraphPad Prism) | Quantitative performance comparison | Chi-square tests, ANOVA, Bonferroni correction [2] [15] |

| GPQA-Diamond Benchmark | Graduate-level "Google-proof" assessment | 198 PhD-level science questions for advanced evaluation [29] |

For research requiring graduate-level assessment, the GPQA-Diamond benchmark provides 198 PhD-level multiple-choice questions in biology, chemistry, and physics, specifically designed to be "Google-proof" through requirements for multi-step reasoning and expert-level knowledge [29]. This resource is particularly valuable for evaluating model performance on questions that skilled non-experts with internet access answer poorly (approximately 34% accuracy) compared to PhD-level experts (approximately 65-70% accuracy) [29].

Model Selection Guide for Biochemistry Queries

The evidence from comparative studies indicates that researchers should adopt a differentiated approach to LLM utilization in biochemistry contexts, strategically matching models to question types based on demonstrated performance strengths. Claude 3.5 Sonnet emerges as the preferred choice for complex metabolic pathway analysis, having demonstrated superior performance (92.5% accuracy) on biochemistry MCQs [2]. GPT-4 provides reliable all-purpose capabilities with 85% accuracy and strong performance across domains [2] [15]. Gemini requires careful prompt engineering with explicit constraints, particularly for advanced applications where its performance decreases significantly [15]. Copilot serves best for foundational questions but demonstrates limitations on complex biochemical reasoning [2].

This performance hierarchy, validated across multiple experimental protocols, provides a strategic framework for researchers and drug development professionals seeking to integrate LLMs into their workflow. By aligning model capabilities with specific biochemical question types through targeted prompt engineering, researchers can significantly enhance the reliability and utility of AI-assisted biochemical reasoning.

This guide provides an objective, data-driven comparison of four advanced large language models (LLMs)—Claude, GPT-4, Gemini, and Copilot—for handling complex biochemical concepts, with a specific focus on performance in metabolic pathways and enzyme kinetics. Recent empirical studies demonstrate that these AI models show significant potential in biochemistry education and research, outperforming medical students on standardized examinations by an average of 8.3% [2] [12]. However, their performance varies considerably across specific biochemical domains and question types. Claude 3.5 Sonnet emerged as the top-performing model in biochemistry multiple-choice questions (MCQs), correctly answering 92.5% of questions, followed by GPT-4 (85%), Gemini (78.5%), and Copilot (64%) [2] [12]. This analysis synthesizes experimental data across multiple studies to help researchers, scientists, and drug development professionals select the most appropriate AI tools for their specific biochemical applications.

Quantitative Performance Analysis

Table 1: Overall Performance of AI Models on Biochemistry MCQs (n=200 questions)

| AI Model | Developer | Correct Answers | Accuracy (%) | Performance vs. Students |

|---|---|---|---|---|

| Claude 3.5 Sonnet | Anthropic | 185/200 | 92.5 | +19.7% |

| GPT-4 | OpenAI | 170/200 | 85.0 | +12.2% |

| Gemini 1.5 Flash | Google | 157/200 | 78.5 | +5.7% |

| Copilot | Microsoft | 128/200 | 64.0 | -8.8% |

| Average | All Chatbots | 162.5/200 | 81.1 | +8.3% |

Data compiled from a comprehensive study using USMLE-style multiple-choice questions encompassing various complexity levels across 23 biochemistry topics [2] [12]. The difference in performance between chatbots and medical students was statistically significant (P=.02).
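
The "Performance vs. Students" column can be cross-checked by subtracting each difference from the corresponding accuracy. The table does not state the students' score directly; the sketch below derives an implied baseline from the tabulated values, which is an inference rather than a figure quoted from the study.

```python
# Accuracy (%) and reported difference vs. students, taken from Table 1.
accuracy = {
    "Claude 3.5 Sonnet": 92.5,
    "GPT-4": 85.0,
    "Gemini 1.5 Flash": 78.5,
    "Copilot": 64.0,
    "Average": 81.1,
}
reported_delta = {
    "Claude 3.5 Sonnet": 19.7,
    "GPT-4": 12.2,
    "Gemini 1.5 Flash": 5.7,
    "Copilot": -8.8,
    "Average": 8.3,
}

# Student baseline implied by each row (accuracy minus difference).
implied_baseline = {
    model: round(accuracy[model] - reported_delta[model], 1)
    for model in accuracy
}
print(implied_baseline)
```

Every row implies the same baseline, so the column is internally consistent with a single student score of about 72.8%.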

Topic-Specific Performance Variations

Table 2: Performance by Biochemical Topic Area (Mean Accuracy %)

| Biochemical Topic | Claude | GPT-4 | Gemini | Copilot | Average |
|---|---|---|---|---|---|
| Eicosanoids | 100 | 100 | 100 | 100 | 100 |
| Bioenergetics & Electron Transport Chain | 100 | 96.4 | 96.4 | 92.9 | 96.4 |
| Ketone Bodies | 100 | 93.8 | 93.8 | 87.5 | 93.8 |
| Hexose Monophosphate Pathway | 100 | 91.7 | 91.7 | 83.3 | 91.7 |
| Enzymes | 94.4 | 88.9 | 83.3 | 72.2 | 84.7 |
| Glycolysis & Gluconeogenesis | 92.9 | 85.7 | 78.6 | 64.3 | 80.4 |
| Pyruvate Dehydrogenase & Krebs Cycle | 91.7 | 83.3 | 75.0 | 66.7 | 79.2 |
| Amino Acid Metabolism | 90.0 | 80.0 | 75.0 | 65.0 | 77.5 |

The chatbots performed best on systematic pathway topics, with perfect mean scores in eicosanoids and mean accuracy above 90% in bioenergetics (96.4%), ketone bodies (93.8%), and the hexose monophosphate pathway (91.7%) [2]. Mean accuracy was lower, at roughly 77% to 85%, for enzymes, glycolysis and gluconeogenesis, the Krebs cycle, and amino acid metabolism. This suggests these models are best suited to structured biochemical concepts with well-defined pathways.
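The Average column of Table 2 can be recomputed from the per-model values. The sketch below does so and ranks the topics from strongest to weakest; the data are copied from Table 2, while the variable and function names are illustrative.

```python
from statistics import mean

# Per-topic accuracy (%) copied from Table 2, in the order
# (Claude, GPT-4, Gemini, Copilot).
topic_scores = {
    "Eicosanoids": (100, 100, 100, 100),
    "Bioenergetics & Electron Transport Chain": (100, 96.4, 96.4, 92.9),
    "Ketone Bodies": (100, 93.8, 93.8, 87.5),
    "Hexose Monophosphate Pathway": (100, 91.7, 91.7, 83.3),
    "Enzymes": (94.4, 88.9, 83.3, 72.2),
    "Glycolysis & Gluconeogenesis": (92.9, 85.7, 78.6, 64.3),
    "Pyruvate Dehydrogenase & Krebs Cycle": (91.7, 83.3, 75.0, 66.7),
    "Amino Acid Metabolism": (90.0, 80.0, 75.0, 65.0),
}


def topic_averages(scores):
    """Return the mean accuracy per topic, rounded to one decimal place."""
    return {topic: round(mean(values), 1) for topic, values in scores.items()}


def ranked_topics(scores):
    """Return topic names sorted from highest to lowest mean accuracy."""
    averages = topic_averages(scores)
    return sorted(averages, key=averages.get, reverse=True)


if __name__ == "__main__":
    averages = topic_averages(topic_scores)
    for topic in ranked_topics(topic_scores):
        print(f"{topic:<42} {averages[topic]:5.1f}")
```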

Experimental Protocols and Methodologies

Core Biochemistry MCQ Study Design

The primary reference study evaluated LLM performance using 200 USMLE-style multiple-choice questions selected from a medical biochemistry course examination database [2] [12]. The experimental protocol included:

- Question Selection: 200 MCQs encompassing various complexity levels distributed across 23 distinctive biochemical topics, excluding questions with tables and images to isolate text-based reasoning capabilities.

- Model Versions: Claude 3.5 Sonnet, GPT-4-1106, Gemini 1.5 Flash, and Copilot were tested in August 2024.

- Testing Protocol: Five successive attempts per chatbot with the prompt "generate the list of correct answers for the following MCQs."

- Statistical Analysis: Data analysis performed using Statistica 13.5.0.17 with chi-square tests for comparison, employing a statistical significance level of P<.05.

- Validation: Questions were validated by two independent biochemistry experts to ensure accuracy and appropriateness.

The Pearson chi-square test indicated a statistically significant association between the answers of all four chatbots (P<.001 to P<.04), meaning that the models tended to answer the same questions correctly or incorrectly rather than erring independently [2].
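As a complementary illustration, a Pearson chi-square test can also be applied to the correct/incorrect counts in Table 1 to ask whether accuracy differs between models. This is a sketch under stated assumptions, not the study's own analysis (which used Statistica and examined the association between chatbot answers). It uses only the standard library, and `chi2_sf_dof3` is a closed-form upper-tail probability that is valid only for 3 degrees of freedom.

```python
import math


def chi_square_independence(table):
    """Pearson chi-square statistic and degrees of freedom for an r x c table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / grand_total
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof


def chi2_sf_dof3(x):
    """Upper-tail probability of the chi-square distribution with 3 degrees of freedom."""
    return math.erfc(math.sqrt(x / 2)) + math.sqrt(2 * x / math.pi) * math.exp(-x / 2)


# Rows: Claude, GPT-4, Gemini, Copilot; columns: correct, incorrect (from Table 1).
counts = [
    [185, 15],
    [170, 30],
    [157, 43],
    [128, 72],
]

if __name__ == "__main__":
    stat, dof = chi_square_independence(counts)
    p_value = chi2_sf_dof3(stat)  # valid here because dof == 3
    print(f"chi2 = {stat:.2f}, dof = {dof}, p = {p_value:.2e}")
```

Treating the four rows as independent samples is itself a simplification, because every model answered the same 200 questions; a paired test would be the stricter choice.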

Cross-Disciplinary Validation Studies

Additional studies in specialized domains provide complementary performance data:

Cardiovascular Pharmacology Assessment: A February 2025 study evaluated AI performance on 45 MCQs and 30 short-answer questions (SAQs) across easy, intermediate, and advanced difficulty levels [3]. GPT-4 demonstrated the highest accuracy (overall 4.7 ± 0.3 on a 5-point scale for SAQs), with Copilot ranking second (4.5 ± 0.4), while Gemini showed significant limitations in handling complex questions (3.3 ± 1.0) [3].
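Summaries such as 4.7 ± 0.3 are typically a mean and a standard deviation over individual expert ratings. The sketch below shows that calculation on hypothetical ratings; the values are not data from the cited study, and the use of the sample standard deviation is an assumption about how such figures are reported.

```python
from statistics import mean, stdev


def summarize_ratings(ratings):
    """Return (mean, sample standard deviation), each rounded to one decimal place."""
    return round(mean(ratings), 1), round(stdev(ratings), 1)


# Hypothetical expert ratings on a 1-5 scale; NOT data from the cited study.
example_ratings = [5, 5, 4, 5, 4, 5, 5, 4, 5, 5]

if __name__ == "__main__":
    avg, sd = summarize_ratings(example_ratings)
    print(f"{avg} ± {sd} on a 5-point scale")
```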

Clinical Application Testing: Research on chronic kidney disease dietary management found Gemini and GPT-4 significantly outperformed Copilot in personalization and guideline consistency (p = 0.0001 and p = 0.0002, respectively), though GPT-4 showed slight advantages in practicality [30].

Figure: AI Biochemistry Testing Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI Biochemistry Performance Evaluation

| Research Reagent | Function in Experimental Protocol | Specifications/Standards |
|---|---|---|
| USMLE-style MCQ Database | Primary assessment instrument for benchmarking AI performance | 200 questions minimum, covering 23 biochemical topics, validated by domain experts |
| Statistical Analysis Software | Data processing and significance testing | Statistica 13.5.0.17 or equivalent with chi-square capability for binary data |
| Biochemistry Topic Taxonomy | Classification framework for performance analysis | 23 categories minimum, including metabolic pathways, enzyme kinetics, regulatory mechanisms |
| Difficulty Stratification Protocol | Ensures comprehensive capability assessment | Easy, intermediate, and advanced question classification with expert validation |
| Cross-Model Prompt Standardization | Controls for prompt engineering variability | Identical phrasing across all models: "generate the list of correct answers for the following MCQs" |
| Clinical Guideline References | Validation standard for response accuracy | NKF-KDOQI 2020, cardiovascular pharmacology guidelines, biochemistry textbooks |

Performance Analysis by Biochemical Domain

Metabolic Pathways Proficiency

The high mean accuracy in metabolic pathway topics (eicosanoids 100%, bioenergetics 96.4%, hexose monophosphate pathway 91.7%) indicates that LLMs excel at structured biochemical systems with well-defined sequential reactions [2]. One plausible explanation is that metabolic pathways are fixed, sequential reaction series that are described consistently across the text sources these models learn from. Claude achieved perfect scores in four pathway topics (eicosanoids, bioenergetics, ketone bodies, and the hexose monophosphate pathway), although the data do not show whether this reflects any optimization specific to multi-step biochemical processes.

Complex Integration Challenges

While excelling in structured pathway analysis, all models showed relative performance declines in topics requiring complex clinical integration and multi-system reasoning. This pattern mirrors findings from cardiovascular pharmacology research, where all models demonstrated decreased performance on advanced questions requiring critical thinking, knowledge integration, and analysis of complex scenarios [3]. Within the biochemistry study, the performance gradient (Claude ≥ GPT-4 ≥ Gemini ≥ Copilot) held for every topic in Table 2, which points to general differences between the models rather than topic-specific optimization. The ordering did not carry over to other domains, however: in the cardiovascular pharmacology study Copilot ranked above Gemini [3].
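Whether the ordering Claude, GPT-4, Gemini, Copilot holds in each biochemistry topic can be checked directly against Table 2. The sketch below tests for a non-increasing sequence per row, so ties count as consistent; the data are copied from Table 2 and the helper names are illustrative.

```python
MODELS = ("Claude", "GPT-4", "Gemini", "Copilot")

# Per-topic accuracy (%) from Table 2, in the order given by MODELS.
topic_scores = {
    "Eicosanoids": (100, 100, 100, 100),
    "Bioenergetics & Electron Transport Chain": (100, 96.4, 96.4, 92.9),
    "Ketone Bodies": (100, 93.8, 93.8, 87.5),
    "Hexose Monophosphate Pathway": (100, 91.7, 91.7, 83.3),
    "Enzymes": (94.4, 88.9, 83.3, 72.2),
    "Glycolysis & Gluconeogenesis": (92.9, 85.7, 78.6, 64.3),
    "Pyruvate Dehydrogenase & Krebs Cycle": (91.7, 83.3, 75.0, 66.7),
    "Amino Acid Metabolism": (90.0, 80.0, 75.0, 65.0),
}


def follows_gradient(scores):
    """True if scores never increase when read in the order of MODELS."""
    return all(a >= b for a, b in zip(scores, scores[1:]))


def gradient_violations(table):
    """Return the topics in which a lower-ranked model beats a higher-ranked one."""
    return [topic for topic, scores in table.items() if not follows_gradient(scores)]


if __name__ == "__main__":
    violations = gradient_violations(topic_scores)
    print("Topics breaking the ordering:", violations or "none")
```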

Implications for Research and Drug Development

For researchers and drug development professionals, these findings suggest strategic implementation approaches:

- Pathway Analysis Applications: Claude's superior performance in metabolic pathways makes it particularly valuable for initial analysis of biochemical networks, drug metabolism pathways, and enzymatic systems.

- Validation Requirements: Although the models perform well, the studies consistently recommend expert validation of AI-generated biochemical information, particularly for clinical applications [30] [3].

- Specialized Tool Selection: The significant performance differences support selective implementation based on specific biochemical domains, with Claude recommended for complex metabolic pathway analysis and GPT-4 for broader biochemical concept integration.

The demonstrated capabilities of these models, particularly Claude and GPT-4, suggest they can accelerate early-stage research in drug metabolism, pathway analysis, and enzymatic mechanism elucidation, while still requiring traditional validation for definitive conclusions.

A critical challenge in applying large language models (LLMs) to specialized fields like biochemistry is their ability to process complex, non-textual data. This guide compares the capabilities of Claude, GPT-4, Gemini, and Copilot in handling images, tables, and chemical structures, with a focus on biochemistry multiple-choice question (MCQ) research.

Performance on Standardized Biochemistry MCQs

The performance of AI models varies significantly on biochemistry assessments. The following table summarizes key findings from recent comparative studies that used USMLE-style biochemistry MCQs, all of which explicitly excluded questions containing images and tables from their analysis [2].

Table 1: AI Model Performance on Text-Only Biochemistry MCQs

| AI Model | Accuracy on Biochemistry MCQs | Key Strengths in Biochemistry Topics | Study Context |
|---|---|---|---|
| Claude 3.5 Sonnet | 92.5% (185/200 questions) [2] | Highest overall accuracy [2] | Medical Biochemistry Course (2024) [2] |
| GPT-4 | 85.0% (170/200 questions) [2] | Strong all-rounder [2] | Medical Biochemistry Course (2024) [2] |
| Gemini 1.5 Flash | 78.5% (157/200 questions) [2] | Performance varies by difficulty [15] | Medical Biochemistry Course (2024) [2] |
| Microsoft Copilot | 64.0% (128/200 questions) [2] | High accuracy in lab data interpretation [23] | Medical Biochemistry Course (2024) [2] |

The models demonstrated particularly high proficiency in specific, systematic biochemistry topics, including eicosanoids (mean 100%), bioenergetics and the electron transport chain (mean 96.4%), and the hexose monophosphate pathway (mean 91.7%) [2].

Experimental Protocols for Benchmarking AI Models

To ensure reproducible and fair comparisons of LLMs in biochemistry, researchers follow standardized experimental protocols. The workflow below outlines a typical methodology for a benchmarking study.

Figure: Experimental Workflow for Benchmarking AI on Biochemistry MCQs

Detailed Methodology

The methodology can be broken down into several critical stages:

- Question Selection & Curation: Studies randomly select hundreds of validated, scenario-based MCQs from official course databases or standardized exams (e.g., USMLE) [2] [31]. A central, and often critically limiting, step is the application of exclusion criteria.

- Addressing Technical Limitations: A common and significant limitation across studies is the systematic exclusion of all questions containing images, tables, or chemical structures [2] [31] [4]. This is done because many chatbots, at the time of the studies, lacked integrated multimodal input capabilities or presented technical challenges with visual data [31]. This results in a benchmark based solely on textual reasoning.

- Administration & Data Collection: Each AI model is typically prompted to generate a list of correct answers for the entire question set. To prevent memory bias, a new chat session is often initiated for each question or for each set [2] [4]. The models tested are usually the latest generally available versions at the time, such as Claude 3.5 Sonnet, GPT-4, Gemini 1.5 Flash, and Copilot [2].

- Expert-Led Evaluation: The AI-generated answers are compared against an official answer key. For open-ended or interpretation tasks (e.g., analyzing biochemical lab data), a panel of at least two independent, licensed subject-matter experts (e.g., biochemistry professors, medical doctors) performs a blind review [23]. They often use a Likert-style scale (e.g., 1-5) to rate the accuracy, relevance, and completeness of each response [15] [23].

- Statistical Analysis: Performance is primarily measured by calculating the percentage of correctly answered questions. The binary outcome (correct/incorrect) for different models is often compared using statistical tests like the Chi-square test to determine if performance differences are significant [2] [31].
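The stages above can be sketched as a small pipeline: filter out questions with visual content, build one standardized prompt, and score the returned answers against the key. Everything here is illustrative. The `MCQ` class, its `has_visual` flag, the sample questions, and the model answers are hypothetical, and the call to an actual chatbot is deliberately left out.

```python
from dataclasses import dataclass

# Prompt wording as quoted in the study protocol.
PROMPT = "generate the list of correct answers for the following MCQs"


@dataclass(frozen=True)
class MCQ:
    qid: int
    text: str
    answer: str               # answer-key letter, e.g. "B"
    has_visual: bool = False  # hypothetical flag: image, table, or chemical structure


def text_only(questions):
    """Apply the exclusion criterion: keep questions without visual content."""
    return [q for q in questions if not q.has_visual]


def build_prompt(questions):
    """Join all questions under a single standardized instruction."""
    body = "\n\n".join(f"{q.qid}. {q.text}" for q in questions)
    return f"{PROMPT}\n\n{body}"


def score(questions, model_answers):
    """Return percent correct; missing answers count as incorrect."""
    if not questions:
        raise ValueError("no questions to score")
    correct = sum(
        1 for q in questions
        if model_answers.get(q.qid, "").strip().upper() == q.answer.upper()
    )
    return 100.0 * correct / len(questions)


# Hypothetical question set and hypothetical model output.
questions = [
    MCQ(1, "Which enzyme catalyzes the rate-limiting step of glycolysis? A) ... B) ...", "B"),
    MCQ(2, "Identify the compound shown in the structure. A) ... B) ...", "A", has_visual=True),
    MCQ(3, "Which cofactor does pyruvate dehydrogenase require? A) ... B) ...", "A"),
]
model_answers = {1: "b", 3: "C"}

if __name__ == "__main__":
    kept = text_only(questions)
    print(build_prompt(kept))
    print(f"Accuracy: {score(kept, model_answers):.1f}%")
```

Separating filtering, prompting, and scoring keeps each stage testable on its own and makes the exclusion criterion explicit rather than implicit in the question set.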

The Scientist's Toolkit: Key Research Reagents

This table details the essential "research reagents"—the AI models and evaluation frameworks—used in these comparative experiments.

Table 2: Essential Research Reagents for AI Benchmarking in Biochemistry

| Research Reagent | Function in Experiment | Specifications / Examples |
|---|---|---|
| LLM Chatbots | Primary subjects under evaluation; generate answers to MCQs. | Claude 3.5 Sonnet, GPT-4, Gemini 1.5 Flash, Microsoft Copilot [2]. |
| Validated MCQ Database | Standardized stimulus to measure model performance. | 200+ USMLE-style questions from medical biochemistry courses; Italian CINECA healthcare entrance tests [2] [31]. |
| Expert Rating Panel | Provides ground-truth validation and qualitative assessment of AI responses. | Panel of 3 licensed biochemists or physicians; uses a 5-point accuracy scale [23]. |
| Text-Only Filter | A critical control to isolate the variable of textual reasoning ability by removing unsupported data types. | Exclusion criterion that removes questions with images, tables, and chemical structures [2] [4]. |
| Statistical Software | Analyzes performance data to determine significance of results. | Statistica, IBM SPSS, GraphPad Prism; uses Chi-square and post-hoc tests [2] [15]. |

Performance Beyond Text: Emerging Capabilities and Hierarchies

While benchmarks have historically relied on text, the AI landscape is rapidly evolving. Model architectures now directly impact their potential to overcome initial technical limitations. The following diagram illustrates the fundamental architectural differences that influence multimodal capabilities.

Figure: AI Model Architectures and Multimodal Potential

This architectural divergence leads to a clear hierarchy in potential for processing biochemistry's complex data:

- Gemini 2.5 Pro: Holds the strongest potential for true multimodal integration due to its native multimodality and massive 1-million-token context window, making it theoretically suited for analyzing large datasets, research papers, or complex diagrams in a single call [32] [33].

- GPT-4 & Microsoft Copilot: Possess strong multimodal input capabilities (text + images via GPT-4V), allowing them to process visual data. Copilot enhances this with real-time web search for current information [34] [32] [4].

- Claude 3.5 Sonnet: Accepts image input alongside text but produces text-only output. Its strength lies in superior performance on text-based reasoning and a large context window for processing lengthy documents; its handling of biochemical images, tables, and chemical structures was not assessed in the text-only benchmarks above [32].