Beyond the Prediction: A Practical Guide to Validating AlphaFold Models with Experimental Data

Abstract

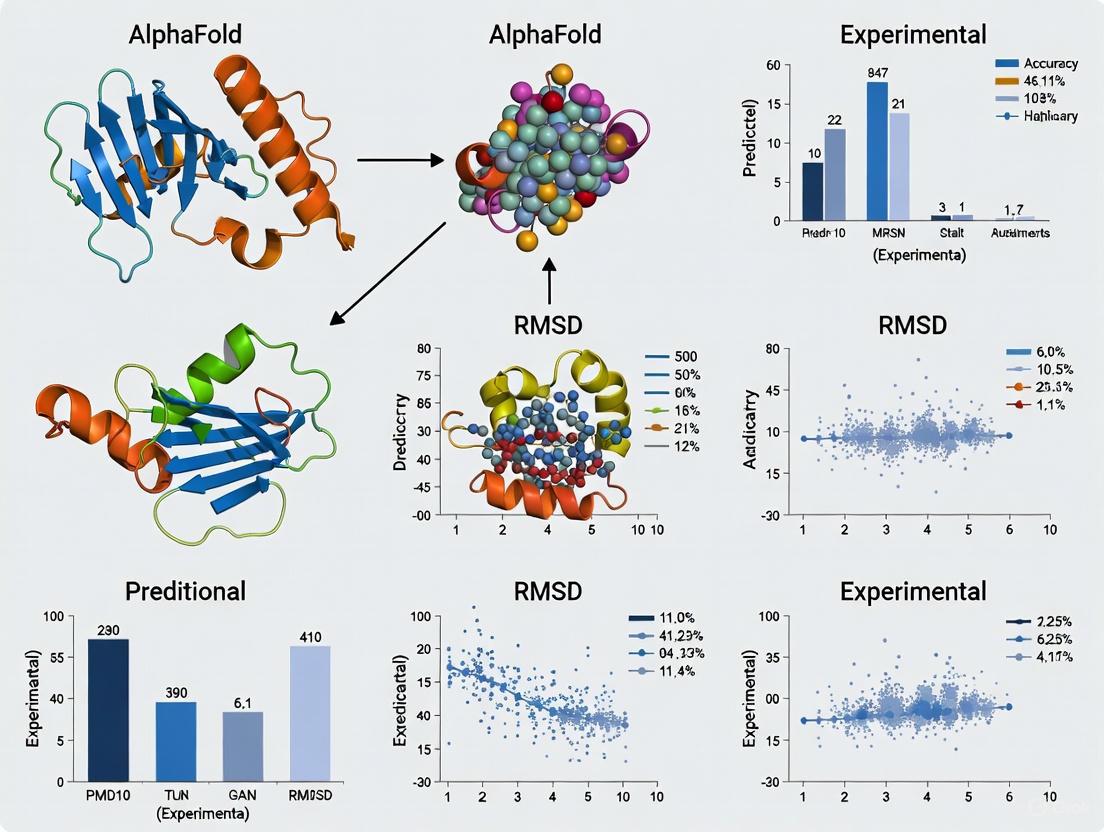

The release of AlphaFold has revolutionized structural biology, providing unprecedented access to protein structure predictions. However, as these models permeate research and drug discovery, a critical question emerges: how reliable are they? This article provides a comprehensive framework for researchers, scientists, and drug development professionals to rigorously assess the accuracy of AlphaFold predictions against experimental data. We explore the foundational principles of AlphaFold's capabilities and limitations, detail methodological applications in experimental workflows, address common challenges and optimization strategies, and present a comparative analysis of validation metrics. By synthesizing the latest validation studies, this guide empowers scientists to confidently leverage AlphaFold's strengths while recognizing scenarios where experimental validation remains indispensable.

AlphaFold's Breakthrough and Inherent Limitations: Setting Realistic Expectations

The "protein folding problem"—the challenge of predicting a protein's three-dimensional native structure solely from its amino acid sequence—has been a central focus of structural biology for decades [1]. The significance of this problem stems from the foundational principle that a protein's structure dictates its biological function [2]. For over 50 years, experimental techniques such as X-ray crystallography, Nuclear Magnetic Resonance (NMR) spectroscopy, and cryo-electron microscopy (cryo-EM) have been the primary methods for determining protein structures [3]. However, these methods are often time-consuming, expensive, and technically challenging, resulting in only a tiny fraction of the known protein universe being structurally characterized [4] [3].

The revolutionary achievement of DeepMind's AlphaFold artificial intelligence system in accurately predicting protein structures has fundamentally transformed the field [3] [1]. Its performance at the 14th Critical Assessment of Structure Prediction (CASP14) in 2020 was described as "astounding" and "transformational," marking a pivotal moment where computational prediction began to achieve accuracies competitive with experimental methods [4]. This guide provides an objective comparison of AlphaFold's performance against experimental structure determination, examining the validation data that defines its capabilities and limitations within the scientific toolkit.

AlphaFold's Predictive Performance: A Quantitative Comparison with Experimental Methods

Accuracy Metrics and Confidence Measures

AlphaFold's predictive capability is most frequently quantified by comparing its models to experimentally determined structures from the Protein Data Bank (PDB) using the Global Distance Test (GDT_TS) score, which measures the percentage of amino acid residues that fall within set distance thresholds of their experimental positions in the superimposed structures [4]. A GDT_TS above 90 is considered competitive with experimental methods [2]. In the CASP14 competition, AlphaFold 2 achieved a score above 90 for approximately two-thirds of the proteins, significantly outperforming all other methods [4].
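To make the GDT_TS definition concrete, the following minimal sketch averages the fraction of Cα atoms lying within 1, 2, 4, and 8 Å of their reference positions. It assumes the two coordinate arrays are already superimposed and matched residue by residue; the full GDT procedure searches over many superpositions to maximize each count, so this simplified version can only underestimate the official score. The function name `gdt_ts` and the example coordinates are illustrative.

```python
import numpy as np

def gdt_ts(model_ca, ref_ca, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Approximate GDT_TS (0-100) for two pre-superposed (N, 3) C-alpha arrays."""
    model_ca = np.asarray(model_ca, dtype=float)
    ref_ca = np.asarray(ref_ca, dtype=float)
    if model_ca.shape != ref_ca.shape:
        raise ValueError("coordinate arrays must have the same shape")
    # Per-residue distance between matched C-alpha atoms.
    dist = np.linalg.norm(model_ca - ref_ca, axis=1)
    # Fraction of residues within each cutoff, averaged over the cutoffs.
    fractions = [(dist <= c).mean() for c in cutoffs]
    return 100.0 * float(np.mean(fractions))

# Toy example: four residues displaced by 0.5, 1.5, 3.0 and 9.0 angstroms.
ref = np.zeros((4, 3))
model = np.array([[0.5, 0, 0], [1.5, 0, 0], [3.0, 0, 0], [9.0, 0, 0]])
print(gdt_ts(model, ref))  # 56.25
```

In the toy case the per-cutoff fractions are 0.25, 0.50, 0.75, and 0.75, which average to 56.25.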

Internally, AlphaFold provides a per-residue confidence metric called pLDDT (predicted Local Distance Difference Test). Residues with pLDDT > 90 are considered to be predicted with very high confidence, while those with scores below 50 have very low confidence [5]. Analysis shows that regions predicted with high pLDDT generally agree closely with experimental electron density maps, though notable exceptions occur even in high-confidence regions [5].

Table 1: Key Performance Metrics for AlphaFold 2

| Metric | Performance Value | Context and Comparison |
|---|---|---|
| Median Cα RMSD | 1.0 Å [5] | When compared to experimental PDB structures; reduced to 0.4 Å after correcting for domain-level distortions [5] |
| GDT_TS | >90 for ~2/3 of proteins [4] | Scores above 90 are considered comparable to low-resolution experimental structures [2] |
| Map-Model Correlation | 0.56 (mean) [5] | Substantially lower than the 0.86 mean correlation of deposited experimental models with their own electron density maps [5] |
| Inter-domain Distance Deviation | Increases to 0.7 Å for distant atoms (48-52 Å apart) [5] | Indicates systematic distortion; approximately double the deviation (0.4 Å) observed between experimental structures of the same protein crystallized differently [5] |

Limitations in Capturing Biological Complexity

While AlphaFold excels at predicting static, single-domain structures, systematic analyses reveal limitations in capturing the dynamic and complex nature of biological systems:

- Conformational Diversity: A comprehensive analysis of nuclear receptor structures found that AlphaFold 2 captures only single conformational states in homodimeric receptors where experimental structures reveal functionally important asymmetry [6].

- Ligand-Binding Sites: The same study reported that AlphaFold 2 systematically underestimates ligand-binding pocket volumes by 8.4% on average, which has direct implications for drug discovery and structure-based design [6].

- Intrinsically Disordered Regions: Approximately one-third of the human proteome, largely corresponding to intrinsically disordered regions, is poorly predicted by AlphaFold 2 [2]. These regions are crucial for cellular regulation and signaling but lack a fixed structure [2].

- Dynamic Behavior and Environmental Effects: AlphaFold does not account for ligands, covalent modifications, or environmental factors that influence protein structure and function [5]. It shows limitations in capturing the full spectrum of biologically relevant states, particularly in flexible regions [6].

Table 2: Comparative Analysis of Structural Capabilities

| Aspect | AlphaFold 2 | Experimental Methods |
|---|---|---|
| Conformational States | Typically predicts a single, ground-state conformation [6] | Can capture multiple conformations, including functionally relevant asymmetric states [6] |
| Ligand Binding Sites | Systematically underestimates pocket volumes; misses ligand-induced conformational changes [6] | Reveals precise binding pocket geometry and conformational changes upon ligand binding [6] [5] |
| Domain Flexibility | Shows distortion and domain orientation errors with median Cα RMSD of 1.0 Å [5] | Provides accurate inter-domain relationships; different crystallization conditions can reveal flexibility [5] |
| Disordered Regions | Poorly predicts intrinsically disordered regions with low confidence scores [2] | NMR can characterize structural and dynamic properties of disordered regions [2] |
| Time Resolution | Static prediction; no dynamic information [2] | Can capture kinetic intermediates and folding pathways (NMR, stopped-flow) [2] |

Experimental Protocols for AlphaFold Validation

X-ray Crystallography Validation Workflow

The most direct method for validating AlphaFold predictions involves comparison with experimental electron density maps. A 2024 study in Nature Methods established a rigorous protocol for this validation [5]:

Methodology Details:

- Researchers used 102 crystallographic electron density maps determined without reference to deposited models to eliminate bias [5].

- AlphaFold predictions were superimposed on deposited models, and their agreement with experimental density was quantified using map-model correlation coefficients [5].

- To distinguish between local errors and global distortions, researchers applied a morphing procedure to gradually deform predictions toward deposited models, quantifying the distortion field required [5].

- Results showed that AlphaFold predictions had a mean map-model correlation of 0.56, substantially lower than the 0.86 correlation of deposited models with their experimental maps [5].
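The map-model correlation used in this protocol is, in essence, a Pearson correlation between experimental density and density calculated from the model. The sketch below shows only that final step and assumes both maps have already been sampled on the same grid; computing the model density from atomic coordinates is left to crystallographic software and is not shown. The function name `map_model_cc` and the optional `mask` argument are illustrative, not part of the cited study.

```python
import numpy as np

def map_model_cc(exp_map, calc_map, mask=None):
    """Pearson correlation between two density maps sampled on the same grid."""
    exp_map = np.asarray(exp_map, dtype=float)
    calc_map = np.asarray(calc_map, dtype=float)
    if exp_map.shape != calc_map.shape:
        raise ValueError("maps must be sampled on the same grid")
    if mask is None:
        mask = np.ones(exp_map.shape, dtype=bool)  # use every grid point
    a = exp_map[mask]
    b = calc_map[mask]
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    if denom == 0:
        raise ValueError("correlation is undefined for a flat map")
    return float((a * b).sum() / denom)

# Toy check with synthetic data: a map correlates perfectly with a scaled,
# offset copy of itself, and less well once noise is added.
rng = np.random.default_rng(0)
density = rng.normal(size=(8, 8, 8))
noisy = density + rng.normal(scale=1.0, size=density.shape)
print(map_model_cc(density, 2.0 * density + 1.0))
print(map_model_cc(density, noisy))
```

Restricting the calculation with a mask around the model is a common design choice, since solvent regions would otherwise dominate the statistic.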

NMR Spectroscopy Validation Framework

NMR spectroscopy provides unique validation capabilities, particularly for assessing protein dynamics and minor conformational states:

Methodology Details:

- A 2025 preprint study developed heuristics comparing 15N-edited NOESY spectra with AlphaFold predicted structures to determine if the prediction reasonably describes the actual protein structure [7].

- Researchers established a large collection of data connecting entries across the Biological Magnetic Resonance Data Bank (BMRB), PDB, and AlphaFold Database to serve as a benchmark for hybrid methods [7].

- The method involves back-calculating expected NOESY spectra from AlphaFold models and comparing them with experimental data [7].

- A support vector machine was trained to test the consistency of NMR data with predicted structures, providing an automated validation framework [7].

- In some cases, AlphaFold 2 predictions agreed better with NMR spectral data than structures obtained from standard NMR data analysis, highlighting its potential as a validation tool for experimental models [2].

Table 3: Key Research Resources for AlphaFold and Experimental Validation

| Resource | Type | Function and Application |
|---|---|---|
| AlphaFold Protein Structure Database | Database | Provides open access to AlphaFold predictions for entire proteomes, including human [4] [8] |
| Protein Data Bank (PDB) | Database | Primary repository for experimentally determined protein structures; serves as gold standard for validation [6] [1] |
| Biological Magnetic Resonance Data Bank (BMRB) | Database | Repository for NMR spectroscopy data; enables validation of dynamics and allosteric states [7] |
| Crystallographic Electron Density Maps | Experimental Data | Unbiased experimental standard for evaluating local atomic accuracy of predictions [5] |
| 15N-edited NOESY Spectra | Experimental Data | NMR data for validating interatomic distances and detecting conformational dynamics [7] |
| pLDDT Confidence Metric | Analytical Tool | Per-residue confidence score (0-100) indicating predicted reliability; essential for interpreting models [5] |
| Multiple Sequence Alignments (MSAs) | Computational Tool | Evolutionary information used by AlphaFold to infer residue contacts; quality impacts prediction accuracy [4] |

AlphaFold 3 and Future Directions

The recent introduction of AlphaFold 3 extends capabilities beyond single protein chains to predict the structures of protein complexes with DNA, RNA, post-translational modifications, and selected ligands [4] [3]. AlphaFold 3 introduces a new "Pairformer" architecture and uses a diffusion-based approach similar to those in image-generation AI, which begins with a cloud of atoms and iteratively refines their positions [4]. Early reports indicate a minimum 50% improvement in accuracy for protein interactions with other molecules compared to existing methods [4].

Despite these advances, the fundamental relationship between prediction and experiment remains complementary. As noted in Nature Methods, AlphaFold predictions are best considered as "exceptionally useful hypotheses" that can accelerate but not replace experimental structure determination [5]. The integration of AI predictions with experimental validation creates a powerful synergy—what NMR spectroscopy researcher Dr. D. F. Hansen describes as a partnership where "NMR spectroscopy and AlphaFold 2 can collaborate to advance our comprehension of proteins" [2].

The AlphaFold revolution has fundamentally transformed structural biology, providing immediate access to predicted models for nearly the entire human proteome, although per-residue confidence varies widely. Quantitative validation against experimental data confirms that AlphaFold achieves near-atomic accuracy for well-folded domains under stable conditions, with performance competitive with medium-resolution experimental methods.

However, systematic comparisons reveal that AI predictions cannot yet capture the full complexity of protein behavior, including conformational dynamics, ligand-induced changes, and the structural heterogeneity essential for biological function. For researchers in drug development and structural biology, the most powerful approach combines the speed and coverage of AlphaFold with the precision and biological context of experimental methods to illuminate both the structure and function of the molecular machinery of life.

AlphaFold has revolutionized structural biology by providing high-accuracy protein structure predictions. Central to interpreting these models are two primary confidence metrics: the predicted local distance difference test (pLDDT) and the predicted aligned error (PAE). These scores provide complementary information about prediction reliability at different scales. The pLDDT offers per-residue local confidence estimates, while the PAE assesses global confidence in the relative positioning of different structural regions. Understanding these metrics is essential for researchers validating AlphaFold predictions against experimental data and applying these models in drug discovery and functional studies.

Understanding pLDDT: Local Confidence Metric

Definition and Interpretation

The predicted local distance difference test (pLDDT) is a per-residue measure of local confidence scaled from 0 to 100, with higher scores indicating higher confidence and typically more accurate prediction [9]. This metric estimates how well the prediction would agree with an experimental structure based on the local distance difference test Cα (lDDT-Cα), which assesses the correctness of local distances without relying on structural superposition [9]. The pLDDT score varies significantly along a protein chain, indicating which regions AlphaFold predicts with high confidence and which are potentially unreliable.

pLDDT Scoring Categories and Structural Implications

pLDDT scores are categorized into four confidence levels with distinct structural interpretations [9]:

Table 1: pLDDT Score Interpretations and Structural Correlations

| pLDDT Range | Confidence Level | Structural Interpretation | Typical Backbone Accuracy | Side Chain Accuracy |
|---|---|---|---|---|
| >90 | Very high | High accuracy in both backbone and side chains | 0.6 Å RMSD [10] | Correctly positioned [9] |
| 70-90 | Confident | Correct backbone with possible side chain errors | ~1.0 Å RMSD [10] | Potential misplacement [9] |
| 50-70 | Low | Low confidence, potentially disordered | ~2.0 Å RMSD or higher [10] | Unreliable |
| <50 | Very low | Intrinsically disordered or lacking evolutionary information | Highly unreliable | Unreliable |
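In practice, these categories can be applied directly to a downloaded model, because AlphaFold PDB files store the per-residue pLDDT in the B-factor column. The sketch below reads that column for Cα atoms and bins each residue using the thresholds from the table. The handling of the exact boundary values (90, 70, 50) is an assumption, and `demo_atom_line` is a hypothetical helper used only to build a small fixed-column example.

```python
def plddt_category(score):
    """Map a pLDDT value (0-100) to the four confidence levels in the table."""
    if score > 90:
        return "very high"
    if score >= 70:  # boundary handling is an assumption
        return "confident"
    if score >= 50:
        return "low"
    return "very low"

def read_ca_plddt(pdb_text):
    """Return {(chain, residue number): pLDDT} from C-alpha ATOM records."""
    scores = {}
    for line in pdb_text.splitlines():
        # PDB fixed columns: atom name 13-16, chain 22, residue number 23-26,
        # B-factor 61-66 (AlphaFold writes pLDDT here).
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            scores[(line[21], int(line[22:26]))] = float(line[60:66])
    return scores

def demo_atom_line(serial, resname, chain, resseq, plddt):
    """Hypothetical helper: build one fixed-column C-alpha ATOM record."""
    template = ("ATOM  {:>5d} {:<4s} {:>3s} {:1s}{:>4d}    "
                "{:8.3f}{:8.3f}{:8.3f}{:6.2f}{:6.2f}")
    return template.format(serial, "CA", resname, chain, resseq,
                           0.0, 0.0, 0.0, 1.0, plddt)

demo = "\n".join([
    demo_atom_line(1, "MET", "A", 1, 95.2),
    demo_atom_line(2, "GLY", "A", 2, 72.0),
    demo_atom_line(3, "SER", "A", 3, 31.5),
])
for (chain, resseq), score in read_ca_plddt(demo).items():
    print(chain, resseq, score, plddt_category(score))
```

For real files, a structure parser such as the one in Biopython is a more robust choice than slicing columns by hand; the manual parsing here only keeps the example dependency-free.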

Factors Influencing Low pLDDT Scores

Low pLDDT scores (<50) generally indicate one of two scenarios: naturally flexible or intrinsically disordered regions that lack a fixed structure, or regions where AlphaFold lacks sufficient evolutionary information to make a confident prediction [9]. AlphaFold assigns low pLDDT to most intrinsically disordered regions (IDRs), but it sometimes predicts the bound conformation with high confidence for IDRs that undergo binding-induced folding, as demonstrated with eukaryotic translation initiation factor 4E-binding protein 2 (4E-BP2) [9].

Understanding PAE: Global Confidence Metric

Definition and Interpretation

The predicted aligned error (PAE) is the expected positional error, in angstroms (Å), of one residue when the predicted and true structures are aligned on another residue [11] [12]. Unlike pLDDT, which measures local confidence, PAE assesses the reliability of relative orientations between different parts of the structure. The PAE plot is an N×N matrix, where N is the number of residues and both axes are residue indices; the color at point (i,j) indicates the expected position error of residue j when the structures are aligned on residue i, so the matrix is not necessarily symmetric.

Structural Insights from PAE Plots

PAE plots provide crucial information about:

- Domain boundaries: Well-defined domains typically show low PAE values within themselves but higher values between domains

- Relative domain positioning confidence: Low inter-domain PAE values indicate confident relative positioning

- Flexible regions: High PAE values often correspond to flexible linkers or disordered regions

- Complex formation: For protein complexes, PAE can indicate confidence in subunit interactions

For multi-domain proteins connected by flexible linkers, PAE plots typically show high error values between domains, reflecting their dynamic nature in solution [10]. This is particularly important for membrane proteins, where AlphaFold may position domains in ways that would clash with the membrane bilayer despite high local pLDDT scores [10].
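One simple way to turn a PAE matrix into a statement about domain packing is to average it over intra-domain and inter-domain blocks. The sketch below assumes the PAE matrix is already available as a square array and that domain boundaries are supplied by the user as 0-based, end-exclusive residue ranges; the function name `mean_pae_blocks` and the toy values are illustrative.

```python
import numpy as np

def mean_pae_blocks(pae, domains):
    """Mean PAE for every ordered pair of domains.

    pae:     (N, N) array; rows are the aligned residue, columns the scored one.
    domains: dict mapping a domain name to a (start, end) residue range,
             0-based with end exclusive.
    """
    pae = np.asarray(pae, dtype=float)
    if pae.ndim != 2 or pae.shape[0] != pae.shape[1]:
        raise ValueError("PAE must be a square matrix")
    result = {}
    for name_a, (a0, a1) in domains.items():
        for name_b, (b0, b1) in domains.items():
            result[(name_a, name_b)] = float(pae[a0:a1, b0:b1].mean())
    return result

# Toy two-domain protein: confident within domains, uncertain between them.
pae = np.full((6, 6), 20.0)
pae[:3, :3] = 2.0
pae[3:, 3:] = 2.0
blocks = mean_pae_blocks(pae, {"A": (0, 3), "B": (3, 6)})
print(blocks[("A", "A")], blocks[("A", "B")])  # 2.0 20.0
```

Low diagonal blocks combined with high off-diagonal blocks, as in the toy matrix, is the pattern expected for rigid domains joined by a flexible linker.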

Experimental Validation of Confidence Metrics

Correlation with Experimental Accuracy

Extensive validation against experimental structures confirms that pLDDT reliably predicts local accuracy. The median root mean square deviation (RMSD) between AlphaFold predictions and experimental structures is approximately 1.0 Å, compared with a 0.6 Å median RMSD between different experimental structures of the same protein [10]. In high-confidence regions (pLDDT >90), the median RMSD improves to 0.6 Å, matching experimental variability [10].

Side chain accuracy also correlates with pLDDT scores. Approximately 80% of side chains in AlphaFold models show perfect fit to experimental data, compared to 94% in experimental structures, with most errors occurring in low-confidence regions [10].
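The RMSD values quoted here are computed after optimal superposition. A standard way to do this is the Kabsch algorithm, sketched below with NumPy for two sets of matched Cα coordinates. It assumes the residues have already been paired one to one (for example by sequence alignment), which is not shown; the function name `kabsch_rmsd` is illustrative.

```python
import numpy as np

def kabsch_rmsd(mobile, target):
    """RMSD between two matched (N, 3) coordinate sets after optimal rigid fit."""
    P = np.asarray(mobile, dtype=float)
    Q = np.asarray(target, dtype=float)
    if P.shape != Q.shape:
        raise ValueError("coordinate arrays must have the same shape")
    # Remove translation by centering both sets on their centroids.
    Pc = P - P.mean(axis=0)
    Qc = Q - Q.mean(axis=0)
    # Optimal rotation from the SVD of the covariance matrix.
    U, _, Vt = np.linalg.svd(Pc.T @ Qc)
    # Guard against an improper rotation (reflection).
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    aligned = Pc @ R.T
    return float(np.sqrt(((aligned - Qc) ** 2).sum(axis=1).mean()))

# Toy check: a rotated and translated copy superposes with near-zero RMSD.
rng = np.random.default_rng(1)
coords = rng.normal(size=(10, 3))
theta = 0.7
rot_z = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                  [np.sin(theta), np.cos(theta), 0.0],
                  [0.0, 0.0, 1.0]])
moved = coords @ rot_z.T + np.array([5.0, -2.0, 1.0])
print(kabsch_rmsd(coords, moved))
```

The reflection guard matters for proteins: without it, a mirror image of the structure could be reported as a perfect match.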

Relationship to Protein Dynamics

Research indicates that pLDDT scores and PAE maps may reflect protein dynamical properties. Studies comparing molecular dynamics (MD) simulations with AlphaFold predictions found that pLDDT scores correlate with root mean square fluctuations (RMSF) from MD for structured proteins with deep multiple sequence alignments [12]. Similarly, PAE matrices show patterns comparable to distance variation matrices from MD simulations, suggesting these metrics capture aspects of native flexibility [12].

Table 2: Experimental Validation Metrics for AlphaFold Predictions

| Validation Metric | Definition | Typical AlphaFold Performance | Experimental Baseline |
|---|---|---|---|
| Global RMSD | Average distance between corresponding atoms after superposition | ~1.0 Å [10] | 0.6 Å (between experimental structures) [10] |
| High-confidence RMSD | RMSD for residues with pLDDT >90 | 0.6 Å [10] | Same as experimental baseline |
| Side Chain Accuracy | Percentage of correctly positioned side chains | 80% perfect fit [10] | 94% perfect fit [10] |
| Backbone Accuracy | Percentage of correct backbone predictions | >90% for pLDDT >70 [9] | Reference standard |

[Figure: AlphaFold confidence metrics interpretation workflow]

Methodologies for Comparative Analysis

PDBe-KB Structure Superposition Protocol

The PDBe-KB resource provides a standardized methodology for comparing AlphaFold predictions with experimental structures:

- Input Processing: Provide UniProt accession number to retrieve both AlphaFold models and experimental PDB structures for the protein [11]

- Structure Superposition: Automated superposition process clusters PDB structures by conformational states and superposes the AlphaFold model onto each cluster [11]

- Quantitative Comparison: System calculates RMSD between AlphaFold model and representative structures from each conformational state [11]

- Visualization: Integrated Mol* viewer displays superposed structures with AlphaFold models colored by pLDDT score [11]

- PAE Analysis: Interface displays AlphaFold PAE plot alongside superposed structures for integrated interpretation [11]

This protocol was applied to Calpain-2 from Rat, revealing that the AlphaFold model better matched the inactive conformation (RMSD 2.84 Å) than the active form (RMSD 4.97 Å) [11].

Domain-Specific Accuracy Assessment

For multi-domain proteins and complexes, specialized assessment protocols are essential:

- Domain Segmentation: Divide structure into functional domains based on UniProt annotations or structural clustering [13]

- Independent Alignment: Calculate RMSD for individual domains after optimal superposition [14]

- Relative Domain Positioning: Assess placement of domains relative to each other (e.g., im_fdRMSD for autoinhibited proteins) [14]

- Interface Analysis: For complexes, calculate interface-specific metrics like ipLDDT and ipTM [15]

This approach revealed that while AlphaFold accurately predicts individual domains of autoinhibited proteins, it frequently mispositions inhibitory modules relative to functional domains [14].
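The distinction between accurate domains and inaccurate domain placement can be quantified by fitting on one domain and measuring the deviation of another. The sketch below is one way to do this, in the spirit of the relative-positioning metric mentioned above but not a reproduction of the cited study's implementation. It assumes matched Cα coordinates and user-supplied residue index lists for the two domains; all names are illustrative.

```python
import numpy as np

def rigid_fit(P, Q):
    """Rotation R and translation t such that P @ R.T + t best matches Q."""
    pc, qc = P.mean(axis=0), Q.mean(axis=0)
    U, _, Vt = np.linalg.svd((P - pc).T @ (Q - qc))
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # no reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return R, qc - pc @ R.T

def domain_rmsds(model, ref, fit_idx, eval_idx):
    """Superpose on fit_idx residues; return (RMSD on fit_idx, RMSD on eval_idx)."""
    model = np.asarray(model, dtype=float)
    ref = np.asarray(ref, dtype=float)
    R, t = rigid_fit(model[fit_idx], ref[fit_idx])
    moved = model @ R.T + t  # apply the same transform to the whole model

    def rmsd(idx):
        return float(np.sqrt(((moved[idx] - ref[idx]) ** 2).sum(axis=1).mean()))

    return rmsd(fit_idx), rmsd(eval_idx)

# Toy example: domain 1 is identical, domain 2 is shifted by 3 angstroms in x.
dom1 = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0], [0, 0, 1]], dtype=float)
dom2 = dom1 + np.array([10.0, 0.0, 0.0])
ref = np.vstack([dom1, dom2])
model = np.vstack([dom1, dom2 + np.array([3.0, 0.0, 0.0])])
fit_rmsd, placed_rmsd = domain_rmsds(model, ref, [0, 1, 2, 3], [4, 5, 6, 7])
print(round(fit_rmsd, 3), round(placed_rmsd, 3))  # 0.0 3.0
```

A low value for the fitted domain together with a high value for the second domain indicates a positioning error rather than a folding error.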

Advanced Applications and Limitations

Protein Complex Assessment with ipTM and pDockQ

For protein complexes, interface-specific metrics provide enhanced assessment:

- ipTM (interface pTM): Specialized version of predicted TM-score focusing on interface regions, outperforms global pTM for complex quality assessment [15]

- pDockQ: Predicted DockQ score derived from interfacial contacts and residue quality estimates [15]

- Model Confidence: Composite score used by AlphaFold Multimer, effectively discriminates between correct and incorrect protein-protein interactions [15]

Recent benchmarking shows these interface-specific scores are more reliable for evaluating protein complex predictions compared to global scores [15].
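As an illustration of how an interface score such as pDockQ combines contacts with residue confidence, the sketch below evaluates a sigmoid of the mean interface pLDDT multiplied by the base-10 logarithm of the number of interface contacts. The functional form and the default constants are recalled from the published FoldDock fit and are stated here as assumptions; they should be verified against the original source before use. Counting interface contacts from coordinates is not shown.

```python
import math

def pdockq(mean_interface_plddt, n_interface_contacts,
           L=0.724, x0=152.611, k=0.052, b=0.018):
    """Sigmoid estimate of DockQ from interface pLDDT and contact count.

    Default constants are assumptions recalled from the published fit.
    """
    if n_interface_contacts < 1:
        return 0.0  # no interface, so no docking quality to estimate
    x = mean_interface_plddt * math.log10(n_interface_contacts)
    return L / (1.0 + math.exp(-k * (x - x0))) + b

# A confident, extensive interface scores higher than a weak, small one.
strong = pdockq(mean_interface_plddt=90.0, n_interface_contacts=100)
weak = pdockq(mean_interface_plddt=60.0, n_interface_contacts=20)
print(strong > weak)  # True
```

Because the score saturates at L + b, it is bounded well below 1 even for ideal inputs, which is worth remembering when comparing it with DockQ values computed from experimental structures.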

Conformational Diversity Limitations

AlphaFold exhibits systematic limitations in predicting proteins with large-scale conformational changes:

- Single Conformation Bias: Tends to predict single conformational states even when multiple functional states exist [13] [14]

- Autoinhibited Proteins: Struggles with accurate prediction of autoinhibited proteins, with only ~50% reproducing experimental structures within 3 Å RMSD [14]

- Domain Positioning: Accurate individual domain predictions but frequently incorrect relative positioning in multi-domain proteins [14]

- Homodimer Asymmetry: Misses functional asymmetry in homodimeric receptors where experimental structures show conformational diversity [13]

These limitations persist in AlphaFold 3, though marginal improvements are observed [14].

Research Reagent Solutions

Table 3: Essential Resources for AlphaFold-Experimental Comparative Studies

| Resource | Type | Function | Access |
|---|---|---|---|
| PDBe-KB Aggregated Views | Web Resource | Structure superposition of AlphaFold and experimental models | https://www.ebi.ac.uk/pdbe/ [11] |
| AlphaFold Protein Structure Database | Database | Repository of precomputed AlphaFold predictions | https://alphafold.ebi.ac.uk/ [13] |
| Mol* Viewer | Visualization Tool | 3D structure visualization with confidence metric mapping | Integrated in PDBe-KB [11] |
| ChimeraX | Software Platform | Advanced molecular visualization with AlphaFold integration | Downloadable software [15] |
| PICKLUSTER v.2.0 | ChimeraX Plugin | Protein complex analysis with C2Qscore assessment | Plugin installation [15] |
| CAPRI Criteria | Assessment Standard | Quality evaluation for protein-protein complexes | Community standard [15] |

pLDDT and PAE scores provide essential guidance for interpreting AlphaFold predictions, with strong experimental validation confirming their correlation with accuracy. These metrics enable researchers to identify reliable regions suitable for downstream applications while flagging uncertain areas requiring experimental validation. As AlphaFold continues to evolve, understanding these confidence metrics remains fundamental to effective integration of computational predictions with experimental structural biology in drug discovery and basic research.

While AlphaFold has revolutionized structural biology by providing highly accurate protein structure predictions, it is not a universal solution for all structural challenges. This guide systematically compares AlphaFold's performance against experimental data, highlighting key limitations in predicting dynamic protein regions, multi-chain complexes, ligand interactions, and nucleic acid structures. The analysis confirms that AlphaFold predictions serve as exceptionally useful hypotheses that require experimental validation, particularly for drug discovery applications where atomic-level precision is critical.

The development of DeepMind's AlphaFold represents a paradigm shift in structural biology, solving a 50-year-old grand challenge by predicting protein structures from amino acid sequences with unprecedented accuracy [16]. The AI system has now predicted structures for over 200 million proteins, providing broad coverage of known protein sequences [17]. However, as researchers increasingly integrate these predictions into scientific workflows, understanding their limitations has become crucial, particularly for applications in drug development and mechanistic biology.

AlphaFold's core limitation stems from its training on static structural snapshots from the Protein Data Bank (PDB), which inherently constrains its ability to model biological complexity including conformational dynamics, environmental influences, and rare states [18] [5]. This analysis provides a systematic assessment of what AlphaFold cannot predict, validated through direct comparisons with experimental data across multiple protein classes and systems.

Methodological Framework: Validating Predictions Against Experimental Data

Experimental Validation Protocols

Researchers employ multiple methodologies to assess AlphaFold prediction accuracy:

Cross-validation with crystallographic electron density maps: High-quality crystallographic maps determined without reference to deposited models serve as unbiased standards for evaluating predictions [5]. Map-model correlation coefficients quantify compatibility between predictions and experimental data.

NMR ensemble comparison: Solution NMR structures provide dynamic ensembles that highlight limitations in AlphaFold's static predictions [18]. This is particularly valuable for assessing conformational flexibility.

Cryo-EM density fitting: For large complexes, cryo-EM maps validate quaternary structure predictions and domain orientations [18] [19].

Molecular dynamics simulations: MD simulations test the stability and physical realism of predicted structures under physiological conditions [18].

Confidence Metrics and Their Interpretation

AlphaFold provides two primary confidence metrics that researchers must correctly interpret:

pLDDT (predicted Local Distance Difference Test): Per-residue confidence score (0-100) where values >90 indicate very high confidence, 70-90 confident, 50-70 low confidence, and <50 very low confidence [18] [5].

PAE (Predicted Aligned Error): Matrix evaluating relative positioning accuracy between residues, with higher values indicating lower confidence in domain orientations [18].

High pLDDT scores do not guarantee biological accuracy, particularly for regions involved in conformational changes or ligand binding [5].

Quantitative Performance Assessment: AlphaFold vs. Experimental Structures

Global Accuracy Metrics

Table 1: Overall Accuracy Comparison Between AlphaFold Predictions and Experimental Structures

| Assessment Metric | AlphaFold Performance | Experimental Structure Benchmark | Significance |
|---|---|---|---|
| Mean map-model correlation | 0.56 (after superposition) [5] | 0.86 (deposited models) [5] | Predictions show substantially lower compatibility with experimental density |
| Median Cα RMSD | 1.0 Å [5] | 0.6 Å (same protein, different crystal forms) [5] | Predictions more dissimilar than structures with different crystal contacts |
| Inter-domain distance deviation | 0.7 Å (48-52 Å range) [5] | 0.4 Å (48-52 Å range) [5] | Significant distortion in global structure prediction |
| Confident residue coverage | 36% (human proteome) [5] | N/A | Majority of human proteome lacks high-confidence prediction |

Performance Across Biological Contexts

Table 2: AlphaFold Limitations Across Protein Classes and Contexts

| Protein Class/Context | Specific Limitations | Experimental Validation |
|---|---|---|
| Multi-protein complexes | Inaccurate relative domain positioning despite high pLDDT [5] | Cryo-EM and X-ray structures reveal domain packing errors |
| Proteins with ligands/cofactors | Missing functionally relevant co-factors, prosthetic groups, ligands [18] | Experimental structures show binding-induced conformational changes |
| Nucleic acid complexes | Struggles with unusual DNA/RNA structures, single mutations [20] | NMR reveals errors in ion-coordinated RNA structures |
| Dynamic/Disordered regions | Poor prediction of conformational ensembles [18] | NMR ensembles show multiple accessible states not captured by AF2 |
| Membrane proteins | Challenges with mixed secondary structure elements [18] | Experimental structures reveal topological errors |
| Peptides (<10 residues) | Difficulty generating reliable MSAs, inaccurate structures [18] | Benchmark of 588 peptides shows poor performance on mixed structures |

Critical Limitations: What AlphaFold Cannot Predict

Protein Dynamics and Alternative States

AlphaFold predicts single, static structural snapshots rather than the conformational ensembles that characterize biologically functional proteins [18]. This limitation is particularly significant for:

- Proteins with large-scale conformational changes: AlphaFold cannot predict alternate biological states that differ from the most stable conformation [18].

- Intrinsically disordered regions: The AI consistently struggles with natively disordered proteins that lack well-defined states [18] [5].

- Rare conformational states: Transient intermediates and templating conformational conversions critical for processes like protein aggregation are not captured [21].

Experimental comparison reveals that NMR ensembles often provide more accurate representations for dynamic proteins than static AlphaFold models [18]. For example, the AF2 model of insulin deviates significantly from its experimental NMR structure, potentially due to an inability to properly orient disulfide-bond forming cysteine pairs [18].

Multi-Chain Complexes and Quaternary Structure

While AlphaFold Multimer extends capability to protein complexes, significant limitations remain:

- Inaccurate domain orientations: Even high-confidence predictions show global distortions and incorrect domain positioning [5]. Morphing AlphaFold predictions to match experimental structures reduces Cα RMSD from 1.0 Å to 0.4 Å, indicating substantial initial domain placement errors [5].

- Unreliable protein-protein interfaces: Predictions for novel protein-protein interactions often require experimental validation [19].

- Limited accuracy for large complexes: Performance decreases with increasing complex size and complexity [16].

Ligand Binding and Allosteric Regulation

AlphaFold cannot reliably predict:

- Ligand-induced conformational changes: Structures in bound versus unbound states often differ significantly [5].

- Allosteric regulation: Mechanisms involving distant regulatory sites are not captured [18].

- Cofactor binding sites: Functionally essential cofactors are typically absent from predictions [18].

Small errors in binding site geometry (1-2 Å) can be catastrophic for predicting drug binding, as chemical forces that interact at one angstrom can disappear at two [16].

Nucleic Acids and Unusual Structures

AlphaFold 3 extends capabilities to nucleic acids but shows specific weaknesses:

- Struggles with unusual DNA/RNA structures, particularly those involving single mutations that dramatically alter structure [20].

- Performance varies with ion coordination: Predictions for RNA coordinated to monovalent sodium ions often incorrectly resemble structures with divalent ions [20].

- Limited accuracy for non-canonical base pairing: Less common motifs beyond standard Watson-Crick pairing are poorly predicted [20].

The tool performs best on common structural motifs well-represented in training data but fails on rare or unusual configurations [20].

Experimental Integration Workflow

Validating AlphaFold Predictions: This workflow illustrates the essential process of testing AlphaFold models against experimental data, highlighting that both high and low-confidence predictions require experimental validation.

Research Reagent Solutions for Validation

Table 3: Essential Reagents and Tools for Experimental Validation of AlphaFold Predictions

| Reagent/Resource | Function in Validation | Application Context |
|---|---|---|
| Crystallography kits | Protein crystallization screening | High-resolution structure determination |
| Cryo-EM grids | Vitrification for single-particle analysis | Large complex structure validation |
| NMR isotopes | 15N, 13C, 2H labeling for NMR studies | Dynamic region analysis |
| SAXS instruments | Solution scattering profile measurement | Global shape and flexibility assessment |
| Cross-linkers | Distance constraint generation | Validation of spatial relationships |
| Synchrotrons | High-intensity X-ray source | High-resolution data collection |
| PDBe-KB tools | Experimental-prediction structure comparison | Automated model validation [22] |

AlphaFold represents a transformative tool that has "augmented but not replaced" experimental structure determination [16]. The technology serves best as a "hypothesis generator" that accelerates research but requires experimental validation, particularly for drug discovery applications where small structural errors can determine success or failure [16] [5].

The most effective structural biology workflow integrates AlphaFold predictions with experimental data, using the AI-generated models to guide targeted experiments rather than as definitive answers. As John Jumper, AlphaFold's lead developer, notes: "This was not the only problem in biology. It's not like we were one protein structure away from curing any diseases" [16]. Future developments focusing on conformational ensembles, environmental factors, and molecular interactions will address current limitations, but the integration of prediction and experimentation will remain the cornerstone of reliable structural biology.

The advent of advanced artificial intelligence systems for protein structure prediction, particularly AlphaFold2, has revolutionized structural biology by providing accurate three-dimensional models of proteins from their amino acid sequences alone. This breakthrough, recognized by the 2024 Nobel Prize in Chemistry for its developers, has enabled researchers worldwide to access reliable structural predictions for nearly any protein, dramatically accelerating the pace of discovery [23]. The AlphaFold database hosted by EMBL-EBI has swelled to contain more than 240 million structural predictions and has been accessed by approximately 3.3 million users across 190 countries, democratizing access to structural information [23]. However, as the scientific community has gained experience with these AI-generated models, a crucial understanding has emerged: these predictions represent exceptionally useful hypotheses rather than definitive endpoints [5]. They serve as powerful starting points for scientific investigation but require experimental validation to confirm structural details, especially those involving interactions with ligands, covalent modifications, or environmental factors not accounted for in the prediction process.

This article examines the "hypothesis paradigm" for AI-predicted protein structures through a comprehensive analysis of AlphaFold's performance against experimental data. We objectively compare AlphaFold's predictions with structures determined through X-ray crystallography, NMR spectroscopy, and cryo-electron microscopy, providing supporting experimental data and detailed methodologies. Within the broader thesis of validating protein structure predictions, we demonstrate that while AlphaFold regularly achieves remarkable accuracy, it does not replace experimental structure determination but rather accelerates and guides it [5]. For researchers, scientists, and drug development professionals, understanding the capabilities and limitations of these AI tools is essential for their effective integration into the scientific workflow.

Performance Analysis: AlphaFold vs. Experimental Methods

Accuracy Metrics and Comparative Performance

The accuracy of AlphaFold2 was conclusively demonstrated during the 14th Critical Assessment of protein Structure Prediction (CASP14), where it achieved a median backbone accuracy of 0.96 Å r.m.s.d.95 (Cα root-mean-square deviation at 95% residue coverage), greatly outperforming other methods and demonstrating accuracy competitive with experimental structures in most cases [24]. This represented a revolutionary leap forward from previous computational methods. However, comprehensive comparisons with experimental structures reveal a more nuanced picture of its capabilities and limitations.

Table 1: AlphaFold Performance Across Experimental Structure Types

| Experimental Method | Typical Agreement with AlphaFold | Key Limitations | Notable Strengths |
|---|---|---|---|
| X-ray Crystallography | Median Cα r.m.s.d. ~1.0 Å (reducible to 0.4 Å with morphing) [5] | Global distortion and domain orientation differences; local backbone/side-chain conformation variances [5] | High accuracy for well-folded domains; excellent molecular replacement templates [25] |
| NMR Spectroscopy | More accurate than NMR ensembles in ~30% of cases; comparable in most others [26] | Struggles with dynamic regions where NMR performs better (2% of cases) [26] [27] | Superior hydrogen-bond networks and static regions [26] |
| Cryo-EM | Excellent fit for medium-resolution maps (3.5 Å or better) [25] | Does not explicitly account for lipid bilayers in membrane proteins [28] | Provides atomic details for lower-resolution regions; enables unknown subunit identification [25] |
| Protein Complexes | Varies significantly; improved with specialized implementations (DeepSCFold shows 11.6% improvement over AlphaFold-Multimer) [29] | Challenging for antibody-antigen and transient interactions without clear co-evolution [29] | Simultaneous modeling of multiple chains captures interface details [28] |

When comparing AlphaFold predictions directly with experimental crystallographic electron density maps—without bias from deposited PDB models—the mean map-model correlation for AlphaFold predictions was 0.56, substantially lower than the mean map-model correlation of deposited models to the same maps (0.86) [5]. This indicates that while predictions are highly accurate, they still differ significantly from experimental data in many cases. Analysis of 102 high-quality crystal structures revealed that even high-confidence predictions (pLDDT > 90) can show global-scale differences through distortion and domain orientation, and local-scale differences in backbone and side-chain conformation [5].

Table 2: Confidence Metric Interpretation Guide

| pLDDT Score Range | Predicted Accuracy | Recommended Usage | Experimental Validation Priority |
|---|---|---|---|
| >90 | High confidence | Molecular replacement; detailed mechanistic hypotheses | Lower priority for backbone confirmation |
| 70-90 | Confident | Functional analysis; interaction site identification | Medium priority; validate side chains |
| 50-70 | Low confidence | Domain organization awareness | High priority; limited trust in atomic positions |
| <50 | Very low confidence | Possible disordered regions | Very high priority; consider alternative methods |
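
The bands in Table 2 can be applied programmatically. The following is a minimal sketch, assuming an AlphaFold-format PDB file in which the per-residue pLDDT is stored in the B-factor column, a single chain, and fixed-column formatting. The function names and the handling of boundary values (a score of exactly 90 is placed in the 70-90 band) are illustrative choices, not part of any AlphaFold tooling.

```python
from collections import Counter


def plddt_band(score: float) -> str:
    """Map a pLDDT score (0-100) to the confidence bands of Table 2."""
    if score > 90:
        return "high"
    if score >= 70:
        return "confident"
    if score >= 50:
        return "low"
    return "very low"


def ca_plddt_from_pdb(pdb_text: str) -> dict[int, float]:
    """Read per-residue pLDDT from the B-factor column of CA atoms.

    Assumes fixed-column PDB records and a single chain, so residue
    numbers are unique keys.
    """
    scores: dict[int, float] = {}
    for line in pdb_text.splitlines():
        # Columns 13-16 hold the atom name, 23-26 the residue number,
        # 61-66 the B-factor (pLDDT in AlphaFold models).
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            scores[int(line[22:26])] = float(line[60:66])
    return scores


def band_summary(scores: dict[int, float]) -> Counter:
    """Count residues falling into each confidence band."""
    return Counter(plddt_band(s) for s in scores.values())
```

A summary dominated by the "low" and "very low" bands would suggest prioritizing experimental validation for that model, in line with the last column of Table 2.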

For nuclear magnetic resonance (NMR) structures, AlphaFold's performance reveals important insights about the relationship between computational predictions and solution-state structures. A comprehensive survey of 904 human proteins with both AlphaFold and NMR structures demonstrated that AlphaFold predictions are typically more accurate than NMR ensembles, with the best NMR structures in each ensemble being of comparable accuracy to AlphaFold2 [26] [27]. In approximately 30% of cases, AlphaFold was significantly better, mainly in hydrogen-bond networks, while in only 2% of cases was NMR more accurate, primarily in dynamic regions [26]. This suggests that for most well-structured proteins, AlphaFold provides excellent models of the solution state, but for dynamic regions, NMR retains advantages.

Experimental Protocols for Validation

To objectively assess AlphaFold predictions against experimental data, researchers have developed rigorous validation protocols. The following methodologies represent current best practices for comparative analysis:

X-ray Crystallography Validation Protocol:

- Obtain crystallographic electron density maps determined without reference to deposited models to eliminate bias [5]

- Superimpose AlphaFold prediction on deposited model using Cα atoms

- Calculate map-model correlation coefficient to quantify agreement between prediction and experimental density

- Measure root-mean-square deviation (r.m.s.d.) of Cα atoms between prediction and deposited model

- Analyze local regions where pLDDT confidence scores and density map quality diverge

- Apply morphing algorithms to distinguish between global distortion and local errors [5]
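
The two quantitative steps in this protocol, the Cα r.m.s.d. and the map-model correlation, reduce to short formulas. The sketch below assumes that the prediction has already been superimposed on the deposited model and that observed and model-calculated density have been sampled on the same grid and flattened into lists. Both preparation steps are normally done with crystallographic software and are not shown; the function names are hypothetical.

```python
import math
from typing import Sequence

Coord = tuple[float, float, float]


def ca_rmsd(pred: Sequence[Coord], ref: Sequence[Coord]) -> float:
    """RMSD over paired Cα coordinates that are already superimposed."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("need two non-empty, equal-length coordinate lists")
    sq = sum((p[i] - r[i]) ** 2 for p, r in zip(pred, ref) for i in range(3))
    return math.sqrt(sq / len(pred))


def map_model_cc(observed: Sequence[float], calculated: Sequence[float]) -> float:
    """Pearson correlation between observed and model-calculated density
    values sampled at the same grid points."""
    n = len(observed)
    if n != len(calculated) or n < 2:
        raise ValueError("need two equal-length lists with at least 2 points")
    mean_o = sum(observed) / n
    mean_c = sum(calculated) / n
    cov = sum((o - mean_o) * (c - mean_c) for o, c in zip(observed, calculated))
    var_o = sum((o - mean_o) ** 2 for o in observed)
    var_c = sum((c - mean_c) ** 2 for c in calculated)
    if var_o == 0 or var_c == 0:
        raise ValueError("density values must not be constant")
    return cov / math.sqrt(var_o * var_c)
```

Computing both numbers for the prediction and for the deposited model against the same map gives the kind of side-by-side comparison reported in [5].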

NMR Solution Structure Validation Protocol:

- Apply ANSURR (Accuracy of NMR Structures Using RCI and Rigidity) analysis, which computes protein flexibility from backbone chemical shifts and compares it with flexibility derived from the structure using rigidity theory [26]

- Calculate the Spearman rank correlation coefficient and the RMSD between the two flexibility measures

- Compare correlation and RMSD scores for both NMR ensemble and AlphaFold prediction

- Identify regions where dynamics may explain discrepancies between computational and experimental structures [26]

- Validate identified dynamic regions with 15N relaxation dispersion and 1H-15N heteronuclear NOE data [26]

Cryo-EM Integration Protocol:

- Fit AlphaFold predictions into medium-resolution (3.5 Å or better) cryo-EM density maps using tools like ChimeraX or COOT [25]

- Focus initial fitting on high-confidence regions (pLDDT > 90)

- Use iterative rebuilding where the fitted structure is provided to AlphaFold as a template for improved prediction [25]

- Apply deep learning-based quality scores (e.g., DAQ) to identify and rebuild low-quality regions [25]

- For unknown densities, perform structural searches against AlphaFold Database to identify potential matches [25]

Limitations and Challenges in AI Structure Prediction

Molecular Interactions and Environmental Factors

Despite its remarkable capabilities, AlphaFold has significant limitations that reinforce its role as a hypothesis generator rather than a definitive determination method. A primary limitation is the unreliable modeling of interactions with ligands, drug molecules, DNA, RNA, and metal ions, although AlphaFold3 has made substantial progress in this area [28]. AlphaFold3 shows at least 50% better accuracy than existing methods for protein-molecule interactions, with accuracy doubling in specific cases such as protein-ligand binding [28]. However, it still does not calculate binding energies or predict kinetic rates, which limits its direct utility for drug discovery without experimental validation.

Protein dynamics and multiple conformational states represent another fundamental challenge. AlphaFold3 provides static snapshots rather than movies of molecular motion [28]. This limitation becomes particularly significant for proteins that undergo large conformational changes or exist in multiple stable states. For drug development professionals, this means that AI predictions may miss functionally relevant alternative conformations that could represent valuable therapeutic targets.

Membrane proteins, despite improvements, remain challenging for AlphaFold. The model does not explicitly account for lipid bilayers, leading to potential artifacts in transmembrane regions [28]. This is particularly problematic for critical drug targets like GPCRs and ion channels, which require careful interpretation when using computational predictions. Similarly, RNA structure prediction represents AlphaFold3's "Achilles heel," with recent evaluations showing mixed performance due to RNA's conformational flexibility and context-dependent folding [28].

Protein Complexes and Multimeric Assemblies

Predicting the structures of protein complexes remains significantly more challenging than predicting single protein monomers. While AlphaFold-Multimer and subsequent implementations have improved accuracy, specialized approaches like DeepSCFold demonstrate that there's still substantial room for improvement, showing 11.6% and 10.3% improvement in TM-score compared to AlphaFold-Multimer and AlphaFold3 respectively on CASP15 targets [29]. This enhanced performance comes from incorporating sequence-derived structure complementarity rather than relying solely on sequence-level co-evolutionary signals.

The accuracy of multimer predictions is particularly important for drug discovery, as most therapeutic targets involve complexes rather than isolated proteins. For antibody-antigen complexes—crucial for biologic drug development—AlphaFold3 has shown promising but inconsistent performance. DeepSCFold reportedly enhances the prediction success rate for antibody-antigen binding interfaces by 24.7% and 12.4% over AlphaFold-Multimer and AlphaFold3, respectively [29], suggesting that specialized implementations may be necessary for specific applications.

Diagram 1: Hypothesis-Driven Workflow for AI Structure Prediction. This workflow illustrates the iterative process of using AlphaFold predictions as initial hypotheses that require experimental validation, particularly for low-confidence regions.

For researchers leveraging AlphaFold predictions in their work, a comprehensive toolkit of computational and experimental resources is essential for proper validation and refinement. The following table details key solutions and their applications in the hypothesis-validation paradigm:

Table 3: Essential Research Reagent Solutions for Structure Validation

| Tool/Resource | Type | Primary Function | Application in Validation |
|---|---|---|---|
| AlphaFold Server | Computational | Free academic access to AlphaFold3 predictions | Initial hypothesis generation for protein-ligand complexes [28] |
| ANSURR | Computational | Measures accuracy of solution structures by comparing flexibility from chemical shifts and 3D structures [26] | Validating AlphaFold predictions against NMR data; identifying dynamic regions [26] [27] |
| ChimeraX | Computational | Molecular visualization and analysis | Fitting AlphaFold predictions into cryo-EM density maps [25] |
| PHENIX | Computational | Comprehensive crystallography software suite | Molecular replacement using AlphaFold predictions; iterative model rebuilding [25] |
| COSMIC | Experimental | Cryo-EM structure determination pipeline | Combining AlphaFold predictions with experimental density maps [25] |
| MrBUMP | Computational | Automated molecular replacement pipeline | Template search and model preparation using AlphaFold Database structures [25] |
| DeepSCFold | Computational | Protein complex structure modeling using sequence-derived complementarity | Enhanced prediction of protein-protein interactions, especially antibody-antigen complexes [29] |
| BoltzGen | Computational | Generative AI for protein binder design | Creating novel protein binders for therapeutically relevant targets [30] |

Specialized Applications and Emerging Solutions

Beyond general-purpose validation tools, specialized resources have emerged to address specific challenges in the AI structure prediction pipeline. For molecular replacement in crystallography, tools like Slice'n'Dice in CCP4 and PHENIX's process_predicted_model can split AlphaFold predictions into domains based on predicted aligned error (PAE) plots or spatial clustering, significantly improving success rates for challenging targets [25]. The Low Resolution Structure Refinement pipeline (LORESTR) has been updated to automatically fetch models from the AlphaFold Database and use them for restraints generation [25].
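
To make the idea of PAE-based domain splitting concrete, the sketch below groups residues whose mutual predicted aligned error is below a cutoff, using connected components. This is a deliberately simplified illustration and not the algorithm implemented in Slice'n'Dice or the PHENIX tool; the function name and the 5 Å default cutoff are assumptions chosen for the example.

```python
from typing import Sequence


def split_by_pae(pae: Sequence[Sequence[float]], cutoff: float = 5.0) -> list[list[int]]:
    """Group residue indices into candidate domains.

    Two residues are linked when the PAE is below `cutoff` in both
    directions; domains are the connected components of that graph.
    """
    n = len(pae)
    parent = list(range(n))

    def find(i: int) -> int:
        # Union-find lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(n):
        for j in range(i + 1, n):
            # PAE is not symmetric, so use the worse of the two directions.
            if max(pae[i][j], pae[j][i]) < cutoff:
                root_i, root_j = find(i), find(j)
                if root_i != root_j:
                    parent[root_j] = root_i

    groups: dict[int, list[int]] = {}
    for i in range(n):
        groups.setdefault(find(i), []).append(i)
    return sorted(groups.values())
```

Because single-linkage grouping can merge domains through a well-predicted linker, a production tool needs more robust clustering; the sketch only shows why low inter-domain confidence in the PAE matrix translates naturally into separate search models.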

For cryo-EM applications, an iterative procedure for model building begins by fitting an initial AlphaFold prediction into experimental density using PHENIX tooling, then using the fitted structure as a template for subsequent AlphaFold predictions that more closely match the density [25]. This iterative approach improves resulting structures beyond simple rebuilding against experimental data. Another automated solution uses a deep learning-based quality score (DAQ) to identify low-quality regions and rebuild them in a targeted fashion with AlphaFold [25].

In the rapidly evolving field of generative AI for protein design, BoltzGen represents a significant advancement as the first model capable of generating novel protein binders that are ready to enter the drug discovery pipeline [30]. Its ability to perform a variety of tasks while unifying protein design and structure prediction makes it particularly valuable for addressing "undruggable" targets that have resisted conventional approaches.

Evolving Capabilities and Research Implications

The field of AI-based protein structure prediction continues to evolve rapidly, with several clear directions for future development. The most obvious next step is the incorporation of dynamics—predicting not just structures but movements, conformational changes, and molecular breathing [28]. Better handling of cellular environments, crowding, pH effects, and realistic conditions would also improve biological relevance, as current predictions assume idealized conditions that rarely exist in living systems [28].

Integration with experimental data promises hybrid approaches that combine the best of both worlds. Using sparse experimental constraints to guide predictions could significantly enhance accuracy for challenging targets. The success of tools like DeepSCFold in capturing structural complementarity information suggests that combining physical principles with pattern recognition may yield further improvements [29]. For RNA structure prediction—currently AlphaFold3's weakest area—specialized innovations are likely to emerge in the near future [28].

The philosophical implications for structural biology are profound. AlphaFold has inverted the traditional workflow—instead of determining structures experimentally, researchers now predict them computationally and validate selectively [28]. This shift has dramatically accelerated research, with AlphaFold users submitting approximately 50% more protein structures to the PDB than non-users [23]. For the scientific community, this represents a fundamental transformation in how structural hypotheses are generated and tested.

The evidence from comprehensive validation studies supports a clear conclusion: AlphaFold predictions are valuable hypotheses that accelerate but do not replace experimental structure determination [5]. Although these AI-generated models achieve remarkable accuracy, often within 1-2 Å of experimental structures for high-confidence predictions [28], they can still deviate from experiment through global distortion, incorrect domain orientations, differences in local backbone and side-chain conformation, and an inability to represent dynamic processes [5].

For researchers, scientists, and drug development professionals, this necessitates a nuanced approach to using these powerful tools. AlphaFold predictions serve as exceptional starting points for scientific investigation, enabling hypothesis generation and guiding experimental design. However, they cannot fully capture the complexity of biological systems, including environmental influences, ligand interactions, and dynamic behavior. The confidence metrics provided with predictions, particularly pLDDT scores, offer valuable guidance for identifying regions requiring experimental validation [5].

The "hypothesis paradigm" for AI-predicted structures thus represents both a practical workflow and a philosophical approach to structural biology. By treating computational predictions as testable hypotheses rather than definitive answers, researchers can harness the unprecedented power of AI tools like AlphaFold while maintaining the scientific rigor that comes from experimental validation. This balanced approach ensures continued progress in understanding biological mechanisms and developing novel therapeutics, leveraging the best of both computational and experimental structural biology.

Integrating Predictions with Experiments: From Molecular Replacement to Cryo-EM

Accelerating Experimental Structure Determination

The revolutionary development of deep learning-based protein structure prediction tools, particularly AlphaFold2, has transformed structural biology. These AI-powered systems can predict protein structures with accuracies often rivaling experimental methods, achieving unprecedented success in blind assessments like CASP14 where AlphaFold2 attained a median GDT_TS score of 92.4, indicating near-experimental accuracy [26] [31]. However, a critical question remains: to what extent can these predictions accelerate or potentially replace experimental structure determination? This guide provides a comprehensive comparison of AlphaFold's performance against experimental structural biology methods, examining validation data across X-ray crystallography, nuclear magnetic resonance (NMR) spectroscopy, and cryo-electron microscopy (cryo-EM) to offer researchers practical insights for integrating computational and experimental approaches.

Performance Comparison: AlphaFold vs. Experimental Methods

Table 1: Overall Accuracy Metrics Across Structure Determination Methods

| Method | Typical Global Accuracy (GDT_TS) | Local Accuracy (Backbone RMSD) | Confidence Metrics | Key Limitations |
|---|---|---|---|---|
| AlphaFold2 | 88±10 (CASP14 median) [32] | ~1.5Å for high-confidence regions [32] | pLDDT (per-residue) | Systematic underestimation of flexible regions [6] |
| X-ray Crystallography | Considered reference standard | ~0.1-0.5Å (high resolution) | Resolution, R-factors | Crystal packing effects, static conformations [5] |
| Solution NMR | Variable across ensemble | 1-2Å (well-defined regions) [26] | RMSD of ensemble | Size limitations, dynamics interpretation [26] |
| Cryo-EM | Near-atomic (3Å+) | 3-4Å (medium resolution) | Resolution, map quality | Size requirements, flexibility challenges |

Independent validation studies demonstrate that AlphaFold predictions frequently match experimental structures with remarkable precision. When compared directly with experimental electron density maps, high-confidence AlphaFold predictions (pLDDT > 90) typically show map-model correlations of 0.56-0.72, though this remains lower than the 0.86 correlation typically seen for deposited crystallographic models [5]. This indicates that while highly accurate, AlphaFold structures are not perfect replacements for experimental models.

Domain-Specific Performance Variations

Table 2: Performance Across Protein Functional Categories

| Protein Category | AlphaFold Performance | Experimental Concordance | Notable Limitations |
|---|---|---|---|
| Single-domain soluble proteins | Excellent (GDT_TS >90) [32] | High agreement with both X-ray and NMR [31] | Minimal; suitable for most applications |
| Nuclear receptors | Good overall accuracy | Systematic 8.4% underestimation of ligand pocket volumes [6] | Misses functional conformational diversity |
| Autoinhibited proteins | Reduced accuracy (only ~50% of predictions within 3Å RMSD) [14] | Poor domain placement accuracy | Fails to capture allosteric transitions |
| Multi-domain proteins | Variable domain arrangement accuracy | Improved with experimental restraints [33] | Challenging inter-domain orientations |
| Dynamic/flexible regions | Lower confidence predictions | NMR captures dynamics better [26] | Misses biologically relevant states |

Nuclear receptors exemplify AlphaFold's systematic limitations, with ligand-binding domains (LBDs) showing higher structural variability (CV = 29.3%) compared to more stable DNA-binding domains (CV = 17.7%) [6]. AlphaFold also systematically underestimates ligand-binding pocket volumes by 8.4% on average, which has significant implications for drug design applications [6].
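
The two statistics quoted above are simple to compute. The sketch below shows a coefficient of variation and a signed percent difference in pocket volume. It assumes a population standard deviation, which may differ from the estimator used in [6], and it does not specify which structural quantity the CV is taken over; function names are illustrative.

```python
import math
from typing import Sequence


def coefficient_of_variation(values: Sequence[float]) -> float:
    """CV in percent: population standard deviation divided by the mean."""
    n = len(values)
    if n == 0:
        raise ValueError("need at least one value")
    mean = sum(values) / n
    if mean == 0:
        raise ValueError("CV is undefined for a zero mean")
    variance = sum((v - mean) ** 2 for v in values) / n
    return 100.0 * math.sqrt(variance) / mean


def percent_volume_difference(predicted: float, experimental: float) -> float:
    """Signed percent difference; negative means the predicted pocket
    is smaller than the experimental one."""
    if experimental == 0:
        raise ValueError("experimental volume must be non-zero")
    return 100.0 * (predicted - experimental) / experimental
```

Averaging `percent_volume_difference` over matched predicted and experimental pockets yields a figure directly comparable to the 8.4% underestimation reported in [6].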

For autoinhibited proteins that toggle between active and inactive states, AlphaFold's performance is notably reduced. Only slightly more than half of autoinhibited protein predictions match experimental structures within 3Å RMSD, compared to nearly 80% for conventional two-domain proteins [14]. The primary inaccuracy lies in domain positioning, particularly the placement of inhibitory modules relative to functional domains.

Experimental Validation Protocols and Workflows

NMR Validation Methods

NMR spectroscopy provides particularly valuable experimental validation through several rigorous protocols:

ANSURR (Accuracy of NMR Structures Using RCI and Rigidity) Analysis: This method computes protein flexibility from backbone chemical shifts and compares it with flexibility derived from structural rigidity theory [26] [27]. The correlation between these measures provides a reliability score for solution structures, enabling direct comparison between AlphaFold predictions and experimental NMR ensembles [26] [27].

Residual Dipolar Coupling (RDC) Validation: RDCs provide orientation restraints that are independent of distance measurements. Researchers calculate Q-factors between predicted and experimental RDCs to assess how well AlphaFold models represent solution-state conformations [32].
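
A commonly used form of the Q-factor is the root-mean-square difference between observed and back-calculated couplings, normalized by the root-mean-square of the observed couplings. The sketch below implements that definition; it assumes the back-calculated RDCs have already been obtained by fitting an alignment tensor to the model (not shown), and other normalizations exist in the literature. The function name is illustrative.

```python
import math
from typing import Sequence


def rdc_q_factor(observed: Sequence[float], calculated: Sequence[float]) -> float:
    """Q = sqrt(sum((D_obs - D_calc)^2) / sum(D_obs^2)).

    Lower values indicate better agreement between the model and the
    measured couplings; 0 means perfect agreement.
    """
    if len(observed) != len(calculated) or not observed:
        raise ValueError("need two non-empty, equal-length RDC lists")
    denominator = sum(o ** 2 for o in observed)
    if denominator == 0:
        raise ValueError("observed RDCs must not all be zero")
    numerator = sum((o - c) ** 2 for o, c in zip(observed, calculated))
    return math.sqrt(numerator / denominator)
```

Computing Q for both the AlphaFold model and each member of the NMR ensemble, using the same set of measured couplings, gives a like-for-like comparison of how well each represents the solution state.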

NOESY Peak List Analysis (RPF-DP Scores): The RPF-DP score quantifies agreement between predicted structures and experimental NOESY peak lists, validating both the global fold and local atomic contacts [32].

Crystallographic Validation Approaches

Molecular Replacement with AlphaFold Models: AlphaFold predictions increasingly serve as search models for molecular replacement in X-ray crystallography, standing in for experimentally determined structures during phasing [31]. This application demonstrates their substantial accuracy and practical utility in experimental workflows.

Electron Density Map Comparison: Researchers directly fit AlphaFold predictions into experimental electron density maps to assess local and global accuracy [5]. This approach revealed that while high-confidence regions generally match well, global distortions and domain orientation errors are common, with median Cα RMSD of 1.0Å compared to 0.6Å for different crystal forms of the same protein [5].

Integrative Approaches: Combining AI and Experiment

Restraint-Assisted Structure Prediction

The RASP (Restraint Assisted Structure Predictor) model represents a significant advancement in integrating experimental data with AI prediction. Built on AlphaFold's architecture, RASP incorporates experimental restraints as biases in the Evoformer MSA attention and invariant point attention blocks [33]. This approach enables:

- Improved prediction for multi-domain proteins (TM-score improvement from 0.51 to 0.79 in case study)

- Enhanced accuracy for proteins with limited evolutionary information (TM-score improvement from 0.43 to 0.77 with 50 restraints)

- Direct utilization of NMR-derived distance restraints [33]

AI-Assisted NMR Assignment

The FAAST (iterative Folding Assisted peak ASsignmenT) pipeline leverages AlphaFold predictions to accelerate NMR NOESY assignment, reducing analysis time from months to hours [33]. This symbiotic approach addresses key bottlenecks in experimental structure determination while maintaining accuracy through iterative validation.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents and Computational Tools

| Tool/Reagent | Type | Primary Function | Application Context |
|---|---|---|---|
| ANSURR | Software | Validates solution structure accuracy using chemical shifts [26] [27] | NMR validation and comparison with predictions |
| RASP | AI Model | Structure prediction with experimental restraints [33] | Integrating sparse data with deep learning |
| FAAST | Computational Pipeline | Accelerated NOESY peak assignment [33] | Rapid NMR structure determination |
| CYANA | Software | NMR structure calculation from NOE data | Traditional NMR structure determination |
| 15N/13C-labeled proteins | Biochemical Reagent | Enables multidimensional NMR spectroscopy | Experimental NMR structure studies |
| Crystallization screens | Chemical Library | Identifies protein crystallization conditions | X-ray crystallography experiments |
| Cross-linking reagents | Chemical Reagents | Captures proximal residues in native environment | Validation of protein complexes and interactions |

AlphaFold represents a transformative tool that accelerates rather than replaces experimental structure determination. The quantitative comparisons reveal that while AlphaFold predictions achieve remarkable accuracy for well-folded domains and single conformational states, they systematically struggle with proteins exhibiting large-scale conformational dynamics, allosteric regulation, and ligand-dependent structural changes. The most powerful applications emerge from integrative approaches that combine AI prediction with experimental validation, such as using AlphaFold models as starting points for NMR refinement or as search models for molecular replacement in crystallography. For researchers in structural biology and drug development, the current paradigm should leverage AlphaFold predictions as exceptionally accurate hypotheses to guide and accelerate experimental workflows, while recognizing that critical structural details—particularly those involving flexibility, regulation, and molecular interactions—still require experimental verification.

Molecular Replacement with AlphaFold Models in X-ray Crystallography

The solution of the phase problem remains a significant challenge in X-ray crystallography. Molecular replacement (MR), which relies on a previously solved structure as a template, has long been the most common phasing method. However, its success was historically limited by the availability of a sufficiently similar (>30% sequence identity) homologous structure. The emergence of AlphaFold (Google DeepMind) has fundamentally altered this landscape. By providing highly accurate de novo protein structure predictions, AlphaFold has democratized MR, making it possible to phase proteins without experimentally-determined homologs in the Protein Data Bank (PDB) [34].

This guide objectively compares the performance of AlphaFold-generated models against traditional experimental models within the MR pipeline. It provides a rigorous framework for validation, grounded in the broader thesis that while AI predictions are powerful tools, they must be critically evaluated against experimental data to ensure biological accuracy [35].

Performance Comparison: AlphaFold vs. Experimental Models

Quantitative Assessment of Accuracy and Limitations

Extensive benchmarking against experimental structures reveals a nuanced picture of AlphaFold's performance. The table below summarizes key comparative metrics.

Table 1: Performance Metrics of AlphaFold Models in Structural Biology

| Metric | AlphaFold Model Performance | High-Quality Experimental Structure | Key Findings |

|---|---|---|---|

| Overall Global RMSD | Varies by protein class; higher for dynamic proteins [14] | Benchmark | AF2 predicts ~50% of autoinhibited proteins within 3Å gRMSD vs. ~80% for static two-domain proteins [14]. |

| Domain Placement Accuracy (imfdRMSD) | Significantly less accurate for flexible systems [14] | Benchmark | ~50% of predicted autoinhibitory modules are misaligned (>3Å RMSD) relative to functional domains [14]. |

| Ligand-Binding Pocket Geometry | Pocket volume systematically underestimated by 8.4% on average [6] | Benchmark | Impacts accuracy for structure-based drug design [6]. |

| Stereochemical Quality | Higher than experimental structures [6] | More outliers | Lacks functionally important Ramachandran outliers present in real structures [6]. |

| Error in High-Confidence Regions | ~2x larger than high-quality experimental structures [35] | Benchmark | About 10% of highest-confidence predictions contain substantial errors [35]. |
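The global RMSD values in Table 1 are deviations measured after the predicted and experimental coordinates have been optimally superposed. The following is a minimal sketch of that calculation using the Kabsch algorithm, assuming two matched arrays of Cα coordinates with identical residue ordering. The function names and the `fraction_within` helper are illustrative and are not taken from the cited studies.

```python
import numpy as np

def kabsch_rmsd(pred, ref):
    """RMSD between two (N, 3) coordinate arrays after optimal superposition."""
    pred = np.asarray(pred, dtype=float)
    ref = np.asarray(ref, dtype=float)
    if pred.shape != ref.shape or pred.ndim != 2 or pred.shape[1] != 3:
        raise ValueError("expected two matching (N, 3) coordinate arrays")
    # Remove translation by centring both coordinate sets.
    p = pred - pred.mean(axis=0)
    q = ref - ref.mean(axis=0)
    # Kabsch: the SVD of the covariance matrix yields the optimal rotation.
    u, _, vt = np.linalg.svd(p.T @ q)
    # Guard against an improper rotation (reflection).
    d = np.sign(np.linalg.det(vt.T @ u.T))
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    p_rot = p @ rot.T
    return float(np.sqrt(((p_rot - q) ** 2).sum() / len(q)))

def fraction_within(rmsds, cutoff=3.0):
    """Fraction of targets whose RMSD is at or below the cutoff (in Å)."""
    rmsds = np.asarray(rmsds, dtype=float)
    return float((rmsds <= cutoff).mean())
```

Applied to a benchmark set, `fraction_within` gives the kind of summary quoted in the table, such as the share of predictions within 3 Å of the experimental structure. Domain-level metrics need an additional choice of which residues to superpose and which to measure, which this sketch does not cover.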

Success Rates in Molecular Replacement Pipelines

The true test of an MR model is its practical utility in solving new structures. Automated pipelines like MrBUMP have been updated to incorporate AlphaFold models, which has streamlined the process and provided more robust initial models [34]. In a striking demonstration of this capability, structural data submitted to the CASP14 experiment were solved via molecular replacement using the very AlphaFold models generated for the test itself [34]. This success highlights a profound shift, potentially moving the major bottleneck in structure determination from solving the phase problem to growing high-quality crystals.

However, performance is not uniform. AlphaFold models are exceptionally good at predicting stable, folded domains with well-defined secondary structures. This makes them highly effective for MR of single-domain proteins or rigid complexes. The models provide an excellent starting point for subsequent refinement, often requiring only minor adjustments to fit the experimental electron density [34].

Table 2: Application Suitability and Comparison with Other Methods

| Application / Protein Class | AlphaFold Model Suitability | Traditional Experimental Model Suitability | Notes |

|---|---|---|---|

| Rigid Single-Domain Proteins | Excellent | Excellent (if available) | AF models often rival experimental accuracy for these targets [34]. |

| Multi-Domain Proteins with Static Interactions | Excellent [14] | Excellent (if available) | AF2 accurately predicts proteins with permanent domain interactions [14]. |

| Proteins with Large-Scale Allosteric Transitions | Poor to Mixed [14] | Required for confirmation | AF2/3 struggle with autoinhibited proteins and large conformational changes [14]. |

| Ligand/Drug-Binding Site Analysis | Caution Advised [6] [35] | Essential | AF systematically underestimates pocket volume; experimental data critical for drug design [6] [35]. |

| Intrinsically Disordered Regions | Poor [34] | Required for characterization | AF2 is significantly limited in regions of disorder [34]. |

| Complexes with Ions/Cofactors | Not Modeled | Essential | AF does not account for these, limiting functional insight [34]. |

Experimental Protocols for Validation

Workflow for Molecular Replacement Using AlphaFold

The following diagram illustrates the integrated workflow for using an AlphaFold-predicted model to solve a novel crystal structure via Molecular Replacement.

Key Validation Methodologies

Once a model is placed in the unit cell via MR, its quality and fit to the experimental data must be rigorously validated.

- Electron Density Fit (σA-weighted 2mFo-DFc Map): The primary validation metric. A high-quality model will have continuous density for the main chain and well-defined side-chain density. As noted by Terwilliger et al., even high-confidence AlphaFold predictions can show regions that do not agree with experimental electron density, necessitating manual rebuilding in tools like Coot [35].

- Real-Space Correlation Coefficient (RSCC): Quantifies the agreement between the model and the electron density on a per-atom or per-residue basis. This is a sensitive indicator for local errors.

- Ramachandran Plots and Stereochemistry: While AlphaFold models generally have high stereochemical quality, they may lack the functionally important Ramachandran outliers sometimes present in experimental structures [6]. Use MolProbity (integrated in Phenix) to assess.

- Atomic Displacement Parameters (B-factors): The B-factor distribution in a refined AlphaFold model should be physically reasonable and consistent with the experimental data's temperature factors.
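To make the RSCC metric concrete, here is a minimal sketch of a per-residue calculation. It assumes the observed and model-calculated density values have already been sampled at the same grid points around each residue, a step that crystallographic packages handle internally. The dictionary layout, the function names, and the 0.8 cutoff are illustrative assumptions, not values from the cited work.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return float("nan")  # flat density: correlation is undefined
    return cov / sqrt(vx * vy)

def per_residue_rscc(obs_by_residue, calc_by_residue):
    """Map each residue ID to the correlation of observed vs. calculated density."""
    return {res: pearson(obs_by_residue[res], calc_by_residue[res])
            for res in obs_by_residue}

def flag_poor_fit(rscc, cutoff=0.8):
    """Residue IDs whose RSCC falls below the (assumed) cutoff or is undefined."""
    return sorted(res for res, cc in rscc.items() if not cc >= cutoff)
```

Residues returned by `flag_poor_fit` are candidates for inspection and manual rebuilding; the cutoff should be tuned to the resolution and quality of the data set.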

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Resources for Molecular Replacement with AlphaFold Models

| Resource/Solution | Type | Primary Function |

|---|---|---|

| AlphaFold Database | Database | Pre-computed structure predictions for a vast number of proteomes [34]. |

| AlphaFold Server | Software | Platform to generate new predictions, including for protein complexes [14]. |

| Phenix Suite | Software | Comprehensive platform for macromolecular structure determination, including MR, refinement, and validation [35]. |

| CCP4 Suite | Software | Standard collection of programs for protein crystallography, including MR pipelines like Phaser and MrBUMP [34]. |

| Coot | Software | Molecular graphics tool for model building, validation, and manipulation, essential for manual adjustment [35]. |

| PyMOL / ChimeraX | Software | Molecular visualization for comparing predicted models, experimental maps, and final structures. |

| Protein Data Bank (PDB) | Database | Archive of experimentally determined structures used for benchmarking and validation [14] [35]. |

AlphaFold has transformed the practice of molecular replacement, turning it from a method dependent on the chance existence of a homologous structure into a broadly applicable phasing tool that no longer requires an experimentally determined homolog. The experimental data confirm that AlphaFold models are highly accurate for a wide range of proteins and can successfully phase structures that were previously intractable [34] [35].

However, the guiding principle for structural biologists must be that AlphaFold models are exceptionally useful hypotheses, not final answers [35]. They systematically struggle with conformational dynamics, allosteric regulation, and the precise geometry of functional sites like ligand-binding pockets [6] [14]. For detailed mechanistic insights, especially in structure-based drug design, there is no substitute for experimental data [35]. The most robust structural biology pipeline will therefore continue to be a hybrid one: leveraging the power of AI prediction to obtain an initial model, and then using high-quality experimental data to refine, validate, and correct that model to reveal the full, functional truth of the protein.

The field of structural biology has been transformed by two independent revolutions: the breakthrough accuracy of deep learning-based protein structure prediction tools like AlphaFold 2 (AF2) and the "resolution revolution" in cryo-electron microscopy (cryo-EM) and cryo-electron tomography (cryo-ET) [3] [36]. While AF2 can predict protein structures from amino acid sequences with near-experimental accuracy, it faces inherent limitations in capturing the full spectrum of biologically relevant, dynamic states [13] [37]. Conversely, cryo-EM and cryo-ET provide experimental snapshots of proteins in various functional conformations, often within complex cellular contexts, but determining atomic models from these maps—especially at lower resolutions—remains a significant challenge [38] [39]. Integrative modeling, which involves fitting, refining, and validating AF2 predictions against experimental cryo-EM and cryo-ET density maps, has therefore emerged as a powerful approach to overcome the limitations of each method individually. This guide provides a comparative overview of the protocols and performance metrics for integrating AF2 models with cryo-EM data, framing this within the broader thesis of validating computational predictions against experimental structural data.

Performance Comparison: AF2 Predictions vs. Experimental Structures

Systematic comparisons between AF2-predicted models and experimental structures provide critical benchmarks for understanding the strengths and weaknesses of integrative approaches.

Accuracy and Limitations in Key Structural Features

The following table summarizes findings from a comprehensive analysis of nuclear receptor structures, illustrating specific areas where AF2 predictions diverge from experimental data [13].

Table 1: Performance of AlphaFold2 on Nuclear Receptor Family Structures

| Structural Feature | AF2 Performance vs. Experimental Structures | Biological Implication |

|---|---|---|

| Overall Fold Accuracy | High accuracy for stable conformations with proper stereochemistry [13]. | Reliable for core structure determination. |

| Ligand-Binding Domains (LBDs) | Systematically underestimates ligand-binding pocket volumes by 8.4% on average; higher structural variability (CV=29.3%) [13]. | Impacts drug design and ligand docking studies. |

| DNA-Binding Domains (DBDs) | Lower structural variability (CV=17.7%) compared to LBDs [13]. | More reliable prediction for DNA-binding interfaces. |

| Conformational Diversity | Captures single conformational states; misses functional asymmetry in homodimers and alternative states [13] [40]. | Limited insight into dynamics and allostery. |

| Intrinsically Disordered Regions | Low confidence (pLDDT < 50); poorly modeled regions [13]. | Incomplete models for flexible linkers and domains. |
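The two summary statistics in this table, the coefficient of variation (CV) and the mean percentage deviation in pocket volume, are simple to reproduce once per-structure measurements are available. The sketch below assumes the CV is the sample standard deviation divided by the mean and that the deviation is the signed difference relative to the experimental value. The source does not state which conventions were used, so both are assumptions, and the function names are illustrative.

```python
from statistics import mean, stdev

def coefficient_of_variation(values):
    """CV in percent: sample standard deviation divided by the mean."""
    m = mean(values)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return stdev(values) / m * 100.0

def mean_percent_deviation(predicted, experimental):
    """Mean signed deviation in percent; negative means underestimation."""
    if len(predicted) != len(experimental) or not predicted:
        raise ValueError("need two non-empty, equal-length sequences")
    deviations = [(p - e) / e * 100.0 for p, e in zip(predicted, experimental)]
    return mean(deviations)
```

With paired pocket volumes in Å³, a result of about -8.4 from `mean_percent_deviation` would correspond to the underestimation reported in the table.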

Refinement Success Across Cryo-EM Resolutions

The utility of an AF2 model is often determined by how well it can be refined against an experimental density map. Success rates are highly dependent on the initial quality of the prediction and the resolution of the cryo-EM map.

Table 2: Refinement Outcomes of AF2 Models in Cryo-EM Maps of Varying Resolution

| Cryo-EM Resolution Range | Refinement Outcome | Key Dependency |

|---|---|---|

| < 4.5 Å | 22 of 25 models refined to >90% Cα accuracy [38]. High success rate [39]. | Quality of the experimental density map. |

| 4 – 6 Å (Experimental Maps) | Good refinement success; TM-scores >0.8 for 9 of 10 chains (the larger ones, 226–373 residues) [38]. | Quality of the initial AF2 model and its alignment with the density. |

| 6 – 8 Å (Hybrid Maps) | Successful refinement possible, with TM-score improvements observed in multiple cases [39]. | Robustness of the refinement protocol (e.g., in Phenix). |

| > 8 Å | Isolated success cases; refinement becomes increasingly challenging [39]. | Initial model quality and presence of stabilizing templates. |

A study refining 10 protein chains against experimental 4–6 Å resolution maps found that for the 9 larger chains (226–373 residues), the initial AF2 models were highly accurate (TM-scores > 0.9), and subsequent refinement maintained or slightly improved this accuracy [38]. However, the remaining chain, a smaller 115-residue protein with three helices, was poorly predicted (TM-score 0.52), demonstrating that model quality is not uniform and can depend on factors like chain length and the availability of evolutionary data [38].
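Since the TM-score is the headline metric here, it helps to see how it is computed. The sketch below evaluates the standard formula for a single, fixed superposition, given the Cα distances between aligned residue pairs. Dedicated programs additionally search for the superposition that maximizes the score, which this sketch omits. Clamping the distance scale at 0.5 Å for very short chains is an assumption following a common convention.

```python
def tm_d0(target_length):
    """Length-dependent distance scale d0 (Å) used by the TM-score."""
    d0 = 1.24 * max(target_length - 15, 0) ** (1.0 / 3.0) - 1.8
    return max(d0, 0.5)  # assumed lower bound for very short chains

def tm_score(distances, target_length):
    """TM-score for one superposition.

    distances: Cα-Cα distances (Å) for the aligned residue pairs.
    target_length: number of residues in the reference structure; residues
    without an aligned partner contribute zero to the sum.
    """
    if target_length <= 0:
        raise ValueError("target_length must be positive")
    if len(distances) > target_length:
        raise ValueError("more aligned pairs than residues in the target")
    d0 = tm_d0(target_length)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length
```

Because the score is normalized by the reference length and uses a length-dependent distance scale, values for chains of different sizes, such as the 115-residue chain and the 226–373 residue chains above, can be compared directly.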

Experimental Protocols for Integrative Modeling

This section details specific methodologies for integrating and refining AF2 predictions against cryo-EM density maps.

Protocol 1: Phenix Refinement of AF2 Models

This protocol is designed for refining AF2 models against cryo-EM density maps, particularly in the intermediate resolution range (4–6 Å) [38] [39].

- Step 1: Data Preparation. Obtain the experimental cryo-EM map from the Electron Microscopy Data Bank (EMDB) and the corresponding amino acid sequence from the Protein Data Bank (PDB) or UniProt. Generate the AF2 model using a local installation or a publicly accessible web service.

- Step 2: Initial Rigid-Body Fitting. Fit the AF2 model into the experimental density map as a rigid body using tools like UCSF Chimera or Coot. This initial placement maximizes the cross-correlation between the model and the map.

- Step 3: Refinement with Phenix. Use the `phenix.real_space_refine` function within the Phenix software suite. Key parameters include:
  - `resolution=` - Set to the global resolution of the cryo-EM map.
  - `macro_cycle=true` - To run multiple refinement cycles.
  - `minimization_global=true` - For global minimization of the model.
  - `simulated_annealing=true` - Can help in escaping local minima, useful for lower-resolution maps.

- Step 4: Model Validation. After refinement, validate the model using geometry quality metrics (e.g., MolProbity) and fit-to-density metrics (e.g., cross-correlation). Tools like MonoRes should be used to assess the local resolution of the map, as refinement success can vary with local map quality [38].
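The rigid-body placement in Step 2 and the fit-to-density check in Step 4 both rest on a map-to-map cross-correlation. The sketch below illustrates the idea in a deliberately reduced form: it assumes the model has already been converted to a simulated density on the same grid as the experimental map, and it searches only over small integer grid translations. Real fitting tools also search rotations and use finer sampling. The function names are illustrative, and `np.roll` wraps density around the box edges, which is acceptable only for small shifts of a well-padded map.

```python
import itertools
import numpy as np

def map_cc(map_a, map_b):
    """Pearson cross-correlation between two density grids of equal shape."""
    a = np.asarray(map_a, dtype=float).ravel()
    b = np.asarray(map_b, dtype=float).ravel()
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    return float((a * b).sum() / denom) if denom > 0 else float("nan")

def best_translation(model_map, exp_map, max_shift=2):
    """Exhaustive search for the integer grid shift that maximizes the CC."""
    shifts = range(-max_shift, max_shift + 1)
    best_shift, best_cc = None, -np.inf
    for shift in itertools.product(shifts, repeat=3):
        shifted = np.roll(model_map, shift, axis=(0, 1, 2))
        cc = map_cc(shifted, exp_map)
        if cc > best_cc:
            best_shift, best_cc = shift, cc
    return best_shift, best_cc
```

The same `map_cc` function can be reused after refinement to confirm that the fit to the map has not degraded.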

Protocol 2: Density-Guided MD with AF2 Ensembles

For more challenging cases involving conformational changes, a robust protocol combines AI-generated ensembles with density-guided molecular dynamics (MD) simulations [41].

- Step 1: Generate a Conformational Ensemble. Use stochastic subsampling of the Multiple Sequence Alignment (MSA) in AlphaFold2 to generate a diverse set of models (e.g., 1250 per target), rather than relying on a single prediction. This explores alternative conformations inherent in the coevolutionary data.

- Step 2: Cluster and Select Representative Models. Filter out misfolded models using a structure-quality score like the generalized orientation-dependent all-atom potential (GOAP). Then, use k-means clustering based on Cartesian coordinates to identify a limited set of structurally distinct, representative models for simulation.

- Step 3: Density-Guided Molecular Dynamics. Perform MD simulations where a biasing potential is added to the classical forcefield to guide the model toward the experimental density map. This is implemented in software like