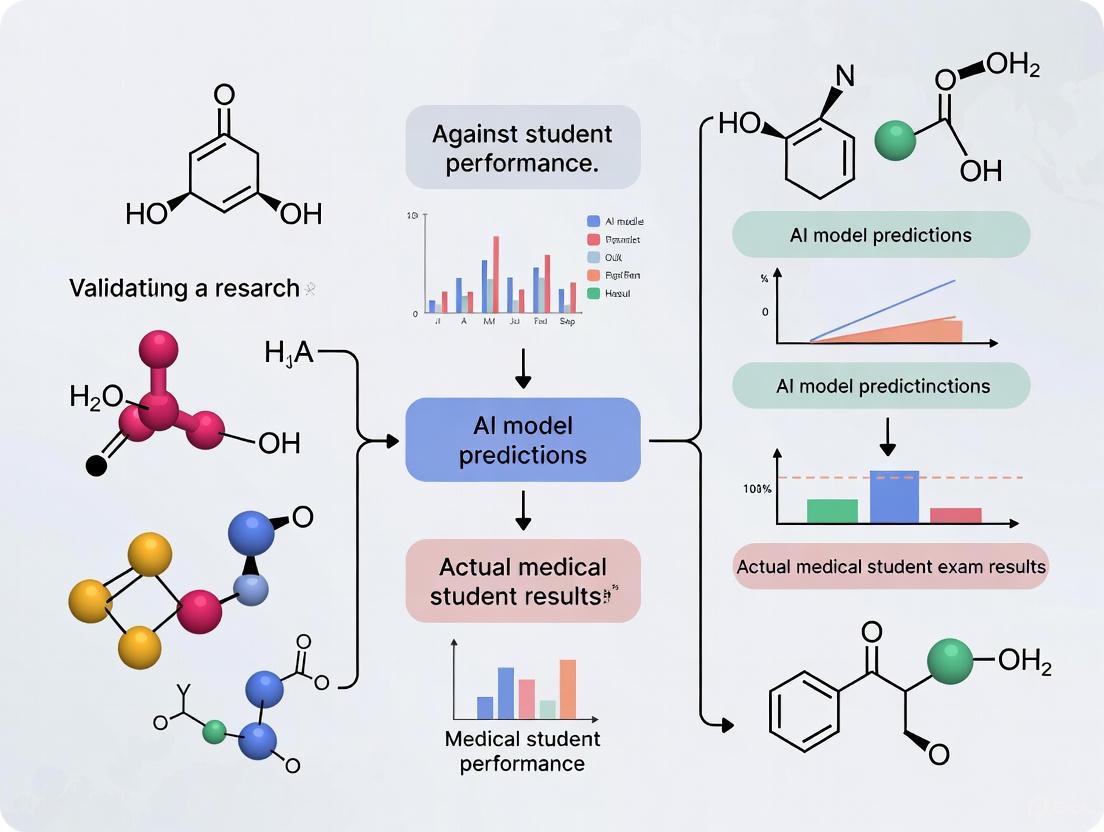

Beyond the Benchmark: A Framework for Validating AI Model Performance Against Medical Student Exam Results


Abstract

This article provides a comprehensive framework for researchers and professionals in biomedical science and drug development seeking to validate artificial intelligence (AI) models against medical student examination performance. It explores the foundational rationale for using exam data as a validation metric, detailing methodological approaches for robust study design and model application. The scope includes troubleshooting common pitfalls, such as model overfitting and reasoning deficiencies, and offers strategies for optimization. Finally, it presents rigorous techniques for the comparative validation of AI against human performance, synthesizing key takeaways and outlining future implications for AI integration in clinical research and decision-support systems.

The New Gold Standard: Why Medical Exams are Critical for AI Validation

Medical Licensure Exams as a Proxy for Clinical Reasoning

Medical licensure exams are designed to ensure that physicians possess the essential knowledge and clinical reasoning skills to provide safe and effective patient care. Clinical reasoning—the cognitive process underlying diagnosis and treatment decisions—is a core competency assessed through these examinations. In the evolving landscape of artificial intelligence (AI), these standardized tests have become critical benchmarks for evaluating the clinical capabilities of large language models (LLMs). Researchers, scientists, and drug development professionals are leveraging these exams to validate whether AI systems can replicate the complex diagnostic reasoning of human physicians. This guide provides a structured comparison of human and AI performance on key medical licensing examinations, details the experimental methodologies enabling these comparisons, and outlines the essential tools for related research.

Comparative Performance: Human vs. AI Models on Medical Assessments

The following tables summarize quantitative performance data across different assessment types, contrasting human examination benchmarks with the capabilities of state-of-the-art AI models.

Table 1: Performance on US Medical Licensing Examination (USMLE) Components

| Exam Component | Human Passing Threshold | Single AI (GPT-4) Performance | Collaborative AI Council Performance |
|---|---|---|---|
| USMLE Step 1 | Not reported | Not reported | 97% Accuracy [1] [2] |
| USMLE Step 2 CK | Not reported | Not reported | 93% Accuracy [1] [2] |
| USMLE Step 3 | Not reported | Not reported | 94% Accuracy [1] [2] |

Note: The "Collaborative AI Council" refers to a system in which five GPT-4 instances deliberate to reach a consensus [1] [2]. The cited sources did not state the exact human passing thresholds.

Table 2: Performance on Clinical Reasoning and Skills Assessments

| Assessment Type | Human Performance Benchmark | AI Model Performance | Key Findings |
|---|---|---|---|
| Script Concordance (Clinical Reasoning) | Senior Residents/Attending Physicians | Performs similarly to 1st/2nd year medical students [3] | Struggles to adapt to new, irrelevant information (red herrings) [3] |
| Short Answer Grading | Faculty Graders (Reference Standard) | GPT-4o equivalent to faculty for Remembering, Applying, Analyzing questions (Mean difference: -0.55%) [4] | Discrepancies noted on "Understanding" and "Evaluating" questions [4] |
| Objective Structured Clinical Exam (OSCE) | Medical Students/Graduates | Not directly tested; assesses history-taking, physical exam, communication [5] | Used to verify fundamental osteopathic clinical skills for licensure [5] |

Experimental Protocols for Validating AI Clinical Reasoning

The Collaborative AI Council Protocol

A 2025 study established a novel method for enhancing AI reliability on medical exams by treating variability in model responses as a strength rather than a flaw [1] [2].

- Objective: To evaluate whether structured deliberation between multiple AI instances improves accuracy on the USMLE beyond single-model performance [1] [2].

- Materials: 325 publicly available questions from all three steps of the USMLE; five instances of OpenAI's GPT-4 model [2].

- Workflow:

- Initial Response: Each of the five GPT-4 instances generates an independent answer and rationale for the same USMLE question.

- Facilitated Deliberation: A facilitator algorithm summarizes the differing rationales and prompts the models to discuss their reasoning.

- Consensus Building: The council of AIs iteratively discusses and refines the answer until a consensus emerges.

- Final Output: The consensus answer is recorded and scored against the correct response.

- Key Metric: The deliberation process corrected over half of the initial errors when models disagreed, improving the odds of converting an incorrect answer to a correct one by a factor of five [1].

The diagram below illustrates this collaborative workflow.

Script Concordance Testing (SCT) Protocol

To move beyond multiple-choice exams and probe nuanced clinical reasoning, researchers developed a benchmark based on Script Concordance Testing (SCT), a tool used in medical education [3].

- Objective: To assess the flexibility of AI models in adapting their diagnostic reasoning in response to new and potentially uncertain clinical information [3].

- Materials: The concor.dance test, built from medical school SCTs for surgery, pediatrics, obstetrics, psychiatry, emergency medicine, neurology, and internal medicine from Canada, the U.S., Singapore, and Australia [3].

- Workflow:

- Clinical Scenario: The model is presented with an initial clinical scenario (e.g., a patient with chest pain).

- New Information: A new piece of information is introduced, which may be highly relevant, somewhat relevant, or a complete red herring (e.g., the patient stubbed their toe last week).

- Judgment Measurement: The test measures how well the model updates its diagnostic judgment or management plan in light of the new information, compared to the responses of expert clinicians.

- Key Findings: The most advanced LLMs struggled significantly with irrelevant information (red herrings), often trying to incorrectly incorporate it into their diagnostic reasoning. This "overconfidence" problem was sometimes worse in more advanced models [3].

Automated Short-Answer Grading Protocol

This protocol evaluates the potential for AI to assist in grading complex, non-multiple-choice assessments, which are resource-intensive for faculty [4].

- Objective: To determine if an LLM (GPT-4o) can grade narrative short-answer questions (SAQs) in case-based learning exams with equivalence to faculty graders [4].

- Materials: 1,450 de-identified student SAQs from pre-clinical medical examinations [4].

- Workflow:

- AI Grading: SAQs are input into GPT-4o with specific grading instructions.

- Faculty Grading: The same SAQs are graded by human faculty members, serving as the reference standard.

- Equivalence Analysis: A bootstrapping procedure calculates 95% confidence intervals (CIs) for the mean score differences between AI and faculty. Equivalence is defined as the entire 95% CI falling within a ±5% margin.

- Subgroup Analysis: Performance is analyzed across different cognitive levels based on Bloom's taxonomy (Remembering, Understanding, Applying, etc.) [4].

- Key Findings: While overall scores were equivalent to faculty, the AI showed discrepancies specifically when grading questions requiring "Understanding" and "Evaluating" skills [4].
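The equivalence analysis in this workflow can be sketched in a few lines. The example below is a minimal illustration, not the study's code: it assumes paired percentage scores for the same answers and a percentile bootstrap, and the function name `bootstrap_equivalence`, the resample count, and the seed are all hypothetical choices. Only the 95% CI and the ±5% margin come from the protocol described above.

```python
import random
from statistics import mean

def bootstrap_equivalence(ai_scores, faculty_scores, margin=5.0,
                          n_resamples=2000, seed=0):
    """Percentile-bootstrap 95% CI for the mean paired difference (AI - faculty).

    Scores are percentages for the same answers, in the same order.
    Returns (mean_diff, ci_low, ci_high, equivalent).
    """
    if len(ai_scores) != len(faculty_scores):
        raise ValueError("scores must be paired")
    diffs = [a - f for a, f in zip(ai_scores, faculty_scores)]
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(diffs)
    # Resample the paired differences with replacement and record each mean.
    boot_means = sorted(
        mean(rng.choices(diffs, k=n)) for _ in range(n_resamples)
    )
    ci_low = boot_means[int(0.025 * n_resamples)]
    ci_high = boot_means[int(0.975 * n_resamples) - 1]
    # Equivalence: the whole interval must sit inside (-margin, +margin).
    equivalent = -margin < ci_low and ci_high < margin
    return mean(diffs), ci_low, ci_high, equivalent

# Hypothetical scores for five answers (not data from the study).
ai = [81, 69, 90, 61, 75]
faculty = [80, 70, 91, 60, 75]
print(bootstrap_equivalence(ai, faculty))
```

Requiring the entire interval to fall inside the margin is stricter than checking the mean difference alone: a small mean difference with a wide interval would not count as equivalent.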

The Scientist's Toolkit: Key Reagents and Materials

Table 3: Essential Research Reagents and Platforms for AI Clinical Reasoning Validation

| Reagent / Platform | Function in Research |
|---|---|
| USMLE Question Banks | Serves as a standardized, clinically relevant benchmark for initial validation of AI knowledge and diagnostic accuracy [1] [2]. |
| Script Concordance Tests (SCTs) | Provides a specialized tool for assessing adaptive clinical reasoning and the ability to handle uncertainty, beyond rote knowledge [3]. |
| Objective Structured Clinical Exam (OSCE) | A standardized patient-based assessment used to verify hands-on clinical skills such as history-taking, physical examination, and communication, which are required for licensure [5]. |
| Occupational English Test (OET) Medicine | Assesses English language communication proficiency in a healthcare context, a requirement for international medical graduates seeking ECFMG certification [6]. |
| LLM APIs (e.g., GPT-4, etc.) | Provide the core AI models for testing. Access is typically via API, allowing researchers to prompt models with exam questions or clinical scenarios [1] [4]. |
| Custom Benchmarking Code (Python/R) | Essential for running automated tests, statistical comparison of results (e.g., bootstrapping, ICC), and analyzing performance data [4]. |

Medical licensure exams provide a crucial, though incomplete, proxy for validating the clinical reasoning capabilities of AI. As the data shows, collaborative AI systems can surpass human passing thresholds on knowledge-based USMLE multiple-choice questions [1] [2]. However, more nuanced evaluations like Script Concordance Testing reveal significant limitations in AI's ability to manage uncertain or irrelevant information—a core component of expert human reasoning [3]. For researchers and developers in this field, a multi-faceted approach is essential. Relying solely on exam scores is insufficient; protocols must be designed to test the adaptive, flexible, and often messy reasoning required at the bedside. The future of safe and effective clinical AI depends on validation tools that measure not just knowledge, but the depth of clinical understanding.

The integration of artificial intelligence (AI) into healthcare necessitates rigorous validation of its capabilities. Medical licensing examinations, particularly the United States Medical Licensing Examination (USMLE), have emerged as a critical benchmark for assessing AI's medical knowledge and clinical reasoning potential. This guide provides a comprehensive, data-driven comparison of how advanced AI models perform on these high-stakes assessments relative to human medical students and professionals. Recent studies demonstrate that AI is not only achieving passing scores but, in some cases, surpassing human performance on standardized medical exams, with one collaborative "AI council" approach achieving up to 97% accuracy on the USMLE [2] [1]. However, this performance must be contextualized within AI's current limitations in real-world clinical reasoning, where experienced physicians still maintain a significant advantage in adapting to new information and handling diagnostic uncertainty [3].

This analysis objectively compares the performance of various AI models across different medical examination formats, details the experimental methodologies used for evaluation, and provides resources for researchers interested in this rapidly evolving field. Understanding these benchmarks is crucial for researchers, scientists, and drug development professionals who are exploring the potential applications of AI in medicine and healthcare.

Quantitative Performance Analysis

Comparative Performance Tables

Table 1: AI Performance on Medical Licensing Examinations

| AI Model / System | Exam Type | Accuracy (%) | Key Finding / Context |
|---|---|---|---|
| AI Council (GPT-4) | USMLE (3 Steps) | 97, 93, 94 [2] | Five instances deliberating; outperformed single AI instances. |
| OpenEvidence AI | USMLE | 100 [7] | Also provides explanatory reasoning for answers. |
| GPT-5 (per OpenEvidence) | USMLE | 97 [7] | Evaluated by an independent company. |
| GPT-4.0 | Brazilian Progress Test | 87.2 [8] | Outperformed medical students' average scores. |
| GPT-4.0 | Medical Licensing Exams (Pooled) | 81.8 [9] | Meta-analysis of 53 studies across various countries. |
| Claude 3.5 Sonnet v2 | MedAgentBench (Clinical Tasks) | ~70 [10] | Success rate on real-world clinical tasks in a virtual EHR. |
| GPT-3.5 | Medical Licensing Exams (Pooled) | 60.8 [9] | Meta-analysis of 53 studies; significantly lower than GPT-4. |

Table 2: AI Performance Breakdown by Specialty (Brazilian Progress Test)

| Medical Specialty | GPT-4.0 Accuracy (%) | GPT-3.5 Accuracy (%) |
|---|---|---|
| Basic Sciences | 96.2 | 77.5 |
| Gynecology & Obstetrics | 94.8 | 64.5 |
| Surgery | 88.0 | 73.5 |
| Public Health | 89.6 | 77.8 |
| Pediatrics | 80.0 | 58.5 |
| Internal Medicine | 75.1 | 61.5 |

Source: Alessi et al. (2025) [8]

Key Performance Insights

The data reveals a consistent and significant performance gap between different generations of AI models. A systematic meta-analysis of 53 studies found that GPT-4 was 36% more likely to provide correct answers than GPT-3.5 across both medical licensing and residency exams [9]. This underscores the rapid pace of improvement in large language models (LLMs) for specialized domains.

Furthermore, performance varies considerably by medical specialty and question type. As shown in Table 2, both models score highest in Basic Sciences and GPT-4.0 also excels in Gynecology & Obstetrics, while scores are lower in Pediatrics and Internal Medicine, which often require more nuanced clinical reasoning [8]. This suggests that overall exam scores can mask important subject-specific strengths and weaknesses.

Most notably, simply passing these exams does not equate to clinical proficiency. Research shows that while AI can outperform humans on multiple-choice questions, it struggles with the dynamic and often ambiguous reasoning required in real patient care, a domain where experienced clinicians still significantly outperform AI [3].

Detailed Experimental Protocols

The AI Council Deliberation Framework

A study from Johns Hopkins University introduced a "council" approach to improve AI reliability and accuracy on the USMLE [2] [1].

1. Objective: To harness the natural response variability of LLMs, using structured dialogue between multiple AI instances to achieve higher accuracy and self-correction than any single model.

2. Methodology:

- Council Formation: Five separate instances of OpenAI's GPT-4 were initialized.

- Facilitator Algorithm: A central algorithm orchestrated the deliberation process without providing expert knowledge.

- Structured Deliberation Workflow:

- Initial Response: Each of the five AI instances provided an initial answer and rationale to the medical question.

- Divergence Check: If all five agreed, the process stopped, and that answer was selected.

- Deliberation Cycle: If answers differed, the facilitator summarized the differing rationales and asked the group to reconsider.

- Iteration: This process repeated until a consensus emerged or a predetermined number of rounds was completed.

- Outcome Measurement: The final consensus answer was compared against the exam key to determine accuracy.

3. Key Findings: This collaborative approach corrected more than half of the initial errors when the models disagreed, ultimately achieving the correct conclusion 83% of the time in non-unanimous cases. The council's performance (97%, 93%, 94% across USMLE steps) exceeded both individual AI instances and human passing thresholds [2] [1].
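The deliberation loop described in the methodology can be sketched as follows. This is an illustrative outline under stated assumptions, not the study's implementation: `run_council`, the member and facilitator callables, the default of three rounds, and the plurality fallback when no consensus is reached are all hypothetical. The members here are stubs standing in for real model calls.

```python
from collections import Counter

def run_council(question, members, summarize, max_rounds=3):
    """Return (answer, rounds_used) for one question.

    members:   callables (question, summary) -> (answer, rationale)
    summarize: callable taking the list of (answer, rationale) pairs and
               returning the text shown to every member in the next round.
    """
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    summary = None  # no facilitator summary exists before the first round
    for round_no in range(1, max_rounds + 1):
        responses = [member(question, summary) for member in members]
        answers = [answer for answer, _ in responses]
        if len(set(answers)) == 1:       # divergence check: unanimous
            return answers[0], round_no
        summary = summarize(responses)   # facilitator restates rationales only
    # Assumption: fall back to the plurality answer when rounds run out.
    return Counter(answers).most_common(1)[0][0], max_rounds

# Stub members standing in for model instances (hypothetical behavior).
def stubborn(answer):
    return lambda question, summary: (answer, f"I think {answer}")

def swayable(first, later):
    return lambda question, summary: (
        (first if summary is None else later), "reconsidered"
    )

members = [stubborn("B"), stubborn("B"), stubborn("B"),
           swayable("C", "B"), swayable("A", "B")]
summarize = lambda responses: "; ".join(f"{a}: {r}" for a, r in responses)
print(run_council("Q1", members, summarize))  # ('B', 2)
```

Keeping the facilitator as a function that only restates the members' rationales mirrors the constraint that it orchestrates the discussion without contributing expert knowledge.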

The following diagram illustrates this structured deliberation workflow:

Script Concordance Testing for Clinical Reasoning

To evaluate AI beyond factual recall, researchers have adapted Script Concordance Testing (SCT), a method used in medical education to assess clinical reasoning under uncertainty [3].

1. Objective: To evaluate the ability of LLMs to adapt their diagnostic and management plans in response to new clinical information, including the critical skill of identifying irrelevant data ("red herrings").

2. Methodology:

- Test Development: SCTs for surgery, pediatrics, obstetrics, psychiatry, emergency medicine, neurology, and internal medicine were gathered from medical programs in Canada, the US, Singapore, and Australia.

- Question Structure: Each item presents a clinical scenario followed by a diagnostic or management hypothesis. New information is then provided, and the test-taker must rate its impact on the initial hypothesis on a Likert scale (e.g., -2 to +2).

- Scoring: Responses are scored against a reference panel of experienced senior clinicians. The goal is to match the reasoning patterns of experts.

- AI Testing: Ten popular LLMs from Google, OpenAI, DeepSeek, and Anthropic were evaluated using these tests and their performance was compared to that of first-year medical students, senior residents, and attending physicians.

3. Key Findings: The advanced AI models generally performed at the level of first- or second-year medical students but failed to reach the standard of senior residents or attending physicians. A major weakness was identified in handling irrelevant information. The models were often unable to recognize "red herrings" and would instead invent explanations to fit the irrelevant facts into their diagnostic reasoning, demonstrating a significant limitation in real-world clinical judgment [3].
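To make the scoring step concrete, the sketch below uses aggregate scoring, a scheme commonly used for SCTs in which an answer earns credit in proportion to how many panel members chose it, relative to the most popular answer. The cited study states only that responses were scored against an expert panel, so treating this as the scoring rule is an assumption. The function names and the panel data are hypothetical.

```python
from collections import Counter

def sct_item_credit(panel_responses, answer):
    """Credit in [0, 1] for one item: panel votes for `answer` / modal votes."""
    votes = Counter(panel_responses)
    return votes.get(answer, 0) / max(votes.values())

def sct_score(panel_by_item, answers):
    """Mean credit across items, expressed as a percentage."""
    credits = [sct_item_credit(panel, answer)
               for panel, answer in zip(panel_by_item, answers)]
    return 100 * sum(credits) / len(credits)

# Two hypothetical items rated by a five-member panel on a -2..+2 scale.
panel = [
    [0, 0, 0, -1, 1],   # most experts judge the new information irrelevant
    [2, 2, 1, 1, 1],    # experts split between +1 and +2
]
answers = [0, 2]        # test-taker's ratings for the two items
print(round(sct_score(panel, answers), 1))  # 83.3
```

Under this scheme, a test-taker who rates a red herring as strongly supportive when every panel member rated it 0 earns no credit for that item, which is the failure pattern described in the key findings.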

The logical flow of a script concordance test is outlined below:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for AI Medical Benchmarking Research

| Reagent / Resource | Type | Function & Application | Example (From Cited Sources) |
|---|---|---|---|
| MedQA Dataset | Public Benchmark Dataset | A comprehensive collection of USMLE-style questions for evaluating AI model medical knowledge and identifying potential biases [11]. | Used to test for racial bias by injecting demographic stereotypes into clinical scenarios [11]. |
| concor.dance Tool | Custom Benchmark | A script concordance test (SCT) platform to assess clinical reasoning flexibility and adaptability to new information [3]. | Revealed AI's difficulty in recognizing irrelevant clinical information ("red herrings") [3]. |
| MedAgentBench | Virtual Testing Environment | A simulated Electronic Health Record (EHR) with realistic patient profiles to benchmark how well AI agents can perform clinical tasks (e.g., ordering tests) [10]. | Tested AI's ability to execute real-world clinical workflows, with top models achieving ~70% success rate [10]. |
| AI Council Framework | Experimental Methodology | A structured deliberation protocol that leverages multiple AI instances to improve answer accuracy through debate and self-correction [2]. | Achieved scores of up to 97% on the USMLE by having five GPT-4 instances deliberate [2]. |
| FHIR API Endpoints | Data Interoperability Standard | Allows AI agents to interface with and navigate virtual EHR systems to retrieve patient data and enter orders in benchmark tests [10]. | Enabled the testing of AI "agents" that can act within a clinical system, not just answer questions [10]. |

The benchmarking data clearly demonstrates that advanced AI models, particularly those using collaborative reasoning or the latest architectures, have achieved a level of proficiency on medical licensing exams that meets and often exceeds human passing standards. However, these exam scores represent a narrow slice of medical capability. The same models that ace multiple-choice questions struggle with the dynamic, often ambiguous reasoning required in real-world clinical settings, as shown by script concordance tests and real-world task benchmarks like MedAgentBench [3] [10].

For researchers and professionals, this underscores a critical point: success on the USMLE is a necessary but insufficient benchmark for validating AI's readiness for clinical application. Future research and development must prioritize creating and utilizing more nuanced evaluation frameworks that test not just medical knowledge, but also clinical judgment, adaptability, and the safe execution of tasks within complex healthcare environments. The tools and methodologies outlined in this guide provide a foundation for this essential work.

In the high-stakes domain of artificial intelligence applied to medical education and research, model performance validation transcends technical exercise to become an ethical imperative. The deployment of AI for predicting medical student performance or diagnosing pathologies carries significant consequences, influencing educational pathways and clinical decisions. Within this context, evaluation metrics serve as the crucial translation layer between algorithmic outputs and actionable insights. While accuracy often serves as an intuitive starting point for model assessment, its limitations in isolation are particularly pronounced in medical contexts where data imbalances are common and the costs of different error types are vastly unequal [12] [13]. A comprehensive understanding of accuracy, precision, recall, F1-score, and AUC-ROC is therefore indispensable for researchers and developers working at the intersection of AI and medical science. This guide provides a structured comparison of these key metrics, grounded in experimental protocols and data from real-world medical education applications, to inform responsible model selection and validation.

Metric Definitions and Clinical Interpretations

Core Binary Classification Metrics

In binary classification tasks common to medical AI—such as predicting student exam failure or identifying pathological findings—model performance is fundamentally derived from four outcomes in the confusion matrix: True Positives (TP), True Negatives (TN), False Positives (FP), and False Negatives (FN) [14]. These building blocks form the basis for all subsequent metrics:

- True Positive (TP): A correctly identified positive instance (e.g., a student at risk of failing is correctly flagged).

- True Negative (TN): A correctly identified negative instance (e.g., a student likely to pass is correctly identified).

- False Positive (FP): A negative instance incorrectly classified as positive (Type I error).

- False Negative (FN): A positive instance incorrectly classified as negative (Type II error) [14].

From these fundamentals, the primary evaluation metrics are derived:

Accuracy measures overall correctness by calculating the proportion of all correct predictions among the total predictions: Accuracy = (TP + TN) / (TP + TN + FP + FN) [14]. While intuitive and widely used, accuracy provides a misleadingly optimistic picture when class distribution is imbalanced, a phenomenon known as the "accuracy paradox" [13].

Precision (Positive Predictive Value) quantifies the reliability of positive predictions by measuring the proportion of correctly identified positives among all instances predicted as positive: Precision = TP / (TP + FP) [12] [14]. High precision indicates that when the model predicts a positive, it can be trusted.

Recall (Sensitivity or True Positive Rate) measures completeness by calculating the proportion of actual positives correctly identified: Recall = TP / (TP + FN) [12] [14]. High recall indicates the model misses few positive instances.

F1-Score provides a single metric that balances both precision and recall through their harmonic mean: F1-Score = 2 × (Precision × Recall) / (Precision + Recall) [15]. This metric is particularly valuable when seeking an equilibrium between false positives and false negatives.
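The four formulas above translate directly into code. The sketch below is a minimal illustration; the function name `classification_metrics` and the convention of returning 0.0 when a denominator is zero are assumptions, and the cohort numbers are invented to show the accuracy paradox.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, and F1 from confusion-matrix counts."""
    total = tp + tn + fp + fn
    # Assumption: report 0.0 when a ratio is undefined (no predicted or
    # actual positives) rather than raising an error.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

# Hypothetical cohort: 100 students, 5 of whom fail (the positive class).
# A model that predicts "pass" for everyone looks accurate but finds no one.
always_pass = classification_metrics(tp=0, tn=95, fp=0, fn=5)
print(always_pass["accuracy"], always_pass["recall"])  # 0.95 0.0

# A model that flags 9 students and catches 4 of the 5 who fail.
flagging = classification_metrics(tp=4, tn=90, fp=5, fn=1)
print(flagging)
```

The second model has slightly lower accuracy than the first yet is far more useful for early intervention, which is why recall and F1 are reported alongside accuracy.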

AUC-ROC (Area Under the Receiver Operating Characteristic Curve) represents the model's ability to distinguish between classes across all classification thresholds [15]. The ROC curve plots the True Positive Rate (recall) against the False Positive Rate at various threshold settings, with AUC providing an aggregate measure of performance across all thresholds [15].
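One way to see why AUC is threshold-independent is to compute it without drawing the curve at all: the area under the ROC curve equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as one half. The sketch below uses that pairwise definition. The function name and the example scores are hypothetical, and the quadratic loop is meant for illustration rather than large datasets.

```python
def auc_roc(labels, scores):
    """AUC as the chance that a random positive outranks a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    # Count a win when the positive scores higher, half a win on a tie.
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical risk scores: label 1 = student failed, 0 = student passed.
labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.5, 0.1]
print(auc_roc(labels, scores))  # 0.75
```

Because only the ordering of scores matters, rescaling every score by the same increasing function leaves the result unchanged.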

Metric Selection Framework for Medical Education Applications

The diagram below illustrates the decision pathway for selecting appropriate metrics based on research objectives and dataset characteristics in medical education contexts:

Comparative Analysis of Key Performance Metrics

Metric Characteristics and Applications

Table 1: Comprehensive Comparison of AI Evaluation Metrics for Medical Education Research

| Metric | Formula | Optimal Value | Strengths | Weaknesses | Medical Education Use Case |
|---|---|---|---|---|---|
| Accuracy | (TP + TN) / (TP + TN + FP + FN) [14] | 1.0 | Intuitive; Easy to calculate and explain [13] | Misleading with imbalanced data [13] | Initial screening when pass/fail rates are comparable |
| Precision | TP / (TP + FP) [14] | 1.0 | Measures reliability of positive predictions [12] | Ignores false negatives [15] | When false alarms are costly (e.g., wrongly flagging a student as at risk) |
| Recall (Sensitivity) | TP / (TP + FN) [14] | 1.0 | Identifies most at-risk students [12] | Ignores false positives [15] | Critical for early intervention systems where missing at-risk students is unacceptable |
| F1-Score | 2 × (Precision × Recall) / (Precision + Recall) [15] | 1.0 | Balanced view of PPV and TPR [15] | Obscures which metric (P or R) is driving score [15] | Holistic assessment when both FP and FN have consequences |
| AUC-ROC | Area under ROC curve | 1.0 | Threshold-independent; Measures ranking capability [15] | Overoptimistic with imbalanced data [15] | Comparing model architecture performance across institutions |

Experimental Evidence from Medical Education Research

Table 2: Experimental Performance Metrics from AI in Medical Education Studies

| Study Context | Model Type | Accuracy | Precision | Recall | F1-Score | AUC-ROC | Dataset Characteristics |
|---|---|---|---|---|---|---|---|
| Medical Student Performance Prediction [16] | Stacking Meta-Model | Not Reported | 0.966 (CMPIE); 0.994 (CCA) | Not Reported | 0.966 (CMPIE); 0.994 (CCA) | 0.97 (CMPIE); 0.99 (CCA) | 997 students (CMPIE); 777 students (CCA) |
| Colorectal Cancer LNM Prediction [17] | Deep Learning (Meta-Analysis) | Not Reported | Not Reported | 0.87 | Not Reported | 0.88 | 12 studies, 8,540 patients |
| Brain CT Report Classification [18] | DistilBERT Transformer | Not Reported | Not Reported | 0.91 | 0.89 | Not Reported | 1,861 CT reports |

Experimental Protocols for Metric Validation

Model Training and Evaluation Framework

The experimental methodology employed in rigorous medical education AI research typically follows a structured protocol to ensure validity and generalizability. The study on predicting medical students' performance in comprehensive assessments provides an exemplary framework [16]:

Data Preparation and Preprocessing:

- Conduct significance testing (Chi-square) to identify attributes with significant differences between pass/fail groups (p < 0.05)

- Address missing data through appropriate imputation or exclusion

- Encode categorical variables using one-hot encoding

- Apply Cramer's V (> 0.8) to eliminate redundant categorical pairs

- Implement resampling techniques (SMOTE, Tomek Links, SMOTE-ENN) to address class imbalance [16]
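The redundancy check in the preprocessing steps above relies on Cramér's V, which rescales the chi-square statistic of a contingency table to the range 0 to 1. The sketch below computes it from raw counts without any bias or continuity correction. Whether the study applied a correction is not stated, so that choice is an assumption, as are the function name and the example table. It also assumes every row and column total is nonzero.

```python
from math import sqrt

def cramers_v(table):
    """Cramér's V for an r x c contingency table given as a list of rows."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of the two attributes.
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    k = min(len(table), len(table[0])) - 1
    return sqrt(chi2 / (n * k))

# Hypothetical cross-tabulation of two categorical attributes (100 students).
table = [[45, 5],
         [5, 45]]
print(round(cramers_v(table), 3))  # 0.8
```

A pair whose value exceeds the 0.8 cut-off would be treated as redundant, and one attribute of the pair would be dropped before modeling.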

Model Development and Validation:

- Utilize ensemble models (Random Forest, Adaptive Boosting, XGBoost) as base learners

- Implement stacking meta-models with logistic regression as meta-learner

- Employ rigorous train-test splits (67%-33% partition)

- Apply nested cross-validation (5 outer folds, 3 inner folds) with GridSearchCV for hyperparameter tuning

- Compute final performance metrics exclusively on held-out test sets to prevent data leakage [16]
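The nested structure in the validation steps above is easiest to follow as two loops: the inner loop chooses hyperparameters using only the outer-training data, and the outer loop scores that choice on a fold the tuning never saw. The sketch below is library-free to expose that structure. The study used GridSearchCV for the inner search, so this is a simplified stand-in rather than its code. The function names, the unshuffled and unstratified folds, and the `fit_and_score` callback are all assumptions.

```python
def k_fold_indices(indices, k):
    """Split `indices` into k (train, test) pairs with near-equal test folds."""
    folds = [indices[i::k] for i in range(k)]
    return [
        ([x for j, fold in enumerate(folds) if j != i for x in fold], folds[i])
        for i in range(k)
    ]

def nested_cv(n_samples, fit_and_score, param_grid, outer_k=5, inner_k=3):
    """Return one held-out score per outer fold.

    fit_and_score(params, train_idx, test_idx) -> float (higher is better);
    it is expected to train on train_idx and evaluate on test_idx.
    """
    outer_scores = []
    all_indices = list(range(n_samples))
    for outer_train, outer_test in k_fold_indices(all_indices, outer_k):
        # Inner loop: pick hyperparameters using the outer-training data only.
        def inner_mean(params):
            splits = k_fold_indices(outer_train, inner_k)
            return sum(fit_and_score(params, tr, va)
                       for tr, va in splits) / inner_k
        best_params = max(param_grid, key=inner_mean)
        # Outer loop: score the chosen setting on the untouched outer fold.
        outer_scores.append(fit_and_score(best_params, outer_train, outer_test))
    return outer_scores

# Toy scorer (hypothetical): pretends that params == 2 is the best setting.
def toy_scorer(params, train_idx, test_idx):
    return -abs(params - 2)

print(nested_cv(30, toy_scorer, param_grid=[1, 2, 3]))
```

Because the outer test fold never enters the inner search, the outer scores estimate performance of the whole tuning procedure rather than of one lucky hyperparameter setting.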

Explainability and Clinical Translation:

- Implement SHapley Additive exPlanations (SHAP) for model interpretability

- Generate global feature importance plots (cohort-level insights)

- Create individual force/waterfall plots for student-level predictions [16]

The Researcher's Toolkit: Essential Methodological Components

Table 3: Essential Research Components for AI Validation in Medical Education

| Component | Function | Example Implementation |
|---|---|---|
| Resampling Techniques | Address class imbalance in educational outcomes | SMOTE, Borderline SMOTE, Tomek Links, SMOTE-ENN [16] |
| Ensemble Methods | Improve predictive performance through model diversity | Random Forest, Adaptive Boosting, XGBoost [16] |
| Stacking Meta-Models | Synthesize complementary strengths of base models | Logistic Regression as meta-learner [16] |
| Explainable AI (XAI) | Provide transparency for model logic and predictions | SHapley Additive exPlanations (SHAP) [16] |
| Cross-Validation | Ensure robustness and generalizability of performance estimates | Nested cross-validation with separate hyperparameter tuning [16] |
| Statistical Analysis | Identify significant predictors and relationships | Chi-square tests, Cramér's V for redundancy checking [16] |

The validation of AI models for medical education applications requires a nuanced, multi-metric approach that aligns with both statistical rigor and clinical relevance. As evidenced by experimental results from medical student performance prediction research, exclusive reliance on accuracy provides an incomplete picture of model utility, particularly given the inherent class imbalances in educational outcomes [16]. The integration of precision, recall, F1-score, and AUC-ROC creates a comprehensive assessment framework that addresses different aspects of model performance relevant to educational decision-making. Furthermore, the implementation of explainable AI techniques such as SHAP values enhances translational potential by providing interpretable insights for educators and administrators [16]. As AI continues to transform medical education assessment paradigms, researchers must select evaluation metrics that not only quantify predictive performance but also reflect the real-world consequences of algorithmic decisions on student pathways and institutional resource allocation.

This comparison guide examines the critical disconnect between artificial intelligence (AI) performance on standardized medical benchmarks and its application in genuine clinical reasoning environments. For researchers, scientists, and drug development professionals, understanding this gap is paramount for developing AI tools that translate safely into patient care and regulatory acceptance. Current evidence reveals that high test scores on synthetic benchmarks often fail to predict real-world clinical utility, creating a significant translational barrier that the industry must overcome through rigorous validation frameworks and sociotechnical integration [19] [20].

The validation of AI models in healthcare increasingly relies on standardized testing approaches analogous to medical student examinations. However, emerging evidence suggests that strong performance on controlled benchmarks does not necessarily equate to clinical competence in real-world settings [19]. This gap mirrors concerns in medical education where pass-fail standardized testing has raised questions about adequately assessing clinical readiness [21]. In AI development, this paradox manifests when models excel at pattern recognition in curated datasets but struggle with the nuanced, dynamic, and uncertain environments characteristic of actual clinical practice [20]. Understanding this disconnect is particularly crucial for drug development professionals who must navigate regulatory pathways increasingly focused on real-world performance evidence rather than technical metrics alone [22] [23].

Comparative Analysis of AI Evaluation Frameworks

HealthBench: Standardized Clinical Conversation Analysis

OpenAI's HealthBench represents a significant advancement in systematic AI evaluation, encompassing 5,000 multi-turn clinical conversations benchmarked against 48,562 clinician-developed criteria [19]. This framework evaluates models across five key behavioral dimensions: accuracy, completeness, context awareness, communication, and instruction-following.

Table: HealthBench Evaluation Framework Metrics

| Evaluation Dimension | Assessment Focus | Methodology | Key Findings |

|---|---|---|---|

| Clinical Accuracy | Factual correctness of medical information | Comparison against clinician-developed rubrics | High scores possible in controlled settings |

| Completeness | Thoroughness of clinical assessment | Evaluation of coverage across symptom domains | May miss nuanced patient presentations |

| Context Awareness | Appropriate response to conversation flow | Analysis of dialog coherence and relevance | Struggles with complex, multi-system cases |

| Communication Quality | Patient-friendly explanation and empathy | Assessment of language appropriateness | Often technically accurate but clinically awkward |

| Instruction-Following | Adherence to specific clinical guidelines | Evaluation against protocol requirements | May rigidly apply rules without clinical judgment |

HealthBench's development involved 262 clinicians across 26 specialties and 60 countries, providing broad expert validation [19]. The automated grading system demonstrated high concordance with physician ratings (macro F1 = 0.71), comparable to inter-physician agreement, enabling scalable evaluation. However, this approach primarily assesses static, offline interactions rather than dynamic clinical reasoning processes [19].
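To make the concordance figure concrete, the sketch below computes a macro-averaged F1 score between two sets of binary judgments. The labels are hypothetical and are not HealthBench data; the function only illustrates how a macro F1 of this kind is calculated, not how HealthBench itself implements grading.

```python
def macro_f1(reference, predicted):
    """Unweighted mean of the per-class F1 scores."""
    labels = sorted(set(reference) | set(predicted))
    pairs = list(zip(reference, predicted))
    per_class = []
    for label in labels:
        tp = sum(r == label and p == label for r, p in pairs)
        fp = sum(r != label and p == label for r, p in pairs)
        fn = sum(r == label and p != label for r, p in pairs)
        denom = 2 * tp + fp + fn
        per_class.append(2 * tp / denom if denom else 0.0)
    return sum(per_class) / len(per_class)


# Hypothetical judgments: was a given rubric criterion met?
physician_labels = [True, True, False, True, False, False, True, False]
grader_labels = [True, False, False, True, False, True, True, False]

agreement = macro_f1(physician_labels, grader_labels)
print(f"macro F1 = {agreement:.2f}")
```

Because the score is averaged over classes rather than over samples, agreement on the rarer label counts as much as agreement on the common one.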

Real-World Clinical Reasoning Assessment

In contrast to standardized benchmarks, genuine clinical reasoning operates within complex, uncertain environments where AI systems frequently demonstrate performance degradation.

Table: Real-World Clinical Reasoning Challenges for AI Systems

| Clinical Reasoning Aspect | AI Performance Gap | Clinical Impact | Example Cases |

|---|---|---|---|

| Reasoning Under Uncertainty | Struggles with ambiguous or conflicting data | May lead to inappropriate diagnostic certainty | Sepsis diagnosis with nonspecific symptoms [20] |

| Longitudinal Patient Assessment | Limited integration of evolving patient status | Inability to detect subtle clinical trends | Deteriorating patients vs. recovering patients with similar data points [20] |

| Multimodal Data Integration | Difficulty synthesizing disparate data sources | Fragmented clinical picture | Combining labs, imaging, and clinical notes [19] |

| Adaptation to New Information | Limited contextual updating capability | Failure to revise diagnoses with new data | Changing diagnostic considerations in evolving illness |

| Cognitive Bias Mitigation | May amplify biases in training data | Perpetuates healthcare disparities | Reduced accuracy for specific demographic groups [24] |

The case of sepsis management illustrates these challenges particularly well. Despite AI systems achieving high accuracy on retrospective data, they often struggle with the inherent ambiguity of sepsis definitions, variability in clinical presentations, and the need for dynamic treatment adjustments based on patient response [20]. This performance gap becomes most evident in pediatric populations where disease heterogeneity further compounds these issues [20].

Experimental Protocols for Bridging the Validation Gap

Prospective "Silent-Mode" Clinical Trials

To address the limitations of benchmark-based validation, researchers propose prospective, "silent-mode" clinical trials that embed AI within real clinical workflows without initially affecting patient care [19].

Methodology:

- Integration: Implement AI tools within electronic health record (EHR) systems to generate recommendations in real-time based on live, multimodal patient data

- Concealment: Record AI recommendations for analysis without displaying them to treating clinicians

- Comparison: Compare AI recommendations with clinician decisions at the encounter level

- Outcome Assessment: Evaluate association between model-clinician discordance and prespecified longitudinal outcomes (e.g., 30-day readmission, adjudicated diagnostic accuracy, adverse events) with appropriate risk adjustment [19]

This approach provides high-quality evidence of clinical utility and safety without compromising patient care, effectively bridging the gap between benchmark performance and real-world impact.
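The comparison and outcome-assessment steps can be sketched as follows. The `Encounter` fields and the example records are hypothetical, and the summary is deliberately crude: it reports unadjusted outcome rates, whereas a real analysis would apply the risk adjustment described above.

```python
from dataclasses import dataclass


@dataclass
class Encounter:
    ai_recommendation: str    # logged silently, never shown to the clinician
    clinician_decision: str
    readmitted_30d: bool      # one prespecified outcome (assumed for illustration)


def discordance_summary(encounters):
    """Unadjusted discordance rate and outcome rates by agreement status."""
    discordant = [e for e in encounters if e.ai_recommendation != e.clinician_decision]
    concordant = [e for e in encounters if e.ai_recommendation == e.clinician_decision]

    def outcome_rate(group):
        if not group:
            return float("nan")
        return sum(e.readmitted_30d for e in group) / len(group)

    return {
        "discordance_rate": len(discordant) / len(encounters),
        "outcome_rate_discordant": outcome_rate(discordant),
        "outcome_rate_concordant": outcome_rate(concordant),
    }


# Hypothetical silent-mode log.
log = [
    Encounter("admit", "admit", False),
    Encounter("discharge", "admit", False),
    Encounter("discharge", "discharge", False),
    Encounter("admit", "discharge", True),
    Encounter("admit", "admit", True),
    Encounter("discharge", "discharge", False),
]
summary = discordance_summary(log)
print(summary)
```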

Randomized Controlled Trials for High-Impact AI

For AI systems with significant potential clinical impact, the same rigorous validation required for therapeutic interventions should be applied [22].

Methodology:

- Adaptive Trial Designs: Implement statistical approaches that allow for continuous model updates while preserving rigor

- Digitized Workflows: Utilize electronic systems for efficient data collection and analysis

- Pragmatic Designs: Focus on real-world effectiveness rather than ideal conditions

- Economic and Clinical Utility Endpoints: Incorporate cost-effectiveness and workflow improvement metrics alongside traditional efficacy measures [22]

The FDA's 2025 draft guidance emphasizes a risk-based credibility assessment framework with seven key steps for evaluating AI model reliability in specific contexts of use [23]. This approach recognizes that validation requirements should be proportionate to the model's potential impact on patient safety and regulatory decisions.

The Scientist's Toolkit: Essential Research Reagents

Table: Key Reagent Solutions for AI Clinical Reasoning Research

| Research Reagent | Function | Application in Validation |

|---|---|---|

| Synthetic Clinical Datasets | Provides standardized benchmark scenarios | Initial model training and validation (e.g., HealthBench) [19] |

| De-identified Real Patient Data | Offers authentic clinical complexity | Testing model performance in realistic environments [20] |

| Model-as-Judge Architectures | Enables scalable evaluation | Automated assessment alignment with clinician ratings [19] |

| Bias Detection Frameworks | Identifies performance disparities | Ensuring equitable performance across demographic groups [24] |

| Digital Twin Simulations | Creates virtual patient populations | Protocol optimization and hypothesis testing [24] |

| Explainability Toolkits | Provides model decision transparency | Interpreting AI outputs for clinical validation [23] |

Visualization of AI Clinical Validation Workflows

Current AI Validation Paradigm

Proposed Enhanced Validation Framework

Regulatory and Implementation Considerations

Evolving Regulatory Frameworks

Regulatory agencies worldwide are developing frameworks to address the gap between AI test performance and clinical utility:

- FDA's 2025 Draft Guidance: Establishes a risk-based credibility assessment framework focusing on model influence and decision consequence [23]

- EMA's Reflection Paper: Emphasizes rigorous upfront validation and comprehensive documentation [23]

- MHRA's AI Airlock: Provides a regulatory sandbox for testing innovative approaches [23]

These frameworks increasingly recognize that prospective clinical evidence rather than retrospective accuracy metrics should form the basis for regulatory decisions about AI tools in healthcare [22] [23].

Sociotechnical Integration Strategies

Successful AI implementation requires moving beyond technical performance to address workflow integration:

- Complement, Don't Replace: Design AI systems to augment rather than disrupt clinical reasoning processes [20]

- Workflow Compatibility: Ensure AI tools integrate seamlessly with established clinical workflows and EHR systems [20]

- Human-Centered Design: Prioritize user experience, training requirements, and interpretability of AI outputs [20]

- Continuous Monitoring: Implement post-deployment surveillance for model drift and performance degradation [22] [24]

The critical gap between high test scores and genuine clinical reasoning represents both a challenge and opportunity for AI in healthcare. For drug development professionals, addressing this gap requires:

- Moving beyond synthetic benchmarks to real-world validation

- Embracing prospective evaluation methodologies that measure clinical impact

- Prioritizing sociotechnical integration over pure technical performance

- Aligning with evolving regulatory expectations for clinical evidence

By adopting these approaches, the field can transition from AI systems that excel at tests to those that genuinely enhance clinical reasoning, patient care, and drug development outcomes.

Building a Robust Validation Framework: From Data Curation to Model Deployment

The pursuit of creating AI models that can match or exceed human expertise in medical domains requires rigorous validation against standardized benchmarks. One critical benchmark involves comparing model performance against medical student exam results, which demands specialized approaches to data sourcing and preprocessing. This comparative guide examines the core methodologies for handling academic datasets and addressing class imbalances, which are pivotal for validating AI model performance against medical student capabilities. Research on medical question-answering datasets like MEDQA, which contains professional medical licensing exam questions from the United States, Mainland China, and Taiwan, demonstrates the complexity of this task: the best methods reported with the dataset achieved only 36.7%, 70.1%, and 42.0% accuracy on these respective question sets [25].

The validation of AI models against medical student exam performance presents unique data challenges that extend beyond conventional machine learning applications. Medical AI validation requires processing multimodal data—including structured electronic health records, medical imagery, clinical text, and temporal physiological data—while maintaining the capacity for complex reasoning and knowledge application that defines medical expertise [26]. Furthermore, the inherent imbalances in medical datasets, where certain conditions or outcomes are naturally rare, necessitate specialized handling techniques to prevent model bias and ensure generalizable performance. This guide systematically compares the current methodologies for addressing these challenges, providing researchers with evidence-based approaches for robust medical AI validation.

Data Sourcing Strategies for Medical AI Validation

Academic Medical Dataset Acquisition

Sourcing appropriate data for medical AI validation requires accessing diverse, high-quality datasets that reflect the complexity of medical knowledge assessment. The MEDQA dataset represents a pioneering effort in this domain, comprising 12,723 English, 34,251 Simplified Chinese, and 14,123 Traditional Chinese questions sourced from professional medical licensing examinations in the United States, Mainland China, and Taiwan respectively [25]. These questions demand not only factual recall but also clinical decision-making capabilities, mirroring the challenges faced by medical students. Researchers typically acquire such datasets through formal academic channels, often requiring ethical approvals and data use agreements due to the sensitive nature of medical information.

The process of sourcing medical data for AI validation extends beyond mere collection to encompass careful curation and documentation. For instance, the MEDQA project collected 18 widely used English medical textbooks for the USMLE component and 33 Simplified Chinese medical textbooks for the MCMLE, and it shared documentation between USMLE and TWMLE due to overlapping source materials [25]. This meticulous approach ensures that models have access to the relevant knowledge sources that medical students would utilize. When sourcing medical data, researchers must consider linguistic and regional variations in medical practice, disease prevalence, and treatment protocols, all of which can significantly impact model performance and generalizability across different healthcare contexts.

Multimodal Medical Data Integration

Modern medical AI validation increasingly leverages multimodal data to more comprehensively assess model capabilities against human performance. Recent advances have demonstrated the value of integrating structured electronic health records (including demographics, physiological parameters, laboratory findings, medications, procedures, and diagnoses) with unstructured data such as medical images (X-rays, CT, MRI), clinical text, temporal physiological signals, and genomic information [26]. This multimodal approach more accurately reflects the integrative reasoning processes employed by medical professionals and enables more meaningful comparisons between AI and human performance.

The MedMPT model developed by researchers at Tsinghua University exemplifies the potential of multimodal integration, utilizing 154,274 chest CT images and corresponding radiology reports for multi-modal self-supervised learning [27]. This approach enables the model to process multi-source heterogeneous data and supports multiple typical clinical tasks, including lung disease diagnosis, radiology report generation, and medication recommendation. For medical AI validation against student performance, such multimodal frameworks provide a more comprehensive assessment of clinical reasoning capabilities compared to unimodal approaches, potentially identifying specific strengths and limitations in both artificial and human intelligence.

Table 1: Representative Multimodal Medical Datasets for AI Validation

| Dataset Name | Data Modalities | Sample Size | Primary Application | Performance Metrics |

|---|---|---|---|---|

| MEDQA [25] | Medical exam questions (text) | 61,097 questions | Medical knowledge assessment | Accuracy: 36.7% (EN), 70.1% (CN-S), 42.0% (CN-T) |

| MedMPT [27] | CT images, radiology reports | 154,274 cases | Respiratory disease diagnosis, report generation | Leading performance in multiple clinical tasks |

| EHR Multimodal [26] | Structured data, images, text, signals | Varies by study | Comprehensive clinical decision support | Superior to single-modal approaches |

Preprocessing Techniques for Imbalanced Medical Data

Understanding Data Imbalance in Medical Contexts

Imbalanced datasets present a fundamental challenge in medical AI validation, particularly when comparing model performance to human capabilities on rare conditions or complex clinical scenarios. An imbalanced dataset is one where class representations are unequal, with some classes having significantly fewer samples than others [28] [29]. In medical contexts, this imbalance reflects real-world clinical realities, much as it does in other domains: in fraud detection most transactions are legitimate, and in patient churn prediction most patients continue services [28]. When the imbalance ratio exceeds approximately 4:1, classifiers tend to become biased toward the majority class, potentially compromising performance on critical minority classes that may represent rare but clinically significant conditions [28].

The conventional accuracy metric becomes particularly misleading with imbalanced medical data. A classifier that achieves 90% accuracy on a dataset where 90% of samples belong to a single class may be practically useless if it simply predicts the majority class for every sample [28] [30]. This limitation necessitates alternative evaluation metrics and specialized processing techniques when validating medical AI systems against human performance, particularly for recognizing rare conditions where medical students might demonstrate specific expertise compared to AI models.
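The 90% scenario is easy to reproduce. The following sketch uses a hypothetical cohort of 100 patients and a degenerate "classifier" that always predicts the majority class; the numbers are illustrative only.

```python
# Hypothetical cohort: 10 patients with a rare condition (1), 90 without (0).
y_true = [1] * 10 + [0] * 90
# A "classifier" that always predicts the majority class.
y_pred = [0] * len(y_true)

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
true_positives = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
recall = true_positives / sum(y_true)

print(f"accuracy = {accuracy:.2f}, recall on rare class = {recall:.2f}")
```

The headline accuracy looks strong even though not a single patient with the rare condition is identified.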

Data-Level Approaches: Sampling Techniques

Data-level approaches address class imbalance by modifying the dataset composition through various sampling strategies before training models. These techniques are particularly valuable for medical AI validation where collecting additional rare case samples may be impractical or ethically challenging.

Random Sampling Methods

Random sampling represents the most straightforward approach to addressing data imbalance. Random oversampling increases the representation of minority classes by replicating existing samples, while random undersampling reduces majority class representation by selecting a subset of samples [28] [29]. Python's imbalanced-learn (imblearn) library provides implementations of both approaches.

While simple to implement, random oversampling may lead to overfitting by creating exact duplicates of minority class samples, while random undersampling may discard potentially useful majority class information [29]. The appropriate balance between these approaches depends on the specific medical validation context and the degree of initial imbalance.
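A minimal sketch of both random sampling strategies, written in plain Python so that the mechanics are visible. The function names and toy data are assumptions made for illustration; in practice, imblearn's `RandomOverSampler` and `RandomUnderSampler` classes serve the same purpose.

```python
import random
from collections import Counter, defaultdict


def _group_by_class(X, y):
    groups = defaultdict(list)
    for features, label in zip(X, y):
        groups[label].append(features)
    return groups


def random_oversample(X, y, seed=0):
    """Replicate randomly chosen samples until every class matches the largest."""
    rng = random.Random(seed)
    groups = _group_by_class(X, y)
    target = max(len(rows) for rows in groups.values())
    X_out, y_out = [], []
    for label, rows in groups.items():
        extra = [rng.choice(rows) for _ in range(target - len(rows))]
        X_out.extend(rows + extra)
        y_out.extend([label] * target)
    return X_out, y_out


def random_undersample(X, y, seed=0):
    """Keep a random subset of each class, sized to match the smallest class."""
    rng = random.Random(seed)
    groups = _group_by_class(X, y)
    target = min(len(rows) for rows in groups.values())
    X_out, y_out = [], []
    for label, rows in groups.items():
        X_out.extend(rng.sample(rows, target))
        y_out.extend([label] * target)
    return X_out, y_out


# Toy data: 8 majority-class samples and 2 minority-class samples.
X = [[float(i)] for i in range(10)]
y = [0] * 8 + [1] * 2

X_over, y_over = random_oversample(X, y)
X_under, y_under = random_undersample(X, y)
print(Counter(y_over), Counter(y_under))
```

Resampling should be applied to the training split only, so that duplicated samples never leak into validation or test data.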

Intelligent Sampling Algorithms

Advanced sampling techniques improve upon random approaches by generating synthetic samples or employing more strategic selection criteria. The Synthetic Minority Over-sampling Technique (SMOTE) generates synthetic minority class samples by interpolating between existing instances rather than simply replicating them [28] [29]. For a minority sample x, SMOTE identifies its k-nearest neighbors, then creates new samples along the line segments joining x to its neighbors according to the formula: x_new = x + rand(0,1) * (x' - x), where x' is a randomly selected neighbor [29].
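The interpolation formula can be sketched directly. This is a simplified illustration of the SMOTE idea rather than a reference implementation: the function name, the toy feature vectors, and the default of k = 5 are assumptions, and imblearn's `SMOTE` class would normally be used instead.

```python
import math
import random


def smote(minority, n_synthetic, k=5, seed=0):
    """Create synthetic points on segments between minority samples and their neighbours."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_synthetic):
        x = rng.choice(minority)
        # k nearest neighbours of x among the other minority samples
        others = [m for m in minority if m is not x]
        neighbours = sorted(others, key=lambda m: math.dist(x, m))[:k]
        x_prime = rng.choice(neighbours)
        gap = rng.random()  # rand(0, 1) in the formula
        synthetic.append([xi + gap * (xpi - xi) for xi, xpi in zip(x, x_prime)])
    return synthetic


# Toy minority class with two numeric features.
minority = [[1.0, 2.0], [2.0, 3.0], [3.0, 1.0], [2.5, 2.5]]
new_points = smote(minority, n_synthetic=6, k=2)
print(new_points)
```

Every synthetic point lies between two real minority samples, which is why the technique suits continuous features better than categorical ones.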

Borderline-SMOTE represents a refinement that focuses specifically on minority samples near class boundaries, which are often most critical for classification accuracy [29]. Adaptive Synthetic Sampling (ADASYN) further extends this approach by generating more synthetic samples for minority class examples that are harder to learn [29]. These advanced techniques can be particularly valuable for medical AI validation where decision boundaries between conditions may be nuanced and clinically significant.

Ensemble Sampling Approaches

Ensemble methods combine sampling with multiple model training to address imbalance while maintaining model diversity. EasyEnsemble employs independent sampling to create multiple balanced subsets, training separate classifiers on each subset and combining their predictions [29]. BalanceCascade uses a sequential approach where correctly classified majority class samples are progressively removed from subsequent training sets [29]. These approaches can be particularly effective for medical AI validation where robustness across different clinical scenarios is essential.
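The EasyEnsemble idea of training one classifier per balanced subset and combining their votes can be sketched as below. A nearest-centroid rule stands in for whatever base classifier a study would actually use, and all names and data are hypothetical; imblearn's `EasyEnsembleClassifier` offers a full implementation.

```python
import math
import random


def centroid(rows):
    return [sum(column) / len(rows) for column in zip(*rows)]


def easy_ensemble_fit(majority, minority, n_subsets=5, seed=0):
    """Fit one nearest-centroid member per balanced subset of the data."""
    rng = random.Random(seed)
    members = []
    for _ in range(n_subsets):
        # Independent undersampling: as many majority samples as minority samples.
        subset = rng.sample(majority, len(minority))
        members.append((centroid(subset), centroid(minority)))
    return members


def easy_ensemble_predict(members, x):
    """Majority vote over members; returns 1 for the minority class, 0 otherwise."""
    votes = sum(math.dist(x, c_min) < math.dist(x, c_maj) for c_maj, c_min in members)
    return 1 if votes > len(members) / 2 else 0


# Toy data: 20 majority samples on a grid, 3 minority samples far from it.
majority = [[float(i % 5), float(i // 5)] for i in range(20)]
minority = [[8.0, 8.0], [9.0, 9.0], [8.5, 9.5]]

members = easy_ensemble_fit(majority, minority)
print(easy_ensemble_predict(members, [8.5, 8.5]), easy_ensemble_predict(members, [1.0, 1.0]))
```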

Table 2: Comparison of Sampling Techniques for Imbalanced Medical Data

| Technique | Mechanism | Advantages | Limitations | Medical Validation Context |

|---|---|---|---|---|

| Random Oversampling [28] | Replicates minority samples | Simple implementation, preserves all minority information | Risk of overfitting to repeated samples | Suitable for small minority classes in medical data |

| Random Undersampling [28] | Removes majority samples | Reduces computational burden, addresses imbalance | Discards potentially useful majority information | Appropriate for very large majority classes |

| SMOTE [29] | Generates synthetic minority samples | Reduces overfitting risk, creates diverse samples | May create implausible medical samples | Useful for interpolatable medical features |

| Borderline-SMOTE [29] | Focuses on boundary samples | Targets most informative samples | Complex implementation | Valuable for fine diagnostic distinctions |

| ADASYN [29] | Adaptive synthetic generation | Emphasis on difficult samples | May amplify noise | Suitable for heterogeneous medical conditions |

| EasyEnsemble [29] | Multiple balanced subsets | Model diversity, robust performance | Computational intensity | Ideal for high-stakes medical validation |

| BalanceCascade [29] | Progressive sample removal | Strategic sample selection, efficient | Sequential dependency | Appropriate for cascaded clinical decisions |

Algorithm-Level Approaches: Cost-Sensitive Learning

Algorithm-level approaches address data imbalance by modifying the learning process itself rather than altering the training data distribution. Cost-sensitive learning incorporates varying misclassification costs for different classes, directly enforcing a preference for correctly classifying minority samples that might otherwise be overlooked [28] [29]. In medical validation contexts, this approach aligns with clinical priorities where misdiagnosing a serious but rare condition typically carries greater consequences than misclassifying a common benign condition.

The AdaCost algorithm represents an advancement in cost-sensitive learning that adaptively adjusts example weights during boosting: it raises the weights of costly misclassified examples more aggressively and lowers the weights of costly examples that are classified correctly more conservatively [29]. This dynamic adjustment can be particularly valuable for medical AI validation where the clinical significance of different error types may vary across patient populations or clinical contexts. Implementation typically involves modifying the loss function to incorporate asymmetric costs for different types of errors, effectively forcing the model to prioritize performance on medically critical minority classes.
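A minimal sketch of a loss function with asymmetric costs follows. It is not AdaCost; it shows the simpler and widely used idea of weighting each class inversely to its frequency. The weighting heuristic and the example predictions are assumptions chosen for illustration.

```python
import math
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}


def weighted_log_loss(y_true, p_positive, weights):
    """Binary cross-entropy in which each sample's loss is scaled by its class weight."""
    eps = 1e-12
    total = 0.0
    for y, p in zip(y_true, p_positive):
        p = min(max(p, eps), 1 - eps)  # avoid log(0)
        sample_loss = -math.log(p) if y == 1 else -math.log(1 - p)
        total += weights[y] * sample_loss
    return total / sum(weights[y] for y in y_true)


# Hypothetical data: 10 rare-condition cases among 100 patients.
y_true = [0] * 90 + [1] * 10
weights = balanced_class_weights(y_true)

# A model that assigns 5% probability of disease to everyone.
p_positive = [0.05] * len(y_true)
plain = weighted_log_loss(y_true, p_positive, {0: 1.0, 1: 1.0})
costed = weighted_log_loss(y_true, p_positive, weights)
print(weights, round(plain, 3), round(costed, 3))
```

With the class weights applied, the model that ignores the rare condition is penalized far more heavily, which is the behavior the asymmetric cost is meant to produce.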

Alternative algorithm-level approaches include one-class learning and anomaly detection, which reformulate the classification problem to focus specifically on identifying the minority class instances [28] [29]. One-class SVM, for instance, models the distribution of the majority class and identifies deviations as potential minority class instances [29]. These approaches can be particularly effective for medical outlier detection, such as identifying rare diseases or unusual presentations within predominantly healthy populations.

Experimental Framework for Medical AI Validation

Dataset Partitioning and Cross-Validation Strategies

Robust experimental design is essential for meaningful comparison between AI models and medical student performance. Dataset partitioning should carefully maintain class distributions across splits, particularly for imbalanced medical data. The standard approach involves separate training, validation, and test sets, with the validation set used for hyperparameter tuning and early stopping, while the test set remains completely untouched until final evaluation [31]. This separation prevents optimistic bias in performance estimates, which is especially crucial when validating against human capabilities.

Stratified k-fold cross-validation provides enhanced reliability for imbalanced medical data by preserving class proportions in each fold [28]. This approach is particularly valuable for medical AI validation where certain conditions may be rare but clinically significant. In practice, the samples of each class are distributed evenly across the k folds, and each fold serves once as the held-out test set while the remaining folds are used for training.
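The splitting logic can be sketched in a few lines. The function below is an illustrative stand-in, with a hypothetical name and toy labels; scikit-learn's `StratifiedKFold` is the usual choice in real experiments.

```python
import random
from collections import Counter, defaultdict


def stratified_k_fold(labels, k=5, seed=0):
    """Yield (train_indices, test_indices) pairs that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class's samples round-robin across the folds.
        for position, index in enumerate(indices):
            folds[position % k].append(index)

    for i in range(k):
        test_indices = sorted(folds[i])
        train_indices = sorted(
            index for j, fold in enumerate(folds) if j != i for index in fold
        )
        yield train_indices, test_indices


# Toy labels: 90 common cases (0) and 10 rare cases (1).
labels = [0] * 90 + [1] * 10
folds = list(stratified_k_fold(labels, k=5))
for train_indices, test_indices in folds:
    print(Counter(labels[i] for i in test_indices))
```

Each test fold keeps the original 9:1 ratio, so no fold is left without rare cases to evaluate on.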

For extremely limited medical data, leave-one-out cross-validation (where k equals the number of samples) may be appropriate, despite computational intensity [28]. The critical consideration in medical AI validation is ensuring that evaluation reflects real-world clinical scenarios where models will encounter rare conditions with limited examples during training.

Evaluation Metrics for Imbalanced Medical Data

Conventional accuracy metrics are particularly misleading for imbalanced medical datasets, where a naive classifier predicting only the majority class might achieve high accuracy while failing completely on medically critical minority classes [28] [30] [31]. Comprehensive medical AI validation requires multiple complementary metrics that capture different aspects of model performance, particularly for rare conditions.

Precision and recall provide more nuanced insights, with precision measuring the reliability of positive predictions and recall measuring the completeness of positive identification [31]. The F1-score harmonizes these potentially competing objectives into a single metric. For medical validation, the precision-recall curve (PRC) and area under this curve (AUPRC) often provide more meaningful performance characterization than the conventional ROC curve, particularly when positive cases are rare [31]. Additional metrics including true positives, false positives, true negatives, and false negatives enable comprehensive understanding of model behavior across different error types [31].
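The sketch below computes these metrics from scratch on hypothetical scores. The average precision shown is the step-wise summary of the precision-recall curve (mean precision at the rank of each positive case), which is one common way to estimate AUPRC; the 0.5 decision threshold is an assumption.

```python
def precision_recall_f1(y_true, y_pred):
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def average_precision(y_true, scores):
    """Mean of the precision values at the rank of each positive case."""
    ranked = sorted(zip(scores, y_true), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    return total / sum(y_true)


# Hypothetical model scores for 10 patients, 3 of whom have the condition.
y_true = [0, 0, 1, 0, 1, 0, 0, 0, 0, 1]
scores = [0.10, 0.30, 0.90, 0.20, 0.25, 0.35, 0.05, 0.15, 0.60, 0.80]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
ap = average_precision(y_true, scores)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f} AP={ap:.3f}")
```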

These comprehensive metrics enable nuanced comparison between AI models and medical student performance, particularly for recognizing rare conditions where human expertise might demonstrate advantages over pattern recognition systems.

Comparative Analysis of Medical AI Performance

Performance on Standardized Medical Examinations

Rigorous comparison of AI models against medical student performance requires standardized assessment frameworks. The MEDQA benchmark, comprising medical licensing examination questions from the United States, Mainland China, and Taiwan, provides precisely such a framework [25]. The best methods reported with the benchmark achieved 36.7% accuracy on English questions, 70.1% on Simplified Chinese questions, and 42.0% on Traditional Chinese questions, demonstrating both the challenge of this domain and significant variation across linguistic and educational contexts [25]. These results indicate that the models evaluated in that study trailed competent medical students, who typically achieve passing scores on these examinations.

Error analysis reveals distinctive patterns in AI performance on medical assessment. Successful models typically handle questions involving single reasoning steps with specific terminology that information retrieval systems can effectively match [25]. In contrast, models struggle with questions involving common symptoms where retrieved evidence may be non-specific, or multi-step reasoning where partial evidence may be misleading [25]. These limitations highlight specific areas where medical students may maintain advantages, particularly in integrative reasoning and contextual interpretation that transcend pattern matching approaches.

Multimodal Integration Performance

Multimodal approaches represent a promising direction for enhancing medical AI performance to better match human clinical reasoning. The MedMPT model, which integrates chest CT images with corresponding radiology reports, demonstrates the potential of multimodal learning, achieving leading performance in lung disease diagnosis, radiology report generation, and medication recommendation [27]. Such integrative capabilities more closely mirror the multimodal reasoning employed by medical students and practitioners, suggesting pathways for narrowing the performance gap between artificial and human intelligence in medical domains.

Research on electronic health record multimodal integration further demonstrates the superiority of combined data approaches over single-modality analysis [26]. Fusion methods—including early fusion (feature-level integration), late fusion (decision-level integration), and hybrid approaches—enable more robust performance across diverse clinical tasks including disease diagnosis, readmission prediction, mortality risk assessment, and medication recommendation [26]. The transformer architecture with its attention mechanisms has proven particularly effective for medical multimodal integration, enabling modeling of complex relationships across different data types [26].
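The difference between early and late fusion can be shown with a small sketch. The feature values, probabilities, and weights are hypothetical; a real system would feed the fused vector into a trained model and obtain the per-modality probabilities from separately trained models.

```python
def early_fusion(image_features, ehr_features):
    """Feature-level fusion: concatenate modality features into one input vector."""
    return list(image_features) + list(ehr_features)


def late_fusion(probabilities, weights=None):
    """Decision-level fusion: weighted average of per-modality predicted probabilities."""
    if weights is None:
        weights = [1.0] * len(probabilities)
    return sum(w * p for w, p in zip(weights, probabilities)) / sum(weights)


# Hypothetical inputs for one patient.
image_features = [0.2, 0.7]        # e.g. an image embedding
ehr_features = [65.0, 1.0, 0.0]    # e.g. age and two binary flags

fused_vector = early_fusion(image_features, ehr_features)

# Hypothetical disease probabilities from an image model and an EHR model.
equal_vote = late_fusion([0.8, 0.6])
image_heavy = late_fusion([0.8, 0.6], weights=[3.0, 1.0])
print(fused_vector, round(equal_vote, 2), round(image_heavy, 2))
```

Early fusion lets a single model learn cross-modal interactions, while late fusion keeps the modality-specific models independent, which helps when one modality is missing for some patients.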

Table 3: Multimodal Medical AI Performance Across Clinical Tasks

| Clinical Application | Data Modalities | Fusion Method | Performance Advantage |

|---|---|---|---|

| Alzheimer's Dementia Assessment [26] | MRI, Structured EHR | Hybrid fusion (CNN + CatBoost) | Enhanced diagnostic accuracy over single modality |

| Breast Lesion Subtype Diagnosis [26] | Mammography, Structured EHR | Deep feature fusion (CNN + XGBoost) | Improved subtype classification |

| Patient Readmission Prediction [26] | Medical text, Structured EHR | Deep feature fusion (SapBERT + ClinicalBERT) | Superior temporal prediction |

| Drug Recommendation [26] | Medical text, Structured EHR | Attention-based fusion (GAT + Transformer) | More appropriate therapeutic suggestions |

| Mortality Risk Prediction [26] | Temporal physiological data, Structured EHR | Decision fusion (CNN + Dense Network) | Enhanced risk stratification |

Implementation Workflow and Technical Toolkit

Preprocessing Workflow for Imbalanced Medical Data

The following workflow diagram illustrates a comprehensive approach to handling imbalanced medical data for AI validation:

The Researcher's Toolkit for Medical AI Validation

Table 4: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| Imbalanced-learn (imblearn) [28] [29] | Python Library | Imbalance sampling algorithms | All sampling techniques (SMOTE, ADASYN, etc.) |

| TensorFlow with Keras [31] | Deep Learning Framework | Model building with class weights | Cost-sensitive learning implementation |

| MEDQA Dataset [25] | Benchmark Dataset | Medical knowledge assessment | Direct comparison with medical student performance |

| MedMPT Framework [27] | Multimodal Architecture | Medical image and text integration | Multimodal clinical reasoning validation |

| Stratified K-Fold [28] | Validation Method | Maintains class distribution in splits | Robust evaluation on imbalanced data |

| Precision-Recall Metrics [31] | Evaluation Framework | Comprehensive performance assessment | Meaningful metric for rare conditions |

| Transformer Architectures [26] | Model Framework | Multimodal data fusion | Complex clinical reasoning tasks |

The validation of AI models against medical student exam performance represents a rigorous benchmark for assessing medical artificial intelligence. Effective data sourcing and preprocessing, particularly for handling inherent class imbalances, are foundational to meaningful performance comparison. Current evidence suggests that while AI models have made substantial progress in specific medical domains, they still trail human medical expertise in areas requiring complex reasoning, contextual interpretation, and integration of multimodal clinical information [25]. Sampling techniques including SMOTE and ensemble methods, coupled with algorithm-level approaches like cost-sensitive learning, provide essential methodologies for addressing data imbalance and enabling fair comparison between artificial and human medical intelligence [28] [29] [31].

The future of medical AI validation will likely involve increasingly sophisticated multimodal approaches that more closely mirror the integrative reasoning processes of medical experts [27] [26]. Transformer-based architectures with attention mechanisms show particular promise for capturing complex relationships across diverse medical data types, potentially narrowing the performance gap between AI systems and human clinical reasoning. As these technologies evolve, maintaining rigorous approaches to data sourcing and preprocessing will remain essential for ensuring that medical AI validation accurately reflects real-world clinical capabilities and limitations, ultimately supporting the responsible integration of artificial intelligence into medical education and practice.

The ability of artificial intelligence (AI) models to pass rigorous medical licensing examinations has become a critical benchmark for assessing their potential in healthcare and drug development. These exams, such as the United States Medical Licensing Examination (USMLE), establish a high bar for medical knowledge, reasoning, and application, providing a standardized metric against which to validate AI performance. Research has progressively shifted from evaluating individual large language models (LLMs) to exploring sophisticated ensemble learning strategies that combine multiple models to achieve superior accuracy and reliability. This guide provides a comparative analysis of model performance, details key experimental methodologies, and presents a framework for researchers and scientists to select optimal AI models for biomedical applications, directly contextualized within validation research against medical student exam results.

Performance Comparison Tables

Performance of Ensemble Models on Medical QA Datasets

Table 1: Performance comparison of individual LLMs versus ensemble methods on standardized medical question-answering datasets. Accuracy values are presented as percentages (%).

| Model / Ensemble Method | MedMCQA Accuracy (%) | PubMedQA Accuracy (%) | MedQA-USMLE Accuracy (%) |
|---|---|---|---|
| Best Individual LLM (Baseline) | 71.00 [32] | 89.50 [32] | 37.26 [32] |
| Boosting-based Weighted Majority Vote | 35.84 [32] | 96.21 [32] | 37.26 [32] |
| Cluster-based Dynamic Model Selection | 38.01 [32] | 96.36 [32] | 38.13 [32] |

Performance of Leading Individual LLMs on Medical Benchmarks (2025)

Table 2: Performance and characteristics of leading individual Large Language Models as of 2025, based on synthesis of recent reports and analyses. [33]

| Model | Reported MedQA/USMLE Accuracy | Key Strengths | Notable Limitations |
|---|---|---|---|
| OpenAI o1 | 96.9% [33] | Exceptional accuracy on standardized tests [33]. | High latency, cost, and performance drop with biased questions [33]. |
| DeepSeek-R1 | 96.3% [33] | Open-source, excellent for clinical workflow automation and patient communication [33]. | High computational requirements [33]. |
| Grok 2 (xAI) | 92.3% [33] | Strong performance with lower latency and cost (good value) [33]. | Not the absolute top performer in raw accuracy [33]. |
| Polaris 3.0 (Hippocratic AI) | Information Missing | Suite of 22 safety-focused models for patient-facing tasks [33]. | Information Missing |
| Claude 3 Opus | Information Missing | Superior performance on complex radiology diagnostic puzzles (54% accuracy) [33]. | Information Missing |
| GPT-4 | 86% [7] (Earlier benchmark); 78% on surgical image questions [34] | High performance on text and image-based surgical exam questions [34]. | Being surpassed by newer, more specialized models [33]. |
| Med-PaLM 2 | 86.5% [33] | Pioneering model that demonstrated expert-level performance [33]. | Surpassed by more recent models [33]. |

Detailed Experimental Protocols

The LLM-Synergy Ensemble Framework

The LLM-Synergy framework was designed to harness the collective strengths of diverse LLMs for medical question-answering. The experimental protocol for validating this framework is as follows [32]:

- *Step 1: Benchmarking Individual Models*
  - Models: A set of diverse LLMs, including both general-purpose and medically specialized models, is selected (e.g., GPT-4, Llama2, Vicuna, MedAlpaca, MedLlama).
  - Task: Each model is evaluated in a zero-shot setting on the target medical QA datasets to establish baseline performance.

- *Step 2: Ensemble Method Implementation*
  - Method 1: Boosting-based Weighted Majority Vote
    - A boosting algorithm (e.g., AdaBoost) is employed to iteratively learn optimal weights for each constituent LLM based on its historical performance.
    - During inference, the final answer is determined by a weighted majority vote, where models with higher weights have a greater influence on the decision.
  - Method 2: Cluster-based Dynamic Model Selection
    - Question-context embeddings are generated for all training queries.
    - These embeddings are clustered (e.g., using k-means) to identify groups of semantically similar questions.
    - For each cluster, the best-performing LLM from the benchmarking phase is identified and assigned.
    - During inference, a new query is embedded, assigned to the nearest cluster, and the pre-selected optimal LLM for that cluster is used to generate the answer.

- *Step 3: Evaluation*
  - Datasets: The framework is tested on multiple medical QA datasets, such as PubMedQA, MedQA-USMLE, and MedMCQA.
  - Metric: Accuracy is used as the primary evaluation metric, comparing the ensemble methods against the best individual baseline model.
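
The inference-time logic of the two ensemble methods and the accuracy metric can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the LLM-Synergy implementation: the model names, weights, centroids, and embeddings are hypothetical placeholders, the weights are taken as given rather than learned by boosting, and the centroids are assumed to come from a clustering step that is not shown.

```python
import math
from collections import defaultdict


def weighted_majority_vote(answers, weights):
    """Return the answer option with the largest total model weight.

    answers: dict mapping model name -> chosen option (e.g. "A")
    weights: dict mapping model name -> non-negative weight
             (assumed to be learned elsewhere, e.g. by boosting)
    """
    totals = defaultdict(float)
    for model, option in answers.items():
        totals[option] += weights.get(model, 0.0)
    # Iterating in sorted order makes ties resolve alphabetically.
    return max(sorted(totals), key=lambda option: totals[option])


def nearest_cluster(embedding, centroids):
    """Index of the centroid closest to the embedding (Euclidean distance)."""
    return min(range(len(centroids)),
               key=lambda i: math.dist(embedding, centroids[i]))


def select_model(embedding, centroids, best_model_per_cluster):
    """Route a query to the model pre-assigned to its nearest cluster."""
    return best_model_per_cluster[nearest_cluster(embedding, centroids)]


def accuracy(predictions, gold):
    """Percentage of predictions that match the gold answers."""
    correct = sum(p == g for p, g in zip(predictions, gold))
    return 100.0 * correct / len(gold)


# Hypothetical example: one strong model outvotes two weaker ones.
votes = {"model_a": "B", "model_b": "C", "model_c": "C"}
model_weights = {"model_a": 0.9, "model_b": 0.4, "model_c": 0.3}
print(weighted_majority_vote(votes, model_weights))

# Hypothetical 2-D embeddings; real question embeddings have many more dimensions.
print(select_model((0.9, 0.8), [(0.0, 0.0), (1.0, 1.0)], ["model_a", "model_b"]))
```

The weighted vote lets a single high-weight model override a numerical majority, which is the intended effect of weighting by historical performance. The routing function, by contrast, consults only one model per query.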

The AI Council Deliberation Protocol

A distinct ensemble-style approach, termed the "AI Council," demonstrates how structured dialogue between AI instances can enhance performance. The protocol is as follows [2]:

- *Step 1: Council Formation*
  - Multiple instances (e.g., five) of the same base LLM (e.g., GPT-4) are instantiated.

- *Step 2: Structured Deliberation*
  - A facilitator algorithm presents a medical question (e.g., from the USMLE) to all council members.
  - Each member provides an initial answer and, crucially, its reasoning.
  - If responses diverge, the facilitator summarizes the differing rationales and prompts the council to reconsider.

- *Step 3: Consensus Building*
  - The deliberation process continues for multiple rounds until a consensus emerges.
  - This process corrects a significant portion of initial individual errors, leading to higher collective accuracy.
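
The deliberation loop described above can be sketched as follows. This is an illustrative outline only: the council members are stand-in Python callables rather than LLM instances, the `max_rounds` cap and the plurality fallback when no consensus is reached are assumptions not stated in the source, and the facilitator's summary is reduced to a plain concatenation of answers and rationales.

```python
from collections import Counter


def run_council(question, members, max_rounds=3):
    """Run rounds of deliberation until all members agree.

    members: list of callables taking (question, peer_summary) and
             returning an (answer, rationale) tuple. peer_summary is
             None in the first round.
    Returns (final_answer, rounds_used).
    """
    summary = None
    answers = []
    for round_number in range(1, max_rounds + 1):
        responses = [member(question, summary) for member in members]
        answers = [answer for answer, _ in responses]
        if len(set(answers)) == 1:
            return answers[0], round_number  # consensus reached
        # Facilitator step: share every answer and rationale with the council.
        summary = "\n".join(f"{a}: {r}" for a, r in responses)
    # Assumed fallback: plurality vote if the round limit is hit.
    return Counter(answers).most_common(1)[0][0], max_rounds


# Hypothetical stand-ins for LLM council members.
def steady(question, summary):
    return "B", "classic presentation of the condition"


def swayed(question, summary):
    if summary is None:
        return "A", "initial impression"
    return "B", "persuaded by the peers' rationale"


print(run_council("example question", [steady, steady, swayed]))
```

In a real system each callable would wrap a model call whose prompt includes the question and the facilitator's summary. The loop structure stays the same.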

Critical Considerations for Model Evaluation

The Limitations of Multiple-Choice Benchmarks

While MCQs are a common benchmark, research indicates they can significantly overestimate an LLM's true medical capability. A 2025 study introduced FreeMedQA, a benchmark of paired free-response and multiple-choice questions [35].

- Key Finding: LLMs exhibited an average absolute performance deterioration of 39.43% when switching from multiple-choice to free-response format, a drop greater than the 22.29% observed in senior medical students [35].

- Implication: This suggests LLMs may leverage test-taking strategies, such as pattern recognition from answer options, rather than demonstrating genuine reasoning. Free-response or multi-turn dialogue evaluations provide a more rigorous assessment of clinical understanding [35].
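
To make the comparison concrete, the short sketch below distinguishes an absolute drop (a difference in percentage points) from a relative drop (that difference as a share of the multiple-choice score). It assumes the reported figures are percentage-point differences, which is one reading of "absolute"; the accuracy values in the example are hypothetical and are not taken from FreeMedQA.

```python
def absolute_drop(mc_accuracy, fr_accuracy):
    """Percentage-point difference between multiple-choice and free-response accuracy."""
    return mc_accuracy - fr_accuracy


def relative_drop(mc_accuracy, fr_accuracy):
    """The same difference expressed as a percentage of the multiple-choice accuracy."""
    return 100.0 * (mc_accuracy - fr_accuracy) / mc_accuracy


# Hypothetical model: 80% on multiple-choice, 50% on the paired free-response items.
mc, fr = 80.0, 50.0
print(absolute_drop(mc, fr))  # 30.0 percentage points
print(relative_drop(mc, fr))  # 37.5 percent of the multiple-choice score
```

Reporting which of the two quantities is meant matters when comparing models with different multiple-choice baselines, since the same absolute drop is a larger relative loss for a weaker model.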

The MedHELM Holistic Evaluation Framework

The MedHELM framework addresses the need for context-driven evaluation beyond exam scores. It provides a structured methodology for researchers [36]:

- Principle: Evaluate LLMs on the specific tasks and data contexts relevant to the real-world application.

- Process: MedHELM comprises over 120 scenarios across 22 health-related task categories (e.g., clinical note summarization, patient communication). It tests multiple foundation models on these scenarios to stratify performance by specific use case [36].

- Utility: This allows researchers to select the best base model for developing specialized tools, such as one that identifies alcohol dependence from a patient's medical history, ensuring the model is validated against appropriate data and tasks [36].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential resources and datasets for conducting experimental validation of AI models in medicine.

| Research Reagent | Function & Utility in Experimental Validation |
|---|---|
| MedQA-USMLE Dataset [32] | A benchmark dataset based on USMLE-style questions used to evaluate model performance on graduate-level medical knowledge. |
| PubMedQA Dataset [32] | A biomedical QA dataset where answers are derived from corresponding research paper abstracts, testing research comprehension. |
| MedMCQA Dataset [32] | A large-scale dataset of multiple-choice questions from Indian medical entrance exams, useful for testing breadth of knowledge. |
| FreeMedQA Benchmark | A paired benchmark (multiple-choice and free-response) used to assess the gap between model test-taking and genuine reasoning capability [35]. |
| MedHELM Framework | An evaluation infrastructure that enables holistic testing of LLMs across numerous health-related tasks and scenarios [36]. |
| LLM-Blender | An ensemble framework that can be used to combine outputs from multiple LLMs to generate superior responses, though not medically specific [32]. |

Implementing Explainable AI (XAI) for Transparent Decision-Making

The integration of Artificial Intelligence (AI) into high-stakes domains like medical education and healthcare has highlighted a critical challenge: the "black-box" nature of complex models undermines trust and accountability. Explainable AI (XAI) has emerged as an essential solution, providing transparency into AI decision-making processes. In medical education, where AI predictions can influence student progression and institutional policy, the need for interpretability is particularly acute [16]. Traditional AI models often lack the transparency required for educational decision-making, creating barriers to adoption despite their predictive capabilities [16]. XAI methods bridge this gap by making model predictions understandable to humans, enabling users to trust and rely on AI systems for critical decision-making [37].

The validation of AI model performance against medical student exam results represents a compelling use case for XAI implementation. When predicting student performance on high-stakes comprehensive assessments, educators need to understand not just the prediction itself, but the underlying factors driving that prediction to implement effective interventions [16]. This article provides a comprehensive comparison of XAI methodologies, their performance characteristics, and implementation frameworks, with specific focus on applications in medical education research and validation against medical student outcomes.

Comparative Analysis of XAI Methodologies

Taxonomy of XAI Approaches

XAI methods can be broadly categorized into several distinct approaches based on their underlying mechanisms and implementation strategies. Attribution-based methods like Grad-CAM (Gradient-weighted Class Activation Mapping) generate saliency maps by tracing a model's internal representations backward from the prediction to the input, typically using gradients or activations [38]. These methods highlight the specific regions of input data (such as image areas) that most significantly influenced the model's output. Perturbation-based techniques, including RISE (Randomized Input Sampling for Explanation), assess feature importance through systematic modifications of the input and observation of output changes without requiring access to the model's internal architecture [38]. Model-agnostic methods such as SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) can be applied to any machine learning model by treating the model as a black box and analyzing input-output relationships [37]. Transformer-based methods leverage the self-attention mechanisms inherent in transformer architectures to provide global interpretability by tracing information flow across layers [38]. Native explainable models represent an emerging category where explainability is built directly into the model architecture rather than being applied as a post-hoc analysis [39].
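
The idea behind SHAP can be illustrated by computing exact Shapley values for a toy scoring function. The sketch below is not the SHAP library and does not use its API: it enumerates every feature subset directly, which is only feasible for a handful of features, and the course names and score contributions are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, features):
    """Exact Shapley value of each feature for a set-valued scoring function.

    value_fn: callable taking a set of present features and returning a number
    features: list of feature names
    """
    n = len(features)
    phi = {}
    for feature in features:
        others = [f for f in features if f != feature]
        total = 0.0
        for k in range(n):  # size of the coalition that excludes `feature`
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                coalition = set(subset)
                marginal = value_fn(coalition | {feature}) - value_fn(coalition)
                total += weight * marginal
        phi[feature] = total
    return phi


def toy_exam_score(present):
    """Hypothetical predicted score given which course grades are available."""
    score = 0.0
    if "anatomy" in present:
        score += 10.0
    if "pharmacology" in present:
        score += 20.0
    if "physiology" in present:
        score += 5.0
    if {"anatomy", "pharmacology"} <= present:
        score += 6.0  # interaction term, split evenly by the Shapley weighting
    return score


courses = ["anatomy", "pharmacology", "physiology"]
attributions = shapley_values(toy_exam_score, courses)
print(attributions)
```

The attributions sum to the difference between the score with all features present and the score with none, which is the additivity property that makes Shapley-based explanations convenient for per-student risk profiles.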

Performance Comparison of XAI Methods

The table below summarizes the key characteristics and performance metrics of major XAI methods based on recent comparative studies:

Table 1: Performance Comparison of XAI Methods

| XAI Method | Category | Key Strengths | Computational Efficiency | Faithfulness Metrics | Primary Domains |
|---|---|---|---|---|---|
| SHAP | Model-agnostic | Theoretical guarantees from game theory; granular feature importance; global and local explanations | Moderate to high | High (identified in 35/44 Q1 journal articles) [37] | Healthcare, finance, general predictive analytics |
| Grad-CAM | Attribution-based | Class-discriminative localization; no architectural changes required; intuitive visual explanations | High | Moderate (improves overlap with human annotations by 30-35%) [38] | Computer vision, medical imaging |
| LIME | Model-agnostic | Intuitive local approximations; model-agnostic flexibility | Moderate | Moderate (faithfulness depends on perturbation strategy) [37] | General predictive tasks, text classification |
| RISE | Perturbation-based | High faithfulness scores; model-agnostic implementation | Low (computationally expensive) | High (highest in comparative studies) [38] | Critical systems, nuclear power plant diagnosis [40] |
| Transformer-based | Self-attention | Global interpretability; inherent to model architecture | High during inference | High (strong IoU scores in medical imaging) [38] | Medical imaging, natural language processing |
| SpikeNet | Native explainable | Integrated explanations; high alignment with expert annotations; low latency | Very high (31 ms per image) [39] | High (XAlign score: 0.89±0.03 MRI, 0.91±0.02 ultrasound) [39] | Medical imaging, real-time diagnostics |

Quantitative Performance Metrics in Medical Education

In a recent study predicting medical students' performance on comprehensive assessments, researchers developed a machine learning framework enhanced with explainable AI [16]. A stacking meta-model combining ensemble techniques (Random Forest, Adaptive Boosting, XGBoost) achieved AUC-ROC values of 0.97 for Comprehensive Medical Pre-Internship Examination (CMPIE) predictions and 0.99 for Clinical Competence Assessment (CCA) predictions, with F1-scores of 0.966 and 0.994, respectively [16]. SHAP provided granular insight into the model's logic, identifying high-impact courses as dominant predictors of success and enabling individualized risk profiles [16].
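
For readers less familiar with the two reported metrics, the sketch below computes AUC-ROC and F1 from first principles on a tiny example. It illustrates the metrics only and is not the study's pipeline: the labels (1 for a predicted pass, 0 for a fail), scores, and 0.5 decision threshold are hypothetical, and the AUC function assumes at least one positive and one negative label.

```python
def auc_roc(y_true, scores):
    """AUC as the probability that a random positive outscores a random negative.

    Ties between a positive and a negative score count as half a win.
    Assumes y_true contains at least one 1 and at least one 0.
    """
    positives = [s for t, s in zip(y_true, scores) if t == 1]
    negatives = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


def f1_score(y_true, y_pred):
    """Harmonic mean of precision and recall for the positive class."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical outcomes and model scores for five students.
labels = [1, 1, 1, 0, 0]
model_scores = [0.9, 0.8, 0.4, 0.5, 0.2]
predicted = [1 if s >= 0.5 else 0 for s in model_scores]

print(auc_roc(labels, model_scores))
print(f1_score(labels, predicted))
```

AUC-ROC is threshold-free and reflects how well the scores rank students, whereas F1 depends on the chosen cut-off. That difference is why studies such as the one above commonly report both.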

Experimental Protocols and Implementation Frameworks

XAI Workflow for Predictive Modeling in Medical Education

The following diagram illustrates the complete experimental workflow for implementing XAI in medical education prediction tasks, synthesized from multiple studies:

Detailed Experimental Protocol for Medical Education Assessment